C_DS_42 Practice Exam - SAP Certified Application Associate Data Integration with SAP Data Services 4.2

Reliable Study Materials & Testing Engine for C_DS_42 Exam Success!

Exam Code: C_DS_42

Exam Name: SAP Certified Application Associate Data Integration with SAP Data Services 4.2

Certification Provider: SAP

Corresponding Certifications: SAP Certified Application Associate, SAP Other Certification

C_DS_42: SAP Certified Application Associate Data Integration with SAP Data Services 4.2 Study Material and Test Engine

Last Update Check: Mar 17, 2026

Latest 80 Questions & Answers

The Dumpsarena free practice exam simulator and test engine for SAP C_DS_42 (SAP Certified Application Associate Data Integration with SAP Data Services 4.2) supports exam preparation by combining realistic test simulation, adaptive practice, and an intuitive design. It is built to help candidates work through their certification preparation.

Satisfaction Policy - DumpsArena.co

At DumpsArena.co, your success is our top priority. Our dedicated technical team works continuously to deliver high-quality, up-to-date practice exams and study resources. We carefully craft our content to ensure it’s accurate, relevant, and aligned with the latest exam guidelines. Your satisfaction matters to us, and we are always working to provide you with the best possible learning experience. If you’re ever unsatisfied with our material, don’t hesitate to reach out; we’re here to support you. With DumpsArena.co, you can study with confidence, backed by a team you can trust.

SAP C_DS_42 Exam FAQs

Introduction to the SAP C_DS_42 Exam

The SAP Certified Application Associate - Data Integration with SAP Data Services 4.2 (C_DS_42) exam is a certification exam for professionals who want to demonstrate their knowledge and skills in using SAP Data Services 4.2. The exam covers topics such as data integration, data quality, data profiling, data transformation, and data governance. It also tests the candidate's ability to design, develop, and deploy data integration solutions using SAP Data Services 4.2.

What is the Duration of SAP C_DS_42 Exam?

The duration of the SAP C_DS_42 exam is 180 minutes.

What is the Number of Questions Asked in SAP C_DS_42 Exam?

There are 80 questions in the SAP C_DS_42 exam.

What is the Passing Score for SAP C_DS_42 Exam?

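The passing score for the SAP C_DS_42 exam is commonly cited as 64% to 65%. SAP can adjust the cut score between exam versions, so confirm the current value on the official exam page before you book.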

What is the Competency Level required for SAP C_DS_42 Exam?

The competency level for the SAP C_DS_42 exam is Associate, as the certification title (SAP Certified Application Associate) indicates.

What is the Question Format of SAP C_DS_42 Exam?

The SAP C_DS_42 exam consists of 80 multiple-choice questions. Most questions have a single correct answer; some are multiple-answer questions, which are scored without partial credit.

How Can You Take SAP C_DS_42 Exam?

The SAP C_DS_42 exam can be taken both online and in a testing center. To take the exam online, you must register for the exam on the SAP website. To take the exam in a testing center, you must contact the nearest testing center to schedule your exam.

In What Language is the SAP C_DS_42 Exam Offered?

The SAP C_DS_42 exam is offered in the English language.

What is the Cost of SAP C_DS_42 Exam?

The fee for the SAP C_DS_42 exam is roughly $500 to $650, depending on region. Check the SAP Training and Certification Shop for the current price.

What is the Target Audience of SAP C_DS_42 Exam?

The target audience of the SAP C_DS_42 exam is data integration professionals who want to earn the SAP Certified Application Associate credential and who have a solid understanding of the SAP Data Services 4.2 solution and its core capabilities. The exam tests an individual's knowledge and skills related to the installation, configuration, administration, and troubleshooting of the SAP Data Services 4.2 solution.

What is the Average Salary of SAP C_DS_42 Certified in the Market?

The exact salary after SAP C_DS_42 certification will vary depending on your job title and experience level. Generally, individuals with SAP C_DS_42 certification can expect to earn an average salary of $100,000 - $150,000 per year.

Who are the Testing Providers of SAP C_DS_42 Exam?

SAP is the official provider of the C_DS_42 exam. Candidates can take the exam at Pearson VUE test centers or online with remote proctoring. The exam is offered primarily in English; confirm any other language option in the booking flow.

What is the Recommended Experience for SAP C_DS_42 Exam?

The recommended experience for the SAP C_DS_42 exam is at least one year of implementation experience with SAP Data Services or equivalent knowledge. Additionally, candidates should have a basic understanding of data warehousing concepts and the technical aspects of the Data Services application.

What are the Prerequisites of SAP C_DS_42 Exam?

Candidates for the SAP C_DS_42 exam should have strong knowledge of the SAP Data Services application and hands-on experience with the product. They should also be familiar with SAP HANA and SAP BusinessObjects Data Services.

What is the Expected Retirement Date of SAP C_DS_42 Exam?

The official website listed for the SAP C_DS_42 exam is https://training.sap.com/certification/c_ds_42-sap-certified-application-associate-data-science-with-sap-hana-2020. No expected retirement date is published on this website.

What is the Difficulty Level of SAP C_DS_42 Exam?

The difficulty level of the SAP C_DS_42 exam is intermediate.

What is the Roadmap / Track of SAP C_DS_42 Exam?

The SAP C_DS_42 exam is a certification exam for the SAP Data Services 4.2 software. It tests an individual's knowledge and skills in data integration and transformation, data quality, data governance, and data security, along with practical use of SAP Data Services 4.2. The certification track for the SAP C_DS_42 exam consists of completing a course, passing the exam, and earning the certificate.

What are the Topics SAP C_DS_42 Exam Covers?

The SAP C_DS_42 exam covers the following topics:

1. Data Warehousing: This topic covers the design and implementation of data warehouses and data marts. It includes topics such as data modeling, ETL, data integration, data quality, and data governance.

2. Big Data: This topic covers the fundamentals of big data and its applications. It includes topics such as Hadoop, NoSQL databases, distributed computing, and data analytics.

3. SAP HANA: This topic covers the architecture and components of the SAP HANA platform. It includes topics such as data modeling, SQLScript, and the SAP HANA Studio.

4. SAP Analytics Cloud: This topic covers the features and functionality of the SAP Analytics Cloud platform. It includes topics such as data visualization, predictive analytics, and machine learning.

5. SAP Data Services: This topic covers the features and functionality of SAP Data Services. It includes topics such as

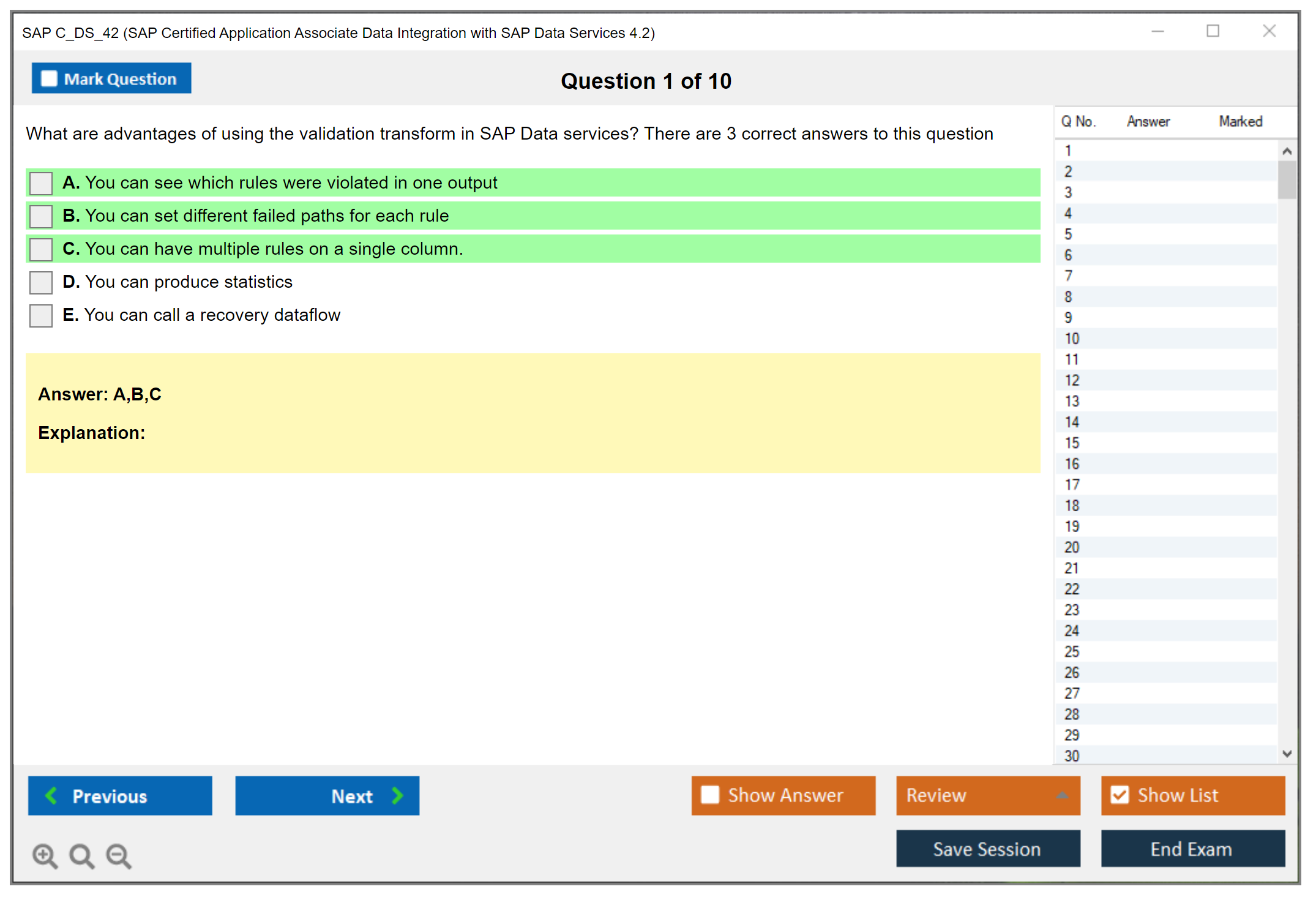

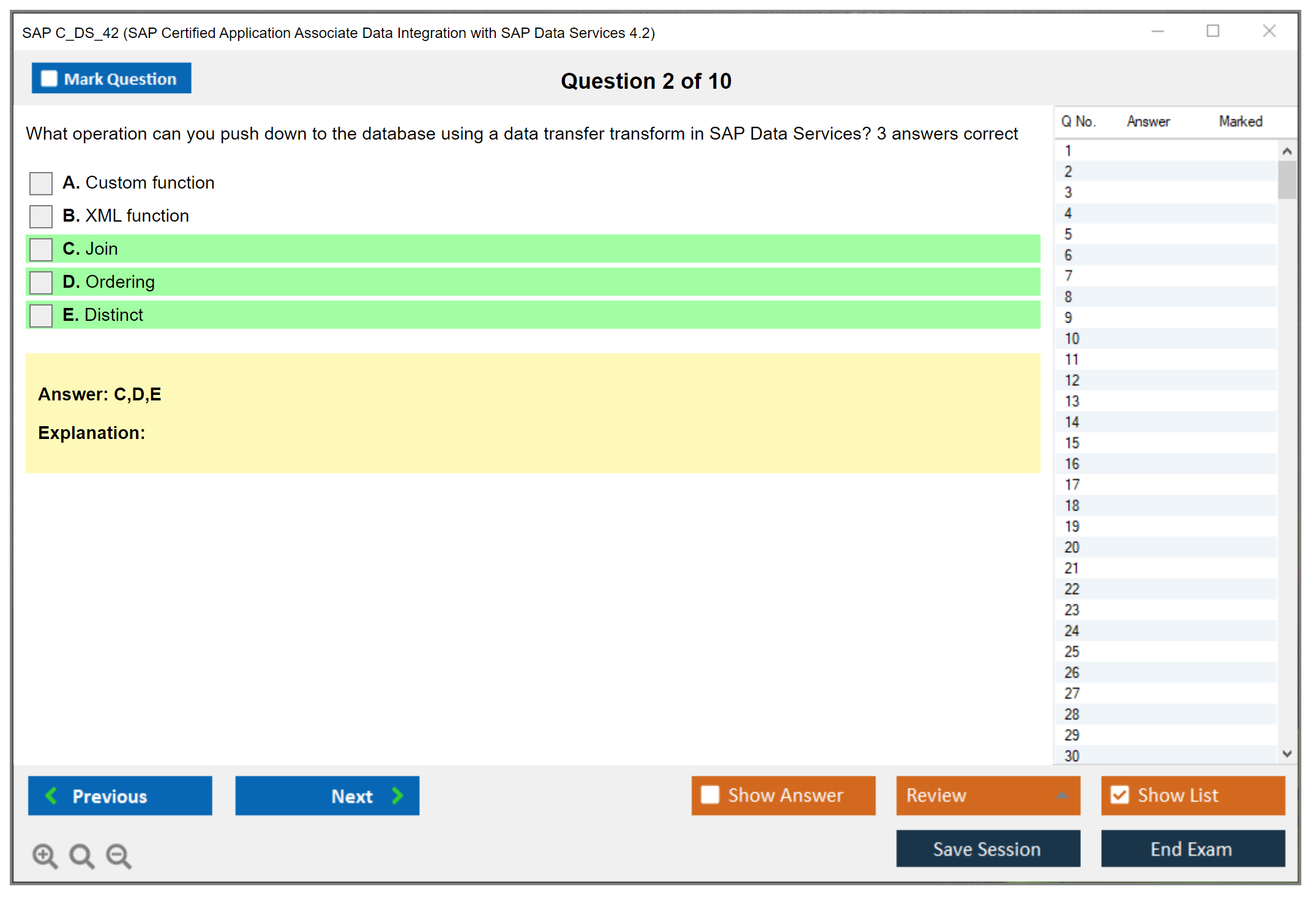

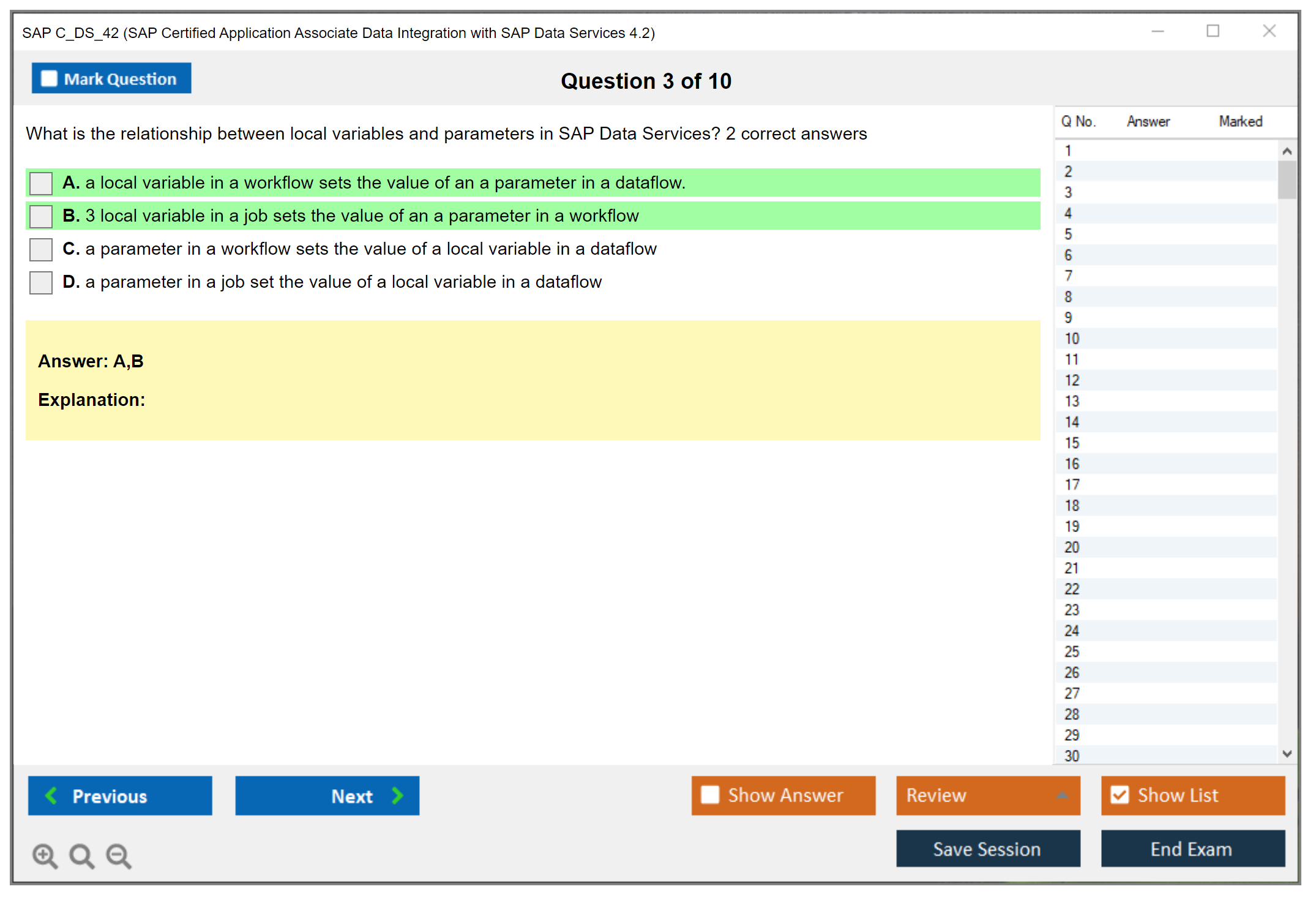

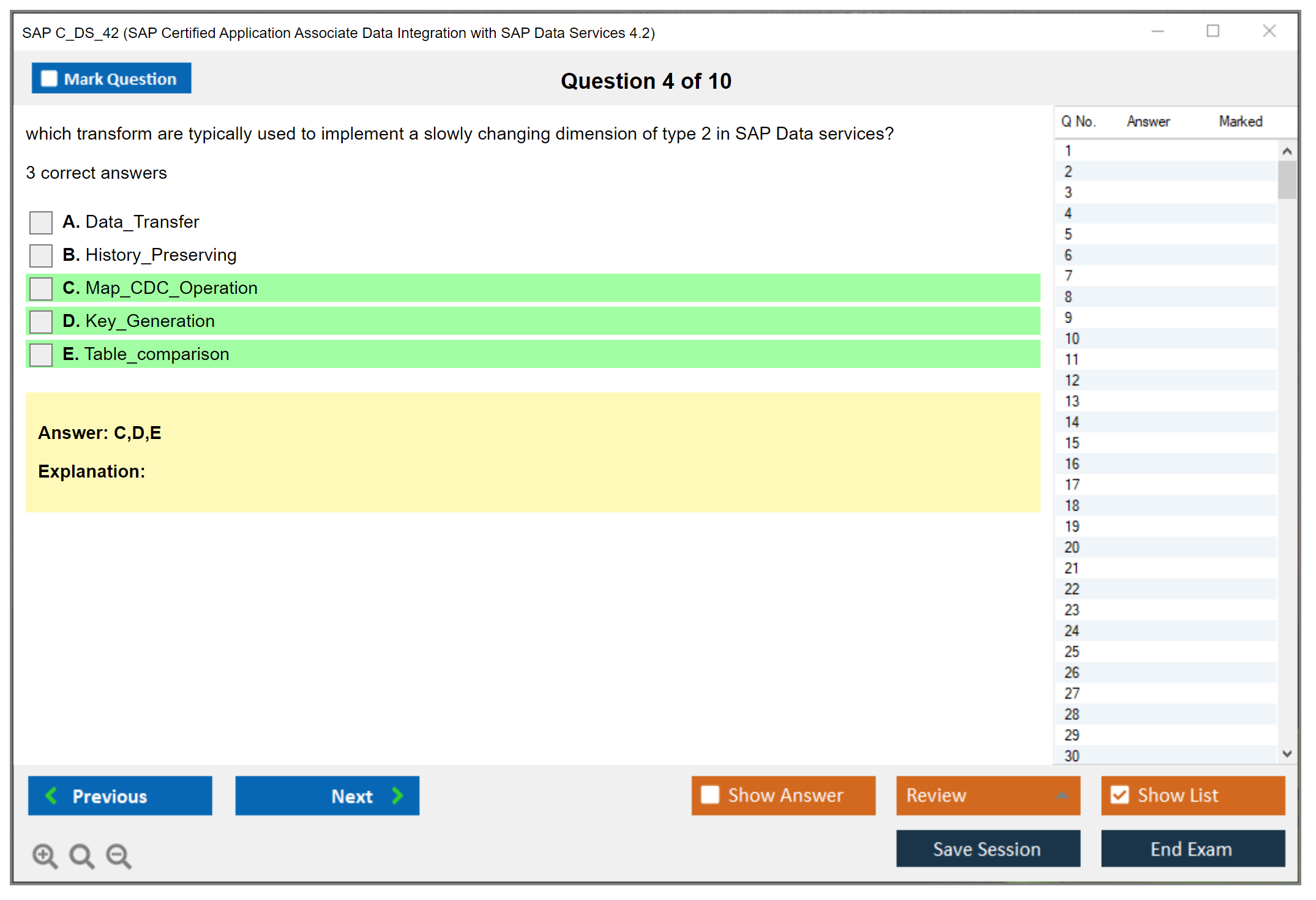

What are the Sample Questions of SAP C_DS_42 Exam?

1. What are the benefits of using SAP C/4HANA for customer data management?

2. How does SAP C/4HANA enable predictive analysis?

3. What are the components of the SAP C/4HANA suite?

4. How does SAP C/4HANA help to manage customer data across multiple channels?

5. What is the role of the SAP Data Services application in SAP C/4HANA?

6. How can SAP C/4HANA be used to manage customer data across multiple systems?

7. What are the best practices for implementing SAP C/4HANA?

8. How can SAP C/4HANA be used to ensure data quality?

9. What are the key features of SAP Data Services?

10. What are the benefits of using SAP C/4HANA for customer data integration?

SAP C_DS_42 (SAP Certified Application Associate Data Integration with SAP Data Services 4.2)

SAP C_DS_42 Certification Overview and Value Proposition

What SAP C_DS_42 actually means for your career

The SAP C_DS_42 certification is the official SAP Certified Application Associate credential proving you know how to work with SAP Data Services 4.2. This isn't some generic data cert. This one specifically validates that you can build, deploy, and manage data integration solutions using this particular platform. If you're serious about working with SAP data ecosystems, this certification tells employers you're not just dabbling around.

The C_DS_42 exam validates your fundamental knowledge and proven skills in implementing ETL processes, data quality management, and end-to-end data integration solutions. You're showing that you understand how to move data between systems, clean it up, transform it, and make sure it actually works in production. That's what matters. Not just theory but the ability to design jobs and workflows that don't break when real data hits them. Anyone can follow a tutorial, but can you troubleshoot a failed production job at 2 AM? That's different.

Core competencies the exam actually tests

First up is Data Services architecture understanding. You need to know how the Designer, Repository, and Management Console fit together. Can't fake that.

Then there's datastore configuration. Connecting to SAP ECC, S/4HANA, HANA database, Oracle, SQL Server, flat files, whatever's in your enterprise landscape. The exam will test whether you know how to set up these connections properly and manage metadata without breaking integrations.

Job and workflow design is huge too. You'll need to demonstrate you can build dataflows, use the right transforms (Query, Map_Operation, Case, Data_Transfer, the whole toolkit), and structure jobs that actually perform well instead of choking on large datasets.

I've seen people who can click through the Designer but don't understand execution order or error handling. Those folks really struggle with C_DS_42 because it tests practical application, not just interface familiarity. Sometimes they've done plenty of classroom labs but never dealt with a production environment where users are breathing down your neck about delayed reports.

Data profiling and quality management get tested pretty thoroughly here. You need to know how to profile source data, identify quality issues that'll cause downstream problems, build validation rules that actually catch bad records, and implement cleansing logic that doesn't accidentally destroy legitimate data. This isn't abstract stuff. The exam uses real-world scenarios where you have to choose the right approach under constraints. Operational monitoring rounds it out: understanding logs, performance tuning basics, troubleshooting failed jobs, and knowing where to look when things go sideways in production environments.
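To make the profiling idea concrete, here's a rough sketch in plain Python, not Data Services code. The `profile_column` helper, the `normalize_country` rule, and the `customers` sample rows are all made up for illustration; in the real tool you'd lean on the built-in profiling and validation features instead.

```python
from collections import Counter


def profile_column(rows, column):
    """Summarize one column: row count, null-like values, distinct values."""
    values = [row.get(column) for row in rows]
    non_null = [v for v in values if v not in (None, "")]
    return {
        "rows": len(values),
        "nulls": len(values) - len(non_null),
        "distinct": len(set(non_null)),
        "top_values": Counter(non_null).most_common(3),
    }


def normalize_country(value):
    """Cleansing rule: trim and uppercase, but leave missing values alone."""
    if value in (None, ""):
        return value
    return value.strip().upper()


# Hypothetical sample data with typical quality problems.
customers = [
    {"id": 1, "country": "DE"},
    {"id": 2, "country": "de "},
    {"id": 3, "country": ""},
    {"id": 4, "country": "US"},
    {"id": 5, "country": None},
]

before = profile_column(customers, "country")
cleansed = [{**row, "country": normalize_country(row["country"])} for row in customers]
after = profile_column(cleansed, "country")

print(before["nulls"], before["distinct"], after["distinct"])
```

The cleansing rule only touches formatting and keeps every row, so the profile after cleansing shows fewer distinct values but the same number of missing ones. That's the "don't destroy legitimate data" part in miniature.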

Who should actually take this exam

Data integration specialists and ETL developers are the obvious candidates. If you're building data pipelines for SAP environments, C_DS_42 validates what you already do daily. Business intelligence consultants who work with SAP BI/BW systems benefit because Data Services often feeds those warehouses. Data warehouse architects need this if they're designing solutions that include Data Services as the integration layer. Can't design what you don't understand.

SAP technical consultants working on data migration projects should consider it, especially if you're moving clients from ECC to S/4HANA or consolidating systems after mergers. Having C_DS_42 on your profile makes you way more attractive for those engagements where clients are paying premium rates.

The ideal candidate has 1-3 years of hands-on experience with SAP Data Services in real implementations. Entry-level folks can pass it, but you'll struggle if you've only done classroom exercises without production pressure. The exam assumes you've dealt with actual project challenges. Performance issues that impact business processes. Complex transformations that need to handle edge cases. Integration with multiple source systems that don't always play nice. You need that context to understand why certain design choices matter beyond "because the training said so."

Why this certification still matters in 2026

Yeah, SAP keeps pushing newer tools and cloud-native options, but Data Services isn't going anywhere soon. Organizations continue digital transformation initiatives that require solid ETL capabilities. Not everything moves to the cloud overnight. Cloud migration projects often use Data Services to move data from on-premises SAP systems to cloud platforms in controlled, validated ways. Master data management initiatives rely heavily on Data Services for consolidation and synchronization across systems that were never meant to talk to each other.

The job market shows sustained demand for these skills. I'm seeing postings that specifically ask for Data Services experience, and having the C_DS_42 certification gets you past HR filters that screen out otherwise qualified candidates. Recruiters search for this exact credential when filling urgent roles. While tools like SAP Integration Suite and Cloud Data Integration are growing, many enterprise clients still run Data Services 4.2 and will for years. They've got massive investments in existing jobs and can't just rip-and-replace. They need people who can maintain and enhance those solutions without causing outages.

How C_DS_42 fits in the SAP certification landscape

This cert's pretty specialized. Narrow focus. The SAP Certified Application Associate - SAP BusinessObjects Business Intelligence Platform 4.2 covers the whole BI suite with reporting and dashboards, while C_DS_42 drills deep into just the Data Services component. It's different from SAP Certified Associate - Business Process Integration with SAP S/4HANA 2020 which focuses on process integration rather than technical data movement. Those are functional consultants. We're technical implementers.

Working with master data? C_DS_42 complements the SAP Certified Application Associate - SAP Master Data Governance nicely since Data Services often implements MDG synchronization jobs that keep everything consistent. For technical consultants, pairing C_DS_42 with SAP Certified Technology Associate - System Administration (SAP HANA) with SAP NetWeaver 7.5 creates a solid technical profile that covers both infrastructure and integration layers.

The version specificity matters here. Can't stress that enough. C_DS_42 certifies specifically on 4.2 version features and capabilities, not generic ETL concepts. Data Services 4.3 exists with newer features, but many clients haven't upgraded because upgrades are expensive and risky, so 4.2 skills remain highly relevant in actual client environments. Just be aware that some newer features in later versions won't be on this exam, which keeps it focused.

Real-world scenarios where this certification proves valuable

Master data management projects use Data Services to consolidate customer, supplier, and material master data from multiple SAP and non-SAP systems that grew organically over decades. You're building complex matching and merging logic, implementing data quality rules that business users actually trust, and orchestrating workflows that span multiple applications without creating duplicates or losing critical information.

Data migration projects, especially SAP transformations, rely heavily on Data Services because it handles the complexity. Moving from ECC to S/4HANA, you're extracting legacy data with all its quirks, transforming it to new structures that don't match one-to-one, validating business rules that weren't documented anywhere, and loading it into the target without disrupting operations. The C_DS_42 skills directly apply. This is literally what the tool was designed for.

Data warehousing ETL is the classic use case that never goes away. Loading SAP BW or BW/4HANA from operational systems, you're designing incremental loads that don't duplicate data, handling slowly changing dimensions that need historical tracking, implementing complex business logic in transforms because source systems can't be modified. Real-time data replication scenarios use Data Services for near-real-time synchronization between systems when batch windows are too slow. Regulatory compliance projects need data lineage that auditors can follow, audit trails that prove what changed when, and quality validation that demonstrates control effectiveness. All core Data Services capabilities tested in C_DS_42.
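For the slowly changing dimension piece, a minimal sketch of Type 2 history tracking in plain Python might look like the following. It is only an illustration of the logic: the `apply_scd2` function and the row layout (`key`, `attrs`, `valid_from`, `valid_to`, `is_current`) are assumptions, and Data Services ships its own transforms (such as Table_Comparison and History_Preserving) that you'd use in a real dataflow.

```python
from datetime import date


def apply_scd2(dimension, incoming, load_date):
    """Apply a Type 2 update in place and return the dimension rows.

    dimension: list of dicts with key, attrs, valid_from, valid_to, is_current
    incoming:  dict mapping business key -> latest attrs from the source
    """
    current_by_key = {row["key"]: row for row in dimension if row["is_current"]}
    for key, attrs in incoming.items():
        current = current_by_key.get(key)
        if current is not None and current["attrs"] == attrs:
            continue  # unchanged record: skip it so reruns don't duplicate rows
        if current is not None:
            # Close the old version instead of overwriting it.
            current["valid_to"] = load_date
            current["is_current"] = False
        dimension.append({
            "key": key,
            "attrs": attrs,
            "valid_from": load_date,
            "valid_to": None,
            "is_current": True,
        })
    return dimension


# Hypothetical customer dimension with one existing row.
dimension = [{
    "key": "C1",
    "attrs": {"city": "Berlin"},
    "valid_from": date(2024, 1, 1),
    "valid_to": None,
    "is_current": True,
}]
incoming = {"C1": {"city": "Munich"}, "C2": {"city": "Paris"}}

apply_scd2(dimension, incoming, date(2025, 6, 1))
apply_scd2(dimension, incoming, date(2025, 6, 2))  # rerun with same data: no new rows

print(len(dimension))
```

The second call is there on purpose: an incremental load that gets rerun shouldn't create extra versions, which is the "don't duplicate data" requirement from the paragraph above.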

The professional and organizational benefits you actually get

For you personally, the certification enhances credibility when talking to clients or interviewing for new roles where they're skeptical of resume claims. It provides competitive advantage in a job market where many people claim data skills but can't prove them beyond "I used Excel once." Salary increases aren't guaranteed. Let's be realistic. But certified professionals typically command 10-15% more than non-certified peers with similar experience according to industry surveys. You qualify for advanced SAP projects that require certified team members as contractual obligations.

Organizations benefit too. Validated team expertise reduces project risk because certified people know what they don't know. Improved project success rates come from having people who understand best practices instead of reinventing wheels badly. Access to SAP partner programs often requires certified consultants. It's a gating requirement. Customer confidence increases when they see certified resources on their projects, and it's a checkbox item in many RFPs that determines whether you even get considered.

The C_DS_42 exam costs roughly $500-650 depending on your region and SAP's current pricing. Not cheap but not outrageous either. Preparation time runs 6-8 weeks if you're working full-time and studying evenings and weekends. The return on investment comes from career advancement opportunities you wouldn't get otherwise, project assignments that pay better rates, and the knowledge that actually makes you better at your job instead of just looking good on paper. Not every certification delivers ROI. Some are just resume padding. But if you're working in SAP data integration, C_DS_42 is one of the solid ones that clients actually recognize.

The pathway doesn't end here either, which is important for long-term planning. After C_DS_42, you might pursue SAP Certified Associate - SAP Activate Project Manager to add project management capabilities that let you lead instead of just implement, or specialize further in areas like SAP Certified Application Professional - Financials in SAP S/4HANA if you're working in finance data integration where the money and complexity are. The certification portfolio approach works. Stack complementary credentials that tell a coherent story instead of random certifications that don't connect.

C_DS_42 Exam Structure and Logistics

SAP C_DS_42 certification overview (SAP Data Services 4.2)

The SAP C_DS_42 certification is the official associate-level credential for folks doing ETL with SAP Data Services. You're building dataflows, wiring up datastores, keeping tabs on data quality basics. Standard stuff. This isn't one of those "do you remember which menu button to click" quizzes. More like: can you actually read a situation, pick the right transform, and sidestep the common stuff that breaks jobs at 2 a.m. when you're trying to sleep?

The certification title is long. For a reason, I guess. Official exam code is C_DS_42 - SAP Certified Application Associate - Data Integration with SAP Data Services 4.2. It maps pretty cleanly to what you're doing day-to-day in Designer plus the SAP Data Services repository and management console. That's where the magic happens. Or chaos, depending on the week.

What the certification validates

You'll see coverage of architecture and components. Real build choices too. Questions that nudge you toward understanding Data Services jobs, workflows, datastores, plus when to use certain SAP DS transforms and mappings without just guessing wildly and hoping for the best.

Here's the thing. If you've only watched videos and never actually built a dataflow with a lookup, a query transform, a validation step, and some error handling, the scenario-based questions will feel mean. They're not mean, technically. They just expose "book-only" prep fast. It's brutal.

Who should take C_DS_42 (roles and experience fit)

This fits ETL developers, data integration consultants, BI platform folks who inherited SAP DS (sympathies), and data engineers in SAP-heavy shops. Also fine for someone aiming at Data Services jobs on purpose, because recruiters still keyword-match "SAP Data Services 4.2 certification" like it's 2018 and LinkedIn search filters haven't evolved.

If you're brand new to ETL, you can pass, but you'll spend way more time learning fundamentals than "C_DS_42 exam" specifics. Might be good for your career but frustrating for your timeline. I knew someone who tried jumping straight in with zero SQL background. That was a rough six weeks.

C_DS_42 exam details

The exam's a computer-based test delivered through the SAP Certification Hub or authorized Pearson VUE testing centers. You either go to a test center or you take it at home with remote proctoring, depending on what your region offers and what you can tolerate in terms of having a camera watch you for three hours.

Exam format (question types, duration, delivery)

Question types? Usually a mix of multiple-choice single-answer, multiple-choice multiple-answer, and scenario-based questions testing practical application knowledge. The kind where you need to think, not just recall. Some questions are straightforward. Others get wordy. A few feel like "which two settings fix this issue," and those are the ones where people drop points because partial credit doesn't exist here.

Total number of questions is typically 80. Exam duration? 180 minutes (3 hours). That's about 2.25 minutes per question on average, but it never feels evenly distributed because you'll burn time on scenarios and then sprint through simpler items like you're suddenly in a hurry.
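If you want to sanity-check the pacing math, here's a quick Python sketch. The 180 minutes and 80 questions come from the figures above; the split of 20 scenario questions at 4 minutes each is purely a made-up assumption to show how fast the budget for everything else shrinks.

```python
def minutes_per_question(total_minutes, questions):
    """Average time available per question."""
    return total_minutes / questions


def remaining_pace(total_minutes, questions, scenario_count, scenario_minutes):
    """Average minutes left per non-scenario question after budgeting scenarios."""
    if scenario_count >= questions:
        raise ValueError("scenario_count must be smaller than questions")
    remaining_minutes = total_minutes - scenario_count * scenario_minutes
    return remaining_minutes / (questions - scenario_count)


average = minutes_per_question(180, 80)
# Hypothetical split: 20 long scenario items at 4 minutes each.
pace = remaining_pace(180, 80, scenario_count=20, scenario_minutes=4.0)

print(average, round(pace, 2))
```

Under that assumed split, the other 60 questions get well under two minutes each, which matches the "sprint through simpler items" feeling.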

Exam delivery platform is SAP's own interface. You get navigation controls, the ability to flag questions, a review screen, and a timer that counts down in a mildly anxiety-inducing way. The basics are fine. Just don't assume it behaves like every other testing UI you've used.

Cost (exam price and regional variations)

Pricing changes, but the commonly quoted exam cost is around $540 USD globally. That's a lot. Europe tends to land around €500 to €550. Asia-Pacific regions vary by country, and some markets price lower in developing economies, which is nice because the full sticker price makes you pause before clicking "confirm."

Payment methods usually include credit cards, purchase orders for corporate-sponsored candidates, and sometimes SAP Learning Hub subscription benefits where applicable. Corporate POs are common. Individuals mostly swipe a card and wince a little.

Passing score (how scoring works and where to confirm the latest)

The passing score requirement most people reference is 64%, but SAP can adjust scoring based on question difficulty and exam version. Treat 64% as "typical," not a legal guarantee carved into stone by some ancient SAP council.

Scoring is based on cut scores determined through psychometric analysis. That's SAP's way of saying they try to keep difficulty consistent across versions. One important gotcha: no partial credit for multiple-answer questions. If it says choose two and you pick one right and one wrong? That's still wrong. Full stop.
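Here's a small Python sketch of what all-or-nothing scoring means in practice. It assumes every question carries equal weight and that the cut score is a plain percentage of questions answered correctly, which is a simplification; SAP's actual scoring model may differ. The function names are hypothetical.

```python
import math


def score_question(selected, correct):
    """All-or-nothing: 1 point only when the selection matches exactly."""
    return 1 if set(selected) == set(correct) else 0


def min_correct_to_pass(questions, cut_score_percent):
    """Smallest number of correct answers that reaches the cut score."""
    return math.ceil(questions * cut_score_percent / 100)


# A "choose two" question where the correct options are A and C.
exact = score_question({"A", "C"}, {"A", "C"})
one_right_one_wrong = score_question({"A", "B"}, {"A", "C"})
only_one_picked = score_question({"A"}, {"A", "C"})

needed = min_correct_to_pass(80, 64)
print(exact, one_right_one_wrong, only_one_picked, needed)
```

With 80 questions and a 64% cut score, the simplified model puts the bar at 52 correct answers, so every half-right multiple-answer question is a full point lost.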

Language availability is primarily English. Select languages may appear depending on region and SAP localization efforts, but don't plan your whole schedule around finding a non-English slot unless you've confirmed it in the booking flow and you've seen it with your own eyes.

Registration process (SAP Certification Hub)

Registration is pretty standard, but there are steps people skip and then panic about. You create or confirm your SAP Universal ID, then go into the SAP Training and Certification Shop, select the exam, and schedule through the Pearson VUE integration. Works fine until it doesn't.

One sentence warning. Names. Must. Match.

Your government-issued photo ID has to match the registration name exactly, letter for letter. Some testing centers also ask for a secondary ID at their discretion, so bring one if you have it because fixing a name mismatch on exam morning is a special kind of pain nobody should endure.

Scheduling flexibility and remote proctoring

Testing centers typically offer multiple time slots on business days. Some locations have weekend availability if you're lucky. Depends on the center. Big cities? Easier. Smaller towns? Not so much.

Remote proctoring options are usually available via OnVUE in many regions. Convenient until you realize how strict it is. You'll need a quiet private room, stable internet, webcam and microphone, a clean workspace, and you can't have extra monitors. That rules out half the setups people actually use daily. Browser requirements can be picky too, so do the system test early, not ten minutes before check-in when your heart rate's already up.

Testing center protocols and remote environment rules

At a test center, plan to arrive about 15 minutes early because they need time to check you in and verify your ID. Phones, bags, notes, and basically anything interesting is prohibited. They typically provide scratch paper or a whiteboard-style option, and a calculator only if the exam allows it. Varies by program. You should check.

Remote testing? Stricter in a different way. No extra screens. No "just my phone on silent." No talking, not even muttering to yourself. Your desk needs to be empty enough that it feels silly, and the proctor can end the session if you keep looking off-screen. Some people do that naturally when thinking, but the proctor doesn't care about your thinking style.

Accessibility accommodations

Accommodations exist for candidates with disabilities, including extended time, screen readers, and other assistive technologies, but you have to request them ahead of time through SAP/Pearson VUE's process with documentation. Don't assume you can just show up and ask. The approvals can take time, sometimes weeks. Start early if you need this.

Rescheduling, cancellation, no-show policies

Rescheduling and cancellation policies typically require at least 24 to 48 hours notice to avoid losing the fee. That's significant given what you paid. Miss that window? You're often repurchasing.

No-show and late arrival policies are also strict. If you arrive more than about 15 minutes late, or you fail to appear at all, you usually forfeit the exam fee completely. This is why I book morning slots whenever possible. Fewer surprises. Less traffic drama. Lower chance of something weird happening.

Results, score report, certificate, verification

You usually get a preliminary pass/fail immediately after finishing. Both a relief and terrifying depending on the outcome. The official certificate often shows up within 2 to 4 weeks via SAP Certification Hub, depending on processing speed and whatever internal queues exist.

Score report details typically include pass/fail plus performance by topic area, so you know where you were strong or weak. Exact numerical scores? Often not disclosed, which annoys people who want a clean percentage, but you do at least see where you were weak enough to matter.

Certificate format is digital. You access it through the SAP Training and Certification portal, and employers can verify through SAP's public certification check, which is a URL they can look up. That verification link matters more than the PDF, honestly. PDFs can be faked but SAP's database can't.

C_DS_42 exam objectives (skills measured)

The C_DS_42 exam objectives usually orbit around Data Services architecture and components like Designer, repositories, and the management console. The tools you actually use. You also need comfort with datastores, connections, and metadata management, because half the real-world problems are "why can't it see the table" or "why does it run in dev but not QA." Those are metadata issues.

Jobs, workflows, dataflows, and scheduling concepts show up a lot in questions. Same with transforms, mappings, and ETL logic, including common transforms and when you'd pick one over another instead of just guessing based on the name. Data profiling and data quality in SAP DS is also a theme. Not super advanced, but enough to test if you know what profiling is for and what quality transforms can and can't do in practice.

Monitoring, execution, and troubleshooting matter too. Logs. Performance tuning basics. Error handling strategies. Security and transport basics might appear too, depending on version, because SAP loves asking about landscapes and how you move things between environments.

Prerequisites and recommended background

Official prerequisites can be light or nonexistent, but recommended background? Not light. SQL helps immensely. Data warehousing concepts help. Basic familiarity with the SAP landscape helps, because the tool doesn't live alone and you'll see terminology that assumes you know what a repo is and why environments differ and what transport means.

Hands-on practice is the difference maker between pass and fail. Build a tiny project: two datastores, a dataflow with a query transform and a lookup, a validation rule, write to a target, then deploy and run it while watching logs in the management console. That's the muscle memory the exam pokes at constantly.
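If you don't have a Data Services sandbox yet, you can at least rehearse the shape of that tiny project in plain Python. This is a sketch of the logic only (query step, lookup, validation, load), not how Designer works; `run_dataflow`, the sample `orders`, and the `customers_by_id` reference table are hypothetical.

```python
def run_dataflow(orders, customers_by_id):
    """Project source rows, enrich via lookup, validate, and split the output."""
    target, rejects = [], []
    for order in orders:
        # Query step: pick the columns we need and convert types.
        row = {
            "order_id": order["order_id"],
            "customer_id": order["customer_id"],
            "amount": float(order["amount"]),
        }
        # Lookup step: enrich from the reference table, None when no match.
        customer = customers_by_id.get(row["customer_id"])
        row["customer_name"] = customer["name"] if customer else None
        # Validation step: collect every failed rule instead of stopping early.
        errors = []
        if row["customer_name"] is None:
            errors.append("unknown customer")
        if row["amount"] <= 0:
            errors.append("non-positive amount")
        if errors:
            rejects.append({**row, "errors": errors})
        else:
            target.append(row)
    return target, rejects


# Hypothetical source and lookup data.
customers_by_id = {"C1": {"name": "Acme"}, "C2": {"name": "Globex"}}
orders = [
    {"order_id": 1, "customer_id": "C1", "amount": "120.50"},
    {"order_id": 2, "customer_id": "C9", "amount": "40"},
    {"order_id": 3, "customer_id": "C2", "amount": "-5"},
]

target, rejects = run_dataflow(orders, customers_by_id)
print(len(target), len(rejects))
```

Routing failed rows to a separate reject list, with the reasons attached, mirrors the habit the exam rewards: bad records get caught and explained instead of silently loaded or silently dropped.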

Difficulty and preparation time

Difficulty? Depends heavily on whether you've actually shipped something in Data Services or just read about it. The challenging part is the mix of product knowledge and practical judgment, where two answers sound plausible but only one fits how SAP DS behaves when you're not looking at documentation.

Study timelines vary wildly. A 2 to 4 week track can work if you already build Data Services jobs regularly and you're just aligning to exam wording and filling gaps. A 6 to 8 week track is more realistic if you're learning from scratch or you haven't touched the tool recently and need to rebuild context.

Common pitfalls? Over-trusting memory. Ignoring scenario questions during prep because they're annoying. And spending all your time on random SAP Data Services exam questions online instead of mapping your prep to the C_DS_42 exam objectives. That's where the actual structure lives.

Best study materials for SAP C_DS_42

For SAP-provided stuff, prioritize official training paths and documentation tied to SAP Data Services 4.2 training. Dry but accurate. Product guides and admin guides matter more than people expect, especially for repository concepts, management console behavior, and how jobs execute under different conditions.

A C_DS_42 study guide is helpful if it's basically an objectives checklist plus labs or examples. If it's just flashcards with no context? Won't carry you across the finish line. I mean it might help with memorization but not understanding.

Practice tests and exam strategy

A C_DS_42 practice test is useful when it explains why answers are wrong, not just what's right. That's how you learn to eliminate bad options. Memorizing letter choices is pointless because SAP rotates questions and the scenario wording changes between versions. You need to understand concepts, not patterns.

For exam-day strategy, flag long scenario items early, answer what you can quickly, then come back with remaining time and a fresher brain. For multiple-answer questions, be extra careful because there's no partial credit. Guessing "one extra just in case" actively hurts you instead of helping.

Renewal, validity, and retake policy

SAP's maintenance rules can change, and versioned exams like 4.2 have that awkward reality where the market moves but the credential name stays anchored to an older version. Check SAP's current policy for renewal and staying current, especially if SAP pushes newer data integration options in your org and you need to stay relevant.

Retake rules and waiting periods also change periodically. Verify inside SAP Certification Hub before you assume anything. Policy pages get updated more often than blog posts do and you don't want surprises when you're trying to reschedule after a fail.

FAQs

What is the SAP C_DS_42 exam and who should take it?

It's the associate exam for SAP Certified Application Associate Data Integration with SAP Data Services 4.2. Aimed at people building and operating ETL with SAP Data Services in real projects, not just theoretically.

What is the passing score for C_DS_42?

Commonly referenced is 64%, but SAP can adjust cut scores by version and difficulty. That's a guideline, not a promise.

How much does the SAP C_DS_42 exam cost?

Around $540 USD, with Europe often €500 to €550, and Asia-Pacific varying by country and sometimes local economic factors.

How hard is the C_DS_42 certification and how long should I study?

Hard if you lack hands-on time with the tool. Plan 2 to 4 weeks with experience, or 6 to 8 weeks if you're building fundamentals plus tool practice from scratch.

What are the best practice tests and study materials for SAP Data Services 4.2?

Best is official SAP training plus docs, then a quality mock exam that explains answers and reasoning. Everything else is secondary or filler.

C_DS_42 Exam Objectives and Content Breakdown

Topic weighting overview: where your study time actually matters

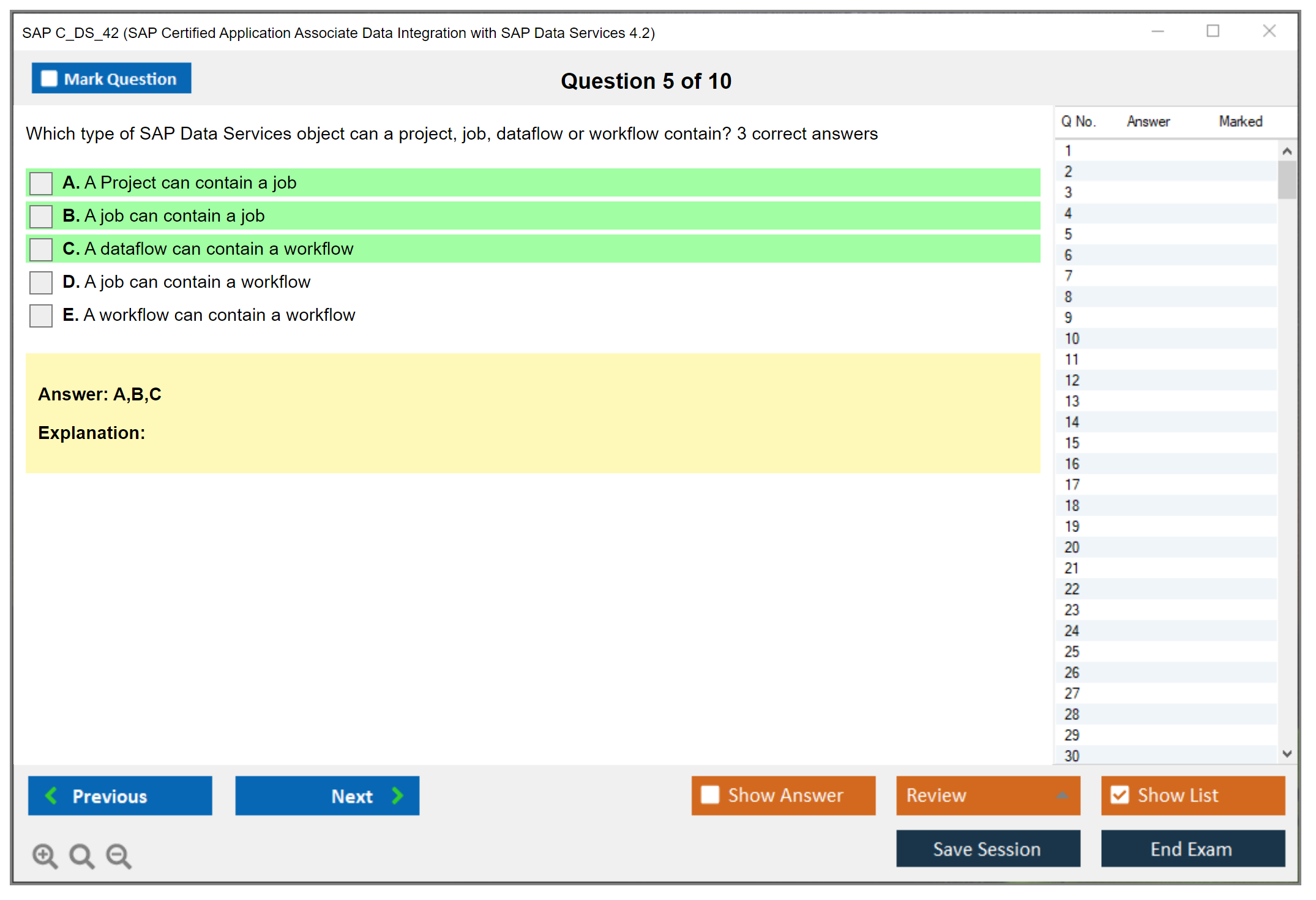

The C_DS_42 exam doesn't treat all topics equally, and honestly that's something tons of people miss when they start preparing. You're looking at roughly 80 questions spread across several knowledge domains, and understanding which areas carry the most weight is gonna save you hours of inefficient studying.

Transforms and data manipulation consume the biggest chunk at 20-25% of the exam content. Makes sense when you think about it. That's where the real work happens in Data Services, the actual heavy lifting that gets your data from messy to useful. Jobs, workflows, and dataflows come in second at 18-22%, because you can't build anything without understanding these foundational structures. Data quality and profiling get 12-16%, datastores and connections take up 10-15%, and monitoring and troubleshooting claim 10-14%. Architecture fundamentals sit at 8-12%, with security and transport rounding out the bottom at 5-8%.

I mean look, you can't skip the smaller sections entirely. But if you're cramming or short on time, focus on transforms and job structures first. Together those two areas cover roughly 38-47% of the exam.
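
To turn those percentages into something you can plan around, here's a minimal sketch that converts each weight range into an approximate question count. It assumes the roughly 80 questions and the ranges quoted above; the function names are made up for illustration, and the real exam may distribute questions differently.

```python
# Approximate weight ranges quoted above, as (low %, high %).
WEIGHTS = {
    "Transforms and data manipulation": (20, 25),
    "Jobs, workflows, and dataflows": (18, 22),
    "Data quality and profiling": (12, 16),
    "Datastores and connections": (10, 15),
    "Monitoring and troubleshooting": (10, 14),
    "Architecture fundamentals": (8, 12),
    "Security and transport": (5, 8),
}


def question_range(total_questions, low_pct, high_pct):
    """Return the (low, high) question counts implied by a weight range."""
    return (
        round(total_questions * low_pct / 100),
        round(total_questions * high_pct / 100),
    )


def breakdown(total_questions=80):
    """Map every topic to its approximate question-count range."""
    return {
        topic: question_range(total_questions, low, high)
        for topic, (low, high) in WEIGHTS.items()
    }


if __name__ == "__main__":
    for topic, (low, high) in breakdown().items():
        print(f"{topic}: about {low}-{high} questions")
```

On these numbers, transforms alone work out to about 16-20 questions, while security and transport is closer to 4-6. That's a decent way to sanity-check how you split your study hours.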

SAP Data Services architecture fundamentals: the backbone stuff

Not gonna lie. This section (8-12% of exam weight) covers how Data Services actually works under the hood, and it feels dry compared to building actual dataflows, but you need it.

The platform uses a multi-tier design with distinct components that handle different responsibilities. You've got the Designer where developers build everything, the Repository that stores all your metadata and object definitions, Job Servers that execute the actual processing work, and the Management Console for administration and monitoring. Adapters sit in there too, handling connectivity to different source and target systems.

Understanding repository structure matters more than you'd think. I mean, the central repository is where shared objects live and version control happens, and local repositories are the individual working repositories developers build in. The exam loves asking about when objects get promoted between repositories and how version control works when multiple developers touch the same dataflow.

The way components interact forms a complete solution architecture. Designer talks to Repository to save your work, Job Server pulls job definitions from Repository to execute them, Management Console queries Repository for monitoring data. It's all connected, and the exam will test whether you understand those relationships beyond just surface level.

Designer interface and workspace: where you'll actually spend your time

Navigation in Data Services Designer follows a project-based structure. Projects contain jobs, workflows, dataflows, and reusable objects like transforms and custom functions. Object management involves organizing these components logically: grouping related dataflows, creating shared transforms, and maintaining naming conventions.

Workspace customization isn't heavily tested, but you should know how to configure your development environment: setting up local repositories, connecting to central repositories, and configuring trace options for debugging. Basic stuff, but it comes up in scenario-based questions where you need to troubleshoot why a developer can't see certain objects or connect to shared resources.

Repository concepts and management: version control that actually works

The central repository architecture is different from what you might expect if you come from Git-based version control. Data Services uses a check-in and check-out model. When you check out an object, it's locked for your exclusive editing. Check it back in, and others can grab it.

Local versus central repository differences trip people up. Your local repository is basically a workspace database for your own development, where you can build and test without affecting shared objects. The thing is, the central repository is the shared, version-controlled store where team collaboration happens. Jobs themselves run from a local repository through a Job Server, not directly from the central repository.

Object sharing works through importing and exporting, but also through direct central repository connections. Version control features let you see who modified what and when, and roll back changes if needed. Repository maintenance tasks include periodic cleanup of unused objects, managing repository database growth, and backing up metadata. The exam touches on these operational aspects more than you'd expect for a development-focused certification.

Management Console functionality: admin interface you can't ignore

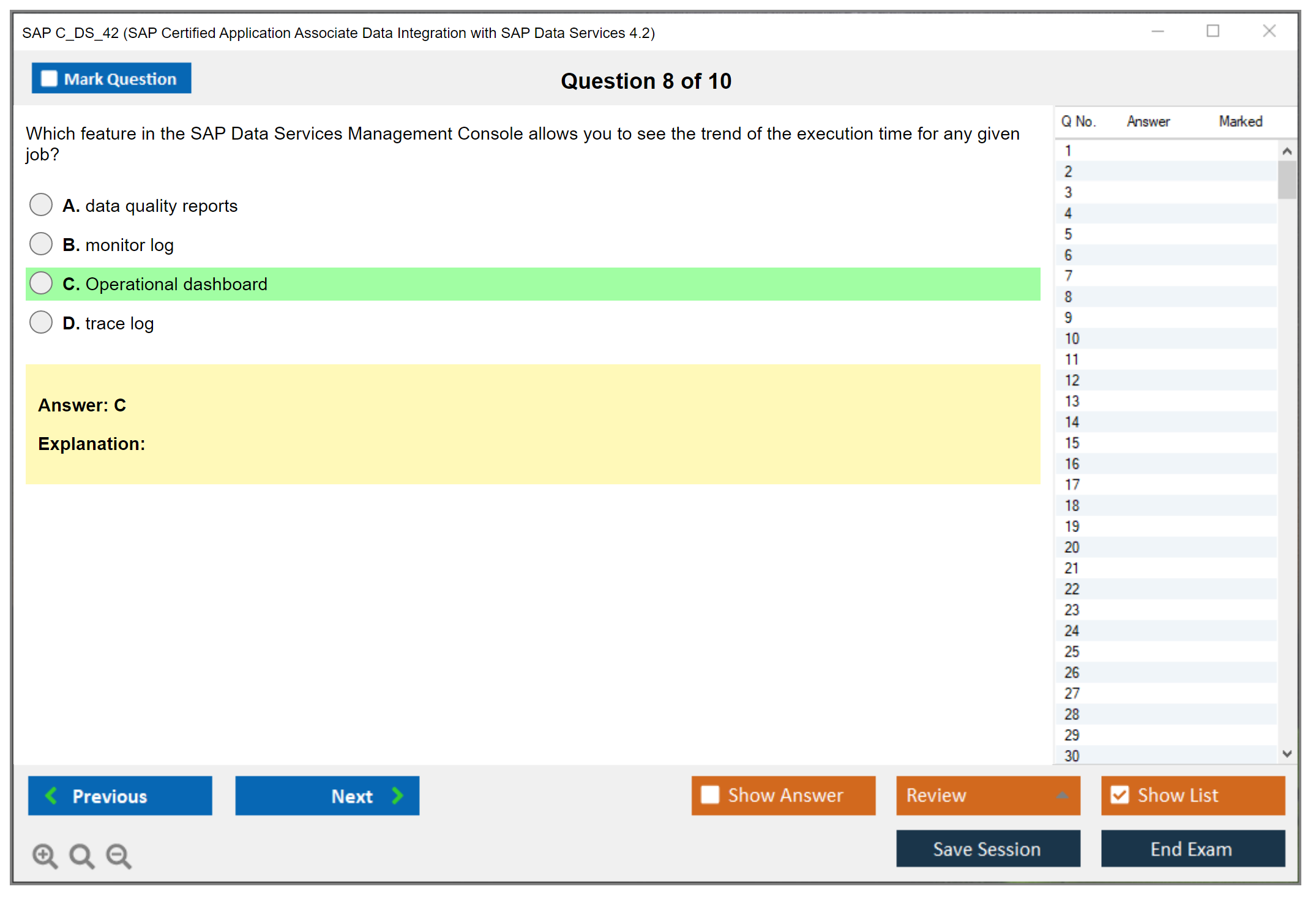

Management Console is your administrative hub for everything operational. Monitoring jobs means watching real-time execution status, seeing row counts as data flows through transforms, identifying which dataflow is currently running. It's also where you configure system settings like Job Server properties, adapter configurations, and global performance parameters.

User management is part of the administrative picture too: creating accounts, assigning permissions, and controlling who can execute jobs versus who can only view logs. Viewing execution logs is critical for troubleshooting, and the exam expects you to know what information appears in which log type and how to interpret error messages.

How all the components work together in practice

Designer creates jobs and stores them in Repository. When you execute a job from Designer during development, it's actually submitting the job to a Job Server which reads the definition from Repository, connects to source and target systems using configured Adapters, and processes data according to your dataflow logic. Management Console monitors this entire process and logs results back to Repository.

This end-to-end understanding shows up in scenario questions where something breaks and you need to identify which component is causing the problem. If metadata import fails, is that a Designer issue, an Adapter problem, or a datastore configuration error? Understanding component interaction helps you eliminate wrong answers quickly.

If you're prepping for the full certification path, checking out resources like the C_DS_42 Practice Exam Questions Pack helps you see exactly how SAP phrases these architecture questions. They're not straightforward. You get scenarios, not definitions.

Datastores and connections: making Data Services talk to everything

Datastores represent connections to source and target systems, and this topic claims 10-15% of exam weight. Creating and configuring datastores varies wildly depending on what you're connecting to. Databases need different parameters than applications, and flat files have completely different requirements.

Database datastore configuration requires knowing connection specifics for each platform. Oracle uses TNS entries or direct connection strings with SID or Service Name options, SQL Server needs instance names and authentication modes, and SAP HANA has its own connection requirements with multi-tenant database considerations. DB2, Sybase, and MySQL each have platform-specific settings you should recognize.

Authentication methods matter too. Think Windows authentication versus SQL authentication for SQL Server, or OS authentication versus database authentication for Oracle. The exam tests whether you know which authentication type works in which scenario, especially for cross-platform environments.

Application datastores: connecting to SAP and beyond

Application datastores use specialized adapters to connect to enterprise systems. SAP ECC connections require RFC credentials, client numbers, application server details, or message server configurations for load balancing. S/4HANA connections work similarly but might use different protocols depending on whether you're extracting through RFC, ODP, or SLT replication.

Salesforce datastores need API credentials, security tokens, and endpoint URLs. The adapter handles the API complexity under the hood, but you need to configure authentication properly. Similar patterns apply for other SaaS applications: credentials, endpoints, API versions.

This overlaps a bit with what you'd see in broader SAP integration topics, kind of like what's covered in C_TS410_2020 for business process integration, though Data Services focuses specifically on data movement rather than process orchestration.

File format datastores and schema definition

Flat file datastores seem simple until you hit delimited files with embedded delimiters, or fixed-width formats where column positions matter, or XML with complex nested hierarchies. Schema definition for files means specifying column names, data types, lengths, delimiters, text qualifiers, header row presence.

Excel datastores read spreadsheets directly but require specifying which worksheet to read and whether the first row contains column headers. XML datastores need schema definitions that map hierarchical structures to flat or semi-structured formats Data Services can process.

Format specifications get detailed. Character encodings (UTF-8, ASCII, EBCDIC), line terminators (CRLF versus LF), date formats, decimal separators. The exam includes questions where you troubleshoot why a file isn't reading correctly, and the answer often lies in these detailed format settings.
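
Here's a small Python sketch of why those settings matter. It is not Data Services code. It just parses an in-memory delimited file with the same kinds of knobs a file format asks for (encoding, delimiter, text qualifier, header row). The function name, parameters, and sample data are all made up for illustration.

```python
import csv
import io


def parse_delimited(raw_bytes, encoding="utf-8", delimiter=",",
                    qualifier='"', has_header=True):
    """Decode and parse a delimited file held in memory."""
    text = raw_bytes.decode(encoding)
    # newline="" leaves CRLF/LF handling to the csv module.
    reader = csv.reader(io.StringIO(text, newline=""),
                        delimiter=delimiter, quotechar=qualifier)
    rows = list(reader)
    if has_header and rows:
        header, data = rows[0], rows[1:]
        return [dict(zip(header, row)) for row in data]
    return rows


# The first data row has a comma embedded inside a qualified field.
sample = 'id,name,city\r\n1,"Smith, Jane",Berlin\r\n2,Lee,"Paris"\r\n'.encode("utf-8")

records = parse_delimited(sample)

# Same bytes, wrong delimiter: every line collapses into a single column.
wrong = parse_delimited(sample, delimiter=";", has_header=False)
```

With the right qualifier, "Smith, Jane" stays in one field. With the wrong delimiter, each line lands in a single column, which is the same class of symptom the troubleshooting questions describe.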

Metadata import and management: keeping structures in sync

Importing table definitions from datastores brings source and target structure information into Data Services. Metadata browsing lets you explore available tables, views, and columns before importing. You don't have to import everything. Selective import of only the tables you need keeps your repository cleaner.

Refreshing metadata becomes necessary when source structures change. Added a column to your source table? You need to refresh the imported metadata in Data Services so that column becomes available in your dataflows. The exam asks about when and how to refresh, and what happens to existing dataflows when metadata changes.

Datastore performance optimization: making connections faster

Connection pooling reduces the overhead of establishing new database connections for each operation. Configuring appropriate pool sizes based on concurrent job execution patterns improves performance. Parallel processing configurations at the datastore level determine how many simultaneous connections Data Services can open for parallel data extraction or loading.

Best practices vary by platform. SAP HANA benefits from bulk loading operations and reducing round trips. SQL Server performs better with certain isolation levels and batch sizes. Oracle has its own optimization approaches around array fetch sizes and SQL*Loader integration. You don't need to memorize every platform's tuning guide, but understanding that different databases require different approaches is exam-relevant.

Jobs, workflows, and dataflows: the core development objects

This is your 18-22% chunk, and it's absolutely critical. Jobs are the top-level executable objects: what you schedule, what you run, what appears in job execution logs. Jobs contain workflows, scripts, variables, and error handling logic.

Workflow design patterns organize your processing logic. A typical workflow might contain multiple dataflows that run sequentially, or conditional logic that determines which dataflow executes based on row counts or file existence. Looping constructs let you process multiple files or iterate through parameter lists. Try-catch error handling wraps risky operations so failures don't crash the entire job.

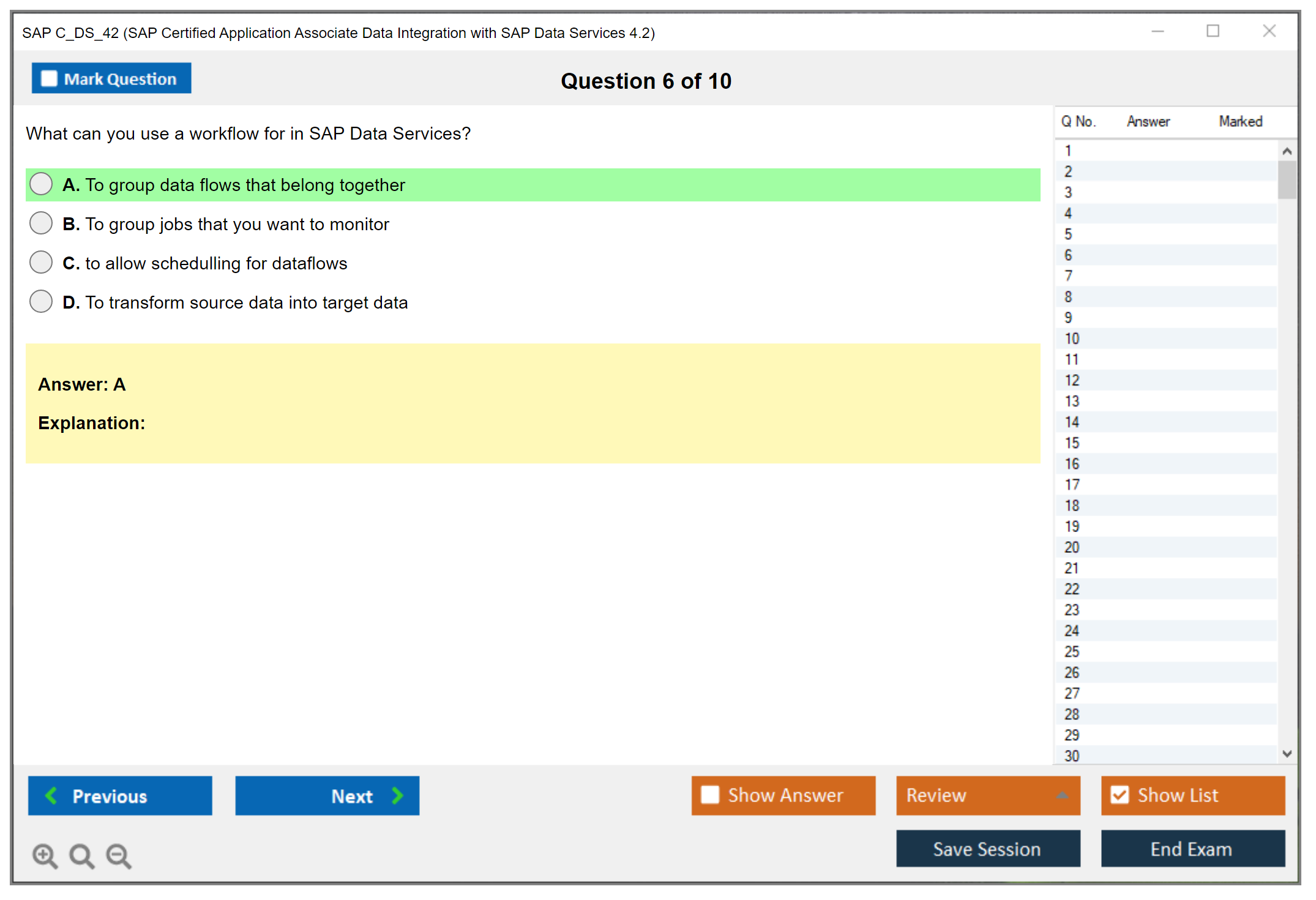

Dataflow architecture is where transformation happens. Every dataflow is a source-to-target mapping container: source tables or files at the start, query transforms and other transformation logic for filtering, sorting, and reshaping in the middle, and target tables at the end. Understanding what belongs in workflows versus what belongs in dataflows is fundamental. Workflows orchestrate, dataflows transform.

Nested dataflows and reusability

Nested dataflows are dataflows called from within other dataflows. When do you use them? Complex transformations that you need in multiple places, modularity for easier testing and maintenance, or when you hit limitations in single-level dataflow complexity.

Performance implications exist though. Nested dataflows add overhead, and sometimes flattening the logic into a single dataflow performs better. The exam presents scenarios where you choose between nested and flat approaches based on requirements.

Execution sequencing and dependencies

Controlling order of operations uses conditional workflows with if-then-else logic, script calls to the wait_for_file function that pause until external files arrive, and explicit dependencies between job components. You can't just drop everything into a workflow and hope it runs in the right order. Data Services processes components based on data flow dependencies and explicit sequencing you configure.

Job parameters and variables create reusability. Parameters pass values in at runtime, such as file paths, date ranges, and filter criteria. Global variables are accessible throughout the job, while local variables are scoped to specific workflows or dataflows. Substitution parameters let you dynamically build SQL statements or file names based on runtime values.

Scheduling and automation integration

Configuring job execution schedules happens in Management Console: daily at 2 AM, hourly during business hours, monthly on the first Sunday. Event-based triggering responds to file arrivals or completion of upstream jobs. Integration with enterprise schedulers like Control-M or Autosys allows Data Services jobs to participate in broader ETL orchestration frameworks.

The C_ACTIVATE13 certification touches on project management aspects of implementing these kinds of integrated solutions, though from a methodology perspective rather than the technical configuration you need for C_DS_42.

Transforms and data manipulation: the 20-25% heavyweight

This is where the exam really digs in. Query transform capabilities include filtering rows with WHERE clauses that use SQL-like syntax, sorting on multiple columns, grouping for aggregations like SUM and COUNT, and HAVING clauses to filter grouped results.

Row generation and data generation transforms create test data or generate sequences. You might generate 1000 rows for testing, or create a sequence of dates between two values. Table comparison transform is huge for change data capture. It identifies inserts, updates, and deletes between source and target datasets, outputting separate streams for each operation type.
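
The comparison idea is easier to remember once you've seen it in code. Below is a plain Python sketch of key-based change detection. It is not how the transform is implemented internally, and the function and column names are made up for illustration.

```python
def compare_tables(source_rows, target_rows, key):
    """Classify rows as INSERT, UPDATE, or DELETE by comparing on a key column.

    Both inputs are lists of dicts. Rows that are identical in source and
    target produce no output at all.
    """
    target_by_key = {row[key]: row for row in target_rows}
    source_keys = set()
    changes = []
    for row in source_rows:
        source_keys.add(row[key])
        existing = target_by_key.get(row[key])
        if existing is None:
            changes.append(("INSERT", row))   # key is new in the source
        elif existing != row:
            changes.append(("UPDATE", row))   # key exists, values differ
    for row in target_rows:
        if row[key] not in source_keys:
            changes.append(("DELETE", row))   # key vanished from the source
    return changes


source = [
    {"id": 1, "name": "Alpha"},
    {"id": 2, "name": "Beta v2"},
    {"id": 4, "name": "Delta"},
]
target = [
    {"id": 1, "name": "Alpha"},
    {"id": 2, "name": "Beta"},
    {"id": 3, "name": "Gamma"},
]

changes = compare_tables(source, target, key="id")
```

Here row 1 is unchanged and drops out, row 2 is an update, row 4 is an insert, and row 3 is a delete. That's the mental model the change data capture questions lean on.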

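Column mappings, usually defined in the Query transform, handle renaming columns, converting data types (string to integer, date format changes), and formula-based calculations using Data Services functions. That ranges from simple stuff like concatenation or substring extraction to complex calculations involving case logic and nested functions. The Map_Operation transform is a different animal: it remaps row operation codes (for example, turning updates into inserts) rather than reshaping columns.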

Merge, join, and combination transforms

Merge transforms combine rows from two or more inputs that share the same schema into one output, much like a SQL UNION ALL. They don't match rows on a key, so reach for a join when you need that. Joins, which you define in the Query transform, cover inner and outer join operations. Performance considerations matter here. Join ordering affects performance, and sometimes pushing join logic to the database performs better than joining in Data Services.

Hierarchy and XML transforms process hierarchical data structures. Flattening XML converts nested XML into relational rows, generating XML creates structured XML output from relational data. These transforms have complex configuration options for handling multiple hierarchy levels and element attributes.

Pivot transforms convert columns to rows, and reverse pivot does the opposite, turning rows into columns. Common in dimensional modeling where you need to convert transactional row data into columnar measures, or flatten dimension attributes from columnar to row format.
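
If the two directions blur together, a tiny Python sketch helps. The functions below are named for what they do to the data, not after the transforms. The `measure` and `value` output columns are hypothetical names picked for this example.

```python
def columns_to_rows(rows, key, value_columns):
    """Turn each listed column into its own (key, measure, value) row."""
    return [
        {key: row[key], "measure": column, "value": row[column]}
        for row in rows
        for column in value_columns
    ]


def rows_to_columns(rows, key, name_field="measure", value_field="value"):
    """Collapse (key, measure, value) rows back into one wide row per key."""
    wide = {}
    for row in rows:
        record = wide.setdefault(row[key], {key: row[key]})
        record[row[name_field]] = row[value_field]
    return list(wide.values())


wide_rows = [{"product": "A", "q1": 10, "q2": 12}]

tall_rows = columns_to_rows(wide_rows, key="product", value_columns=["q1", "q2"])
round_trip = rows_to_columns(tall_rows, key="product")
```

One wide row becomes two tall rows, and collapsing the tall rows gives back the original wide row. When an exam question describes the input and output shapes, sketching that before/after on paper is usually enough to pick the right transform.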

Case, validation, and business rules

Case transforms implement if-then-else logic for routing data or setting values based on conditions. Validation transforms check data against business rules. Is this value within acceptable range, does this field match the required pattern, is this combination of values valid? Rows failing validation can route to error tables, get flagged for review, or trigger alerts.
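
As a rough illustration of that pass/fail routing, here's a Python sketch with two invented rules. The rule names, thresholds, and the `failed_rules` column are assumptions for the example, not anything Data Services defines.

```python
import re

# Hypothetical business rules: each maps a rule name to a check on one row.
RULES = {
    "amount_in_range": lambda row: 0 <= row["amount"] <= 10_000,
    "postal_code_pattern": lambda row: re.fullmatch(r"\d{5}", row["postal_code"]) is not None,
}


def validate(rows, rules=RULES):
    """Split rows into (passed, failed); failed rows record which rules broke."""
    passed, failed = [], []
    for row in rows:
        broken = [name for name, check in rules.items() if not check(row)]
        if broken:
            failed.append({**row, "failed_rules": broken})
        else:
            passed.append(row)
    return passed, failed


rows = [
    {"amount": 50, "postal_code": "12345"},
    {"amount": -1, "postal_code": "ABC"},
]

passed, failed = validate(rows)
```

Carrying the names of the failed rules along with each rejected row is the part worth copying. An error table that only says "bad row" doesn't help whoever has to review it.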

Data transfer and bulk loading optimization uses database-specific bulk loaders: SQL*Loader for Oracle, BCP for SQL Server, specialized HANA loaders. Array fetch sizes control how many rows Data Services retrieves in each database round trip. Larger arrays mean fewer network calls but more memory usage.
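
The array fetch trade-off is easy to see with any database API. The sketch below uses Python's built-in `sqlite3` module as a stand-in. SQLite runs in-process, so there are no real network round trips here. The number of fetch calls just stands in for them. Table and function names are made up.

```python
import sqlite3


def count_fetch_calls(conn, query, array_size):
    """Read all rows in batches of `array_size`; return (row count, batches fetched)."""
    cursor = conn.execute(query)
    calls = 0
    total_rows = 0
    while True:
        batch = cursor.fetchmany(array_size)
        if not batch:
            break
        calls += 1
        total_rows += len(batch)
    return total_rows, calls


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY)")
conn.executemany("INSERT INTO orders (id) VALUES (?)", [(i,) for i in range(1000)])

small_batches = count_fetch_calls(conn, "SELECT id FROM orders", array_size=100)
one_big_batch = count_fetch_calls(conn, "SELECT id FROM orders", array_size=1000)
```

Same 1000 rows either way, but ten batches at size 100 versus a single batch at size 1000. The bigger batch also means holding 1000 rows in memory at once, which is the memory side of the trade-off.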

For those working across different SAP modules, the data quality concepts here overlap with master data concerns in areas like C_MDG_90, though Data Services focuses on the technical implementation rather than governance processes.

Data quality and profiling: the 12-16% quality focus

Data profiling fundamentals involve analyzing source data before building transformations. Profile analysis shows null percentages, distinct value counts, pattern distributions, min and max values. This information drives cleansing decisions. If 30% of phone numbers are null, you need a strategy for handling that.

Interpretation of profiling results identifies data anomalies. Unexpected value distributions might indicate data quality issues. High cardinality in what should be a low-cardinality field suggests inconsistent entry. Profiling guides your understanding of what cleaning you actually need.
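
To make those profile statistics concrete, here's a minimal Python sketch that computes them for one column. It treats `None` as NULL. The function name and the sample phone numbers are made up for illustration.

```python
def profile_column(values):
    """Return basic profile statistics for one column (None means NULL)."""
    non_null = [value for value in values if value is not None]
    total = len(values)
    return {
        "null_pct": 100.0 * (total - len(non_null)) / total if total else 0.0,
        "distinct": len(set(non_null)),
        "min": min(non_null) if non_null else None,
        "max": max(non_null) if non_null else None,
    }


phones = [
    "555-0100", None, "555-0101", None, None,
    "555-0100", "555-0102", "555-0103", "555-0104", "555-0105",
]

stats = profile_column(phones)
```

Three of the ten values are missing, so `null_pct` comes out at 30.0, the same situation as the phone number example above. The distinct count of 6 against 7 non-null values shows one number appears twice.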

Cleansing, matching, and address validation

Data cleansing transforms standardize formats, parse compound fields into components, match records to identify duplicates, and enrich data with additional information. Customer data cleansing might standardize name formats, parse addresses into components, validate against postal databases.

Address cleansing and geocoding uses reference data libraries to standardize addresses and add latitude and longitude coordinates. The exam expects you to understand when address validation is appropriate and what data quality improvements it provides.

Match and merge capabilities identify duplicate records using fuzzy matching algorithms that handle spelling variations, transpositions, and phonetic similarities. Consolidating redundant data after identifying matches requires business rules for choosing which values to keep when duplicates conflict.
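
For a feel of what fuzzy matching does, here's a sketch using `difflib.SequenceMatcher` from the Python standard library. This is a generic string similarity measure swapped in for illustration, not the matching algorithm Data Services uses, and the 0.85 threshold is an arbitrary assumption.

```python
from difflib import SequenceMatcher
from itertools import combinations


def similarity(a, b):
    """Return a 0..1 similarity ratio, ignoring case and outer whitespace."""
    return SequenceMatcher(None, a.lower().strip(), b.lower().strip()).ratio()


def find_candidate_duplicates(names, threshold=0.85):
    """Return every pair of names whose similarity meets the threshold."""
    return [
        (a, b)
        for a, b in combinations(names, 2)
        if similarity(a, b) >= threshold
    ]


names = ["Jon Smith", "John Smith", "Maria Garcia"]
candidates = find_candidate_duplicates(names)
```

"Jon Smith" and "John Smith" get flagged as a candidate pair while "Maria Garcia" is left alone. Deciding which of the two spellings survives is the business-rule part, and no similarity score answers that for you.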

Monitoring, troubleshooting, and performance tuning

Job execution monitoring in Management Console shows real-time progress: which transform is currently executing, how many rows have been processed, and what the throughput rate is. Bottleneck identification comes from seeing which transform is taking longest or where row counts unexpectedly drop.

Log file analysis requires understanding different log types. Trace logs contain detailed execution information, error logs capture failure messages and stack traces, and monitor logs show row counts and elapsed time for each step of a dataflow. Knowing which log to check for which problem saves troubleshooting time.

Error handling strategies in production environments use try-catch blocks around risky operations, error tables to capture rejected records, and notification mechanisms like email alerts when failures occur. The exam presents scenarios where you design appropriate error handling for different failure types.

Performance tuning approaches

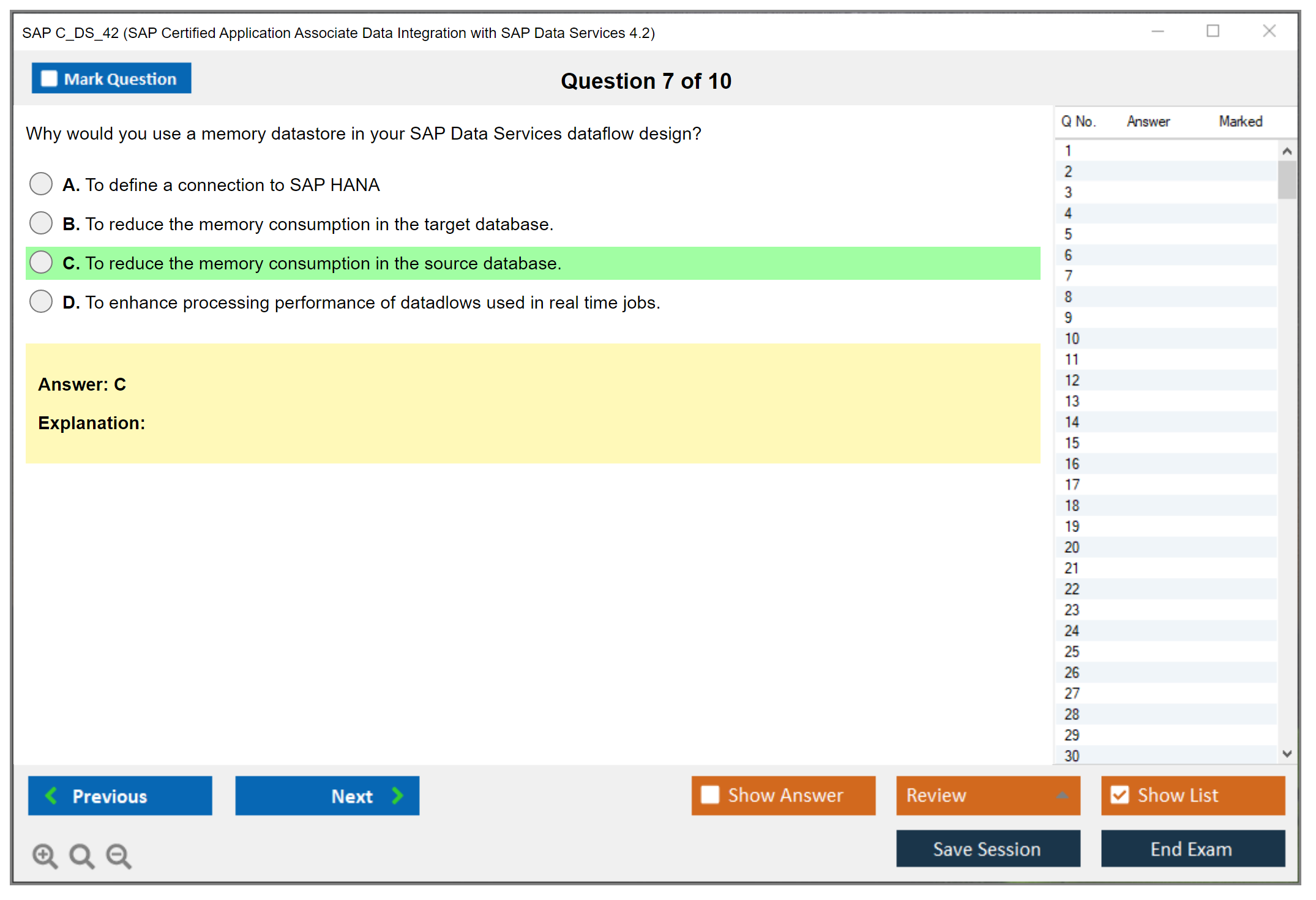

Pushdown optimization moves transformation logic from Data Services to the source database where possible. If your source database can filter and aggregate efficiently, pushing that logic down via SQL reduces the data volume Data Services processes. Parallel processing splits large datasets across multiple threads for simultaneous processing. Cache sizing affects how much data Data Services holds in memory during complex transformations.
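
The pushdown idea can be sketched outside Data Services. The example below uses Python's built-in `sqlite3` module and a made-up `sales` table to compare pulling every row into the client against letting the database filter and aggregate. It shows the principle only, not how the Data Services optimizer decides what to push down.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?)",
    [("EMEA", 100.0), ("EMEA", 250.0), ("APAC", 75.0), ("AMER", 300.0)],
)


def total_without_pushdown(conn, region):
    """Pull every row out, then filter and aggregate in the client."""
    rows = conn.execute("SELECT region, amount FROM sales").fetchall()
    total = sum(amount for row_region, amount in rows if row_region == region)
    return len(rows), total


def total_with_pushdown(conn, region):
    """Let the database filter and aggregate; a single row comes back."""
    rows = conn.execute(
        "SELECT COALESCE(SUM(amount), 0) FROM sales WHERE region = ?",
        (region,),
    ).fetchall()
    return len(rows), rows[0][0]


rows_moved_client_side, client_total = total_without_pushdown(conn, "EMEA")
rows_moved_pushdown, pushdown_total = total_with_pushdown(conn, "EMEA")
```

Both approaches agree on the total, but the first moves four rows to get it and the second moves one. Scale the table up to a few hundred million rows and that difference is the whole performance story.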

Database-specific optimization includes using bulk loaders, tuning fetch array sizes, and adjusting join strategies based on platform capabilities. SAP HANA can handle certain operations incredibly fast if you structure your approach correctly, while traditional databases might need different strategies entirely. I once spent two weeks debugging what turned out to be a simple array size problem that was killing performance on an Oracle extract.

Debugger usage involves setting breakpoints in dataflows, inspecting data values at each transform, and stepping through execution to identify where transformation logic produces unexpected results. It's tedious but necessary when formulas aren't calculating correctly or conditional logic routes data incorrectly.

Security and transport: the smaller but necessary piece

User management and authorization control who can develop versus execute versus administer. Transport mechanisms move objects between development, test, and production environments. Repository export creates ATL files containing job definitions, and import loads those definitions into target repositories.

Best practices include maintaining separate repositories for each environment, testing thoroughly before production promotion, and documenting dependencies so you transport related objects together. This is your 5-8% section. Not huge, but you can't completely ignore it.

The C_DS_42 Practice Exam Questions Pack at $36.99 covers all these topic areas with scenario-based questions that mirror actual exam difficulty. It's one thing to understand concepts, another to apply them.

Prerequisites and Recommended Background Knowledge

Look, SAP's pretty relaxed here with paperwork. Officially, the SAP C_DS_42 certification has no mandatory prerequisites for registration, which means you can sign up for the C_DS_42 exam even if you didn't take a specific SAP course first. That openness is great for self-starters who already live in data tools all day.

That said. Reality bites. Experience matters.

When people ask me what "ready" looks like for SAP Certified Application Associate Data Integration with SAP Data Services 4.2, I point to a very unglamorous number: about 1 to 3 years of hands-on work building and running actual pipelines with SAP Data Services, not toy examples, and not just watching videos, because the exam expects you to recognize how Designer features behave when the data's messy, the source system's slow, and the job log's yelling at you for a reason that's not obvious.

Prerequisites (official vs. recommended)

Official SAP prerequisites are basically: none. SAP doesn't mandate formal prerequisites for C_DS_42 exam registration, allowing motivated candidates to pursue certification independently. Cool. You can buy an attempt and go.

Recommended prerequisites are the stuff that saves you from "I memorized terms but I can't solve problems." The thing is, the SAP Data Services 4.2 certification isn't a pure trivia test. If you've never built a repository, never created datastores, never debugged a failed workflow, the question wording will feel weirdly specific, because it's written by people assuming you've touched the tool.

A good baseline is project exposure where you've done at least some of the following: build jobs and workflows, design dataflows, map source to target with real constraints, set up datastores, schedule runs, and then support the thing after go-live when business users notice a number's off by 2%. That last part teaches you more than any C_DS_42 study guide ever will.

Foundational technical skills you really need

The core foundation is boring database stuff. You need a strong understanding of relational database concepts, SQL, data modeling basics, and ETL methodology, because Data Services is a graphical way of expressing data movement and transformation logic that still behaves like a database-driven system under the hood.

Short version. Know tables. Know keys. Know joins.

If someone says "cardinality" and you freeze, fix that first. If you can't explain why a LEFT OUTER JOIN might explode row counts when the lookup isn't unique, you'll struggle with real-world SAP DS transforms and mappings. The exam questions tend to poke at those "gotchas" because they're exactly what breaks production loads.
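
If you want to see that row explosion for yourself, here's a small sketch using Python's built-in `sqlite3` module. The tables and values are made up. The point is only that a non-unique lookup key multiplies the rows coming out of the join.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (order_id INTEGER, customer_id INTEGER);
    CREATE TABLE customer_lookup (customer_id INTEGER, segment TEXT);

    INSERT INTO orders VALUES (1, 10), (2, 10), (3, 20);

    -- Customer 10 appears twice, so the lookup key is NOT unique.
    INSERT INTO customer_lookup VALUES
        (10, 'Retail'), (10, 'Wholesale'), (20, 'Retail');
""")

source_count = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]

joined_count = conn.execute("""
    SELECT COUNT(*)
    FROM orders o
    LEFT OUTER JOIN customer_lookup c ON c.customer_id = o.customer_id
""").fetchone()[0]
```

Three orders go in and five rows come out, because each order for customer 10 matches both lookup rows. Nothing errored, which is exactly why this one reaches production before anyone notices.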

SQL proficiency (yes, it matters)

SQL's the language Data Services keeps translating your intent into. You don't need to be a query wizard who writes recursive CTEs for fun, but you should be comfortable writing and reading SELECT queries with JOIN, WHERE, GROUP BY, and common aggregate functions, because Data Services builds on SQL-like operations even when you're dragging objects around in Designer.

Here's why this matters in practice: when you build a dataflow with queries, lookups, and aggregations, you're still dealing with join conditions, filter pushdown, grouping logic, and null behavior. When performance is bad, you'll end up thinking in SQL anyway, because you'll be asking "what's the database doing" and "what's DS doing in the engine." You can't troubleshoot that if SQL's a mystery.

One more thing. Nulls are evil. Treat them carefully.

I once spent three hours debugging a customer extract where the root cause turned out to be a single nullable column in a lookup that nobody flagged during design. Three hours. The lesson? Null handling isn't optional trivia, it's survival.
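
Here's the kind of null behavior that bites, sketched with Python's built-in `sqlite3` module and made-up tables. It is not a reconstruction of that extract, just the general trap: in SQL, NULL never equals NULL, so a row with a null key silently falls out of an inner join.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (customer_id INTEGER, region_code TEXT);
    CREATE TABLE regions (region_code TEXT, region_name TEXT);

    INSERT INTO customers VALUES (1, 'N'), (2, NULL);
    INSERT INTO regions VALUES ('N', 'North'), (NULL, 'Unknown');
""")

# Inner join: customer 2 disappears, even though regions has a NULL row too.
inner_rows = conn.execute("""
    SELECT c.customer_id, r.region_name
    FROM customers c
    JOIN regions r ON r.region_code = c.region_code
    ORDER BY c.customer_id
""").fetchall()

# Left join plus an explicit default keeps the row and makes the gap visible.
left_rows = conn.execute("""
    SELECT c.customer_id, COALESCE(r.region_name, 'Unassigned')
    FROM customers c
    LEFT JOIN regions r ON r.region_code = c.region_code
    ORDER BY c.customer_id
""").fetchall()
```

The inner join returns only customer 1. The left join returns both customers, with customer 2 labeled 'Unassigned'. Deciding up front which of those behaviors you want is the design step that usually gets skipped.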

Database knowledge breadth (not just one platform)

The exam's SAP Data Services focused, but your day-to-day isn't. You should be familiar with multiple database platforms like Oracle, SQL Server, and SAP HANA, especially around connection concepts, performance characteristics, and data types, because DS lives and dies by connectivity and metadata.

In real projects, you'll see small differences that matter: date/time precision, string padding, numeric scale behavior, case sensitivity, and how each platform handles indexes and execution plans. A lot of "Data Services problems" are just "the database's doing exactly what you asked but not what you meant."

Also learn the basic components you'll touch: datastores, connection testing, and how metadata gets imported and refreshed. Questions around Data Services jobs, workflows, and datastores often assume you know what's stored where and what breaks when a source column changes.

Data warehousing concepts that show up everywhere

If your background's app dev only, spend time on data warehousing. You should understand dimensional modeling (star schema and snowflake), fact and dimension tables, slowly changing dimensions, and the idea of a data mart architecture. A huge chunk of DS usage is feeding warehouses and marts, not just copying tables.

Slowly changing dimensions are a classic pain point. Type 1 vs Type 2 behavior. Surrogate keys. Effective dates. If those terms feel fuzzy, the tool features will feel fuzzy too, and you'll end up guessing on SAP Data Services exam questions that are actually testing whether you can reason about warehouse patterns.
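
If Type 1 versus Type 2 still feels abstract, this plain Python sketch shows the difference on a one-row dimension. It illustrates the warehouse pattern, not any Data Services transform. The column names (`surrogate_key`, `valid_from`, `valid_to`) are common conventions picked for the example.

```python
from datetime import date


def apply_type1(dimension, business_key, new_attrs):
    """Type 1: overwrite attributes in place; no history is kept."""
    for row in dimension:
        if row["customer_id"] == business_key:
            row.update(new_attrs)
    return dimension


def apply_type2(dimension, business_key, new_attrs, effective):
    """Type 2: close the current row and append a new versioned row."""
    current = next(
        row for row in dimension
        if row["customer_id"] == business_key and row["valid_to"] is None
    )
    current["valid_to"] = effective
    next_key = max(row["surrogate_key"] for row in dimension) + 1
    dimension.append({
        **current,
        **new_attrs,
        "surrogate_key": next_key,
        "valid_from": effective,
        "valid_to": None,
    })
    return dimension


def starting_dimension():
    """Build a fresh one-row dimension for each example."""
    return [{
        "surrogate_key": 1, "customer_id": 10, "city": "Berlin",
        "valid_from": date(2020, 1, 1), "valid_to": None,
    }]


type1_result = apply_type1(starting_dimension(), 10, {"city": "Munich"})
type2_result = apply_type2(starting_dimension(), 10, {"city": "Munich"}, date(2024, 6, 1))
```

Type 1 leaves a single row that now says Munich, and the fact that the customer was ever in Berlin is gone. Type 2 leaves two rows: the Berlin row closed off on the effective date, and a new Munich row with its own surrogate key.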

ETL methodology foundation (the stuff behind the tool)

ETL methodology isn't optional. You should know extract-transform-load patterns, data staging concepts, incremental vs. full load strategies, and change data capture approaches. Even if the exam doesn't ask you to implement CDC step-by-step, it'll expect you to understand why you'd choose one approach over another.

Full load's simple. Incremental's realistic. CDC's political.

And yes, staging. People skip staging in labs and then wonder why production's painful. Knowing when to land raw data, when to standardize it, and when to publish it is part of being effective with ETL with SAP Data Services. It bleeds into design and troubleshooting questions.
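
The full versus incremental distinction is easier to reason about with a toy example. The sketch below is plain Python with made-up rows and a timestamp watermark. It assumes the source has a reliable `modified_at` column, which is itself one of the things you'd need to verify on a real project.

```python
def full_load(source_rows):
    """Full load: replace the target with everything in the source."""
    return list(source_rows)


def incremental_load(source_rows, target_rows, last_watermark):
    """Incremental load: upsert only rows modified after the stored watermark.

    Returns (new target rows, new watermark, number of rows processed).
    """
    changed = [row for row in source_rows if row["modified_at"] > last_watermark]
    merged = {row["id"]: row for row in target_rows}
    for row in changed:
        merged[row["id"]] = row
    new_watermark = max((row["modified_at"] for row in changed), default=last_watermark)
    return list(merged.values()), new_watermark, len(changed)


# ISO date strings compare correctly as plain strings.
source = [
    {"id": 1, "status": "open", "modified_at": "2024-01-01"},
    {"id": 2, "status": "closed", "modified_at": "2024-03-01"},
    {"id": 3, "status": "open", "modified_at": "2024-03-05"},
]
target = [
    {"id": 1, "status": "open", "modified_at": "2024-01-01"},
    {"id": 2, "status": "open", "modified_at": "2024-02-01"},
]

new_target, new_watermark, processed = incremental_load(source, target, "2024-02-15")
```

Only two of the three source rows get touched, and the watermark moves forward to the latest change. Notice what the sketch can't do: if a row is deleted at the source, a timestamp watermark never sees it. That gap is one reason change data capture approaches exist.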

SAP ecosystem familiarity (enough to not be lost)

You don't need to be an ABAP developer, but you should have basic understanding of SAP ECC or S/4HANA architecture and table structures like transparent tables and cluster tables, plus familiarity with common modules like FI, CO, SD, and MM. DS integrations often pull from SAP sources where naming and relationships aren't intuitive if you've never seen them.

Even basic business context helps. If you understand order-to-cash and procure-to-pay at a high level, requirements make more sense, and mapping discussions stop sounding like random acronyms. It also helps you sanity-check data loads, because you can spot when a "sales order" extract is missing the documents you'd expect.

Operating system and networking basics (the unsexy prerequisites)

You'll touch servers. So you should be comfortable with Windows or Linux/Unix environments where Data Services components are installed and configured. Not advanced admin stuff, but enough to find logs, understand services, and not panic when a port's blocked.

Client-server basics matter too: TCP/IP concepts, how distributed installations connect, and basic connectivity troubleshooting. DS often has Designer on a workstation, Job Server on another machine, and databases somewhere else. When it fails you need to reason through "what talks to what" without guessing.

Ports. Firewalls. Name resolution.
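For the "what talks to what" reasoning, a tiny connectivity check goes a long way. The sketch below uses only Python's standard `socket` module; the host names and port numbers you'd pass in are yours to supply (nothing here assumes specific Data Services ports).

```python
import socket

def resolves(hostname):
    """Return True if the hostname resolves to at least one address."""
    try:
        socket.getaddrinfo(hostname, None)
        return True
    except socket.gaierror:
        return False

def port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False
```

Check name resolution first, then the port. A name that doesn't resolve and a port that's blocked look identical from inside a failing job, but they land on different people's desks.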

Data quality concepts (because DS is not only ETL)

Data Services includes data quality capabilities, so you should at least be aware of data profiling, cleansing, standardization, matching, and basic master data management ideas. You don't need to be a full-time MDM person, but you should understand what profiling tells you, why standardization rules exist, and what matching is trying to do. Data profiling and data quality are part of what the platform is known for.
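To see what profiling actually tells you, here's a small Python sketch of a column profile. It's a stand-in for the idea, not the Data Services profiler; the `cities` sample and the choice of statistics are assumptions for illustration.

```python
def profile_column(values):
    """Summarize one column: row count, nulls/blanks, distinct values, length range."""
    non_null = [v for v in values if v is not None and str(v).strip() != ""]
    lengths = [len(str(v)) for v in non_null]
    return {
        "rows": len(values),
        "nulls": len(values) - len(non_null),
        "distinct": len(set(non_null)),
        "min_len": min(lengths, default=0),
        "max_len": max(lengths, default=0),
    }

cities = ["Berlin", "berlin", None, "Hamburg", "", "Berlin"]
stats = profile_column(cities)
```

The distinct count comes out as three even though there are only two real cities, because "Berlin" and "berlin" differ in case. That kind of finding is exactly why standardization rules exist.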

Project experience and lifecycle exposure

Project lifecycle exposure is a big deal: requirements gathering, design, development, testing, and production deployment. Even one full cycle teaches you how specs turn into mappings, how test cases find edge cases, and how production support changes your design priorities overnight.

If you've only built jobs in isolation, you might pass, sure, but you'll be fragile. The exam tends to reward people who've seen the whole flow, especially around monitoring, error handling, and operational thinking tied to the SAP Data Services repository and management console.

Training, Learning Hub, and practice environment (where most people mess up)

SAP offers official instructor-led training "DS42 - Data Services 4.2 Development" and it's the cleanest way to cover the platform end-to-end, especially if you like structured coverage mapped to C_DS_42 exam objectives. SAP Learning Hub is also a solid boost because it bundles e-learning, aligned materials, and often system access for practice. That matters because theoretical knowledge alone isn't enough for this exam.

You need hands-on. Period.

You need an actual Data Services installation where you can practice job development, repository setup, and running loads. Otherwise the UI terms and configuration details won't stick, and you'll burn time memorizing screenshots instead of building muscle memory.

System access options vary: personal installation (resource-intensive), cloud-based training systems, employer dev environments, or SAP Learning Hub integrated systems. If you install locally, plan for it: Data Services Designer wants significant RAM, with 8GB minimum and 16GB recommended, and you'll need a compatible database for the repository. Setup friction is part of the learning, because it forces you to understand what components exist and why.

Practice projects that actually build exam-ready skill

If you want practice that maps to real DS work, pick small projects with real constraints. A customer data migration job is a great start because it forces you to deal with duplicates, standardization, and survivorship rules. A sales data warehouse ETL process is solid too because it touches facts, dimensions, and incremental loads. Implementing a product master data quality solution is another strong one if you want to get comfortable with profiling and cleansing transforms, even at a basic level.
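For the customer migration idea, it helps to see duplicates, standardization, and survivorship in one place. This Python sketch is a toy version: matching on a standardized email and letting the most recently updated record win are both assumed rules chosen for illustration, and real projects usually need something richer.

```python
from datetime import date

def standardize_email(email):
    """Standardize the match key so trivial variations don't hide duplicates."""
    return email.strip().lower()

def survive(records):
    """Group by standardized email; keep the most recently updated record per group."""
    best = {}
    for rec in records:
        key = standardize_email(rec["email"])
        current = best.get(key)
        if current is None or rec["updated"] > current["updated"]:
            best[key] = {**rec, "email": key}
    return list(best.values())

customers = [
    {"email": "Ann@Example.com ", "phone": "111", "updated": date(2023, 5, 1)},
    {"email": "ann@example.com", "phone": "222", "updated": date(2024, 2, 1)},
    {"email": "bob@example.com", "phone": "333", "updated": date(2022, 9, 9)},
]
golden = survive(customers)
```

Try changing the rule to "keep the record with the most filled-in fields" and watch the output change. Arguing about which rule is right is most of what a survivorship discussion is.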

Other ideas exist. Keep it simple. Make it run daily.

If you want extra drills, a C_DS_42 practice test can help you identify weak areas fast, but don't let practice questions replace lab time. If you do want a pack to sanity-check readiness, the C_DS_42 Practice Exam Questions Pack is a decent option to pressure-test recall after you've already built things, and it can highlight gaps you missed in your own notes.

Alternative paths, extra certs, and how to assess gaps

Some candidates pass through intensive self-study with extensive lab practice even without formal project experience. I've seen it happen, but the common denominator is always the same: lots of time in the tool, lots of failure, lots of fixing.

Complementary certifications can help with context. SAP BW or SAP HANA background makes warehousing and modeling feel more natural, and database certifications like Oracle OCP or Microsoft MCSA (or modern equivalents) help because you already think in query plans, datatypes, and constraints.

Last piece: documentation reading skills. You need to be able to work through SAP docs, release notes, and technical guides without getting lost, because that's how you resolve ambiguity on the job and how you stay aligned with what SAP says the product does. SAP Community forums and practitioner blogs also help, because you'll see the "this is what happens in real installs" side of things, which is often missing from polished training slides.

Do a gap assessment. Be honest. Map what you know to the C_DS_42 exam objectives, then pick two weak areas and fix them with labs, not just reading. If you're using something like the C_DS_42 Practice Exam Questions Pack as a checkpoint, treat wrong answers as a to-do list for your next hands-on session, not as a score to brag about.

Study Plan and Preparation Strategy

Planning your C_DS_42 study timeline

Most people need 6-8 weeks to properly prep for the SAP C_DS_42 certification, putting in about 10-15 hours weekly. Not gonna lie, I've seen experienced folks crush it in 3-4 weeks, but they're using Data Services literally every single day at work and just need to fill knowledge gaps for exam-specific topics. You know, those weird theoretical questions that don't come up when you're actually building jobs and solving real problems. The key is being honest about where you stand right now with SAP Data Services 4.2.

Daily DS transforms? Building jobs, troubleshooting errors? You probably know more than you think. You might be ready for the accelerated track. But if you're coming from a different ETL tool or just learning data integration concepts, honestly, give yourself the full 10-12 weeks. Rushing through foundational stuff creates gaps that'll haunt you during the exam.

I mean, cramming might work for some exams. This isn't one of them.

The accelerated study track for experienced practitioners

This 3-4 week sprint works if you've got 2+ years of daily Data Services usage under your belt. You already know how to build dataflows and troubleshoot job failures. Your focus should be exam-specific topics and whatever gaps exist in your practical knowledge. Maybe you've never touched data profiling features because your team doesn't use them. That's what you need to nail down.

The C_DS_42 Practice Exam Questions Pack becomes critical here since you need to identify blind spots fast. Take a diagnostic practice test in week one. Horrible score on data quality questions? That's your week two focus. Performance optimization weak? Dedicate week three to pushdown operations and parallel processing experiments.

You're not learning from scratch. You're filling gaps and learning how SAP phrases exam questions, which honestly feels different from real-world problem-solving. The exam asks about theoretical best practices while you've been making pragmatic decisions based on actual system constraints. Sometimes those don't line up.

Standard study track for the typical candidate

Six to eight weeks works for most candidates who have some Data Services exposure but need full coverage. Maybe you've worked on a couple of projects, touched the Designer interface, built basic jobs, but you need structured learning to connect all the pieces and understand the full platform capabilities. There's a lot more to DS than just dragging transforms around, turns out.

Week by week, you're building up knowledge instead of jumping around. This approach gives you time to practice, make mistakes, review, and actually retain information. The weekly structure I recommend: 40% hands-on practice, 30% reading documentation and guides, 20% video tutorials, 10% practice questions. That ratio matters because Data Services is a hands-on tool. You can't learn it by reading alone.

My neighbor tried that once with a different cert. Didn't go well.

Extended timeline for career changers

Ten to twelve weeks suits career changers or people completely new to Data Services. They need foundational learning time. ETL concepts, data warehousing basics, how SAP Data Services fits into the larger data integration space, all this takes time to absorb. If you're coming from something like SAP S/4HANA financial accounting or SAP Commerce Cloud development, the mindset shift alone requires adjustment.

Don't rush this. The extended timeline allows for practice without feeling overwhelmed. You'll build confidence gradually, which honestly matters more than people realize when you're sitting for the actual C_DS_42 exam.

Breaking down the first two weeks

Weeks 1-2 are all about the architecture overview and getting comfortable in the Designer interface. You need to understand repository concepts, including how the central, local, and profiler repositories relate to each other. Basic job, workflow, and dataflow structures come next. Simple source-to-target mappings should feel natural by week two.

Spend real time in the Designer. Click everything. Break things. Figure out how to navigate between different views. The interface isn't exactly intuitive at first, but after a few dozen hours, muscle memory kicks in. Create a basic job that reads a flat file and writes to a database table. Boring? Sure. Essential? Absolutely.
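If you want to see the shape of that first flat-file-to-table job outside the tool, here's a minimal Python sketch using the standard `csv` and `sqlite3` modules. It is not how Data Services does it internally; the `orders` table, its columns, and the in-memory file are all assumptions for illustration.

```python
import csv
import io
import sqlite3

# Stand-in for a flat file on disk; the columns are assumptions.
FLAT_FILE = io.StringIO("id,name,amount\n1,Acme,10.50\n2,Globex,7.25\n")

def load_flat_file(handle, conn):
    """Read delimited rows, cast them to target types, and insert them in one transaction."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders (id INTEGER PRIMARY KEY, name TEXT, amount REAL)"
    )
    rows = [(int(r["id"]), r["name"], float(r["amount"])) for r in csv.DictReader(handle)]
    with conn:  # commits on success, rolls back if an insert fails
        conn.executemany("INSERT INTO orders (id, name, amount) VALUES (?, ?, ?)", rows)
    return len(rows)

conn = sqlite3.connect(":memory:")
loaded = load_flat_file(FLAT_FILE, conn)
```

The same three concerns show up in the Designer version: a file format definition, a datatype mapping, and a target table with a key. Run the load twice and the primary key violation is your first lesson in rerunnable jobs.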

Weeks 3-4 dive into transforms

This is where things get interesting. This phase is a transform deep-dive covering the Query transform (including joins), Merge, Table_Comparison, and data manipulation operations. Each transform has specific use cases, syntax requirements, and performance implications. The Query transform alone deserves several hours of practice: filtering conditions, aggregate functions, GROUP BY logic, all that jazz.

Join operations trip people up constantly. Understand inner vs. outer joins, how Data Services processes joins differently than databases, and when to join sources in a Query transform vs. stack same-structure inputs with a Merge transform. Build practical exercises where you combine data from multiple sources, handle mismatched keys, and deal with duplicate records. The C_DS_42 Practice Exam Questions Pack includes transform-specific scenarios that mirror exam questions surprisingly well.
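Here's a small Python sketch of the join semantics worth having in your head before you touch the transforms. The sample data is made up, and `union_all` is only my shorthand for what a merge of same-structure inputs does conceptually (stack the rows, keep duplicates); check the transform reference for the exact behavior in your version.

```python
customers = [{"cust_id": 1, "name": "Acme"}, {"cust_id": 2, "name": "Globex"}]
orders = [{"order_id": 10, "cust_id": 1}, {"order_id": 11, "cust_id": 3}]

def _index(rows, key):
    """Group rows by join key so lookups don't rescan the whole input."""
    index = {}
    for row in rows:
        index.setdefault(row[key], []).append(row)
    return index

def inner_join(left, right, key):
    """Keep only left rows that have at least one match on the right."""
    index = _index(right, key)
    return [{**l, **r} for l in left for r in index.get(l[key], [])]

def left_outer_join(left, right, key):
    """Keep every left row; fill right-side columns with None when nothing matches."""
    index = _index(right, key)
    right_cols = {c for r in right for c in r if c != key}
    out = []
    for l in left:
        matches = index.get(l[key])
        if matches:
            out.extend({**l, **r} for r in matches)
        else:
            out.append({**l, **{c: None for c in right_cols}})
    return out

def union_all(*inputs):
    """Stack rows from inputs that share the same columns; duplicates are kept."""
    return [row for rows in inputs for row in rows]

inner = inner_join(customers, orders, "cust_id")
outer = left_outer_join(customers, orders, "cust_id")
```

Order 11 points at a customer that doesn't exist, so it drops out of both results, while customer 2 survives only in the outer join. Mismatched keys like that are where most "my row counts are off" questions start.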

Table comparison transforms seem straightforward until you're dealing with large datasets and trying to optimize performance while tracking deltas. Practice with real scenarios where you need to identify inserts, updates, and deletes between source and target datasets. Not as simple as it sounds.
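The core idea of delta detection fits in a few lines of Python. This is a sketch of the concept only, not the Table_Comparison transform's implementation; the function name, the key, and the list of compare columns are assumptions for illustration.

```python
def compare_tables(source, target, key, compare_cols):
    """Classify rows as inserts, updates, or deletes by comparing source to target."""
    src = {r[key]: r for r in source}
    tgt = {r[key]: r for r in target}
    inserts = [src[k] for k in src if k not in tgt]
    deletes = [tgt[k] for k in tgt if k not in src]
    updates = [
        src[k] for k in src
        if k in tgt and any(src[k][c] != tgt[k][c] for c in compare_cols)
    ]
    return inserts, updates, deletes

source = [
    {"id": 1, "city": "Berlin"},
    {"id": 2, "city": "Hamburg"},
    {"id": 4, "city": "Bonn"},
]
target = [
    {"id": 1, "city": "Berlin"},
    {"id": 2, "city": "Munich"},
    {"id": 3, "city": "Kiel"},
]
inserts, updates, deletes = compare_tables(source, target, "id", ["city"])
```

This version holds both sides in memory, which is fine for a lab and a problem for large datasets. That trade-off between caching the comparison table and looking rows up one at a time is the performance angle to practice.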

Weeks 5-6 focus on quality and performance

Data quality and profiling capabilities become your focus here. Most practitioners skip this stuff in real projects because of time constraints, but the exam definitely covers it. Profiling data sources, creating data quality rules, and implementing validation logic are all exam topics that require hands-on practice to understand properly.

Performance optimization techniques matter more for the exam than you'd expect. Pushdown optimization (when Data Services pushes processing to the database vs. processing in the engine), parallel processing settings, and cache configurations all appear frequently. Error handling patterns and monitoring approaches round out this phase. Learn how to interpret execution logs, understand error propagation, and implement proper exception handling.
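Pushdown is easier to reason about once you've seen the two options side by side. The Python sketch below uses an in-memory SQLite database as a stand-in for the source system; the `sales` table and both function names are assumptions for illustration, and it says nothing about how the Data Services optimizer actually decides what to push down.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (id INTEGER, region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [(i, "EMEA" if i % 2 else "APJ", float(i)) for i in range(1, 1001)],
)

def total_in_engine(conn, region):
    """Fetch every row, then filter and aggregate in the client process."""
    rows = conn.execute("SELECT region, amount FROM sales").fetchall()
    return len(rows), sum(amount for r, amount in rows if r == region)

def total_pushed_down(conn, region):
    """Let the database filter and aggregate; only the result row comes back."""
    rows = conn.execute(
        "SELECT SUM(amount) FROM sales WHERE region = ?", (region,)
    ).fetchall()
    return len(rows), rows[0][0]

fetched_engine, total_engine = total_in_engine(conn, "EMEA")
fetched_pushed, total_pushed = total_pushed_down(conn, "EMEA")
```

Both paths return the same total, but the first one moves a thousand rows to get it and the second moves one. Scale that to millions of rows over a network and you have the whole argument for checking what SQL your dataflow actually generates.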

Final weeks bring everything together

Weeks 7-8 focus on advanced topics review, integrated practice projects, and practice test sessions. You're building complete end-to-end scenarios that incorporate everything you've learned. Multi-source jobs with complex transformations, error handling, performance optimization, and data quality validation all in one project.

This is when weak areas get reinforced. If your practice tests show consistent struggles with specific transform types or architecture concepts, drill down on those. The C_DS_42 exam doesn't let you skip topics. You need knowledge across all exam objectives.

Daily study routine that actually works

Morning theory review works well: 30-45 minutes reading documentation or watching tutorials before work. Your brain's fresh, so you can absorb concepts better. Lunch break practice questions, maybe 15-20 minutes, keep information active in your mind throughout the day. Evening hands-on lab work, 1-2 hours, is where real learning happens.

Consistency beats intensity here. Two hours daily for six weeks produces better results than weekend cramming sessions. Your brain needs time to process and consolidate information, especially with complex technical material like SAP Data Services architecture and transform operations.

Prioritizing hands-on practice

Real talk? Building complete jobs from requirements teaches you how different components connect. Working through realistic scenarios cements understanding. Troubleshooting deliberate errors, intentionally breaking things to see what happens, prepares you for exam scenarios about identifying problems. Playing with performance scenarios helps you understand the impact of different configuration choices.

Similar to how SAP Activate project managers need practical implementation experience beyond theoretical knowledge, Data Services certification requires hands-on competency. You can't fake understanding when the exam asks specific questions about transform behavior or error handling approaches.

Documentation focus areas

The Data Services Designer Guide, Administrator Guide, Performance Optimization Guide, and Transform Reference sections are your primary resources. Don't try reading everything cover-to-cover; that's hundreds of pages of dense technical content. Instead, focus on sections relevant to exam objectives and topics where you need deeper understanding.

Create a personal reference guide as you study. Transform syntax examples, common patterns, troubleshooting steps, performance tips, all organized for quick review. This becomes valuable during final exam prep when you need fast refreshers on specific topics.

Practice projects that build skills progressively

Start simple. Flat-file-to-database loads. No fancy transforms, just reading data and writing it somewhere else. Then progress to multi-source joins where you're combining customer data from one source with transaction data from another. Advance to complex transformations with error handling, data quality validation, and performance optimization considerations.