Google Professional Cloud Database Engineer Certification Overview

What this certification actually means for your career

Real talk? The Google Professional Cloud Database Engineer certification separates the pretenders from the practitioners. Anyone can spin up a Cloud SQL instance. I mean, it's basically just clicking buttons in the console. But designing a multi-region, highly available database architecture that doesn't completely blow your budget while maintaining performance under real-world conditions where traffic patterns are unpredictable and business requirements keep shifting? That's a whole different ball game.

This certification validates you can design, manage, and optimize database solutions on Google Cloud Platform. We're talking migration strategies, performance tuning, keeping databases running when your app suddenly gets featured on TechCrunch and traffic spikes 1000%. Sure, it's industry-recognized. More importantly? It shows you understand how Cloud SQL, Cloud Spanner, Firestore, Bigtable, and the whole GCP database ecosystem actually work together rather than just existing as separate services.

What I like about this cert is it differentiates you from general cloud practitioners who know a bit about everything but can't really dive deep. Enterprises need specialists. They need people who can architect scalable, secure, cost-effective database solutions, and this certification signals you're that person. The thing is, it lines up pretty well with what companies actually need during cloud migrations or when building cloud-native applications from scratch.

Who actually benefits from taking this exam

Not everyone needs this. Let's be honest.

If you're just starting out in cloud, go get the Associate Cloud Engineer cert first. But database administrators with 3+ years of experience who want to validate cloud skills? This is your path.

Cloud architects focusing on data infrastructure should definitely consider this. Database engineers responsible for migrating on-premises databases to Google Cloud will find the exam content directly applicable to their daily work. Like, I'm talking about the exact scenarios you're dealing with on Tuesday afternoon when the migration plan hits unexpected schema incompatibilities. DevOps engineers managing database operations in production can use this to formalize what they already know and fill in gaps they didn't know existed.

Solution architects designing multi-database setups for enterprise applications? Absolutely. You're probably already doing half this stuff, might as well get recognized. IT professionals transitioning from traditional database roles to cloud-native positions will find this certification helps bridge that gap and makes the resume stronger during the transition.

I've seen people with only 1-2 years of experience try this exam and struggle hard. The questions assume you've actually dealt with database performance issues, planned migrations, handled production incidents at 3 AM when everything's on fire.

You can't just memorize dumps and pass.

Actually reminds me of a DBA I worked with who thought his decade of Oracle experience would translate directly. Spent two weeks cramming before the exam, confident he'd ace it because "databases are databases." Failed spectacularly. Turned out knowing B-tree indexes inside and out doesn't help much when the exam asks about Spanner's TrueTime API or Bigtable row key design for time-series data. Came back three months later, humbled, actually used GCP for a real project, passed easily.

The actual career impact and what it's worth

Here's where it gets interesting. Certified professionals typically see salary bumps of 15-25% compared to non-certified peers in similar roles. Real money. But beyond direct compensation, there's boosted credibility when you're in those architecture meetings and people are debating whether to use Spanner or Cloud SQL for a new application and your opinion actually carries weight.

The job market for GCP database specialists is growing faster than a poorly indexed table on a viral application. Having this certification gives you a competitive advantage because enterprises are actively seeking validated Google Cloud database expertise. I've seen recruiters specifically filter for this cert when looking for senior database roles, which is both encouraging and slightly frustrating if you don't have it yet.

You also get access to the exclusive Google Cloud certified community and networking opportunities. Sounds like marketing fluff, I know. But it actually matters when you're trying to solve weird edge cases or looking for your next role and someone in that community can make an introduction. It positions you for senior-level database architect and principal engineer roles that you might not even be considered for otherwise.

The certification works really well alongside other Google Cloud certifications like Professional Cloud Architect or Professional Data Engineer. Each one reinforces the others and shows you understand the broader ecosystem, not just one narrow slice of technology.

Validity period and what employers actually think

Valid for two years from the date you pass. Yeah, you'll need to recertify, which some people complain about. But honestly? Technology changes fast enough that two years is reasonable. It's recognized globally by enterprises adopting Google Cloud Platform, and I've seen it make a real difference in hiring decisions. Like, the difference between getting an interview or having your resume filtered out by automated systems.

It demonstrates commitment to continuous learning and professional growth. Matters more than people think. Anyone can coast on old knowledge for a while, but keeping current shows you're serious about the craft rather than just collecting certifications and letting them expire. Organizations undergoing digital transformation and cloud migration initiatives specifically value this certification because it means they don't have to train you from scratch on GCP database services.

What you actually need to know to pass

The certification validates some pretty specific competencies.

Design and implementation of highly available and scalable database solutions is the big one. You need to understand how to architect databases that don't fall over when traffic spikes or when an entire region goes down because of some infrastructure issue outside your control.

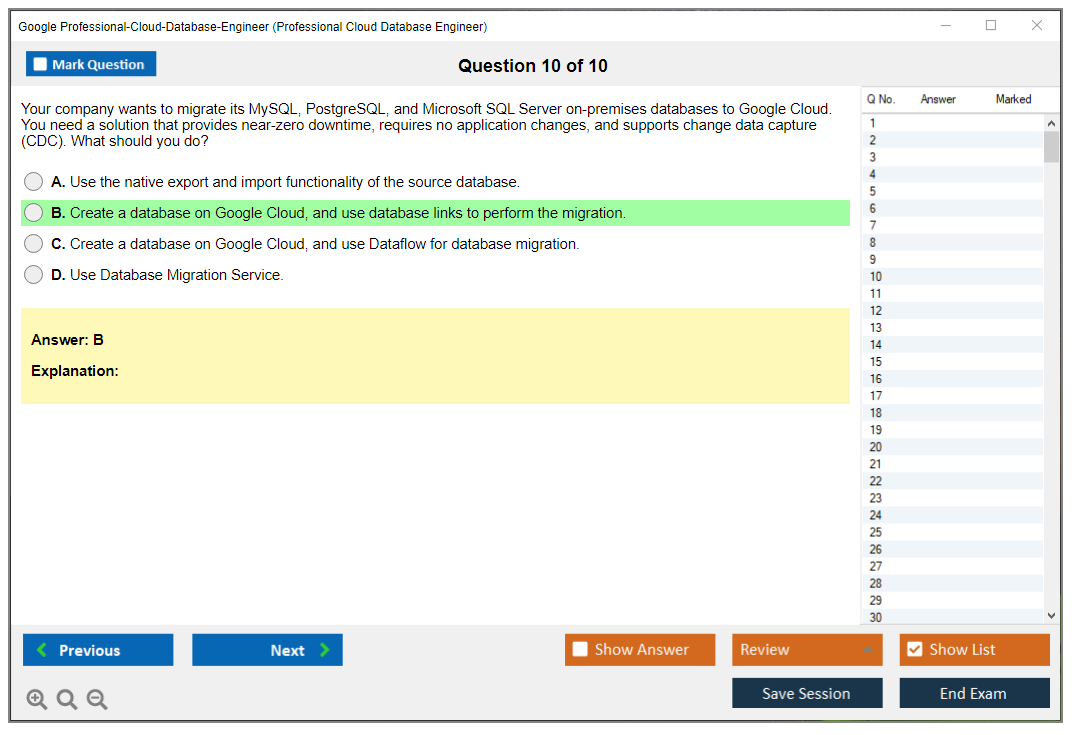

Database migration planning and execution from on-premises to cloud environments comes up constantly on the exam. You'll need to know Database Migration Service, how to handle minimal downtime migrations, what to do when things go wrong. Because they always do. Performance optimization and troubleshooting across multiple database engines means you can't just focus on one technology and call it a day.

The exam covers Cloud SQL (MySQL, PostgreSQL, and SQL Server), Spanner, Firestore, Bigtable, and even touches on Dataproc for big data workloads. Security implementation gets heavy emphasis too. Encryption, access controls, and compliance requirements matter, especially for anyone working in regulated industries like healthcare or finance where a mistake can mean massive fines.

Cost optimization strategies matter because nobody wants to be the person who racks up $50,000 in database costs in a month. Disaster recovery and business continuity planning for mission-critical databases rounds out the core competencies. You need to understand RPO, RTO, and how to actually implement backup and restore procedures that work under pressure, not just in theory.
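To make RPO and RTO a bit more concrete, here's a minimal sketch in Python. It assumes the only protection is periodic backups (no point-in-time recovery, no replication), so the worst-case data loss equals the backup interval. The `RecoveryPlan` class, the `meets_targets` helper, and every number below are made up for illustration and don't come from any Google tool.

```python
from dataclasses import dataclass


@dataclass
class RecoveryPlan:
    backup_interval_min: float   # minutes between successive backups
    restore_duration_min: float  # measured minutes to restore and resume service


def meets_targets(plan: RecoveryPlan, rpo_min: float, rto_min: float) -> dict:
    """Compare a backup-only plan against RPO/RTO targets (all values in minutes)."""
    # With periodic backups alone, a failure just before the next backup
    # loses everything written since the previous one.
    worst_case_loss_min = plan.backup_interval_min
    return {
        "rpo_ok": worst_case_loss_min <= rpo_min,
        "rto_ok": plan.restore_duration_min <= rto_min,
    }


# Hypothetical numbers: nightly backups, a 90-minute rehearsed restore,
# and targets of RPO = 15 minutes, RTO = 2 hours.
nightly = RecoveryPlan(backup_interval_min=24 * 60, restore_duration_min=90)
result = meets_targets(nightly, rpo_min=15, rto_min=120)
print(result)
```

Under these assumptions the plan meets the RTO but misses the RPO by a wide margin, which is the kind of gap exam scenarios expect you to spot before you pick a backup strategy.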

How this differs from other Google certs

This certification is more focused than the Associate Cloud Engineer certification, which is more of a generalist entry-level cert. It has a deeper database focus than Professional Cloud Architect, which covers databases but also networking, compute, security, and everything else under the cloud umbrella.

It's complementary to Professional Data Engineer but emphasizes operational database management rather than data pipelines and analytics. The Data Engineer cert is more about BigQuery, Dataflow, ETL processes, and batch processing workflows. This one? It's about keeping transactional databases running smoothly when users are actively reading and writing data.

The key difference is this requires hands-on experience with specific database services rather than broad cloud knowledge. You can't fake your way through questions about Spanner internals or Bigtable schema design if you've never actually used them in production and dealt with the real-world tradeoffs.

What you'll actually do with this knowledge

In real-world terms, you'll be building database solutions for applications with millions of users. Managing database migrations with minimal downtime requirements becomes part of your toolkit. I've done migrations where we had a four-hour maintenance window to move 2TB of data with zero acceptable data loss, and the exam content directly applies to planning those scenarios where everything needs to go right the first time.
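The arithmetic behind a window like that is worth doing before anything else. Here's a rough sketch, assuming decimal units (1 TB = 1,000,000 MB) and a hypothetical `usable_fraction` parameter for the share of the window that's left for the actual copy once validation and cutover are carved out. The function name and the 50% figure are my own assumptions, not part of any migration tool.

```python
def required_throughput_mb_per_s(
    data_tb: float, window_hours: float, usable_fraction: float = 1.0
) -> float:
    """Sustained MB/s needed to copy data_tb within the usable part of the window."""
    if window_hours <= 0 or not 0 < usable_fraction <= 1:
        raise ValueError("window must be positive and usable_fraction in (0, 1]")
    total_mb = data_tb * 1_000_000                      # decimal terabytes to megabytes
    usable_seconds = window_hours * 3600 * usable_fraction
    return total_mb / usable_seconds


# 2 TB in a four-hour window, using the whole window for the copy.
full_window = required_throughput_mb_per_s(2, 4)
# Same job if only half the window is available for copying (assumed split).
half_window = required_throughput_mb_per_s(2, 4, usable_fraction=0.5)

print(f"whole window: {full_window:.1f} MB/s")
print(f"half window:  {half_window:.1f} MB/s")
```

That works out to roughly 139 MB/s sustained if the copy gets the entire window, and about 278 MB/s if it only gets half. If your network or source database can't hold that rate, the plan needs continuous replication ahead of cutover rather than a one-shot copy.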

Implementing automated backup and recovery procedures sounds boring. Until you need to restore a database at 2 AM and your manual procedures don't work and executives are breathing down your neck about revenue loss. Optimizing query performance and reducing database operational costs is something you'll do constantly. The thing is, small optimizations compound into massive savings over time.

Designing multi-region database setups for global applications requires understanding replication, consistency models, and network latency. Establishing database security policies and compliance frameworks matters especially if you're in healthcare, finance, or any regulated industry where auditors actually check your configurations.

The certification validates you understand how to implement these requirements on GCP. Not just in theory. In practice with the actual tools and services available, dealing with real limitations and tradeoffs that textbooks don't mention.

Exam Details: Cost, Format, and Registration Process

Money stuff first (because it matters)

The Google Professional Cloud Database Engineer certification exam runs $200 USD as the standard registration fee. Thing is, regional taxes and currency conversions can bump that number around a bit, so don't be shocked if your checkout total looks different than what a blog post (including mine) says.

Here's the part people miss.

Retakes cost the same. Every single time. There's no discounted retake, and Google's published retake policy does make you sit out a waiting period (14 days after a first failed attempt, longer after repeat failures), so if you fail on a Tuesday you can't rebook for Friday, and when you do rebook you're paying another full fee. Painful, honestly. Also, no bundled discounts exist for stacking multiple Google Cloud certs, so if you're planning to grab Database Engineer plus Architect plus DevOps, you're buying three separate exam attempts at full price each. No "cert bundle" deals. Zero combo packs.

Payment is straightforward. Most regions accept major credit cards, and PayPal is available in select regions. If you're getting reimbursed, company vouchers and training credits might cover it, but that depends entirely on how your employer buys Google training. Some orgs hand out exam vouchers like candy, while others make you fight finance for a month over a $200 receipt.

Cost comparison is where it gets interesting. I mean really interesting. AWS Certified Database Specialty is $300, and Microsoft's Azure Database Administrator Associate exam runs around $165. So Google lands smack in the middle. Not cheap, not wild. If you're budgeting across a year of certs, that extra $100 on AWS is real money. Especially if you're the kind of person who likes to do a "first attempt to see what it's like" and then a second attempt after patching gaps.

Oh, and speaking of budgeting across multiple attempts, I've noticed people underestimate study time because they compare this to easier cloud fundamentals exams. Bad move. Different beast entirely.

If you're searching the web for Professional Cloud Database Engineer exam cost, that's the answer plus the gotchas. Taxes, retakes, and zero bundles. That's the real breakdown.

What you actually face on exam day

Format wise, expect 50 to 60 questions, a mix of multiple choice and multiple select. Two hours. Exactly 120 minutes of active testing time. No extra reading period. No warm-up. Sit down, timer starts, go.

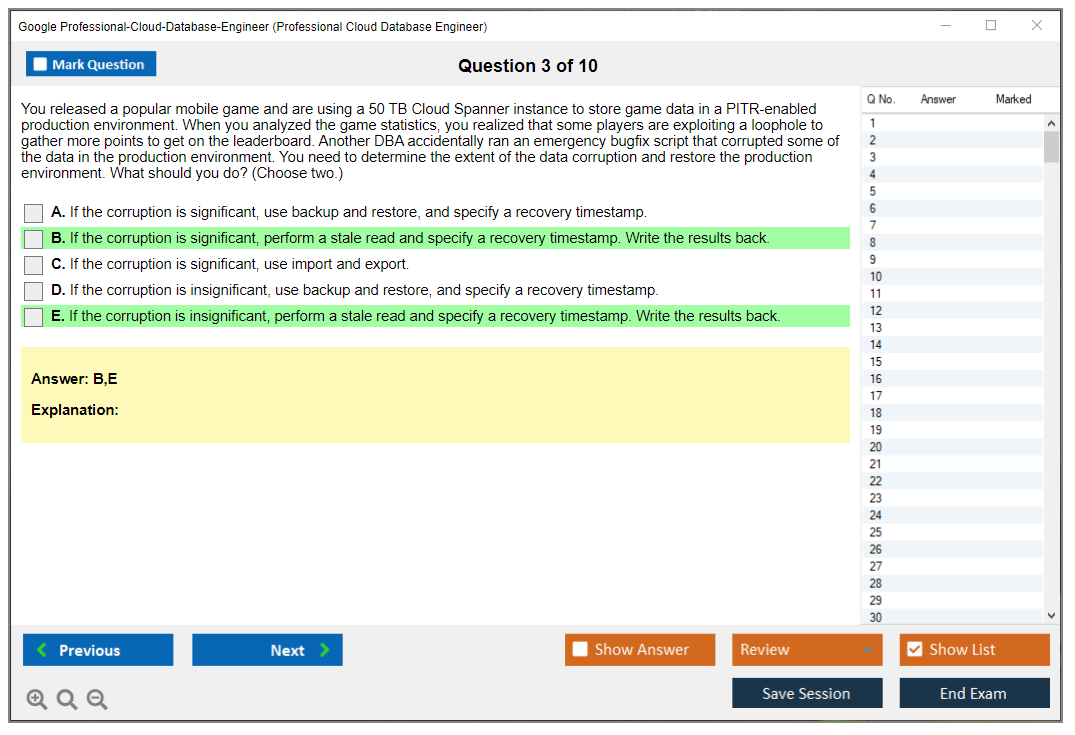

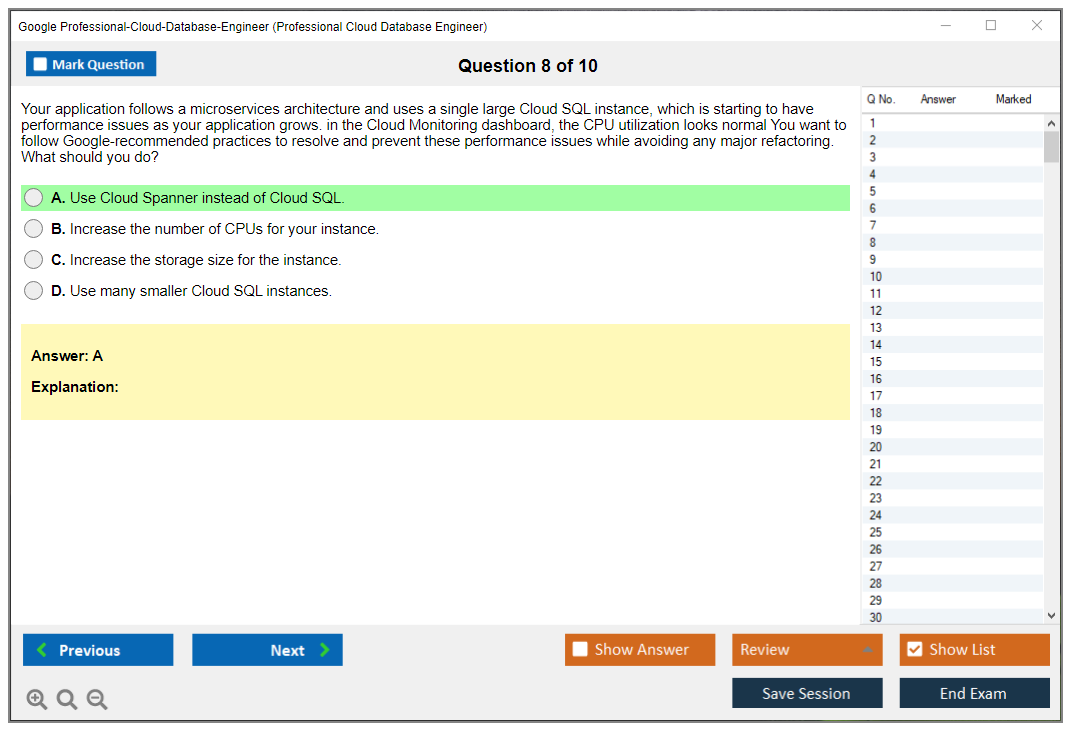

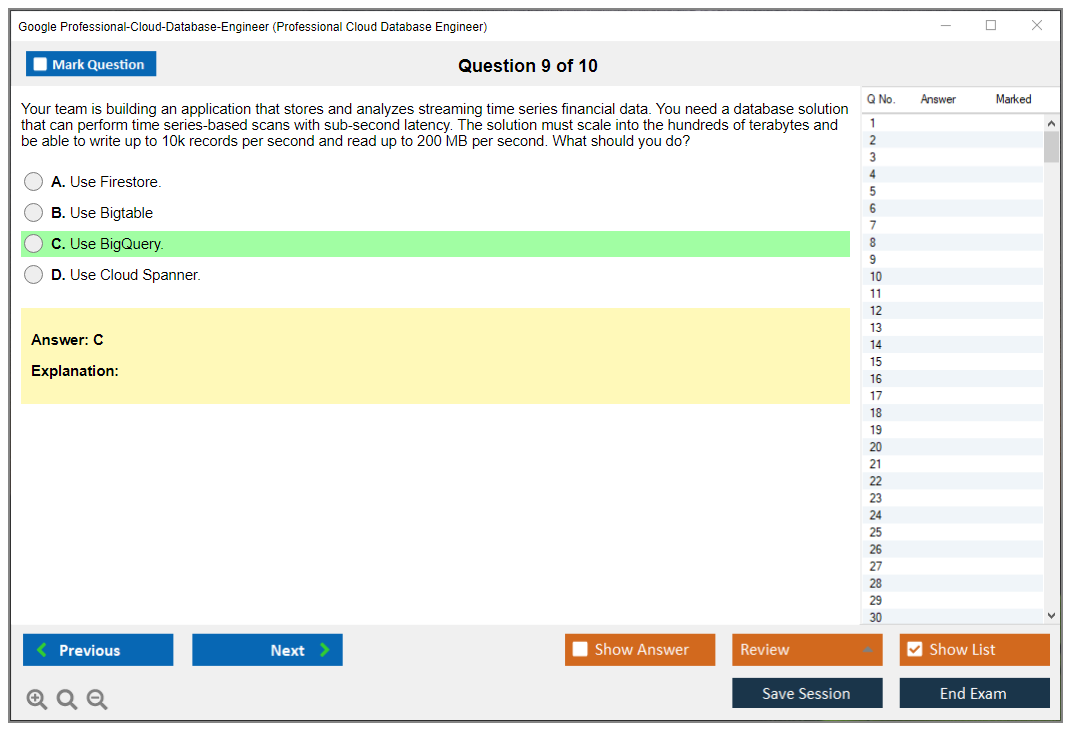

And look, the questions aren't trivia most of the time. They're usually scenario-based, which changes everything. You'll get a company situation, constraints, current architecture, and then you're asked what you'd do next, what service you'd pick, how you'd design for scale, security, or reliability. Occasionally what you'd change to fix a mess that somebody else built. That's why people talk about Professional Cloud Database Engineer exam difficulty even though the question count isn't huge.

There's no penalty for wrong answers, so guessing becomes part of the game. If you can eliminate two options and you're down to a 50/50, you pick one and move on. Never leave blanks. The interface won't tell you what's "hard" versus "easy", or how much any one question counts toward the scaled score, so don't waste time trying to infer it. Some questions feel like they're worth ten points.

Maybe they are.

You can't tell, so give every question the same discipline.

You also get 2 to 3 case studies, which are bigger scenarios with multiple questions tied to the same context. Read carefully. Then read again, honestly. Here's the annoying part: you can't go back to a case study section once you complete it. So if you rush through the first case study question and later realize you misread the storage or latency requirement, too bad, it's locked. That design choice alone changes how you pace the whole exam.

If you've been hunting for Professional Cloud Database Engineer exam objectives, this is where the objectives show up in disguise: HA choices, backup and restore, IAM and encryption, migration planning, and picking between services like Cloud SQL, Spanner, Firestore, Bigtable, and Dataproc depending on the workload. Not theory. Tradeoffs.

Remote proctoring vs test center (pick your poison)

Google uses Kryterion's Webassessor for scheduling and delivery. You can take the exam remote proctored or at a physical test center.

Remote testing is convenient. It's also picky as hell. You need stable internet, webcam, microphone, and a private room where no random roommate walk-ins happen. No second monitor. No "my phone's on silent" excuses. Proctors can and will stop the exam if they think your setup breaks rules, and that's the kind of stress you don't want while you're trying to reason through a database migration to Google Cloud with RPO and RTO targets.

Test centers are boring in a good way. Controlled environment. No Wi-Fi drama. No "my webcam driver crashed" nonsense. If you're anxious or your home setup's chaotic, the test center's a calmer bet. The tradeoff is scheduling: remote exams are basically 24/7, while test centers usually run business hours, plus you've got travel time.

Registration and scheduling (the part people mess up)

You register through Kryterion Webassessor. Create an account. Pick the exam. Choose remote or test center. Then select your date and time.

Important detail.

You need to schedule at least 24 hours in advance. No same-day booking. So if you wake up on Saturday feeling brave, you're not testing Saturday. Plan ahead.

Rescheduling's free up to 72 hours before your appointment. Inside that 72-hour window, cancellation means you forfeit the full fee. A no-show also means you lose the fee, no refund, no "I overslept" exception. Brutal, but common across certification vendors.

If you're coordinating with work, don't book a time that's "probably fine". I mean, meetings multiply like rabbits. Book time off. Block your calendar. Protect the slot.

ID rules and check-in reality

You need a government-issued photo ID: passport, driver's license, national ID card. The name must match your registration exactly. Not "close enough". Not "my nickname". Exact match. In some jurisdictions, you may need a secondary ID, so check the local rules when you book.

Remote check-in starts 15 to 30 minutes early because you'll do system checks, room scans, and verification steps. At a test center, show up 15 minutes early for sign-in and storage lockers.

Prohibited items are what you'd expect: phones, watches, notes, bags, electronics, reference materials. If you're remote, clear your desk like you're moving out. I've got mixed feelings about this requirement, because some people's desks are their whole workspace setup. If you're at a test center, leave extra stuff in your car if you can. Less drama.

Exam interface stuff that affects strategy

It's a web-based platform. Simple enough. You can mark questions for review and come back before you submit, and there's a navigator showing answered, unanswered, and flagged questions. Timer's visible, with warnings around 30 and 10 minutes remaining.

Two quirks matter. One, the submit button requires confirmation, so you're not gonna accidentally end the exam with a stray click. Two, case studies are one-way doors. Once you leave them, you're done. That means you treat case studies like mini-exams inside the exam.

Accommodations and special arrangements

If you need accommodations, you can request extended time for documented disabilities. Some regions also offer language assistance for non-native English speakers, but it's not universal, so check availability before you assume it's an option.

Request accommodations at least 10 business days before the exam date. You'll need documentation like medical certification or disability verification. Some arrangements include extra break time for medical needs without extending the overall exam duration, which sounds small until you're two coffees deep and the clock's yelling at you.

After you hit submit (results and what you get)

When you finish, you get a preliminary pass/fail immediately on screen. The official score report shows up by email within 7 to 10 business days.

Pass and you'll get a digital badge through Credly, plus a certificate download from the certification portal. There's also an administrative detail people forget: your results are valid for certification claim within 18 months of passing, so don't procrastinate on tying the pass to the right account.

People always ask about Professional Cloud Database Engineer passing score. Google doesn't publish an exact number. You get pass/fail, not "you needed 78%". That's normal for Google Cloud exams, and yeah, it's a little annoying because you can't game your study plan around a precise threshold.

If you're also thinking ahead about the Professional Cloud Database Engineer renewal policy, treat this cert like something you'll refresh periodically. Google updates services fast, and database design patterns change fast, so even if recert timing feels far away right now, it sneaks up.

And if you're planning your prep next, you're gonna want a Professional Cloud Database Engineer study guide and some Professional Cloud Database Engineer practice tests, but keep your eyes open for ones that focus on real architecture decisions like backup, restore, and high availability on GCP, permissions models, and service selection, not just definition flashcards.

Passing Score Requirements and Scoring Methodology

What Google actually tells you about passing

Here's the deal. Google won't publish the exact passing score for the Professional Cloud Database Engineer certification. They keep that locked down tight, which I guess makes sense from their angle but drives candidates crazy. You won't find some official "need 75% to pass" statement anywhere on their certification page.

Industry estimates and candidate experiences suggest you're probably looking at somewhere between 70-75% correct answers to pass, give or take. But that's not the complete picture because Google uses scaled scoring. The thing is, you get a pass or fail result. Not your actual percentage. Not any numerical score.

Scaled scoring is where things get interesting

Google uses what's called a scaled scoring system, and it's pretty clever when you think about it. Your raw score (meaning the actual number of questions you got right) gets converted to a scaled score that accounts for differences in difficulty across exam versions. Not all exam questions are created equal, right? Some versions might have slightly tougher scenarios than others, and the scaling process ensures that passing one version isn't easier than passing another version.

Here's how it works. Each question is weighted based on its difficulty level and how important it is to the actual job role. A complex scenario about migrating a multi-terabyte database from Oracle to Cloud Spanner while maintaining ACID compliance? That's weighted heavier than a straightforward question about Cloud SQL backup retention policies. Google runs psychometric analysis on all their questions using data from thousands of test-takers to figure out which questions separate competent database engineers from people who just memorized documentation.

This statistical modeling protects you, honestly. If you happen to get an exam form that's slightly harder, the passing threshold adjusts accordingly. The goal is consistent standards over time, not a fixed percentage of correct answers.

Oh, and speaking of standards, I once had a colleague who swore the morning exam slots were harder than afternoon ones. Total superstition, but he'd only book PM tests after that. People get weird about these things.

You won't see your actual score

When you finish? Pass or fail.

That's it. No percentage breakdown, no "you scored 78%," no scored domain-by-domain report showing you crushed migration questions but struggled with high availability scenarios. Google keeps it simple, which I find both annoying and oddly refreshing.

If you fail, you do get some general feedback about areas where you were weak. It's not super detailed, don't expect question-by-question explanations, but it'll point you toward domains that need work. Something like "you should review database migration strategies and backup/restore procedures." Helpful? Somewhat. Specific enough to guide focused study? Eh, marginally.

You can't appeal your results either. No manual review process, no "hey can someone double-check question 37 because I'm pretty sure I was right." The scoring is automated and final. Your results also stay confidential unless you explicitly consent to share them, which matters for some employment verification situations.

How questions break down across domains

The exam covers four main performance domains, and understanding the distribution helps you focus your prep time where it matters. Design database solutions makes up roughly 30% of the exam. This is your biggest chunk, so don't sleep on it. You're looking at questions about choosing between Cloud SQL, Spanner, Firestore, Bigtable, or Memorystore based on requirements. Real scenario-based stuff, not theoretical nonsense.

Manage and provision database solutions hits around 25% of questions. Think configuration, scaling, security controls, IAM policies for databases. Deploy scalable and highly available databases also runs about 25%. This covers replication strategies, failover mechanisms, disaster recovery planning, the stuff that keeps databases running when things go sideways.

Migrate data solutions takes up the remaining 20% roughly. Moving from on-prem Oracle to Cloud Spanner, MySQL to Cloud SQL, handling data transformation during migration, minimizing downtime. I mean, this is huge in real-world scenarios but slightly less represented on the exam.

Important note: questions aren't evenly distributed and some questions test multiple domains at once. You might get a migration scenario that also requires you to design for high availability in the target environment. Those questions hit two domains simultaneously, which can mess with your mental math on time allocation.
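If you want to turn those rough percentages into a plan, a quick sketch like the one below does the splitting. The weights are the approximate figures quoted above, not official numbers, and the 40-hour study budget and the `split_by_weight` helper are purely hypothetical.

```python
# Approximate domain weights from this article, as whole percentages.
DOMAIN_WEIGHTS_PCT = {
    "Design database solutions": 30,
    "Manage and provision database solutions": 25,
    "Deploy scalable and highly available databases": 25,
    "Migrate data solutions": 20,
}


def split_by_weight(total: float, weights_pct: dict) -> dict:
    """Split a total (questions or study hours) in proportion to the weights."""
    return {name: total * pct / 100 for name, pct in weights_pct.items()}


expected_questions = split_by_weight(50, DOMAIN_WEIGHTS_PCT)  # on a 50-question form
study_hours = split_by_weight(40, DOMAIN_WEIGHTS_PCT)         # assumed 40-hour budget

for domain in DOMAIN_WEIGHTS_PCT:
    print(
        f"{domain}: ~{expected_questions[domain]:.0f} questions, "
        f"{study_hours[domain]:.0f} study hours"
    )
```

Treat the output as a starting point only. Because some questions straddle two domains, the real count per domain on any given form won't match these numbers exactly.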

Multiple-select questions are brutal

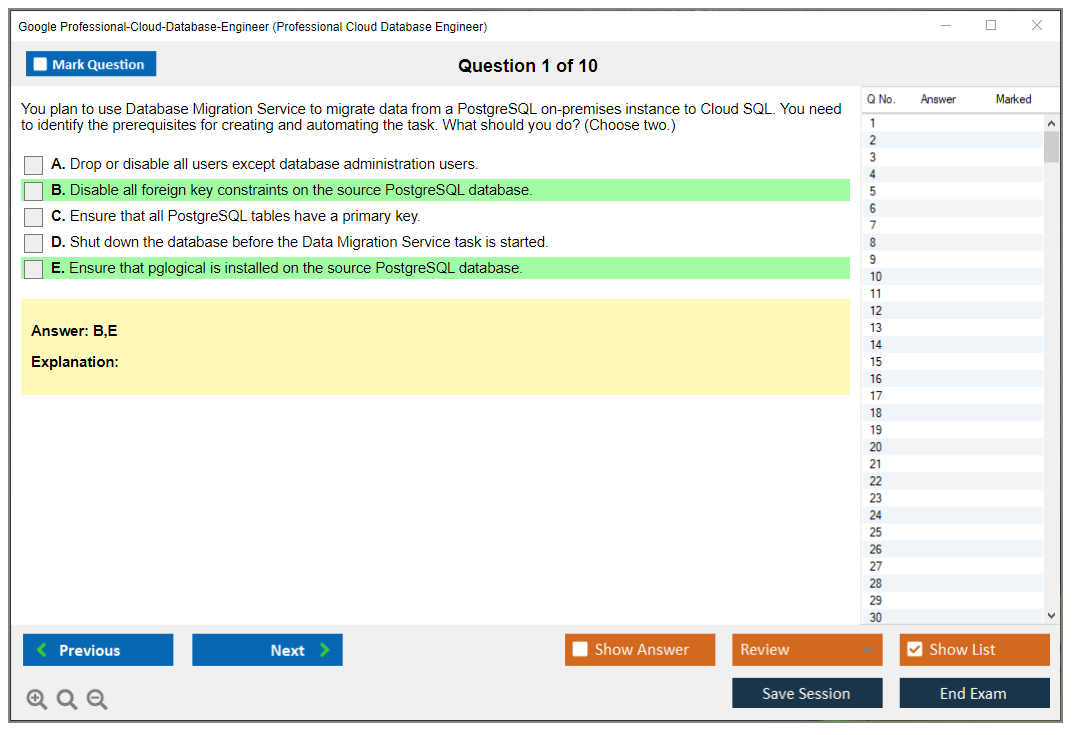

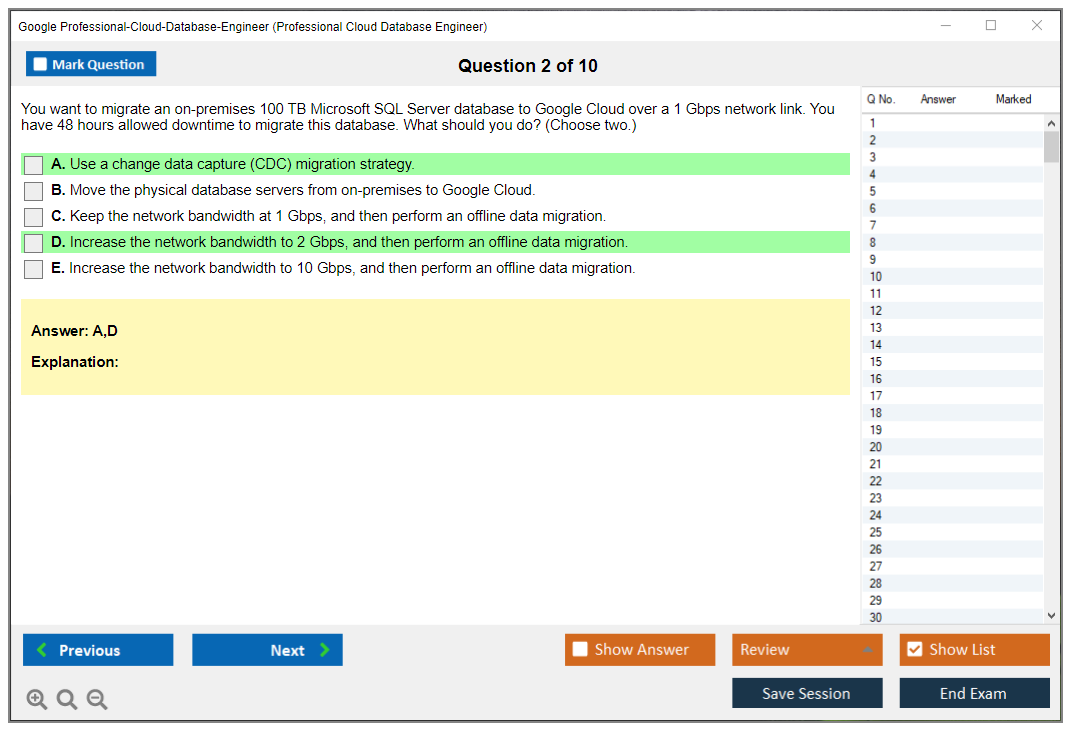

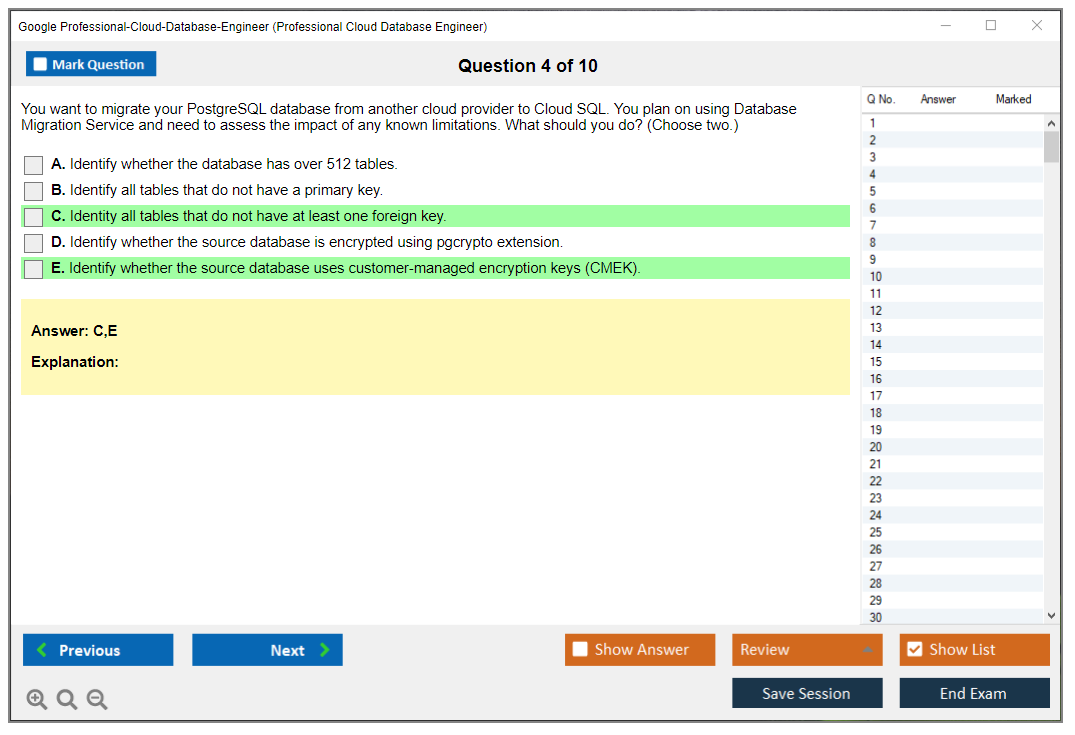

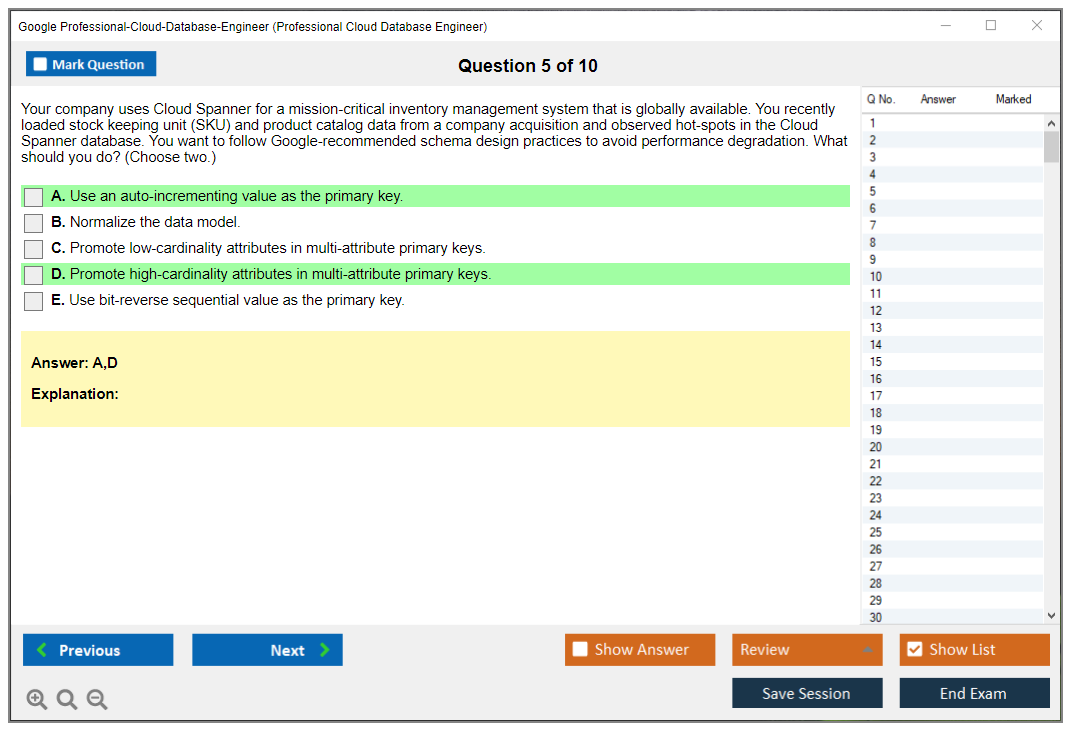

Here's something that trips people up every single time. For questions with multiple correct answers, you need ALL correct answers to get credit. No partial points for selecting three out of four correct options. This makes those questions way harder than single-select, and you'll see several of them throughout the exam.

If the question says "select three correct answers" and you only pick two correct ones, that's zero points. Same if you pick three but one of them is wrong. This scoring approach rewards full knowledge over partial understanding, which makes sense for a professional-level certification but definitely raises the difficulty bar.
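The all-or-nothing rule described above is easy to express in code. This is only a sketch of the rule as this article describes it, with a made-up function name and made-up option letters. It isn't Google's actual scoring implementation.

```python
def score_multi_select(selected: set, correct: set) -> int:
    """Return 1 only when the selection matches the answer key exactly, else 0."""
    return 1 if selected == correct else 0


correct = {"A", "C", "D"}  # hypothetical "select three" answer key

print(score_multi_select({"A", "C"}, correct))       # two right, one missing
print(score_multi_select({"A", "C", "E"}, correct))  # three picked, one wrong
print(score_multi_select({"A", "C", "D"}, correct))  # exact match
```

Only the last call earns the point. Practically, that means a multi-select question deserves a second look at every option, including the ones you were sure about.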

What happens when you fail

Bad news first: Google's published retake policy puts a waiting period between attempts (14 days after a first failed attempt, longer after repeat failures), so check the current terms before you plan a rebooking. Honestly, that's not a bad thing, because retaking an exam right after you failed it isn't a great strategy. You need the full exam fee again (currently around $200) for every attempt.

Your previous performance doesn't carry over or influence future attempts. Each exam is independent. Most people who fail benefit from 2-4 weeks of focused study on their weak areas before trying again. Rushing the retake usually just burns money.

Actually studying with proper materials makes a huge difference here. The Professional-Cloud-Database-Engineer Practice Exam Questions Pack runs $36.99 and gives you realistic question formats so you're not seeing the exam style for the first time during your actual attempt.

Time management matters

You get 50 to 60 questions in 120 minutes. Sounds generous?

Wait till you hit the case study questions. Some scenarios require reading through a detailed business situation, technical requirements, and constraints before answering 4-5 related questions. Those eat up time fast, and before you know it you've spent 18 minutes on one case study.

Spending too long on difficult questions is how people run out of time with 8 questions still unanswered. Flag the tough ones, move on, circle back during your final review period. I always recommend leaving 15-20 minutes at the end for a complete pass-through.

There's no penalty for wrong answers, so answer everything even if you're guessing. Blank answers definitely count against you. Educated guessing after eliminating obviously wrong options is a legitimate strategy.
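Here's the pacing math as a small sketch, using the 120-minute limit, the 50 to 60 question range mentioned earlier, and the 15 to 20 minute review reserve. The `minutes_per_question` helper is just an illustration of the arithmetic.

```python
def minutes_per_question(total_minutes: int, questions: int, review_minutes: int) -> float:
    """Average minutes available per question after reserving review time."""
    if questions <= 0 or not 0 <= review_minutes < total_minutes:
        raise ValueError("need at least one question and a review reserve shorter than the exam")
    return (total_minutes - review_minutes) / questions


EXAM_MINUTES = 120

for questions in (50, 60):
    for review in (15, 20):
        pace = minutes_per_question(EXAM_MINUTES, questions, review)
        print(f"{questions} questions, {review} min review: {pace:.2f} min per question")
```

On a 60-question form with a 20-minute reserve, that leaves about 1.67 minutes per question. An 18-minute case study with five questions runs at 3.6 minutes each, roughly double the budget, which is why the flag-and-move-on habit matters.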

Hands-on experience is the real differentiator

Look, you can memorize documentation all you want, but scenario-based questions require practical problem-solving that you only get from actual experience. People with actual hands-on experience deploying Cloud Spanner, configuring Cloud SQL high availability, setting up Bigtable clusters, or managing Firestore at scale perform way better than those who just read docs. It's not even close.

The exam tests practical implementation knowledge. Questions like "A client needs to migrate their 8TB PostgreSQL database with minimal downtime and must maintain read replicas in three regions. What's your approach?" You need to have worked through these scenarios or at least practiced them in labs.

Common weak areas I see: not understanding when to use Bigtable vs Spanner, insufficient knowledge of Cloud SQL replication topologies, and a weak grasp of migration tools like Database Migration Service. If you haven't touched these services hands-on, you're at a real disadvantage, more so than on the Professional Cloud Architect exam, which can be passed with more theoretical knowledge.

Validity and certification claims

Once you pass, your result is valid for 18 months to claim the certification. You need to accept Google's certification terms and create your profile to receive the credential. If you don't claim it within that window, it expires and you'd need to retake the exam. No extensions. No exceptions.

This is different from certification renewal, by the way. Once claimed, the Professional Cloud Database Engineer certification is valid for two years from the certification date. You'll need to recertify before it expires, but that's separate from the initial claim period.

How this compares to other Google certs

The scoring model is consistent across all Professional-level Google Cloud certifications. Same pass/fail approach, same scaled scoring approach, same lack of numerical scores. But difficulty varies. The Professional Cloud Database Engineer is generally considered comparable to Professional Cloud Architect in terms of difficulty, definitely harder than the Associate Cloud Engineer certification.

Estimated passing rates for well-prepared candidates run around 60-70%, though Google doesn't publish official statistics. That's lower than associate-level certs but reasonable for a professional certification requiring specialized database expertise. The Professional Data Engineer exam has some overlap in content but focuses more broadly on data pipelines and analytics rather than database administration specifically.

Exam Difficulty Assessment and Preparation Timeline

What you're signing up for

The Google Professional Cloud Database Engineer certification is an advanced credential, and honestly it feels like Google built it for people who've already been burned by real production databases. Not book-smart burned. Pager burned. You're expected to make decisions with incomplete info, pick between two "fine" options, and defend the trade-off like you're in an architecture review.

Look, this exam isn't friendly to surface-level familiarity.

If you've done Cloud SQL once. If you watched a Spanner video. If you mostly live in Postgres.

You'll feel it.

Money, logistics, and the annoying details

The Professional Cloud Database Engineer exam cost is typically $200 USD (plus tax where applicable). Pricing can vary a bit by country, but $200's the number most people should plan around.

Format-wise, it's the usual Google pro-level setup: multiple choice and multiple select, heavy on scenarios, and you'll get case study style prompts that eat time. You can sit it online or at a test center. The online experience is fine as long as your room, webcam, and internet are boringly reliable.

About the passing score (and why you won't get a clean number)

People always ask for the Professional Cloud Database Engineer passing score. Google doesn't publish it. Not gonna lie, that's frustrating because it encourages weird score mythology on forums.

What you should expect: scaled scoring, different question weights, and a pass/fail outcome that rewards sound decisions more than memorizing product trivia. So if you're hunting for "I need 72% to pass," you're chasing the wrong thing. Aim for consistent correctness on scenario questions. Especially around Spanner, Bigtable, migrations, and security.

How hard is it, really

The Professional Cloud Database Engineer exam difficulty is advanced-level. Period. It's comparable to AWS Certified Database Specialty and Azure Database Administrator Associate, with one catch: Google's questions tend to be more "business situation + constraints + pick the least-wrong architecture" than "what does this parameter do."

Also, expect a real fail rate for underprepared folks. Based on what I've seen across teams and study groups, roughly 40 to 50% of first-time test-takers fail when they treat it like a docs-reading exam and skip hands-on work.

Scenario questions are the whole game here. They're built to test whether you can apply knowledge to messy enterprise requirements: compliance, latency targets, RPO/RTO, cost ceilings, migration windows, and team skill constraints. Many questions have multiple plausible answers. That's intentional. The exam wants your decision-making.

Why this exam feels rough

Breadth is the first punch. You're covering six services, and the exam expects you to know when each is the right tool: Cloud SQL, Spanner, Firestore, Bigtable, Memorystore, Dataproc. That list isn't just name-dropping either, because the questions bounce between transactional patterns, analytics, caching, and streaming-ish ingestion setups in a single case.

Depth is the second punch. You need database internals and performance tuning thinking, not just "turn on HA." Read replicas, failover behavior, index choices, hot spotting, schema design, connection limits, query patterns, and operational monitoring all show up. Then Google adds migration scenarios: legacy systems, weird data types, transformation requirements, dual writes, cutover planning, validation, and rollback.

Time pressure is the third punch. You get these long prompts with multi-part requirements, and you have to extract the real constraint quickly. Slow at reading case studies? You'll be guessing on the last chunk of the exam. That's common.

One more thing. Google Cloud changes fast. Services get new features, docs evolve, and older blog posts go stale, so your Professional Cloud Database Engineer study guide has to be anchored in current documentation and recent practice questions.

I remember prepping for a different Google cert a few years back and confidently citing a best practice from some Medium article. Turned out that feature had been deprecated six months earlier and replaced with something completely different. Cost me about three questions before I figured out why my answers kept feeling wrong. Now I cross-check everything against current docs, even the stuff that seems obvious.

Why candidates fail (the patterns are boringly consistent)

The biggest cause is insufficient hands-on time with Google Cloud database services. People overestimate how far "I've used Postgres for years" carries them. Then Spanner shows up with a totally different architecture and consistency model, and suddenly their intuition's wrong.

Over-reliance on theory is another. You can memorize what Bigtable is, sure, but if you've never designed row keys to avoid hot tablets, you'll miss questions that are basically performance troubleshooting in disguise.
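To make the hot-tablet point concrete, here's a minimal sketch in plain Python. It doesn't call any Bigtable client; it only builds key strings so you can see how they sort. The device IDs, the `#` delimiter, the `MAX_TS_MS` ceiling, and both helper names are assumptions made up for illustration.

```python
from datetime import datetime, timezone

# Hypothetical ceiling used to reverse millisecond timestamps (assumption).
MAX_TS_MS = 10**13


def timestamp_first_key(device_id: str, ts: datetime) -> str:
    """Anti-pattern: keys sort by time, so every new write lands in the same key range."""
    return f"{int(ts.timestamp() * 1000)}#{device_id}"


def device_first_key(device_id: str, ts: datetime) -> str:
    """Lead with the device ID so concurrent writes spread across key ranges.

    The reversed timestamp makes the newest row for a device sort first.
    """
    reversed_ts = MAX_TS_MS - int(ts.timestamp() * 1000)
    return f"{device_id}#{reversed_ts:013d}"


older = datetime(2024, 1, 1, tzinfo=timezone.utc)
newer = datetime(2024, 1, 2, tzinfo=timezone.utc)

# With timestamp-first keys, writes from different devices at the same
# moment share a prefix and pile into one contiguous range.
hot_keys = sorted(timestamp_first_key(d, newer) for d in ("sensor-a", "sensor-z"))

# With device-first keys, the same writes start with different prefixes.
spread_keys = sorted(device_first_key(d, newer) for d in ("sensor-a", "sensor-z"))
```

Whether a device-first key is right depends on the queries you actually run, which is exactly the judgment the exam is probing.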

Spanner is a repeat offender. Candidates don't grok where it fits. Why schema design's different. What interleaving does to access patterns. How global distribution changes trade-offs. Confusion between similar services is right behind it, like mixing up Firestore vs Bigtable vs "just use Cloud SQL," or assuming Memorystore solves persistence problems.

Then there's time management. People spend forever early on, then rush the final questions, and those last ones are often the ones where you can pick up easy points if you're calm.

Migration experience matters too. If you've never done a database migration to Google Cloud with real constraints, Database Migration Service feels abstract. You'll miss tool-selection and sequencing questions. Add security, compliance, and DR? Weak coverage there is a fast fail.

Gaps that make the exam feel even harder

Coming from classic RDBMS-only backgrounds? NoSQL will hurt at first. Firestore modeling, Bigtable access patterns, and "design for queries you will run" add up to a mindset shift, not a feature list.

Networking surprises a lot of people too. Private IP, VPCs, shared VPC, DNS, PSC, firewall rules, and connectivity patterns affect database designs. The exam absolutely expects you to understand that. IAM's another one. You need comfort with IAM policies, service accounts, least privilege, and how that connects to database-level access.

Troubleshooting is a quiet killer. The exam asks "what would you do next" when there's a performance bottleneck, replication lag, hotspotting, or a noisy neighbor situation. Never used Cloud Monitoring, logs, Query Insights, or basic operational playbooks? You'll feel lost.

Also, multi-region and global architectures show up a lot. Backup strategies. Point-in-time recovery. This is where "backup restore high availability on GCP" stops being a phrase and starts being your weekend.

How long you should study (based on your background)

If you're an experienced DBA and you already have real GCP exposure, plan 4 to 6 weeks and roughly 60 to 80 hours. That's enough time to revisit fundamentals, drill Spanner/Bigtable, and do practice questions without panicking.

IT pro with cloud experience but limited database depth? You're more like 8 to 12 weeks and 100 to 120 hours. You'll need time for internals, modeling, and operations. Not just product overview.

If you're new to both cloud and databases, 12 to 16 weeks and 150 to 200 hours is realistic. It's a lot. But cramming doesn't work here. Consistency beats intensity. Daily sessions win.

A 6-week intensive plan that actually works

Week 1: Cloud SQL fundamentals. MySQL and PostgreSQL on GCP. HA configs, read replicas, backups, maintenance windows. Build a couple instances, break one, restore it. Simple. Necessary.

Week 2: Spanner architecture. Schema design. Performance optimization. Spend extra time here. If Spanner's fuzzy, the whole exam feels like fog, because so many scenario questions hinge on "should this be Spanner or not" and what the consequences are for latency, cost, and operational overhead.

Week 3: Firestore and Bigtable. Data modeling approaches. Do hands-on reads and writes, test access patterns, and for Bigtable, practice row key design and hotspot avoidance. This week's where people either level up or keep guessing.

Week 4: Migration strategies. Database Migration Service, transfer options, validation, cutover planning. Run at least one test migration and write down the steps, because the exam likes sequencing questions.

Week 5: Security, compliance, DR. IAM, CMEK basics, private connectivity patterns, backup/restore, PITR, RPO/RTO thinking. This is also where you stop hand-waving and start being specific.

Week 6: Practice exams. Weak areas. Case study analysis. Time yourself. Review misses ruthlessly. If you want structured drilling, the Professional-Cloud-Database-Engineer Practice Exam Questions Pack is a decent way to pressure-test your gaps, especially when you're trying to build speed and pattern recognition.

Topic difficulty ratings (my take)

Spanner global distribution's the hardest. 9/10. It's different, and Google loves asking about it.

Bigtable schema design and tuning is next. 8/10. Row keys, access paths, performance symptoms.

Cloud SQL HA and replication is 7/10. Not conceptually wild, but the exam throws operational constraints at you.

Firestore modeling and security rules is 6/10. Manageable if you've actually built anything with it.

Basic database concepts and general GCP services is 5/10. If this is hard, pause and backfill fundamentals.

Experience you should have before you book it

No official Professional Cloud Database Engineer prerequisites exist, but practically, you want at least 2 years working with relational databases in production. You also want 6 to 12 months of hands-on GCP time. Plus experience deploying and operating at least three GCP database services. Not just one.

Migration project experience helps a ton. Even a proof-of-concept counts if you did the planning, the tool choice, the validation steps, and the rollback plan.

How you know you're ready (and the red flags)

You're ready when you can design a database solution quickly. Explain trade-offs between services without spiraling. Troubleshoot common issues using GCP monitoring tools. Practice exams matter too. If you're consistently hitting 85%+ on reputable Professional Cloud Database Engineer practice tests, you're in a good spot. If you want extra reps close to exam day, the Professional-Cloud-Database-Engineer Practice Exam Questions Pack at $36.99 is an easy add-on for repetition and timing.

Red flags are pretty obvious. Confusing Spanner vs Cloud SQL. Not being able to explain Bigtable row key design. Being unsure about migration approaches and tools. Practice scores stuck below 75%. Also, if you've barely touched the console, that's a problem, because the Professional Cloud Database Engineer exam objectives assume you've done this stuff, not just read about it.

Quick FAQ stuff people ask

How much does the Google Professional Cloud Database Engineer exam cost? Usually $200 USD plus tax.

What is the passing score for the Professional Cloud Database Engineer exam? Google doesn't publish a fixed Professional Cloud Database Engineer passing score. Treat practice score trends as your signal.

How hard is the Google Cloud Database Engineer certification? Hard. Advanced. Very scenario-heavy. The Google Cloud database certification expects real-world judgment.

What are the best study materials for the Professional Cloud Database Engineer exam? Official docs, hands-on labs, and a current question bank. A solid Professional Cloud Database Engineer study guide should map directly to the exam guide, and you should validate with timed practice like the Professional-Cloud-Database-Engineer Practice Exam Questions Pack.

How do I renew the Professional Cloud Database Engineer certification? Check Google's current Professional Cloud Database Engineer renewal policy for validity length and recert steps. This changes. Plan to recert before expiration, and keep up with service updates so you're not relearning everything under pressure.

Full Exam Objectives and Domain Breakdown

How Google structures the exam blueprint

Google's exam? Four domains.

The Google Professional Cloud Database Engineer certification follows a structure that Google updates periodically. Honestly, you've gotta verify which exam guide version's current before diving into study materials because they tweak things more often than you'd think. The exam weights different domains differently, and look, that distribution literally tells you where to dump your study hours when you're grinding through labs at 2am wondering if Spanner consistency models will ever click.

Domain 1? Heaviest hitter at 30%. That's designing scalable and highly available cloud database solutions. Basically the strategic thinking stuff. Domain 2 sits at 25% covering management and provisioning, more hands-on configuration work. Domain 3 handles migrations at 20%, and Domain 4 rounds things out at 25% with deployment and maintenance tasks. These percentages matter when planning study time, I mean really matter.
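If you want to turn those weights into a schedule, the arithmetic is simple enough to sketch. The weights below are the percentages quoted above; the 80-hour budget and the short domain labels are just example inputs, so swap in your own.

```python
# Domain weights as quoted in this article (percent). Verify against the
# current exam guide before relying on them.
DOMAIN_WEIGHTS = {
    "Design": 30,
    "Manage and provision": 25,
    "Migrate": 20,
    "Deploy and maintain": 25,
}


def allocate_hours(total_hours: float, weights: dict) -> dict:
    """Split a study budget across domains in proportion to their weights."""
    total_weight = sum(weights.values())
    return {
        domain: round(total_hours * weight / total_weight, 1)
        for domain, weight in weights.items()
    }


plan = allocate_hours(80, DOMAIN_WEIGHTS)
for domain, hours in plan.items():
    print(f"{domain}: {hours} h")
```

Treat the result as a starting point. If Spanner is your weak spot, steal hours from whatever you already do at work.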

What makes this exam tricky is domain overlap. A single question might test your high availability design knowledge (Domain 1) while simultaneously asking about specific Cloud SQL configuration settings (Domain 2). You can't memorize facts in isolation and expect to pass. The thing is, they want synthesis.

Breaking down the design domain in detail

Domain 1 proves you can actually architect database solutions, not just click console buttons. You'll analyze application requirements and determine whether the dev team needs Cloud SQL, Spanner, Firestore, or Bigtable. Choosing the wrong database for a workload? Expensive and painful to fix later, so Google really wants confirmation you understand the differences.

The exam digs into schema design optimized for performance and scalability. This means understanding normalization versus denormalization tradeoffs, how to design composite indexes in Firestore, or when to use interleaved tables in Spanner versus plain foreign key relationships. Interleaving physically co-locates child rows with their parent row, while a foreign key only enforces referential integrity, so the choice changes your access patterns. You'll need to architect multi-region and global database solutions giving users low-latency access whether they're in Tokyo or Frankfurt.

High availability design? Constantly tested. Replicas, failover configurations, redundancy strategies are all fair game. You might get a scenario where an application needs 99.99% uptime and you've gotta choose the right combination of regional Cloud SQL with read replicas, or maybe Spanner's multi-region configuration. Capacity planning based on workload patterns is another huge piece here. Can you estimate how many Bigtable nodes you need for 100K writes per second with 10ms latency? The exam wants that answer.
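Here's a rough sketch of how that Bigtable sizing question breaks down. The per-node write throughput is a placeholder assumption, not a quoted spec; look up the current figure in the Bigtable performance docs, because it depends on storage type, row size, and your latency target. The 70% utilization headroom is also an assumption.

```python
import math

# Placeholder per-node write throughput (rows/sec). This is an assumption
# for illustration; check the current Bigtable performance documentation.
ASSUMED_WRITES_PER_NODE = 14_000


def estimate_nodes(target_ops_per_sec: int, ops_per_node: int,
                   target_utilization: float) -> int:
    """Estimate node count, leaving headroom so nodes don't run flat out."""
    usable_ops_per_node = ops_per_node * target_utilization
    return math.ceil(target_ops_per_sec / usable_ops_per_node)


# 100K writes/sec with no headroom vs. with nodes held to about 70% load.
bare_minimum = estimate_nodes(100_000, ASSUMED_WRITES_PER_NODE, 1.0)
with_headroom = estimate_nodes(100_000, ASSUMED_WRITES_PER_NODE, 0.7)
print(bare_minimum, with_headroom)
```

The exact numbers matter less than the method: divide the target throughput by what one node can sustain, then add headroom for spikes and failover.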

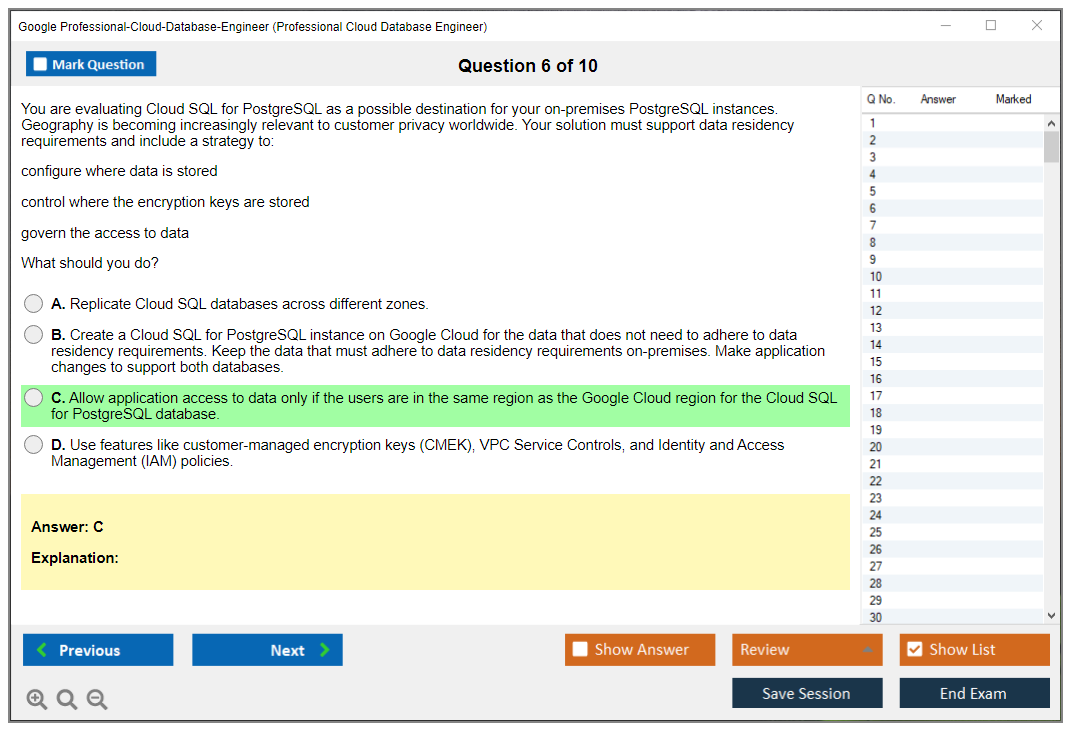

Data partitioning and sharding strategies for horizontal scaling show up in various forms. Compliance requirements like HIPAA, PCI-DSS, or GDPR add complexity layers to your designs. Plus you'll see hybrid and multi-cloud connectivity scenarios where you're connecting on-premises databases to GCP services. Consistency models matter too. Understanding when you need strong consistency versus when eventual consistency works fine can make or break your architecture, no exaggeration.

Management and provisioning in the real world

Domain 2 shifts to implementation.

You're provisioning Cloud SQL instances and need to pick the right machine types, storage configurations, and understand the performance implications. Not gonna lie, there are a lot of knobs to turn here. Same with Cloud Spanner instances where node counts and regional configurations directly impact both performance and cost. I've seen people over-provision Spanner nodes by 3x because they didn't understand how to calculate processing units correctly. Honestly, that's thousands of dollars monthly just evaporating.

Choosing Firestore in Native mode versus Datastore mode is effectively a one-way decision once the database holds data, so the exam tests whether you know which mode fits which use case. Bigtable instance and cluster configuration for production workloads requires understanding replication, storage types (SSD vs HDD), and how to size clusters appropriately.

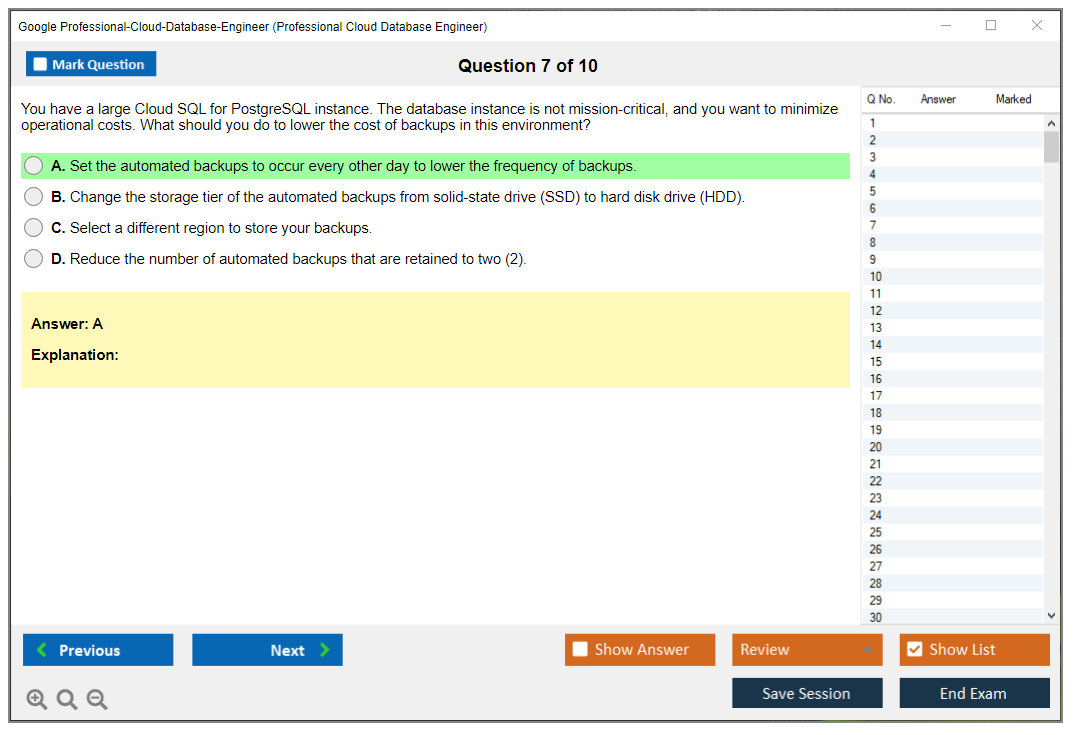

Memorystore for Redis and Memcached shows up as caching layers you'll implement in front of primary databases. The exam covers automated backups and retention policies across different database services. Each service handles backups differently. Cloud SQL does automated backups natively. Bigtable backs up at the table level through its managed backup feature. Firestore has export functionality.

Database users, roles, and authentication? Tested thoroughly. IAM policies for database access control are critical, especially understanding the difference between database-level authentication and GCP IAM. VPC networking, private IP configurations, and Cloud SQL Auth Proxy (formerly Cloud SQL Proxy) connections appear in multiple question types. You'll configure monitoring using Cloud Monitoring and Cloud Logging, and set up alerts for things like replication lag or high CPU usage.

Managing maintenance windows and version upgrades matters for production systems. Encryption at rest and in transit for data protection is non-negotiable in most scenarios. The exam wants verification you can actually secure data properly, similar to what the Professional Cloud Security Engineer certification covers for broader security topics.

Migration strategies and execution

Domain 3? All about database migration.

This focuses entirely on moving databases into GCP, which is honestly where loads of real-world projects start. You'll assess existing on-premises or cloud database environments for migration readiness. Can the current database move as-is? Does the application code need changes? What's the acceptable downtime window? All questions you'll answer.

Planning migration strategies involves choosing between lift-and-shift (move the database with minimal changes), re-platform (move to a managed service but keep the same engine), or re-architect (redesign using cloud-native databases). Database Migration Service is Google's tool for minimal-downtime migrations and you absolutely need hands-on experience with it. Reading docs won't cut it.

Homogeneous migrations like MySQL to Cloud SQL MySQL or PostgreSQL to Cloud SQL PostgreSQL are more straightforward. Heterogeneous migrations requiring schema conversion and data transformation are harder. Think Oracle to Cloud SQL PostgreSQL where you're dealing with PL/SQL to PL/pgSQL conversion and data type mapping issues that'll make you question your career choices at 3am.

Continuous data replication during migration phases keeps the source and target synchronized while you validate everything. Side note, but I once watched a migration fail spectacularly because someone forgot to account for time zone differences in timestamp columns. Just, you know, something to keep in mind. Data integrity and consistency validation post-migration is critical because you can't just assume everything copied correctly. The exam tests whether you know how to verify row counts, checksums, and application functionality before cutting over.
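As a sketch of what "verify row counts and checksums" can look like, here's a small Python example that fingerprints two in-memory row sets. The sample rows and function names are made up for illustration, and it assumes you've already pulled rows from both sides and normalized them to the same Python types. A real validation would run per table against the actual source and target.

```python
import hashlib


def table_fingerprint(rows):
    """Return (row_count, checksum) for an iterable of row tuples.

    Row digests are sorted first, so row order doesn't affect the checksum.
    """
    digests = sorted(
        hashlib.sha256(repr(row).encode("utf-8")).hexdigest() for row in rows
    )
    combined = hashlib.sha256("".join(digests).encode("utf-8")).hexdigest()
    return len(digests), combined


def validate_migration(source_rows, target_rows):
    """Compare row counts and checksums between source and target."""
    src_count, src_sum = table_fingerprint(source_rows)
    tgt_count, tgt_sum = table_fingerprint(target_rows)
    return {
        "row_count_match": src_count == tgt_count,
        "checksum_match": src_sum == tgt_sum,
    }


source = [(1, "alice", "2024-01-01T00:00:00+00:00"),
          (2, "bob", "2024-01-01T00:00:00+00:00")]
# Same data, different order: should validate cleanly.
target_ok = list(reversed(source))
# Same row count, but one timestamp shifted by a time zone offset.
target_drifted = [source[0], (2, "bob", "2024-01-01T08:00:00+00:00")]

print(validate_migration(source, target_ok))
print(validate_migration(source, target_drifted))
```

Notice the drifted case passes the row count check and only fails on the checksum. That's why counts alone aren't enough.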

Deployment and ongoing maintenance

Domain 4 covers deploying and maintaining databases once they're running. This overlaps with Domain 2 but focuses more on operational aspects. You're implementing automated deployment pipelines, handling database patches and updates, and responding to performance issues.

Scaling operations? Frequently tested. When do you scale vertically versus horizontally? How do you add read replicas without impacting production traffic? Disaster recovery and business continuity planning requires understanding RPO (Recovery Point Objective) and RTO (Recovery Time Objective) and designing backup procedures that meet those targets, along with restore procedures.
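RPO and RTO questions usually reduce to two comparisons, which this small sketch spells out. The `RecoveryPlan` fields and the example numbers are hypothetical; plug in the recovery-point spacing and restore time you've actually measured for your setup.

```python
from dataclasses import dataclass


@dataclass
class RecoveryPlan:
    # Longest gap between recoverable points, in minutes (hypothetical input).
    recovery_point_interval_min: float
    # Measured time to restore and bring the service back, in minutes.
    restore_duration_min: float


def meets_targets(plan: RecoveryPlan, rpo_min: float, rto_min: float) -> dict:
    """Check a plan against RPO (max data loss) and RTO (max downtime)."""
    return {
        # Worst case, you lose everything written since the last recovery point.
        "rpo_ok": plan.recovery_point_interval_min <= rpo_min,
        "rto_ok": plan.restore_duration_min <= rto_min,
    }


# Daily backups only: fine for a 2-hour RTO, hopeless for a 15-minute RPO.
daily_only = RecoveryPlan(recovery_point_interval_min=24 * 60,
                          restore_duration_min=90)
# Hypothetical plan with fine-grained recovery points (e.g. log-based recovery).
fine_grained = RecoveryPlan(recovery_point_interval_min=5,
                            restore_duration_min=90)

print(meets_targets(daily_only, rpo_min=15, rto_min=120))
print(meets_targets(fine_grained, rpo_min=15, rto_min=120))
```

The restore duration only counts if you've tested it. An untested restore time is a guess, not an RTO.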

Performance tuning and optimization is huge. Query optimization, index tuning, connection pool sizing, caching strategies all get tested. You'll troubleshoot slow queries using Query Insights in Cloud SQL or examining query execution plans. The exam presents scenarios where an application's slow and you need to identify whether it's a database configuration issue, a schema design problem, or an application code issue.

Monitoring and alerting tie everything together. Setting up meaningful metrics and alerts that catch problems before they become outages is necessary. Cost optimization appears in various forms too, since running databases in the cloud can get expensive fast if you're not paying attention to storage, backup retention, and instance sizing. I mean, seriously fast.

Look, these domains interconnect constantly throughout the exam. A question about migrating a PostgreSQL database (Domain 3) might also test your high availability design knowledge (Domain 1) and specific Cloud SQL configuration settings (Domain 2). That's why just memorizing facts doesn't work. You need understanding of how everything fits together in real production scenarios. The exam guide gets updated periodically, so verify you're studying the current version before committing to a study plan, especially if you're also pursuing related certifications like the Professional Cloud Architect or Professional Data Engineer.

Conclusion

Wrapping up your prep

Look, this certification won't just fall into your lap. You need hands-on time with Cloud SQL, Spanner, Firestore, Bigtable, and yeah, Dataproc too when you're dealing with analytics workloads that bump up against database migration patterns. Actual real work, not just reading about it. The exam costs $200, which isn't pocket change, but that's what Google charges for professional-level certs and the credential does carry weight when you're sitting across from hiring managers who need someone who can architect database solutions at scale.

Google doesn't publish a passing score, so treat the 70-75% figure people quote on forums as anecdote, not a target. The difficulty doesn't come from obscure trivia. It comes from scenario questions where you have to pick the best solution among multiple technically correct options, which means memorizing services and their features won't cut it. You need to understand when Spanner makes sense over Cloud SQL. How backup and restore work differently across database types. Why certain migration strategies fit specific workloads better than others.

What separates candidates who pass from those who don't?

Practice.

Reading through the study guide helps. Videos build foundational knowledge. But you really need exam prep that simulates actual testing conditions. I spent way too long thinking I could just review documentation and be fine. Wrong. The exam objectives cover four major domains, and you'll get questions testing your ability to design for performance, implement security controls, plan migrations, and keep things running under realistic constraints that mirror production environments.

Your prerequisites? Technically there aren't hard requirements listed anywhere official, but two to three years working with databases in production and six to twelve months or more with Google Cloud database services makes a huge difference in how quickly concepts click. Oh, and Google professional certifications are currently valid for two years, so plan for recertification down the road.

Before you schedule your exam, get serious about testing your readiness. Like actually serious. The practice tests in our Professional-Cloud-Database-Engineer Practice Exam Questions Pack mirror the real exam format and difficulty level. They give you that realistic assessment you need to spot weak areas while there's still time to fix them. Don't walk into that testing center guessing where you stand.