Google Cloud Certified Generative AI Leader Exam Overview

The Google Cloud Certified Generative AI Leader exam targets a completely different audience than most cloud certifications you'll encounter. This isn't about spinning up VMs or writing Terraform configs. If you're a C-suite exec trying to figure out whether your company should dump millions into generative AI projects, or you're a product manager who needs to explain hallucination risks to your board without sounding like you're reading a research paper, this certification validates exactly those skills.

What gets validated when you pass

The Google Generative AI Leader certification demonstrates your ability to guide organizations through generative AI adoption from a strategic perspective, not a coding one. You're proving you understand how to assess business value, identify use cases that actually make sense (not just "let's AI all the things"), and manage the very real risks that come with deploying these systems.

It's aimed at people who lead transformation initiatives across enterprise environments using Google Cloud technologies. The certification validates knowledge of frameworks for responsible AI implementation, governance structures, and compliance considerations. Put more simply, it validates the leadership competencies you need when you're the person everyone turns to with "should we even be doing this?"

Most Google Cloud certifications test your ability to architect solutions, write code, or manage infrastructure. Think Professional Cloud Architect or Professional Data Engineer. The Generative AI Leader exam? Business-focused. It's for executives, program managers, product owners, strategy consultants, and decision-makers who shape AI adoption roadmaps rather than implement technical solutions directly.

You won't write a single line of Python. You won't configure a Vertex AI endpoint. Instead, you're judged on whether you can communicate AI capabilities to non-technical stakeholders, build business cases that hold up under CFO scrutiny, and establish governance frameworks that keep your company out of regulatory trouble.

Who actually benefits from this thing

Business leaders, definitely.

C-suite executives who need credibility when presenting AI strategies to boards. Product managers evaluating whether generative AI solves actual customer problems or just sounds cool in a pitch deck. Program directors responsible for multi-million-dollar AI transformation initiatives. Innovation officers, digital transformation leads, strategy consultants, enterprise architects in advisory roles. Basically anyone who needs to make high-stakes decisions about AI investments without necessarily building the systems themselves.

Department heads evaluating AI investments benefit because the certification provides a structured framework for assessment. Non-technical stakeholders responsible for AI governance frameworks get a common language and set of practices. If your job involves saying "yes" or "no" to AI projects and you don't have a technical background, this certification gives you the vocabulary and conceptual models to have informed conversations with your engineering teams without feeling like you're faking it.

I've seen people use this credential as a stepping stone into consulting too. Sometimes it opens doors you didn't expect.

The value proposition for your career

Earning this credential boosts credibility when you're presenting AI strategies to boards and executives. It's a recognized signal that you're not just repeating buzzwords from TechCrunch articles. It differentiates candidates in competitive job markets where "AI expertise" appears on every resume but few people can articulate responsible deployment practices.

The certification validates your understanding of responsible AI principles and demonstrates commitment to ethical AI deployment. It provides a recognized credential for consulting engagements focused on AI transformation.

Not gonna lie, the career impact matters. Professionals holding the Google Generative AI Leader certification report better career mobility into strategic AI roles, stronger positioning in transformation initiatives, improved stakeholder confidence, and potential salary premiums ranging from 8-15% in AI-focused positions compared to non-certified peers. Major enterprises, consulting firms, technology partners, and government agencies recognize this as validation of AI leadership competency, particularly in organizations committed to Google Cloud ecosystems.

What organizations get from certified leaders

Companies that employ certified Generative AI Leaders gain trusted advisors who can bridge technical and business stakeholders. These are people who can translate "we need to fine-tune a foundation model with LoRA adapters" into "this'll cost $X and deliver Y business value in Z timeframe." These certified professionals reduce costly missteps in AI adoption (like deploying a chatbot that hallucinates legal advice to customers).

They implement governance frameworks aligned with regulatory requirements. They accelerate time-to-value for generative AI initiatives and build organizational AI literacy through informed leadership.

The organizational benefits extend beyond individual projects.

Certified leaders establish responsible AI governance committees that actually function instead of becoming rubber-stamp meetings. They assess legal and compliance risks before deployment, not after a regulatory complaint arrives. They prioritize use cases based on ROI potential rather than hype cycles. They develop organizational change management strategies that address the very real human concerns when AI starts automating tasks. That's where the actual resistance happens, right?

How this differs from technical Google Cloud exams

Unlike hands-on certifications requiring coding skills or infrastructure management expertise, the Generative AI Leader exam focuses on strategic thinking, business case development, risk assessment, stakeholder communication, and ethical considerations. High-level architectural awareness matters more than implementation details or command-line proficiency.

You won't troubleshoot API errors. You won't optimize model inference costs through batch prediction strategies. Instead, you'll evaluate whether a proposed use case aligns with organizational values. Does the data quality support the intended application? Does the compliance framework address relevant regulations?

The exam maintains accessibility for business professionals. You don't need prior Google Cloud certifications, coding experience, or machine learning engineering background. That said, familiarity with cloud computing concepts, basic AI terminology, and business strategy frameworks improves preparation efficiency and exam performance. If you've never heard of "foundation models" or "prompt engineering," you'll spend more time getting up to speed. If you understand the difference between Cloud Digital Leader concepts and deeper technical certifications like Associate Cloud Engineer, you'll have context for where this certification fits in the ecosystem.

Real-world applications after you pass

Certified professionals apply this learning when evaluating vendor solutions. Distinguishing marketing claims from actual capabilities matters.

They build business cases for AI investments that account for hidden costs like data preparation, ongoing monitoring, and model retraining. They establish responsible AI governance committees with clear decision-making authority. They assess legal and compliance risks across jurisdictions with different AI regulations. They communicate AI capabilities to non-technical audiences without either oversimplifying to the point of meaninglessness or drowning people in jargon that makes everyone's eyes glaze over.
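To make the "hidden costs" point concrete, here's a minimal Python sketch of a simple ROI calculation, with every figure invented for illustration. The `BusinessCase` class and its field names are hypothetical and don't come from any Google tool. The point is only to show how data preparation, monitoring, and retraining shrink a headline ROI.

```python
from dataclasses import dataclass


@dataclass
class BusinessCase:
    """Hypothetical annual figures for a generative AI initiative, in USD."""
    expected_benefit: float       # e.g. support hours saved, converted to dollars
    licensing_and_compute: float  # the visible, headline cost
    data_preparation: float       # hidden cost: cleaning, labeling, access controls
    ongoing_monitoring: float     # hidden cost: evaluation, human review, drift checks
    model_retraining: float       # hidden cost: periodic tuning or re-grounding

    def total_cost(self) -> float:
        return (self.licensing_and_compute + self.data_preparation
                + self.ongoing_monitoring + self.model_retraining)

    def roi(self, include_hidden_costs: bool = True) -> float:
        """Simple ROI = (benefit - cost) / cost."""
        cost = self.total_cost() if include_hidden_costs else self.licensing_and_compute
        return (self.expected_benefit - cost) / cost


# Illustrative numbers only; these are not benchmarks.
case = BusinessCase(
    expected_benefit=600_000,
    licensing_and_compute=200_000,
    data_preparation=120_000,
    ongoing_monitoring=60_000,
    model_retraining=20_000,
)
print(f"Headline ROI: {case.roi(include_hidden_costs=False):.0%}")  # 200%
print(f"ROI with hidden costs: {case.roi():.0%}")                   # 50%
```

With these made-up numbers, the headline ROI is 200%. Once the hidden costs are counted, it drops to 50%. It's the same project, but it leads to a very different board conversation.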

The 2026 version reflects rapid generative AI advancements. Multimodal models, upgraded Vertex AI capabilities, updated responsible AI frameworks, new compliance requirements like EU AI Act considerations. Expanded coverage of AI agents and assistants. Evolved practices for production deployment at enterprise scale. This isn't a static certification. The content changes as the technology and regulatory environment change, which means ongoing learning matters even after you pass. Maybe even more so.

The certification path and what comes next

The Google Generative AI Leader certification is an entry point for business professionals into Google Cloud's certification ecosystem. It often precedes or complements technical certifications. You might earn this first, then pursue Professional Machine Learning Engineer if you decide to deepen your technical knowledge. It also provides a foundation for specialized credentials in data analytics, machine learning, or cloud architecture as professionals transition from pure strategy roles into more hybrid positions.

Credential holders gain access to exclusive Google Cloud communities, early information about platform updates, and networking opportunities with AI leaders globally. Invitations to specialized events and webinars. Resources supporting continuous learning as generative AI technologies and practices evolve rapidly. The pace of change in this field means the community access and ongoing learning resources might be more valuable long-term than the initial certification itself. That's just how fast things move now.

This credential addresses growing demand for AI-literate business leaders as generative AI becomes mainstream. It responds to increased regulatory scrutiny requiring governance expertise. It meets market need for responsible AI implementation guidance and positions professionals for emerging roles like Chief AI Officer or AI Ethics Director. If you're positioning yourself for executive leadership in any organization that'll deploy generative AI systems, this certification demonstrates you've done the homework instead of just winging it.

Exam Format, Cost, and Logistics

What you'll pay and why it's cheaper

The Google Cloud Certified Generative AI Leader exam runs $125 USD in most regions. That number catches people off guard because they expect the usual $200 hit from Google's Professional-level certs, but the pricing actually makes sense when you think about what this credential's trying to accomplish: getting more leaders, product folks, and those "AI sponsor" types certified without it feeling like some high-stakes engineering gauntlet.

Regional pricing varies. Taxes too. Promotions pop up sometimes, and if you're at a company rolling this out as an internal upskilling requirement, there might be voucher programs and occasional volume pricing for bulk purchases, though they don't exactly advertise it loudly. Ask your training coordinator.

Also? Don't overthink what the fee "includes". You're paying for a single exam attempt and the delivery platform. Not a bundled course. Not a Generative AI Leader study guide. And definitely not a built-in Generative AI Leader practice test. That's separate. Budget accordingly.

Registering and scheduling without drama

Registration goes through the Google Cloud certification portal, and you'll end up creating a Webassessor account (Kryterion's system). Different login. Different password. Of course it is.

Once you're in, you pick the exam, choose a delivery method, pick a date and time, and pay. Payment's typically credit card, but organizations can sometimes pay via purchase order, which matters if you're working through corporate learning budgets and those delightful procurement approvals. After checkout, you get a confirmation email with your appointment details and the instructions for launch day.

Rescheduling flexibility? Decent. You can change the appointment up to 72 hours before your start time without penalty, which is basically the line in the sand for the whole Google/Kryterion ecosystem, and look, it's fair as long as you plan like an adult. Anything inside that 72-hour window usually means you lose the fee. No-shows included. Though documented emergencies can sometimes get exceptions if you go through support.
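If you want to sanity-check the cutoff before clicking "reschedule," the rule reduces to a single comparison. This small Python sketch assumes that the 72-hour window is measured against your scheduled start time and that both times are in the same time zone. The function name is hypothetical, and the portal's own clock is what actually counts.

```python
from datetime import datetime, timedelta

# Assumption: the 72-hour cutoff is measured against the scheduled start time,
# with both datetimes in the same time zone. The portal's policy is authoritative.
RESCHEDULE_CUTOFF = timedelta(hours=72)


def can_reschedule_free(appointment_start: datetime, now: datetime) -> bool:
    """True if 'now' is at least 72 hours before the appointment starts."""
    return appointment_start - now >= RESCHEDULE_CUTOFF


exam = datetime(2026, 3, 12, 9, 0)  # hypothetical Thursday 9:00 appointment
print(can_reschedule_free(exam, datetime(2026, 3, 9, 8, 59)))  # True: 72h01m out
print(can_reschedule_free(exam, datetime(2026, 3, 9, 9, 1)))   # False: 71h59m out
```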

How long the exam is, and what that means for pacing

Expect 50 to 60 questions in a 90-minute window. No scheduled breaks. That works out to roughly 1.5 to 1.8 minutes per question, which sounds chill until you hit a scenario question that reads like a mini internal memo about a company's data, compliance constraints, and stakeholder drama. Wait, that's basically every third question.

Time management matters here more than people expect. Flag stuff early. Move on fast. Come back later.
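The per-question math is easy to do in your head, but it's worth seeing how much a review buffer costs you. Here's a quick Python sketch. The 10-minute reserve is an arbitrary example, not an official recommendation.

```python
def pacing_budget(total_minutes: int, num_questions: int, review_reserve: int = 0) -> float:
    """Average minutes available per question after holding back review time."""
    return (total_minutes - review_reserve) / num_questions


# 90-minute exam, 50 or 60 questions, with and without a hypothetical 10-minute review buffer.
for n in (50, 60):
    print(f"{n} questions: {pacing_budget(90, n):.2f} min each, "
          f"{pacing_budget(90, n, review_reserve=10):.2f} min with 10 min held for review")
```

At 60 questions with 10 minutes held back, you're down to about 1.33 minutes per question. That's why moving on fast from sticky scenarios matters.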

Online proctoring sometimes allows very brief pauses, but the timer generally keeps running, so don't treat it like a break-enabled exam. If you need accommodations, request them up front rather than hoping the proctor'll "be cool about it" mid-session. They won't.

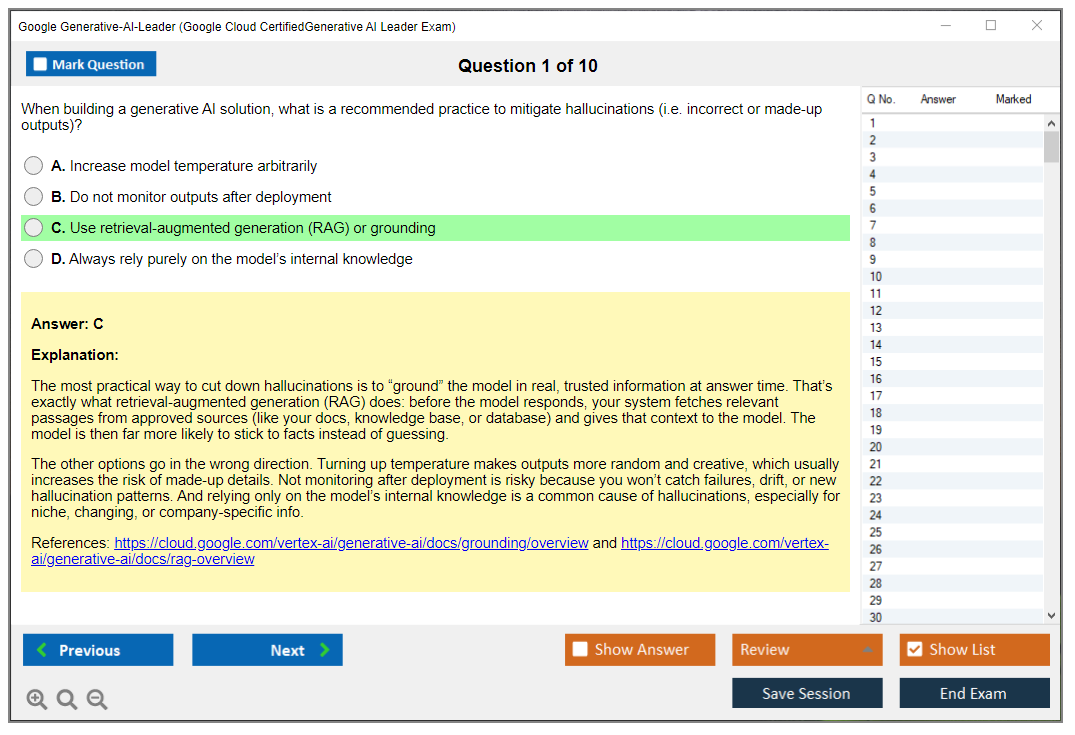

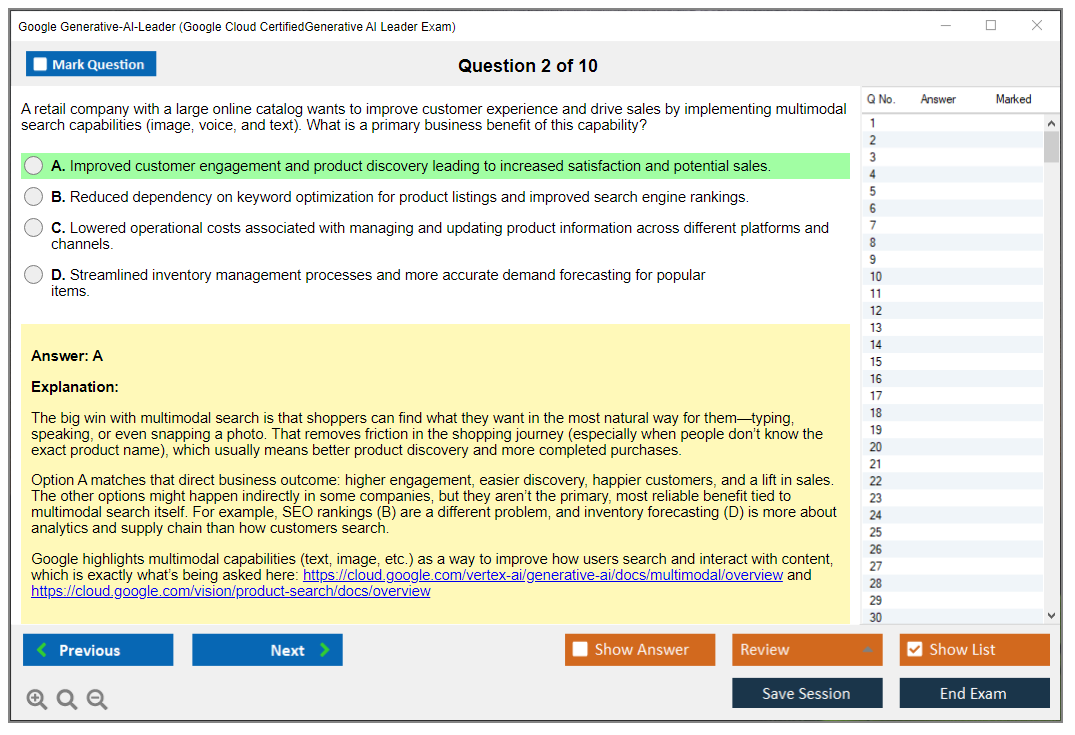

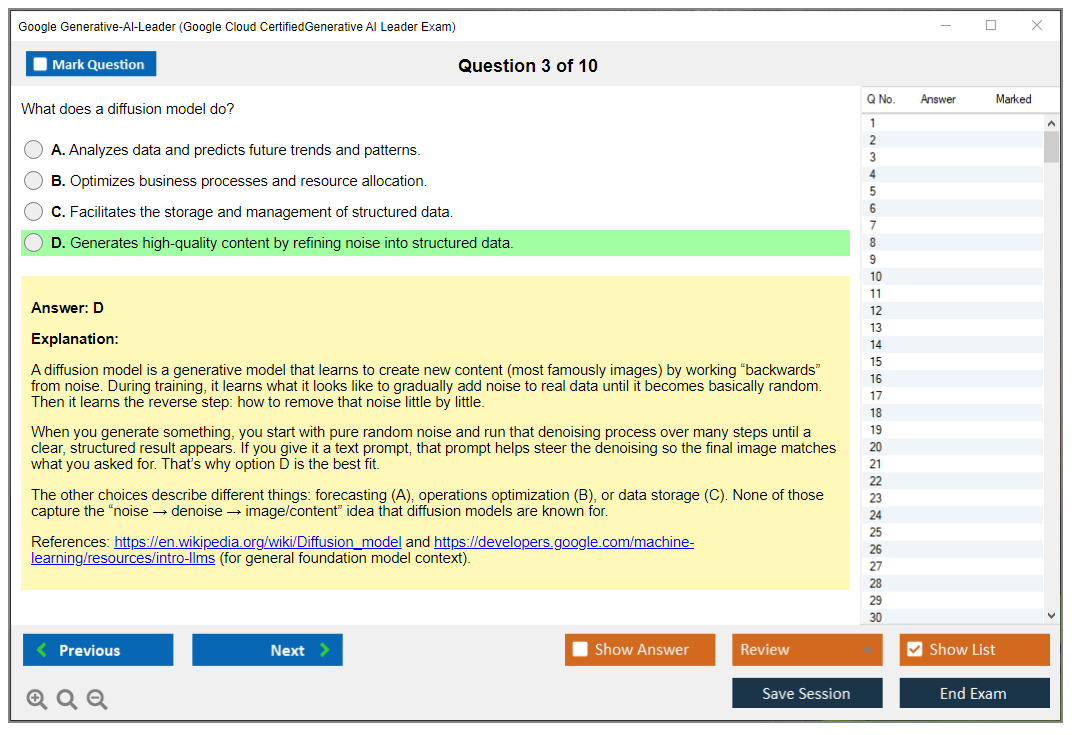

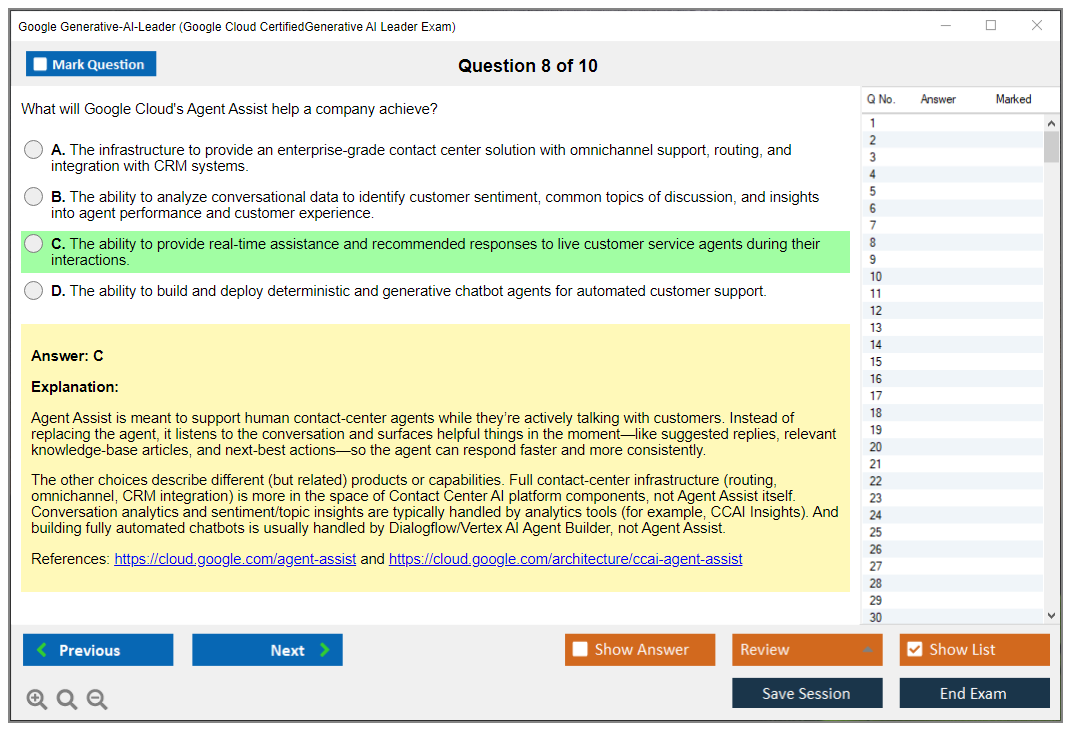

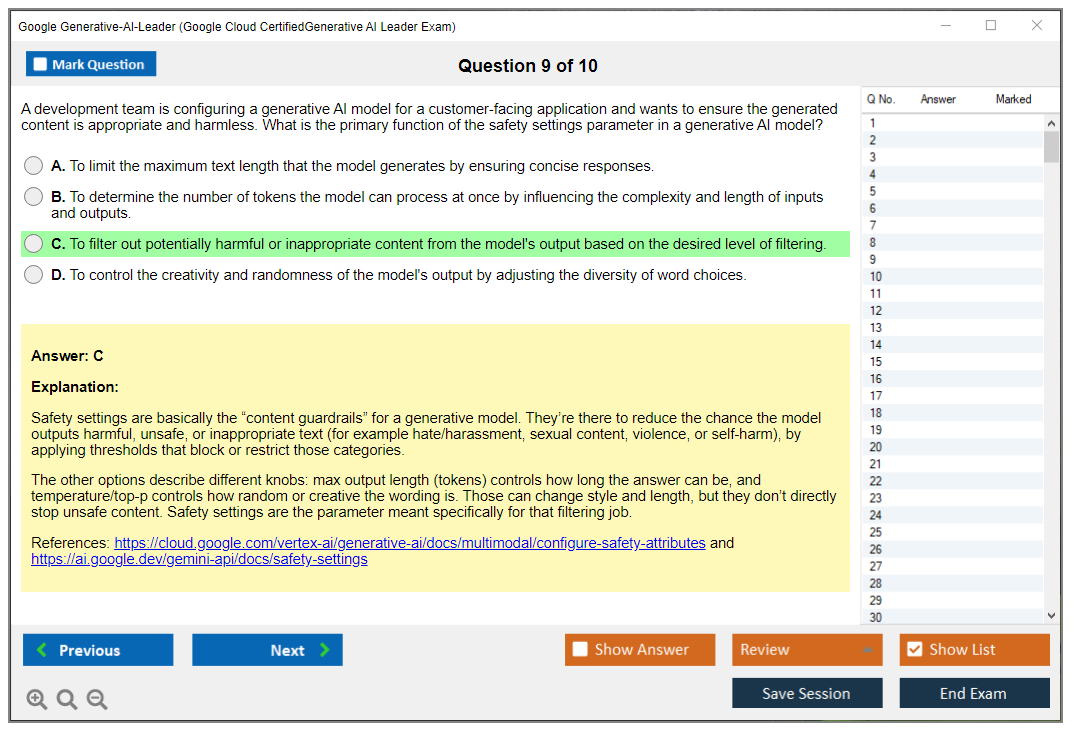

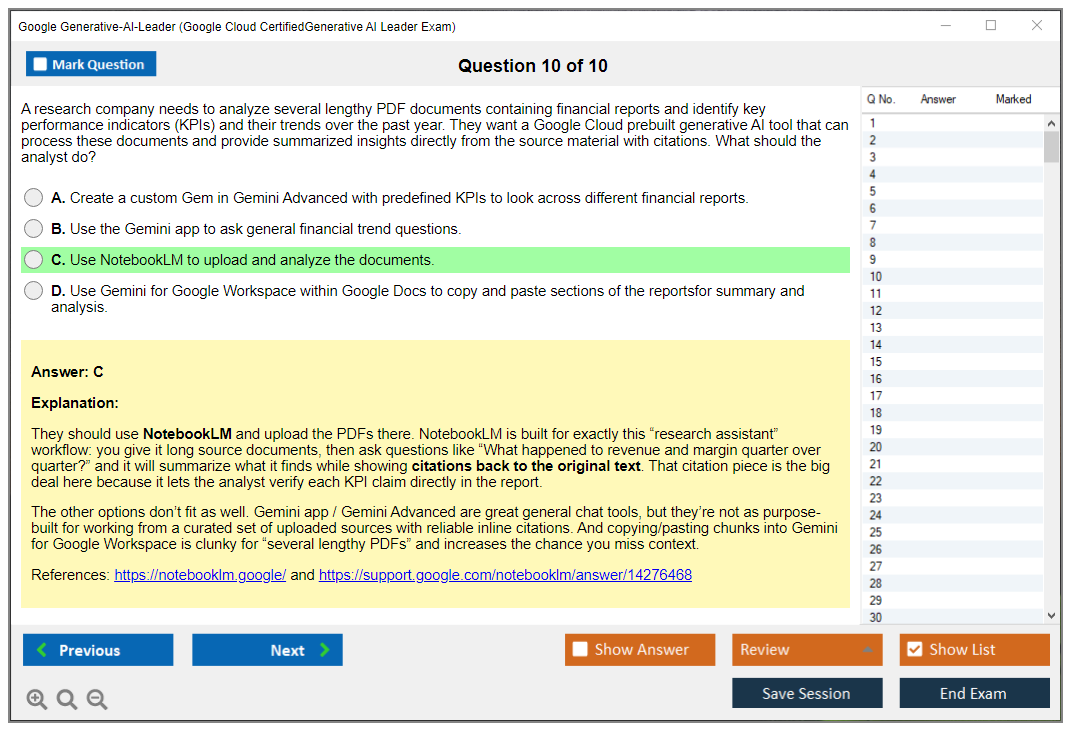

What the questions actually look like

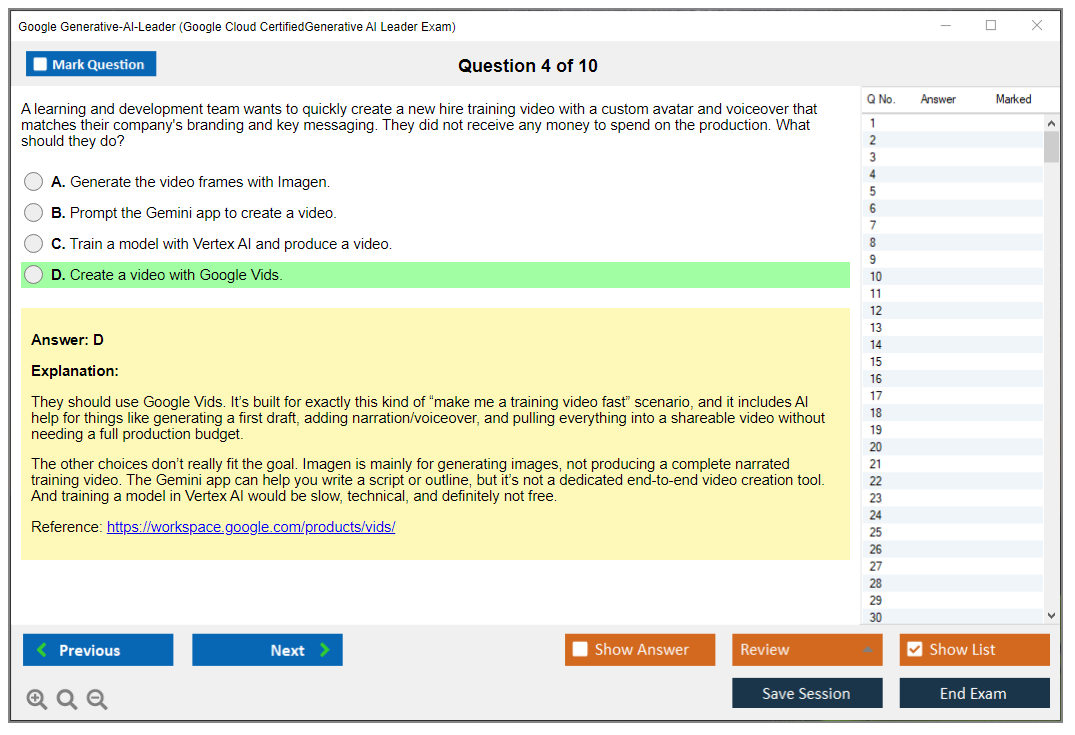

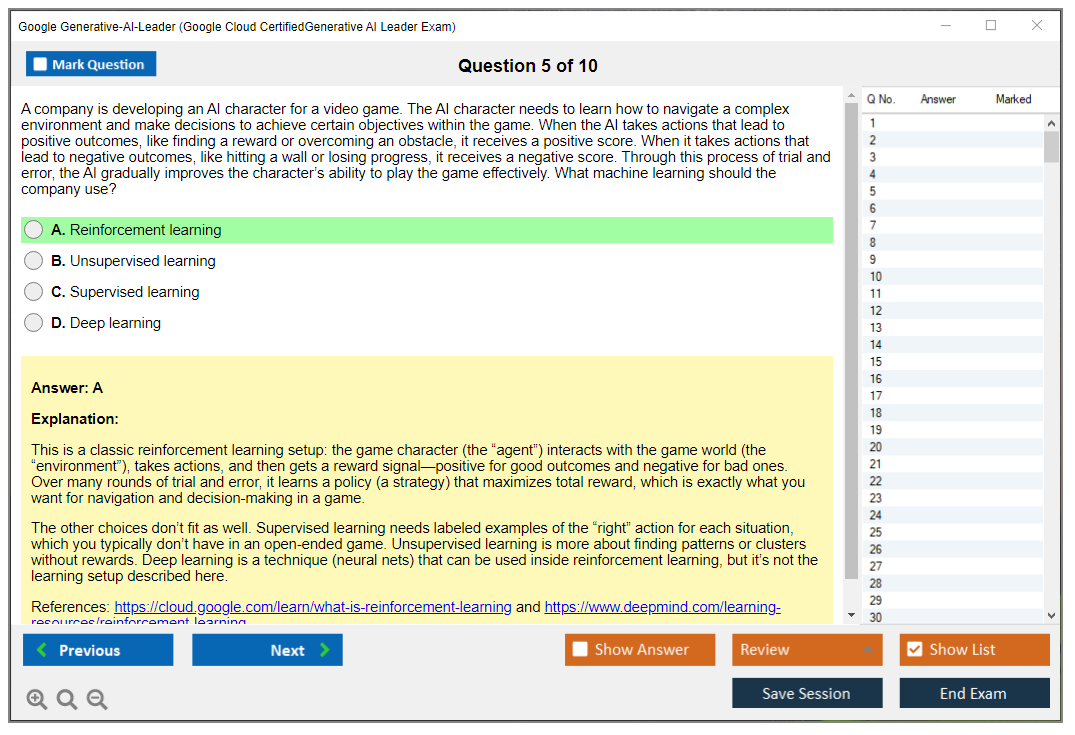

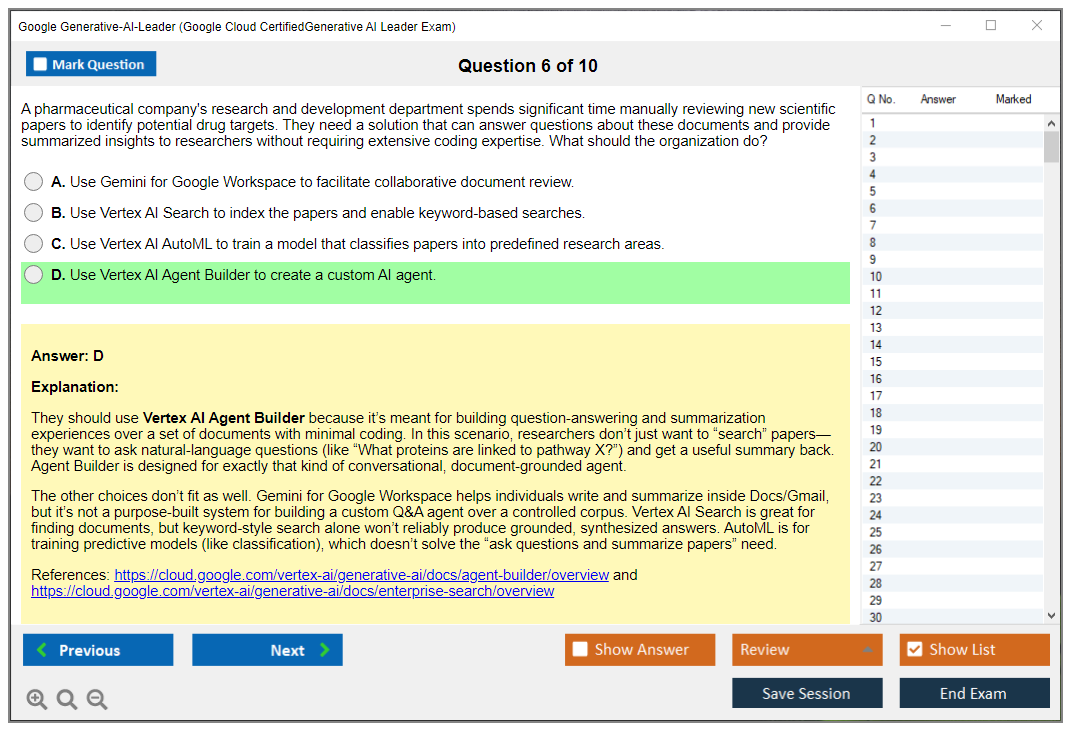

This exam's mostly multiple-choice and multiple-select, but the vibe is business scenario decision-making. You'll see prompts like "A healthcare company wants to deploy generative AI for customer support while meeting regulatory expectations" and then you pick the best next step, the most responsible approach, or the best governance guardrail.

Question types you should be ready for:

- Single-answer multiple choice. Straightforward stuff.

- Multiple-select, meaning "choose all that apply." These can sting because you can't brute-force them with test-taking tricks if you don't actually know the Generative AI Leader exam objectives.

- Case study style questions, where you get a chunk of organizational context and then answer several questions. These basically test your generative AI adoption strategy instincts and your ability to spot risk and compliance gaps.

I spent about 20 minutes on a question cluster like that during my first attempt. Turned out I'd misread the stakeholder priority in question two, which threw off my answer to question four. Came back during review time and caught it, but man, those connected scenarios will mess with you if you're not careful.

Responsible AI isn't optional here. Think responsible AI governance on Google Cloud: model misuse, data handling, human review, and auditability. Less "write Python" and more "don't get your company sued or embarrassed."

Online proctored vs test center: pick your pain

You can take it online with remote proctoring or in person at a Kryterion testing center. Both are valid. Neither is perfect.

Online proctoring is great for scheduling. You can often find evening slots, weekends, and short-notice appointments. That's huge if you're juggling work and you just want to get the Google Generative AI Leader certification checked off. But your environment has to be clean, quiet, and compliant, and the proctoring rules are strict enough that your own house can become the enemy.

Test centers are boring in a good way. The room's controlled. You're less likely to get flagged because your neighbor started mowing the lawn or your webcam decided to stop working. You do have to travel and deal with appointment availability.

Remote testing setup requirements (read this twice)

Remote testing requires a Windows or Mac computer. Not a Chromebook. Not a tablet. You need a webcam and microphone, stable internet, and a private room where nobody walks in. Government-issued photo ID's required. Desk cleared. No notes. No extra monitors. No "just a second while I close Slack".

You also need to run the system compatibility check 24 to 48 hours before exam time. Do it. A shocking number of failures happen because people show up with a corporate VPN running, screen-sharing software installed, or some security agent that makes the proctoring app freak out.

That compatibility check looks at browser support, webcam and mic access, bandwidth, and screen resolution. It also checks whether you've got conflicting software like VPNs, remote desktop tools, or recording software. If you skip it, you're basically gambling $125 on your laptop's mood that day.

What it's like at a testing center

Show up 15 minutes early. Bring valid photo ID. They'll make you store your phone and personal items in a locker, and you'll get scratch paper and something to write with. The room's monitored, usually with cameras, and the whole setup's designed to reduce distractions.

Temperature and lighting are usually fine. Sometimes a little cold. Bring a layer.

The upside? You're not fighting home Wi-Fi, roommates, kids, dogs, delivery drivers, or your own temptation to glance at a second screen. For a lot of people, the controlled environment's worth the hassle.

Languages and release timing

The exam's available in English, Japanese, Spanish, and Portuguese (Brazilian). More languages might get added based on demand, but don't assume parity across versions when Google refreshes content. The English exam tends to get updates first when the blueprint shifts due to platform changes or new best practices, especially around governance and platform components like Vertex AI fundamentals for leaders.

If you're bilingual, taking it in the language you read fastest can be a real advantage, because speed matters with 90 minutes and scenario prompts.

Accessibility accommodations (use them if you need them)

Google Cloud certification offers accommodations for candidates with disabilities. Common ones include extended time (often time-and-a-half), screen reader compatibility, separate testing rooms at centers, and breaks that don't penalize your timer. Alternative formats can be possible too.

You request accommodations during registration and submit documentation, typically at least 10 business days before your scheduled exam. Don't wait until the week of the test. The process is admin-heavy. Plan for it.

Exam day flow and proctor rules

For online testing, you launch the exam through the Webassessor portal. You'll do identity verification with your photo ID on camera, then a 360-degree room scan. You agree to non-disclosure terms. A proctor may greet you via chat and can message you during the exam if they see something questionable.

Timer stays visible. Once you start? It's go time.

Prohibited items and behaviors are strict: no phones, no smartwatches, no reference materials, no additional monitors, no headphones, usually no food or drinks (water's sometimes allowed depending on policy and proctor). No other people in the room. If you break the rules, they can terminate the session, invalidate the score, and potentially suspend you from the program. Yes, really.

Breaks, interruptions, and what happens if things go wrong

There aren't scheduled breaks. The 90-minute clock runs continuously.

If you hit technical problems, contact the proctor right away through chat. Legit issues can lead to a restart or a reschedule, but it depends on what happened and what the logs show. If your personal life interrupts you (like someone walks in or you step away) expect the attempt to be forfeited. Harsh, but that's the deal with remote proctoring.

Group testing and corporate rollout options

If your company's certifying multiple employees, there are corporate options. Bulk voucher purchases can come with volume discounts. Some organizations coordinate group sessions at designated centers. There are also enterprise dashboards for tracking progress, which is handy when HR decides the Generative AI Leader certification is part of a broader AI literacy push.

This is where the exam's lower price helps. It's easier to justify across a whole department, especially when there aren't strict Google Cloud Generative AI certification prerequisites and the content's oriented toward leaders, governance, and adoption rather than deep engineering.

One last thing people ask a lot: Generative AI Leader passing score details aren't usually published in a clean "you need 72%" way, and Google can change scoring models. Treat it like a professional exam. Aim to comfortably cover the objectives, understand AI risk management and compliance, and know the Google Cloud pieces at a decision-maker level. Also, keep an eye on Generative AI Leader certification renewal rules once Google posts the cadence for this credential, because these AI exams tend to change fast when platforms shift.

Passing Score, Results, and Performance Metrics

What you're actually scored on

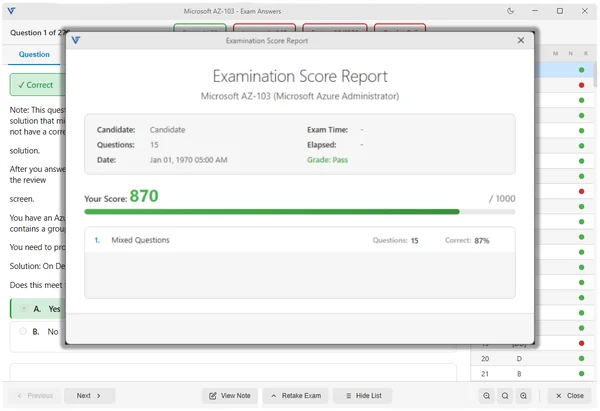

Look, Google Cloud doesn't publish the exact Generative AI Leader passing score, which frustrates everyone studying for this thing. Most certification programs work the same way, though. They use scaled scoring, and the cut point typically lands somewhere around 70-75% correct answers. But here's where it gets interesting: it isn't just about raw percentage.

Google uses something called item response theory, which is a fancy way of saying they account for question difficulty. Not all questions are created equal, right? Some questions test basic knowledge like "what's the difference between generative AI and discriminative AI" while others dig into complex scenarios about responsible AI governance frameworks or ROI calculation for enterprise deployments. The complexity range is wild when you think about how they're testing both strategic vision and technical literacy in the same exam.

This actually reminds me of a time I was helping a colleague prep for this exam. She kept getting frustrated that practice questions seemed inconsistent in difficulty. Turned out that was exactly the point: the real exam mixes easy recall questions with complex scenario-based ones that test judgment, not just memorization. Once she understood that pattern, her whole study approach shifted.

The scaling ensures that if you get a harder version of the exam, you're not penalized compared to someone who got easier questions. Two candidates answering completely different question sets can achieve comparable scores because the system adjusts for difficulty variations.

The psychometric analysis behind this is actually pretty sophisticated. They track how questions perform across thousands of test-takers, identify which ones are harder or easier based on statistical performance data, and adjust scoring accordingly. So you might answer 65% of questions correctly but still pass if you got the tougher set. Or answer 72% and fail if yours was easier. The goal is measuring whether you meet the competency threshold, not just counting correct answers.
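Google doesn't publish its scoring model, so treat this as a generic illustration of the idea rather than how this exam is actually scored. It uses the simplest item response theory model, the one-parameter Rasch model. In that model, the chance of a correct answer depends on the gap between candidate ability and item difficulty. The forms and difficulty values are hypothetical.

```python
import math


def p_correct(theta: float, difficulty: float) -> float:
    """Rasch (1PL) model: probability a candidate of ability theta answers correctly."""
    return 1.0 / (1.0 + math.exp(-(theta - difficulty)))


def estimate_ability(raw_score: int, difficulties: list[float]) -> float:
    """Maximum-likelihood ability: the theta whose expected score equals the raw score.

    Under the Rasch model, the expected score rises monotonically with theta,
    so a simple bisection finds the solution (requires 0 < raw_score < n).
    """
    n = len(difficulties)
    if not 0 < raw_score < n:
        raise ValueError("MLE is undefined for perfect or zero scores")
    lo, hi = -10.0, 10.0
    for _ in range(100):
        mid = (lo + hi) / 2
        expected = sum(p_correct(mid, b) for b in difficulties)
        if expected < raw_score:
            lo = mid  # ability too low to explain this many correct answers
        else:
            hi = mid
    return (lo + hi) / 2


# Hypothetical 10-item forms: identical raw score, different difficulty.
easier_form = [-1.0] * 10
harder_form = [0.5] * 10
print(f"{estimate_ability(7, easier_form):.2f}")  # -0.15
print(f"{estimate_ability(7, harder_form):.2f}")  # 1.35
```

Both hypothetical candidates got 7 of 10 right. The one facing the harder form still comes out with a noticeably higher ability estimate. In this kind of setup, a pass/fail decision compares that estimate against a cut score, not the raw count. Operational programs typically use richer models and many more items.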

Getting your results (the waiting game)

Here's the good news: you'll know immediately whether you passed or failed.

As soon as you click that final submit button, the testing interface shows a provisional pass/fail notification right there on screen. It's provisional because Google Cloud still needs to validate everything on their end. In my experience, though, that preliminary result almost always holds. Technical glitches happen. Irregularities get flagged. But for most people, that immediate feedback is the real answer.

The official confirmation takes longer. Within 7-10 business days, you'll get an email from Google Cloud with your formal results. This is when they update their certification databases, assign your credential ID, and prepare your digital badge. Sometimes it's faster. I've seen people get official confirmation in 3-4 days. Plan for the full 10 business days before you start worrying though.
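Business days trip people up because weekends don't count. Here's a small Python sketch for finding the end of a business-day window. It assumes Monday through Friday are the only working days and ignores public holidays, which would push the date further out. The exam date is hypothetical.

```python
from datetime import date, timedelta


def add_business_days(start: date, business_days: int) -> date:
    """Step forward one calendar day at a time, counting only Monday-Friday.

    Assumption: weekends are the only non-working days; public holidays are ignored.
    """
    current = start
    remaining = business_days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # Monday=0 ... Friday=4
            remaining -= 1
    return current


exam_day = date(2026, 3, 12)  # hypothetical Thursday exam
print(add_business_days(exam_day, 7))   # 2026-03-23: earliest end of the window
print(add_business_days(exam_day, 10))  # 2026-03-26: when to start following up
```

For a Thursday, March 12, 2026 exam, 7 business days lands on Monday, March 23, and 10 business days lands on Thursday, March 26.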

Breaking down your performance report

The official score report arrives within 10 business days, and honestly, it's more useful than just a pass/fail notification. You get a performance breakdown by exam domain, showing where you were strong and where you struggled. For candidates who passed, this helps identify areas for continued learning. For those who didn't pass? It's your roadmap for the next attempt.

The domains covered include:

- generative AI fundamentals (what models are, how they work, basic concepts)

- use case identification and ROI assessment (business value stuff)

- responsible AI governance (ethics, bias, transparency, compliance)

- Google Cloud generative AI ecosystem knowledge (Vertex AI, available models, tools)

- implementation considerations (data requirements, security, integration challenges)

Your report shows performance in each area.

But here's the thing: you won't get question-level feedback. Google doesn't tell you "you missed question 37 about Gemini model capabilities" because that would compromise exam integrity. They protect question content aggressively. What you do get is enough to know whether your weakness is strategic thinking, technical understanding, or governance frameworks.

Decoding "above target" and "below target"

Your score report doesn't give you a numerical percentage for each domain. Instead, you see indicators like "above target," "at target," or "below target" for each section.

This can be confusing at first because you might see "below target" in one domain but still pass overall. The exam measures overall competency, not perfection in every single area. You could be below target in, say, implementation considerations (because you're a business leader without deep technical background) but above target in use case identification and responsible AI governance, and that's fine. The system allows some knowledge gaps while validating your core abilities as a generative AI leader.

I've talked to people who passed with two "below target" domains and others who failed with only one. It depends on how far below target you were and how you performed in other areas. The weighting isn't published, but it's reasonable to assume some domains matter more than others for this leadership-focused certification. If you're preparing, I'd focus heavily on use cases, ROI assessment, and responsible AI governance, since those align most directly with the exam's purpose.

When you don't pass (it happens)

Not gonna lie, failing sucks. But the Generative AI Leader exam isn't trivial, especially if you're coming from a non-technical background or haven't worked directly with generative AI projects yet.

First thing: analyze that domain performance feedback seriously. If you're below target in generative AI fundamentals, you need to spend more time understanding how large language models actually work, what training data means, the difference between fine-tuning and prompt engineering. If responsible AI governance tripped you up, dive deeper into Google's AI principles, bias mitigation strategies, transparency requirements, regulatory considerations.

Second, get better practice materials. The Generative AI Leader Practice Exam Questions Pack for $36.99 gives you realistic question exposure that mirrors what you'll see on the real exam. Not just content coverage but question style, scenario complexity, the way Google phrases things. I've seen people pass on their second attempt purely because they understood question format better.

Third, consider instructor-led training for concepts that aren't clicking. Some people learn better with guided instruction, especially for strategic topics like ROI calculation or governance framework implementation. Self-paced learning works great for facts and definitions, less so for application and judgment calls.

The retake policy explained

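You've got a mandatory 14-day waiting period after a failed attempt. Not 14 business days. Fourteen calendar days. So if you fail on a Monday, the earliest you can retake is two weeks from that Monday. That 14-day wait applies after a first failed attempt. Google Cloud's general retake policy lengthens it after later failures (60 days after a second, a year after a third), so check the current policy before you plan around it.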

Each attempt requires paying the full exam fee again. There's no discount for retakes, no bundle pricing, nothing. Google Cloud doesn't limit total attempts though, so you can keep trying until you pass. But honestly, I'd recommend substantial preparation improvement rather than just rescheduling immediately. Use those 14 days (and probably more) to actually address the knowledge gaps. Cramming the same material again rarely works.
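Because the wait is in calendar days, the date math is just addition, weekends included. Here's a tiny Python sketch for a first retake. The dates are hypothetical, and it doesn't model any longer waits that may apply after repeated failures.

```python
from datetime import date, timedelta

# The 14-day wait is in calendar days, so weekends count toward it.
RETAKE_WAIT = timedelta(days=14)


def earliest_retake(failed_on: date) -> date:
    """Earliest calendar date for a first retake, per the 14-day rule described above."""
    return failed_on + RETAKE_WAIT


failed = date(2026, 3, 9)  # hypothetical Monday
print(earliest_retake(failed), earliest_retake(failed).strftime("%A"))  # 2026-03-23 Monday
```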

If you're also pursuing other Google Cloud certifications, you might consider whether the Cloud Digital Leader exam makes sense as a foundation before retaking Generative AI Leader. It covers broader cloud concepts that can strengthen your overall understanding, though it's not a prerequisite.

What happens when you pass

Your credential activates within 10 business days of passing. You'll receive a digital badge through Credly, which is the platform Google Cloud uses for certification management. The badge is pretty slick. You can add it to your LinkedIn profile, include it in email signatures, embed it on websites, whatever.

You also get a downloadable certificate from the Google Cloud certification portal. Print it. Frame it. Put it on your wall if that's your thing. More importantly, your certification appears in Google's public certification directory, which employers can use to verify credential authenticity.

The Credly badge includes a public verification link that shows your certification details, issue date, and confirms it's legitimate. This matters because certification fraud is real, and employers increasingly verify credentials before making hiring decisions. Having that verifiable link makes you stand out.

Sharing and verification

Successful candidates control how widely their certification is shared. Google Cloud doesn't automatically notify anyone. Not your employer, not your LinkedIn connections, nobody. You decide what to share and when.

Most people add the Credly badge to LinkedIn immediately because it's easy and visible. The Professional Cloud Architect and Professional Machine Learning Engineer certifications often appear alongside Generative AI Leader on profiles, showing broader Google Cloud expertise.

For job applications, include the certification on your resume with the credential ID and issue date. Employers can verify through Google's directory using your name and that ID. Some companies require verified credentials before considering candidates for AI leadership roles, so making verification easy works in your favor.

If something went wrong during your exam

Technical issues happen. Internet connection drops, testing software crashes, proctoring systems malfunction. If you experienced problems that really affected your performance, you can submit an appeal through Google Cloud support within 30 days of your exam date.

Document everything. Screenshots of error messages, timestamps of when issues occurred, detailed description of what happened. The more evidence you provide, the better your chances of a successful appeal. Google Cloud reviews claims individually and may offer an exam retake if they determine administration problems legitimately impacted your results.

But be realistic about what constitutes a valid appeal. "The questions were harder than I expected" isn't grounds for a retake. "My testing software crashed three times and I lost 20 minutes" probably is.

Your results stay private

Exam scores remain confidential between you and Google Cloud. They're not published publicly, not shared with employers without your consent, not posted anywhere. You control disclosure completely through badge sharing, resume inclusion, and verification link distribution.

This matters for people who fail and want to retake without their employer knowing. Your privacy is protected. Obviously if you passed and want to share that achievement, go for it, but it's entirely your choice.

Exam Difficulty, Preparation Timeline, and Common Challenges

Where this exam really sits on the difficulty scale

The Google Cloud Certified Generative AI Leader exam is beginner to intermediate. Not "easy," though. It's very doable.

Look, compared to Professional Cloud Architect or Professional Machine Learning Engineer, this thing's way more accessible. That's mostly because you're not being tested on building anything in code, tuning hyperparameters, or debugging a pipeline at 2 a.m. Instead, you're being tested on whether you understand what generative AI can and can't do for an organization, what good governance looks like, and how Google Cloud positions products like Vertex AI in that story. If you've never touched cloud concepts, AI terms, or basic strategy frameworks, it can still feel surprisingly tough. The thing is, the questions assume you can connect dots across tech, risk, and business outcomes, which honestly isn't something most people practice.

I remember my first crack at a cloud cert after years in traditional IT. Spent the whole week before convinced I was ready, then sat down and realized half the questions were asking me to think three steps ahead instead of just recalling facts. Different muscle entirely.

Why business folks usually find it manageable

No coding. No labs. No "write the query."

Honestly, that's the main reason executives, managers, consultants, product leads, and even sales engineering types can pass without living in a terminal. The exam leans hard into conceptual understanding, business case evaluation, stakeholder alignment, and governance principles. If you spend your days doing budget conversations, roadmap debates, vendor comparisons, and change management, you already have the mental muscles the exam wants, even if you can't explain backpropagation without sweating.

That said, "business-friendly" doesn't mean "common sense." You've still gotta learn the vocabulary, especially the Google Cloud-flavored vocabulary, and you've gotta get comfortable with scenario questions where multiple answers sound reasonable until you notice the one that best matches a responsible deployment, a realistic ROI story, or a compliance constraint.

The level of technical knowledge you actually need

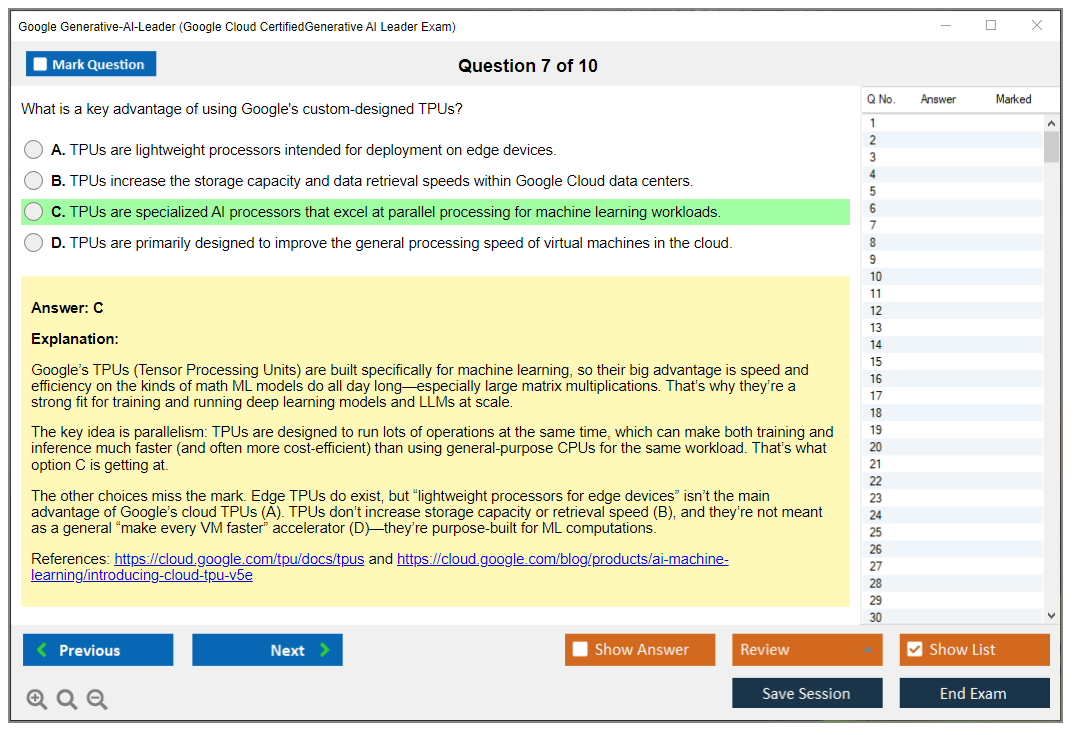

High level only. Concepts, not syntax. Names matter.

You should understand how generative AI models work in broad strokes. Like what an LLM's doing when it predicts the next token, why embeddings help with semantic search, what prompt engineering's trying to control, and what fine-tuning changes versus retrieval-augmented generation. You also want a conceptual grasp of a machine learning pipeline, meaning data collection, prep, evaluation, deployment, monitoring, and feedback loops, but you don't need to implement any of it.

On the Google side, Vertex AI fundamentals for leaders are fair game. Expect to see Vertex AI as the umbrella platform, plus related pieces like Generative AI Studio (labeled Vertex AI Studio in the current console), Model Garden, and foundation models available through Google Cloud (Gemini, Imagen, and sometimes third-party options). The trap's mixing up what each component's for. More on that later. If you're nervous about "technical" content, watching a couple of Vertex AI demos and clicking around the console in read-only mode helps a lot because you start attaching words to screens and workflows, even if you never deploy a model.

Business acumen is the real passing factor

ROI math shows up. Risk shows up more. Change management's everywhere.

Not gonna lie, a lot of people underestimate how much business strategy and governance thinking matters here. You need to be comfortable with ROI calculation at a practical level (costs, expected benefit, time horizon, adoption friction), stakeholder analysis (who says yes, who blocks, who owns data), and change management principles (training, process updates, adoption measurement). You also need working awareness of regulatory and compliance expectations, plus vendor evaluation criteria like data residency, IP handling, auditability, and how the provider treats customer prompts and outputs.
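The exam won't ask you to code, but the ROI arithmetic is easier to reason about once you see it written out. Here's a minimal Python sketch, assuming a simple undiscounted ROI over a fixed horizon. The `GenAIBusinessCase` fields and every dollar figure are hypothetical, chosen only to show how adoption friction moves the result.

```python
from dataclasses import dataclass, replace


@dataclass
class GenAIBusinessCase:
    """Hypothetical inputs for a simple ROI estimate; all figures are illustrative."""
    upfront_cost: float          # one-time build, integration, and training costs
    annual_run_cost: float       # recurring platform, usage, and support costs
    annual_gross_benefit: float  # yearly benefit if every intended user adopts the tool
    adoption_rate: float         # share of that benefit realistically captured (0..1)
    years: int                   # time horizon for the business case


def simple_roi(case: GenAIBusinessCase) -> float:
    """Return undiscounted ROI over the horizon: (realized benefit - total cost) / total cost."""
    total_cost = case.upfront_cost + case.annual_run_cost * case.years
    realized_benefit = case.annual_gross_benefit * case.adoption_rate * case.years
    return (realized_benefit - total_cost) / total_cost


# Made-up example: a support-ticket summarization project over three years.
case = GenAIBusinessCase(
    upfront_cost=200_000,
    annual_run_cost=100_000,
    annual_gross_benefit=400_000,
    adoption_rate=0.6,
    years=3,
)

# Sensitivity check: same project, different adoption outcomes.
for rate in (0.4, 0.6, 0.8):
    scenario = replace(case, adoption_rate=rate)
    print(f"adoption {rate:.0%}: ROI {simple_roi(scenario):.0%}")
```

With these made-up numbers, ROI swings from -4% at 40% adoption to 44% at 60% and 92% at 80%, which is why scenario details about training and change management usually aren't filler. A real business case would also discount future cash flows; this sketch leaves that out to keep the adoption effect visible.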

This exam's basically asking: can you explain a generative AI adoption strategy to leadership, pick a sensible use case, manage risk, and choose the right Google Cloud building blocks, without getting lost in technical rabbit holes.

Preparation timelines that match real backgrounds

Two weeks can work. Six weeks can be smarter. It depends on your starting point.

If you're a business leader who already understands cloud basics, you can usually prep in two to three weeks with about 15 to 20 total hours of focused study. If you're new to both AI and cloud computing, plan four to six weeks and more like 30 to 40 hours, because you're learning vocabulary plus how the pieces fit. If you already have a Google Cloud cert (even Cloud Digital Leader) or you've been close to AI projects, one to two weeks and 10 to 15 hours might be enough, especially if you're disciplined and you do timed practice.

There are two prep styles that work. An intensive approach is daily two- to three-hour sessions over a couple of weeks, which is great if you can block time and keep momentum. An extended approach is six to eight weeks at four to six hours weekly, which is honestly better for busy execs because you can absorb concepts, sleep on them, and come back with clearer thinking instead of cramming terminology the night before.

Common challenge: understanding technical concepts without building anything

It sounds abstract. It feels hand-wavy. That's the point.

A lot of business-focused candidates struggle with translating high-level AI descriptions into something concrete, because the exam questions assume you know what "embeddings plus vector search" implies or why "fine-tuning" might be a bad idea for a small dataset with privacy constraints. The fix isn't learning Python. The fix is pairing concepts with visuals and outcomes: watch Vertex AI demos, study a few architecture diagrams, and spend 30 minutes exploring the Google Cloud console UI so product names stop being just words.

Then do use case mapping. Take a scenario like "customer support summarization" and connect it to: data sources, privacy, evaluation metrics, and deployment risks. That's how the exam thinks.

Common challenge: responsible AI and governance gets complicated fast

Governance is big. Regulations are messy. You can't wing it.

Google Cloud's responsible AI framing covers fairness, transparency, accountability, privacy, and security. Candidates often know the buzzwords but not how they drive decisions: what you do when a model is producing biased outputs, how you document model behavior for an audit, or how privacy rules change what data you can send to a model. You don't need to be a lawyer, but you should be aware of major regulatory themes like the EU AI Act's implications, common data privacy expectations, and practical mitigations like human review, access controls, data minimization, and monitoring.

This is also where AI risk management and compliance show up in scenario questions, because the "best" answer is usually the one that reduces organizational risk while still delivering business value, not the one that sounds the most ambitious.

Common challenge: telling Google Cloud services apart

Vertex AI is broad. Model Garden is specific. Generative AI Studio's its own thing.

Candidates mix up Vertex AI components, Generative AI Studio capabilities, Model Garden offerings, and which foundation model's appropriate. You should know when you'd look at Gemini for general language tasks, Imagen for image generation, and when a third-party model might be considered. And you should know what "use a managed platform" implies for governance, security, and operational overhead versus building your own stack.

A good way to drill this is to build a quick "which tool, why" table in your notes, then test yourself with scenario prompts. If you want extra reps, a Generative AI Leader practice test helps because you see the same service names in different contexts until they stick.

Common challenge: scenario questions are sneaky

They're long. Multiple constraints. One best answer.

The exam loves realistic business scenarios where you've gotta pull together concepts: evaluate use case viability while considering data privacy, assess ROI while accounting for implementation risk, or recommend a governance approach for a regulated industry. This is where memorizing definitions fails you, because two choices can both be "true," but only one fits the scenario's constraints.

My rule: read the last sentence first. Then scan for constraints like regulated data, limited budget, timeline pressure, or "executive needs a summary by Friday." Those details tell you what the exam wants.

Common challenge: AI changes every month

Features change. Names change sometimes. Principles stick.

Generative AI moves fast, and Google updates models and tooling frequently. So don't obsess over tiny feature checklists that might shift. Focus on fundamentals, architectural patterns, and strategic frameworks that stay stable. Like governance, evaluation, security posture, and how you decide between RAG and fine-tuning. Still, keep an eye on major platform updates so you don't study something that's clearly outdated.

Time pressure and exam-day nerves

About 90 seconds per question. Flag and move on. Review at the end.

With roughly 90 seconds per question, you need a plan. I mean, if you overthink early questions, you'll rush later and miss easy points. Immediately flag uncertain questions, eliminate obviously wrong answers, and keep moving. Save 15 to 20 minutes at the end to revisit flagged items with a calmer brain and better time awareness.
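Reserving review time changes your first-pass pace more than people expect. A small Python sketch, assuming a 90-minute exam with 60 questions (consistent with the roughly 90 seconds per question above); treat both numbers as assumptions and plug in the official figures when you book. The `pacing_plan` helper is hypothetical.

```python
def pacing_plan(total_minutes: float, questions: int, review_reserve_minutes: float) -> dict[str, float]:
    """Split exam time into a first pass plus a reserved review block."""
    if review_reserve_minutes >= total_minutes:
        raise ValueError("review reserve must be shorter than the whole exam")
    # Average time if you spread the full exam evenly across questions.
    average_seconds = total_minutes * 60 / questions
    # Time per question on the first pass once the review block is held back.
    first_pass_seconds = (total_minutes - review_reserve_minutes) * 60 / questions
    return {
        "average_seconds_per_question": average_seconds,
        "first_pass_seconds_per_question": first_pass_seconds,
    }


# Assumed exam shape: 90 minutes, 60 questions.
for reserve in (15, 20):
    plan = pacing_plan(total_minutes=90, questions=60, review_reserve_minutes=reserve)
    print(f"reserve {reserve} min: "
          f"{plan['first_pass_seconds_per_question']:.0f} s per question on the first pass")
```

Under those assumptions, holding back 15 minutes drops your first-pass budget from 90 to 75 seconds per question, and holding back 20 drops it to 70. That gap is exactly why flagging and moving on matters.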

Test anxiety's real, especially for business professionals who don't take technical exams often. Simulate timed conditions using a Generative AI Leader practice test, and do at least one full run where you treat it like the real thing. No pausing, no checking notes. Also, keep perspective. This cert helps your credibility. It doesn't define your career. Retakes exist.

Quick benchmark vs other Google Cloud exams

Easier than Professional. Close to Cloud Digital Leader. More AI-specific depth.

The Google Cloud Certified Generative AI Leader exam is substantially easier than Professional Cloud Architect and Professional Machine Learning Engineer because those require deeper technical knowledge and, honestly, lived experience. It's roughly comparable to Cloud Digital Leader in accessibility, but more specialized, with deeper AI terminology, governance expectations, and product ecosystem knowledge.

If you want a structured way to rehearse question style and pacing, I'd look at the Generative-AI-Leader Practice Exam Questions Pack ($36.99). It's also a decent confidence-builder if you're the kind of person who needs to see how the exam phrases things before the concepts "click," and it can help you identify weak spots fast. If you do it, don't just memorize answers. Take notes on why you missed questions, then loop back to the Generative AI Leader exam objectives and clean up the gaps.

Generative AI Leader Exam Objectives and Domain Breakdown

The Google Cloud Certified Generative AI Leader exam is not your typical technical certification. It targets people who need to make strategic decisions about gen AI without necessarily writing code themselves (think executives, product managers, business analysts, and IT leaders who are steering their organizations through this AI transformation). The exam objectives are structured to test whether you can actually drive adoption, not just talk about it at a high level.

How the five domains work together

The exam structure spans five major domains, and here is the thing: you cannot just master one area and hope to pass.

Questions get distributed across all domains based on importance, which means you need full knowledge rather than deep specialization in a single topic. Some people I know tried to focus only on the technical bits or only on governance, and they struggled. Why? The exam expects you to connect business strategy with technical implementation with risk management. It mirrors real-world leadership challenges in a way that actually makes sense.

The weighting matters too.

Domain 1 covers generative AI fundamentals and core concepts at roughly 20% of the exam. That's a significant chunk, but not the majority. You will also face questions on use cases and value assessment, responsible AI and governance, the Google Cloud ecosystem (especially Vertex AI), and implementation considerations around data, security, and operations. The domains are not perfectly balanced, which tells you something about what Google thinks leaders need to prioritize.

What domain 1 actually tests

Domain 1 validates understanding of what generative AI is and how it differs from traditional AI and machine learning.

Not gonna lie, this sounds basic until you realize how many leaders still confuse gen AI with predictive analytics or classification models. The exam digs into foundational concepts like large language models, transformer architectures, tokens and embeddings, the difference between training and inference, pre-training versus fine-tuning, prompt engineering basics, and critical limitations including hallucinations and bias.

You need to explain this stuff to non-technical stakeholders in a way that actually makes sense. The exam might present a scenario where a CFO asks why the company should invest in gen AI instead of traditional ML, and you need to articulate the difference clearly.

Discriminative models classify. Predictive models forecast based on existing patterns. Generative models create new content. Seems simple enough. But the exam tests whether you can apply that distinction in business contexts, which is where most people stumble.

Foundation models are huge here. How are they created? How do you adapt them for specific use cases? When does fine-tuning make sense versus just using better prompts? These are not academic questions. They affect budgets and timelines directly.

And you need to recognize capabilities and limitations across modalities: text generation, image synthesis, code generation, multimodal applications. Each has different maturity levels and practical constraints.

Making gen AI concepts concrete for business audiences

Candidates must be able to break down complex technical concepts without dumbing them down to uselessness.

The exam scenario might be something like this: your marketing team wants to use gen AI for personalized campaigns, but they keep asking for features that would require the model to access real-time customer data it was not trained on. Can you explain why that's a problem and what grounding or retrieval-augmented generation might solve?

Tokens and embeddings trip people up.

A token is not always a word. It's a chunk of text the model processes. Understanding token limits matters when you are scoping projects or estimating costs. Embeddings are how models represent meaning mathematically, which is why similar concepts cluster together in vector space. This is not trivia. It's foundational to understanding why prompt engineering works and why certain tasks are easier for models than others.
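To make "similar concepts cluster together" concrete, here's a toy Python sketch of cosine similarity, a common way to compare embeddings. The three-dimensional vectors are invented for illustration only; real embedding models output hundreds of dimensions, and you'd get the vectors from a model rather than typing them in.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: near 1.0 means 'pointing the same way'."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-picked toy "embeddings"; these values are made up, not produced by any model.
toy_embeddings = {
    "refund request": [0.9, 0.1, 0.0],
    "money back": [0.8, 0.3, 0.1],
    "password reset": [0.05, 0.2, 0.95],
}

query = toy_embeddings["refund request"]
for phrase, vector in toy_embeddings.items():
    print(f"{phrase:15} {cosine_similarity(query, vector):.2f}")
```

Run it and "money back" scores far closer to "refund request" than "password reset" does, even though the two phrases share no words. That property is what semantic search relies on.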

Hallucinations and bias get tested heavily because they are the biggest risks leaders need to manage. The model might generate plausible-sounding nonsense. It might reflect biases from training data.

You need to know how to mitigate these through techniques like grounding, human review workflows, and careful prompt design. The exam wants to see that you understand these are not bugs to be fixed once. They are inherent characteristics requiring ongoing governance.

One thing I noticed while preparing: many study guides treat hallucinations as purely technical problems. But in practice, the bigger challenge is explaining to stakeholders why the model cannot be 100% reliable and what guardrails make sense given business risk tolerance.

Vertex AI fundamentals every leader should know

Understanding Google Cloud's Vertex AI platform is key for this exam, even if you are not the one clicking buttons in the console.

Generative AI Studio is where teams prototype and experiment with prompts and model parameters without writing code. Model Garden provides access to foundation models from Google and partners, and you need to know which models suit which use cases: Gemini for text and multimodal tasks, Imagen for images, and so on. Older study material may still list PaLM for text and Codey for code generation, but Google has since moved both roles to Gemini models.

The managed APIs matter because they determine how your developers will actually integrate gen AI into applications.

The exam tests whether you understand the trade-offs between using pre-built APIs versus customizing models. Customization options include tuning (adjusting model weights with your data) and grounding (connecting the model to external knowledge sources like your documentation or databases). When do you choose each approach? That's a leadership decision based on accuracy needs, data availability, and budget.
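Grounding is easier to picture once you see that it changes what goes into the prompt, not the model's weights. Below is a minimal Python sketch with a hypothetical three-line knowledge base. Simple word overlap stands in for the embedding-based vector search a real system would use, no model is actually called, and the helper names are illustrative.

```python
import re

# Hypothetical internal knowledge base; a real system would index documents in a search service.
KNOWLEDGE_BASE = [
    "Refunds are issued within 14 days of an approved return.",
    "Premium support is available 24/7 for enterprise customers.",
    "Passwords can be reset from the account settings page.",
]


def words(text: str) -> set[str]:
    """Lowercase word set, used for a crude relevance score."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, docs: list[str], top_k: int = 1) -> list[str]:
    """Rank documents by shared words with the question (stand-in for vector search)."""
    q = words(question)
    ranked = sorted(docs, key=lambda d: len(q & words(d)), reverse=True)
    return ranked[:top_k]


def build_grounded_prompt(question: str, docs: list[str]) -> str:
    """Put retrieved sources into the prompt and tell the model to stay within them."""
    sources = "\n".join(f"- {d}" for d in retrieve(question, docs))
    return (
        "Answer using only the sources below. "
        "If they do not contain the answer, say you don't know.\n"
        f"Sources:\n{sources}\n"
        f"Question: {question}"
    )


print(build_grounded_prompt("How many days until refunds are issued?", KNOWLEDGE_BASE))
```

The printed prompt carries only the refund policy line. Tuning, by contrast, bakes knowledge into the model itself, so updating it when the policy changes means another tuning run rather than editing a document.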

Look, if you have worked with other Google Cloud certs like the Associate Cloud Engineer or Professional Cloud Architect, you will have some context for how Google structures its platforms.

But gen AI introduces new considerations around model selection, prompt management, and responsible AI that do not show up in traditional cloud infrastructure exams.

Connecting technical foundations to strategic decisions

The fundamentals domain is not isolated from the other four domains.

Understanding how pre-training works informs your decisions about data strategy in domain 5. Knowing the limitations of different modalities affects how you assess use cases in domain 2. Recognizing bias risks ties directly into the responsible AI governance tested in domain 3.

I have seen people try to memorize definitions without understanding the implications. That does not work here.

The exam presents scenarios where you need to apply foundational knowledge to make recommendations. Should the company build a custom model, fine-tune an existing one, or just use prompt engineering with a foundation model? The answer depends on your data, timeline, budget, and required accuracy. All of which connect back to understanding what these technical approaches actually involve.

The Cloud Digital Leader certification covers some AI basics, but the Generative AI Leader exam goes much deeper into the specific mechanics and limitations of generative models.

You are expected to understand not just what gen AI can do, but how it does it at a level that informs strategic planning.

Why full coverage across domains is non-negotiable

Since questions are distributed based on relative importance across all five domains, you cannot afford weak spots.

Maybe you are strong on technical concepts but shaky on governance frameworks. Or you understand Vertex AI but struggle with ROI calculation methodologies. The exam reports a single overall result, so a weak domain can pull you below the passing line even if you ace the others.

This actually reflects what real generative AI leaders need to know.

You are not just implementing technology. You are managing risk, justifying investment, ensuring compliance, and building organizational capabilities. The exam objectives mirror that complex reality, which is why cramming on just the technical bits or just the business strategy will not get you through.

The exam format reinforces this.

You will see case studies that span multiple domains, requiring you to consider technical feasibility, business value, governance requirements, and implementation challenges all at once. Honestly, it's closer to how actual leadership decisions happen than most certification exams I have encountered.

Conclusion

Wrapping up your prep

The Google Cloud Certified Gen AI Leader exam isn't some impossible mountain to climb. It targets business and technical leaders who need to grasp gen AI adoption strategy without drowning in code, but you can't just wing it. There's real substance here: responsible AI governance, Vertex AI fundamentals, and AI risk management and compliance.

Here's the thing.

Most people underestimate how much business context matters. The exam objectives don't just ask "what is generative AI?" They want you thinking through ROI calculations, evaluating use cases in regulated industries, understanding when not to deploy gen AI. That's actually harder than memorizing technical specs, which is kind of funny if you stop and consider it.

The Generative AI Leader exam costs less than Google Cloud's Professional-level exams, in the same range as Cloud Digital Leader. Google doesn't publish an official passing score; you get a pass/fail result, and the 70% figure that circulates online is a community estimate rather than an official number. You're looking at roughly 50-60 questions, a mix of multiple choice and multiple select. Some scenario questions get really tricky if you haven't worked through data governance workflows or model bias mitigation in actual business contexts.

Your study timeline? Depends entirely on background. If you've already worked on generative AI projects on Google Cloud or dealt with responsible AI frameworks, maybe two weeks of focused review works. Someone coming in cold needs more runway. Google's official prerequisites are minimal (no hands-on ML experience required), but you'll struggle without basic cloud literacy and at least some exposure to AI concepts.

Here's what actually matters: practice tests that mirror real exam scenarios. Documentation helps, sure. But you need to test yourself under pressure, spot where your knowledge gaps hide, drill weak areas until they stick. The Generative AI Leader study guide from Google covers the domains. Applying that knowledge to layered business scenarios though? That's where practice questions separate people who pass from people who guess.

Oh, and one thing nobody mentions enough: the exam loves throwing curveballs about implementation trade-offs. Like, you'll get scenarios where the "right" answer isn't the most advanced AI solution but the one that actually fits the organization's maturity level and regulatory constraints. That kind of judgment call trips people up because it requires thinking past the technology itself.

For a proper Generative AI Leader practice test experience covering all exam objectives (gen AI fundamentals through implementation considerations) check out the Generative-AI-Leader Practice Exam Questions Pack at /google-dumps/generative-ai-leader/. It's built specifically for this exam, with scenario-based questions that reflect how Google tests leadership thinking around generative AI adoption, governance, and ROI assessment.

Don't overthink it.

The same goes for certification renewal. You've got two years before recertification, which is plenty of time to stay current as Google rolls out new models and updates Vertex AI capabilities. Just schedule the exam, commit to your prep plan, and execute.