Google LookML-Developer (Looker LookML Developer Exam) Overview

Look, if you're working with Looker or thinking about getting serious with semantic layer development, the Google LookML Developer certification is basically the credential that separates people who've actually built production LookML projects from those who've just poked around in the IDE a few times. This is a professional-level certification that validates you can design, build, and maintain LookML projects that actually help business users explore data without constantly bugging you for custom SQL queries.

The whole point here? Proving you understand Looker's modeling language well enough to create semantic models that bridge the gap between raw database tables and what non-technical folks need to see. Anyone can write a basic view definition, but can you architect an entire explore structure that performs well, maintains governance, and doesn't break every time someone adds a new dimension? That's what this exam tests.

Who actually needs this thing

Data analysts transitioning into analytics engineering roles are prime candidates. So are BI developers who've been stuck in traditional ETL tools and want to move into modern semantic layer work, and data engineers who need to prove they can do more than just pipeline work and can build the access layer too.

If you're responsible for maintaining Looker infrastructure at your company, this certification basically confirms you know what you're doing. It's also huge for consultants who implement Looker for clients, since having certified people makes proposals way more competitive. Honestly, if you've been doing LookML development for 6-12 months and want career progression, this is probably your next move.

What the exam actually covers

The Google LookML Developer exam is delivered through proctored testing, either remotely through Kryterion or at physical testing centers. You're looking at 120 minutes to work through typically 50-60 questions, a mix of multiple-choice and multiple-select formats. Not gonna lie, two hours sounds generous but you'll need that time if you're really thinking through scenario-based questions rather than just pattern matching.

The questions focus heavily on real-world scenarios. You'll see situations where you need to decide between different join strategies, optimize query performance, troubleshoot broken explores, or implement field-level security. This isn't a syntax memorization test. It's about architectural decision-making and debugging skills you'd use on actual projects.

Breaking down what gets tested

Core LookML fundamentals form the foundation. You need solid understanding of how projects, models, views, and explores work together. Views define your table logic and field definitions. Models organize those views into explores. Explores are what end users actually interact with. The exam will absolutely test whether you understand these relationships and can design them properly.

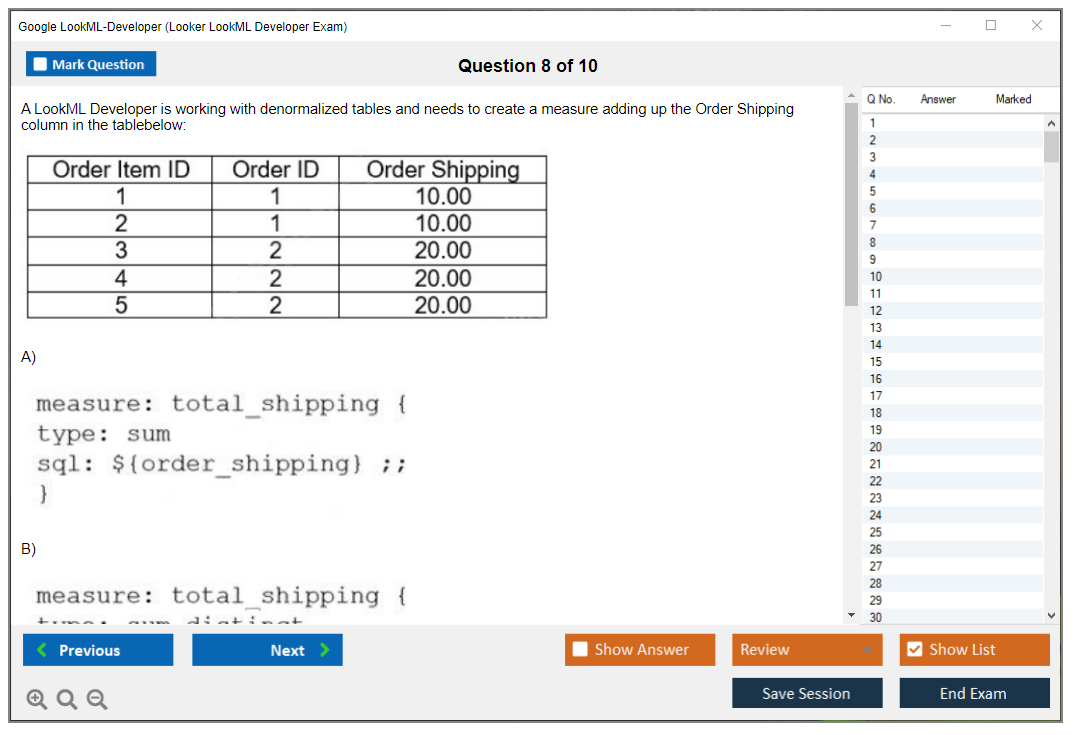

Dimensions and measures? Everywhere in the test. You need to know when to use different dimension types like string, number, date, yesno. How to write measure definitions that actually make sense. When to apply filters or drill fields. Table calculations versus custom measures? Yeah, expect questions on that too.

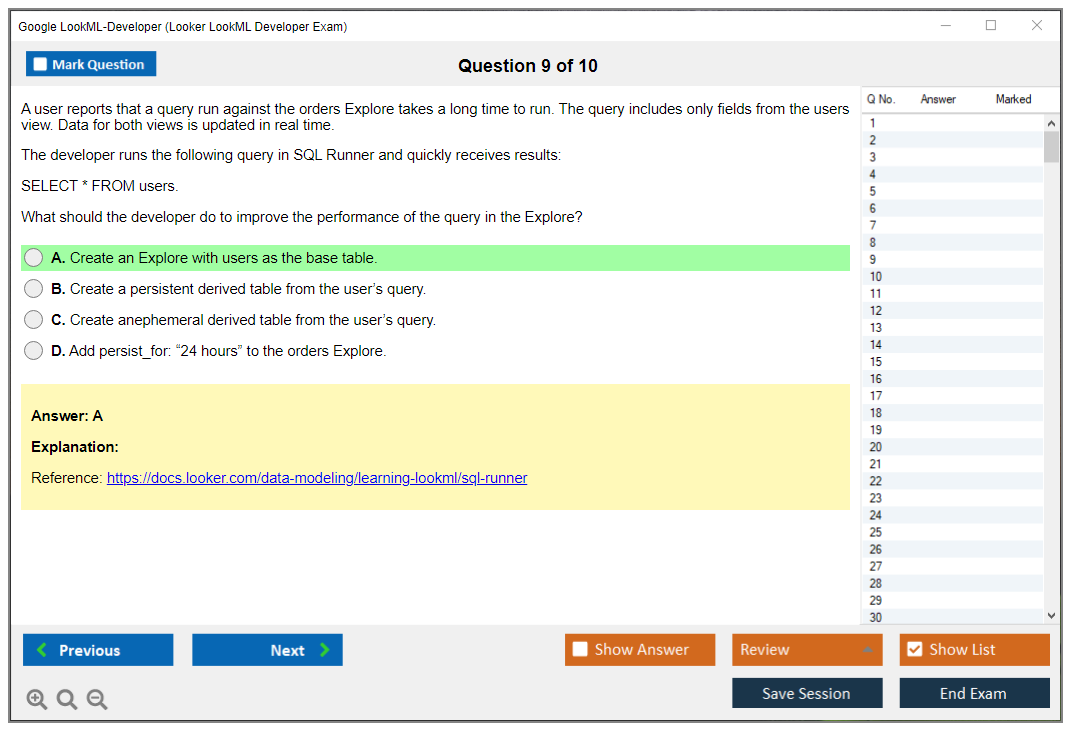

Join logic and explore design probably make up a huge chunk of the exam content. Many-to-one relationships, fan-out issues, join types like left, inner, full. Symmetric aggregates. All fair game. Performance optimization comes up constantly too, especially around persistent derived tables, aggregate awareness, and when to use derived tables versus regular views.

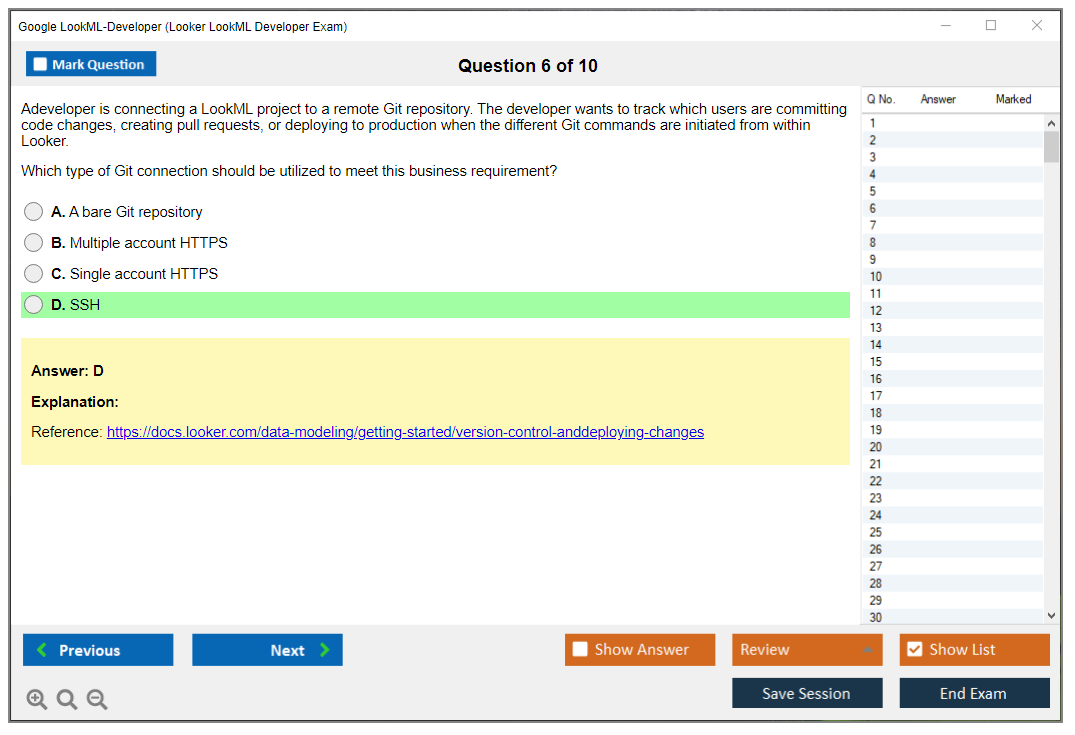

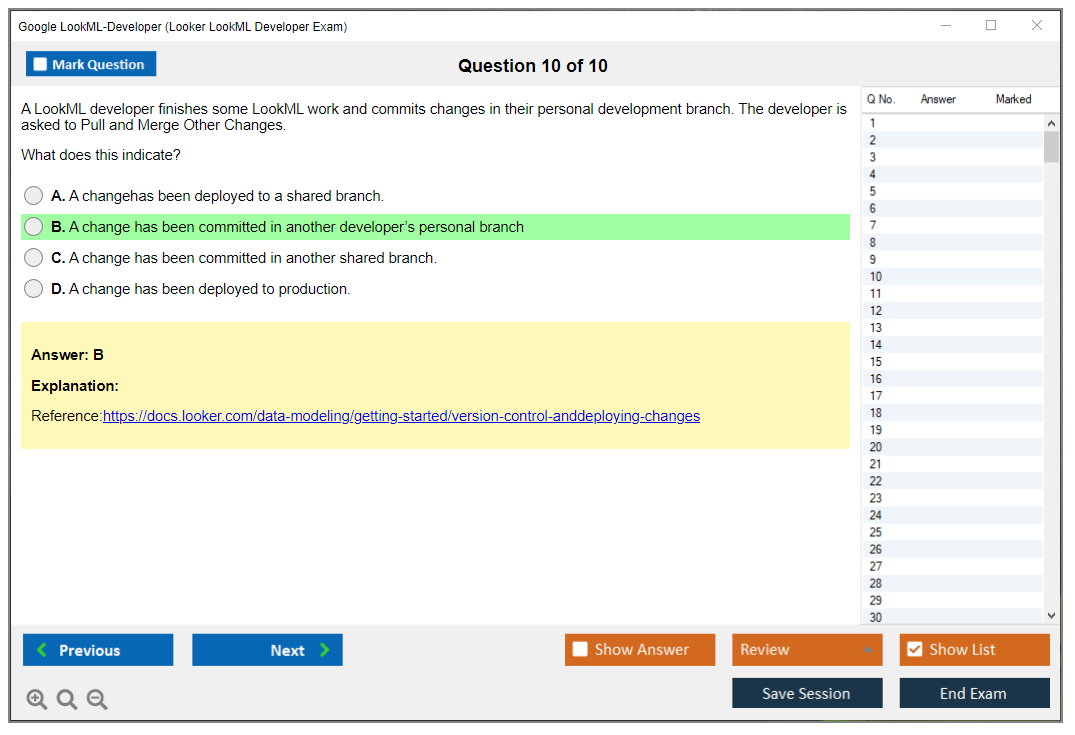

Access control and governance questions test whether you understand model-level security, field-level permissions, and how to implement proper content access management. Version control integration with Git is another major area. Branching strategies, code review workflows, production deployment processes.

How the exam differs from casual Looker use

Here's the thing. Lots of people use Looker to build dashboards and run queries. Way fewer people understand the underlying LookML architecture well enough to design scalable semantic models. This exam validates the latter.

You need to understand database dialects and how Looker connects to different data warehouses like BigQuery, Snowflake, Redshift, whatever. Connection configuration, SQL dialect differences, database-specific optimization techniques. The thing is, the exam treats LookML as part of a broader data platform, not an isolated tool, which honestly makes sense when you think about production environments.

Troubleshooting and validation skills get tested through scenario questions. You'll see broken LookML code snippets and need to identify the issue. Performance problems where you need to recommend solutions. Governance challenges where you need to implement proper access controls. I once spent two hours debugging a fan-out issue caused by a single incorrectly defined join, and let me tell you, that kind of experience sticks with you when you're reading exam scenarios.

Where this fits in the Google Cloud ecosystem

This certification sits within Google Cloud's data analytics certification path alongside credentials like the Professional Data Engineer. If you're building a full data platform skillset, you might combine this with something like the Professional Cloud Architect to show you understand both the semantic layer and the underlying cloud infrastructure.

One massive point of confusion: this exam is about Looker, the BI platform, NOT Looker Studio, formerly Google Data Studio. Completely different products. Looker requires LookML for semantic modeling and costs actual money. Looker Studio is a free visualization tool that doesn't use LookML at all. Make sure you're studying the right product.

Real talk about prerequisites and preparation

Officially? Google doesn't mandate specific prerequisites. Practically, you need solid SQL skills, understanding of relational database concepts, familiarity with data warehouse architecture, and comfort with version control through Git. Basic BI principles help too.

The exam blueprint assumes you've spent real time building LookML projects. Not just following tutorials but actually designing explores, troubleshooting join issues, optimizing PDT refresh schedules, implementing row-level security. Six months of hands-on experience is probably the minimum to feel comfortable, though some people with strong SQL backgrounds pick it up faster.

Training courses help but aren't mandatory. Looker's official LookML training covers the fundamentals well. The documentation is actually pretty thorough once you know what you're looking for. Building a complete model end-to-end in a practice environment beats passive studying every time.

Career impact and industry value

Organizations using Looker for enterprise BI need people who can build and maintain the semantic layer. This certification opens doors to specialized Looker developer roles, analytics engineering positions, and BI architecture responsibilities. As more companies migrate to cloud-based BI solutions, demand for certified LookML developers keeps growing.

It's a real competitive advantage. When hiring managers see this certification, they know you can hit the ground running on LookML projects without months of ramp-up time. For consultants and contractors, it's often a requirement just to get on approved vendor lists.

You get a digital badge for LinkedIn and your resume upon passing, plus access to the Looker community and Google Cloud professional networks. More importantly, you gain confidence that your skills match industry standards for semantic layer development.

What makes this certification different

Unlike some vendor certs that test surface-level product knowledge, this one digs into architectural thinking and problem-solving. The scenarios mirror actual challenges you'd face building production Looker instances, not toy examples with perfect data and simple relationships.

The emphasis on version control integration reflects how real teams work. Development branches, code review processes, production deployment. These workflows are critical for maintaining LookML projects at scale, and the exam validates you understand them.

Performance optimization questions require understanding both LookML syntax and database query execution. You need to know when a PDT makes sense versus a regular view. How to structure joins to avoid fan-out. When to use aggregate awareness. This stuff directly impacts whether your Looker instance is fast or unusably slow.

Keeping your certification current

Looker keeps evolving with new features and capabilities. Certified professionals need to stay current with release notes, new LookML parameters, and platform updates. The certification demonstrates current expertise, not outdated knowledge from three years ago.

The ongoing learning requirement isn't a burden. It's the reality of working with modern BI platforms that continuously improve. Following the Looker community, reading release notes, experimenting with new features. This is just part of being a professional LookML developer whether you've got the cert or not.

The certification validates you can build scalable, maintainable data models that help business users while maintaining proper governance. That's the core value proposition for employers and what makes this credential worth pursuing if you're serious about semantic layer development as a career path.

Exam Cost, Registration, and Retake Policy

LookML Developer exam cost

The Google LookML Developer exam runs $200 USD as of 2026. Subject to change, obviously, so don't trust random blog posts (including mine) over what's actually on the official Google Cloud certification site.

Credit cards work. That's the normal route, paid through the Kryterion registration portal. Some regions and corporate setups can do purchase orders for bulk registrations, but honestly, that tends to be a "talk to your training coordinator" thing, not a button you click at checkout. Keep the receipt email. Seriously. Expense reports love to "lose" things, and you'll need proof when finance pretends they never saw it three months later even though you submitted it twice.

Pricing-wise, the Looker LookML Developer certification lands around what you see for other Google Cloud pro-level exams. It's mid-range. Not cheap like some entry exams, not premium like certain vendor certs where the price feels like it includes a free stress headache and a commemorative pen nobody wants.

One quick note people miss: the exam fee is separate from training. You can prep for nearly free if you stick to docs and labs, but if you want a Looker LookML training course with an instructor, that's where budgets go to die. I mean, paid classes commonly run $500 to $2000 depending on format, partner, and whether your company negotiated a deal. Free options exist too, like Google Cloud Skills Boost modules. Mixed bag. Some are great, some feel like reading a manual out loud while someone clicks through slides that could've been an email.

Budgeting it out matters because the "real" cost isn't just $200. A practical total investment estimate usually includes:

- Exam fee: $200.

- A retake. Maybe. (Full price again, no discounts, no sympathy.)

- A LookML Developer practice test: typically $50 to $150 if you buy one.

- Study materials: could be $0, could be $60 for a course, could be more.

- Training: optional, but if you do instructor-led, it dominates the total.

If you're trying to pitch reimbursement, do the math in advance and don't pretend it's only $200 if you already know you learn better with structured training.
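If you want to put numbers on that pitch, the line items above add up like this. This is a rough sketch, not an official calculator: the `CertBudget` class and its field names are made up for illustration, and the dollar figures are just the ranges quoted in this article, so check current prices before you send anything to finance.

```python
from dataclasses import dataclass


@dataclass
class CertBudget:
    """Hypothetical budget helper; figures are the ranges quoted in this article."""
    exam_fee: float = 200.0        # exam fee quoted above; verify on the official site
    retakes: int = 0               # each retake is charged at the full exam fee
    practice_test: float = 0.0     # article range: $50 to $150
    study_materials: float = 0.0   # article examples: $0, $60, or more
    training: float = 0.0          # article range for paid classes: $500 to $2000

    def total(self) -> float:
        # First attempt plus any retakes, then the optional extras.
        attempts = 1 + self.retakes
        return (self.exam_fee * attempts
                + self.practice_test
                + self.study_materials
                + self.training)


lean = CertBudget()
typical = CertBudget(retakes=1, practice_test=100.0, study_materials=60.0)
heavy = CertBudget(retakes=1, practice_test=150.0, study_materials=60.0, training=2000.0)

for label, plan in [("lean", lean), ("typical", typical), ("heavy", heavy)]:
    print(f"{label}: ${plan.total():,.2f}")
```

The gap between the lean and heavy scenarios is the whole point: instructor-led training dominates everything else, so decide on that line first.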

Employer reimbursement is common. Not universal, but common enough that you should ask before pulling out your wallet. Many companies have professional development budgets covering cert exams, especially if your role touches the semantic layer, metrics, or BI governance. Check your internal policy first, and keep the payment confirmation for finance. Paper trails. Always.

How to schedule the exam

Registration happens through Kryterion Webassessor. Look, it's not complicated, but it's very "certification portal energy," so give yourself time and don't do it five minutes before dinner when you're hangry and impatient.

Basic flow:

- Create an account on Kryterion Webassessor.

- Search for "Google Cloud Certified - Professional Looker LookML Developer" (wording can vary slightly, but it's close).

- Pick delivery method: test center or online proctored.

- Choose date/time.

- Pay.

- Get the confirmation email with your exam details.

That's it. Mostly. Don't overthink this part.

Scheduling timeline is usually decent. In many regions you'll see slots within 1 to 2 weeks, but during peak periods (end of quarter, conference promo seasons, corporate "everyone certify now" pushes) availability tightens fast. The thing is, if you have a deadline, schedule 2 to 3 weeks ahead to be safe. If you don't have a deadline, still schedule ahead because you're more likely to actually study when the clock is real and ticking down.

Time zones matter more than people think. Online proctoring gives you flexibility, sure, but you're still committing to a block of time that includes check-in, ID verification, and the exam itself. Plan for the 2-hour exam duration plus check-in, and don't schedule it at the exact moment your neighbors start their weekly lawn-mower convention or your roommate fires up the blender for their third smoothie of the day.

Test center vs online proctored is a real tradeoff, and honestly I've got mixed feelings about both.

In-person is boring, controlled, and usually less drama. You show up, you sit down, your internet isn't your problem. Online proctoring is convenient, sure, but you're betting your exam fee on your network stability, your webcam behaving, and your room being compliant with rules that feel designed by someone who's never seen an actual home office. I've done both. If your home setup is chaotic, go test center and save yourself the "proctor thinks my cat is a second candidate" situation or the "wait, is that poster on your wall unauthorized reference material" conversation.

Actually, speaking of home office chaos, I once watched a friend try to take a different cert exam from his kitchen because it was "quieter than the living room." What he forgot was that his kitchen shares a wall with the building's trash chute, and every time someone dropped garbage down it sounded like bowling balls in a dryer. The proctor kept pausing the exam to ask if he was okay. He wasn't. He rescheduled.

If you choose online proctoring, expect requirements like:

- Reliable internet connection (Google/Kryterion often cites a minimum of 1 Mbps upload/download, but a faster, more stable connection is better, so don't trust the bare minimum).

- Webcam and microphone.

- Private quiet room.

- Government-issued ID.

Run the Kryterion system test before exam day. Not the morning of. Before. The test checks browser compatibility, permissions, and network basics, and it can catch silly stuff like corporate VPN settings that break proctoring tools or browser extensions you forgot were even installed.

Workspace rules for remote exams are strict and kind of annoying. Clear desk policy. No dual monitors. No phones or smart devices. No reference materials. No other people in the room. No pets wandering around. If you're thinking "but my notes are off camera," yeah, they've heard that one a thousand times and they're not buying it.

Check-in usually starts about 15 minutes early. You'll do identity verification, sometimes a workspace scan where you rotate your webcam around like you're filming a terrible real estate video, get proctor instructions, and confirm your system is ready. Plan for that time. Don't be the person trying to join while also microwaving lunch and answering a Slack message.

Retakes, rescheduling, and refunds

Refunds are limited. The common rule you'll see is no refund within 72 hours of your scheduled exam time. Read the exact policy at checkout, because it's the kind of detail that changes by program and region, but the theme is consistent: late changes cost you real money.

Rescheduling is typically allowed up to 72 hours before the exam without penalty. Inside that window, rescheduling fees or forfeiture kick in, and a no-show usually means you lose the exam fee entirely. Harsh, but predictable once you've seen it happen.

Cancellation is done through the Kryterion portal. If you cancel with enough notice, the fee often becomes a credit rather than money back, depending on the exact terms you accepted at registration. That credit is commonly valid for 12 months from the original purchase date. Miss the deadline and it's just gone. This is why I tell people to schedule when they're confident, not "I'll totally be ready by then" confident.

No-show consequences are worse than people expect. If you fail to appear without the 72-hour notice, you typically lose the fee and it can count as an attempt depending on the program rules. That matters because the retake policy has escalating wait times that get progressively more painful.
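The 72-hour rule is easy to misjudge when time zones are involved, so here's a tiny sanity check you could adapt. It's a sketch under assumptions: the 72-hour cutoff is the figure quoted in this article (your checkout terms win if they differ), the function name is made up, and both timestamps are assumed to be timezone-aware.

```python
from datetime import datetime, timedelta, timezone

# Cutoff quoted in this article; confirm against the terms shown at checkout.
CHANGE_CUTOFF = timedelta(hours=72)


def can_change_without_penalty(exam_start: datetime, now: datetime) -> bool:
    """Return True if 'now' is at least 72 hours before the exam start.

    Both datetimes must be timezone-aware so the subtraction is unambiguous.
    """
    return exam_start - now >= CHANGE_CUTOFF


exam = datetime(2026, 6, 10, 9, 0, tzinfo=timezone.utc)

# Exactly 72 hours before: still inside the penalty-free period.
print(can_change_without_penalty(exam, datetime(2026, 6, 7, 9, 0, tzinfo=timezone.utc)))

# One minute later: the window has closed.
print(can_change_without_penalty(exam, datetime(2026, 6, 7, 9, 1, tzinfo=timezone.utc)))
```

Treating exactly 72 hours as still allowed is a guess about how the boundary is handled, so don't cut it that close in real life.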

Retake policy for this exam works like this:

- After the first failed attempt: wait 14 days before retaking.

- After the second failed attempt: wait 60 days.

- After the third failed attempt: wait 365 days.

That last one is brutal. And honestly, fair. If you've missed three times, the issue probably isn't "I needed one more practice question" or "the exam was worded weird." It's that you don't have the hands-on reps with LookML, or you're shaky on how explores, views, and models fit together, or you're guessing on joins and aggregate logic and hoping vibes carry you through scenario questions that require actual modeling experience.

Retake fees aren't discounted. Each retake is the full $200 again. So if you're budgeting, treat a possible retake as a real line item, not a theoretical one you ignore because "I'll definitely pass the first time."
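To see what the waiting periods and fees mean on a calendar, here's a small sketch. The wait times and the $200 fee are the figures stated in this article, not values pulled from any official API, and the function names are hypothetical. The article doesn't say what happens after a fourth failure, so the code refuses to guess.

```python
from datetime import date, timedelta

# Waiting periods as stated in this article; confirm against the current official policy.
RETAKE_WAIT_DAYS = {1: 14, 2: 60, 3: 365}
EXAM_FEE_USD = 200  # full price per attempt, per this article


def earliest_retake(failed_on: date, failed_attempts: int) -> date:
    """Earliest date you could sit the exam again after the Nth failed attempt."""
    if failed_attempts not in RETAKE_WAIT_DAYS:
        raise ValueError("this sketch only covers the 1st, 2nd, and 3rd failed attempts")
    return failed_on + timedelta(days=RETAKE_WAIT_DAYS[failed_attempts])


def total_exam_fees(attempts: int) -> int:
    """Total exam fees paid across all attempts (no retake discount)."""
    return attempts * EXAM_FEE_USD


failed = date(2026, 3, 2)
for n in (1, 2, 3):
    print(f"after failure #{n}: retake from {earliest_retake(failed, n)}")
print(f"fees for 3 attempts: ${total_exam_fees(3)}")
```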

My take on retake strategy: don't just reread your LookML Developer study guide notes. That's not enough. Use the waiting period to do targeted work based on your score report, then build something end-to-end in a sandbox project. Write views. Define measures. Break joins on purpose and fix them. Validate, troubleshoot, and repeat until it clicks. That's the difference between understanding Looker at a high level and actually passing an exam that cares about modeling details, syntax edge cases, and troubleshooting skills.

Technical issues during the exam happen. If you disconnect or your proctoring tool crashes, contact support right away and follow the proctor's instructions. Proctors can sometimes pause an exam for troubleshooting, but you need to be proactive and document what happened. Screenshots if allowed. Timestamps. Keep it boring and factual, not emotional or accusatory.

Accommodations are available for disabilities through the Google Cloud certification team, but you need lead time. Request 2 to 4 weeks in advance with documentation, because these approvals don't happen instantly and there's usually a review process involved.

Corporate and group scheduling is a thing if your org is putting multiple people through the Google Cloud Looker certification path. Companies can coordinate bulk scheduling and payment through training partners, and sometimes you'll see voucher programs through promotions, partner programs, or conference attendance. Vouchers come and go, so don't plan your life around one showing up, but do ask your manager or partner rep if your company has access because it's basically free money if it exists.

Last boring tip, but it saves pain: retain your payment confirmation and registration emails. They're your proof for reimbursement, your proof if the portal glitches, and your proof if you need to argue about a reschedule credit later. Admin work. Annoying. Part of the game. Do it anyway.

Passing Score and Scoring

What Google actually tells you about passing

Look, Google Cloud doesn't publish the exact passing score for the LookML Developer exam. They're not gonna hand you a number and say "get 72% and you're golden." Most professional certifications work this way now. Honestly it makes sense even if it's annoying when you're studying. Based on industry standards and what I've seen from people who've taken it, the passing threshold sits somewhere around 70-75%, but that's educated guessing, not official confirmation.

The exam uses what's called scaled scoring. Simple percentage calculations don't tell the whole story because not every version of the test has identical difficulty. Think about it. If one exam version happens to include three really tricky questions about derived table optimization and another version focuses more on basic explore configuration, those aren't equivalent tests. Scaled scoring accounts for these variations through psychometric analysis, which is a fancy way of saying they mathematically adjust scores so passing in June means the same thing as passing in December, even if the specific questions differ.

Score ranges and what you'll actually see

The scoring typically uses a point scale rather than simple percentages. Many Google Cloud exams report scores in ranges like 200-1000 points with a passing threshold set somewhere in that range based on statistical analysis of question difficulty. You won't see "you got 78 out of 100 questions correct" on your results.

When you finish? Immediate preliminary result. Pass or fail, right there on the screen. That's the moment of truth after you click submit and your stomach does that thing. But here's what you don't get: no numerical score, no percentage, nothing you can brag about or stress over beyond "I passed" or "not this time."

The detailed score report arrives 7-10 business days later via email. This report breaks down your performance by domain but still doesn't give you exact numbers. Instead, Google uses performance bands for each exam objective area. You'll see whether you performed "above target," "at target," or "below target" in sections like LookML fundamentals, joins and relationships, derived tables, and access control.

Why they keep the exact score confidential

I mean, there's actual reasoning behind this secrecy beyond Google just being difficult. When candidates know the exact passing score, test-taking behavior changes. People start playing the minimum game. "I just need 70%, so I'll master these high-weight topics and gamble on the rest." That's not what certification should validate.

The goal? Demonstrating full competency, not scraping by with strategic gaps in knowledge. If you barely pass by avoiding certain topics, are you really qualified to call yourself a LookML Developer? Not really. You might land a job and immediately struggle when a project requires the exact skills you skipped.

This approach also prevents the score itself from becoming the focus. Your certification proves you can build effective Looker models, optimize performance, implement proper governance. The score is just the gatekeeper, not the achievement. I once knew someone who passed three AWS exams before realizing they couldn't actually architect a production system because they'd studied for tests instead of learning the platforms. Ended badly during their first week at a new job.

Understanding domain weighting

Different sections carry different weight. Core LookML modeling tends to account for a larger chunk of your score than peripheral topics. Building views, defining explores, configuring joins properly: these fundamental skills matter more than, say, advanced Git workflows or obscure edge cases in Liquid parameters.

The exact weighting isn't published (sensing a pattern?), but you can infer priorities from the exam objectives and how much detail the official documentation dedicates to each topic. If the objectives list "design and build explores with complex join logic" as a major bullet point and "understand version control basics" as a minor one, that tells you where to invest study time.

When you get your score report after failing (if that happens, and no judgment here because plenty of people don't pass on the first try), pay serious attention to which domains showed "below target" performance. That's your roadmap for the retake. I've seen people obsess over the overall pass/fail when the real value is identifying that they bombed the derived tables section or didn't understand how symmetric aggregates actually work in complex queries.

How individual questions get scored

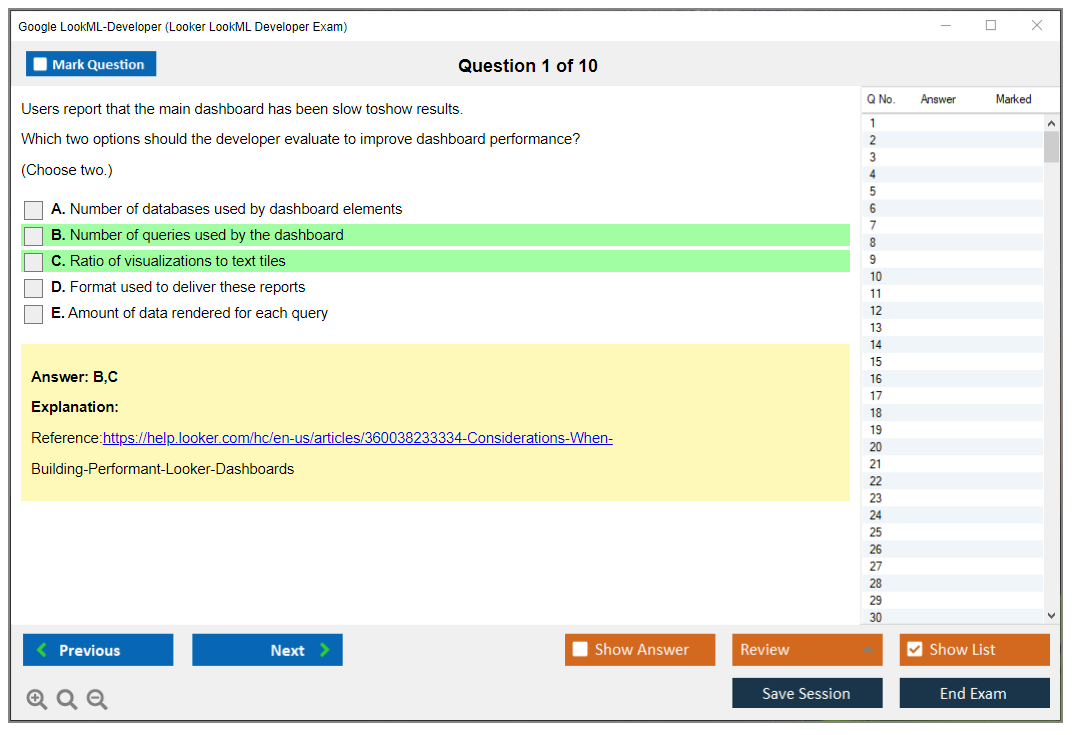

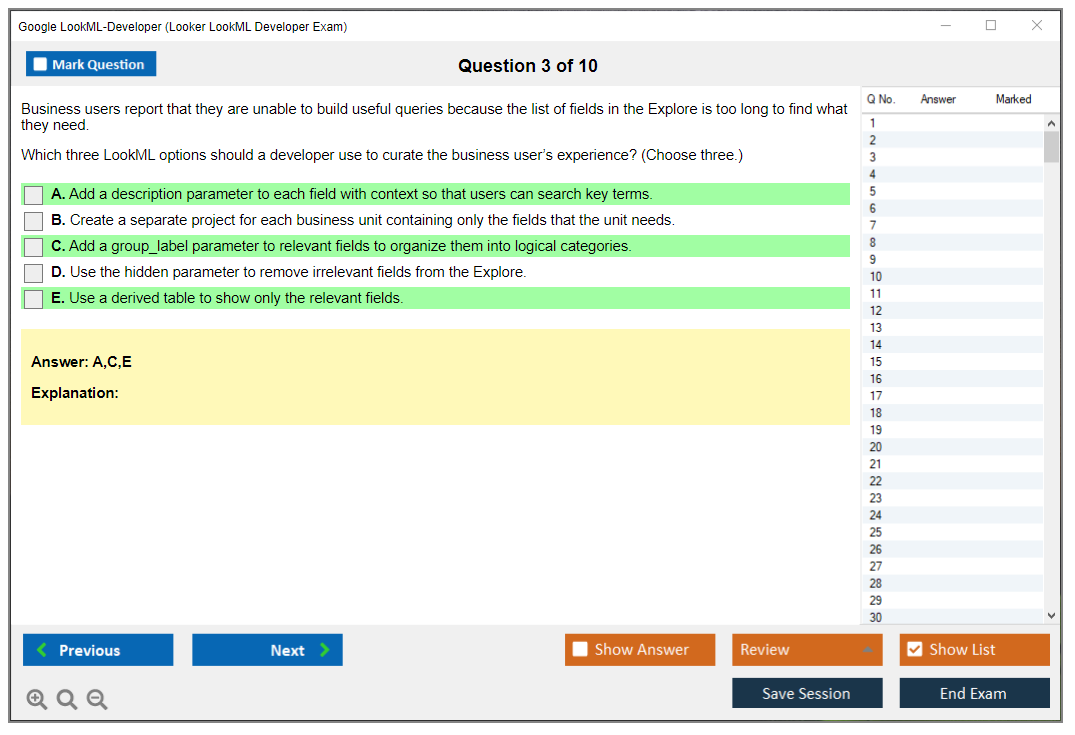

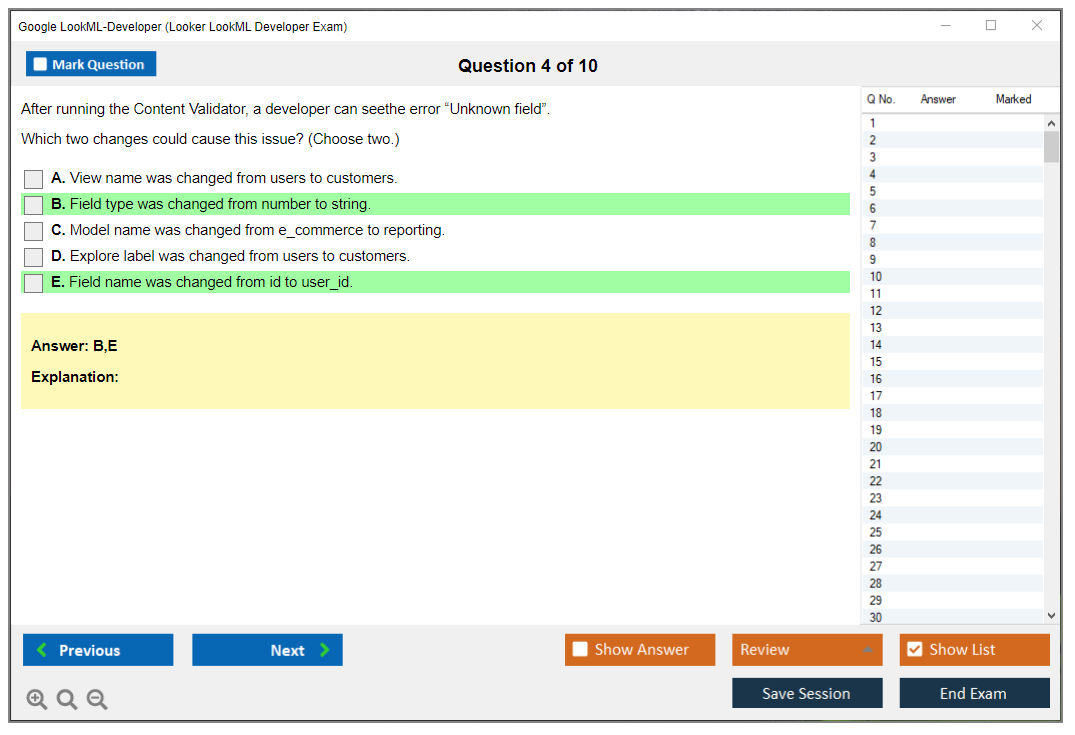

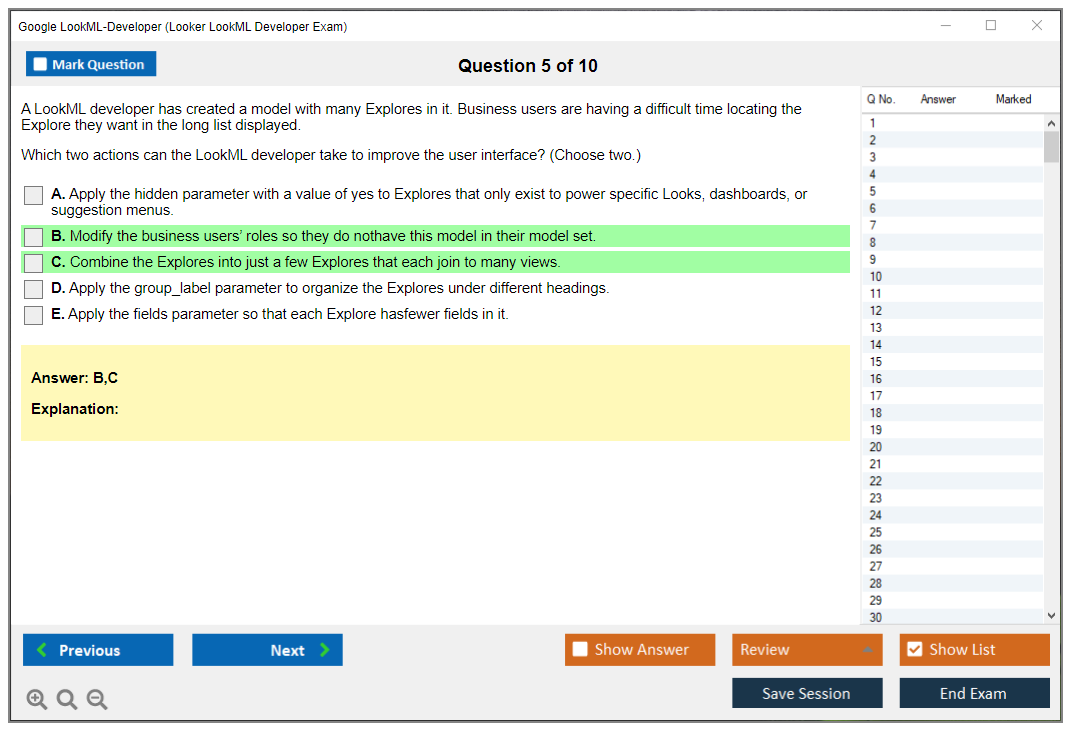

Multiple-choice questions with one correct answer are straightforward. You get it right or you don't. One point, zero points. But multiple-select questions where you need to choose all correct options? Those typically require complete accuracy for credit. Select three out of four correct answers and you usually get zero points, not partial credit.

This is where test-taking strategy matters. If a question asks you to select all valid methods for improving PDT build times, and you're confident about three options but uncertain about a fourth, you're gambling. Choose the wrong additional option and you lose credit for the entire question even though you knew most of it. Don't choose it and you also get zero if it was actually correct. Fun times.

Wrong answers aren't penalized though.

An incorrect guess and a blank answer both score zero, so strategic guessing beats leaving questions unanswered. If you're running out of time with five questions left, throw educated guesses at all of them rather than submitting blanks.
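The all-or-nothing rule described above is easy to model. Google doesn't publish its scoring logic, so treat this as a sketch of the behavior this article describes (exact match for credit, no penalty for wrong answers), with a made-up function name and made-up answer labels.

```python
def score_question(selected: set[str], correct: set[str]) -> int:
    """All-or-nothing scoring: 1 point only for an exact match, otherwise 0.

    A wrong answer and a blank answer both score 0, so there is no
    negative marking in this model.
    """
    return 1 if selected == correct else 0


correct = {"A", "B", "D"}  # hypothetical multiple-select answer key

print(score_question({"A", "B", "D"}, correct))        # exact match
print(score_question({"A", "B"}, correct))             # missed one correct option
print(score_question({"A", "B", "C", "D"}, correct))   # added one wrong option
print(score_question(set(), correct))                  # left blank
```

Under this model only the first case earns the point, which is why a blank is never better than a guess.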

Time management and the review process

The exam lets you flag questions during your initial pass. Unsure about a question? Mark it and keep moving. You can return during the review period before final submission. I usually recommend budgeting about 1.5-2 minutes per question and reserving 15-20 minutes for reviewing flagged items. With 60 questions in 120 minutes, that means staying closer to the 1.5-minute end.

Rushing creates stupid mistakes. You'll misread "which of these is NOT a valid join type" as "which IS valid" and blow an easy question. Pacing yourself matters more than you'd think. The exam isn't designed to be a time crunch if you actually know the material, but it's tight enough that you can't afford to spend five minutes agonizing over every uncertain question.
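Here's the pacing arithmetic spelled out, using the numbers from this article (120 minutes, 50-60 questions, 15-20 minutes held back for review). The function name is just for illustration, and your actual question count may differ.

```python
def minutes_per_question(total_minutes: float, questions: int, review_minutes: float) -> float:
    """Average time available per question after setting aside a review buffer."""
    return (total_minutes - review_minutes) / questions


# Question counts and review buffers quoted in this article.
for questions in (50, 60):
    for review in (15, 20):
        pace = minutes_per_question(120, questions, review)
        print(f"{questions} questions, {review} min review: {pace:.2f} min each")
```

With 60 questions the budget drops well under two minutes each, so treat two minutes as a ceiling rather than a target.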

Version equivalence and grading consistency

Every version of the exam goes through psychometric validation so that results are comparable across versions. The person taking the test in Tokyo today and someone taking it in New York next month aren't competing against each other. There's no curve. You're measured against the consistent standard of "does this person demonstrate LookML Developer competency?"

This means your chances don't depend on whether you got an "easy" or "hard" version. Yeah, some specific questions might feel harder to you personally based on your experience gaps, but the overall difficulty balances out. Someone who struggles with SQL might find database-heavy questions harder while someone weak on Git finds version control questions brutal.

What happens after you pass (or don't)

Passing scores remain valid for the certification period, typically 2-3 years for Google Cloud certifications. You'll eventually need to recertify to maintain the credential, either by retaking the exam or completing renewal requirements when Google updates them.

Your results stay confidential unless you choose to share them. Employers can't just call Google and verify your score. They need your permission. The certification itself shows up in Google's certification directory where you can share a public profile link, but that shows certification status, not your score breakdown.

If you fail, you can retake the exam after the waiting period (usually 14 days for Google Cloud exams, but check current policies). Your new attempt is completely independent. Previous scores don't help or hurt you. The system doesn't remember that you were "close" last time.

There's a limited appeal process for scoring disputes. If you really believe something went wrong (technical issues, questions that don't match published objectives) you can contact Google Cloud certification support within 30 days. Don't expect them to bump you from fail to pass because you think the test was unfair, but legitimate technical problems do happen.

Reality check on preparation benchmarks

Well-prepared candidates with 6-12 months of hands-on Looker experience typically pass within one or two attempts. If you're consistently scoring 80%+ on quality practice tests like the LookML Developer Practice Exam Questions Pack ($36.99), you're probably ready. Below 70% on practice tests? You're not there yet, and the real exam won't be easier.

The practice materials help you gauge readiness better than any official guidance because you get actual performance feedback. Taking practice tests under timed conditions reveals whether you truly know the material or just recognize it from studying. There's a huge difference between "oh yeah, I remember reading about that" and being able to apply it under pressure.

Similar to how the Professional Data Engineer exam requires hands-on experience beyond just reading documentation, the LookML Developer certification really does test practical skills. You can't memorize your way through questions about debugging LookML errors or optimizing explore performance without having actually done it.

Exam Difficulty and Time Commitment

How hard is the LookML Developer exam?

The Google LookML Developer exam sits somewhere between moderate and challenging. Not impossibly hard, but if you haven't built real Looker models before you're gonna feel that clock pressure intensely.

The tricky part is how it tests both book knowledge and hands-on judgment calls that honestly feel more like work situations than traditional certification questions. You need the Looker modeling language (LookML) syntax down, sure, but the exam leans heavily into semantic modeling choices, performance trade-offs, and troubleshooting situations that feel scarily similar to what happens at work when a dashboard suddenly starts returning weird counts. I mean, you know that panic when numbers just stop making sense? Some questions are straightforward definition checks. Easy wins there. Others are these long scenario prompts where you've gotta pick the "best" approach, and that's where people start second-guessing themselves into oblivion.

Compared to other Google Cloud certs, this one's more specialized than the Associate-level stuff that tests general cloud operations knowledge. It's not trying to see if you can generally operate Google Cloud infrastructure or spin up VMs. It wants to know if you can build and maintain explores, views, and models in LookML that won't break catastrophically, won't overcount transactions, and won't absolutely melt your warehouse with cartesian joins. That puts it closer in feel to Professional-level exams even if the topic scope is technically narrower.

What makes it challenging (in a very specific way)

Lots of candidates assume it's mostly "write LookML."

Wrong assumption.

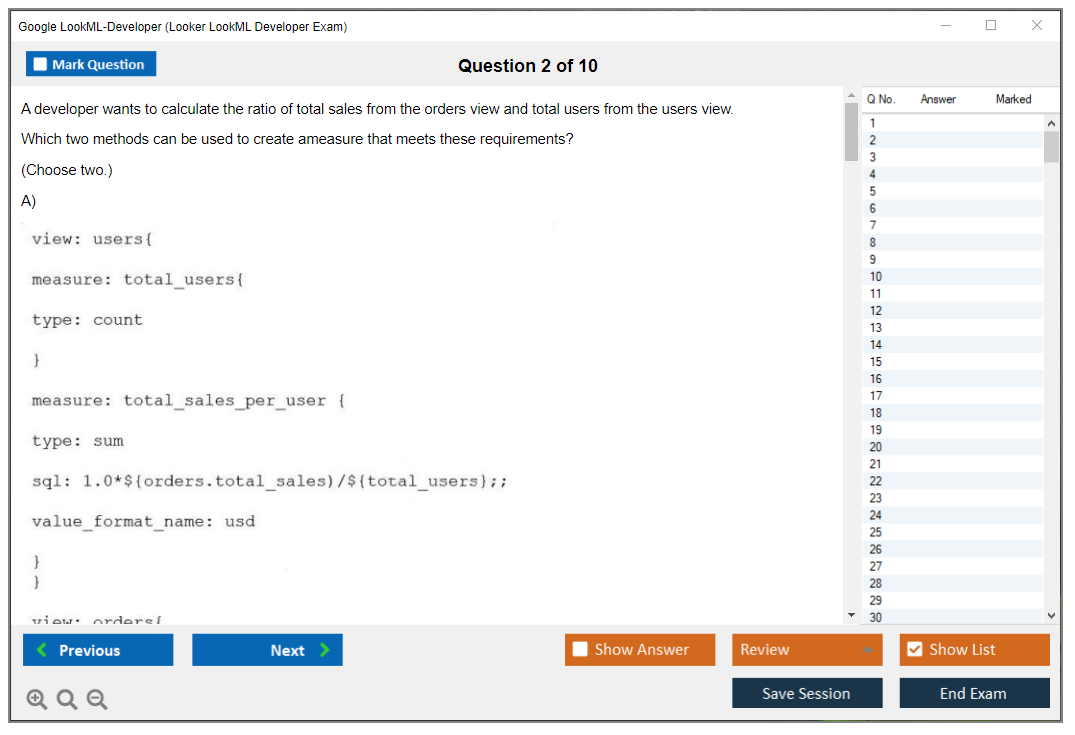

You'll get code interpretation questions where you read a snippet and predict behavior, spot an error lurking in there, or infer what SQL it actually generates behind the scenes. Those can be deceptively hard because the code's rarely isolated in a vacuum. It implies relationships, join paths, field types, filters, and caching behavior, and you have to reason about the output without running it in an actual environment. Fragments everywhere. Quick mental compiles that honestly feel like pop quizzes.

Scenario-based questions are the real time sink that'll murder your pacing. You'll see "business requirement" prompts like "Finance needs a metric that doesn't double count across joined tables" or "Sales wants a dashboard to be fast but data can be stale for 1 hour" and then you choose the best LookML approach from options that all sound plausible. Not gonna lie, this is where theoretical-only studying fails spectacularly. The "right" answer usually matches Looker-recommended patterns and the way Looker behaves in production environments, not what seems plausible from reading docs once while half-paying attention.

SQL matters more than people admit upfront. LookML generates SQL, and the exam expects you to understand the query implications of your modeling decisions at a pretty deep level. If you don't have strong SQL fundamentals (join behavior, aggregation grain, how filters push down through nested queries) you'll struggle when the question is basically "what happens to the result set if we join this view like that with those specific relationship parameters."

And yes, Looker Studio vs Looker (BI) confusion still pops up for some folks, especially if they've lived in Studio dashboards their whole career and are newly stepping into semantic layer modeling territory. The exam assumes you're in Looker proper. Living in LookML files, dealing with Git workflows, validation errors, and production deployments.

Common difficulty factors you'll keep running into

Some topics show up again and again because they're where real projects go sideways in spectacular fashion.

Complex join logic is one massive category. Multi-fact explores that connect multiple fact tables. Fanout issues where row counts explode. Symmetric aggregates that require special handling. If you haven't personally fought with "why did my revenue triple when I joined sessions to orders," you're missing the muscle memory the exam rewards heavily. Many candidates underestimate explore design complexity, and that's not a small gap you can patch quickly. It's a giant one that requires real experience. Grain alignment is everything in dimensional modeling, and the exam will poke at it from different angles until you either see it instantly or you don't.

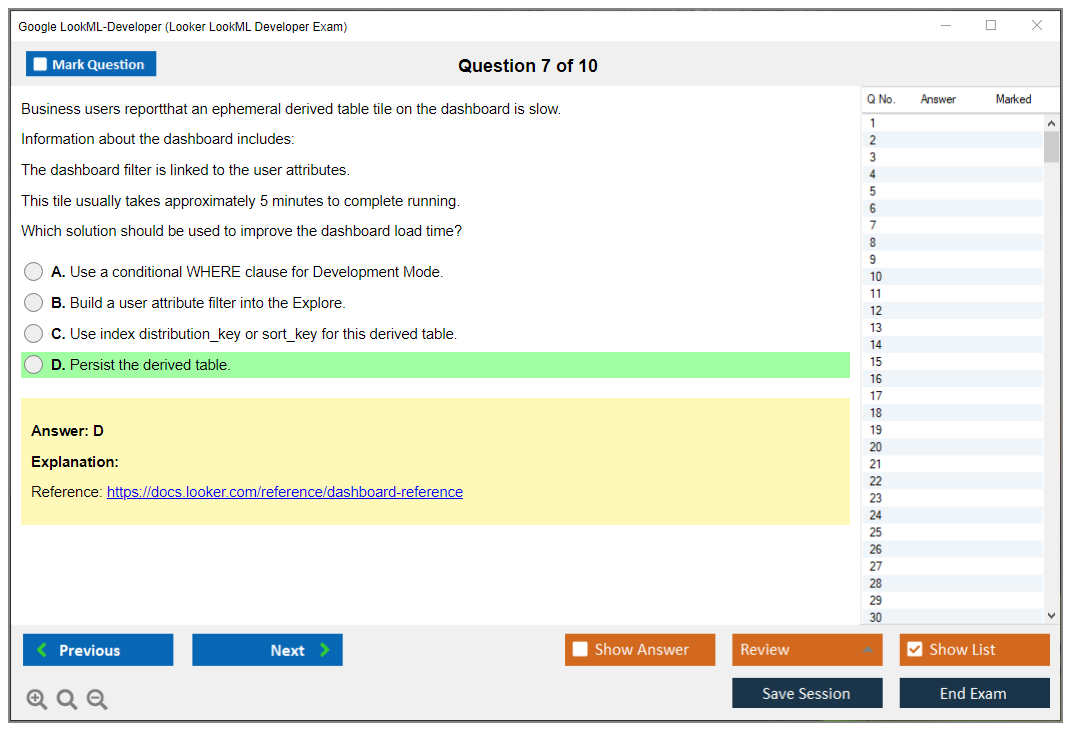

Derived tables are another pain point that consistently trips people up, especially PDT vs NDT trade-offs that seem subtle but matter enormously. Persistent vs ephemeral derived tables. Caching strategies that affect performance. Trigger logic that determines rebuilds. What changes require full rebuilds versus what gets cached intelligently. When a PDT is the right move versus when it's complete overkill for the use case. People mix up what's physically stored, what's rebuilt on schedule, and what gets cached at query time. Then they pick the "sounds right" option instead of the correct one that reflects actual Looker behavior.
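To make the stored-versus-ephemeral distinction concrete, here's a minimal sketch of two derived tables. One thing worth keeping straight: "native vs SQL-based" and "persistent vs ephemeral" are separate choices, so a native derived table can be persisted too. The table, column, and datagroup names below (analytics.orders, orders_datagroup, and so on) are made up for illustration, and the datagroup is assumed to be defined in the model file.

```lookml
# SQL-based derived table, persisted (a PDT) because it has a persistence
# parameter. Looker writes it to the scratch schema and rebuilds it when
# the datagroup triggers.
view: customer_order_facts {
  derived_table: {
    sql:
      SELECT
        user_id,
        COUNT(*)        AS lifetime_orders,
        SUM(sale_price) AS lifetime_revenue
      FROM analytics.orders
      GROUP BY user_id ;;
    datagroup_trigger: orders_datagroup   # assumed datagroup name
  }

  dimension: user_id {
    primary_key: yes
    type: number
    sql: ${TABLE}.user_id ;;
  }

  dimension: lifetime_orders {
    type: number
    sql: ${TABLE}.lifetime_orders ;;
  }
}

# Native derived table with no persistence parameter: nothing is stored,
# the query is generated inline every time the view is used.
view: daily_order_totals {
  derived_table: {
    explore_source: orders {
      column: created_date  { field: orders.created_date }
      column: total_revenue { field: orders.total_revenue }
    }
  }

  dimension: created_date {
    type: date
    sql: ${TABLE}.created_date ;;
  }

  dimension: total_revenue {
    type: number
    sql: ${TABLE}.total_revenue ;;
  }
}
```

The thing to notice is that persistence comes from a single parameter (datagroup_trigger here), not from which flavor of derived table you wrote.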

Performance optimization decisions show up a lot too, but not in simple ways. Not just "make it faster," but "what would you change first" style prompts that force prioritization. That requires real-world troubleshooting experience accumulated over time, because the best answer usually reflects practical priorities. Reducing join complexity first, using aggregate awareness appropriately, avoiding expensive cross joins that create cartesian products, knowing when the warehouse is the bottleneck versus Looker modeling being the culprit.
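For a sense of what "using aggregate awareness appropriately" looks like in a model, here's a hedged sketch of an aggregate table on an explore. The explore, field, and datagroup names are hypothetical, and it assumes orders.created_month and orders.total_revenue already exist in the orders view.

```lookml
explore: orders {
  # Pre-aggregated rollup. Looker can route queries that only need
  # month-level revenue to this table instead of scanning raw orders.
  aggregate_table: monthly_revenue {
    query: {
      dimensions: [orders.created_month]
      measures: [orders.total_revenue]
    }
    materialization: {
      datagroup_trigger: orders_datagroup   # assumed datagroup name
    }
  }
}
```

A rollup like this only pays off when dashboards actually query at that grain, which is exactly the kind of prioritization judgment those "what would you change first" prompts are after.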

Access control details surprise people who thought they understood permissions. Field-level security, model access grants, user attributes, and content permissions can get tangled fast. The exam likes the edge cases where multiple layers interact. You'll think it's simple at first glance, and then you realize the question is asking which layer actually enforces the restriction you want at query time.
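Here's a rough sketch of how two of those layers look side by side: a field-level restriction through an access grant and a row-level restriction through an access filter. The user attribute names (can_see_pii, region) and the fields are assumptions made up for the example, and the first two blocks would sit in a model file while the view sits in its own file.

```lookml
# Model file: define the grant once, tied to a user attribute.
access_grant: can_view_pii {
  user_attribute: can_see_pii      # hypothetical user attribute
  allowed_values: ["yes"]
}

# Row-level security: each user only gets rows matching their region.
explore: orders {
  access_filter: {
    field: orders.region
    user_attribute: region         # hypothetical user attribute
  }
}

# View file: field-level security. The email field is only available
# to users who satisfy the grant.
view: users {
  dimension: email {
    type: string
    sql: ${TABLE}.email ;;
    required_access_grants: [can_view_pii]
  }
}
```

Note the split: the access filter changes which rows come back, while the grant changes which fields a user can query at all. Content permissions on folders and dashboards are a separate layer that lives outside LookML.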

Git workflow questions also trip up developers who've never used version control seriously in their careers. Development mode mechanics, branching strategies, resolving merge conflicts, deploying to production safely, validation processes. If your experience is "I edit in prod and hope nothing breaks," this exam will punish that approach. Honestly, it should. That's dangerous in real environments.

If you want extra reps on these patterns that keep appearing, a focused resource like the LookML-Developer Practice Exam Questions Pack can help significantly, mainly because it forces you to practice the exam's decision-making style and scenario judgment, not just reread the LookML Developer study guide documentation and call it a day.

Common reasons candidates fail

Insufficient hands-on practice is number one by a mile.

Reading documentation feels productive when you're doing it. But it's not the same as building a model from scratch, breaking it in weird ways, fixing it through troubleshooting, and learning why the fix worked instead of just cargo-culting solutions.

Weak SQL fundamentals is right up there competing for second place. If you can't reason about join cardinality, group by behavior, and how measures aggregate across different grains, you'll miss questions even if your LookML syntax looks perfectly fine on the surface.

Poor time management is more common than people admit publicly. Scenario questions are wordy and complex, and if you get stuck trying to "prove" the answer in your head through logic, you can burn minutes fast without realizing it. Another big one that sneaks up on people: lack of real-world modeling experience where you've shipped actual dashboards to demanding users. The exam objectives basically assume you've done this at least a little in anger, under pressure, with stakes.

PDT and derived table confusion is a repeat offender that shows up on failure retrospectives. So is performance tuning decision-making. And so are debugging scenarios, because they demand a methodical approach you usually only develop after you've been the person on call for broken dashboards at 2am.

One more thing. Overconfidence with explores and join logic. Folks will memorize "relationship: many_to_one" syntax and think they're done preparing, then the exam hits them with multi-fact patterns and symmetric aggregates, and it gets messy quick when theory meets complex reality.

If you're trying to reduce the "surprise factor" on exam day, doing a timed LookML Developer practice test or two is a smart move that simulates pressure. That's where something like the LookML-Developer Practice Exam Questions Pack fits nicely into preparation. It's not magic that guarantees anything. It just makes you practice the way the exam actually asks questions.

Recommended study timeline (beginner vs experienced)

If you're a beginner to Looker and semantic modeling concepts, plan 3 to 4 months at 8 to 10 hours a week minimum.

That's not because you're slow or incapable. It's because you need repetition across multiple weeks. You need time to build actual projects that teach through doing. And you need enough "oh wow, that broke in an unexpected way" moments to learn the patterns that show up in scenario questions.

If you already have 6+ months of LookML development experience building production models, 6 to 8 weeks at 5 to 7 hours weekly is usually enough for focused exam prep that fills gaps. You're mostly mapping your hands-on experience to the LookML Developer exam objectives explicitly and drilling the stuff you don't touch daily, like access grants or aggregate awareness configurations.

There's also the intensive option if you're in a hurry. Four to six weeks full-time is doable for experienced SQL developers transitioning into Looker modeling, but only if you're doing daily hands-on practice and not just watching a Looker LookML training course on autopilot while checking email or multitasking.

Study hour estimates vary by background. Minimum 60 to 80 hours total for experienced developers who already build models regularly. More like 120 to 160 hours if you're new to Looker or new to semantic layer modeling concepts entirely. And I mean actual focused work time, not "I had a tab open while doing other things."

How to spend that time (and not waste it)

Dedicate 60 to 70% of your study time to hands-on work, period.

Building views, explores, joins, derived tables, access controls, then validating and debugging what you built. Passive reading is fine for syntax refreshers and concept review. It won't teach you how to make trade-offs under pressure when multiple approaches seem viable.

A routine that works for most people based on what I've seen: 1 to 2 hours on weekdays for reading, videos, and documentation review. Then 3 to 4 hours on weekends doing labs and building something end-to-end that actually works. Use a Looker trial environment, your company dev environment if you have access, or Google Cloud Skills Boost labs. The environment matters because you need to see generated SQL output, test caching behavior in real-time, and feel how Git-backed development mode actually works in practice.

A project-based approach beats flashcards every time for retention. Build a complete model from scratch that solves a realistic business problem. Include multiple explores with complex relationships, joins across facts, at least one PDT with a trigger configuration, and dashboards that force you to confront performance optimization decisions. Make mistakes on purpose. Fix them systematically. That's the whole point of hands-on learning.

Spaced repetition helps with the topics people routinely miss on first attempts: joins, PDTs, and access control layers. Circle back multiple times across your study period instead of one-and-done reviews. Also, find a study partner or hang out in Looker community forums when you get stuck on something, because talking through modeling decisions is how you learn to justify "why this is better." That's exactly what the exam keeps asking you to demonstrate.

Avoid cramming everything into the last week. Burnout is real and kills retention. Consistent moderate study wins over intensity sprints.

You're ready when you can consistently score 80%+ on practice exams under time pressure, build explores from scratch without copying old code as reference, and explain your design decisions without hand-waving or vague generalizations. If you're not there yet, do more hands-on building, tighten SQL fundamentals, and consider drilling with the LookML-Developer Practice Exam Questions Pack to get comfortable with the exam's scenario style and timing constraints.

Exam Objectives and Domains

The Google LookML Developer exam tests your ability to build, maintain, and optimize data models in Looker. This is not some generic certification where you memorize cloud services and call it a day. You're actually writing LookML code, designing explores, and solving real data modeling problems that show up in production environments every single day.

Five domains that actually matter on test day

The LookML Developer exam objectives break down into five primary domains that cover everything from basic syntax to advanced performance tuning.

LookML modeling and explore design? That's the heavyweight champion here. Usually accounts for 40-50% of all questions, which honestly makes sense because that's where you'll spend most of your time as a developer. You're designing semantic layers, writing joins, making sure business users can actually find the data they need without breaking anything. Performance and optimization comes in second at 20-25% of the exam weight. Access control and governance at 15-20%. Troubleshooting rounds out the last 10-15%.

Look, the exam blueprint alignment means every question maps directly to official exam guide domains. You can't skip sections. I've seen people who thought they could just master dimensions and measures and wing the rest. They failed. Any objective listed can appear on your exam, so that "I'll study it later" approach doesn't work here.

Project structure is not just organizing files

Understanding LookML project hierarchy starts with knowing how everything fits together.

The manifest.lkml file sits at the root. It controls project-level configuration. Model files define your connection to the database and declare which explores users can access. View files contain the dimension and measure definitions that make up your semantic layer. Explores (usually defined in model files, though they can live in their own explore files) establish how views join together.

File organization matters more than you'd think. I've debugged projects where someone threw everything into one giant view file with 2000 lines of code. The kind of nightmare where you're scrolling forever just to find a single dimension definition. And don't even get me started on merge conflicts when multiple developers touch that file.

The exam tests whether you understand proper file naming conventions, how to group related views, and when to split complex logic into separate files. I once worked on a project where the entire finance team's reporting broke because someone renamed a view file without updating the include statements. Took three hours to track down. The exam wants to know you would not make that mistake.

Best practices? Name view files after the table they represent. Keep model files focused on specific subject areas. Use explore grouping strategies that make sense to business users.

Model files control the big picture

Connection definition is always the first thing in a model file. You're telling Looker which database connection to use.

Include statements bring in view files. They bring in other LookML objects too. Datagroups define caching policies (huge for performance). Access grants restrict who can see what explores. Model-level configurations set defaults that apply across all explores in that model.

The exam will test whether you know how these pieces depend on each other. You can't reference a view you haven't included. You can't require an access grant you haven't defined. These are not theoretical questions. They're the kind of validation errors you'll see when you try to ship broken code to production.
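Put together, a small model file might look something like the sketch below. The connection name, file paths, datagroup, user attribute, and explore are all placeholders invented for illustration.

```lookml
# Hypothetical file: ecommerce.model.lkml
connection: "analytics_warehouse"    # assumed connection name

include: "/views/*.view.lkml"        # make every view under /views available

# Caching policy: check the trigger query, and never serve cached
# results older than one hour.
datagroup: orders_datagroup {
  sql_trigger: SELECT MAX(created_at) FROM analytics.orders ;;
  max_cache_age: "1 hour"
}

# Model-level default: explores use this datagroup unless they override it.
persist_with: orders_datagroup

access_grant: can_view_finance {
  user_attribute: department         # hypothetical user attribute
  allowed_values: ["finance"]
}

explore: orders {
  # Fails validation if can_view_finance isn't defined in this model.
  required_access_grants: [can_view_finance]
}
```

If the include line were removed, the explore would fail validation because the orders view would no longer be visible to the model.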

View files are where the magic happens

Dimension and measure definitions make up the bulk of most view files.

Primary key designation tells Looker which field uniquely identifies each row (critical for join logic and aggregate accuracy). SQL table name points to your database table, or you can use derived table logic to build complex transformations. Parameter declarations let users customize queries at runtime.

Dimension types include string, number, date, time, tier, yesno, and location dimensions. Each has appropriate use cases that the exam expects you to know cold.

String dimensions? Text fields. Number for numeric values you will not aggregate. Tier for bucketing continuous values. Yesno for boolean logic. Location for map visualizations.

Dimension syntax requires understanding the sql parameter (your actual SQL logic), type parameter (tells Looker how to treat the field), primary_key designation (marks your unique identifier), hidden parameter (hides fields from users), and label and description for documentation. Honestly, documentation matters way more than people think because six months later nobody remembers why you built that weird calculated field.

Reference syntax matters too. ${TABLE}.column references the underlying table column directly while ${dimension_name} references another LookML dimension. The exam tests this constantly because people mix them up.
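A short view sketch shows both reference styles along with a few of the dimension types and parameters mentioned above. The table and column names are assumptions for the sake of the example.

```lookml
view: orders {
  sql_table_name: analytics.orders ;;   # assumed schema and table

  dimension: order_id {
    primary_key: yes
    type: number
    sql: ${TABLE}.order_id ;;           # raw column reference
  }

  dimension: status {
    type: string
    sql: ${TABLE}.status ;;
    label: "Order Status"
    description: "Fulfillment status as recorded by the order system."
  }

  dimension: sale_price {
    type: number
    hidden: yes                         # kept out of the field picker
    sql: ${TABLE}.sale_price ;;
  }

  dimension: is_complete {
    type: yesno
    sql: ${status} = 'complete' ;;      # references the LookML dimension
  }

  dimension: price_tier {
    type: tier
    tiers: [0, 50, 100, 500]
    style: integer
    sql: ${sale_price} ;;               # builds on another dimension
  }

  measure: total_revenue {
    type: sum
    sql: ${sale_price} ;;
    value_format_name: usd
  }
}
```

Referencing ${sale_price} instead of repeating ${TABLE}.sale_price means any future change to that dimension's SQL flows through to the tier and the measure automatically.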

Timeframe dimensions deserve special attention

The dimension_group parameter creates multiple time-based dimensions from a single field.

You specify timeframes like date, week, month, quarter, year, time, and raw to give users different granularity options. This shows up on the exam because it's one of the most common patterns in LookML modeling. There are specific syntax rules about datatype compatibility and convert_tz parameters.
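As a rough illustration, a dimension group over a timestamp column could look like this. The column name created_at is an assumption, and the view is shown as a fragment.

```lookml
view: orders {
  dimension_group: created {
    type: time
    timeframes: [raw, time, date, week, month, quarter, year]
    datatype: timestamp      # tells Looker how the column is stored
    convert_tz: yes          # apply the user's time zone conversion
    sql: ${TABLE}.created_at ;;
  }
}
```

Each timeframe becomes its own field named after the group, so this one definition yields created_raw, created_time, created_date, created_week, created_month, created_quarter, and created_year.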

Explores bring everything together

Explore definition starts simple. You declare an explore based on a view.

Then join relationships get complex fast. You need to know sql_on conditions, relationship types (one_to_one, one_to_many, many_to_one, many_to_many), and join types (left_outer, inner, full_outer, cross). The always_filter requirement forces certain filters to always apply, which matters for performance and data governance.

Fields specification? It lets you limit which dimensions and measures appear in the explore. Conditionally_filter usage applies a default filter unless the user adds a filter on at least one field from a list you specify.

Join relationships trip up a lot of people on the exam. You need to understand when to use each relationship type and what happens to your aggregations when you get it wrong. A many_to_many join without proper handling creates fanout that inflates your counts. Suddenly your revenue report shows $10 million when it should show $1 million. That's the kind of mistake that gets you called into your manager's office. Exam questions test whether you can spot and fix that.
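Here's a hedged sketch of an explore that pulls these parameters together. The views, join keys, and filter values are hypothetical, and it assumes every joined view declares a primary key, since Looker's symmetric aggregates depend on that to keep measures correct across a one_to_many join.

```lookml
explore: orders {
  # Expose everything except the users.email field.
  fields: [ALL_FIELDS*, -users.email]

  # The filter is always present. Users can change its value but
  # cannot remove it.
  always_filter: {
    filters: [orders.status: "complete"]
  }

  # Default date filter, dropped if the user filters on users.id instead.
  conditionally_filter: {
    filters: [orders.created_date: "last 30 days"]
    unless: [users.id]
  }

  join: users {
    type: left_outer
    relationship: many_to_one     # many orders per user
    sql_on: ${orders.user_id} = ${users.id} ;;
  }

  join: order_items {
    type: left_outer
    relationship: one_to_many     # one order fans out to many items
    sql_on: ${orders.order_id} = ${order_items.order_id} ;;
  }
}
```

If order_items were mislabeled as many_to_one here, Looker would skip the symmetric aggregate logic and order-level measures like total revenue would be inflated by the item rows.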

Syntax fundamentals you cannot skip

LookML syntax fundamentals include parameter syntax (how you write dimension, measure, filter, parameter declarations), reference syntax differences I mentioned earlier, Liquid templating basics ({% if %}, {% for %}, {{ _filters['view_name.field_name'] }}), and commenting conventions (# for single line, nothing special for block comments since LookML does not have them).

Liquid templating shows up when you need dynamic SQL.

The exam might show you code that generates different SQL based on user input or conditionally includes table joins. You need to read it and understand what SQL actually executes.
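A typical snippet of that kind might look like the sketch below, where a parameter picks which column a measure sums. The parameter name, allowed values, and column names are invented for illustration.

```lookml
view: orders {
  # User-facing selector. type: unquoted inserts the value without quotes.
  parameter: revenue_basis {
    type: unquoted
    allowed_value: { label: "Gross" value: "gross" }
    allowed_value: { label: "Net"   value: "net" }
    default_value: "gross"
  }

  # The Liquid branch is resolved before the SQL is sent to the database,
  # so only one of the two columns ever appears in the generated query.
  measure: revenue {
    type: sum
    sql:
      {% if revenue_basis._parameter_value == 'gross' %}
        ${TABLE}.gross_amount
      {% else %}
        ${TABLE}.net_amount
      {% endif %} ;;
  }
}
```

Reading this the way the exam expects means mentally substituting the parameter value and working out which branch survives.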

Development workflow matches real projects

Development mode workflow starts with creating development branches (your personal workspace), editing LookML files, validating changes (the LookML Validator checks syntax and object references, but it doesn't run your SQL), committing to Git, and deploying to production.

Git integration requires understanding version control workflow, pull requests, code review process, merge conflicts, and production deployment timing.

The thing is, the exam assumes you've actually used this workflow. Questions might describe a merge conflict scenario and ask how to resolve it. Or show you a validation error and ask which file needs fixing. They're testing whether you've wrestled with these problems in real development environments. This fits with how certifications like the Google Professional Data Engineer test practical cloud skills rather than just theory.

Remote dependencies and project imports

Project import and dependencies distinguish between local projects (everything in your repo) and remote dependencies (shared code from other projects).

Importing projects lets you reuse common view files across multiple projects. Managing shared view files requires understanding refinements, extends, and when to override versus extend inherited LookML.
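To show the difference between refining and extending an imported view, here's a minimal sketch. It assumes a project named shared_views has been imported through the manifest and contains a users view with first_name, last_name, and lifetime_orders dimensions. All of those names are hypothetical.

```lookml
# The double slash means the path starts in an imported project.
include: "//shared_views/views/users.view.lkml"

# Refinement: modifies the existing users view in place, same name.
view: +users {
  dimension: full_name {
    type: string
    sql: CONCAT(${first_name}, ' ', ${last_name}) ;;
  }
}

# Extension: creates a new view that inherits everything from users.
view: vip_users {
  extends: [users]

  dimension: is_vip {
    type: yesno
    sql: ${lifetime_orders} >= 10 ;;
  }
}
```

A refinement is the lighter choice when you want every explore that uses the view to pick up the change, while an extension makes sense when you need a separate variant alongside the original.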

Manifest file configuration controls localization settings (for international deployments), constants definition (project-wide variables), visualization plugins (custom viz options), and extension framework configuration (for building custom applications).
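A manifest covering a few of these settings could look roughly like this. The project names, constant, and repository URL are placeholders, not real resources.

```lookml
# manifest.lkml at the project root
project_name: "ecommerce"

# Project-wide constant, referenced elsewhere as @{SCHEMA_NAME}
constant: SCHEMA_NAME {
  value: "analytics"
}

# Import another project that lives on the same Looker instance.
local_dependency: {
  project: "shared_views"
}

# Import a project straight from a Git repository (placeholder URL).
remote_dependency: company_core {
  url: "https://github.com/example-org/company-core-lookml"
  ref: "main"
}

localization_settings: {
  default_locale: en
  localization_level: permissive
}
```

With the constant in place, a view could write sql_table_name: @{SCHEMA_NAME}.orders ;; and pick up a schema change from this one spot.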

Not everyone uses these features. But the exam tests them because they're part of the official exam guide domains.

What the exam actually tests

The weighting I mentioned earlier means you should spend most study time on modeling and explores.

Build a complete project end-to-end. Practice writing dimensions with different types. Create measures with various aggregation types. Design explores with complex join logic. Optimize it. Then break it and fix it. Seriously, breaking things teaches you more than following tutorials ever will because you learn where the boundaries are.

Performance optimization questions might show you slow query patterns and ask how to fix them with PDTs, aggregate awareness, or datagroups. Access control questions test your understanding of access_filter, required_access_grants, and model-level permissions.

Troubleshooting questions? They give you error messages and ask what's wrong with the LookML.

Similar to how the Google Professional Cloud DevOps Engineer certification tests operational scenarios, this exam uses practical questions based on real development situations. You'll see code snippets with errors. You'll answer questions about best practices. You'll identify performance problems from query patterns.

The exam does not mess around with "what is LookML?" questions. It assumes you know what things are and tests whether you can actually use them correctly.

Conclusion

Wrapping this up

Look, you don't just stumble into this. Passing the Google LookML Developer exam demands real prep work and actual hands-on time with the platform. Not just reading docs and crossing your fingers. I've seen people with years of SQL experience still faceplant because they underestimated how different modeling in LookML actually is compared to traditional data work. The mental shift's bigger than you'd think.

The exam objectives cover tons of ground. You need solid understanding of explores, views, and models in LookML, plus you've gotta know when to persist a derived table versus leaving it ephemeral, when a native derived table beats a SQL-based one, and why those choices matter for performance. Access control questions test whether you've actually dealt with content governance in a real environment or just skimmed the documentation. Big difference there.

The thing is, the LookML Developer exam cost might seem steep at first. But think about what you're actually getting: a credential that proves you can build and maintain a semantic layer that non-technical users actually trust. That's valuable in today's market where every company wants self-service BI but most implementations fall apart because the modeling's complete garbage.

Your study strategy should mix official resources with real practice. I'm talking about really building models from scratch here, not just watching videos passively. The Looker LookML training course options? They're decent, sure, but you'll learn way more by breaking things and fixing them in a dev environment. Git workflows. Debugging validation errors. Optimizing query performance. These aren't things you memorize, you need muscle memory for them.

Not gonna lie, the passing score requirements mean you can't just wing sections you're weak on. Which sucks but makes sense. You need full coverage of all exam objectives. That's exactly why practice tests matter so much. They show you where your knowledge gaps actually are versus where you think they are. (I spent three days on derived table logic once because I thought I had it down cold. Turns out? I barely understood the caching behavior at all.)

If you're serious about certification prep, the LookML-Developer Practice Exam Questions Pack gives you realistic question formats and scenarios that mirror what you'll face on test day. It's not about memorizing answers. It's about training yourself to think through LookML problems the way the exam expects, conditioning your brain basically.

The certification validity period means this isn't a one-and-done thing, but honestly that's good because Looker keeps changing and staying current with new features keeps your skills relevant instead of stale. Whether you're an analytics engineer, BI developer, or data analyst looking to level up, this cert opens doors. Real ones. Just commit to the prep work and don't rush it.

It's a grueling test, but with proper dedication and diligence, anyone can pass it and reap the rewards of being certified.