DP-700 Practice Exam - Implementing Data Engineering Solutions Using Microsoft Fabric

Reliable Study Materials & Testing Engine for DP-700 Exam Success!

Exam Code: DP-700

Exam Name: Implementing Data Engineering Solutions Using Microsoft Fabric

Certification Provider: Microsoft

Certification Exam Name: Microsoft Certified: Fabric Data Engineer Associate

Free Updates PDF & Test Engine

Verified By IT Certified Experts

Guaranteed To Have Actual Exam Questions

Up-To-Date Exam Study Material

99.5% High Success Pass Rate

100% Accurate Answers

100% Money Back Guarantee

Instant Downloads

Free Fast Exam Updates

Exam Questions And Answers PDF

Best Value Available in Market

Try Demo Before You Buy

Secure Shopping Experience

DP-700: Implementing Data Engineering Solutions Using Microsoft Fabric Study Material and Test Engine

Last Update Check: Mar 22, 2026

Latest 104 Questions & Answers

DumpsArena's Microsoft Implementing Data Engineering Solutions Using Microsoft Fabric (DP-700) Free Practice Exam Simulator Test Engine supports exam preparation with a cutting-edge combination of authentic test simulation, dynamic adaptability, and intuitive design. Recognized as the industry-leading practice platform, it empowers candidates to master their certification journey through these standout features.

Satisfaction Policy – DumpsArena.co

At DumpsArena.co, your success is our top priority. Our dedicated technical team works tirelessly day and night to deliver high-quality, up-to-date practice exams and study resources. We carefully craft our content to ensure it's accurate, relevant, and aligned with the latest exam guidelines. Your satisfaction matters to us, and we are always working to provide you with the best possible learning experience. If you're ever unsatisfied with our material, don't hesitate to reach out; we're here to support you. With DumpsArena.co, you can study with confidence, backed by a team you can trust.

Don't Be Nervous About Taking the Microsoft DP-700 Exam!

The standard practice exams are important if you have never taken a Prometric or Pearson VUE exam before. The accuracy of the questions and answers is fully guaranteed by our certified experts, and there are enough of them to make a real difference to your chances of passing the exam. DumpsArena.co includes 90 days of free updates, which is important if you are taking a test that is frequently updated. The Microsoft DP-700 real dumps study material is verified by Microsoft experts.

"DP-700: Implementing Data Engineering Solutions Using Microsoft Fabric" PDF & Test Engine cover all the knowledge points of the real Microsoft exam.

It's now as easy as a walk in the park! Just use DumpsArena's easy DP-700 Implementing Data Engineering Solutions Using Microsoft Fabric questions and answers, which can give you first-time success with a 100% money-back guarantee! Thousands of IT professionals have already used these to-the-point DP-700 Q&As and achieved their dream certification on the first attempt.

There are no more complications: the exam questions and answers are to the point, easy, and rewarding for every candidate. DumpsArena's specialists have put their best efforts into producing the questions and answers, so they contain the latest and most valid material you are looking for.

DumpsArena's DP-700 Implementing Data Engineering Solutions Using Microsoft Fabric dumps are fairly effective. They concentrate entirely on the most important elements of your exam and present the most useful information in interactive, easy-to-understand language. Consider boosting your career with this tested and authentic exam-passing formula. DP-700 braindumps are unique and a treat for every aspiring IT professional who wants to attempt the DP-700 exam despite time limitations. There is a strong chance that you will see most of these questions in your real DP-700 exam.

Our experts have devised a collection of DP-700 practice tests for candidates who want to achieve the highest possible score on the real exam. Working through them strengthens your grip on the syllabus content, which not only gives you confidence but also improves your time management for answering the test within the given time limit. DP-700 practice tests recreate a real exam-like situation and are designed to help you achieve significant success in the DP-700 exam.

On top of all these features, DumpsArena's products are easy to access. They are instantly downloadable and backed by our online customer service, which answers your queries promptly. Your preparation for the DP-700 exam with DumpsArena will surely be a memorable experience!

Pass Microsoft Certification Exam DP-700 Braindumps

Simply make sure you have a firm grasp of the IT braindumps devised by the industry's best IT specialists, and get 100% assured success in the Microsoft DP-700 exam. A Microsoft credential, being one of the most important professional qualifications, can open the door to many job opportunities for you.

A Solid Solution to Glorious Success in the DP-700 Exam!

It has never been so easy to make your way to the world's most worthwhile professional qualification! DumpsArena's Microsoft DP-700 Implementing Data Engineering Solutions Using Microsoft Fabric training questions and answers are the most suitable choice to ensure your success in just one go. You can surely answer all exam questions by taking our Microsoft DP-700 practice exam frequently. Beyond sharpening your skills, practicing mock tests with our Microsoft DP-700 braindumps Testing Engine software helps you master your fear of failing the exam. Our Microsoft DP-700 dumps are the most accurate, reliable, and effective study material, making them the best option for your time and money.

A Supportive & Worthwhile DP-700 Practice Test

DumpsArena's DP-700 practice test lets you examine all areas of the course outline, leaving no important part untouched. At the same time, these DP-700 dumps provide exclusive, compact, and complete content that saves the time you would spend searching for study content yourself and the energy you would waste on unnecessary, boring, and overly preliminary material. DumpsArena's DP-700 Microsoft questions and answers exam simulator is a far more efficient way to get familiar with the format and nature of the DP-700 questions in the certification exam.

Microsoft DP-700 Study Guide Content Orientation

To review the content quality and format, a free DP-700 Implementing Data Engineering Solutions Using Microsoft Fabric braindumps demo is available for download on our website. You can compare these top DP-700 dumps with any other sources available to you.

Supported Question Types

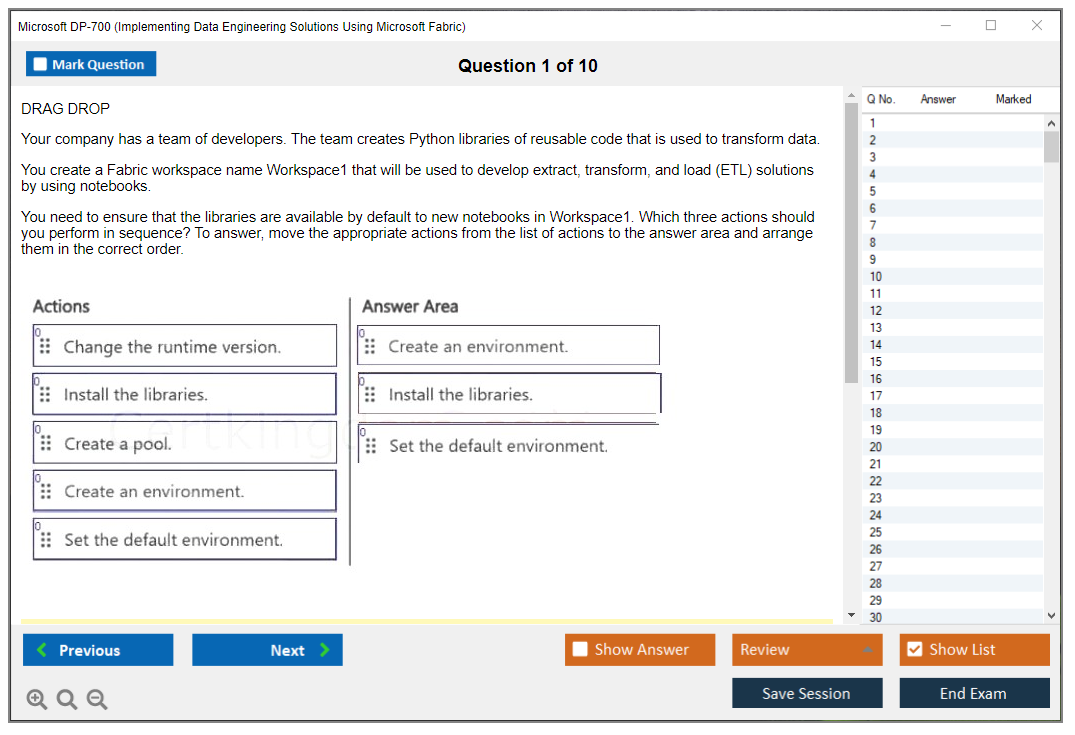

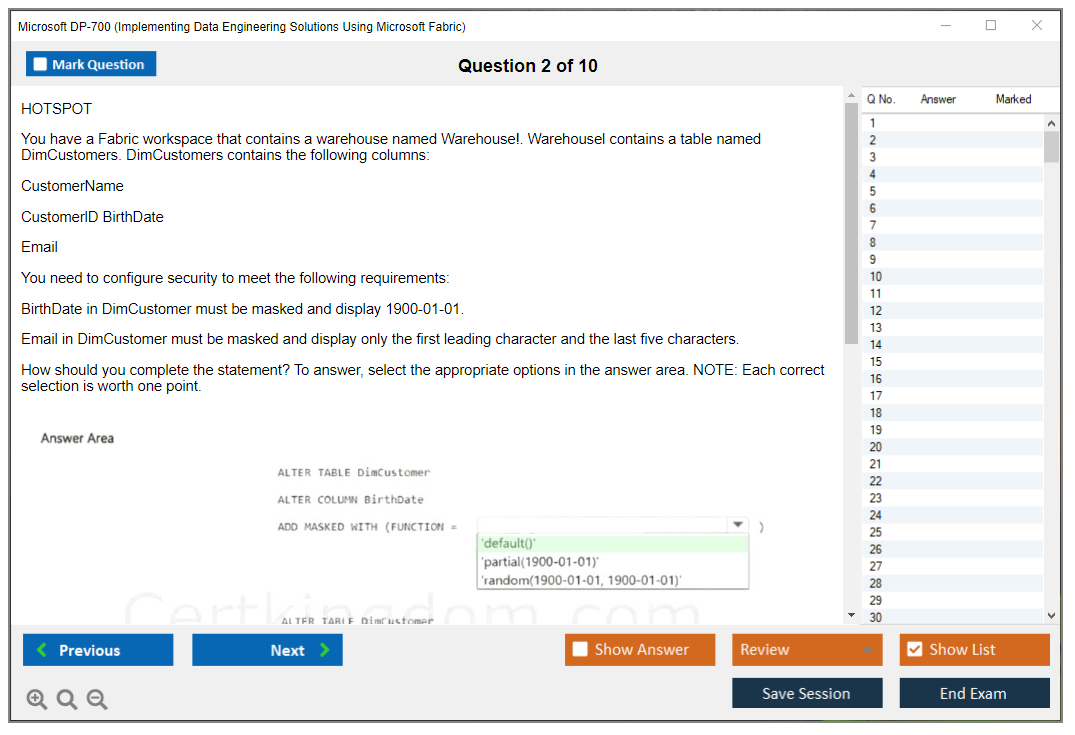

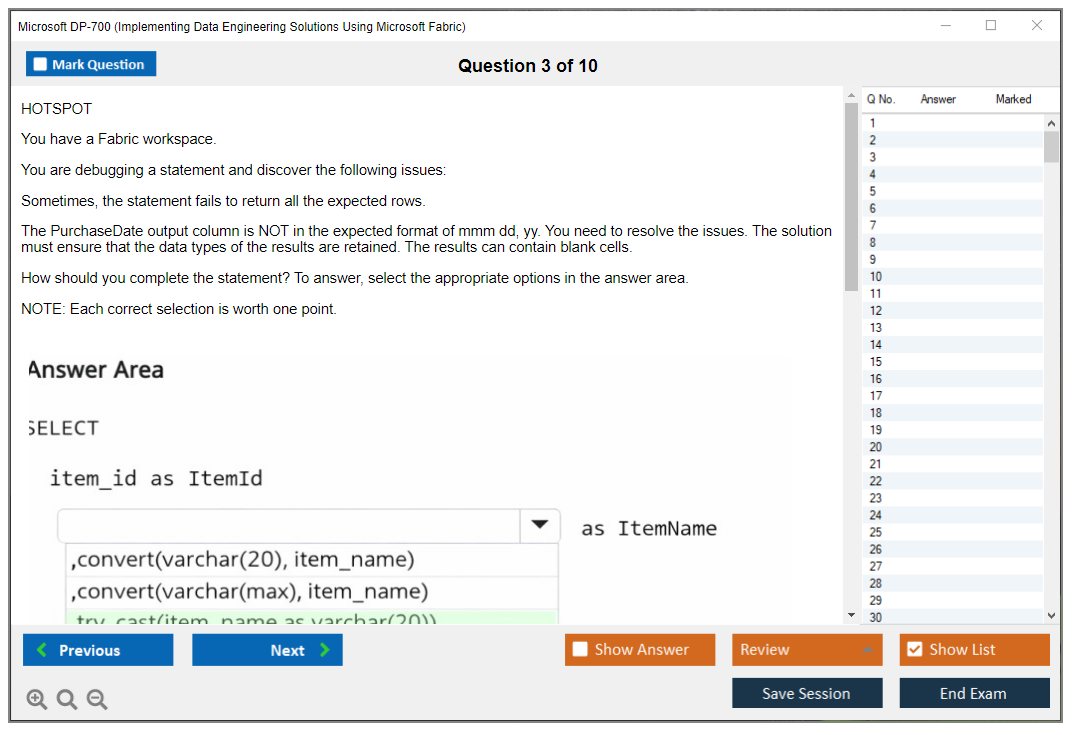

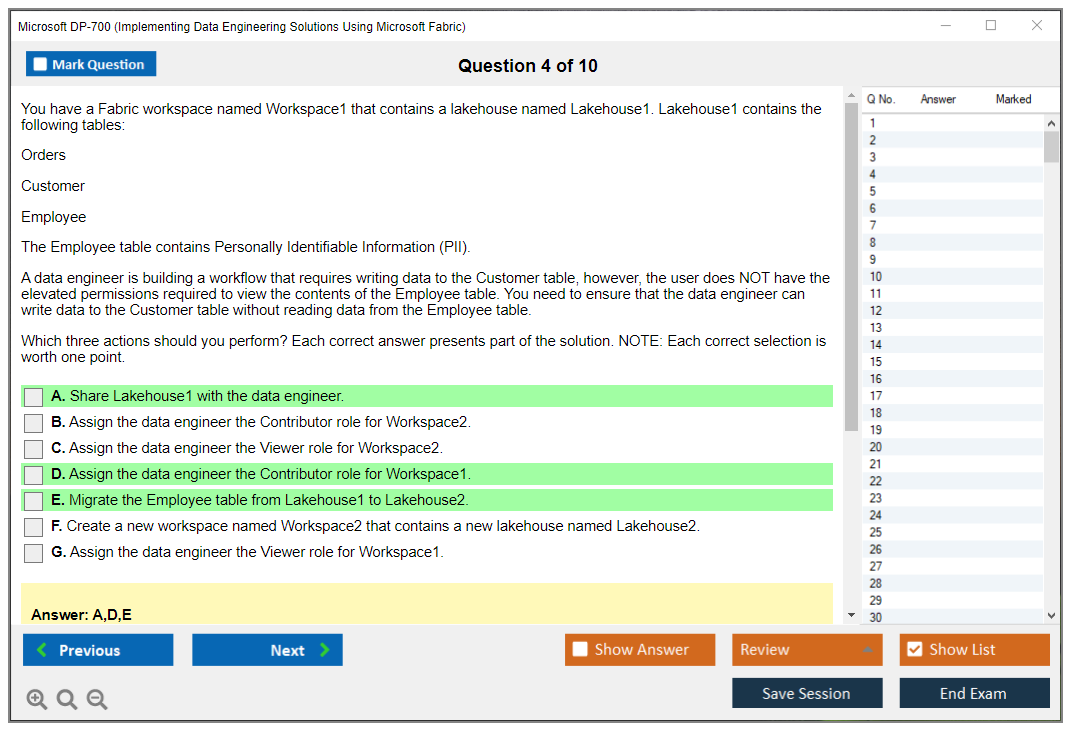

Both the DP-700 PDF and the Testing Engine include all the real questions, including multiple-choice, simulation, and drag-and-drop questions.

Free 3 Months Microsoft DP-700 Exam Questions and Answers Update

We provide 3 months (90 days) of Microsoft DP-700 exam updates, completely free.

100% Microsoft DP-700 Money back Guarantee and Passing Guarantee

We provide the DP-700 dump with a 100% passing guarantee and a 100% money-back guarantee.

Fully SSL-Secured Purchase System for the Microsoft DP-700 Exam

Purchase the Microsoft DP-700 exam product through a fully secure system protected with 256-bit SSL from Cloudflare.

We Respect Your Privacy

We fully respect our customers' privacy and will not share information with any third party.

Full Exam Environment

Experience a real exam environment with our Testing Engine.

2 Modes of DP-700 Practice Exam in Testing Engine

Standard Mode and Case Study Mode.

Exam Score History

Our DP-700 Testing Engine saves your DP-700 exam scores so you can review them later and improve your results.

Question Selection in Test engine

Our Test Engine lets you choose between randomized and non-randomized question sets.

Saving Your Exam Notes

Our Test Engine lets you save exam notes so you can review them later.

- Free demo of the Microsoft DP-700 exam questions and material, allowing you to try before you buy.

- We offer standard Microsoft DP-700 practice test material.

- DumpsArena.co includes 90 days of free updates.

- Correct DP-700 answers verified by Microsoft experts

We offer a full refund if you fail your test. Please note the exam cannot be taken within 30 days of receiving the product if you want to get a refund. We do this to ensure you actually spend time reviewing the material.

DumpsArena.co accepts credit cards and PayPal. You can pay through PayPal or with popular credit cards including MasterCard, VISA, American Express, and Discover.

All products are available for download immediately from your Member's Area. Once you have made the payment, you will be transferred to the Member's Area, where you can log in and download the products you have purchased to your computer.

How long can I use my product? Will it be valid forever?

DumpsArena.co products are valid for 90 days from the date of purchase. This means that any updates to the products, including but not limited to new questions or updates and changes by our editing team, are available to you during those 90 days so that you get the latest exam prep materials.

Can I renew my product when it has expired?

Yes. When the 90 days of your product validity are over, you have the option of renewing your expired products with a 30% discount. This can be done in your Member's Area. Please note that you will not be able to use the product after it has expired if you don't renew it.

How often are the questions updated?

We always try to provide the latest pool of questions. Updates depend on changes the vendors make to their actual question pools. As soon as we learn about a change in an exam's question pool, we do our best to update the products as fast as possible.

What is the PDF version?

The PDF version is simply a portable document copy of your exam purchase. It is a standard .pdf file that contains all questions and answers and can be read with Adobe Acrobat Reader or any other free reader application.

Microsoft DP-700 Certification Overview: Implementing Data Engineering Solutions Using Microsoft Fabric

Microsoft's betting big on Fabric. Like, really big. They're positioning this as the future of their entire data and analytics stack, so if you're working with Azure data services right now, the Microsoft DP-700 certification is probably on your radar, or it should be. This exam validates that you can actually build data engineering solutions in Microsoft Fabric. Not just know what buttons to click, but architect and implement real production systems that handle data at scale.

What you're actually proving when you pass this thing

The Implementing Data Engineering Solutions Using Microsoft Fabric exam tests whether you can work within Fabric's unified platform to solve actual data engineering problems. We're talking about building lakehouses, implementing data warehouses, creating pipelines that don't break when someone sneezes, transforming data with Spark notebooks, and making OneLake storage work without burning through your cloud budget like it's nothing.

You also gotta show you understand security, governance, and monitoring. A pipeline that works but leaks sensitive data or crashes without anyone noticing isn't worth much, right?

This isn't like older Microsoft exams where you memorized service names and feature lists. DP-700 expects you to demonstrate hands-on skills. You'll get scenario-based questions where you need to choose the right architecture, optimize performance, troubleshoot issues, or implement governance controls. The certification focuses on practical implementation within Fabric's ecosystem, which combines data integration, engineering, warehousing, science, real-time analytics, and BI into one SaaS platform.

Who Microsoft thinks should take this exam

Data engineers are the obvious target audience. But also analytics engineers, data platform engineers, and solution architects who design pipelines and analytical solutions.

If you've got 1-3 years working with Azure data services and your organization is adopting Fabric (or you want to work somewhere that is), this certification makes sense. People transitioning from traditional data warehousing to lakehouse architectures will find this useful, though you'll need to unlearn some old habits. Probably more than you think.

Not gonna lie, if you're still doing everything in on-prem SQL Server or haven't touched cloud platforms, you're gonna struggle. You need some foundational knowledge before diving into DP-700. Think basic SQL. Understanding of ETL/ELT concepts. Maybe some exposure to Spark or distributed processing. Familiarity with Azure fundamentals, stuff covered in AZ-900 if you're starting from scratch. I've seen people jump straight into DP-700 after years of on-prem work and it's rough watching them wrestle with concepts that just don't translate cleanly.

DP-700 exam cost and what you're paying for

The DP-700 exam costs $165 USD in the United States. Standard Microsoft pricing, honestly. Other countries price differently based on local economics, so you'll need to check Microsoft's official exam registration page for your specific region to know for sure.

Discounts pop up sometimes. Microsoft offers exam vouchers through events like Microsoft Ignite or Build, which is pretty convenient if you're already attending. Training providers bundle exam vouchers with courses. Student? Check Microsoft's academic programs. But here's my take: don't let the price be the deciding factor. If you're working in data engineering, I mean, $165 is a small investment compared to what this certification could do for your career trajectory, consulting rates, or even just your marketability in a competitive job market. I've seen people spend more on certifications that don't carry half the weight.

What score you need to pass and how they calculate it

The DP-700 passing score is 700 out of 1000. Sounds weird, right? Microsoft uses scaled scoring, which means the raw number of questions you get right gets converted to a score on a scale from 1 to 1000. This accounts for difficulty variations between exam versions. You might need to answer roughly 65-70% of questions correctly to hit that 700 threshold, but it's not a direct percentage calculation.

You'll see your score immediately after finishing. Pass or fail shows up on screen right there. Fail? You get a breakdown showing which objective areas you struggled with, which is actually useful for figuring out what to study before retaking it. Mixed feelings on the immediate results thing. It's nerve-wracking but beats waiting days.

Format and what the actual exam experience is like

Multiple choice. Multiple select. Case studies, scenario-based questions. You get around 40-60 questions (Microsoft doesn't publish exact numbers because they rotate questions constantly). Time limit? Typically 100-120 minutes. Some questions are experimental and don't count toward your score, but you won't know which ones. Honestly feels a bit unfair.

Testing center or online proctored from home, your call. Online's convenient but you need a clean workspace, stable internet, and a webcam. The proctors? Strict about wandering eyes or background noise. Testing centers are more controlled but require scheduling and travel.

Why people find DP-700 challenging

DP-700 exam difficulty depends heavily on your experience with Microsoft Fabric specifically. Been working in Fabric for 6+ months building real solutions? The exam's manageable. Coming from other platforms or traditional Azure services like Synapse or Data Factory without Fabric experience? It's tougher. Fabric has its own way of doing things. Its whole unified approach changes the game.

Scenario questions can be tricky. They give you a business requirement and ask you to choose the best implementation approach. Multiple answers might "work" technically, but only one's optimal for that specific scenario. You need to understand performance implications, cost trade-offs, governance requirements, and operational considerations. Not just "can I build this?" which is where a lot of people stumble.

People with strong DP-203 (Data Engineering on Microsoft Azure) backgrounds? They've got an advantage since there's overlap in data engineering concepts, but Fabric's unified approach is different enough that you can't just coast on old knowledge. Trust me on that.

What the exam actually covers

Planning lakehouses and warehouses in Fabric

Look, you've gotta know when to use a lakehouse versus a warehouse. It's not optional. The thing is, you also need to understand how to implement medallion architecture with those bronze/silver/gold layers, how to structure your data for analytics workloads, and honestly, it's a lot more involved than people think. This includes understanding Delta Lake format, partitioning strategies, and how OneLake works as the unified storage layer underneath everything. I spent two weeks just wrapping my head around when to actually use which layer, especially since the silver zone can balloon into this weird middle ground that nobody quite agrees on.
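
If the bronze/silver/gold idea still feels abstract, here's a minimal plain-Python sketch of what each layer does to the data. It's not Fabric code: in a real lakehouse each layer would typically be a Delta table transformed with Spark or SQL, and the sample rows, column names, and the `to_silver`/`to_gold` helpers are all hypothetical.

```python
from collections import defaultdict

# Bronze: raw records exactly as ingested, duplicates and bad values included.
bronze = [
    {"order_id": "1", "region": "west", "amount": "100.0"},
    {"order_id": "1", "region": "west", "amount": "100.0"},  # duplicate delivery
    {"order_id": "2", "region": "east", "amount": "n/a"},    # unparseable amount
    {"order_id": "3", "region": "East", "amount": "50.5"},   # inconsistent casing
]


def to_silver(rows):
    """Bronze -> silver: cast types, normalize values, drop bad rows and duplicates."""
    seen = set()
    silver = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # a real pipeline would usually quarantine these rows
        if row["order_id"] in seen:
            continue
        seen.add(row["order_id"])
        silver.append({
            "order_id": int(row["order_id"]),
            "region": row["region"].lower(),
            "amount": amount,
        })
    return silver


def to_gold(rows):
    """Silver -> gold: aggregate into a business-ready table (revenue per region)."""
    totals = defaultdict(float)
    for row in rows:
        totals[row["region"]] += row["amount"]
    return dict(totals)


silver = to_silver(bronze)
gold = to_gold(silver)
print(gold)  # {'west': 100.0, 'east': 50.5}
```

Notice that each layer has one job: bronze keeps everything for replay, silver enforces quality, gold answers a business question. The "weird middle ground" usually appears when silver starts doing gold's aggregation work too.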

The exam tests whether you can design solutions that scale. Small datasets work differently than multi-terabyte data lakes. You need to think about schema design, file formats, compression, and query performance from the architecture phase.
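
Partitioning is one of those design decisions that pays off (or bites you) at scale. This hypothetical sketch models a table partitioned by `event_date` as a dictionary of partitions, so a query filtered on that column only scans the matching partition. The function names are made up; Spark and Delta handle the pruning for you when your filter hits the partition column.

```python
from collections import defaultdict
from datetime import date

rows = [
    {"event_date": date(2025, 1, 1), "value": 10},
    {"event_date": date(2025, 1, 1), "value": 5},
    {"event_date": date(2025, 1, 2), "value": 7},
    {"event_date": date(2025, 1, 3), "value": 3},
]


def write_partitioned(rows, key):
    """Group rows by partition value, like folders named event_date=2025-01-01/."""
    partitions = defaultdict(list)
    for row in rows:
        partitions[row[key]].append(row)
    return partitions


def query(partitions, wanted_dates):
    """Read only partitions whose key matches the filter; also report how many were scanned."""
    scanned = [d for d in partitions if d in wanted_dates]
    result = [row for d in scanned for row in partitions[d]]
    return result, len(scanned)


parts = write_partitioned(rows, "event_date")
result, scanned = query(parts, {date(2025, 1, 1)})
```

The flip side: partitioning on a high-cardinality column produces lots of tiny partitions and small files, which hurts query performance instead of helping.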

Building pipelines and orchestrating data movement

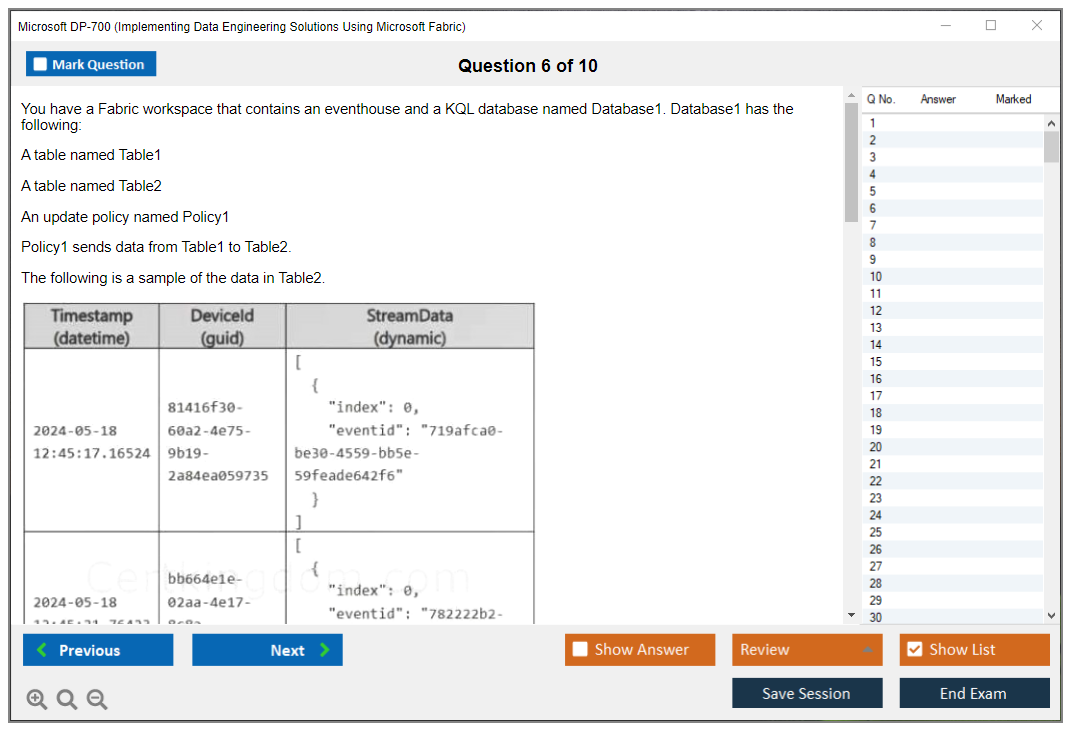

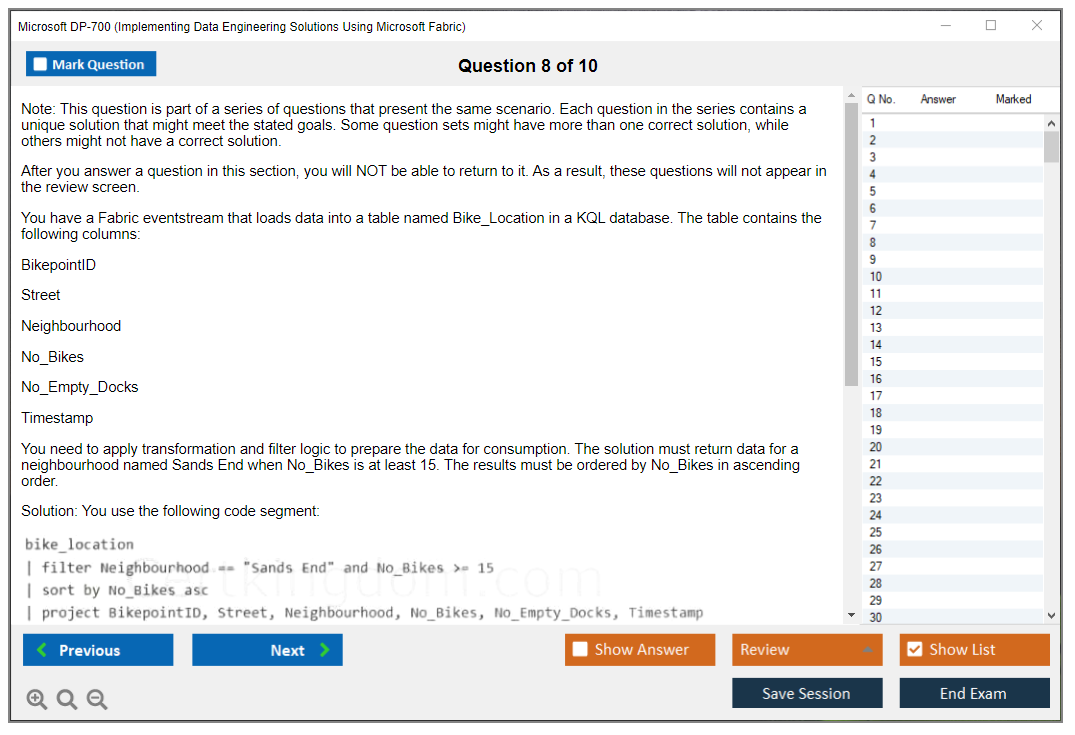

Building data pipelines with Data Factory in Fabric is a major section. You'll answer questions about ingestion patterns, scheduling, monitoring, error handling, and parameterization. It covers both batch and streaming scenarios, and the exam expects you to know how to use Copy activities, dataflows, notebooks in pipelines, and orchestration patterns like dependency chains and conditional logic.
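
Here's a rough sketch of those orchestration ideas (retries, a dependency chain, and an on-failure path) in plain Python. It's a toy built on assumptions, not the Data Factory API: in Fabric you'd configure retries and success/failure dependency conditions on activities in the pipeline designer. `run_with_retry`, `run_pipeline`, and the sample activities are hypothetical.

```python
import time


def run_with_retry(activity, retries, delay_seconds=0.0):
    """Call activity(); on an exception, retry up to `retries` extra times, then re-raise."""
    for attempt in range(retries + 1):
        try:
            return activity()
        except Exception:  # broad catch is fine for a sketch; be specific in real code
            if attempt == retries:
                raise
            time.sleep(delay_seconds)


def run_pipeline(steps, on_failure):
    """Run (name, activity, retries) steps in order; each depends on the previous succeeding."""
    completed = []
    for name, activity, retries in steps:
        try:
            run_with_retry(activity, retries)
        except Exception as exc:
            on_failure(name, exc)  # the "on failure" branch, e.g. send an alert
            return completed, name
        completed.append(name)
    return completed, None


# Sample activities: a copy that fails once with a transient error, then succeeds.
calls = {"copy": 0}


def flaky_copy():
    calls["copy"] += 1
    if calls["copy"] < 2:
        raise ConnectionError("transient source error")
    return "copied"


def transform():
    return "transformed"


def bad_load():
    raise ValueError("schema mismatch")


alerts = []
completed, failed = run_pipeline(
    [("copy", flaky_copy, 2), ("transform", transform, 0), ("load", bad_load, 1)],
    on_failure=lambda name, exc: alerts.append(f"{name}: {exc}"),
)
```

The transient copy error is absorbed by a retry, while the schema mismatch fails even after its retry and triggers the failure path. That distinction (retry transient errors, alert on persistent ones) is the kind of reasoning scenario questions look for.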

Understand this part. You should know how to ingest from various sources (databases, APIs, files, streaming sources) and implement incremental loading, change data capture, and full refresh patterns appropriately.
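
Incremental loading usually comes down to a watermark. This minimal sketch assumes the source has a reliable last-modified timestamp; the column and function names are hypothetical. It copies only rows changed since the last run, upserts them into the target, and advances the watermark.

```python
from datetime import datetime

source = [
    {"id": 1, "modified": datetime(2025, 3, 1, 8, 0)},
    {"id": 2, "modified": datetime(2025, 3, 2, 9, 30)},
    {"id": 3, "modified": datetime(2025, 3, 3, 7, 15)},
]


def incremental_load(source_rows, target, watermark):
    """Upsert rows modified after `watermark` into target (dict keyed by id); return the new watermark."""
    changed = [r for r in source_rows if r["modified"] > watermark]
    for row in changed:
        target[row["id"]] = row  # upsert: inserts new ids, overwrites changed ones
    if changed:
        watermark = max(r["modified"] for r in changed)
    return watermark  # unchanged when nothing new arrived


target = {}
wm = incremental_load(source, target, datetime(2025, 3, 1, 12, 0))
wm_again = incremental_load(source, target, wm)  # a rerun with no new changes is a no-op
```

One catch worth knowing for the exam: a timestamp watermark can't see deleted rows, which is one reason change data capture exists.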

Transforming data with Spark and notebooks

Fabric notebooks and Spark data engineering questions cover PySpark and Spark SQL for data transformation. You've gotta know DataFrame operations. Also optimization techniques like broadcast joins and partition tuning, caching strategies, and how to write efficient Spark code that doesn't create massive shuffle operations.
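
The broadcast join is worth understanding mechanically, not just as a keyword. Below is a plain-Python illustration of the idea behind a broadcast hash join, not Spark itself: the small dimension table becomes an in-memory lookup that every partition of the big fact table can use locally, so the fact rows never get shuffled by the join key. In PySpark you'd request this with `pyspark.sql.functions.broadcast`; the data and function name here are hypothetical.

```python
def broadcast_hash_join(fact_partitions, small_dim, key):
    """Inner-join every fact partition against a small dimension held in memory."""
    # Build the lookup once; Spark would ship a copy of it to every executor.
    # Assumes `key` is unique in the dimension table.
    lookup = {row[key]: row for row in small_dim}
    joined = []
    for partition in fact_partitions:
        # Each partition is joined where it sits: no rows move between partitions.
        for row in partition:
            match = lookup.get(row[key])
            if match is not None:  # inner join: unmatched fact rows are dropped
                joined.append({**row, **match})
    return joined


fact_partitions = [
    [{"product_id": 1, "qty": 2}, {"product_id": 2, "qty": 1}],
    [{"product_id": 1, "qty": 5}, {"product_id": 9, "qty": 1}],
]
products = [
    {"product_id": 1, "name": "widget"},
    {"product_id": 2, "name": "gadget"},
]
result = broadcast_hash_join(fact_partitions, products, "product_id")
```

The trade-off is memory: broadcasting only makes sense when the dimension side comfortably fits on each executor.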

Honestly? The exam also covers dataflows (Power Query) for simpler transformations. Knowing when to use Spark versus dataflows versus SQL views is important. Each has appropriate use cases.

Storage optimization and data modeling

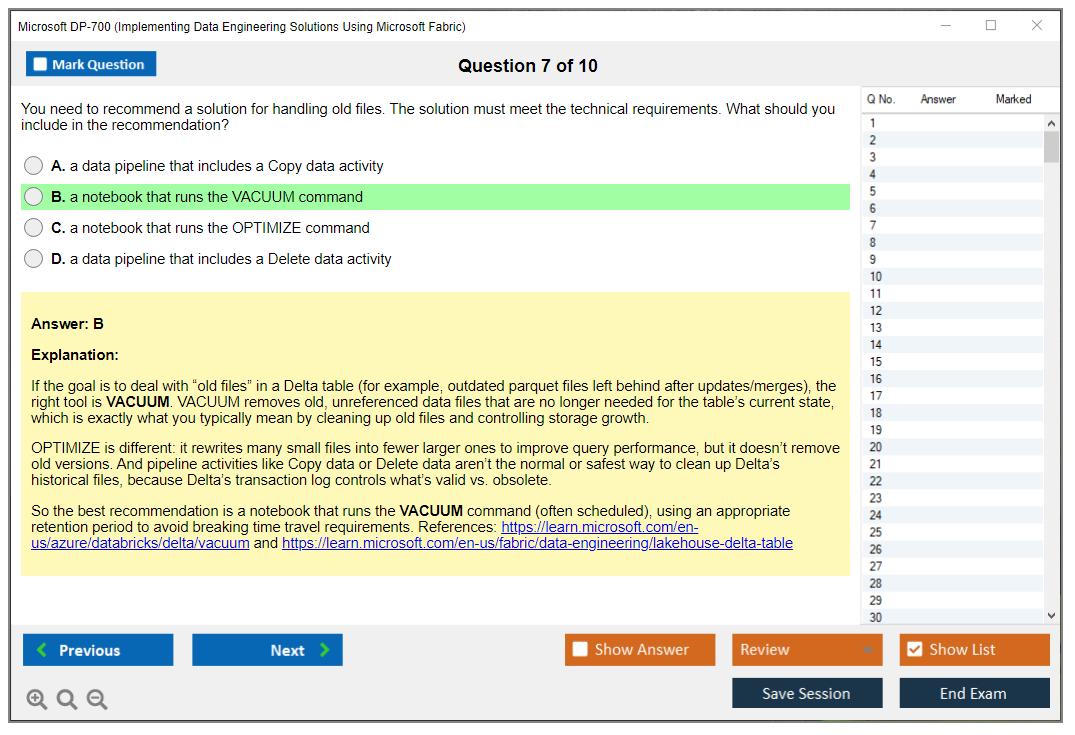

This section tests your understanding of Delta Lake, OneLake architecture, partitioning, Z-ordering, vacuuming, and optimization commands. You need to know how to manage storage costs while maintaining query performance, which can be tricky depending on your workload patterns.
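
To see why VACUUM needs a retention window, here's a simplified model in plain Python. It's an assumption, not Delta Lake's actual implementation: it treats each file's timestamp as the moment it stopped being needed, and it only deletes files that the current table version no longer references and that are older than the window. Seven days is Delta Lake's default retention; the file names and dates are made up.

```python
from datetime import datetime, timedelta


def files_to_vacuum(file_timestamps, current_files, now, retention=timedelta(days=7)):
    """Return paths that are unreferenced by the current version and older than the retention window."""
    cutoff = now - retention
    return sorted(
        path for path, ts in file_timestamps.items()
        if path not in current_files and ts < cutoff
    )


now = datetime(2025, 3, 20)
file_timestamps = {
    "part-000.parquet": datetime(2025, 3, 1),   # replaced long ago (e.g. by OPTIMIZE)
    "part-001.parquet": datetime(2025, 3, 18),  # replaced recently: still inside retention
    "part-002.parquet": datetime(2025, 3, 1),   # still referenced by the current version
}
current_files = {"part-002.parquet"}
deletable = files_to_vacuum(file_timestamps, current_files, now)
```

Shortening the retention window frees storage sooner, but it can break time travel to older versions and readers that are still using the old files.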

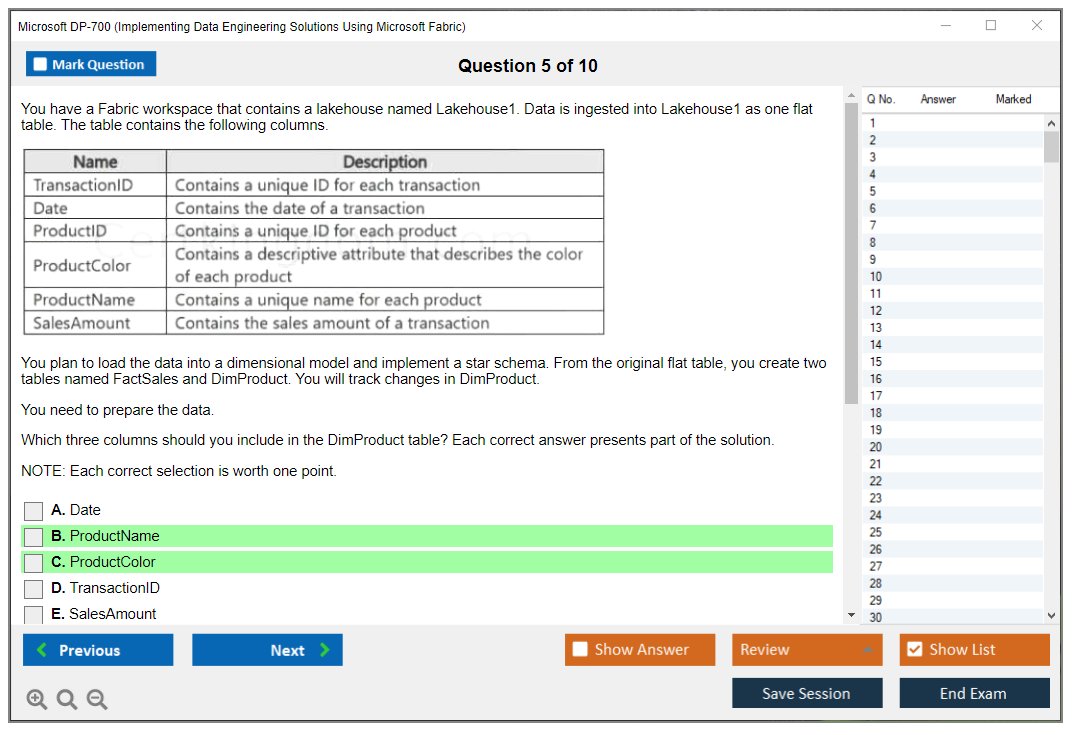

Data modeling questions cover dimensional modeling, star schemas, slowly changing dimensions, and how to implement these patterns in Fabric's lakehouse and warehouse architecture.
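
Slowly changing dimensions show up constantly, and Type 2 is the one worth being able to sketch from memory. This plain-Python version assumes a dimension stored as a list of rows with `valid_from`, `valid_to`, and `is_current` columns (hypothetical names) and at most one incoming record per key. In Fabric you'd more likely do this with a Delta Lake `MERGE` from a Spark notebook.

```python
from datetime import date


def apply_scd2(dimension, incoming, key, tracked, load_date):
    """Apply SCD Type 2 to `dimension` (list of dict rows) in place and return it."""
    # Index the current version of each key; these are the same dict objects as in `dimension`.
    current = {row[key]: row for row in dimension if row["is_current"]}
    for rec in incoming:
        existing = current.get(rec[key])
        if existing is not None and all(existing[c] == rec[c] for c in tracked):
            continue  # tracked attributes unchanged: nothing to do
        if existing is not None:
            existing["valid_to"] = load_date  # close the old version
            existing["is_current"] = False
        dimension.append({**rec, "valid_from": load_date, "valid_to": None, "is_current": True})
    return dimension


dim = [{"customer_id": 1, "city": "Oslo", "valid_from": date(2024, 1, 1),
        "valid_to": None, "is_current": True}]
apply_scd2(dim,
           [{"customer_id": 1, "city": "Bergen"}, {"customer_id": 2, "city": "Rome"}],
           key="customer_id", tracked=["city"], load_date=date(2025, 3, 1))
```

Customer 1 now has two rows (Oslo closed, Bergen current) and customer 2 gets a fresh current row. Real implementations usually add a surrogate key per version so fact rows can point at the exact historical version.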

Security, governance, and operations

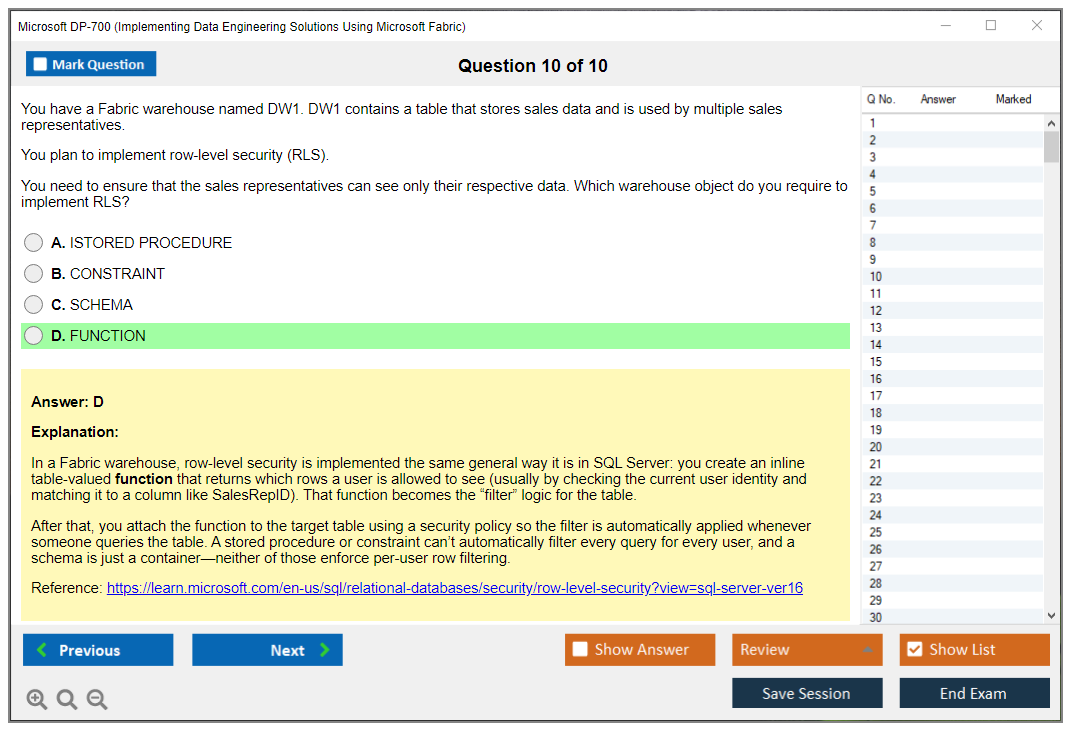

Security questions cover workspace roles, item permissions, row-level security, column-level security, and data masking. The thing is, you need to understand how Fabric integrates with Microsoft Purview for governance, data lineage, and compliance. It's all interconnected in ways that aren't always obvious at first.
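
Row-level security is easiest to reason about as a filter predicate that runs on every read. This sketch models that in plain Python with a hypothetical user-to-region mapping; in a Fabric warehouse you'd typically express the same idea in T-SQL with a security policy and a predicate function, not with code like this.

```python
SALES = [
    {"region": "west", "amount": 100},
    {"region": "east", "amount": 80},
    {"region": "west", "amount": 40},
]

# Hypothetical mapping of users to the regions they are allowed to read.
USER_REGIONS = {
    "ana@contoso.com": {"west"},
    "raj@contoso.com": {"east", "west"},
}


def rls_filter(rows, user):
    """Return only the rows this user may see; unknown users get nothing (deny by default)."""
    allowed = USER_REGIONS.get(user, set())
    return [row for row in rows if row["region"] in allowed]
```

The deny-by-default choice matters: a user missing from the mapping should see zero rows, not all of them.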

Monitoring covers capacity metrics, pipeline runs, Spark job execution, and query performance. You should know how to troubleshoot issues using monitoring tools and logs.
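
Monitoring questions often boil down to spotting failed or abnormally slow runs. This sketch assumes run records with made-up `pipeline`, `status`, and `minutes` fields (not Fabric's actual monitoring schema) and flags failures plus runs taking more than twice the median duration for that pipeline; the 2x threshold is an arbitrary assumption.

```python
from statistics import median

runs = [
    {"pipeline": "ingest_sales", "status": "Succeeded", "minutes": 12},
    {"pipeline": "ingest_sales", "status": "Succeeded", "minutes": 11},
    {"pipeline": "ingest_sales", "status": "Succeeded", "minutes": 40},
    {"pipeline": "load_dims", "status": "Failed", "minutes": 3},
]


def flag_runs(runs, slow_factor=2.0):
    """Return (pipeline, reason) pairs for failed runs and unusually slow successful runs."""
    durations = {}
    for run in runs:
        durations.setdefault(run["pipeline"], []).append(run["minutes"])
    alerts = []
    for run in runs:
        typical = median(durations[run["pipeline"]])
        if run["status"] != "Succeeded":
            alerts.append((run["pipeline"], "failed"))
        elif run["minutes"] > slow_factor * typical:
            alerts.append((run["pipeline"], "slow"))
    return alerts


alerts = flag_runs(runs)
```

Using the median rather than the mean keeps one slow outlier from dragging the baseline up and hiding itself.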

Prerequisites and what you should know first

Official versus realistic prerequisites

Microsoft lists no formal prerequisites for DP-700. Technically speaking? You can register and take it tomorrow. But realistically, you'll waste your money if you don't have foundational knowledge.

Realistic DP-700 prerequisites include an understanding of relational databases, SQL query writing, basic Python or another programming language, cloud computing concepts, and ideally some hands-on Azure experience. If you haven't touched Azure at all, start with AZ-900 or DP-900 to build foundational knowledge before jumping into Fabric-specific implementation. I mean, you could skip those, but why make things harder?

Technical skills that make DP-700 easier

SQL proficiency is non-negotiable. Period. You'll work with SQL in warehouses, lakehouses, and transformation logic throughout the exam, so there's really no getting around this one. PySpark knowledge helps a ton for notebook-based transformations. Understanding distributed computing concepts (partitioning, parallelism, shuffle operations) makes Spark optimization questions much clearer, though even surface-level familiarity beats going in completely blind.

If you've worked with medallion architecture, lakehouse patterns, or dimensional modeling before, that translates directly to Fabric implementations. Experience with ETL/ELT tools gives you the mental framework for designing pipelines even when the specific tooling is different. It's more about concepts than exact tools. And look, nobody talks about this enough, but knowing when to not over-engineer a solution matters just as much as knowing all the fancy patterns. Sometimes a simple pipeline beats an elaborate one that breaks every other Tuesday.

Study resources that actually help

Microsoft Learn path and official materials

Microsoft Learn has a dedicated learning path for DP-700. It's free and covers all exam objectives. The modules include hands-on exercises in Fabric, which matters because you need practical experience, not just reading about features.

Work through every module. Don't skip the exercises. Actually build the lakehouses, create the pipelines, write the notebooks. Passive reading won't prepare you for scenario-based questions that test whether you've really worked with the platform or just skimmed some articles.

Documentation deep dives

Microsoft Fabric documentation? Extensive. Focus on architecture patterns, best practices guides, and performance optimization articles. The release notes show you what's new and what's changing. This matters since Fabric's actively evolving.

Don't try memorizing documentation. Use it as reference while building projects. When you implement something hands-on and then read the docs about why it works that way, the knowledge actually sticks. I spent probably too much time reading about delta tables before I built one, which was backwards.

Hands-on labs are mandatory

You can't pass this exam without hands-on practice. Period.

Get access to a Fabric capacity. Microsoft offers trials, or use your organization's environment if available. Build end-to-end solutions. Create a lakehouse, ingest data from multiple sources, implement bronze/silver/gold layers, build dimension tables, create pipelines with error handling, optimize Spark transformations.

Work through realistic scenarios. Import CSV files, transform them, load to a warehouse, create a star schema. Ingest streaming data. Implement incremental loading. The more you build, the better you'll understand trade-offs between different approaches, and that's what separates people who pass from those who don't.
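
The "import CSV files, create a star schema" exercise boils down to splitting flat rows into dimensions and facts. Here's a small plain-Python sketch using only the standard `csv` module; the columns, sample data, and `build_star` helper are hypothetical, and in Fabric you'd do the same split with Spark or SQL into lakehouse or warehouse tables.

```python
import csv
import io

RAW = """order_id,product,category,qty
1,Widget,Tools,2
2,Gadget,Toys,1
3,Widget,Tools,5
"""


def build_star(csv_text):
    """Split flat sales rows into a product dimension and a fact table keyed by surrogate keys."""
    dim_product = {}  # natural key (product name) -> dimension row
    fact_sales = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        name = row["product"]
        if name not in dim_product:
            dim_product[name] = {
                "product_key": len(dim_product) + 1,  # surrogate key assigned on first sight
                "product": name,
                "category": row["category"],
            }
        fact_sales.append({
            "order_id": int(row["order_id"]),
            "product_key": dim_product[name]["product_key"],
            "qty": int(row["qty"]),
        })
    return list(dim_product.values()), fact_sales


dims, facts = build_star(RAW)
```

The fact table ends up narrow (keys plus measures) while descriptive attributes like `category` live once in the dimension, which is the whole point of the star shape.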

Training courses and what to look for

Instructor-led training can accelerate learning if you find good courses. Look for courses that emphasize hands-on labs over PowerPoint slides. Here's the real deal: instructors with actual Fabric implementation experience provide better insights than those just reading from Microsoft's materials.

Third-party platforms have DP-700 courses, but quality varies wildly. Check reviews, course outlines, and whether they're updating content as Fabric evolves. Some courses created for Fabric's early versions miss newer features, which could leave gaps in your preparation.

Practice tests and exam preparation strategy

Official practice assessments versus third-party options

So here's the thing. Microsoft offers an official practice assessment for DP-700 on Microsoft Learn, and it's free. It gives you the most accurate representation of question style and difficulty, and the explanations really do help you understand why answers are correct or incorrect.

DP-700 practice tests from third-party providers? Quality's all over the map. Some are really excellent. Others are complete garbage with outdated information or poorly written questions that'll just confuse you. Look for providers that update content regularly, have solid reviews, and offer detailed explanations. Those are your best bet.

What makes good practice questions

Quality practice questions mirror the exam's scenario-based format, no question about it. They should present realistic business requirements and ask you to choose the best implementation approach with detailed reasoning that actually makes sense. Avoid brain dumps or question memorization. Honestly, that's cheating and doesn't prepare you for actual work anyway.

Good practice questions include explanations for both correct and incorrect answers. Understanding why wrong answers are wrong often teaches more than just the right path. Sometimes learning what not to do is more valuable than memorizing the correct answer. Kind of like how I learned never to run an unfiltered scan on a production table during peak hours. That was an expensive Tuesday.

Study timeline for different experience levels

Already working with Fabric daily? 2-3 weeks of focused study might be enough. Review weak areas. Take practice tests. Fill knowledge gaps.

If you're new to Fabric but experienced with data engineering generally, I'd plan for 4-6 weeks. Complete beginners should allow 8-12 weeks minimum, potentially longer if you're also learning programming or cloud fundamentals at the same time, which honestly can feel overwhelming.

Consistency matters way more than cramming. An hour daily for six weeks beats those brutal twelve-hour weekend sessions that leave your brain fried. Hands-on practice should consume most of your study time. Aim for 60-70% building things, 30-40% reading or watching content.

Mistakes people make on DP-700

Common pitfalls? Not reading scenario questions carefully. Choosing technically correct but suboptimal solutions. Confusing lakehouse and warehouse capabilities. Misunderstanding Fabric's security model. Underestimating governance questions. Optimization questions trip people up constantly. You need to know not just how to make something work but how to make it work efficiently at scale, which is a different skill entirely.

Time management during the exam matters. Don't spend ten minutes on one question. Flag tough ones and circle back later. Make your best educated guess rather than leaving questions blank. There's no penalty for wrong answers anyway.
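
The arithmetic behind "don't spend ten minutes on one question" is simple. This sketch assumes 100 minutes, about 50 questions, and 10 minutes held back for revisiting flagged questions; all three numbers are assumptions picked from the ranges above, and case studies may eat more than their share.

```python
def minutes_per_question(total_minutes, questions, review_minutes=10):
    """Hold back time for revisiting flagged questions, then split the rest evenly."""
    return (total_minutes - review_minutes) / questions


budget = minutes_per_question(100, 50)
print(budget)  # 1.8
```

At under two minutes per question, one ten-minute rabbit hole costs you the time budget of more than five other questions.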

Keeping your certification current

Renewal requirements and timeline

Microsoft role-based certifications expire after one year now. Yeah, it's annoying. To renew the certification you earned with DP-700, you take a free online renewal assessment, which becomes available six months before the certification expires (so about six months after passing). The assessment covers updates and new features added to Fabric since you certified.

Microsoft sends renewal reminders via email. Don't ignore them. Missing your renewal window means retaking the full exam and paying again, and nobody wants to spend that money and time all over again when a simple assessment would've done the trick.

The renewal assessment process

Renewal assessments are shorter. Way shorter than full exams, maybe 20-30 questions. If you fail one, you can retake it at no cost until your certification expires, whereas failing the main exam means paying again and sitting out a waiting period before your next attempt. You'll wanna study the "What's new" documentation for Fabric before taking the renewal assessment.

Staying current with Fabric after certification

Fabric evolves rapidly. New features ship monthly, and keeping up can feel overwhelming, but that's the reality of modern data platforms. Follow the Fabric blog, release notes, and community forums. Build personal projects using new capabilities. If you're not actively using Fabric at work, create side projects to maintain skills.

The certification proves you knew Fabric at a point in time. Staying valuable means keeping those skills fresh, though that's easier said than done when you've got, I don't know, actual client work piling up or maybe you're trying to learn three other platforms simultaneously because your boss thinks everyone should be a polyglot data engineer now. Anyway. It's an ongoing commitment, not a one-and-done achievement.

Frequently asked questions

Is DP-700 worth it if you're working with Microsoft Fabric?

If you're implementing Fabric solutions professionally, yes. It validates your skills to employers and clients, which matters a lot in this market. For career development, it demonstrates you're staying current with Microsoft's strategic platform. The study process itself improves your Fabric knowledge even if you're already experienced.

Not using Fabric? No plans to? Probably skip it for now. Focus on certifications aligned with the technologies you actually use instead. But if your organization's evaluating Fabric or you want to position yourself for opportunities with companies adopting it, DP-700's a smart investment.

One thing I've noticed is that a lot of people get the cert just to have it on LinkedIn, then never touch Fabric again. Seems like a waste, but I guess the market rewards credentials whether you use them or not.

How does DP-700 compare to other Microsoft data certifications?

DP-203 covered data engineering across various Azure services like Data Factory, Synapse, Databricks, and Stream Analytics. It was broader but less deep on any single platform. DP-700 is Fabric-specific and goes deeper on implementation within that unified environment. Keep in mind that Microsoft retired DP-203 on March 31, 2025, so it's no longer an option for new candidates; if Fabric is your primary platform, DP-700 is the relevant exam.

DP-900 is fundamentals-level. Doesn't validate implementation skills. DP-300 focuses on database administration for SQL-based systems. PL-300 is for Power BI analysts. Each serves different roles.

Realistic preparation time for DP-700

Experienced data engineers working with Fabric: 2-4 weeks. Data engineers new to Fabric but strong with Azure: 4-8 weeks. Those with general data engineering experience but limited cloud work: 8-12 weeks. Complete beginners need 12+ weeks and should actually consider foundational certifications first.

Your mileage varies based on how much time you're dedicating daily, learning style, and hands-on access to Fabric environments for practice. Don't rush it. Failing and retaking costs more time and money than preparing properly the first time. Seems obvious but people ignore this constantly.

DP-700 Exam Details: Cost, Format, Passing Score, and Difficulty

Microsoft DP-700 certification overview (Implementing Data Engineering Solutions Using Microsoft Fabric)

What DP-700 validates (roles, skills, and Microsoft Fabric focus)

Microsoft's DP-700 certification basically proves one thing: you can build and run data engineering workloads inside Fabric without duct-taping ten products together. That's the short version, anyway, though the reality gets messier once you're three modules deep in the learning path.

What DP-700 validates is your ability to design a lakehouse and warehouse in Microsoft Fabric, ingest data, transform it with Fabric notebooks and Spark data engineering, and then keep the whole thing governed so it doesn't turn into a permission nightmare where nobody can access anything or, worse, everyone can access everything. Fabric's "everything in one place" pitch is exactly why the exam feels different from older Azure exams, because questions love to test how the pieces interact, not just what each button does when you're clicking around in isolation.

Who should take DP-700 (target audience)

Data engineers? Absolutely. Analytics engineers, BI engineers drifting into engineering territory, or platform people who got handed Fabric and told "make it work" are all the audience here. People coming from Azure Synapse Analytics, Azure Data Factory, or Databricks usually feel at home pretty fast once they map the concepts. SQL-only folks? Different story entirely.

You can brute-force memorize terms if that's your learning style, but DP-700 keeps dragging you back to implementation choices, tradeoffs, and operational reality, like "what breaks when the workspace is locked down" or "why did this Spark job suddenly get slow after you changed partitions." Those scenarios mirror what you'd actually troubleshoot at 3pm on a Thursday when everything's on fire.

Side note: I've noticed people who come from traditional ETL tools like Informatica or SSIS have a weird adjustment period. Fabric mixes visual pipelines with code-first transformations in Spark notebooks, and that combination doesn't map cleanly to the mostly drag-and-drop approach they're used to. Not impossible to bridge, just takes longer than they expect.

DP-700 exam details

DP-700 exam cost (price, regional pricing, discounts)

DP-700 exam cost in 2026 is straightforward in the US: $165 USD. Regional pricing varies because Microsoft prices exams in local currency and adjusts for market conditions, economic factors, and probably some algorithm nobody fully explains, so globally you'll typically see something like $99 to $165 USD equivalent depending on where you sit the exam.

Discounts exist. You should chase them, because paying full price when Microsoft is handing out half-off vouchers is just painful to watch. Common discount opportunities for DP-700 exam cost include:

- Microsoft Ignite and Microsoft Build conference vouchers, often 50% off (these come and go, and the rules change year to year, so read the fine print before you get excited)

- Microsoft Learn Cloud Skills Challenges (finish the challenge within the timeframe, get an eligible exam voucher)

- Academic pricing for students (usually tied to verification like a valid .edu email or enrollment status)

- Volume licensing agreements for organizations buying in bulk

Retakes aren't friendly if you're hoping for endless attempts. Fail once, and you wait 24 hours before your first retake, then 14 days before each later retake. You also can't take the same exam more than five times in a 12-month period. Each retake costs the full exam fee unless you bought an exam replay bundle upfront. That bundle can be worth it if you're not confident, because the exam doesn't care that you were "close" or had a bad day.

DP-700 passing score (what it is and how scoring works)

DP-700 passing score requirements are based on Microsoft's scaled scoring system, where you need 700 or higher on a scale of 100 to 1000. Simple number. Confusing math.

How DP-700 scoring works is where people get weirdly stressed, so here's the practical take: not all questions are necessarily weighted the same, and Microsoft applies psychometric analysis to keep scoring fair across versions of the exam that may draw on slightly different question pools. Case studies and multi-part questions can carry more weight than a basic multiple-choice item, so bombing a case study can show up in your score. Acing the heavier items can let you survive missing some smaller ones scattered throughout. That's why "I got 80% right" is not a real statement on Microsoft exams. The scaled score isn't a percentage, and you don't know the item weights, so nobody outside the scoring process can turn a raw count into a final score.

DP-700 exam format (question types, time, delivery options)

Expect 40 to 60 questions in about 120 minutes. That's the normal range. The exact count varies with the question mix you get (a case study block, for example) and with any unscored items Microsoft includes to trial new questions. The format mix includes multiple-choice, multiple-select, case studies, drag-and-drop, hot area questions, and scenario-based questions that check whether you can implement data pipelines with Data Factory in Fabric, not just recite definitions from a glossary.

Question types you'll encounter: scenario prompts with a bunch of constraints, then you pick the best architecture or configuration given those limitations. Case studies are a mini packet of context (think company background, infrastructure, requirements) with multiple questions tied to it that you answer sequentially. You'll also see questions where you identify correct code snippets or settings, like Spark configs, lakehouse table behavior, or security scope decisions that require understanding how permissions cascade.

Delivery options: Pearson VUE testing centers, or online proctoring from home or office if you've got the setup for it. Online proctoring is convenient, but it's picky about conditions: webcam required, reliable internet, a quiet private space, no interruptions. You can get flagged if someone walks behind you or your dog barks during a question. Not gonna lie, if your environment is chaotic or you share a workspace, just go to a center and save yourself the anxiety of getting flagged for looking away from the screen.

Language availability is usually English first, then more languages roll out later as demand justifies localization. DP-700 is offered in English, and additional versions like Spanish, French, German, Japanese, Chinese (Simplified), Korean, and Portuguese may show up as the exam matures, but verify availability before scheduling if English isn't your best testing language.

DP-700 exam difficulty (what makes it challenging and who finds it easiest)

DP-700 exam difficulty is usually rated moderate to challenging by people who've taken it and similar Microsoft exams. Comparable to other associate-level Microsoft certs in scope, but it demands more hands-on time because Fabric is integrated and the exam loves practical scenarios over pure theory.

What makes DP-700 challenging is the depth across components, plus the "how do they work together" angle that forces you to think across services instead of memorizing individual features in isolation. You need optimization knowledge for Spark and Delta tables. You'll get scenarios involving governance and security boundaries where one wrong setting breaks everything downstream. The exam also assumes you can reason about transformations in SQL, PySpark, and KQL (Kusto Query Language), even if you personally only write one of them at work. Fabric notebooks and Spark data engineering show up constantly. If you've never tuned a Spark job or thought about partition strategy, some questions will feel like they're written in another language entirely.

Who finds it easier: people with Synapse or Data Factory background already, Databricks folks who've lived in Spark environments, and anyone comfortable with Delta Lake and Apache Spark fundamentals. SQL-focused professionals may struggle when the exam pivots into Spark-based transformation questions and partition strategy, because you can't "SQL your way out" of every performance issue when the underlying engine operates differently.

Common difficulty areas I keep hearing about from exam-takers:

- Spark optimization and partition strategies (because it's easy to memorize concepts, hard to apply them correctly under exam pressure)

- OneLake and Delta table architecture concepts (what lives where, and what that storage model implies for queries and governance)

- Orchestration scenarios in Data Factory in Fabric (dependencies, triggers, monitoring, failure handling)

- Security boundaries, workspace governance, and who can see what under different permission models

Time management matters here. With 120 minutes for 40 to 60 questions, you've got about 2 to 3 minutes per question on average, but case studies eat time fast because you're reading context and answering multiple related questions. Move quickly on straightforward items and bank minutes for the long scenario blocks.

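Microsoft's exam interface lets you mark questions for review and come back later. Use it liberally. Skip the time-sinks early, grab the points you're sure about, then return with a calmer brain when you've got breathing room. One catch: some sections, such as case studies and series of questions that share the same scenario, can't be revisited once you leave them, so finish those before moving on.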
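The "2 to 3 minutes per question" figure is plain division, but it helps to see how reserving a block for case studies changes the budget for everything else. Here's a minimal Python sketch. The 10 case study questions and the 35-minute reserve in the example are hypothetical numbers for your own planning, not anything Microsoft publishes.

```python
def minutes_per_question(total_minutes: float, questions: int) -> float:
    """Average minutes per question if time is spread evenly."""
    if questions <= 0:
        raise ValueError("questions must be positive")
    return total_minutes / questions


def minutes_per_regular_question(total_minutes: float, questions: int,
                                 case_study_questions: int,
                                 case_study_reserve: float) -> float:
    """Minutes left for each non-case-study question after setting aside
    a fixed reserve for the case study block (the reserve is your choice)."""
    regular = questions - case_study_questions
    if regular <= 0:
        raise ValueError("need at least one non-case-study question")
    return (total_minutes - case_study_reserve) / regular


for n in (40, 50, 60):
    print(f"{n} questions: {minutes_per_question(120, n):.2f} min each")

# Hypothetical plan: 50 questions, 10 of them in a case study, 35 minutes reserved for it.
print(minutes_per_regular_question(120, 50, 10, 35))  # 2.125
```

Reserving case study time up front is one way to stop a long scenario block from squeezing your last questions. Adjust the reserve after a timed practice run.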

DP-700 exam objectives (skills measured)

Plan and implement data engineering in Microsoft Fabric (lakehouse/warehouse strategy)

DP-700 exam objectives usually start with design choices: when you use a lakehouse vs a warehouse, how you model data for different consumption patterns, and how you align the setup with downstream analytics needs. Storage decisions show up early because they ripple into performance, governance, and cost. Choose wrong, and you're stuck with slow queries or bloated storage bills.

Ingest and orchestrate data (pipelines, Data Factory in Fabric, scheduling/monitoring)

You'll need to understand ingestion patterns, scheduling approaches, monitoring setups, retries, and failure handling that doesn't corrupt data. Some questions are basically "this pipeline fails at 2am, what do you change so it recovers without corrupting data or requiring manual intervention," and that's very day-to-day data engineering reality.

Transform and process data (Spark, notebooks, dataflows, performance considerations)

Fabric notebooks and Spark data engineering are a big slice of the exam. Expect transformations, incremental processing ideas, and performance tradeoffs where the "right" answer depends on scale and freshness requirements. Spark is not optional knowledge.

Manage and optimize storage and data models (Delta/OneLake concepts, partitioning, optimization)

This is where OneLake and Delta concepts get tested in practical ways, not trivia. Questions about partitioning strategies, file sizes, table maintenance patterns, and compaction. And yes, those "why is this query slow" prompts that force you to pick the least bad fix from a list of imperfect options.

Secure, govern, and operate data solutions (access, lineage, monitoring, reliability)

Governance is the part many people under-study until it bites them. Permissions, workspace roles, lineage expectations, operational monitoring, reliability patterns. These questions are scattered across the exam rather than grouped in one place, but they add up.

DP-700 prerequisites and recommended experience

Prerequisites (official vs. recommended)

DP-700 prerequisites in the "official" sense are light. Microsoft usually doesn't hard-require another cert as a blocker. Recommended experience is the real gate, though. You should have built pipelines in production, worked with lakehouse or warehouse patterns, and debugged at least a few broken jobs where logs were your only friend.

Skills you should already have (SQL, ETL/ELT, Spark basics, Azure/Microsoft Fabric fundamentals)

Have SQL down cold. Know ETL vs ELT conceptually and practically. Understand Spark basics like partitions, lazy evaluation, and transformations at a high level. You don't need to be a Spark expert, but you can't be totally green either. And you should be comfortable moving around Fabric: workspaces, items, notebooks, pipelines, and the general flow of data from source to consumption.

Best DP-700 study materials

Microsoft Learn DP-700 learning path (how to use it effectively)

DP-700 study materials should start with Microsoft Learn because it maps directly to the DP-700 exam objectives and gets updated when Fabric changes. But don't treat it like a novel you read passively. Read a module, then go do the thing in Fabric right after, while the terminology is still fresh and the steps make sense.

Microsoft Fabric documentation (what to prioritize)

Docs are massive. Prioritize lakehouse tables, Delta behavior, OneLake concepts, pipelines, security models, and monitoring approaches. Skim the rest for awareness, but focus your deep reading where the exam objectives are dense. You're studying for decisions and tradeoffs, not trivia.

Hands-on labs and projects (lakehouse, pipelines, notebooks, warehouse scenarios)

Do at least one end-to-end build: ingest data from a source, orchestrate refresh, transform in notebooks, write to Delta, then expose it through a warehouse or semantic model. Break it on purpose. Delete permissions, misconfigure a pipeline, partition badly. Fix it. That's where the exam concepts click instead of floating as abstract ideas.

Instructor-led training and third-party courses (how to choose)

Courses are fine if they include labs and updated content that reflects current Fabric features. Avoid anything that feels like slides-only certification hype with no hands-on component. If it doesn't make you build something, it won't stick when the exam asks "what happens if..."

DP-700 practice tests and exam prep strategy

Official practice assessments vs. third-party practice tests

DP-700 practice tests are useful for timing practice and identifying weak spots you didn't know existed. Microsoft's official practice assessment is a good baseline for question style and difficulty. Third-party tests can help fill gaps, but quality varies wildly, and brain-dump style questions that just memorize answers are a trap that'll hurt you on the real exam.

What to look for in high-quality DP-700 practice questions

Look for scenario-heavy questions with explanations that reference why a choice is right, not just "A is correct." If it never mentions tradeoffs or alternative approaches, it's probably junk that won't prepare you for decision-based questions.

2 to 4 week study plan (beginner vs. experienced data engineer)

Two weeks can work if you already ship Spark and pipelines at work and just need Fabric-specific mapping of what you know to new terminology. Four weeks is more realistic if Fabric is new and Spark tuning is a weak area, because you'll spend time failing labs, rereading docs, and slowly realizing governance questions are trickier than they look at first glance.

Common DP-700 mistakes (scenario questions, governance, orchestration, optimization)

People rush scenario questions and miss constraints buried in the description. They ignore governance until the last weekend before the exam. They over-focus on definitions instead of application. And they underestimate orchestration edge cases like retries, dependency chains, and what happens when upstream sources are late or malformed.

DP-700 certification renewal

Renewal requirements and timeline (how Microsoft renewals typically work)

DP-700 certification renewal is handled through Microsoft's free online renewal assessment on Microsoft Learn, which you can take in the six months before your certification expires. Timelines can change as Microsoft adjusts certification policies, so check your certification dashboard for the exact window and deadlines.

Renewal process (assessment, reminders, and keeping skills current)

You'll usually get reminders via email, then you pass the renewal assessment to extend the cert for another year. No testing center drama or proctoring stress. Still takes prep, though, because Fabric changes fast and the assessment covers new features.

How to maintain Fabric skills post-certification (projects, updates, release notes)

Keep a small Fabric project alive at work or in a personal tenant, and read Fabric release notes monthly. Seriously, monthly, because features move, defaults change, and old advice rots faster than you'd expect.

DP-700 FAQ

Is DP-700 worth it for data engineers using Microsoft Fabric?

Yes, if your org is standardizing on Fabric or hiring for it. The Microsoft DP-700 certification signals you can actually implement solutions, not just talk about them in meetings.

DP-700 vs other Microsoft data certifications (who should choose which)

If you're deep in Fabric engineering, DP-700 fits your work. If your work is more BI modeling and reporting, you might be better served elsewhere in the certification stack. If you're more platform and admin, look at governance-heavy options that match your daily responsibilities.

How long does it take to prepare for DP-700?

Most people land in the 2 to 6 week range depending on experience, especially with Spark and pipelines. Brand new to Spark? Add significant time, because Spark concepts don't sink in overnight.

DP-700 Exam Objectives: Complete Breakdown of Skills Measured

Breaking down what Microsoft actually tests with DP-700

Okay, so here's the deal. Microsoft Fabric is still pretty new, honestly, and the DP-700 exam reflects that reality. Microsoft's official skills outline groups the exam into three domains, each weighted at roughly 30-35%: implement and manage an analytics solution, ingest and transform data, and monitor and optimize an analytics solution. For study purposes, this breakdown splits that material into four areas: planning and implementing lakehouse/warehouse solutions, data ingestion and pipeline orchestration, Spark transformations and processing, and management, optimization, and monitoring.

The weighting matters. A lot.

If you bomb lakehouse and warehouse design, you're in serious trouble, because those decisions feed into a large share of the questions.

The exam doesn't just test book knowledge either. Microsoft loves scenario-based questions where you need to choose between a lakehouse and a warehouse for a specific use case, or decide whether to use a pipeline activity versus a notebook. I mean, that's the reality of data engineering, right? There's rarely one "correct" answer, just better answers for specific contexts depending on what you're actually trying to accomplish.

Planning and implementing storage solutions in Fabric

This first domain? Massive. It covers the foundational architecture decisions you'll make. You need to understand what a lakehouse actually is in the Microsoft Fabric context. It's not just a buzzword they're throwing around. It combines the flexibility of a data lake with the structure and query performance of a data warehouse, all sitting on top of OneLake, which is Fabric's unified storage layer built on ADLS Gen2.

The exam will test whether you know when to choose a lakehouse versus a warehouse. Generally speaking, lakehouses are better for raw and semi-structured data, exploratory analytics, and when you need that schema-on-read flexibility. Warehouses shine when you need that traditional data warehouse experience with T-SQL, enforced schemas, and optimized performance for BI queries. But honestly? You'll often use both in the same solution because they complement each other.

Creating and configuring lakehouses involves understanding workspace roles and permissions. Admin, Member, Contributor, Viewer, all that stuff. You'll create lakehouses within a Fabric workspace, set up shortcuts to external data sources (huge feature, by the way), and manage metadata. Shortcuts let you reference data in Azure Data Lake Storage, S3, or other OneLake locations without actually copying it. Saves storage costs and maintains a single source of truth.

Warehouse implementation in Fabric is different from traditional SQL Server or even Azure Synapse Analytics, which trips people up. You're working with a T-SQL warehouse through the Fabric interface. You need to know its T-SQL support and limitations (not everything works the same), how to create tables, views, and stored procedures, and how to keep query performance healthy. One difference that catches Synapse veterans: unlike dedicated SQL pools, a Fabric warehouse distributes data automatically, so there's no hash-versus-replicated distribution choice to make in your CREATE TABLE statements.

Delta Lake is absolutely critical here. Every lakehouse table in Fabric is a Delta table by default, which gives you ACID transactions, time travel, and schema enforcement. You'll need to understand partitioning strategies (don't over-partition because seriously, this kills performance), how to use Delta time travel for historical queries or rollback scenarios, and optimization commands like OPTIMIZE and VACUUM.

The thing is, the medallion architecture shows up constantly. Bronze layer holds raw data exactly as ingested. No transformations, just land it. Silver layer applies cleansing, deduplication, and basic transformations to make data usable. Gold layer contains aggregated, business-ready data that analysts can actually consume. You implement incremental processing between layers, manage data quality at each tier, and establish clear governance boundaries. Most production Fabric implementations follow this pattern because, honestly, it just works and provides clear separation of concerns.

I've seen teams try to skip straight to gold without a proper bronze layer. That's a mistake you make exactly once before learning your lesson.
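To make the bronze/silver/gold flow concrete, here's a plain-Python sketch where lists of dicts stand in for Delta tables. The sample rows, column names, and the "last duplicate wins" rule are assumptions for illustration. In Fabric, each layer would typically be a lakehouse table written from a notebook or pipeline, and rejected rows would usually be quarantined rather than silently dropped.

```python
from collections import defaultdict

# Bronze: raw records exactly as they landed, duplicates and bad values included.
bronze = [
    {"order_id": "1", "store": "north", "amount": "19.99"},
    {"order_id": "1", "store": "north", "amount": "19.99"},         # duplicate delivery
    {"order_id": "2", "store": "south", "amount": "5.00"},
    {"order_id": "3", "store": "north", "amount": "not-a-number"},  # unparseable value
]


def to_silver(rows):
    """Cleanse and deduplicate: cast types, drop unparseable rows,
    keep one row per order_id (the last one seen wins)."""
    by_order = {}
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # a production pipeline would usually quarantine this row
        by_order[row["order_id"]] = {
            "order_id": int(row["order_id"]),
            "store": row["store"],
            "amount": amount,
        }
    return list(by_order.values())


def to_gold(rows):
    """Aggregate to a business-ready shape: total revenue per store."""
    revenue = defaultdict(float)
    for row in rows:
        revenue[row["store"]] += row["amount"]
    return dict(revenue)


silver = to_silver(bronze)
gold = to_gold(silver)
print(gold)  # {'north': 19.99, 'south': 5.0}
```

Keeping bronze untouched is what lets you rerun silver and gold with fixed logic later, which is exactly what teams lose when they skip straight to gold.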

Ingesting and orchestrating data with pipelines

Data Factory in Microsoft Fabric is the orchestration engine, and this domain tests your ability to build reliable data pipelines that don't fall apart in production. If you've used Azure Data Factory before, some concepts transfer directly, but Fabric Data Factory has its own quirks and integrations you need to learn.

You'll need to implement various data ingestion patterns depending on your sources. Batch ingestion from databases, file systems, APIs. All the usual suspects. Streaming data ingestion through Eventstreams for real-time scenarios when you can't wait for batch windows. Dataflows for low-code transformations when you don't want to write Spark code or deal with notebooks. Shortcuts for data virtualization when you want to query data in place rather than copying it around and duplicating storage.

The Copy Data activity is your workhorse for moving data around between systems, but you need to know how to configure it properly. Source and sink settings, performance tuning with parallel copies and data integration units, error handling strategies. Data transformation activities let you call stored procedures or execute Spark notebooks. Speaking of which, Notebook activities are huge for when you need custom Spark processing that dataflows can't handle or when your transformation logic is too complex for visual tools.

Incremental data loading? Tested heavily.

Nobody wants to reload entire datasets daily in production. It's wasteful and slow. Watermark-based approaches track the last processed timestamp or ID and only grab newer records. Change data capture (CDC) captures only changed rows from source systems using transaction logs. Delta detection compares modification timestamps between source and target. Each approach has tradeoffs in complexity, reliability, and how much you trust your source systems.
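A watermark load boils down to "filter on a high-water mark, then advance it." This plain-Python sketch assumes each source row carries a `modified_at` timestamp that only moves forward. The row shape and dates are made up. In a real pipeline you'd persist the watermark (for example, in a control table) and update it only after the write succeeds, so a failed run simply reprocesses the same range.

```python
from datetime import datetime


def incremental_batch(source_rows, last_watermark):
    """Return rows changed after last_watermark and the watermark to store next.

    If nothing new arrived, the watermark stays where it was.
    """
    new_rows = [r for r in source_rows if r["modified_at"] > last_watermark]
    next_watermark = max((r["modified_at"] for r in new_rows), default=last_watermark)
    return new_rows, next_watermark


source = [
    {"id": 1, "modified_at": datetime(2026, 3, 1, 8, 0)},
    {"id": 2, "modified_at": datetime(2026, 3, 2, 9, 30)},
    {"id": 3, "modified_at": datetime(2026, 3, 3, 7, 15)},
]

batch, watermark = incremental_batch(source, datetime(2026, 3, 1, 23, 59))
print([r["id"] for r in batch], watermark)  # [2, 3] 2026-03-03 07:15:00

# Re-running with the stored watermark picks up nothing new.
repeat, same_watermark = incremental_batch(source, watermark)
```

The strict `>` comparison is a design choice. It avoids reprocessing the boundary row, but it can miss a late row that shares the exact watermark timestamp, which is one of those "how much do you trust your source" tradeoffs.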

Pipeline scheduling uses different trigger types for different scenarios. Schedule triggers run on a timer. Simple but effective for most batch workloads. Tumbling window triggers are better for time-series data because they guarantee exactly-once processing for each time window, which matters for financial or compliance data. Event-based triggers fire when something happens, like a file landing in storage or a message hitting a queue.
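The "no gaps, no overlaps" property is easiest to see by generating the windows yourself. This sketch models the windowing idea behind tumbling window triggers as they exist in Azure Data Factory. It is not a Fabric API, so check which trigger types your Fabric pipelines currently expose. Each window is half-open, [start, end), so a record stamped exactly on a boundary lands in exactly one window.

```python
from datetime import datetime, timedelta


def tumbling_windows(start, end, size):
    """Return fixed-size, back-to-back [window_start, window_end) intervals.

    Only complete windows are returned; a trailing partial window waits
    until enough time has passed to fill it.
    """
    windows = []
    current = start
    while current + size <= end:
        windows.append((current, current + size))
        current += size
    return windows


def window_for(ts, windows):
    """Find the single window that owns a timestamp (None if outside all of them)."""
    for w_start, w_end in windows:
        if w_start <= ts < w_end:
            return (w_start, w_end)
    return None


day = datetime(2026, 3, 1)
hourly = tumbling_windows(day, day + timedelta(hours=3, minutes=30), timedelta(hours=1))
print(len(hourly))  # 3 complete windows; the last 30 minutes are not yet a full window
```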

Monitoring and troubleshooting is where you separate beginners from experienced engineers who've been woken up at 3 AM for pipeline failures. The monitoring views show pipeline runs with success and failure status, but you need to drill into activity-level metrics to find actual bottlenecks. Failed runs require analyzing error messages (which are sometimes cryptic), checking source and sink connectivity, and validating data formats match expectations. Setting up alerts for failures is table stakes for production systems. You want to know about problems before your users do.

Transforming and processing data with Spark

This domain tests your Spark skills within the Fabric environment specifically. Notebooks in Fabric support Python (PySpark), Scala, R, and SQL, though honestly most people use PySpark. You create notebooks in a workspace, run them against a Spark session that Fabric starts for you, and can add them to pipelines as activities for orchestration.

Reading and writing data with Spark requires understanding different file formats and when to use each. Parquet is columnar and compressed, great for analytics workloads. Delta adds transactions and versioning on top of Parquet, which is why Fabric uses it everywhere. CSV and JSON are common for source data but way less efficient for processing. You load data from lakehouses or external sources into Spark dataframes, transform it however you need, and write it back to Delta tables or other formats.

Dataframe transformations are core Spark operations you'll use constantly. Filtering, aggregating, joining. Standard stuff, but the exam tests whether you understand performance implications of different approaches. Window functions enable advanced analytics like running totals or ranking within partitions. Handling null values and data quality issues comes up all the time because real-world data is messy and doesn't follow schemas. User-defined functions (UDFs) let you implement complex business logic that built-in functions can't handle, but they're slower than built-in functions so use sparingly and only when necessary.
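Window functions are easiest to reason about once you see what the engine computes. In PySpark you'd express a running total with `Window.partitionBy(...).orderBy(...).rowsBetween(Window.unboundedPreceding, Window.currentRow)` and `F.sum(...).over(...)`. The plain-Python sketch below mirrors those semantics on made-up store sales so you can check the results by hand.

```python
from itertools import groupby
from operator import itemgetter


def running_total(rows, partition_col, order_col, value_col):
    """Like SUM(value_col) OVER (PARTITION BY partition_col ORDER BY order_col
    ROWS BETWEEN UNBOUNDED PRECEDING AND CURRENT ROW)."""
    ordered = sorted(rows, key=itemgetter(partition_col, order_col))
    result = []
    for _, group in groupby(ordered, key=itemgetter(partition_col)):
        total = 0  # the sum restarts for every partition
        for row in group:
            total += row[value_col]
            result.append({**row, "running_total": total})
    return result


sales = [
    {"store": "north", "day": 1, "amount": 10},
    {"store": "south", "day": 1, "amount": 7},
    {"store": "north", "day": 3, "amount": 1},
    {"store": "north", "day": 2, "amount": 5},
]

for row in running_total(sales, "store", "day", "amount"):
    print(row["store"], row["day"], row["running_total"])
```

The explicit ROWS frame matters. With only ORDER BY, SQL engines default to a RANGE frame, which treats rows with tied ordering values as peers and gives them the same total.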

Working with Delta tables through Spark APIs is key for the exam. You create managed or external Delta tables depending on ownership needs, implement MERGE operations for upsert patterns (incredibly common for slowly changing dimensions), use time travel to query historical versions or roll back bad updates, and run OPTIMIZE to compact small files into larger ones. Z-ordering co-locates data by frequently filtered columns so queries can skip files they don't need. For selective filters on those columns the speedup can be dramatic, though how much depends on your data and queries.
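MERGE's matched/not-matched logic is the part worth internalizing. In a Fabric notebook you'd run Delta Lake's `MERGE INTO` in Spark SQL or the `DeltaTable.merge` API. The plain-Python sketch below uses hypothetical customer rows and only models the upsert semantics, not Delta's transactional behavior.

```python
def merge_upsert(target, updates, key):
    """Model MERGE: update rows whose key already exists, insert the rest.

    Returns a new list; neither input is modified. Assumes keys are unique
    within updates (Delta's MERGE errors if several source rows match one target row).
    """
    merged = {row[key]: dict(row) for row in target}
    for row in updates:
        if row[key] in merged:
            merged[row[key]].update(row)   # WHEN MATCHED THEN UPDATE
        else:
            merged[row[key]] = dict(row)   # WHEN NOT MATCHED THEN INSERT
    return list(merged.values())


customers = [
    {"customer_id": 1, "city": "Oslo"},
    {"customer_id": 2, "city": "Lima"},
]
changes = [
    {"customer_id": 2, "city": "Quito"},  # existing customer moved
    {"customer_id": 3, "city": "Pune"},   # brand-new customer
]

result = merge_upsert(customers, changes, "customer_id")
print(sorted((r["customer_id"], r["city"]) for r in result))
```

Overwriting in place like this is a Type 1 slowly changing dimension. A Type 2 dimension would instead close out the old row and insert a new version, which is also commonly built with MERGE.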

Spark performance optimization separates good data engineers from great ones who build systems that actually scale. Understanding execution plans helps you spot inefficient operations like unnecessary shuffles. Partition strategies affect parallelism. Too few partitions and you're not using your cluster capacity, too many and you have scheduling overhead that slows everything down. Shuffle operations are expensive because they move data across the network, so minimize them through better join strategies. Broadcast joins work great for small dimension tables joined to large fact tables because you send the small table to every executor once.
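Here's the idea behind a broadcast join in miniature. In PySpark you'd hint it with `pyspark.sql.functions.broadcast(small_df)`, and open-source Spark also broadcasts automatically when a table is under `spark.sql.autoBroadcastJoinThreshold` (10 MB by default). The sketch below is plain Python with made-up store data. The small table becomes one lookup that every "partition" of the large table probes locally, so the large side never has to be shuffled by join key.

```python
def broadcast_hash_join(fact_partitions, dim_rows, key):
    """Inner-join every partition of a large table against one lookup built
    from a small table: the idea behind a broadcast join."""
    lookup = {row[key]: row for row in dim_rows}  # built once, "shipped" to every worker
    joined = []
    for partition in fact_partitions:  # on a cluster, each partition runs on its own executor
        for fact in partition:
            dim = lookup.get(fact[key])
            if dim is not None:  # inner join: facts without a match are dropped
                joined.append({**fact, **dim})
    return joined


# Made-up data: a large fact table split into two partitions, a tiny store dimension.
fact_partitions = [
    [{"store_id": 1, "amount": 10}, {"store_id": 2, "amount": 4}],
    [{"store_id": 1, "amount": 6}, {"store_id": 9, "amount": 1}],
]
stores = [
    {"store_id": 1, "region": "EU"},
    {"store_id": 2, "region": "US"},
]

rows = broadcast_hash_join(fact_partitions, stores, "store_id")
print(len(rows))  # 3: store 9 has no match, so that fact is dropped
```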

Handling large-scale data processing requires different strategies than working with sample datasets. Processing terabytes means you can't hold everything in memory at once: Spark spills to disk when it can, and badly sized jobs still fail with out-of-memory errors. Implement incremental processing patterns that handle data in chunks. Watch for data skew, where some partitions are way larger than others. That kills performance because one slow task holds up the entire stage. Configure executor memory and cores appropriately for your workload based on actual testing, not just guessing.
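A quick way to spot skew is to compare the biggest partition with the typical one. This sketch takes per-partition row counts (the kind of numbers you'd read from the Spark UI's task metrics) and flags outliers. The 5x threshold is an arbitrary assumption, not a Spark default. Once you've found skew, common fixes include salting the hot key or letting adaptive query execution split skewed join partitions (`spark.sql.adaptive.skewJoin.enabled`).

```python
from statistics import median


def skew_ratio(partition_sizes):
    """Largest partition divided by the median partition.

    Near 1.0 means evenly spread work; a large ratio means one task will run
    far longer than the rest and hold up the whole stage.
    """
    typical = median(partition_sizes)
    if typical == 0:
        raise ValueError("median partition size is zero")
    return max(partition_sizes) / typical


def skewed_partitions(partition_sizes, threshold=5.0):
    """Indexes of partitions bigger than threshold times the median (threshold is arbitrary)."""
    typical = median(partition_sizes)
    return [i for i, size in enumerate(partition_sizes) if size > threshold * typical]


# Hypothetical row counts per partition after a join on a hot key.
sizes = [100, 110, 95, 105, 2000]
print(round(skew_ratio(sizes), 2), skewed_partitions(sizes))  # 19.05 [4]
```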

Managing, optimizing, and monitoring your solutions

The final domain covers operational excellence and keeping things running smoothly in production. Storage optimization starts with appropriate partitioning schemes. Partition by date for time-series data, but don't go overboard with too many partitions. File compaction solves the small file problem that plagues data lakes when you have thousands of tiny files. Delta's OPTIMIZE command rewrites them into fewer, larger files when you run it, whether by hand, from a notebook, or through Fabric's table maintenance feature. Compression in columnar formats like Parquet can shrink storage substantially, with the savings depending on how repetitive the data is. Lifecycle policies move or delete old data based on retention requirements.
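The small-file problem is really a packing problem: group many small files into fewer files close to a target size. This plain-Python sketch uses first-fit-decreasing bin packing as a stand-in to show the idea. It is not Delta's actual OPTIMIZE algorithm, and the 128 MB target is an illustrative assumption rather than Fabric's configured default.

```python
def plan_compaction(file_sizes_mb, target_mb=128):
    """Group files into bins of at most target_mb using first-fit decreasing.

    Each bin stands for one rewritten, larger file. Files already bigger
    than the target end up alone in their own bin.
    """
    bins = []  # each bin is [total_size_mb, [member file sizes]]
    for size in sorted(file_sizes_mb, reverse=True):
        for current in bins:
            if current[0] + size <= target_mb:
                current[0] += size
                current[1].append(size)
                break
        else:  # no existing bin had room, so start a new one
            bins.append([size, [size]])
    return bins


# 40 small files of 8 MB each: 320 MB spread across many tiny files.
plan = plan_compaction([8] * 40)
print(len(plan), [total for total, _ in plan])  # 3 [128, 128, 64]
```

Forty files become three, which is the whole point: fewer files means less metadata to list and fewer tasks to open files at query time.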

Query performance optimization in a Fabric warehouse works differently from SQL Server. You don't create indexes or choose distributions, so the levers are well-designed tables and queries, up-to-date statistics, and the engine's built-in caching. Analyzing query plans shows you where queries spend time. Maybe you need to rewrite the query entirely, maybe your statistics are stale.

Data governance implementation is getting more attention from Microsoft lately. You track data lineage across pipelines and transformations so you know where data comes from and where it goes downstream. Sensitivity labels classify data for compliance with regulations like GDPR or HIPAA. Data quality rules validate that incoming data meets expectations. Integration with Microsoft Purview provides enterprise-wide governance, though honestly, that integration is still maturing and has some gaps.

Security and access control uses workspace roles (Admin, Member, Contributor, Viewer) as the first layer of protection. Row-level security in warehouses and semantic models restricts data access based on user identity. Sales managers only see their region's data, for example. Service principals enable application access without using personal accounts. Private endpoints secure connectivity for sensitive workloads that can't go over the public internet.
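Row-level security boils down to a predicate evaluated against the caller's identity. In a Fabric warehouse you'd implement it in T-SQL with an inline table-valued function and `CREATE SECURITY POLICY`. The Python sketch below only models the filtering logic, and the user-to-region mapping and e-mail addresses are hypothetical.

```python
# Hypothetical security mapping: which regions each identity may see.
USER_REGIONS = {
    "ana@contoso.com": {"West"},
    "raj@contoso.com": {"East", "Central"},
}


def visible_rows(rows, user):
    """Return only the rows whose region the user is allowed to see.

    Unknown users get an empty result (deny by default).
    """
    allowed = USER_REGIONS.get(user, set())
    return [row for row in rows if row["region"] in allowed]


sales = [
    {"region": "West", "amount": 120},
    {"region": "East", "amount": 80},
    {"region": "Central", "amount": 45},
]

print(visible_rows(sales, "raj@contoso.com"))
```

Deny-by-default is the design choice to notice: an identity missing from the mapping sees nothing rather than everything.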

Monitoring and alerting should cover every Fabric item you run in production: lakehouses, warehouses, pipelines, notebooks, the whole stack. Custom metrics and logs help track business-specific KPIs beyond just technical metrics. Alerts notify you when failures occur or performance degrades below thresholds. Fabric's built-in monitoring covers most needs, but you can integrate with Azure Monitor for advanced scenarios, similar to what you'd do with AZ-104 Azure administration.

Reliability and disaster recovery planning ensures business continuity when things go wrong. Fabric provides built-in redundancy, but you still need backup strategies for critical data you can't afford to lose. Understand recovery point and recovery time objectives for different data tiers. Implement retry logic in pipelines for transient failures that resolve themselves. Document recovery procedures so anyone on your team can handle incidents, not just the person who built everything.
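Pipeline activities in Fabric Data Factory have retry and retry-interval settings that retry on a fixed interval. When you need similar behavior inside notebook code, a common pattern is exponential backoff with jitter, sketched below in plain Python. The `TransientError` class, the flaky task, and the delay values are assumptions for illustration. The key design point is that the final failure is re-raised, so monitoring and alerts still fire.

```python
import random
import time


class TransientError(Exception):
    """Stand-in for a recoverable failure such as a timeout or throttling."""


def run_with_retry(task, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Call task(); on TransientError, back off exponentially (with jitter) and retry.

    Delays grow as base_delay * 2**attempt plus up to base_delay of random jitter.
    After the last attempt the error is re-raised so alerts can fire.
    """
    for attempt in range(max_attempts):
        try:
            return task()
        except TransientError:
            if attempt == max_attempts - 1:
                raise
            sleep(base_delay * 2 ** attempt + random.uniform(0, base_delay))
    raise ValueError("max_attempts must be at least 1")


attempts = {"count": 0}


def flaky_extract():
    """Hypothetical source call that times out twice, then succeeds."""
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TransientError("source timed out")
    return "rows loaded"


waits = []  # record the delays instead of actually sleeping
print(run_with_retry(flaky_extract, sleep=waits.append))  # rows loaded
```

Only retry errors that can plausibly clear on their own. A schema mismatch or a bad credential should fail fast instead of burning through retries.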

How the domains interconnect in practice

Here's the thing. Microsoft structures the exam into neat domains, but real-world Fabric implementations don't work in silos like that. You might design a lakehouse architecture (Domain 1), ingest data with pipelines (Domain 2), transform it with Spark notebooks (Domain 3), then optimize and monitor the whole solution (Domain 4) as one integrated project. The exam tests this integrated understanding through scenario questions that span multiple domains.

For example, a question might describe a retail company ingesting point-of-sale data from hundreds of stores. You need to recommend whether to use a lakehouse or warehouse (Domain 1), choose between batch pipelines or streaming ingestion based on latency requirements (Domain 2), decide on incremental processing strategy to avoid reprocessing everything (Domain 3), and plan for monitoring and governance at scale (Domain 4). That's four domains tested in one question.

The weighting tells you where to focus study time effectively. If you're weak on Spark transformations and that's 25-30% of the exam, you're risking a lot of points unnecessarily. Conversely, if you're already strong there from previous experience, maybe spend more time on pipeline orchestration or optimization strategies where you're less comfortable.

Preparing for scenario-based questions

Microsoft loves scenarios where multiple answers could technically work but one is better given the specific requirements. You need to evaluate tradeoffs constantly. A lakehouse offers flexibility for exploratory work but a warehouse provides better BI performance for known queries. Streaming ingestion gives real-time insights but costs more and adds complexity compared to batch. MERGE operations are powerful for upserts but slower than append-only patterns when you don't need updates.
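The cost difference between MERGE and append comes from matching: an append just adds rows, while an upsert has to find every incoming key among existing rows before deciding to update or insert. On a Delta table, that means locating and rewriting the files that contain matched rows. A minimal in-memory sketch of the two semantics, assuming hypothetical rows keyed by `id` (this is not how Delta implements MERGE):

```python
def append_only(target, batch):
    """Append every incoming row; updates and duplicates are not reconciled."""
    return target + batch

def merge_upsert(target, batch, key="id"):
    """Update rows whose key already exists and insert the rest (MERGE-style)."""
    merged = {row[key]: row for row in target}
    for row in batch:
        merged[row[key]] = row   # matched key: replace (update); new key: add (insert)
    return list(merged.values())

target = [{"id": 1, "qty": 5}, {"id": 2, "qty": 7}]
batch = [{"id": 2, "qty": 9}, {"id": 3, "qty": 1}]
print(len(append_only(target, batch)))  # 4: id 2 now appears twice
print(merge_upsert(target, batch))      # [{'id': 1, 'qty': 5}, {'id': 2, 'qty': 9}, {'id': 3, 'qty': 1}]
```

If the source only ever produces new events, the append path is enough and skips the matching work entirely.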

The DP-700 practice exam questions pack at $36.99 helps here because it includes these scenario-based questions with detailed explanations of why each answer is right or wrong. You're not just memorizing facts. You're learning to evaluate options and choose appropriately based on context.

Understanding the "why" behind architectural decisions matters way more than memorizing syntax you can look up. Why would you choose a tumbling window trigger instead of a schedule trigger? Because you need exactly one run per fixed-size time window, with no gaps or overlaps between windows, and the ability to rerun or backfill an individual window. Why use shortcuts instead of copying data everywhere? To avoid storage duplication, reduce costs, and maintain a single source of truth. The exam tests this reasoning constantly.
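What separates a tumbling window from a plain schedule is the windowing itself: fixed-size, contiguous, non-overlapping intervals, each handled by its own run. A minimal sketch of how those windows line up, assuming a hypothetical backfill range and one-hour windows; each pair would be passed to one run as its start and end parameters.

```python
from datetime import datetime, timedelta

def tumbling_windows(start, end, size):
    """Yield contiguous, non-overlapping [window_start, window_end) intervals.

    Each window starts exactly where the previous one ended, so nothing is
    skipped or counted twice. A trailing partial window is not emitted
    because it has not closed yet.
    """
    window_start = start
    while window_start + size <= end:
        yield window_start, window_start + size
        window_start += size

# Hypothetical backfill: 00:00 to 03:30 yields three closed one-hour windows.
windows = list(tumbling_windows(datetime(2025, 1, 1, 0), datetime(2025, 1, 1, 3, 30), timedelta(hours=1)))
for window_start, window_end in windows:
    print(window_start.isoformat(), "->", window_end.isoformat())
```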

What makes DP-700 different from other data certifications

If you've taken DP-203 for Azure Data Engineering, you'll notice overlap in concepts like data lakes, Spark, and pipelines. But DP-700 is Fabric-specific. You're working within the Fabric ecosystem with its unified interface, OneLake storage, and integrated experiences across different workload types.

The certification assumes you understand data engineering fundamentals already. You should already know SQL, basic ETL and ELT concepts, and how distributed systems work at a high level. The exam tests your ability to implement solutions specifically using Microsoft Fabric's tools and services, not generic data engineering theory.

Compared to DP-300 for database administration or PL-300 for Power BI, DP-700 sits in the middle. You're building the data infrastructure that DBAs optimize and analysts consume downstream. You need enough SQL for warehouses, enough Python for notebooks, and enough architectural knowledge to design end-to-end solutions that actually work.

Common gaps in knowledge to address

Most candidates struggle with a handful of areas that come up repeatedly. Delta Lake optimization commands like OPTIMIZE and VACUUM: know when and why to use them, not just that they exist. Pipeline trigger types and their appropriate use cases, beyond "schedule triggers run on a timer." Spark performance tuning, especially a deeper understanding of shuffles and broadcast joins. Security implementation across workspaces, lakehouses, and warehouses, with proper role assignments.
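As a reminder of the "why": OPTIMIZE compacts many small data files into fewer large ones, which cuts per-file overhead on reads, while VACUUM deletes files the table no longer references once they are older than the retention threshold (7 days by default in Delta Lake). The sketch below models only the compaction idea, using first-fit-decreasing bin packing over hypothetical file sizes. Delta's actual planning is more involved, and in Fabric you would run OPTIMIZE through Spark SQL or lakehouse table maintenance, not code like this.

```python
def plan_compaction(file_sizes_mb, target_mb=1024):
    """First-fit-decreasing packing of small files into outputs of at most target_mb.

    Each returned bin stands for one rewritten, larger file. Files already at
    or above the target are left alone, and single-file bins are dropped
    because rewriting one file on its own compacts nothing.
    """
    bins = []  # each entry: [total_size, [sizes...]]
    for size in sorted((s for s in file_sizes_mb if s < target_mb), reverse=True):
        for b in bins:
            if b[0] + size <= target_mb:   # first existing bin with room
                b[0] += size
                b[1].append(size)
                break
        else:
            bins.append([size, [size]])    # no bin had room: start a new one
    return [sizes for _total, sizes in bins if len(sizes) > 1]

files_mb = [300, 200, 400, 100, 50, 2000, 700]  # hypothetical file sizes in MB
print(plan_compaction(files_mb, target_mb=1024))  # [[700, 300], [400, 200, 100, 50]]
```

Six small files become two, and the 2,000 MB file is untouched, which is the intuition behind running OPTIMIZE after many small incremental writes.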

The medallion architecture sounds simple. Bronze, silver, gold, right? But implementing it properly requires understanding incremental processing between layers, data quality checks at each stage, and governance boundaries. You can't just create three folders and call it a medallion architecture without the proper transformations and controls.
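One bronze-to-silver step shows what "proper transformations and controls" means in practice: validate, clean, deduplicate, and keep failed rows somewhere reviewable instead of dropping them. A minimal sketch, assuming hypothetical order records with `order_id` and `amount` fields; in Fabric this logic would usually run in a Spark notebook over Delta tables, and plain Python just keeps the rules visible.

```python
def parse_amount(value):
    """Convert a raw amount to float, or return None if it is unusable."""
    try:
        return float(value)
    except (TypeError, ValueError):
        return None

def bronze_to_silver(bronze_rows):
    """Return (silver_rows, quarantined_rows) for one batch of raw records."""
    silver, quarantine, seen = [], [], set()
    for row in bronze_rows:
        order_id = row.get("order_id")
        amount = parse_amount(row.get("amount"))
        if order_id is None or amount is None or amount < 0:
            quarantine.append(row)        # fails a data quality rule: keep it for review
            continue
        if order_id in seen:
            continue                      # duplicate delivery within this batch
        seen.add(order_id)
        silver.append({"order_id": order_id, "amount": round(amount, 2)})
    return silver, quarantine

bronze = [
    {"order_id": "A1", "amount": "19.99"},
    {"order_id": "A1", "amount": "19.99"},   # duplicate
    {"order_id": "A2", "amount": "oops"},    # unparseable amount
    {"order_id": None, "amount": "5"},       # missing key
    {"order_id": "A3", "amount": 7},
]
silver, quarantine = bronze_to_silver(bronze)
print(silver)           # [{'order_id': 'A1', 'amount': 19.99}, {'order_id': 'A3', 'amount': 7.0}]
print(len(quarantine))  # 2
```

This only deduplicates within one batch; an incremental pipeline would also need to check keys already present in the silver table, typically with a MERGE.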

OneLake shortcuts are powerful but confusing at first because they're essentially symbolic links for data. They create virtual paths to data stored elsewhere without copying it physically. Understand when shortcuts make sense versus copying data, and how they affect performance and governance differently.

Honestly? Hands-on experience matters more for this exam than pure study of documentation. Build a lakehouse, ingest some real data, transform it with Spark, create a warehouse, run some queries and see what performs well. You'll understand the concepts far better than you would from documentation alone, because you'll hit the same issues real implementations face. The skills measured in DP-700 aren't theoretical. They're practical engineering skills you'll use constantly if you work with Microsoft Fabric in any capacity.

DP-700 Prerequisites and Recommended Experience for Success

The Microsoft DP-700 certification is Microsoft's way of saying, "Yep, you can build data engineering solutions in Fabric and not get lost halfway through a pipeline run." It maps to real work: ingestion, orchestration, Spark transformations, lakehouse and warehouse choices, modeling, and the operational stuff people forget until production melts.

Short version. Data engineering, Fabric-first. Scenario-heavy.

A lot of folks assume it's "just Synapse with a new name." Honestly, Fabric feels familiar if you've lived in Azure Data Factory, Synapse, or Databricks, but the exam leans into the integrated platform angle: OneLake, workspace security, and how the lakehouse and warehouse in Microsoft Fabric fit different workloads, even when both can answer "analytics" on paper.

If you're building or maintaining analytical data platforms, you're the target. Data engineers. Analytics engineers who got dragged into pipelines. BI folks who now own the lakehouse. Also, anyone whose job title includes "platform" and whose calendar includes "why is the refresh late."

New to data engineering?

You can still take it. No gatekeeping. But your prep time will be longer, and the DP-700 exam difficulty will feel personal.

People always ask: how much does the DP-700 exam cost? The price is set by Microsoft and varies by region and currency, so you'll see different numbers depending on where you schedule. Some employers get discounts through enterprise agreements, and students sometimes qualify for academic pricing. Check the exam page at booking time, because the displayed price is the only one that matters.

What is the passing score for Microsoft DP-700? Microsoft exams report a scaled score on a 1 to 1,000 scale, and for DP-700 the passing score is 700. That "700" isn't "70%." It's scaled, and differing question weights can make two attempts feel wildly different. Not gonna lie, this is why practice questions help: you need to learn Microsoft's wording, not just Fabric features.

Expect a mix: multiple choice, case studies, drag and drop, and those "pick two" questions that punish guessing. You'll see scenario prompts that read like a mini ticket from a real backlog, and you'll need to choose the best implementation, not the one you used last year. Delivery is usually either online proctored or at a test center, depending on what's available in your area.

How hard is the DP-700 (Microsoft Fabric) exam? The DP-700 exam difficulty comes mostly from breadth plus platform specifics. If you've actually built data pipelines with Data Factory in Fabric, authored Fabric notebooks and Spark data engineering code, and had to secure a workspace properly, you'll be fine. If you've only watched videos and never clicked through the UI, the scenario questions will wreck your confidence, because they assume you've felt the pain of things like incremental loads, permissions, and monitoring.

Plan and implement Fabric solutions (lakehouse/warehouse strategy)

This is where the exam tests judgment. When do you pick a lakehouse vs a warehouse? How do you think about OneLake storage, Delta tables, and serving patterns? And what happens when the business wants "SQL endpoints for everything" but your data is messy and lands in files first?

Facts.

Ingest and orchestrate data (pipelines, scheduling/monitoring)

Ingestion is more than "copy activity." You'll need to understand orchestration patterns, monitoring, retries, and how to design pipelines for reliability. The DP-700 exam objectives push you to think about operational reality: dependencies, incremental loading, and alerting when a pipeline fails at 2 a.m.
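In a Fabric pipeline you express dependencies by connecting activities with conditions such as on success, on failure, or on completion. The scheduling consequence of success-only dependencies can be sketched with Python's standard-library `graphlib` (Python 3.9+), assuming a hypothetical five-activity pipeline: each stage contains the activities whose upstream steps have all finished, so activities in the same stage could run in parallel.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each activity maps to the activities it depends on.
dependencies = {
    "copy_sales": set(),
    "copy_stores": set(),
    "clean_sales": {"copy_sales"},
    "build_gold": {"clean_sales", "copy_stores"},
    "refresh_report": {"build_gold"},
}

sorter = TopologicalSorter(dependencies)
sorter.prepare()                     # also raises CycleError if the dependencies loop
stages = []
while sorter.is_active():
    ready = sorted(sorter.get_ready())   # every activity whose dependencies are done
    stages.append(ready)
    sorter.done(*ready)                  # pretend they all succeeded

for number, stage in enumerate(stages, start=1):
    print(f"stage {number}: {stage}")
```

A real pipeline would stop downstream activities when an upstream one fails, which is exactly the behavior the on-success condition gives you.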

Transform and process data (Spark, notebooks, dataflows)

Spark shows up. A lot. You need the basics of drivers, executors, partitions, and why your join suddenly got expensive. Lazy evaluation matters, transformations vs actions matter, and you should be comfortable reading PySpark DataFrame code even if you don't write it daily. Microsoft can describe a Fabric notebook where performance is bad, and the "right" answer is some combination of partitioning choices, avoiding UDFs, caching, or changing how you write to Delta. You'll only recognize that if you've debugged slow Spark jobs before.
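A common reason a join "suddenly got expensive" is a shuffle: both sides get redistributed across the cluster by join key. When one side is small, a broadcast join avoids that by shipping a copy of the small table to every executor, so each task joins its own partition of the large table locally with a hash lookup. In PySpark you can request this with the `broadcast()` hint from `pyspark.sql.functions`. The single-process sketch below shows only the hash-join step, assuming hypothetical sales partitions and a small stores dimension with unique keys.

```python
# Small dimension table: the side Spark would broadcast to every executor.
stores = [
    {"store_id": 1, "region": "West"},
    {"store_id": 2, "region": "East"},
]

# Large fact table, already split into partitions (one per task).
sales_partitions = [
    [{"store_id": 1, "amount": 10.0}, {"store_id": 2, "amount": 4.0}],
    [{"store_id": 1, "amount": 6.5}, {"store_id": 3, "amount": 2.0}],
]

def broadcast_hash_join(partitions, small_rows, key):
    """Inner-join each partition against a hash map built from the small table.

    No fact rows move between partitions: each task needs only its own rows
    plus the broadcast map, which is why this pattern avoids a shuffle.
    Assumes the small table has unique keys.
    """
    lookup = {row[key]: row for row in small_rows}   # built once, shared by all tasks
    joined = []
    for partition in partitions:
        for row in partition:
            match = lookup.get(row[key])
            if match is not None:                   # inner join: store_id 3 is dropped
                joined.append({**row, **match})
    return joined

result = broadcast_hash_join(sales_partitions, stores, key="store_id")
print(len(result))  # 3
```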

Manage and optimize storage and models (Delta/OneLake, partitioning)

Storage questions tend to be sneaky. They'll ask about table formats, partitioning, and optimization decisions, plus how modeling choices affect downstream analytics. Dimensional modeling basics matter a lot here: facts vs dimensions, star schema, normalization vs denormalization trade-offs, and slowly changing dimensions.
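When a scenario says history must be preserved, it usually means a Type 2 slowly changing dimension: instead of overwriting a changed attribute, you close the current row and insert a new version. A minimal in-memory sketch, assuming hypothetical column names (`valid_from`, `valid_to`, `is_current`); on a Delta table the same logic is commonly written as a MERGE.

```python
from datetime import date

def apply_scd2(dimension, incoming, key, tracked, as_of):
    """Type 2 update: close changed current rows and append new versions.

    Works on copies so the input dimension list is left unchanged.
    valid_to=None marks the open (current) version.
    """
    result = [dict(row) for row in dimension]
    current = {row[key]: row for row in result if row["is_current"]}
    for new in incoming:
        old = current.get(new[key])
        if old is not None and all(old[c] == new[c] for c in tracked):
            continue                          # no tracked attribute changed
        if old is not None:
            old["valid_to"] = as_of           # close the previous version
            old["is_current"] = False
        result.append({**new, "valid_from": as_of, "valid_to": None, "is_current": True})
    return result

dim = [{"customer_id": 7, "city": "Oslo", "valid_from": date(2024, 1, 1), "valid_to": None, "is_current": True}]
changes = [{"customer_id": 7, "city": "Bergen"}, {"customer_id": 8, "city": "Lima"}]
updated = apply_scd2(dim, changes, key="customer_id", tracked=["city"], as_of=date(2025, 3, 1))
for row in updated:
    print(row["customer_id"], row["city"], row["is_current"])
# 7 Oslo False
# 7 Bergen True
# 8 Lima True
```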

Quick thought. Modeling isn't dead.

Secure, govern, and operate solutions (access, lineage, reliability)

Fabric is integrated with Microsoft's identity stack, so expect Microsoft Entra ID (formerly Azure Active Directory) concepts, workspace roles, item permissions, and governance ideas like lineage and auditing. There's also the "operate it" side: monitoring, handling failures, and keeping costs from spiraling, because cloud bills don't care about your feelings.

Here's the clean answer on DP-700 prerequisites: Microsoft doesn't mandate formal prerequisites for taking the DP-700 exam. Anyone can register. No required cert chain. No documented work history. You pay, you schedule, you attempt.

That said, the recommended experience matters. Microsoft basically hints that you should have 1 to 2 years doing data engineering work: building pipelines, transforming data at scale, implementing storage, and supporting analytical workloads. That recommendation isn't fluff, because the exam is stuffed with "what would you do" scenarios, and theory-only study tends to fall apart when the question is really about trade-offs.

Skills you should already have (SQL, ETL/ELT, Spark basics, Azure/Fabric fundamentals)

SQL is non-negotiable. You should be comfortable with intermediate to advanced SQL: joins, subqueries, window functions, and CTEs, plus reasoning about how a query executes when a question hints at performance. Warehouse questions assume you can reason in SQL without sweating.
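A quick self-check for that level: a CTE plus two window functions answering "latest order and lifetime total per customer." SQLite is used only because it ships with Python and supports window functions (SQLite 3.25 or later); the orders table is hypothetical, and the same query pattern carries over to T-SQL in a Fabric warehouse with little or no change.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE orders (customer_id INTEGER, order_date TEXT, amount REAL);
INSERT INTO orders VALUES
  (1, '2025-01-03', 40.0),
  (1, '2025-02-10', 25.0),
  (2, '2025-01-15', 90.0),
  (2, '2025-03-01', 10.0),
  (2, '2025-03-20', 30.0);
""")

query = """
WITH ranked AS (                       -- CTE: name an intermediate result
    SELECT customer_id,
           order_date,
           ROW_NUMBER() OVER (PARTITION BY customer_id
                              ORDER BY order_date DESC) AS rn,
           SUM(amount)  OVER (PARTITION BY customer_id) AS customer_total
    FROM orders
)
SELECT customer_id, order_date AS latest_order, customer_total
FROM ranked
WHERE rn = 1                           -- keep only the most recent order per customer
ORDER BY customer_id;
"""
rows = conn.execute(query).fetchall()
print(rows)  # [(1, '2025-02-10', 65.0), (2, '2025-03-20', 130.0)]
```

Unlike GROUP BY, the window functions keep every row available to the outer query while still computing per-customer values.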

ETL vs ELT matters too. You need to understand extract-transform-load and extract-load-transform patterns, when each makes sense, data integration best practices, and incremental loading strategies like watermark columns or change tracking patterns.
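The watermark pattern in its simplest form: remember the highest modification timestamp you've loaded, and next time pull only rows newer than it. A minimal sketch, assuming hypothetical source rows with a `modified_at` column. Persist the returned watermark only after the load commits; if a run fails, the next run reuses the old value and picks up the same rows again.

```python
from datetime import datetime

def incremental_extract(source_rows, last_watermark):
    """Return rows modified after last_watermark, plus the watermark to store next.

    If nothing is new, the watermark stays where it was.
    """
    new_rows = [r for r in source_rows if r["modified_at"] > last_watermark]
    new_watermark = max((r["modified_at"] for r in new_rows), default=last_watermark)
    return new_rows, new_watermark

source = [
    {"id": 1, "modified_at": datetime(2025, 3, 1, 8, 0)},
    {"id": 2, "modified_at": datetime(2025, 3, 2, 9, 30)},
    {"id": 3, "modified_at": datetime(2025, 3, 3, 7, 15)},
]
rows, watermark = incremental_extract(source, last_watermark=datetime(2025, 3, 1, 23, 59))
print([r["id"] for r in rows], watermark)  # [2, 3] 2025-03-03 07:15:00
```

A strict greater-than comparison can miss rows that arrive late with a timestamp equal to or older than the stored watermark, which is one reason change tracking patterns exist as an alternative.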

One sentence. Incremental loads show up.

Spark fundamentals. Drivers, executors, partitions. Lazy evaluation. Transformations vs actions. Basic optimization ideas like avoiding wide shuffles when possible. You don't have to be a Spark wizard, but you can't be Spark-blind.
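Lazy evaluation is easier to remember once you've seen it happen. This is a toy model, not Spark's API: the hypothetical `LazyFrame` class below records transformations and runs them only when an action is called. It also shows why caching matters. Without it, every action recomputes the whole plan from the source, which is what Spark does too unless you cache or persist a reused DataFrame.

```python
class LazyFrame:
    """Toy model of Spark-style lazy evaluation: transformations only record steps."""

    def __init__(self, data, plan=()):
        self._data = data
        self._plan = plan                   # recorded transformations, not yet executed

    def filter(self, predicate):            # transformation: returns a new LazyFrame
        return LazyFrame(self._data, self._plan + (("filter", predicate),))

    def map(self, func):                    # transformation: returns a new LazyFrame
        return LazyFrame(self._data, self._plan + (("map", func),))

    def collect(self):                      # action: executes the whole plan now
        rows = self._data
        for kind, fn in self._plan:
            rows = [fn(r) for r in rows] if kind == "map" else [r for r in rows if fn(r)]
        return rows

    def count(self):                        # action: also executes the plan
        return len(self.collect())

seen = []  # records which rows the map function actually processed
frame = (LazyFrame([1, 2, 3, 4, 5])
         .filter(lambda x: x % 2 == 1)
         .map(lambda x: seen.append(x) or x * 10))
print(seen)             # [] : defining transformations ran nothing
print(frame.count())    # 3 : the first action executes the plan
print(frame.collect())  # [10, 30, 50] : the second action executes it again
print(seen)             # [1, 3, 5, 1, 3, 5] : work repeated without caching
```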

Programming: Python or Scala. In Fabric, you'll likely touch Python more, but the exam can still reference Scala patterns. Be able to write transformation logic, use pandas for smaller single-machine work, and handle standard collection operations. Notebook work counts.

Cloud familiarity helps: Azure resource concepts, identity via Microsoft Entra ID (formerly Azure AD), and cost management basics. Hands-on exposure to the Microsoft Fabric platform matters even more: workspaces, lakehouses, warehouses, pipelines, notebooks. Honestly, theoretical knowledge alone isn't enough for DP-700, because the prompts read like you've been inside the product.

DevOps basics matter more than people think. Git. CI/CD concepts for data pipelines. Environment separation (dev, test, prod). Not advanced, but real.

Microsoft Learn path (how to use it effectively)

Your baseline DP-700 study materials should start with Microsoft Learn because it aligns to the DP-700 exam objectives. But don't treat it like a novel. Skim, then build. Read a module, implement the feature in Fabric, break it, fix it. That feedback loop is how you make the content stick.

Also, take Microsoft's free skills assessment early. It's the fastest way to spot gaps and decide whether you need to backfill SQL or Spark before you grind Fabric specifics.

Fabric documentation (what to prioritize)

Docs can be a time sink. Prioritize: security and permissions, lakehouse vs warehouse behavior, pipeline orchestration and monitoring, Spark runtime basics, and anything related to Delta tables and OneLake concepts. If you only read one category, read the operational stuff, because the exam likes "what will you do when this fails."

Hands-on labs and projects (lakehouse, pipelines, notebooks, warehouse)

Build a mini end-to-end project: land raw data into a lakehouse, transform with a notebook, publish curated tables, then serve via warehouse SQL. Add a pipeline with scheduling and alerting. Include an incremental load.

Make it messy on purpose.

If you're brand new to Fabric, do labs. If you're missing practice questions, a targeted pack can help you learn the patterns Microsoft uses. I've seen people pair their labs with a small question set like DP-700 Practice Exam Questions Pack when they need reps on exam-style wording, not just product knowledge.

Pick courses that show the UI, not just slides. Look for sections on pipelines, Spark notebooks, and security. Quality varies: some are great, some are fluff, and you can usually tell by whether they make you build something that fails and then debug it.

Official practice assessments vs third-party tests

Microsoft's practice assessment is good for calibration. Third-party DP-700 practice tests can help with volume, but quality varies a lot, so don't memorize answers. Use them to find weak spots, then go back to Fabric and reproduce the scenario.

If you want a simple add-on, DP-700 Practice Exam Questions Pack is the kind of thing people use for extra drills after they've done hands-on work, not as a substitute for it. That distinction matters.

What to look for in high-quality practice questions