CCA175 Practice Exam - CCA Spark and Hadoop Developer Exam


Exam Code: CCA175

Exam Name: CCA Spark and Hadoop Developer Exam

Certification Provider: Cloudera

Certification Exam Name: Cloudera Certified Associate CCA


CCA175: CCA Spark and Hadoop Developer Exam Study Material and Test Engine

Last Update Check: Mar 20, 2026

Latest 96 Questions & Answers



Cloudera CCA175 Exam FAQs

Introduction of Cloudera CCA175 Exam!

Cloudera CCA175 is Cloudera's hands-on developer certification exam for Spark and Hadoop. It tests a candidate's ability to ingest, transform, store, and analyze data on a Cloudera cluster. The work centers on HDFS, Spark (DataFrames and Spark SQL), and Hive tables. Earlier versions of the exam objectives also covered data ingestion with Sqoop and Flume.

What is the Duration of Cloudera CCA175 Exam?

The duration of the Cloudera CCA175 exam is 2 hours.

What are the Number of Questions Asked in Cloudera CCA175 Exam?

The Cloudera CCA175 exam consists of 8 to 12 performance-based (hands-on) tasks.

What is the Passing Score for Cloudera CCA175 Exam?

The passing score required in the Cloudera CCA175 exam is 70%.

What is the Competency Level required for Cloudera CCA175 Exam?

The Cloudera CCA175 exam is aimed at developers who already write Spark and Hive code, not at complete beginners. It tests whether you can load data from HDFS, transform and store it with Spark, and analyze it with Spark SQL and Hive. It also tests whether you can adjust application configuration from the command line, for example by requesting more memory. It does not test cluster setup or administration; those skills belong to Cloudera's separate administrator exam. Expect to need intermediate, hands-on proficiency to pass.

What is the Question Format of Cloudera CCA175 Exam?

The Cloudera CCA175 exam consists of 8 to 12 performance-based, hands-on tasks that you complete on a live Cloudera Enterprise cluster. Each task describes a scenario and the exact output required. Some tasks are solved with Spark code in Python or Scala, and others with tools such as Hive. There are no multiple-choice or drag-and-drop questions. At most, a Spark task may come with a code template whose missing lines you fill in.

How Can You Take Cloudera CCA175 Exam?

The Cloudera CCA175 exam is taken online with remote proctoring. You register, purchase, and schedule it through Cloudera's certification website, then sit it from your own computer while a proctor monitors the session through your webcam and screen sharing.

What Language Cloudera CCA175 Exam is Offered?

The Cloudera CCA175 Exam is offered in English.

What is the Cost of Cloudera CCA175 Exam?

The Cloudera CCA175 exam is offered for a fee of $295 USD.

What is the Target Audience of Cloudera CCA175 Exam?

The target audience of the Cloudera CCA175 exam is individuals who are interested in becoming certified in Apache Hadoop and Spark. This includes developers, architects, data engineers, analysts, and administrators who want to prove their skills and knowledge in the area of big data.

What is the Average Salary of Cloudera CCA175 Certified in the Market?

Salary figures for Cloudera CCA175 certified professionals vary widely with experience, location, and role. Approximately $100,000 per year is a commonly quoted average, but treat any single number as a rough estimate.

Who are the Testing Providers of Cloudera CCA175 Exam?

Cloudera provides the official CCA175 (CCA Spark and Hadoop Developer) exam. It is delivered online with remote proctoring; check Cloudera's certification site for the current proctoring arrangements.

What is the Recommended Experience for Cloudera CCA175 Exam?

The recommended experience for the Cloudera CCA175 exam includes:
- a solid understanding of the core Hadoop ecosystem components;
- a working knowledge of Spark and HDFS, plus MapReduce concepts;
- comfort with basic Linux commands.

Knowledge of Hive, Impala, HBase, and Pig is also helpful. Programming fluency in Python or Scala, the languages used for Spark tasks, is highly recommended, along with basic shell scripting.

What are the Prerequisites of Cloudera CCA175 Exam?

The Cloudera CCA175 exam assumes a basic understanding of the following: Hadoop Distributed File System (HDFS), Apache Hadoop YARN, Apache Hive, Impala, Apache Spark, Apache Flume, Apache Sqoop, Apache HBase, Apache Oozie, and Apache Kafka. Familiarity with Hue, Cloudera Manager, and basic Linux commands is also beneficial.

What is the Expected Retirement Date of Cloudera CCA175 Exam?

Check Cloudera's official exam page for the current status of the CCA175 exam and any retirement announcements: https://www.cloudera.com/products/certification/cca-175-exam.html.

What is the Difficulty Level of Cloudera CCA175 Exam?

The difficulty level of the Cloudera CCA175 exam is medium to advanced. It is designed to test the candidate's knowledge and skills in Apache Hadoop and related technologies.

What is the Roadmap / Track of Cloudera CCA175 Exam?

CCA175 is a single, standalone certification exam rather than a multi-exam track. A typical preparation path combines training on Hadoop, Apache Spark, and related big data technologies with hands-on labs and practice exams before you sit the certification exam itself.

What are the Topics Cloudera CCA175 Exam Covers?

Cloudera CCA175 exam covers the following topics:

1. Apache Hadoop: Apache Hadoop is an open source framework for distributed storage and processing of large data sets. It is used to store and process large amounts of data across a cluster of computers.

2. Apache Hive: Apache Hive is a data warehouse system built on top of Hadoop. It provides a SQL-like interface for querying data stored in Hadoop.

3. Apache Pig: Apache Pig is a high-level platform for processing large data sets in Hadoop. Its scripts are written in a language called Pig Latin.

4. Apache Spark: Apache Spark is an open source cluster computing framework for large-scale data processing. It can keep data in memory between processing steps, which often makes it much faster than traditional MapReduce, especially for iterative workloads.

5. Apache Flume: Apache Flume is a distributed, reliable, and available service for efficiently collecting, aggregating, and moving large amounts of log data.

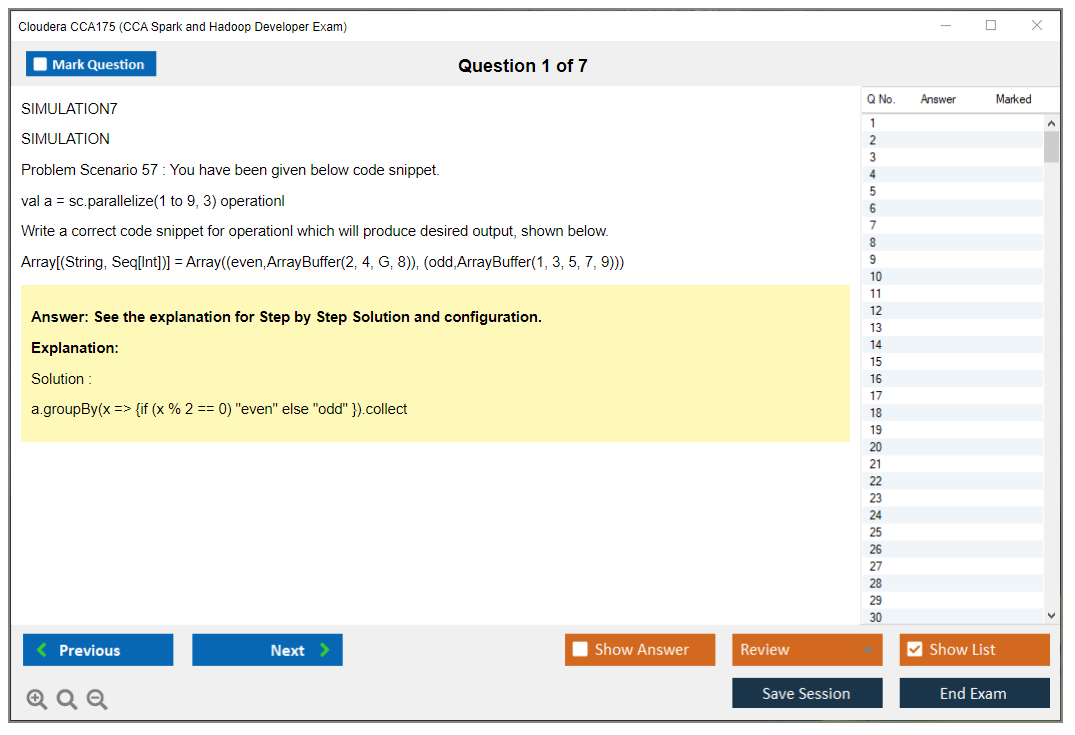

What are the Sample Questions of Cloudera CCA175 Exam?

1. What is the purpose of the YARN Resource Manager?

2. What is the function of the HDFS NameNode?

3. How can you monitor the progress of a MapReduce job?

4. What is the purpose of the Hive metastore?

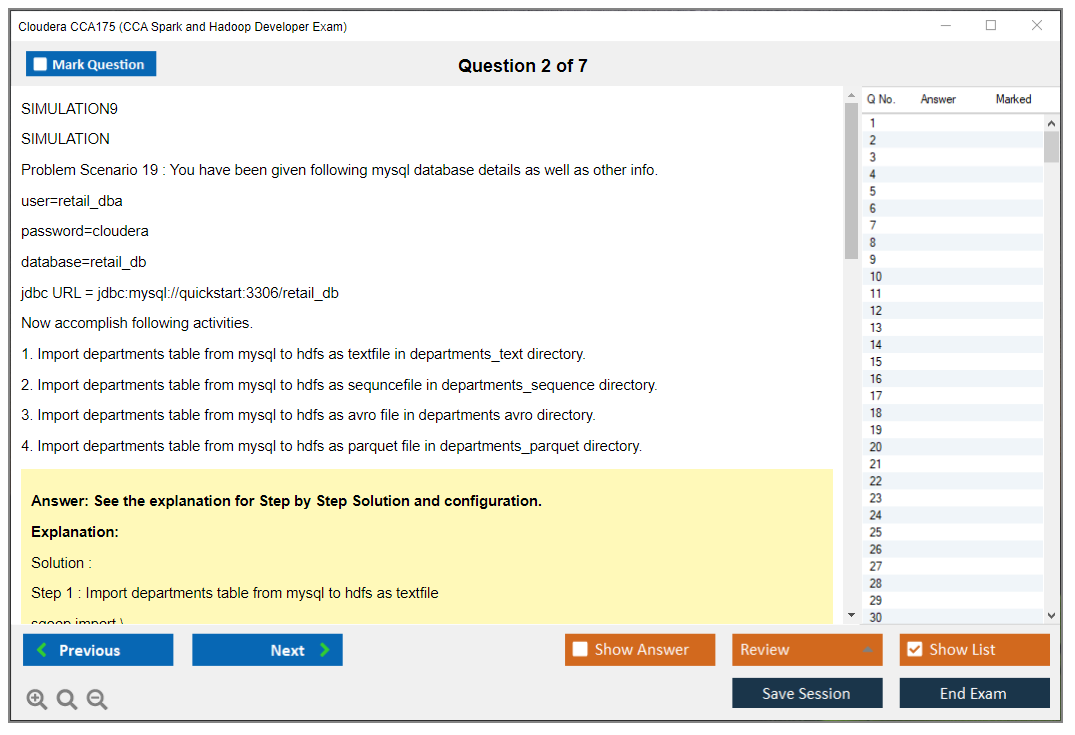

5. How does the Sqoop import command work?

6. What is the purpose of the Impala daemon?

7. How would you configure a secure HBase cluster?

8. How can you optimize the performance of a Spark job?

9. What is the purpose of the Oozie workflow engine?

10. What is the difference between a Hive table and an Impala table?


Cloudera CCA175 (CCA Spark and Hadoop Developer Exam)

Cloudera CCA175 (CCA Spark and Hadoop Developer Exam) Overview

What is the CCA175 certification and why it matters in 2026

The Cloudera CCA175 certification is different. Completely different from what you'd expect. This isn't some multiple-choice thing where you cram facts the night before and cross your fingers. This exam throws you into an actual live Cloudera cluster and basically says "here, solve these real problems right now." You deal with genuine Spark and Hadoop environments, transforming data, writing queries, producing output that has to match exact specifications. We're talking precision here, not close enough.

In 2026, this cert still matters. Cloud-native platforms are everywhere, sure, but here's the thing: tons of enterprises still run Cloudera environments. That's especially true in the finance, healthcare, and manufacturing sectors, where data stays on-premises or lives in hybrid setups. Employers hunting for data engineers and big data developers recognize CCA175 because it proves you can actually do the work, not just discuss it at a whiteboard. You've transformed messy datasets. Handled various file formats. Optimized Spark jobs when they're crawling along. Delivered clean output under time pressure.

The certification validates proficiency in both PySpark and Spark SQL, which together are basically your daily toolkit. Unlike vendor-neutral certs that touch everything superficially, CCA175 digs deep into the Cloudera ecosystem. This distinguishes you in competitive job markets. Passing it means you've solved practical data transformation problems in production-style settings where mistakes cost money. That's what hiring managers actually want to know anyway.

I remember the first time I saw someone struggle through a Spark job that kept running out of memory. They knew the theory cold but couldn't figure out the partitioning strategy to save their life. That's the gap this exam actually tests.

What the CCA175 certification validates

Real talk here. The exam tests your ability to ingest data from various sources into HDFS and Hive tables. Sounds simple, right? Wrong. You handle different formats, deal with delimiters, manage schemas, know when to use which ingestion method. It's nuanced.
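Handling delimiters and schemas comes down to two things. Parse each line with the right separator, then cast every field to its declared type instead of leaving everything as strings. Below is a minimal sketch in plain Python (standard library only, not the Spark API). It assumes a hypothetical pipe-delimited orders file with a header row; in Spark, the equivalent knobs are the CSV reader's `sep` and `header` options plus an explicit schema.

```python
import csv
import io

# Hypothetical schema for illustration: column name -> casting function.
SCHEMA = {"order_id": int, "customer": str, "amount": float}

def parse_delimited(text, sep="|", schema=SCHEMA):
    """Parse delimited text with a header row and cast each field to its declared type."""
    reader = csv.DictReader(io.StringIO(text), delimiter=sep)
    rows = []
    for record in reader:
        # Fail loudly if the header (or an over-long row) doesn't match the expected schema.
        if set(record) != set(schema):
            raise ValueError(f"unexpected columns: {sorted(map(str, record))}")
        rows.append({name: cast(record[name]) for name, cast in schema.items()})
    return rows

sample = "order_id|customer|amount\n1|alice|19.99\n2|bob|5.00\n"
print(parse_delimited(sample))
```

The point is the explicit schema. In Spark, relying on inferred types is a common way to end up with a column that is a string when the task expected an integer.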

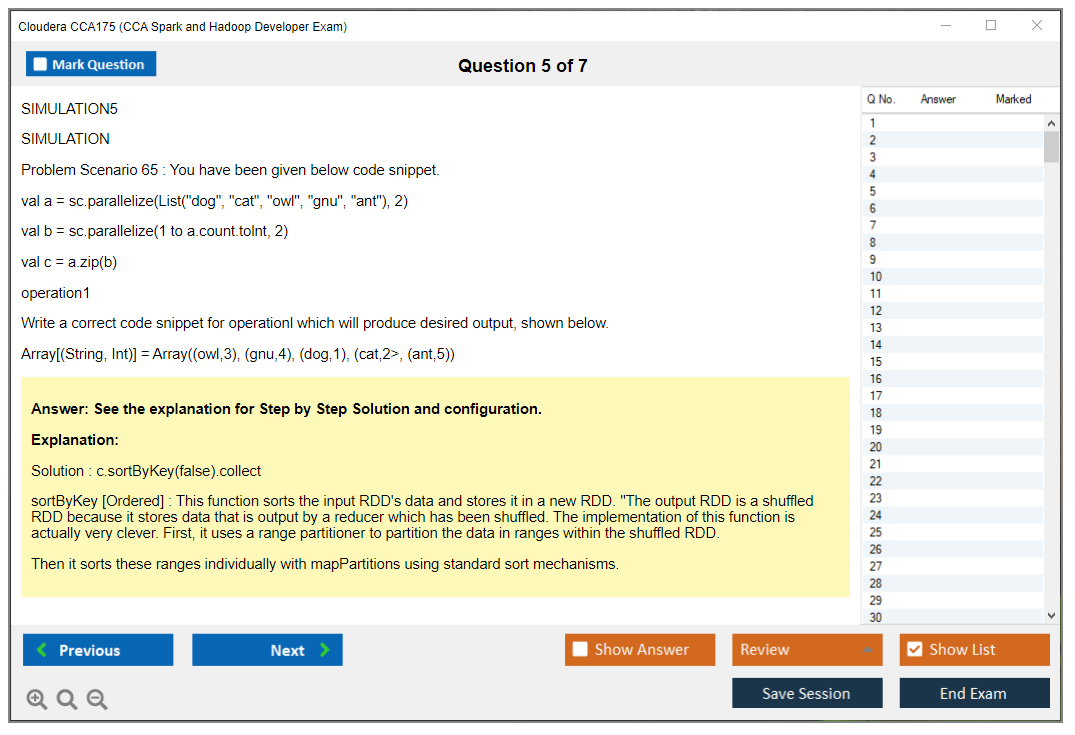

Then there's proficiency transforming and cleaning data using Spark DataFrames and RDDs, though honestly the exam leans heavily toward DataFrames now since that's the modern approach everyone adopted. You demonstrate expertise in Spark SQL for querying and analyzing structured data. Complex joins, aggregations, window functions that show up constantly in real ETL pipelines. Not gonna lie, window functions trip people up because they require thinking about partitioning and ordering simultaneously, which is like patting your head while rubbing your stomach.
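To see why window functions force you to think about partitioning and ordering at once, here is a plain-Python sketch (not Spark code) of what `ROW_NUMBER() OVER (PARTITION BY dept ORDER BY salary DESC)` computes. Rows are grouped by the partition key, sorted within each group by the window's ordering, and numbered from 1 within each group. The column names are hypothetical.

```python
from itertools import groupby
from operator import itemgetter

def row_number_over(rows, partition_key, order_key, descending=False):
    """Emulate ROW_NUMBER() OVER (PARTITION BY partition_key ORDER BY order_key)."""
    result = []
    # groupby only merges adjacent items, so sort by the partition key first.
    by_partition = sorted(rows, key=itemgetter(partition_key))
    for _, group in groupby(by_partition, key=itemgetter(partition_key)):
        # Within each partition, apply the window's ORDER BY, then number rows from 1.
        ordered = sorted(group, key=itemgetter(order_key), reverse=descending)
        for n, row in enumerate(ordered, start=1):
            result.append({**row, "row_number": n})
    return result

employees = [
    {"dept": "eng", "name": "ana", "salary": 120},
    {"dept": "eng", "name": "raj", "salary": 135},
    {"dept": "ops", "name": "lee", "salary": 90},
    {"dept": "ops", "name": "kim", "salary": 95},
]
# "Top earner per department" = keep only row_number == 1.
top = [r for r in row_number_over(employees, "dept", "salary", descending=True) if r["row_number"] == 1]
print(top)
```

In PySpark, the same idea is expressed with `Window.partitionBy("dept").orderBy(F.col("salary").desc())` and `F.row_number().over(...)`. Forgetting the partition, or the sort direction, silently gives a different answer.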

You also need competence writing output data in multiple formats like Parquet, JSON, Avro, plain text files. Each format has quirks. Compression, schema handling, performance implications that matter.
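One example of a format quirk: Spark's JSON writer produces JSON Lines (one object per line in each part file), not a single JSON array. Its CSV writer omits the header unless you set the `header` option. The sketch below uses plain Python, not Spark, to show those output shapes plus gzip compression. The records and column names are hypothetical.

```python
import csv
import gzip
import io
import json

rows = [{"id": 1, "city": "Austin"}, {"id": 2, "city": "Boston"}]

def to_json_lines(records):
    """One compact JSON object per line (JSON Lines), the layout Spark's JSON writer uses."""
    return "".join(json.dumps(r, separators=(",", ":")) + "\n" for r in records)

def to_delimited(records, columns, sep="\t"):
    """Header-less delimited text; the separator and column order must match the task exactly."""
    buf = io.StringIO()
    writer = csv.writer(buf, delimiter=sep, lineterminator="\n")
    for r in records:
        writer.writerow([r[c] for c in columns])
    return buf.getvalue()

def gzip_text(text):
    """Gzip-compress text, as a 'gzip compression' output requirement would demand."""
    return gzip.compress(text.encode("utf-8"))

print(to_json_lines(rows), end="")
print(to_delimited(rows, ["id", "city"]), end="")
```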

The capability to optimize Spark jobs? Critical. Understanding data partitioning and bucketing strategies can make the difference between a job finishing in minutes versus one that times out and ruins your day. You're expected to show knowledge of working with both batch and iterative data processing workflows, covering most use cases you'll encounter professionally.
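Bucketing assigns each row to one of N buckets by hashing the bucket column and taking the result modulo N, so equal keys always land in the same bucket. Here is a plain-Python sketch of that idea. It uses `zlib.crc32` as a stable stand-in hash; Spark uses its own Murmur3-based hash, so real bucket numbers will differ. The order data is hypothetical.

```python
import zlib
from collections import defaultdict

def bucket_for(key, num_buckets):
    """Stable hash of the key modulo the bucket count (crc32 is a stand-in, not Spark's hash)."""
    return zlib.crc32(str(key).encode("utf-8")) % num_buckets

def bucketize(rows, key, num_buckets):
    """Group rows into buckets so that all rows sharing a key end up together."""
    buckets = defaultdict(list)
    for row in rows:
        buckets[bucket_for(row[key], num_buckets)].append(row)
    return dict(buckets)

orders = [{"customer_id": c, "amount": a} for c, a in [(7, 10), (3, 5), (7, 8), (11, 2), (3, 1)]]
buckets = bucketize(orders, "customer_id", num_buckets=4)
for b, rs in sorted(buckets.items()):
    print(b, [r["customer_id"] for r in rs])
```

Why it matters: if two tables are bucketed the same way on their join key, matching rows are already co-located, and Spark can often skip a full shuffle. In PySpark this is written with `df.write.bucketBy(4, "customer_id").saveAsTable(...)`.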

Who should take the CCA175 exam

Data engineers working with big data processing frameworks are obvious candidates. If you're building pipelines moving and transforming data at scale, this cert validates what you already do daily. Hadoop developers transitioning to Spark-based development benefit because it forces you to learn Spark properly rather than just hacking together RDD code that works but isn't maintainable or scalable.

ETL developers building data pipelines on Cloudera platforms should consider it. Big data analysts requiring certification for career advancement too. Sometimes your skills are solid but you need that credential to get past HR filters and automated screening systems. Software engineers specializing in distributed data processing find it useful. Career changers entering the big data engineering field use it to prove they're serious. Consultants needing credible proof of Cloudera Spark skills basically need this to bill at higher rates and land premium contracts.

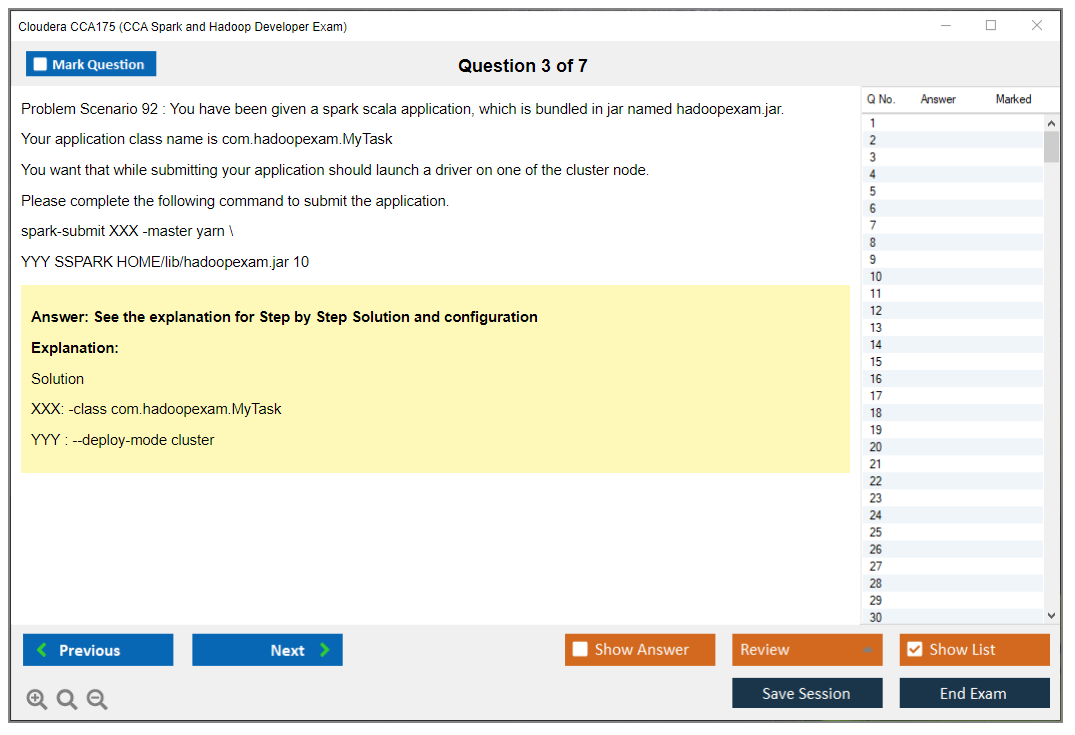

CCA175 exam format and structure

Here's where it gets interesting. The performance-based exam requires hands-on problem solving. You get access to a live Cloudera cluster during the examination itself. You'll face 8-12 practical scenarios to complete within a 120-minute duration, which means time management isn't optional. It's survival. Each scenario presents a problem: maybe ingesting CSV files, joining datasets, aggregating results, writing output in a specific format with particular compression settings.
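The arithmetic behind "time management isn't optional": 120 minutes across 8 to 12 scenarios gives an even share of 10 to 15 minutes per task. The share is smaller once you hold back time to validate output. A small sketch follows; the 15-minute review reserve is an assumption for illustration, not an exam rule.

```python
def minutes_per_task(total_minutes, num_tasks, reserve_minutes=0):
    """Even time share per task after setting aside a final review reserve."""
    if num_tasks <= 0:
        raise ValueError("num_tasks must be positive")
    return (total_minutes - reserve_minutes) / num_tasks

for tasks in (8, 12):
    print(tasks, "tasks:", minutes_per_task(120, tasks), "min each,",
          minutes_per_task(120, tasks, reserve_minutes=15), "min with a 15-minute reserve")
```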

It's a remotely proctored exam taken from your own computer, with a webcam and screen sharing monitoring everything. There are no multiple-choice questions; everything is practical implementation where you're actually writing code that executes. Your solutions get evaluated based on correctness of output data. If your results don't match expected values exactly, you don't get credit. Period.

The good news? You can use documentation and command-line tools during the exam. The bad news is you need to know where to look and work fast because time evaporates when you're troubleshooting.

Key differences from traditional certification exams

The focus on doing rather than knowing theoretical concepts changes everything about how you prepare. You're in a real cluster environment instead of simulated scenarios where mistakes don't have consequences. Partial credit isn't awarded. Solutions must meet exact requirements for file format, column names, data types, and values down to the last decimal place.
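One way output misses "down to the last decimal place" is rounding mode. Spark SQL documents `round()` as rounding half up and `bround()` as half even (banker's rounding). Python's built-in `round()` also breaks exact ties to even, so a value can come out differently depending on which function produced it or which one you use to check your work. The sketch below reproduces both modes in plain Python with `decimal`. It is an illustration of the modes, not Spark's exact implementation, which can behave differently on DOUBLE columns than on DECIMAL ones.

```python
from decimal import Decimal, ROUND_HALF_EVEN, ROUND_HALF_UP

def _quantum(places):
    # places=2 -> Decimal('0.01'), places=0 -> Decimal('1')
    return Decimal(1).scaleb(-places)

def round_half_up(value, places):
    """Half-up rounding: the mode Spark SQL documents for round()."""
    # str() makes 0.125 behave like the decimal literal it looks like.
    return Decimal(str(value)).quantize(_quantum(places), rounding=ROUND_HALF_UP)

def round_half_even(value, places):
    """Half-even (banker's) rounding: the mode of Spark SQL's bround()."""
    return Decimal(str(value)).quantize(_quantum(places), rounding=ROUND_HALF_EVEN)

for value, places in [(2.5, 0), (0.125, 2)]:
    print(value, round_half_up(value, places), round_half_even(value, places), round(value, places))
```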

The emphasis on data accuracy and format compliance means attention to detail matters as much as Spark knowledge itself. Time pressure combined with complexity of realistic tasks creates stress revealing whether you truly know the material or just studied it. It requires muscle memory and command fluency, not just comprehension of concepts. You might understand joins perfectly but if you can't write the syntax quickly without looking up basic stuff, you'll run out of time and fail scenarios you actually understand.

The exam tests troubleshooting skills under examination conditions. It validates production-ready coding practices and efficiency that employers actually care about.

Evolution of CCA175 in 2026

The updated content reflects current Spark 3.x features while maintaining the core assessment approach that's always made this cert valuable. Despite cloud-native data platform growth everywhere, the cert has continued relevance because Cloudera installations aren't disappearing overnight. Enterprises don't just migrate legacy systems on a whim.

Integration of newer Spark SQL functions and capabilities keeps it current. The emphasis on the DataFrame API over legacy RDD approaches fits how people actually work in modern environments. The certification maintains industry value for Cloudera-based enterprise environments and is recognized across the hybrid cloud and on-premises deployments that dominate real-world infrastructure.

If you're targeting roles at organizations running Cloudera platforms, this cert opens doors that other credentials won't. Doors that lead to roles with actual technical depth and competitive compensation.

CCA175 Exam Cost and Registration Details


What the CCA175 certification validates

The CCA175 exam is a performance-based Spark developer certification that Cloudera's built its reputation on. That "performance-based" aspect? It's everything. You're not clicking through multiple-choice garbage. You're actually executing PySpark and Spark SQL tasks inside a live environment and getting judged on whether your outputs match the grader's expectations.

Hands-on work. Timed execution. Real scenarios.

Who should take the CCA175 exam

Here's my take: if your current role already involves Spark DataFrame transformations and actions, wrestling with messy data files, building ETL pipelines, and troubleshooting jobs that fail in ridiculous ways, you're probably ready. Now, if you're completely new to Spark, sure, you can still pass this thing. But honestly you'll burn most of your prep time just learning basic mechanics rather than practicing exam-style execution speed, and that's typically where candidates waste money on retakes they didn't need.

I've watched people attempt this after two weeks of online tutorials. It goes about as well as you'd expect.

CCA175 exam cost and voucher details

CCA175 exam cost (what to expect)

The standard CCA175 exam cost runs $295 USD, with that usual "subject to regional variations" disclaimer depending on your purchase location and testing geography. That price gets you one exam attempt with remote proctoring included, which is actually convenient since you're not dealing with extra fees to locate some testing center or arrange travel. But it also means your home setup absolutely has to cooperate on exam day. No excuses.

One thing candidates overthink constantly: there aren't any additional costs for accessing the exam cluster environment. Cloudera supplies the environment as part of your attempt. You're not provisioning your own cluster or racking up cloud bills while that timer's running. Purchase the voucher. Schedule your slot. Log in. Execute.

Voucher purchases happen through the Cloudera website directly. Sometimes corporate training bundles include exam vouchers, and I mean that can be a surprisingly solid deal if your employer's covering costs anyway, since you're getting training plus the attempt without battling procurement teams twice. Discounts exist but aren't reliable. You'll occasionally spot promotional pricing surrounding Cloudera events, and educational discounts might be available for students, though you should still budget assuming you're paying full price since those offers appear and vanish unpredictably.

Cost comparison context. Within the big data certification space, $295 lands squarely in the middle range. It's cheaper than some proctored specialty exams pushing $400 or higher, and it's pricier than entry-level badges. The value proposition is that this is a performance-based Spark exam where you're demonstrating you can actually deliver working code, not just regurgitate memorized definitions.

Reschedule/retake policy considerations

Schedule when you're legitimately ready. Not gonna sugarcoat it: most "I barely failed" stories are actually "I scheduled way too early" stories wearing a disguise.

Also, keep that voucher clock top of mind. You don't want to purchase, procrastinate endlessly, then suddenly realize you're racing an expiration deadline.

Registration process and scheduling

First move is establishing an account on the Cloudera certification portal. From there, you purchase an exam voucher before scheduling your actual attempt. The workflow's pretty straightforward. Voucher acquisition first, then you select a date and time falling within the voucher validity window, then you confirm everything.

Schedule at minimum 48 hours ahead of your preferred exam time. That matters substantially because it connects directly to rescheduling rules and because remote proctoring slots can get booked solid, particularly around weekends or those end-of-quarter training stampedes. Once you schedule, you'll receive a confirmation email containing exam instructions. You should really read that thing because the technical requirements verification is where candidates often faceplant spectacularly.

Time zones get messy for global candidates. The portal displays times in a specific zone, and if you're traveling or your laptop clock's drifting, you can manufacture your own catastrophe. Multiple available time slots is the advantage, though. You can select something matching your peak mental performance. Early morning if that's when you're sharpest. Late evening if that's when your household's actually quiet.

Reschedule and cancellation policies

Cloudera's typical rule structure is straightforward: free rescheduling if completed 48+ hours before your scheduled exam time. Inside that window, cancellation fees can kick in for last-minute modifications. A no-show without cancellation typically forfeits your entire exam fee. One-time reschedule is generally permitted per voucher, which seems fair enough, but it means you can't keep postponing indefinitely.
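Because the portal may show slot times in a different zone from your laptop, it's safer to check the 48-hour free-reschedule window with timezone-aware datetimes. Below is a minimal sketch with hypothetical dates and fixed UTC offsets. The 48-hour threshold mirrors the "typical" rule above, so verify the current policy before relying on it.

```python
from datetime import datetime, timedelta, timezone

FREE_RESCHEDULE_WINDOW = timedelta(hours=48)  # typical rule described above; verify current policy

def can_reschedule_free(now, exam_start):
    """True if the exam start is at least 48 hours away; both datetimes must be timezone-aware."""
    if now.tzinfo is None or exam_start.tzinfo is None:
        raise ValueError("use timezone-aware datetimes to avoid time zone mistakes")
    return exam_start - now >= FREE_RESCHEDULE_WINDOW

# Hypothetical: slot shown in UTC, candidate's clock at UTC+05:30.
exam_start = datetime(2026, 4, 10, 14, 0, tzinfo=timezone.utc)
local_tz = timezone(timedelta(hours=5, minutes=30))
now = datetime(2026, 4, 8, 18, 0, tzinfo=local_tz)  # the same instant as 12:30 UTC on April 8
print(exam_start.astimezone(local_tz))  # the slot expressed in local time
print(can_reschedule_free(now, exam_start))
```

Comparing wall-clock times naively here (18:00 local vs. 14:00 on the slot) suggests only 44 hours remain. The aware comparison shows 49.5 hours, so the free window is still open.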

Emergencies get handled individually. Life happens, obviously. But you should operate under the assumption you'll need documentation. Assume the default response is "policy says no." Don't construct your entire strategy around exception handling.

Voucher expiration periods typically run 12 months from purchase, and policy updates do occur periodically, so verify the official Cloudera site before purchasing or rescheduling. Best practice is boring but brutally effective: schedule when you're really prepared, not when you're feeling guilty about procrastinating.

Retake policy and additional attempts

If you fail, you'll generally wait 15 days before retaking. That's the standard cooling-off period. Each retake demands purchasing a fresh exam voucher at full price. There's no cap on total attempts allowed, which sounds reassuring until you calculate the cost implications of multiple attempts literally being $295 per try.

Each attempt represents an independent evaluation. Previous exam performance details aren't carried forward as some form of partial credit, so you can't "bank" the ingestion tasks and only redo joins next time. Use the waiting period strategically. Patch your knowledge gaps. Redo your weakest objective areas. Practice the way the exam works: read requirements quickly, execute efficiently, and validate outputs under genuine time pressure.

Voucher validity and expiration

Standard voucher validity is 12 months from the purchase date, and you must schedule and complete the exam within that timeframe. Expired vouchers typically can't be refunded or extended. That's harsh, admittedly, but it's consistent with how exam vendors generally operate.

Corporate vouchers might have different expiration terms altogether. Ask whoever manages training budgets in your organization, because I've witnessed companies purchase batches and establish internal deadlines that are substantially shorter than the public policy.

Purchase timing strategy here: don't buy the voucher as motivation. Buy it when your study plan is already well underway and you've shown you can meet the CCA175 exam objectives under timed conditions.

CCA175 passing score and results

Is there a published passing score?

Candidates ask "What's the CCA175 passing score?" constantly. Cloudera's exam page has listed 70%, but it doesn't publish how individual tasks are weighted. What matters in practice is completing enough tasks fully and correctly to clear that threshold.

How CCA175 is graded (performance-based scoring)

This gets graded by outputs exclusively. You execute Spark jobs and generate results. The system validates them. That's precisely why sloppy naming conventions, incorrect formats, or missing files can destroy you even if your underlying logic was close enough.
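Because missing or misnamed files can sink a task, a quick self-check of each output directory before moving on is cheap insurance. By default, Spark writes one `part-*` file per partition, plus an empty `_SUCCESS` marker when the job commits through the default Hadoop output committer. This sketch inspects a local directory; on the cluster you would look at the HDFS path with `hdfs dfs -ls` instead. The paths, file names, and expected suffix are hypothetical.

```python
import tempfile
from pathlib import Path

def check_output_dir(path, expected_suffix):
    """Return a list of problems found in a Spark-style output directory (empty list = looks OK)."""
    out = Path(path)
    if not out.is_dir():
        return [f"{out} does not exist or is not a directory"]
    problems = []
    if not (out / "_SUCCESS").exists():
        problems.append("no _SUCCESS marker: the write may not have completed")
    parts = sorted(p.name for p in out.glob("part-*"))
    if not parts:
        problems.append("no part-* files found")
    wrong = [name for name in parts if not name.endswith(expected_suffix)]
    if wrong:
        problems.append(f"unexpected file types: {wrong}")
    return problems

# Demo on a throwaway directory that mimics a Snappy-compressed Parquet write.
with tempfile.TemporaryDirectory() as d:
    (Path(d) / "_SUCCESS").touch()
    (Path(d) / "part-00000-example.snappy.parquet").touch()
    print(check_output_dir(d, ".snappy.parquet"))
```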

When and how you receive your results

Results typically get delivered after the exam through the portal and email, depending on current processing workflows. Expect some delay occasionally, because remote proctoring and automated grading pipelines aren't instantaneous in every scenario.

CCA175 FAQ

Cost, passing score, difficulty: quick answers

How much does the CCA175 exam cost? Standard's $295 USD, with regional variations possible. How hard is the CCA Spark and Hadoop Developer exam? Brutal if you're slow at hands-on Spark execution. Totally manageable if you practice like it's an actual work shift. Does the CCA175 certification expire or require renewal? Policies shift over time, so verify on Cloudera's site directly, but plan for recertification rules to exist eventually as platforms evolve.

CCA175 Passing Score and Results

Is there a published passing score?

Look, the number itself isn't the mystery: Cloudera's exam page has listed the CCA175 passing score as 70%. What Cloudera doesn't publish is how individual tasks are weighted. The scoring algorithm might weight different tasks differently based on complexity. A simple data ingestion task probably doesn't carry the same points as a complex join with window functions. But Cloudera keeps that methodology under wraps.

Honestly, focusing on some minimum score is the wrong approach anyway. You should aim to complete all tasks correctly because you won't know during the exam which ones are worth more points. Some candidates report feeling confident about certain tasks and still failing, which suggests the weighting isn't uniform across all problems. Different exam versions might have different difficulty curves too, though the passing standard remains consistent across versions according to Cloudera's official policy.

Not gonna lie, the lack of transparency frustrates people. But it also means you can't game the system by identifying "easy points" and ignoring harder tasks. The CCA175 exam forces you to develop well-rounded Spark and Hadoop skills rather than cherry-picking topics. I once heard about someone who skipped all the RDD questions thinking they'd pass on SQL alone. Yeah, that didn't work out.

How CCA175 is graded (performance-based scoring)

The CCA175 uses performance-based scoring that evaluates the correctness of your output data, not your code or approach. Each task gets assigned a specific point value based on its complexity and the skills it tests. Your solutions must match the expected output format and content exactly. Both data accuracy and format compliance are required for a task to pass validation.

Partial credit? Generally not awarded. A task either passes the automated validation or it doesn't. This creates a binary pass or fail evaluation for each individual problem. The automated scoring system compares your results against an answer key, checking things like column names, data types, row counts, sort order, and actual values. I mean, if the expected output has 1,247 rows and yours has 1,246, that task fails even if everything else is perfect.
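To make all-or-nothing grading concrete, assume each task carries some number of points and only fully correct tasks earn them. The overall percentage is then the points from passed tasks divided by the total. The sketch below uses hypothetical task weights, since real weights aren't published, and a 70% threshold. It shows how passing most tasks can still fall short if the misses are the heavy ones.

```python
def exam_score(tasks, threshold=0.70):
    """tasks: list of (points, passed) pairs; a task earns all of its points or none."""
    total = sum(points for points, _ in tasks)
    earned = sum(points for points, passed in tasks if passed)
    pct = earned / total
    return pct, pct >= threshold

# Hypothetical weights: harder tasks are worth more.
attempt = [(10, True), (10, True), (15, True), (15, False), (20, True), (30, False)]
pct, passed = exam_score(attempt)
print(f"{pct:.0%} earned, passed={passed}")  # 4 of 6 tasks correct, but only 55 of 100 points
```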

You get zero visibility into individual task scores during the exam. Can't see which tasks passed or failed until you get your final results. This makes the testing experience kind of nerve-wracking because you're working blind. You rely on your own validation steps to verify correctness before moving to the next problem.
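A self-validation step can be as simple as comparing what you produced against the task's stated requirements: column names and order, row count, and sort order. Here is a plain-Python sketch over a list of dicts, with hypothetical column names and counts. In PySpark you would read the output back with `spark.read` and inspect `df.columns`, `df.dtypes`, and `df.count()` for the same checks.

```python
def validate_output(rows, expected_columns, expected_count=None, sort_key=None, descending=False):
    """Return human-readable mismatches between produced rows and a task's stated requirements."""
    issues = []
    actual_columns = list(rows[0].keys()) if rows else []
    if actual_columns != expected_columns:
        issues.append(f"columns {actual_columns} != expected {expected_columns}")
    if expected_count is not None and len(rows) != expected_count:
        issues.append(f"row count {len(rows)} != expected {expected_count}")
    if sort_key is not None and sort_key in actual_columns:
        values = [r[sort_key] for r in rows]
        if values != sorted(values, reverse=descending):
            issues.append(f"rows not sorted by {sort_key} ({'desc' if descending else 'asc'})")
    return issues

produced = [{"customer_id": 3, "total": 6}, {"customer_id": 7, "total": 18}]
print(validate_output(produced, ["customer_id", "total"], expected_count=2, sort_key="customer_id"))
```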

The scoring methodology means your approach matters less than results. You could write elegant, optimized Spark code or messy, inefficient queries. If the output matches, you get the points. Conversely, you might use best practices and still fail a task if you misread the requirements and produced the wrong output format.

When and how you receive your results

Results typically become available within 24 to 48 hours after exam completion, though sometimes it happens faster. Cloudera sends an email notification to your registered email address with your pass or fail status clearly indicated. The results don't include a detailed breakdown of individual task performance or a percentage score. Just whether you passed or failed overall.

If you passed, you get access to download your official CCA175 digital certificate in PDF format through the Cloudera certification portal. The certificate includes a unique verification number that employers can use to confirm your credentials. You also get listed in Cloudera's public certification registry. Plus you can integrate a LinkedIn certification badge into your profile. The certificate shows validity period information and comes with usage rights and guidelines for displaying the certification mark professionally.

Failure notifications are less helpful. You won't get specific remediation guidance about which tasks you missed or which skill areas need improvement. Just a "you didn't pass" message basically. This means you need to rely on your own recall of the exam experience to identify gaps in your preparation.

Digital certificates for passing candidates are available immediately once results are released. No waiting for physical certificates or mailed documents. Everything happens through the certification portal, and you get access to certified professional resources and community forums that aren't available to non-certified individuals.

What to do if you don't pass

First thing: wait the mandatory 15-day period before you can retake the exam. Cloudera enforces this cooling-off period, and you'll need to purchase a new voucher for your retake attempt since exam vouchers are single-use. The CCA175 exam cost for a retake is the same as the initial attempt. No discounts for second tries.

Review the exam objectives against your preparation gaps based on what you remember from your attempt. I know you can't see which specific tasks you failed, but you probably have a sense of which problems felt shaky or which domains gave you trouble. Maybe your joins were solid but aggregations with window functions tripped you up. Or maybe you struggled with the time pressure more than the technical content itself.

Strengthen hands-on practice in those challenging domains. If you felt rushed, work on time management and task completion efficiency by setting strict time limits during practice sessions. The CCA Spark and Hadoop Developer exam is as much about execution speed as technical knowledge. Consider additional practice tests and lab exercises that simulate the performance-based format. Read requirements carefully, plan your approach, execute the solution, and validate output before moving on.

Adjust your study strategy based on first attempt insights. Some candidates realize they knew Spark SQL theory but lacked muscle memory for writing efficient queries quickly. Others discover they didn't practice enough with different file formats or output requirements. Focus on building that hands-on competency through repetitive practice rather than just reviewing documentation.

The retake policy doesn't limit how many times you can attempt the exam, but each attempt costs money and requires that 15-day wait. In my experience, people who fail once and then prepare strategically usually pass on the second attempt, especially if they treat the first exam as a learning experience rather than just a disappointment.

CCA175 Difficulty: How Hard Is the Exam?

Cloudera CCA175 (CCA Spark and Hadoop Developer Exam) Overview

The CCA175 exam is Cloudera's hands-on Spark developer certification, and look, it's the opposite of a trivia quiz. You get a live environment, a set of tasks, and you're judged on whether your outputs match what the grader expects. No partial credit for "I knew what to do." Just results.

This is why people call the CCA Spark and Hadoop Developer exam "moderately difficult to challenging." Not because the concepts are impossible, but because you've gotta execute fast, clean, and correctly, under pressure, with real data and real mistakes waiting to happen. Short clock. Real consequences.

What the CCA175 certification validates

It validates that you can actually build Spark ETL-style pipelines, not just recite Spark architecture. Think PySpark and Spark SQL tasks like reading a messy dataset, transforming it with DataFrame transformations and actions, registering temp views, writing to a required format, and making Hive play nice.

Who should take the CCA175 exam

Daily Spark coders. Data engineers who already ship jobs. Folks who live in DataFrames and SQL and don't panic when a job fails with a weird schema mismatch. If your Spark work's mostly notebooks and toy datasets, honestly, you're gonna feel the time pressure hard.

CCA175 exam cost and voucher details

CCA175 exam cost (what to expect)

CCA175 exam cost changes based on promos and region, so check Cloudera's current listing before you buy. Budget for a retake too, because not gonna lie, the performance-based format makes first attempts rough for a lot of people. You're coding live without your usual safety nets and the clock's ticking down faster than you'd expect. Every second counts in a controlled environment where there's zero room for the kind of casual debugging you do at your desk.

Reschedule/retake policy considerations

Read the policy before purchase. Seriously. Remote proctoring rules, reschedule windows, and retake pricing can turn into surprise friction when your internet decides to be "creative" on exam week.

CCA175 passing score and results

Is there a published passing score?

CCA175 passing score details aren't always presented like classic multiple-choice tests. That's part of the mental stress. You're aiming to complete enough tasks correctly, not hit a neat percentage you can game.

How CCA175 is graded (performance-based scoring)

Grading is performance-based. Outputs matter. File formats matter. Column names and ordering can matter. If the task says write Parquet partitioned by 'date', and you write unpartitioned JSON, you might as well not have done it.
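Because the grader inspects the files you wrote, it pays to confirm the layout before moving on. A partitioned write such as `df.write.partitionBy("date").parquet(path)` normally produces one Hive-style `date=<value>` subdirectory per distinct value, each holding `.parquet` part files. The plain-Python sketch below checks that layout on a local directory; `check_partitioned_parquet` and the sample file names are hypothetical, and on HDFS you would list the path with `hdfs dfs -ls -R` instead.

```python
import os
import tempfile


def check_partitioned_parquet(output_dir, partition_col):
    """Return the partition values found under output_dir (sorted as strings).

    Fails (AssertionError) if a subdirectory is not named '<partition_col>=<value>'
    or if a partition directory holds no .parquet part files.
    """
    prefix = partition_col + "="
    values = []
    for entry in sorted(os.listdir(output_dir)):
        path = os.path.join(output_dir, entry)
        if not os.path.isdir(path):
            continue  # skip top-level files such as the _SUCCESS marker
        assert entry.startswith(prefix), f"unexpected directory: {entry}"
        parquet_files = [f for f in os.listdir(path) if f.endswith(".parquet")]
        assert parquet_files, f"no .parquet files in {entry}"
        values.append(entry[len(prefix):])
    assert values, "no partition directories found (was partitionBy used?)"
    return values


# Simulate the layout a partitioned Parquet write typically produces.
with tempfile.TemporaryDirectory() as out:
    open(os.path.join(out, "_SUCCESS"), "w").close()
    for day in ["2024-01-01", "2024-01-02"]:
        os.makedirs(os.path.join(out, f"date={day}"))
        open(os.path.join(out, f"date={day}", "part-00000.snappy.parquet"), "w").close()
    found = check_partitioned_parquet(out, "date")

print(found)  # ['2024-01-01', '2024-01-02']
```

An unpartitioned write fails the check immediately, which is exactly the mistake you want to catch before the grader does.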

When and how you receive your results

You typically get results after submission, though timing can vary. Don't plan a celebration dinner for the same hour. Just saying.

CCA175 difficulty: how hard is the exam?

Most candidates rate the CCA175 exam as moderately difficult to challenging, and the biggest reason's the format, not the syllabus. You're not picking A/B/C/D. You're building, running, verifying, and fixing under a timer. That timer changes everything because you don't have room for long debugging sessions, second attempts, or rewriting half your code when you realize the requirements had one extra constraint buried in the wording. I've seen people who could recite the Spark docs verbatim absolutely fall apart when faced with a ticking clock and a file write that keeps throwing cryptic errors.

More challenging than multiple-choice certifications. Way more. Yet less difficult for someone who codes Spark daily, because the exam rewards muscle memory. If you already crank out joins, aggregations, and windowing-style analysis at work, the tasks feel like a normal Tuesday with a slightly annoying proctor watching you.

Technical environment stuff adds its own pain. You're in an unfamiliar cluster configuration, maybe with some latency, limited customization. You're often stuck working from the command line instead of your favorite IDE, which is fine until you realize how much you rely on autocomplete for Spark SQL syntax or for remembering that one option flag for a write mode.

Skills that make the exam easier (Spark SQL, DataFrames, ETL)

If you want the exam to feel "fair," you need both breadth and depth.

Strong Spark DataFrame API proficiency helps a ton. Filters, 'withColumn', casting, 'explode', joins, groupBy aggregations. Being able to predict what the schema'll look like after each step. Spark SQL fluency's the other half, including basic query tuning instincts, because a bad join choice can burn minutes while you stare at a job that takes forever.

A few more that matter: production ETL experience, Linux command-line comfort (that one's non-negotiable), and knowing file formats like Parquet, JSON, Avro, and ORC. I'll also call out Hive table structures and partitioning, plus quick troubleshooting when Spark throws errors that look scary but really just mean "column not found" or "cannot resolve due to data type mismatch."

Common reasons candidates fail

The failures I see repeat. A lot.

People show up with theory but not enough hands-on work on real Spark clusters. They burn time on basic mechanics like reading from a Hive table, writing with the right compression, or figuring out why their output path already exists. Then time management collapses, they leave tasks incomplete, and the score never recovers.

Other common killers: typos and syntax errors, misreading requirements, not validating output before submitting. Getting stuck on one "boss fight" task instead of moving on. Also, weirdly specific file format requirements trip people up, like "write ORC with Snappy" or "partition by these two columns." If you haven't done it recently, you'll waste precious minutes hunting docs you may not even be allowed to use.

Time management strategies for hands-on tasks

You get 120 minutes for 8 to 12 tasks, so you're averaging 10 to 15 minutes each. Some'll take 5. Some'll take 20+. There's basically no time for extensive debugging, and reading comprehension itself costs time, because the tasks are often precise about naming, paths, formats, partitions, and what "correct output" means.
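The per-task average is simple division, but turning it into checkpoints you can glance at during the exam makes the triage decision easier. A minimal sketch, assuming a 120-minute session; the task counts and the 10-minute end-of-exam review reserve are my own illustrative choices, not exam rules.

```python
def task_budget(total_minutes, num_tasks, reserve_minutes=0):
    """Split the session evenly across tasks, holding back an optional review reserve.

    Returns (minutes per task, elapsed-time checkpoints by which each task should be done).
    """
    per_task = (total_minutes - reserve_minutes) / num_tasks
    checkpoints = [round(per_task * i, 1) for i in range(1, num_tasks + 1)]
    return per_task, checkpoints


for n in (8, 12):
    print(f"{n} tasks: {task_budget(120, n)[0]:.1f} min each")
# 8 tasks: 15.0 min each
# 12 tasks: 10.0 min each

per_task, checkpoints = task_budget(120, 10, reserve_minutes=10)
print(per_task, checkpoints[:3], checkpoints[-1])  # 11.0 [11.0, 22.0, 33.0] 110.0
```

If the clock is past a checkpoint and the task still isn't producing output, that's the cue to bank what you have and move on.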

My opinionated strategy: triage fast. Do the simple wins first, bank points, then come back for the heavy ETL tasks. Validate outputs quickly. Run 'count()', 'printSchema()', spot-check a few rows, confirm partitions exist, and move on. Practice builds speed here, because once the patterns are automatic you stop thinking about mechanics and just execute.
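In the Spark shell those checks are `df.count()`, `df.printSchema()`, and `df.show(5)` on the data you re-read from the output path. When a task asks for delimited text, it can also help to look at the files directly. The plain-Python sketch below assumes every part file starts with a header row (Spark writes one per file when the `header` option is enabled); `validate_delimited_output` and the sample data are hypothetical.

```python
import csv
import glob
import os
import tempfile


def validate_delimited_output(output_dir, expected_columns, delimiter=",",
                              expected_count=None, sample=3):
    """Count data rows across part files, check each header, return a few sample rows."""
    part_files = sorted(glob.glob(os.path.join(output_dir, "part-*")))
    assert part_files, "no part files found"
    total, samples = 0, []
    for path in part_files:
        with open(path, newline="") as fh:
            reader = csv.reader(fh, delimiter=delimiter)
            header = next(reader, None)
            if header is None:
                continue  # an empty part file holds no rows
            assert header == expected_columns, f"{path}: got header {header}"
            for row in reader:
                total += 1
                if len(samples) < sample:
                    samples.append(row)
    if expected_count is not None:
        assert total == expected_count, f"expected {expected_count} rows, found {total}"
    return total, samples


# Two fake part files in a tab-delimited layout a task might ask for.
with tempfile.TemporaryDirectory() as out:
    parts = [[["1", "Ana"], ["2", "Ben"]], [["3", "Cy"]]]
    for i, rows in enumerate(parts):
        with open(os.path.join(out, f"part-0000{i}.csv"), "w", newline="") as fh:
            writer = csv.writer(fh, delimiter="\t")
            writer.writerow(["id", "name"])
            writer.writerows(rows)
    count, preview = validate_delimited_output(out, ["id", "name"], delimiter="\t",
                                               expected_count=3)

print(count, preview)  # 3 [['1', 'Ana'], ['2', 'Ben'], ['3', 'Cy']]
```

Reading with the wrong delimiter makes the header check fail, which doubles as a check that you wrote the delimiter the task asked for.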

CCA175 exam objectives (what you must be able to do)

CCA175 exam objectives usually boil down to: ingest data from files and tables, transform it, query it, and write it back correctly. That includes Spark SQL and DataFrame operations, joins and aggregations, and the output requirements that graders love to punish, plus troubleshooting and performance tuning basics like partitioning choices and avoiding obviously expensive patterns.

CCA175 prerequisites and recommended background

There aren't "official prerequisites" that save you. Practical prerequisites do. If you haven't built a few end-to-end pipelines, including reading messy JSON, normalizing it, and writing partitioned Parquet with Hive integration, you'll feel it.

Be comfortable with the tools too. CLI. Spark shell or pyspark. Basic cluster habits. Fragments of knowledge won't save you.

Best CCA175 study materials (official and third-party)

Start with docs and a CCA175 study guide that maps directly to tasks you can practice. Focus on Spark SQL and DataFrames first. Then file formats and Hive. Then tuning and debugging patterns.

If you want structured practice, I'm a fan of doing lots of timed problems. Having a realistic question bank helps. The CCA175 Practice Exam Questions Pack is $36.99 and it's the kind of thing you run through repeatedly until the common patterns stop being "hard" and start being automatic.

CCA175 practice tests and hands-on practice

A good CCA175 practice test is performance-based, timed, and forces you to produce exact outputs. You want scenarios that feel like ETL tickets: "ingest, clean, join, aggregate, write." Then review your mistakes like an engineer, not a student. Keep an error log, redo the same tasks a week later, and measure your speed.

If you need a ready-made set to grind, the CCA175 Practice Exam Questions Pack is worth looping through, because repetition's what builds the speed you need on exam day. Honestly, do 50+ practice problems before you schedule. More if you're not coding Spark daily.

CCA175 exam day tips (performance-based format)

Test your system. Network stability matters. Proctoring overhead's real. Close everything you don't need. During tasks, follow a simple loop: read, plan, execute, verify. Keep command history clean so you can rerun steps. Save work as you go because nothing's worse than realizing you can't reproduce an output you got "once."

CCA175 renewal, validity, and recertification

Does CCA175 expire? (renewal policy overview)

Cloudera policies can change, so confirm current renewal rules on their site. Some certs have validity windows, some shift when versions change. Don't assume forever.

When to recertify and how to stay current

Recertify when your job market demands it or when your tooling stack shifts. Keep coding. That's the secret.

Alternatives and next-step certifications

If you pass, consider broader data engineering certs next, or cloud-managed Spark paths depending on where your career's going.

CCA175 FAQ

Cost, passing score, difficulty (quick answers)

How much does the CCA175 exam cost? It varies, so check Cloudera's current listing.

What's the passing score for CCA175? It isn't always presented as a simple number.

How hard is the CCA Spark and Hadoop Developer exam? Moderate to challenging, mostly due to the time limit and hands-on grading.

What are the objectives covered in the CCA175 exam? ETL, Spark SQL, DataFrames, file formats, Hive, output requirements, and troubleshooting.

Does the CCA175 certification expire or require renewal? Policies change, so confirm before you plan.

If you're trying to figure out how to pass CCA175, my blunt advice is to practice like it's a sport. Timed reps, real outputs, and lots of small failures before the real attempt. If you want a drill set, the CCA175 Practice Exam Questions Pack is a straightforward way to get those reps in without spending days inventing your own tasks.

CCA175 Exam Objectives and Domain Breakdown

The CCA175 exam is not your typical multiple-choice certification where you memorize facts and hope for the best. This is a performance-based exam that drops you into a live Spark cluster and says "prove you can actually do this work." You get 8 to 12 hands-on tasks that mirror real ETL scenarios, and you have 120 minutes to complete them. Not gonna lie, that time pressure hits different when you are staring at the clock and your Sqoop command just failed for the third time because you forgot one stupid flag.

Look, the exam divides into five main domains, but honestly the boundaries blur when you are actually working through tasks. A single problem might require you to ingest data, transform it, aggregate results, and save output in a specific format. That is why understanding the weight of each domain helps you prioritize your study time, especially if you have a day job and cannot spend every waking moment running test clusters. Treat the percentage ranges below as rough estimates rather than an exact breakdown; added together they come to more than 100%.

Data ingest fundamentals (15-20% of exam weight)

This domain tests whether you can move data around without losing it or breaking things.

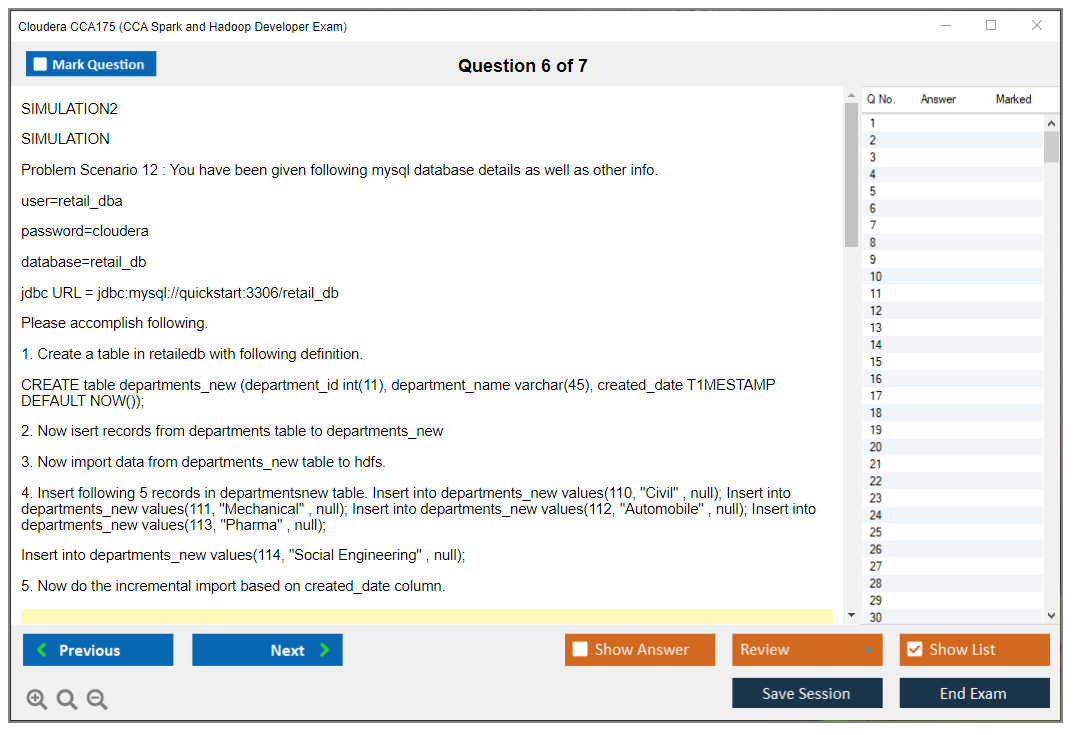

Sqoop is huge here.

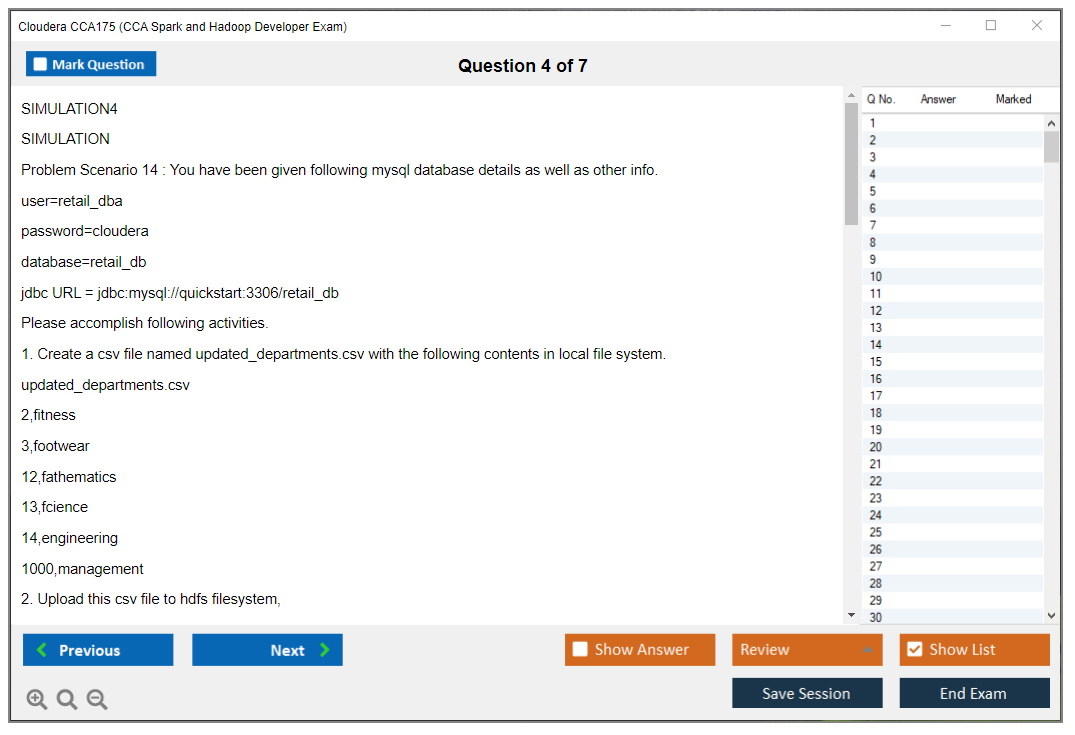

You need to import data from MySQL databases into HDFS using Sqoop with the right target directory and file format. I have seen people mess this up by not controlling the number of mappers properly, which either wastes resources or takes forever. You should also know how to import all tables or just specific ones, handle incremental imports in append mode, and import query results instead of dumping entire tables.
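Sqoop's incremental append mode (`--incremental append` together with `--check-column` and `--last-value`) imports only the rows whose check column is greater than the last recorded value, then reports the new last value to use next time. The plain-Python sketch below models that bookkeeping on an in-memory table so the logic is easy to reason about; it is not Sqoop code, and `incremental_append` and the `orders` data are hypothetical.

```python
def incremental_append(source_rows, check_column, last_value):
    """Return (new_rows, next_last_value) the way an append-mode import selects data.

    Only rows with check_column > last_value are picked up; the next last value
    is the largest check_column value imported (unchanged if nothing new arrived).
    """
    new_rows = [r for r in source_rows if r[check_column] > last_value]
    next_last = max((r[check_column] for r in new_rows), default=last_value)
    return new_rows, next_last


orders = [{"order_id": 1}, {"order_id": 2}, {"order_id": 3}]
batch, last = incremental_append(orders, "order_id", 0)
print(len(batch), last)  # 3 3

orders += [{"order_id": 4}, {"order_id": 5}]  # new rows land in the source table
batch, last = incremental_append(orders, "order_id", last)
print([r["order_id"] for r in batch], last)  # [4, 5] 5
```

The matching command would look something like `sqoop import --connect jdbc:mysql://<host>/<db> --username <user> -P --table orders --target-dir <dir> --incremental append --check-column order_id --last-value 3 -m 1`, where the angle-bracket parts are placeholders and `-m 1` sets a single mapper.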

The export side matters too: getting data from HDFS back into relational databases. So do basic operations like loading data from the local filesystem into HDFS with command-line tools, creating Hive tables, and working with different file formats during ingestion. CSV, JSON, Parquet. You need to handle all of them. Compression during import is another detail that trips people up. Always validate that your ingestion worked. Check those record counts before moving on.

Transform, stage, and store (40-45% of exam)

This is where you spend most of your time, and it shows. Nearly half the exam focuses on data transformation tasks. You will load data from HDFS into Spark DataFrames, read from Hive tables using Spark SQL, and parse various formats. The format handling is critical. JSON parsing can get messy with nested structures.
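Flattening nested JSON usually comes down to two moves: pulling struct fields up into top-level columns, and turning an array into one row per element, which is what Spark's `explode` does (it drops rows whose array is null or empty; `explode_outer` keeps them). The plain-Python sketch below mimics both moves on an already-parsed record; the field names and the underscore naming scheme are assumptions for illustration.

```python
import json


def flatten_struct(record, parent=""):
    """Turn nested dicts into flat columns, e.g. {'a': {'b': 1}} -> {'a_b': 1}."""
    flat = {}
    for key, value in record.items():
        name = f"{parent}_{key}" if parent else key
        if isinstance(value, dict):
            flat.update(flatten_struct(value, name))
        else:
            flat[name] = value
    return flat


def explode(records, column):
    """One output row per array element; rows with a null or empty array are dropped."""
    for rec in records:
        for item in rec.get(column) or []:
            yield {**rec, column: item}


raw = '{"id": 7, "customer": {"name": "Ana", "city": "Lima"}, "items": ["pen", "ink"]}'
flat = flatten_struct(json.loads(raw))
rows = list(explode([flat], "items"))
print(rows)
# [{'id': 7, 'customer_name': 'Ana', 'customer_city': 'Lima', 'items': 'pen'},
#  {'id': 7, 'customer_name': 'Ana', 'customer_city': 'Lima', 'items': 'ink'}]
```

In PySpark the same shape is roughly `df.select("id", F.col("customer.name").alias("customer_name"), F.explode("items").alias("item"))`.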

Filtering data based on conditions seems simple until you are dealing with complex criteria across multiple columns. Selecting specific columns, deriving new ones through transformations, handling missing values correctly. I mean, null handling alone could be an entire domain because it is so easy to accidentally drop half your dataset when you meant to just clean it up a bit.

You need to know when to filter nulls out versus when to fill them with defaults.
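The difference shows up immediately in row counts and in any later aggregate. In PySpark the two options are `df.na.drop(subset=[...])` versus `df.na.fill({...})`; the plain-Python sketch below models both on a few invented rows, with 0.0 and "unknown" as example defaults.

```python
rows = [
    {"order_id": 1, "amount": 20.0, "region": "east"},
    {"order_id": 2, "amount": None, "region": "west"},
    {"order_id": 3, "amount": 15.0, "region": None},
]


def drop_nulls(data, subset):
    """Like na.drop(subset=...): keep rows where every listed column is non-null."""
    return [r for r in data if all(r[c] is not None for c in subset)]


def fill_nulls(data, defaults):
    """Like na.fill({...}): replace nulls only in the columns that have a default."""
    return [{k: (defaults.get(k, v) if v is None else v) for k, v in r.items()}
            for r in data]


dropped = drop_nulls(rows, ["amount", "region"])
filled = fill_nulls(rows, {"amount": 0.0, "region": "unknown"})
print(len(dropped), len(filled))          # 1 3
print(sum(r["amount"] for r in filled))   # 35.0
```

Dropping keeps one order out of three; filling keeps all three but would pull an average down (35.0 / 3 instead of 17.5 over the two real amounts), so let the task's wording decide.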

String manipulation comes up constantly. Substring operations, concatenation, regex patterns for cleaning messy data. Date and timestamp transformations always appear because real-world data has terrible date formats. Built-in Spark SQL functions are your friends here. Conditional logic using when/otherwise constructs lets you implement business rules directly in your transformations.
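Two of those patterns in miniature: normalizing mixed date formats, and a when/otherwise business rule. In PySpark you might combine several `to_date(col, fmt)` attempts with `coalesce` and write the rule as `F.when(...).when(...).otherwise(...)`; the plain-Python sketch below models the same logic. The list of input formats and the tier thresholds are made-up examples.

```python
from datetime import datetime

DATE_FORMATS = ["%Y-%m-%d", "%m/%d/%Y", "%d-%b-%Y"]  # assumed input formats


def parse_date(text):
    """Try each known format in order; return None when nothing matches."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date()
        except ValueError:
            continue
    return None


def order_tier(amount):
    """when(amount >= 100, 'high').when(amount >= 50, 'medium').otherwise('low').

    A null amount fails every comparison, so it falls through to otherwise,
    just as a SQL CASE WHEN would send it to ELSE.
    """
    if amount is not None and amount >= 100:
        return "high"
    if amount is not None and amount >= 50:
        return "medium"
    return "low"


print([str(parse_date(s)) for s in ["2024-03-05", "03/05/2024", "05-Mar-2024"]])
# ['2024-03-05', '2024-03-05', '2024-03-05']
print(parse_date("not a date"))  # None
print([order_tier(a) for a in [120, 75, 10, None]])  # ['high', 'medium', 'low', 'low']
```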

You'll also normalize or denormalize data structures as the requirements dictate, and flatten complex nested types from JSON files into columnar formats for analysis. Removing duplicates comes up too, and distinct and dropDuplicates are different: distinct() compares entire rows, while dropDuplicates() can take a subset of columns to compare (with no arguments it behaves like distinct()). Then there's sorting by single or multiple columns, and repartitioning or coalescing DataFrames for optimization, which affects both performance and your output file structure.
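A plain-Python model of the dedup difference, on invented page-view events. One caveat the sketch hides: Spark does not guarantee *which* row survives `dropDuplicates(["user"])`, so "keep the first row seen" below is only this sketch's deterministic stand-in. If the task cares which row is kept, rank rows with a window and `row_number()` instead.

```python
def distinct(rows):
    """Whole-row dedup, like DataFrame.distinct() (order-preserving here)."""
    seen, out = set(), []
    for r in rows:
        key = tuple(sorted(r.items()))
        if key not in seen:
            seen.add(key)
            out.append(r)
    return out


def drop_duplicates(rows, subset):
    """Dedup on the listed columns only, keeping the first row seen per key."""
    seen, out = set(), []
    for r in rows:
        key = tuple(r[c] for c in subset)
        if key not in seen:
            seen.add(key)
            out.append(r)
    return out


events = [
    {"user": "u1", "page": "home"},
    {"user": "u1", "page": "home"},
    {"user": "u1", "page": "cart"},
    {"user": "u2", "page": "home"},
]
print(len(distinct(events)))                    # 3
print(len(drop_duplicates(events, ["user"])))   # 2
```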

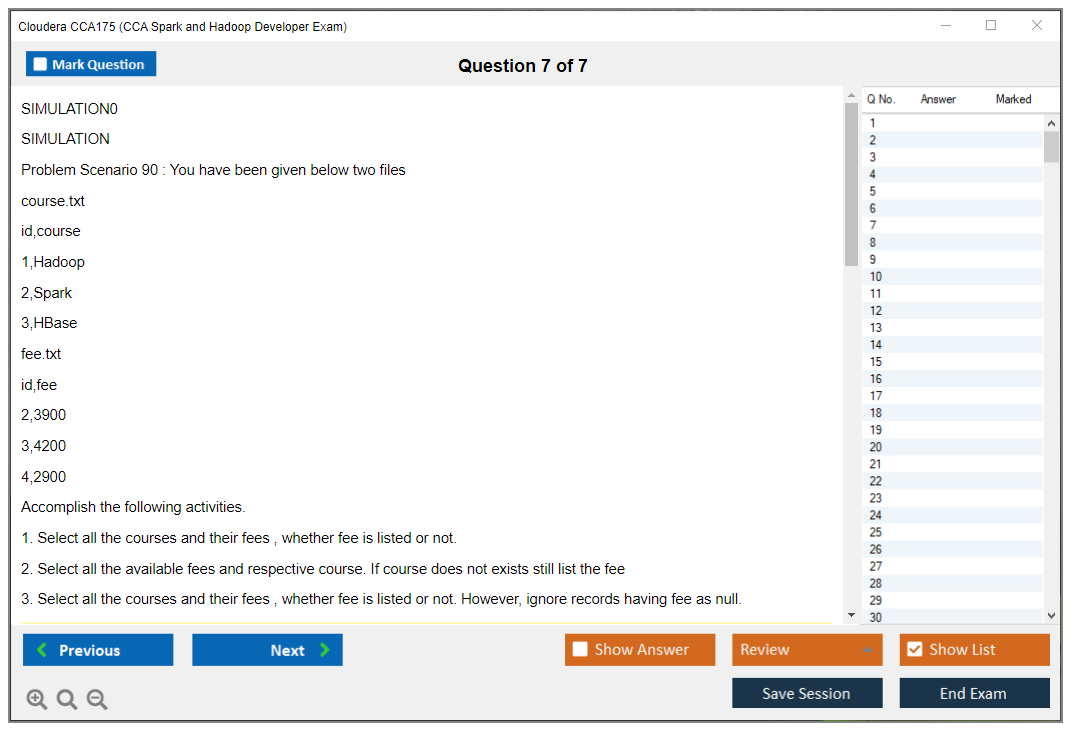

Data analysis operations (25-30% of exam)

Joins and aggregations dominate this domain. You will perform inner joins between multiple DataFrames or tables, execute left, right, and full outer joins with proper logic. Join conditions with multiple key columns can get tricky when you are joining three or four datasets together.
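To keep the join semantics straight, here is a plain-Python model of an inner versus a left outer join on a two-column key. In PySpark the calls would be along the lines of `sales.join(targets, on=["store_id", "day"], how="inner")` and `how="left"`; the data and column names are invented for the example.

```python
def join(left, right, keys, how="inner"):
    """Equi-join on several key columns; supports how='inner' and how='left'.

    As in Spark's equality joins, a null (None) key never matches anything.
    """
    right_cols = sorted({c for row in right for c in row} - set(keys))
    out = []
    for lrow in left:
        matches = [
            rrow for rrow in right
            if all(lrow[k] is not None and lrow[k] == rrow[k] for k in keys)
        ]
        for rrow in matches:
            out.append({**lrow, **{c: rrow.get(c) for c in right_cols}})
        if not matches and how == "left":
            out.append({**lrow, **{c: None for c in right_cols}})  # pad the missing side
    return out


sales = [
    {"store_id": 1, "day": "mon", "units": 5},
    {"store_id": 1, "day": "tue", "units": 3},
    {"store_id": 2, "day": "mon", "units": 7},
]
targets = [
    {"store_id": 1, "day": "mon", "target": 4},
    {"store_id": 2, "day": "mon", "target": 9},
]
print(len(join(sales, targets, ["store_id", "day"])))            # 2
print(join(sales, targets, ["store_id", "day"], how="left")[1])
# {'store_id': 1, 'day': 'tue', 'units': 3, 'target': None}
```

Matching on only one of the two keys would pair Monday targets with Tuesday sales, which is the classic way a multi-key join quietly inflates row counts.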

Aggregating data using groupBy operations is fundamental, but the exam goes deeper. Apply multiple aggregation functions at once. Sum this column while counting that one and averaging another. Window functions separate people who really know Spark from those who just dabble. Row_number, rank, dense_rank with proper partitioning and ordering specifications. Calculate running totals, moving averages, lag and lead operations for time-series analysis.
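The three ranking functions differ only in how they treat ties, which is easy to mix up under time pressure. The plain-Python sketch below computes `row_number`, `rank`, `dense_rank`, a one-row `lag`, and a running total for a single partition that is already sorted in window order. In PySpark the window would be something like `Window.partitionBy("dept").orderBy(F.desc("salary"))`; the salary values are made up.

```python
def window_columns(values):
    """Ranking, lag and running total for ONE partition already sorted in window order."""
    out, rank, dense, running = [], 0, 0, 0
    prev = object()  # sentinel: never equal to a real value
    for i, v in enumerate(values, start=1):
        if v != prev:
            rank = i      # rank jumps past tied rows (leaves gaps)
            dense += 1    # dense_rank increments by one (no gaps)
            prev = v
        running += v      # cumulative sum, one row at a time (a ROWS frame)
        out.append({
            "value": v,
            "row_number": i,
            "rank": rank,
            "dense_rank": dense,
            "lag": values[i - 2] if i > 1 else None,  # previous row's value
            "running_total": running,
        })
    return out


for row in window_columns([900, 800, 800, 700]):  # e.g. salaries ordered DESC
    print(row)
# rank: 1, 2, 2, 4   dense_rank: 1, 2, 2, 3   running_total: 900, 1700, 2500, 3200
```

The running total here is a row-by-row frame, which in PySpark means adding `.rowsBetween(Window.unboundedPreceding, Window.currentRow)`. With only an `orderBy`, Spark's default frame is range-based, so the two tied 800 rows would share the same running total.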

SQL syntax proficiency helps because sometimes writing a query is cleaner than chaining DataFrame operations, for example when combining multiple aggregation levels in a single query or using HAVING clauses to filter on aggregates. Pivot tables show up occasionally, as do percentiles and other statistical measures.
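When a task reads like SQL, writing it as SQL is often the fastest route. The sketch below uses Python's built-in `sqlite3` purely as a stand-in engine so it runs anywhere; in Spark you would register the DataFrame with `df.createOrReplaceTempView("orders")` and pass essentially the same query to `spark.sql(...)`. The table, columns, and values are illustrative.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (customer_id INTEGER, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [(1, 50.0), (1, 70.0), (2, 30.0), (3, 200.0), (3, 10.0)],
)

query = """
    SELECT customer_id,
           COUNT(*)    AS num_orders,
           SUM(amount) AS revenue,
           AVG(amount) AS avg_amount
    FROM orders
    GROUP BY customer_id
    HAVING SUM(amount) > 100      -- filters groups, unlike WHERE which filters rows
    ORDER BY revenue DESC
"""
results = conn.execute(query).fetchall()
for row in results:
    print(row)
# (3, 2, 210.0, 105.0)
# (1, 2, 120.0, 60.0)
```

Customer 2 disappears because its total (30.0) fails the HAVING condition, even though its individual row would have passed any row-level filter you might have tried instead.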

And always, always handle nulls correctly in aggregation contexts or you will get wildly wrong answers that look perfectly reasonable until someone questions why your revenue totals do not match the source system.
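Here is where the wrong-but-plausible numbers come from: Spark SQL's `sum`, `avg`, and `count(col)` skip nulls, while `count(*)` counts every row, and `sum` over nothing but nulls returns null rather than 0. A plain-Python model of those rules, with `None` standing in for null and invented amounts:

```python
def spark_style_aggregates(values):
    """Mirror how Spark SQL aggregates treat nulls (None here)."""
    non_null = [v for v in values if v is not None]
    total = sum(non_null) if non_null else None  # sum() over only nulls is null
    return {
        "count_star": len(values),   # count(*) counts every row
        "count_col": len(non_null),  # count(col) skips nulls
        "sum": total,
        "avg": total / len(non_null) if non_null else None,  # avg() skips nulls
    }


stats = spark_style_aggregates([100.0, None, 50.0, None])
print(stats)
# {'count_star': 4, 'count_col': 2, 'sum': 150.0, 'avg': 75.0}

# Dividing the sum by count(*) quietly treats the nulls as zeros:
print(stats["sum"] / stats["count_star"])  # 37.5
```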

Configuration and optimization (10-15% of exam)

You do not need to be a Spark tuning expert, but you should know the basics. Set configuration properties appropriately for the task at hand. Configure partition counts for shuffle operations (spark.sql.shuffle.partitions, which defaults to 200). Understand when to broadcast small lookup tables versus doing regular joins. Cache DataFrames when you will reuse them multiple times, and know the difference between persist and cache: cache() is shorthand for persist() with the default storage level, while persist() lets you choose the storage level yourself.
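Broadcasting works because a small lookup table fits in memory on every executor: each task builds a hash map from it once and probes that map for every row of the large table, so the large side never has to be shuffled. The plain-Python sketch below shows that build-and-probe shape in a single process. In PySpark you request it with `large_df.join(F.broadcast(small_df), "code")`, and Spark also does it automatically for tables below `spark.sql.autoBroadcastJoinThreshold` (10 MB by default); the data and names are invented.

```python
def broadcast_hash_join(large_rows, small_rows, key):
    """Inner join: build a hash table from the small side, stream the large side once."""
    lookup = {}
    for row in small_rows:                  # build phase on the small table
        if row[key] is not None:            # null keys never match in an equi-join
            lookup.setdefault(row[key], []).append(row)
    for row in large_rows:                  # probe phase, one pass over the large table
        for match in lookup.get(row[key], []):
            yield {**row, **match}


countries = [{"code": "PE", "country": "Peru"}, {"code": "CL", "country": "Chile"}]
events = [{"id": i, "code": c} for i, c in enumerate(["PE", "CL", "PE", "XX"])]
joined = list(broadcast_hash_join(events, countries, "code"))
print(len(joined), joined[0])  # 3 {'id': 0, 'code': 'PE', 'country': 'Peru'}
```

The design only pays off while the small side really is small; broadcasting a large table just moves the memory pressure onto every executor.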

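Partition your output data by specific columns when it makes sense. Control output file counts using coalesce or repartition based on downstream requirements: coalesce only reduces the number of partitions and avoids a full shuffle, while repartition performs a full shuffle and can raise or lower the count. Understand lazy evaluation and know which operations trigger actions. Minimize shuffles by ordering your transformations logically, for example by filtering rows and selecting only the columns you need before joins and aggregations.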

If you want structured practice on these domains, the CCA175 Practice Exam Questions Pack gives you realistic scenarios that mirror actual exam tasks.

Output and storage requirements (15-20% of exam)

The exam is brutal about output specifications.

You will save DataFrames to HDFS in exact formats. Parquet with specific compression, JSON with proper structure, delimited text files with exact delimiters. Write to Avro format when required. Save to Hive tables, both managed and external types.

Control whether you overwrite existing data or append to it. Partition output data for query optimization downstream. Compress using various codecs like Snappy or Gzip based on requirements. And here is the thing: validate everything before you consider a task complete because the automated scoring does not care about your intention, only your actual output files. Check record counts, verify data accuracy, make sure column ordering matches specifications exactly.
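One cheap sanity check is the first few bytes of a part file, because several of the formats named above carry a fixed signature: Parquet files start with `PAR1`, ORC files with `ORC`, Avro container files with `Obj` followed by byte 1, and gzip streams with `0x1f 0x8b`. The helper below is a plain-Python sketch built on those signatures; `sniff_format` is hypothetical, it cannot tell you which codec sits *inside* a Parquet or ORC file, and on HDFS you would first copy a part file locally (for example with `hdfs dfs -get`).

```python
import gzip
import os
import tempfile

# Leading "magic" bytes of a few formats a task might ask for.
SIGNATURES = [
    (b"PAR1", "parquet"),
    (b"ORC", "orc"),
    (b"Obj\x01", "avro"),
    (b"\x1f\x8b", "gzip"),
    (b"BZh", "bzip2"),
]


def sniff_format(path):
    """Guess a file's format from its first bytes; 'unknown' if nothing matches."""
    with open(path, "rb") as fh:
        head = fh.read(4)
    for magic, name in SIGNATURES:
        if head.startswith(magic):
            return name
    return "unknown"  # plain text/CSV/JSON, or a codec with no simple signature


with tempfile.TemporaryDirectory() as out:
    gz_path = os.path.join(out, "part-00000.csv.gz")
    with gzip.open(gz_path, "wt") as fh:
        fh.write("id\tname\n1\tAna\n")
    txt_path = os.path.join(out, "part-00000.csv")
    with open(txt_path, "w") as fh:
        fh.write("id,name\n1,Ana\n")
    results = [sniff_format(gz_path), sniff_format(txt_path)]

print(results)  # ['gzip', 'unknown']
```

If a task says "gzip-compressed text" and your part files come back as `unknown`, the compression option never took effect.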

Technical foundations across domains

Beyond individual domains, you need solid proficiency in both PySpark and Spark SQL syntax. Switch between the DataFrame API and SQL as appropriate for the task. Understand Spark's execution model. Troubleshoot job failures by reading error messages. Work efficiently in a Linux command-line environment because you are not getting a fancy IDE. Manage HDFS directories and file operations. Understand Hive metastore concepts.

Honestly, the CCA Spark and Hadoop Developer exam tests practical application more than theoretical knowledge. You will implement complete ETL pipelines from source to destination, perform data quality checks, combine data from multiple sources, generate analytical outputs that meet specific business requirements, and handle messy real-world data where nothing is perfectly formatted.

If you have worked with related technologies, certifications like CCD-410 or CCD-333 cover some overlapping Hadoop developer concepts, though CCA175 focuses heavily on Spark specifically.

CCA175 Prerequisites and Recommended Background

Here's the deal with the CCA175 exam: people constantly wonder "what's actually required before I register," like there's gonna be some huge barrier to entry. There isn't. Zero paperwork requirements. Nobody's checking whether you've logged Spark hours for however many years. It's literally just you, the registration form, and the question of whether you've got what it takes to knock out performance tasks when the clock's ticking.

Nothing blocking you.

Required prerequisites (official vs. practical)

So officially? Cloudera doesn't impose mandatory prerequisites for the Cloudera CCA175 certification. Translation: no certifications you gotta show first, no degree requirements, no mandatory training you have to complete before they'll let you sit for the CCA Spark and Hadoop Developer exam. Pay the fee, book your slot, you're good to go.

That's pretty liberating, but honestly it's also risky. The entire burden falls on you to figure out if you're actually ready, and I've seen way too many people think "oh I binged a Spark tutorial series" means they can execute ETL tasks quickly, accurately, and consistently under exam conditions. Spoiler: tutorial binges don't translate to real speed.

They don't verify anything beforehand. Your experience? Your skills? Cloudera's not calling your boss. Not reviewing your code repos. Not asking you to submit your GitHub profile. The thing is, the exam just assumes you've already done that self-assessment work and you're arriving with enough hands-on muscle memory to function in a live environment. Which is exactly why the format feels less like answering trivia questions and more like someone just dumped you into a support ticket queue with a countdown timer running.

Now, Cloudera does recommend certain training courses. Key word: recommend. Not require. That difference actually matters because you can definitely pass using a solid CCA175 study guide, reading through Spark documentation thoroughly, and grinding out lots of practical exercises. But those recommended classes do align pretty tightly with the CCA175 exam objectives. They usually cover those irritating edge cases that pop up when you're working against the clock.

Anyone can register, yeah.

That's intentional.

Recommended experience level (projects and on-the-job tasks)

Real talk? I'd advise most candidates to rack up at least 6 to 12 months of legitimate hands-on Spark development before attempting this Cloudera Spark developer certification. This exam isn't checking if you can define what a DataFrame is. It's evaluating whether you can use Spark DataFrame transformations and actions to generate precise outputs, manage janky datasets, and stay calm when your initial strategy bombs or runs too slow.

That kind of confidence typically comes from actual project work with Spark applications, where you've needed to interpret existing codebases, modify transformations, and work through the completely normal situation where data quality is terrible but stakeholders still expect deliverables.

Production ETL experience? Super valuable. When you're building ETL pipelines in production settings, you're forced to obsess over repeatability, schema evolution, partitioning strategies, file format choices, and validation checks. Those instincts translate directly to the PySpark and Spark SQL scenarios you'll encounter on the exam, where you're supposed to ingest data sources, transform them, join datasets, aggregate metrics, and write everything back in the specified structure without settling for "close enough" results.

Quick reality check for how to pass CCA175: ask yourself whether you could grab a messy dataset, diagnose its schema problems, write Spark SQL and DataFrame logic matching a detailed specification, validate your output with spot-checking techniques, and cleanly rerun everything if you messed up the first attempt. All without frantically googling basic syntax constantly. If that scenario sounds overwhelming, you probably need more practice reps, not more conceptual videos.

Other useful experience worth mentioning: handling Parquet and CSV formats, pulling from Hive tables, managing null values, parsing strings, dealing with timestamp conversions, and basic performance instincts like caching only when it actually helps and avoiding joins that accidentally explode your data volume. Oh, and knowing when to just abandon a slow query and rewrite it from scratch instead of stubbornly debugging for twenty minutes.

Tools and environment familiarity (cluster, CLI, notebooks)

This part gets overlooked constantly. Then people regret it.

You don't just need Spark knowledge. You need environment fluency. You should work through a Linux shell without conscious thought, because time evaporates shockingly fast when you're struggling with file paths, permission errors, or trying to locate where your output actually landed. Being really comfortable with standard CLI workflows, checking file contents, inspecting directory structures, and running quick verification commands is a subtle advantage that pays massive dividends on this exam.

You also wanna feel natural switching between Spark SQL and PySpark DataFrames, because certain tasks are just faster in SQL, while other scenarios work better with DataFrame APIs. The exam couldn't care less about your stylistic preferences. It cares about correct output matching the specification. Knowing how to spin up temp views, execute SQL queries, then pivot back into DataFrame code for more sophisticated transformations is basically baseline competency for a Hadoop developer certification exam in this space.

Honestly? Tons of candidates shoot themselves in the foot by practicing exclusively in notebooks with auto-complete and formatted outputs, then they show up and discover those safety nets don't exist. Practice in an environment that resembles the actual exam setup more closely. I mean running code in a more "raw" context, writing outputs to files, validating counts and distinct values manually, and keeping your workspace organized enough that you can reproduce your work if needed.

Quick tie-in to the questions people ask

People constantly ask about a CCA175 passing score, like whether Cloudera publishes the magic number. Reality check: the exam is performance-based, so what actually counts is completing sufficient tasks correctly, not memorizing some percentage threshold. Folks also wonder about CCA175 exam cost and retake policies, and yeah, you should definitely verify current pricing and rules before scheduling because those details shift periodically and nobody enjoys unpleasant surprises.

And if you're debating whether you need a CCA175 practice test, I mean technically you don't need one, but you absolutely do need timed, hands-on repetitions that simulate exam conditions. Otherwise you're walking into a performance-based Spark exam armed with nothing but theoretical flashcards.

Bottom line? No official prerequisites. But the practical prerequisite is being the type of Spark developer who's already delivered ETL work, debugged horrible data quality issues, and can execute rapidly under pressure.

Conclusion

Wrapping up your CCA175 path

Here's the deal. The CCA175 exam? It's not multiple-choice where you're guessing answers and hoping for the best. Completely performance-based, which means you've gotta write actual working Spark code that produces exactly the right output or you're toast. There's no partial credit for "I sorta understood the concept." That's honestly what makes the Cloudera CCA175 certification actually valuable in the job market, because employers know you can execute the work, not just regurgitate memorized definitions.

If you've stuck around this long, you probably know whether you're actually ready. Most people? They're not ready the first time they convince themselves they are. The exam objectives cover serious ground. Data ingestion, transformations, Spark SQL, DataFrame operations, joins, aggregations, output validation. You need muscle memory here. Not "I skimmed some tutorials" but "I've hammered through this exact task 50 times and could do it half-asleep."

The passing score thing drives everyone nuts because Cloudera won't publish an exact number, but grading's pretty straightforward. Your code either produces the correct output files in the right format or it doesn't. Period. Time management gets critical when you're wrestling 8 to 12 real-world tasks in 120 minutes, and while practice tests help, they're only useful if they legitimately mimic that performance-based format with ETL-style scenarios. I remember my buddy Jake, who spent three months drilling theory and completely bombed because he'd never actually written the code under pressure. He just sat there staring at the console like it was gonna solve itself. Anyway, the point is you need hands-on work, not theory questions that don't translate.

Cost-wise, the CCA Spark and Hadoop Developer exam runs around $295. Not cheap. You definitely don't wanna pay that twice 'cause you rushed in unprepared. Build your own practice environment, grind through every exam objective until you're legitimately bored, and drill those Spark DataFrame transformations and actions until they're absolute second nature. The PySpark and Spark SQL tasks demand speed and accuracy at the same time. No compromises.

Before you schedule, just make sure your hands-on skills really match what the CCA175 exam objectives actually require. Read the task, plan your approach, execute cleanly, verify output. That's your loop. Save your command history, double-check file formats, validate row counts every single time.

If you're hunting for solid preparation materials that mirror the actual performance-based format, the CCA175 Practice Exam Questions Pack at /cloudera-dumps/cca175/ gives you realistic scenarios to work through. Not gonna lie, it's probably the closest thing to sitting for the real exam without actually paying Cloudera $295. Practice is everything for this Cloudera Spark developer certification. Get your reps in.

How to Open Test Engine .dumpsarena Files

Use FREE DumpsArena Test Engine player to open .dumpsarena files

DumpsArena.co has a remarkable success record. We're confident of our products and provide a no hassle refund policy.

Your purchase with DumpsArena.co is safe and fast.

The DumpsArena.co website is protected by 256-bit SSL from Cloudflare, the leader in online security.