DAS-C01 Practice Exam - AWS Certified Data Analytics - Specialty

Reliable Study Materials & Testing Engine for DAS-C01 Exam Success!

Exam Code: DAS-C01

Exam Name: AWS Certified Data Analytics - Specialty

Certification Provider: Amazon AWS

Certification Exam Name: AWS Certified Data Analytics

DAS-C01: AWS Certified Data Analytics - Specialty Study Material and Test Engine

Last Update Check: Mar 15, 2026

Latest 164 Questions & Answers

Training Course 123 Lectures (12 Hours) - Course Overview

DumpsArena's free Amazon AWS Certified Data Analytics - Specialty (DAS-C01) practice exam simulator is a test engine for exam preparation that combines exam-style simulation, adaptive practice, and an intuitive design to help candidates prepare for certification.

Amazon AWS DAS-C01 Exam FAQs

Introduction to the Amazon AWS DAS-C01 Exam

The Amazon AWS DAS-C01 exam is a certification exam that tests a candidate's knowledge and skills in designing, building, securing, and maintaining data analytics solutions on the Amazon Web Services (AWS) platform. The exam covers data collection, storage and data management, processing, analysis and visualization, and security across the analytics lifecycle.

What is the Duration of Amazon AWS DAS-C01 Exam?

The Amazon AWS DAS-C01 exam is a 180-minute exam consisting of multiple-choice and multiple-response questions.

How Many Questions Are in the Amazon AWS DAS-C01 Exam?

There are 65 questions in the Amazon AWS DAS-C01 exam.

What is the Passing Score for Amazon AWS DAS-C01 Exam?

The passing score for the Amazon AWS DAS-C01 exam is 750 on a scaled range of 100 to 1000.

What is the Competency Level required for Amazon AWS DAS-C01 Exam?

The Amazon AWS DAS-C01 exam is a Specialty-level exam aimed at candidates with advanced, hands-on experience in the data analytics domain.

What is the Question Format of Amazon AWS DAS-C01 Exam?

The Amazon AWS DAS-C01 exam consists of multiple-choice and multiple-response questions.

How Can You Take Amazon AWS DAS-C01 Exam?

The Amazon AWS DAS-C01 exam can be taken either online or at a testing center. In both cases you register through Pearson VUE, choosing either an online proctored exam or an in-person proctored appointment, and then receive an email with instructions on how to access the exam.

In What Languages Is the Amazon AWS DAS-C01 Exam Offered?

The Amazon AWS DAS-C01 exam is offered in English, Japanese, Korean, and Simplified Chinese.

What is the Cost of Amazon AWS DAS-C01 Exam?

The cost of the Amazon AWS DAS-C01 exam is $300 USD.

What is the Target Audience of Amazon AWS DAS-C01 Exam?

The target audience for the Amazon AWS DAS-C01 exam is individuals seeking the AWS Certified Data Analytics - Specialty credential, typically people with experience collecting, storing, processing, analyzing, and visualizing data on the AWS platform.

What is the Average Salary of an Amazon AWS DAS-C01 Certified Professional?

The average salary for someone with an Amazon AWS DAS-C01 certification is around $120,000 per year. However, salaries can vary widely depending on experience, location, and other factors.

Who are the Testing Providers of Amazon AWS DAS-C01 Exam?

The Amazon AWS DAS-C01 exam is developed by Amazon Web Services (AWS) and delivered through Pearson VUE, either at a testing center or via online proctoring. AWS offers practice exams and study materials to help you prepare, and third-party providers also offer practice exams and study materials.

What is the Recommended Experience for Amazon AWS DAS-C01 Exam?

AWS recommends at least five years of experience with common data analytics technologies and at least two years of hands-on experience designing, building, securing, and maintaining analytics solutions on AWS. Relevant services include Amazon S3, AWS Glue, AWS Lake Formation, Amazon Kinesis, Amazon EMR, Amazon Redshift, Amazon Athena, Amazon OpenSearch Service, and Amazon QuickSight. Experience with cloud security best practices, such as encryption and fine-grained access control, is also beneficial.

What are the Prerequisites of Amazon AWS DAS-C01 Exam?

AWS does not set formal prerequisites for the Amazon AWS DAS-C01 exam; you can register without holding another AWS certification. The recommended experience described above is guidance rather than a requirement.

What is the Expected Retirement Date of Amazon AWS DAS-C01 Exam?

The official website for checking the expected retirement date of Amazon AWS DAS-C01 exam is https://aws.amazon.com/certification/certification-exam-retirement-dates/.

What is the Difficulty Level of Amazon AWS DAS-C01 Exam?

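The Amazon AWS DAS-C01 exam is generally considered challenging. It is a specialty-level exam built around long scenario questions in which several answers can look technically valid.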

What is the Roadmap / Track of Amazon AWS DAS-C01 Exam?

DAS-C01 sits in the Specialty tier of AWS certifications. Many candidates first earn an associate-level certification, such as AWS Certified Solutions Architect - Associate, and then specialize. The exam covers the full analytics lifecycle: collection, storage and data management, processing, analysis and visualization, and security. Passing it demonstrates that you can design, build, secure, and maintain analytics solutions on AWS.

What Topics Does the Amazon AWS DAS-C01 Exam Cover?

The Amazon AWS DAS-C01 exam covers five weighted domains:

1. Collection (18%): Choosing and operating ingestion services for batch and streaming data, such as Amazon Kinesis Data Streams, Amazon Kinesis Data Firehose, and Amazon MSK, and landing data reliably in Amazon S3, Amazon Redshift, or Amazon OpenSearch Service.

2. Storage and Data Management (22%): Designing data lakes on Amazon S3, choosing file formats and partitioning strategies, cataloging data with the AWS Glue Data Catalog, and managing data lifecycle and governance with AWS Lake Formation.

3. Processing (24%): Building batch and streaming transformations with AWS Glue, Amazon EMR, AWS Lambda, and Kinesis Data Analytics, and orchestrating them with services such as AWS Step Functions.

4. Analysis and Visualization (18%): Querying and presenting data with Amazon Athena, Amazon Redshift (including Redshift Spectrum), Amazon OpenSearch Service, and Amazon QuickSight.

5. Security (18%): Protecting analytics data with encryption at rest and in transit, IAM policies, Lake Formation permissions, and data governance and compliance controls.

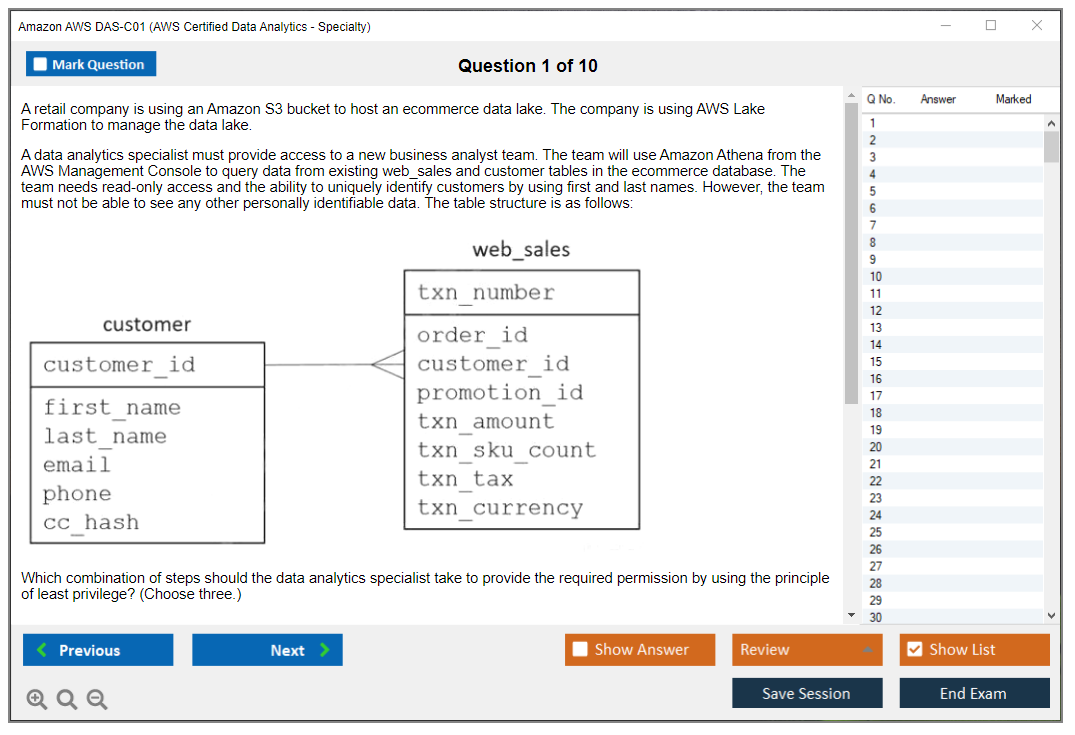

What are the Sample Questions of Amazon AWS DAS-C01 Exam?

1. When would you choose Amazon Kinesis Data Firehose over Amazon Kinesis Data Streams for ingesting clickstream data into Amazon S3?

2. How do you calculate the number of shards a Kinesis data stream needs for a given record rate and record size?

3. How do partitioning and columnar formats such as Parquet reduce Amazon Athena query cost?

4. When should you query data in place with Amazon Redshift Spectrum instead of loading it into Amazon Redshift?

5. How do AWS Lake Formation permissions interact with IAM policies and S3 bucket policies?

6. What do AWS Glue job bookmarks do, and how do they support incremental loads?

7. When is Amazon EMR a better fit than AWS Glue for Spark-based transformations?

8. How do you choose a Redshift distribution style (KEY, EVEN, ALL, or AUTO) for a large fact table?

9. What is the difference between SPICE and direct query in Amazon QuickSight?

10. How do you encrypt data at rest and in transit across an S3-based data lake and Amazon Redshift?

Amazon AWS DAS-C01 (AWS Certified Data Analytics - Specialty)

AWS Certified Data Analytics, Specialty (DAS-C01) Overview

The AWS Certified Data Analytics, Specialty DAS-C01 represents AWS's professional-level validation for data analytics practitioners who design, build, secure, and maintain analytics solutions on the AWS platform. This isn't some weekend cert. Not even close. It's designed for people who actually work with data pipelines, data lakes, and analytics systems every day and need to prove they know what they're doing beyond just clicking through the console. You know, folks who've dealt with production incidents at 2 AM and made architectural decisions that either saved or cost their company serious money.

Look, this certification targets data analysts, data engineers, business intelligence developers, and solutions architects who work extensively with AWS analytics services (Glue, Athena, Redshift, Kinesis) and need to demonstrate full expertise. It's not just about knowing these services exist. You need to understand when to use Athena versus Redshift Spectrum, or why you'd choose Kinesis Data Firehose over Data Streams in specific scenarios that actually matter to business outcomes and budget constraints. The exam really digs into those architectural decisions that separate someone who's read the docs from someone who's actually architected production systems, dealt with stakeholder requirements, and had to justify their technology choices to leadership who may or may not understand why you can't just "put it in Excel."

DAS-C01 validates your ability to implement AWS services to derive value from data, including collection, storage, processing, analysis, visualization, and security across the entire analytics lifecycle. That's the whole enchilada. You're not just memorizing service features. The thing is, you're showing you can stitch together end-to-end solutions that actually solve business problems while meeting non-functional requirements like cost targets and performance SLAs.

Unlike associate-level certifications, this specialty exam requires deep understanding of designing analytics pipelines on AWS with appropriate service selection based on specific business requirements and technical constraints. The questions aren't straightforward "what service does X" type stuff. They give you a scenario with multiple competing requirements (maybe cost optimization versus query performance, or real-time processing versus operational simplicity) and you need to pick the best approach given constraints that sound frustratingly similar to real-world project discussions. Sometimes multiple answers could work, but only one is optimal given the specific constraints mentioned. It can be tough to parse sometimes.

What this certification actually validates

The certification demonstrates proficiency in data lake concepts, including data lake architecture, cataloging, partitioning strategies, and integration with downstream analytics tools. You need to understand how Lake Formation permissions interact with IAM policies. How the Glue Data Catalog integrates with Athena, Redshift Spectrum, and EMR. Why you'd partition data one way versus another based on query patterns that'll emerge six months down the road when your data volume triples and suddenly everyone's complaining about query performance. This stuff matters when you're managing petabytes of data and query costs can spiral out of control with poor architecture decisions. I've seen monthly bills jump from $5K to $50K because someone didn't understand partition pruning.
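
To make partition pruning concrete, here is a minimal Python sketch, assuming a hypothetical `logs/` table stored under Hive-style `year=/month=/day=` prefixes with one 1 GiB Parquet file per day. It only models the idea: a query engine such as Athena reads partition metadata from the Glue Data Catalog rather than parsing S3 keys like this, but the reduction in bytes read works the same way in principle.

```python
from dataclasses import dataclass

GIB = 1024 ** 3

@dataclass(frozen=True)
class S3Object:
    key: str
    size_bytes: int

def parse_partitions(key: str) -> dict:
    """Extract Hive-style key=value segments (e.g. year=2024) from an S3 object key."""
    values = {}
    for segment in key.split("/"):
        if "=" in segment:
            name, _, value = segment.partition("=")
            values[name] = value
    return values

def prune(objects, predicate):
    """Keep only the objects whose partition values satisfy the query predicate."""
    return [obj for obj in objects if predicate(parse_partitions(obj.key))]

# Hypothetical table: one 1 GiB Parquet file per day, 28 days per month, for 2024
objects = [
    S3Object(f"logs/year=2024/month={m:02d}/day={d:02d}/part-0000.parquet", GIB)
    for m in range(1, 13)
    for d in range(1, 29)
]

# Equivalent of: SELECT ... FROM logs WHERE year = '2024' AND month = '03'
march = prune(objects, lambda p: p.get("year") == "2024" and p.get("month") == "03")

full_scan_gib = sum(o.size_bytes for o in objects) // GIB
pruned_scan_gib = sum(o.size_bytes for o in march) // GIB
print(f"full scan: {full_scan_gib} GiB, pruned scan: {pruned_scan_gib} GiB")
```

A filter on a column that lives only inside the files (say, a `status` field) gives the engine nothing to prune on, so every file is still read.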

Real work experience matters.

Candidates must understand data ingestion and transformation on AWS patterns, including batch and streaming ingestion, ETL/ELT processes, and data quality management across diverse data sources. This is where a lot of people struggle if they haven't done real implementation work. The difference between using Glue ETL jobs versus Lambda functions versus EMR for transformation isn't always obvious from reading documentation. You need to have hit the limitations of each approach, dealt with timeout issues, wrestled with memory constraints, and optimized job execution times to really internalize when each makes sense for different scenarios.
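
One way to internalize those limits is to encode them. The Python sketch below checks an estimated transform against two AWS Lambda hard limits, the 900-second (15-minute) maximum timeout and the 10,240 MB memory ceiling (verify both against the current Lambda documentation). The `suggest_engine` heuristic and the estimates fed into it are hypothetical; a real choice between Lambda, Glue, and EMR also depends on data volume, libraries, and cost.

```python
LAMBDA_MAX_TIMEOUT_S = 900      # Lambda's maximum function timeout (15 minutes)
LAMBDA_MAX_MEMORY_MB = 10_240   # Lambda's maximum configurable memory

def lambda_fit_issues(est_runtime_s: float, est_peak_memory_mb: int) -> list:
    """Return the reasons a single Lambda invocation can't handle this transform."""
    issues = []
    if est_runtime_s > LAMBDA_MAX_TIMEOUT_S:
        issues.append(f"runtime {est_runtime_s:.0f}s exceeds the {LAMBDA_MAX_TIMEOUT_S}s timeout")
    if est_peak_memory_mb > LAMBDA_MAX_MEMORY_MB:
        issues.append(f"peak memory {est_peak_memory_mb} MB exceeds {LAMBDA_MAX_MEMORY_MB} MB")
    return issues

def suggest_engine(est_runtime_s: float, est_peak_memory_mb: int) -> str:
    """Hypothetical heuristic: light transforms fit Lambda; heavier jobs go to Glue or EMR."""
    if lambda_fit_issues(est_runtime_s, est_peak_memory_mb):
        return "Glue or EMR"
    return "Lambda"

print(suggest_engine(120, 1_024))      # small per-file cleanup
print(suggest_engine(3_600, 16_000))   # hour-long join over a large dataset
```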

The exam tests real-world scenario judgment. Not gonna lie, this is what makes it challenging and kind of frustrating when you're sitting there second-guessing yourself. You'll see questions where three or four options could technically work, but you need to evaluate multiple valid solutions and select the most appropriate based on cost optimization, performance requirements, scalability needs, and operational excellence. Basically all the stuff your manager and finance team care about simultaneously.

Data governance and security in AWS analytics forms a critical component, covering encryption at rest and in transit, access control through IAM policies and Lake Formation permissions, data masking, and compliance requirements. I've seen people who're strong on the technical architecture side trip up on governance questions because they haven't dealt with compliance requirements or fine-grained access control in their day jobs. This domain is important and you can't skip it, especially if you're working with PII or healthcare data where regulations actually have teeth.
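
Data masking is easier to reason about with an example. This is a generic Python sketch of two common techniques, salted hashing (pseudonymization that keeps join keys consistent) and partial redaction. It is not a specific AWS feature, and the field names and salt are hypothetical. In a real pipeline the salt would live in a secrets store, and because hashed low-entropy values can be guessed, hashing alone may not satisfy a given regulation.

```python
import hashlib

def mask_email(email: str, salt: str) -> str:
    """Replace an email with a salted SHA-256 token: joins still work, the value is hidden."""
    return hashlib.sha256((salt + email.lower()).encode("utf-8")).hexdigest()

def mask_card(card_number: str) -> str:
    """Keep only the last four digits of a card number."""
    digits = "".join(ch for ch in card_number if ch.isdigit())
    return "*" * (len(digits) - 4) + digits[-4:]

def mask_record(record: dict, salt: str) -> dict:
    """Return a copy of the record with its PII fields masked."""
    masked = dict(record)
    masked["email"] = mask_email(record["email"], salt)
    masked["card"] = mask_card(record["card"])
    return masked

row = {"customer_id": 42, "email": "Jane@Example.com", "card": "4111 1111 1111 1234"}
print(mask_record(row, salt="demo-salt"))
```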

The certification remains valid for three years from the date you pass; after that, you need to recertify to keep it active. AWS has been pushing their new recertification program where you can take a shorter renewal exam instead of the full thing, which is nice. But in three years the analytics space changes enough that you'd benefit from reviewing updated content anyway. Lake Formation didn't even exist when some folks first certified, and now it's central to data lake architectures.

Why this cert matters for your career

AWS regularly updates exam content to reflect new services, features, and best practices, keeping certified professionals current with the evolving analytics ecosystem. They retired the older Big Data Specialty (BDS-C00) and replaced it with DAS-C01 to reflect the shift toward modern analytics patterns and newer services like Lake Formation. That's going to keep happening. The exam evolves as AWS releases new capabilities, which is both annoying and necessary if you think about it.

This specialty certification sits alongside other AWS specialty tracks like AWS Certified Security - Specialty and AWS Certified Machine Learning - Specialty and represents equivalent professional-level expertise in the data analytics domain. It's positioned above associate-level certs like AWS Certified Solutions Architect - Associate in terms of depth and specialization. Some people go for the Solutions Architect Professional, others specialize. Both paths are valid depending on your career direction and what kind of work actually interests you.

Earning DAS-C01 certification signals to employers that you can architect end-to-end analytics solutions, troubleshoot complex data pipeline issues, and optimize analytics workloads for cost and performance without constant hand-holding or escalation. In job descriptions for senior data engineering roles, you'll often see this cert listed as preferred or required. It's become the de facto standard for validating AWS analytics expertise, similar to how the Solutions Architect certs validate general AWS architecture skills. Though some people have mixed feelings about cert requirements in job postings.

The exam covers five major domains with varying weights, requiring balanced knowledge across collection, storage, processing, analysis and visualization, and security rather than specialization in just one area. You can't just be amazing at Redshift optimization and skip Kinesis. You need breadth across the entire analytics stack, which means studying stuff you might never use in your current role. The domains are weighted differently, with processing (24%) and storage and data management (22%) carrying the most weight and collection, analysis and visualization, and security at 18% each, but every domain shows up enough that you can't ignore any of them.

Skills and knowledge you'll demonstrate

Successful candidates typically combine hands-on AWS experience with structured study of service documentation, whitepapers, and best practices guides specific to analytics workloads. Look, I'm not saying you can't pass without production experience, but it's significantly harder. The questions are written to separate people who've only done tutorials from people who've made architectural decisions with real consequences. Budget impacts, performance issues, security incidents, that sort of thing.

The certification benefits professionals seeking career advancement in data engineering, analytics engineering, business intelligence, or cloud data architecture roles where AWS expertise commands premium compensation. Data engineers with this cert regularly see salary bumps or better job opportunities. The market demand for AWS analytics skills is strong, especially in organizations doing cloud migrations or building modern data platforms. Though the competition's getting tougher as more people certify.

You'll demonstrate the ability to define AWS data analytics services and understand how they integrate to create full analytics solutions addressing specific business problems. It's not just knowing what Glue does. It's understanding how Glue connects to S3, the Data Catalog, Lake Formation, Athena, Redshift, and EMR in a cohesive architecture that actually delivers business value instead of just consuming budget. The integration points and data flow patterns matter more than individual service features when you're designing real systems.

You'll also need skills in implementing and automating data ingestion and transformation on AWS using services like AWS Glue, AWS Data Pipeline, Amazon Managed Streaming for Apache Kafka (MSK), and the Amazon Kinesis family. You need to know when to use Kinesis Data Streams versus Firehose, how MSK compares to Kinesis, and when Data Pipeline makes sense versus Step Functions orchestrating Lambda and Glue. Data Pipeline feels a bit dated now but it still appears on the exam. These aren't academic questions. They come up constantly in real implementations where the wrong choice means rework and missed deadlines.

Expect to show proficiency in designing and maintaining data lake architectures using Amazon S3, AWS Lake Formation, AWS Glue Data Catalog, and appropriate partitioning and bucketing strategies. The data lake pattern has become dominant for modern analytics, and understanding how to architect one properly on AWS is key for anyone working in this space. You need to know how to organize data in S3, define schemas in the Data Catalog, manage permissions through Lake Formation, and optimize for query performance without creating a maintenance nightmare six months down the road.

Who should actually pursue this certification

Data engineers with 2-5 years of experience designing and implementing data pipelines who want to validate their AWS-specific analytics expertise and advance their careers. If you're already building pipelines with Glue or orchestrating workflows with Step Functions, this cert formalizes what you know and fills gaps in areas you haven't worked with yet. That's valuable even if you never use those services.

Business intelligence developers transitioning from on-premises or other cloud platforms to AWS who need to prove proficiency with AWS analytics and visualization services. Maybe you've been using Tableau with SQL Server or Oracle for years, and now your company's moving to AWS. This cert helps you learn the AWS equivalents (QuickSight, Athena, Redshift) and prove you can work in the new environment without a lengthy ramp-up period that makes hiring managers nervous.

Solutions architects specializing in data and analytics workloads who design end-to-end analytics architectures for enterprise clients and need recognized credentials. If you're designing solutions for clients, having this cert gives you credibility and often satisfies client requirements for certified staff on projects. It's similar to how AWS Certified Solutions Architect - Professional works for general architecture roles, though I'd argue this specialty's more focused and potentially more valuable for analytics-specific engagements.

Data analysts with strong technical skills who've expanded into data engineering responsibilities and work extensively with AWS analytics services in their daily roles. I've seen analysts who started querying data evolve into building the pipelines that generate that data. If that's your trajectory, this cert validates the engineering skills you've developed beyond traditional analysis work. Skills that might not be obvious from your job title but definitely impact your value to the organization.

Cloud engineers or DevOps professionals who manage analytics infrastructure and want to deepen their understanding of analytics-specific AWS services and best practices. You might already know CloudFormation, IAM, and general AWS operations, but analytics workloads have specific patterns and services you need to understand. This cert bridges that gap between general cloud ops and analytics-specific requirements that can trip you up if you're just applying generic cloud patterns.

Professionals working with AWS analytics services (Glue, Athena, Redshift, Kinesis) who want to formalize their knowledge and gain recognition for their hands-on experience. Sometimes you learn by doing and then want the credential that proves you know what you're doing. That's completely valid. The cert validates and often extends your practical knowledge, exposing you to use cases and patterns you haven't encountered yet in your specific role.

Consultants and contractors who need to prove verified expertise to clients and differentiate themselves in competitive markets where AWS analytics skills command premium rates. When you're billing $150-200+ per hour, clients want to see certifications as evidence you know your stuff. Fair or not, that's the reality. This specialty cert's more valuable than associate-level certs for specialized analytics work and can literally be the difference in landing contracts.

Career changers from related fields like database administration, ETL development, or traditional BI who've invested in learning AWS analytics and want to validate their new skills. The cloud analytics space is different enough from traditional on-premises data warehousing that formal validation helps show you've made the transition successfully. That can be key when you're competing against people with cloud-native backgrounds.

Professionals who've already earned AWS Certified Solutions Architect - Associate or AWS Certified Developer - Associate and want to specialize in the data analytics domain for career growth and increased earning potential. The associate certs give you AWS fundamentals, and this specialty dives deep into one domain. It's a natural progression for career advancement, though it's definitely a step up in difficulty and time investment.

DAS-C01 Exam Details

AWS Certified Data Analytics, Specialty (DAS-C01) overview

AWS Certified Data Analytics, Specialty DAS-C01 basically tests whether you can actually build analytics pipelines on AWS that won't collapse at 2 a.m. during a data spike. Real work stuff. It's aimed at folks handling ingestion, storage, processing, and analysis, not just people who've memorized service names from flashcards. You're expected to examine a business scenario, identify the constraints, and select the best AWS pattern, even when multiple answers look totally "fine" initially.

This cert aligns well with data engineers, analytics engineers, data platform folks, and solutions architects who constantly get dragged into analytics projects. Honestly, it's a solid "I can talk to both the BI team and the platform team without losing my mind" signal, you know? And yeah, it's also an AWS data lake certification in spirit. The exam absolutely loves lakes, governance, and "where should this dataset actually live."

What DAS-C01 validates (skills and roles)

You're proving you can build and operate data ingestion and transformation on AWS, choose the right storage layer, and make tradeoffs across cost, latency, and operational overhead. Not theoretical.

Practical.

Expect questions pushing you into messy middle-ground decisions. I mean, Kinesis vs. MSK, Glue vs. EMR, Redshift vs. Athena, Lake Formation vs. DIY IAM and bucket policies. Then they sprinkle in performance tuning, partitioning, encryption, and "someone needs access but not that access."

Who should take this certification

If you've been hands-on with Glue crawlers, Athena partitions, Redshift sort keys, Kinesis shard math, or you've had to explain why everyone querying the raw bucket is a terrible idea, you're in the target zone.

If you're brand new to AWS analytics, you can still take it, but look, it'll feel like drinking from a firehose. Not impossible. Just... loud.

DAS-C01 exam details

The AWS Certified Data Analytics, Specialty DAS-C01 exam is 65 questions, computer-based, delivered through Pearson VUE at a testing center or via online proctoring. You get 180 minutes. That's roughly 2.8 minutes per question, which sounds generous until you hit those long scenario prompts with exhibits and you're also doing architecture in your head while fighting the clock.

Two question types show up. Multiple-choice (one correct out of four). Multiple-response (two or more correct out of five or more), and the UI tells you how many you need to select, which is nice because guessing "is it two or three" isn't a fun hobby.

Questions are scenario-based. Not trivia. You'll get a company situation, current stack, data volumes, latency needs, compliance rules, and then you pick the most appropriate solution built from AWS analytics services (Glue, Athena, Redshift, Kinesis, and the rest). Distractors are often "almost right." Like, it'd work, but it's too expensive, or it misses governance, or it adds operational babysitting nobody wants.

Some questions get service-nerdy. Shard counts for Kinesis, tuning Athena with partitions and columnar formats, Redshift distribution styles, Glue job bookmarks and worker types. Others are end-to-end, the classic "designing analytics pipelines on AWS" prompt where you stitch ingestion, lake storage, ETL, warehouse, and BI together with security layered on top.
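
The Kinesis shard math is mechanical once you know the per-shard limits for a provisioned-mode data stream: 1 MB/s or 1,000 records/s of writes, and 2 MB/s of reads shared by all standard consumers (enhanced fan-out consumers each get their own 2 MB/s per shard, and on-demand mode manages shard counts for you). A minimal Python sketch, assuming 1 MB = 1,024 KB and a steady load spread evenly across shards with no headroom:

```python
import math

SHARD_WRITE_MB_S = 1.0        # per-shard ingest limit (bytes)
SHARD_WRITE_RECORDS_S = 1000  # per-shard ingest limit (records)
SHARD_READ_MB_S = 2.0         # per-shard read limit, shared by standard consumers

def shards_needed(records_per_s: float, avg_record_kb: float, standard_consumers: int = 1) -> int:
    """Minimum shard count for a provisioned Kinesis data stream under even load."""
    write_mb_s = records_per_s * avg_record_kb / 1024
    by_write_bytes = math.ceil(write_mb_s / SHARD_WRITE_MB_S)
    by_write_records = math.ceil(records_per_s / SHARD_WRITE_RECORDS_S)
    # Each standard consumer reads the full stream, and they share the read limit
    by_read = math.ceil(write_mb_s * standard_consumers / SHARD_READ_MB_S)
    return max(1, by_write_bytes, by_write_records, by_read)

print(shards_needed(5_000, 2))                        # → 10 (write bytes dominate)
print(shards_needed(5_000, 2, standard_consumers=3))  # → 15 (read fan-out dominates)
print(shards_needed(3_000, 0.1))                      # → 3 (record count dominates)
```

Exam questions often hinge on which of the three limits binds first, which is why the function takes the maximum rather than summing them.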

Exhibits happen. Architecture diagrams, config snippets, bits of code, sample data layouts. Read them carefully. The thing is, half the time the answer's hiding in a tiny constraint like "must support cross-account access" or "data must be deleted after 30 days."

The exam UI lets you mark questions for review and move around freely. Do that. This is one of those tests where you sometimes need the later questions to jog your memory about what AWS expects as the "best" pattern right now.

No notes. No docs. No phone. No second monitor. Nothing. You either know it or you don't.

Delivery options (online vs. testing center)

Online proctoring's convenient, but it's strict. Webcam on, screen shared, desk cleared, ID check, and they can be picky about your room setup. Wait, is that a mirror behind you? If you live in a noisy place, or your internet does that cute thing where it drops for 20 seconds, consider a testing center.

Testing centers are boring in a good way. Quiet. Controlled. They give you the workstation, usually headphones, and either a whiteboard or scratch paper depending on location.

Same content. Same scoring. Pick your stress flavor.

Cost (exam fee and add-ons)

The DAS-C01 exam cost is $300 USD. That's the standard AWS specialty price, more than associate exams ($150), and aligned with other specialty certs.

Once scheduled, the fee's generally non-refundable. You can reschedule up to 24 hours before the appointment without penalty. Within 24 hours, you typically forfeit the fee, and yeah, paying another $300 hurts.

AWS includes your score report, digital badge, and certificate download. No extra charge.

Practice exams exist. AWS Skill Builder has a practice exam around $40 with 20 questions and explanations. Helpful, but it's not a full simulator.

Lots of people also buy third-party DAS-C01 study materials like video courses, books, and question banks. That's optional, but it adds up fast. If you want one focused thing that's basically "give me lots of exam-style questions and let me build reps," I'd point you to something like the DAS-C01 Practice Exam Questions Pack as a targeted add-on, especially once you've already read the official exam guide and you're ready to pressure-test your weak spots.

Also, retakes cost the full $300 again.

No discounts by default.

So prep well. Your wallet'll thank you later.

Passing score (how scoring works)

The DAS-C01 passing score is 750 on a scaled score range from 100 to 1000. Scaled scoring means AWS converts your raw performance into a standard scale that accounts for question difficulty differences across exam versions.

AWS does not publish how many questions you need correct to hit 750. You can't reverse-engineer it reliably. Some questions are unscored "pilot" items used for stats and future exam building, and they aren't labeled. Answer everything like it counts.

After you finish, results show up fast. Online proctored exams typically give you the pass/fail result immediately, and testing centers usually post within a few hours. In your AWS Certification account you'll see pass/fail and the scaled score, plus domain performance bands like "needs improvement," "at target," or "above target." You won't get question-by-question feedback. That's just how AWS runs it.

Failing means a 14-day waiting period before retaking. No limit on total attempts, but each attempt's another $300, so... you know.

The exam's offered in English, Japanese, Korean, and Simplified Chinese.

DAS-C01 exam objectives (domains)

The AWS DAS-C01 exam objectives are split into five weighted domains. The weights matter. They should shape how you study, because "I'll just focus on my favorite services" is how people end up rebooking the exam.

Domain 1: Data collection (18%)

This is ingestion. Batch and streaming. You should be comfortable with Kinesis Data Streams vs. Kinesis Data Firehose, when to use SQS, when IoT Core fits, and how you land data reliably into S3, Redshift, or OpenSearch.

One detail people miss: the exam likes operationally simple ingestion when requirements allow it. Firehose to S3 with buffering and format conversion can beat a custom consumer app, even if the custom app feels more "engineer-y."

Domain 2: Storage and data management (22%)

S3's the center of gravity. Data lakes. Partitioning. File formats. Cataloging with Glue Data Catalog. Governance with Lake Formation. Lifecycle policies. Cross-account patterns. This is also where you'll see questions about choosing between S3, Redshift, DynamoDB, and other stores based on access patterns.

A common exam trap is confusing "where to store" with "how to query." Athena querying S3 isn't the same thing as a warehouse, and Redshift being fast doesn't mean you should dump every raw log into it.

Domain 3: Data processing (24%)

Biggest weight. Expect Glue ETL, Glue Studio, EMR (Spark, Hive, Presto), Lambda for light transforms, Step Functions for orchestration, and how to handle retries, idempotency, and incremental loads.

Know what Glue job bookmarks do, what triggers and workflows are, and when EMR is the better fit because you need custom libraries, long-running clusters, or fine-grained Spark tuning. Also remember that "processing" includes stream processing, so Kinesis Data Analytics and Lambda stream consumers can show up.
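To make the bookmark idea concrete, here's a minimal sketch of timestamp-based incremental loading. It's a conceptual analogue, not Glue's implementation: for S3 sources, Glue bookmarks track object last-modified times, and in a real Glue script you enable them with a transformation_ctx on each source plus a job.commit() at the end. The Bookmark class and new_objects function are hypothetical names.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class Bookmark:
    """Hypothetical persisted state between runs (not Glue's real format)."""
    last_modified: float = 0.0                            # high-water mark (epoch seconds)
    seen_at_mark: Set[str] = field(default_factory=set)  # keys already processed at that exact mark


def new_objects(listing: Dict[str, float], bm: Bookmark) -> List[str]:
    """Return keys from {key: last_modified} that haven't been processed yet,
    then advance the bookmark so a rerun skips them."""
    fresh = sorted(
        key for key, ts in listing.items()
        if ts > bm.last_modified or (ts == bm.last_modified and key not in bm.seen_at_mark)
    )
    if fresh:
        newest = max(listing[key] for key in fresh)
        if newest > bm.last_modified:
            # Moving the mark forward: keys seen at the old mark no longer matter.
            bm.last_modified = newest
            bm.seen_at_mark = set()
        bm.seen_at_mark |= {key for key in fresh if listing[key] == bm.last_modified}
    return fresh


if __name__ == "__main__":
    bm = Bookmark()
    listing = {"raw/a.json": 100.0, "raw/b.json": 200.0}
    print(new_objects(listing, bm))   # first run picks up both files
    print(new_objects(listing, bm))   # rerun: nothing new, so the load is idempotent
    listing["raw/c.json"] = 300.0
    print(new_objects(listing, bm))   # only the newly landed file
```

Rerunning against the same listing returns nothing, which is the property that makes retries safe. One caveat of this sketch's timestamp mark: an object that lands late with an older timestamp gets skipped.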

Domain 4: Analysis and visualization (18%)

Athena, Redshift (including Spectrum), QuickSight, OpenSearch, and sometimes the "how do analysts access this" side of things. Performance tuning for Athena shows up more than people expect: partitions, Parquet/ORC, compression, file sizing, and avoiding scanning the whole lake.

QuickSight's usually straightforward on the exam, but they may ask about SPICE vs. direct query, row-level security, or how to share dashboards with the right access boundaries.

Domain 5: Security (18%)

This is data governance and security in AWS analytics. IAM, KMS encryption, bucket policies, VPC endpoints, private connectivity, Lake Formation permissions, CloudTrail, and sometimes Macie.

Security questions are rarely just "turn on encryption." They're more like "how do we enforce least privilege for teams querying shared tables across accounts without giving them direct S3 access," and Lake Formation is often the expected answer.

Key AWS services to know

Glue, Athena, Redshift, EMR, Kinesis (Streams and Firehose), Lake Formation, QuickSight. Also know supporting pieces like S3, IAM, KMS, CloudWatch, CloudTrail, Step Functions, Lambda, and VPC networking basics.

DAS-C01 prerequisites and recommended experience

Prerequisites (official vs. recommended)

AWS doesn't gatekeep the exam with hard prerequisites.

No required certs.

The real-world expectation is something like 1 to 2 years working with analytics workloads on AWS, or at least enough hands-on practice to mimic it. If you've never partitioned a dataset or debugged a Glue job run, you're going to be learning and testing at the same time, and that's rough.

Hands-on experience expectations

You should've touched the console enough to not waste time hunting for settings. Same for CLI basics. IaC matters too. The exam assumes familiarity with CloudFormation or Terraform concepts for analytics resources, not because it wants syntax, but because it wants repeatable infrastructure thinking.

Build something small. A mini lake on S3. Glue crawler to catalog it. Athena queries. Maybe load curated data into Redshift. Then visualize in QuickSight. Throw in Lake Formation permissions if you want to match exam vibes.

I once spent a weekend building a pipeline just to understand why everyone complains about the small-file problem in S3. Turns out they're right. Query performance tanks when you've got 50,000 tiny JSON files instead of 50 properly sized Parquet files. You can read about that, sure, but watching Athena crawl through a badly structured bucket at $5 per terabyte scanned really drives the lesson home.
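The usual fix is a compaction pass that rewrites many small objects into fewer, larger ones. Here's a minimal sketch of the planning step, greedily grouping files toward a target size. The 128 MB target is a commonly cited rule of thumb (an assumption here), and the file sizes are hypothetical. It only plans batches by input size; the actual rewrite would be a Glue or EMR Spark job, and converting JSON to compressed Parquet would shrink the output further.

```python
from typing import List


def plan_compaction(file_sizes: List[int], target_bytes: int) -> List[List[int]]:
    """Greedily group file sizes (bytes) into batches whose total stays at or
    under target_bytes. Each batch would become one larger output file."""
    batches: List[List[int]] = []
    current: List[int] = []
    current_total = 0
    for size in file_sizes:
        # Close the current batch when adding this file would overshoot the target.
        if current and current_total + size > target_bytes:
            batches.append(current)
            current, current_total = [], 0
        current.append(size)
        current_total += size
    if current:
        batches.append(current)
    return batches


if __name__ == "__main__":
    MB = 1024 * 1024
    tiny_files = [100 * 1024] * 50_000  # hypothetical: 50,000 files of 100 KB each
    batches = plan_compaction(tiny_files, target_bytes=128 * MB)
    print(len(tiny_files), "files ->", len(batches), "compacted files")
```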

Helpful prior certs (optional)

An associate-level Solutions Architect cert helps, mostly for IAM, networking, and general AWS patterns. A data engineering background helps even more.

How hard is the AWS Data Analytics Specialty? (Difficulty)

Difficulty level and what makes it challenging

AWS Data Analytics Specialty difficulty is real. It's specialty for a reason. The hard part isn't memorizing service names, it's picking the best design under constraints, especially when multiple answers look plausible.

You're constantly trading off cost vs. performance vs. ops overhead. And AWS favors managed choices where possible, so if you default to building custom consumers and custom Spark clusters for everything, the exam will punish you.

Common stumbling blocks

Service selection confusion is a big one. Another is performance tuning, like knowing what makes Athena cheap and fast versus slow and expensive.

Security and governance also trip people. Lake Formation, cross-account access, column-level permissions, encryption boundaries, and audit trails show up, and they aren't always intuitive if you've only worked in one AWS account with admin rights.

Best study materials for DAS-C01

Study materials (AWS Skill Builder, exam guide, whitepapers)

Start with the official exam guide and the domain breakdown. Then map each domain to docs and FAQs for the big services: Glue, Athena, Redshift, Kinesis, Lake Formation.

Skill Builder courses are fine.

Some are dry.

Still worth it for structured coverage.

Third-party video courses can be great if you need someone to explain the "why," not just the "what." And for repetition, DAS-C01 practice tests matter a lot, because reading AWS's question style is its own skill.

Documentation and FAQs that map to objectives

Read the Athena best practices pages. Read Redshift distribution and sort key docs. Skim Glue job parameters and job bookmarks. Know Kinesis scaling basics and what shard count implies.

You don't need every limit memorized. But you do need the ones that drive architecture decisions.

Hands-on labs and projects (portfolio-style practice)

Build one batch pipeline and one streaming pipeline. Even small. The exam rewards muscle memory.

If you want practice that feels closer to exam pacing, I'd mix hands-on with targeted questions, like the DAS-C01 Practice Exam Questions Pack after each domain, so you're not waiting until the end to find out you misunderstood Lake Formation or Redshift Spectrum.

DAS-C01 practice tests and exam prep strategy

Practice tests (what to look for)

Quality matters more than quantity. You want explanations that teach, not just "B is correct." Watch out for banks that feel like trivia dumps.

Do timed sets. Review wrong answers. Then redo them a week later. That spaced repetition is boring, but it works.

If you prefer a single paid pack to keep you honest, the DAS-C01 Practice Exam Questions Pack is the kind of thing you can cycle through multiple times, building speed and spotting the patterns in how AWS phrases "most cost-effective" versus "lowest operational overhead."

Practice exam review method (error log plus domain mapping)

Keep an error log. A simple doc. Question topic, what you chose, why it was wrong, and which domain it maps to. Fragments are fine. "Athena partition projection confused." "Picked EMR, but Glue simpler." That kind of note.
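If you keep the error log as structured data rather than a doc, a few lines can turn it into a study order. A minimal sketch, assuming you tag each miss with one of the five domains: it ranks domains by miss rate times domain weight, using the weights from the objectives section. The domain keys, the prioritize function, and the sample numbers are all hypothetical.

```python
from collections import Counter
from typing import Dict, List, Tuple

# DAS-C01 domain weights as listed in the exam objectives section.
DOMAIN_WEIGHTS: Dict[str, float] = {
    "collection": 0.18,
    "storage": 0.22,
    "processing": 0.24,
    "analysis": 0.18,
    "security": 0.18,
}


def prioritize(misses: List[str], attempts: Dict[str, int]) -> List[Tuple[str, float]]:
    """Rank domains by (miss rate x domain weight), highest priority first.

    misses: one domain name per wrong answer in the error log.
    attempts: how many practice questions you answered in each domain.
    """
    miss_counts = Counter(misses)
    scores: Dict[str, float] = {}
    for domain, weight in DOMAIN_WEIGHTS.items():
        answered = attempts.get(domain, 0)
        miss_rate = miss_counts[domain] / answered if answered else 0.0
        scores[domain] = miss_rate * weight
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


if __name__ == "__main__":
    log = ["analysis", "processing", "processing", "security", "processing"]
    answered = {"collection": 10, "storage": 12, "processing": 14, "analysis": 10, "security": 10}
    for domain, score in prioritize(log, answered):
        print(f"{domain:<11} {score:.3f}")
```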

Then spend your next study session closing those exact gaps, not rereading everything.

Time management and question strategy

First pass: answer what you know fast. Mark the rest. Second pass: slow down on the marked ones and re-read the constraints, especially security and "must" statements.

Don't leave anything blank. Pilot questions exist, sure, but you don't know which ones they are, so treat every question like it's scored.

DAS-C01 renewal and recertification

Renewal requirements and timeline

AWS certifications expire on a cycle (historically every 3 years). The DAS-C01 renewal approach is basically to recertify before yours lapses, using whatever options AWS's program rules allow at the time you renew.

Recertification options

Often the simplest path is retaking the current version of the specialty exam. AWS sometimes introduces alternative recertification routes, but those change, so check your AWS Certification account for the current policy when you're within a few months of expiration.

Keeping skills current

AWS updates exam content as services change. So keep an eye on release notes for Glue, Athena, Redshift, Lake Formation, and Kinesis. Small feature changes can shift what the "best answer" is.

DAS-C01 FAQs

How much does the AWS DAS-C01 exam cost?

The DAS-C01 exam cost is $300 USD per attempt, plus any optional prep materials you buy.

What is the passing score for AWS Certified Data Analytics, Specialty?

The DAS-C01 passing score is 750 on a scaled 100 to 1000 score report.

How hard is the DAS-C01 exam compared to other AWS certifications?

Harder than the associate exams for most people, mostly because it's scenario-heavy and expects you to pick the best design under constraints when several answers look plausible.

DAS-C01 Exam Objectives (Domains)

The five-domain structure that defines your exam scope

The AWS DAS-C01 exam objectives split into five weighted domains that collectively cover the complete analytics lifecycle from data collection through security and governance. AWS didn't just randomly throw services into buckets here. Each domain represents a distinct phase in how real analytics projects actually work, from the moment data enters your environment to securing it against unauthorized access. Domain weighting determines the approximate percentage of exam questions from each area, guiding candidates to allocate study time proportionally across the five domains.

The weighting isn't trivial info you ignore. If a domain carries 30% of the exam weight versus 10%, you'll see three times as many questions from that area. Basic math, but people still spend equal time on every domain and then wonder why they bombed sections that mattered most.

AWS publishes an official exam guide that details specific tasks, knowledge areas, and services within each domain. That guide? Your blueprint. Not blog posts, not YouTube videos, not some third-party course outline. Those can supplement, but the exam guide tells you exactly what AWS expects you to know. Understanding the domain structure helps candidates organize study materials, identify knowledge gaps, and prepare across all tested areas.

Each domain covers multiple AWS services, architectural patterns, best practices, and real-world scenarios that candidates must master for exam success. You're not memorizing service names. You're learning when to choose Kinesis Data Streams versus Firehose, how to partition S3 data for optimal Athena performance, why you'd pick EMR over Glue for certain transformations. The domains reflect the typical workflow of analytics projects: collecting data from sources, storing it appropriately, processing and transforming it, analyzing and visualizing insights, and securing everything.

Questions may span multiple domains, requiring integrated knowledge of how services work together across the analytics pipeline rather than isolated service memorization. A single question might ask about ingesting streaming IoT data (Domain 1), storing it cost-effectively in S3 with proper lifecycle policies (Domain 2), transforming it with Glue (Domain 3), and ensuring proper encryption and access controls (Domain 5). That's why cramming service facts doesn't work here.

Actually, there's this odd belief that analytics certifications are easier than the Solutions Architect exams because they're "specialized." They're not easier. They're narrower but deeper. You need to know not just what each service does but how services interact, when one makes sense over another, and what the performance implications are. Anyway.

Domain 1 gets into collection mechanics

Data ingestion and transformation on AWS begins with collection, covering batch and real-time ingestion patterns from diverse sources including databases, applications, IoT devices, and streaming platforms. This domain typically carries around 18% of exam weight, so roughly one in five questions comes from collection topics. You need to know the ingestion space cold.

Amazon Kinesis Data Streams enables real-time ingestion of high-volume streaming data with configurable shard capacity, retention periods, and consumer applications. Each shard accepts up to 1 MB/sec or 1,000 records/sec of writes (whichever limit you hit first) and serves up to 2 MB/sec of reads, shared across standard consumers. In provisioned mode you size shards to your throughput needs and pay for what you provision regardless of actual usage; on-demand mode scales capacity for you instead. Retention ranges from the 24-hour default up to 365 days.
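Shard math is easy to fumble under time pressure, so here's a minimal sketch of the sizing rule using the per-shard limits above. It assumes provisioned mode, evenly distributed partition keys, and consumers on shared (standard) throughput; enhanced fan-out gives each registered consumer its own 2 MB/sec per shard and would change the read term. The function name and workload numbers are hypothetical.

```python
import math

# Per-shard limits for Kinesis Data Streams in provisioned mode.
WRITE_MB_PER_SHARD = 1.0
WRITE_RECORDS_PER_SHARD = 1000
READ_MB_PER_SHARD = 2.0  # shared by all standard (non enhanced fan-out) consumers


def shards_needed(write_mb_per_sec: float, records_per_sec: int, standard_consumers: int = 1) -> int:
    """Minimum shard count satisfying write bytes, write records, and shared reads."""
    by_write_bytes = math.ceil(write_mb_per_sec / WRITE_MB_PER_SHARD)
    by_write_records = math.ceil(records_per_sec / WRITE_RECORDS_PER_SHARD)
    # Each standard consumer reads the whole stream, so read demand scales with consumers.
    by_read_bytes = math.ceil(write_mb_per_sec * standard_consumers / READ_MB_PER_SHARD)
    return max(1, by_write_bytes, by_write_records, by_read_bytes)


if __name__ == "__main__":
    # Hypothetical workload: 3,500 records/sec of ~1 KB each, three standard consumers.
    mb_per_sec = 3500 / 1024
    print(shards_needed(mb_per_sec, 3500, standard_consumers=3))
```

In that example the write limits alone would call for 4 shards, but three consumers sharing read throughput push it to 6, which is exactly the kind of detail a scenario question hinges on.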

Amazon Kinesis Data Firehose provides fully managed delivery streams that automatically scale, transform data in-flight, and deliver to destinations like S3, Redshift, and OpenSearch. The difference matters. Streams gives you raw shard-level control and custom consumer apps. Firehose gives you simple delivery with automatic scaling and built-in transformations. Firehose can invoke Lambda functions to transform records before delivery, convert formats, and batch data efficiently.
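Here's roughly what a Firehose transformation function looks like in Python. Firehose invokes the Lambda with a batch of base64-encoded records and expects every recordId back with a result of Ok, Dropped, or ProcessingFailed. This is a sketch assuming JSON input; the heartbeat filter and the added source field are hypothetical examples of a drop rule and an enrichment.

```python
import base64
import json


def lambda_handler(event, context):
    """Firehose data-transformation handler: decode, filter, enrich, re-encode."""
    output = []
    for record in event["records"]:
        try:
            payload = json.loads(base64.b64decode(record["data"]))
            if not isinstance(payload, dict):
                raise ValueError("expected a JSON object")
        except ValueError:
            # Bad base64 or bad JSON: mark it failed so Firehose routes it to error output.
            output.append({"recordId": record["recordId"], "result": "ProcessingFailed", "data": record["data"]})
            continue
        if payload.get("status") == "heartbeat":
            # Hypothetical filter: drop records we don't want in the lake.
            output.append({"recordId": record["recordId"], "result": "Dropped", "data": record["data"]})
            continue
        payload["source"] = "firehose"  # hypothetical enrichment field
        # Newline-delimit so downstream tools see one JSON object per line.
        data = base64.b64encode((json.dumps(payload) + "\n").encode("utf-8")).decode("utf-8")
        output.append({"recordId": record["recordId"], "result": "Ok", "data": data})
    return {"records": output}


if __name__ == "__main__":
    def encode(obj):
        return base64.b64encode(json.dumps(obj).encode("utf-8")).decode("utf-8")

    sample = {"records": [
        {"recordId": "1", "data": encode({"device": "a1", "temp": 21.5})},
        {"recordId": "2", "data": encode({"status": "heartbeat"})},
        {"recordId": "3", "data": base64.b64encode(b"not json").decode("utf-8")},
    ]}
    for r in lambda_handler(sample, None)["records"]:
        print(r["recordId"], r["result"])
```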

Amazon Managed Streaming for Apache Kafka (MSK) offers fully managed Apache Kafka clusters for scenarios requiring Kafka-specific features or existing Kafka investments. If your organization already uses Kafka on-prem or needs specific Kafka ecosystem tools, MSK makes sense. Otherwise? Kinesis is typically simpler and more AWS-native.

AWS Database Migration Service (DMS) facilitates continuous replication from relational databases, enabling change data capture for analytics with minimal impact on production systems. DMS can do full loads plus ongoing replication, capturing inserts, updates, and deletes from source databases like Oracle, MySQL, PostgreSQL, and SQL Server. You can filter which tables replicate, transform data in flight with simple mapping rules, and land everything in S3 as Parquet for your data lake.
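DMS "mapping rules" are a JSON document of selection and transformation rules. Here's a minimal sketch that builds one, assuming hypothetical schema and table names. The resulting string is what you'd pass as TableMappings when creating a replication task (for example with MigrationType set to full-load-and-cdc for a full load plus ongoing replication).

```python
import json
from typing import List


def dms_table_mappings(schema: str, tables: List[str], target_schema: str) -> str:
    """One selection rule per table to replicate, plus a rule renaming the schema on the target."""
    rules = []
    for i, table in enumerate(tables, start=1):
        rules.append({
            "rule-type": "selection",
            "rule-id": str(i),
            "rule-name": f"include-{table}",
            "object-locator": {"schema-name": schema, "table-name": table},
            "rule-action": "include",
        })
    rules.append({
        "rule-type": "transformation",
        "rule-id": str(len(tables) + 1),
        "rule-name": "rename-schema",
        "rule-target": "schema",
        "object-locator": {"schema-name": schema},
        "rule-action": "rename",
        "value": target_schema,
    })
    return json.dumps({"rules": rules}, indent=2)


if __name__ == "__main__":
    print(dms_table_mappings("sales", ["orders", "customers"], "sales_lake"))
```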

AWS DataSync and AWS Transfer Family support file-based ingestion patterns for migrating or continuously syncing data from on-premises storage or SFTP/FTPS sources. DataSync moves files from NFS, SMB, or object storage into S3 or EFS with automatic scheduling and bandwidth throttling. Transfer Family gives you managed SFTP/FTPS/FTP endpoints that write directly to S3, perfect for legacy systems that only speak file transfer protocols.

AWS IoT Core and AWS IoT Analytics provide specialized ingestion paths for IoT sensor data with device management, message routing, and protocol translation. Unless you're working in IoT scenarios, this is lower priority, but know that IoT Core handles MQTT and other IoT protocols, routes messages to Kinesis or other destinations, and manages device registries.

Amazon AppFlow enables no-code integration with SaaS applications like Salesforce, ServiceNow, and Slack for ingesting business application data into analytics pipelines. You configure flows with point-and-click, map fields, apply filters, and schedule runs. Niche but shows up in scenarios about integrating SaaS data.

AWS Glue crawlers automatically discover schema and metadata from data sources, populating the Glue Data Catalog for downstream processing and analysis. Crawlers scan S3 buckets, JDBC databases, or DynamoDB tables, infer schemas, detect partitions, and create or update catalog table definitions. They run on schedules or on-demand.

Direct Connect and VPN connections enable secure, high-bandwidth data transfer from on-premises data centers to AWS for hybrid analytics architectures. These aren't analytics services, but you need to understand when hybrid connectivity matters for ingestion patterns. API Gateway and Lambda functions support custom ingestion endpoints for applications to push data into analytics pipelines via RESTful APIs.

Understanding ingestion patterns includes selecting appropriate services based on data velocity (batch vs. streaming), volume, variety, and source system capabilities. Batch means scheduled or triggered loads. Streaming means continuous near-real-time flow. Volume affects whether you need managed scaling. Variety determines if you need schema flexibility.

Domain 2 focuses on where data lives

AWS data lake certification concepts center on storage domain knowledge, including S3 as the foundation for data lakes with appropriate bucketing, partitioning, and lifecycle strategies. This domain typically weighs around 22%, making it the second-heaviest section. Storage architecture decisions ripple through everything else.

Amazon S3 storage classes (Standard, Standard-IA, Intelligent-Tiering, Glacier) optimize costs based on access patterns, with lifecycle policies automating transitions between classes. Use Standard for frequently accessed data, Standard-IA (Infrequent Access) for data you touch roughly monthly, and Glacier for archival. Intelligent-Tiering automatically moves objects between tiers based on access patterns, charging a small monthly monitoring fee per object.
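Lifecycle transitions are just configuration. This sketch builds a rule in the shape boto3's put_bucket_lifecycle_configuration accepts as its LifecycleConfiguration argument; the prefix and day counts are assumptions. The only AWS rule it encodes is that objects must sit at least 30 days before moving to Standard-IA; the ordering check is a sanity guard added for the sketch.

```python
import json


def lake_lifecycle_rules(prefix: str, ia_days: int, glacier_days: int, expire_days: int) -> dict:
    """Lifecycle configuration: Standard -> Standard-IA -> Glacier -> delete."""
    if ia_days < 30:
        # S3 requires objects to be at least 30 days old before transitioning to STANDARD_IA.
        raise ValueError("ia_days must be at least 30")
    if not (ia_days < glacier_days < expire_days):
        raise ValueError("expected ia_days < glacier_days < expire_days")
    return {
        "Rules": [{
            "ID": f"tier-and-expire-{prefix.strip('/')}",
            "Filter": {"Prefix": prefix},
            "Status": "Enabled",
            "Transitions": [
                {"Days": ia_days, "StorageClass": "STANDARD_IA"},
                {"Days": glacier_days, "StorageClass": "GLACIER"},
            ],
            "Expiration": {"Days": expire_days},
        }]
    }


if __name__ == "__main__":
    # Hypothetical: raw zone, about seven years (2,555 days) of retention.
    print(json.dumps(lake_lifecycle_rules("raw/", 30, 90, 2555), indent=2))
```

Setting expire_days to 2555 (about seven years) matches the kind of retention requirement described later in this section.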

S3 object tagging, bucket policies, and access points enable fine-grained access control and data organization within large-scale data lakes. Tags let you categorize objects, apply lifecycle rules by tag, and control access based on tag values. Access points create dedicated access configurations for different applications or teams hitting the same bucket.

Partitioning strategies using prefixes like year/month/day improve query performance and reduce costs by enabling partition pruning in Athena, Redshift Spectrum, and EMR. If your S3 data sits in 's3://bucket/sales/2024/01/15/data.parquet', query engines can skip reading irrelevant partitions when you filter by date. This drastically cuts scan volumes and costs.
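Partition pruning is easier to picture with actual prefixes. Here's a minimal sketch, assuming Hive-style key=value folder names (year=2024/month=01/day=15) rather than the plain 2024/01/15 layout above; Hive-style names let Athena's MSCK REPAIR TABLE and Glue crawlers pick up the partition column names. The prune function only mimics what a query engine does with a WHERE filter on partition columns; it doesn't call any AWS API.

```python
from datetime import date, timedelta
from typing import List


def daily_prefixes(table_root: str, start: date, end: date) -> List[str]:
    """Hive-style daily partition prefixes from start to end, inclusive."""
    prefixes = []
    d = start
    while d <= end:
        prefixes.append(f"{table_root}/year={d.year}/month={d.month:02d}/day={d.day:02d}/")
        d += timedelta(days=1)
    return prefixes


def prune(prefixes: List[str], lo: date, hi: date) -> List[str]:
    """Keep only partitions whose date falls in [lo, hi]; everything else is never read."""
    kept = []
    for p in prefixes:
        # Pull key=value segments out of the path, e.g. {"year": "2024", ...}.
        parts = dict(seg.split("=", 1) for seg in p.rstrip("/").split("/") if "=" in seg)
        d = date(int(parts["year"]), int(parts["month"]), int(parts["day"]))
        if lo <= d <= hi:
            kept.append(p)
    return kept


if __name__ == "__main__":
    all_parts = daily_prefixes("s3://bucket/sales", date(2024, 1, 1), date(2024, 12, 31))
    week = prune(all_parts, date(2024, 1, 15), date(2024, 1, 21))
    print(f"scanning {len(week)} of {len(all_parts)} partitions")
```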

Data formats (Parquet, ORC, Avro, JSON, CSV) impact storage efficiency, query performance, and schema evolution capabilities, with columnar formats preferred for analytics. Parquet and ORC compress better and allow column pruning during reads, so you only scan needed columns. JSON and CSV? Row-based, easier to read but terrible for analytics at scale. Avro handles schema evolution nicely for streaming scenarios.

Amazon Redshift is the primary data warehouse solution, with cluster sizing, distribution keys, sort keys, and compression encoding critical for performance optimization. Redshift uses MPP (massively parallel processing), distributing data across nodes. Distribution keys determine which node stores which rows, sort keys order data on disk for faster scans, and compression reduces storage and I/O.
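To see why distribution key choice matters, here's a toy model of KEY distribution: hash each key value to a slice and measure how lopsided the result is. It's illustrative only, assuming SHA-256 as a stand-in hash (Redshift's internal hashing differs) and a max-over-average skew metric; Redshift reports its own skew_rows figure in SVV_TABLE_INFO.

```python
import hashlib
from collections import Counter
from typing import Iterable


def slice_for(key: str, num_slices: int) -> int:
    """Map a distribution-key value to a slice with a stable stand-in hash."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_slices


def skew_ratio(keys: Iterable[str], num_slices: int) -> float:
    """Rows on the busiest slice divided by average rows per slice.
    1.0 is perfectly even; num_slices means every row landed on one slice."""
    counts = Counter(slice_for(k, num_slices) for k in keys)
    total = sum(counts.values())
    if total == 0:
        return 1.0
    return max(counts.values()) / (total / num_slices)


if __name__ == "__main__":
    customer_ids = [f"cust-{i}" for i in range(100_000)]        # high cardinality
    countries = ["US"] * 60_000 + ["DE"] * 30_000 + ["JP"] * 10_000  # low cardinality
    print("customer_id skew:", round(skew_ratio(customer_ids, 8), 3))
    print("country skew:    ", round(skew_ratio(countries, 8), 3))
```

A low-cardinality key like country piles rows onto a few slices, so the busiest slice becomes the bottleneck for every query, while a high-cardinality key like customer ID spreads rows evenly.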

Redshift Spectrum extends Redshift queries to S3 data without loading it, enabling hybrid architectures that balance cost and performance. You query Parquet files in S3 using the same SQL as Redshift tables and can join lake data with warehouse tables. Spectrum scans S3 with a separate AWS-managed fleet, billed by data scanned, so you don't have to size your cluster to store data you rarely query.

AWS Lake Formation centralizes data lake governance with permissions management, data catalog integration, and cross-account access control. Lake Formation sits on top of the Glue Data Catalog, adding fine-grained permissions at the database, table, column, and row level. It simplifies granting access across accounts and enforces those permissions in services like Athena, Redshift Spectrum, EMR, and Glue.

Amazon DynamoDB provides NoSQL storage for high-velocity operational data that may feed into analytics pipelines via DynamoDB Streams or export to S3. DynamoDB isn't typically your analytics data store, but Streams capture item-level changes that you can funnel into Kinesis or Lambda, and S3 exports dump table snapshots for analytics.

AWS Glue Data Catalog is the central metadata repository, storing schema information, table definitions, and partitions for all analytics data assets. Athena, Redshift Spectrum, EMR, and Glue ETL jobs all reference the Data Catalog to understand table schemas and locations. Basically a Hive metastore as a managed service.

Data retention policies, archival strategies, and compliance requirements influence storage architecture decisions and lifecycle management configurations. If regulations demand seven-year retention, you're using Glacier. If data becomes worthless after 30 days, lifecycle policies delete it automatically.

Understanding storage patterns includes evaluating tradeoffs between cost, performance, durability, and query capabilities for different data types and access patterns. S3's cheap and durable but requires compute to query. Redshift's fast for complex SQL but costs more. DynamoDB handles high-throughput key-value but isn't built for analytics queries.

Domain 3 covers transformation and processing

Designing analytics pipelines on AWS requires mastery of the processing services that turn raw data into analytics-ready datasets through ETL/ELT. This domain typically weighs around 24%, the heaviest section, because transformation is where analytics projects get complex. You're dealing with AWS Glue, EMR, Lambda, and Step Functions, and you need to understand when each fits.

AWS Glue provides serverless ETL with Python or Scala scripts that run on auto-scaled Spark clusters, perfect for batch transformations that don't require constant cluster uptime. Glue jobs process data from the Data Catalog, apply transformations using Glue's DynamicFrame abstraction or native Spark DataFrames, and write results back to S3 or other targets. You pay per second of job runtime, not for idle clusters.

Glue DataBrew offers visual data preparation for analysts who don't code, with over 250 built-in transformations for cleaning, normalizing, and enriching data. Point-and-click recipe building that generates reusable transformation workflows.

Amazon EMR runs managed Hadoop, Spark, Hive, Presto, and other big data frameworks on EC2 clusters you control. Unlike Glue's serverless model, EMR gives you full cluster control, custom configurations, and support for frameworks beyond Spark. Use EMR when you need specific versions, custom libraries, or long-running clusters for interactive workloads.

EMR Serverless and EMR on EKS provide alternative deployment models. Serverless removes cluster management entirely, similar to Glue but with more framework options. EMR on EKS runs Spark jobs on your existing Kubernetes clusters, useful if you're already invested in container orchestration.

AWS Lambda handles lightweight transformations, real-time processing of streaming data from Kinesis, and glue code (pun intended) between services. Lambda functions in Kinesis Data Firehose transform records before delivery. Lambda functions triggered by S3 events process files as they land.

AWS Step Functions orchestrate complex multi-step workflows, coordinating Glue jobs, Lambda functions, EMR steps, and other services into reliable pipelines with error handling and retry logic. You define state machines that control execution flow, handle failures gracefully, and provide visibility into pipeline status.
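Step Functions workflows are defined in Amazon States Language. Here's a minimal sketch of the retry-then-notify pattern around a Glue job; the job name, topic ARN, and retry numbers are hypothetical. The .sync suffix on the Glue integration makes the state machine wait for the job run to finish instead of moving on as soon as it starts. The returned string is what you'd pass as the definition when creating the state machine.

```python
import json


def glue_pipeline_definition(job_name: str, topic_arn: str) -> str:
    """ASL: run a Glue job, retry failures with backoff, notify SNS if it still fails."""
    definition = {
        "Comment": "Run a Glue ETL job with retries and failure notification",
        "StartAt": "RunGlueJob",
        "States": {
            "RunGlueJob": {
                "Type": "Task",
                "Resource": "arn:aws:states:::glue:startJobRun.sync",
                "Parameters": {"JobName": job_name},
                "Retry": [{
                    "ErrorEquals": ["States.TaskFailed"],
                    "IntervalSeconds": 60,   # wait before the first retry
                    "MaxAttempts": 2,
                    "BackoffRate": 2.0,      # double the wait on each retry
                }],
                # Anything that survives the retries is routed to the notification step.
                "Catch": [{"ErrorEquals": ["States.ALL"], "Next": "NotifyFailure"}],
                "Next": "Succeeded",
            },
            "NotifyFailure": {
                "Type": "Task",
                "Resource": "arn:aws:states:::sns:publish",
                "Parameters": {"TopicArn": topic_arn, "Message": f"Glue job {job_name} failed"},
                "Next": "Failed",
            },
            "Failed": {"Type": "Fail", "Error": "GlueJobFailed"},
            "Succeeded": {"Type": "Succeed"},
        },
    }
    return json.dumps(definition, indent=2)


if __name__ == "__main__":
    print(glue_pipeline_definition("curate-sales", "arn:aws:sns:us-east-1:123456789012:etl-alerts"))
```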

Amazon Athena queries S3 data using SQL without any infrastructure to manage, making it a good fit for ad-hoc analysis and simple transformations via CREATE TABLE AS SELECT (CTAS) statements. Athena runs on Presto (engine version 2) or Trino (engine version 3) under the hood, charges per terabyte scanned, and integrates tightly with the Glue Data Catalog. Partitioning and columnar formats drastically reduce scan costs.
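Per-terabyte pricing makes Athena cost easy to estimate. Here's a minimal sketch, assuming the $5/TB list price (check current pricing for your region) and AWS's stated billing rules of rounding up to the nearest megabyte with a 10 MB minimum per query. Treating 1 TB as 1024^4 bytes is a simplification for the sketch, and the scan sizes are hypothetical.

```python
import math

PRICE_PER_TB_USD = 5.00                   # assumed list price; check current regional pricing
BYTES_PER_MB = 1024 ** 2
MIN_BYTES_PER_QUERY = 10 * BYTES_PER_MB   # 10 MB minimum charge per query


def athena_query_cost(bytes_scanned: int) -> float:
    """Estimate the charge for one query from bytes scanned."""
    billed_bytes = max(bytes_scanned, MIN_BYTES_PER_QUERY)
    billed_mb = math.ceil(billed_bytes / BYTES_PER_MB)   # round up to the nearest MB
    return billed_mb / (1024 ** 2) * PRICE_PER_TB_USD    # 1 TB treated as 1024**2 MB


if __name__ == "__main__":
    full_scan = athena_query_cost(1024 ** 4)       # hypothetical: 1 TB of raw CSV
    pruned = athena_query_cost(30 * 1024 ** 3)     # hypothetical: 30 GB after partitions + Parquet
    print(f"full scan ${full_scan:.2f} vs pruned ${pruned:.2f}")
```

The gap between those two numbers, multiplied across every query your analysts run, is the whole argument for partitioning and columnar formats.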

Transformation patterns include deduplication, schema evolution, data quality validation, aggregation, joining datasets, format conversion, and enrichment. Glue and EMR handle heavy lifting. Lambda handles lightweight event-driven transforms. Athena handles SQL-based transformations directly on S3.

Understanding processing tradeoffs means knowing when serverless (Glue, Lambda, Athena) beats managed clusters (EMR), when real-time streaming processing (Kinesis Data Analytics, EMR with Spark Streaming) beats batch, and when simple scheduled queries beat complex orchestration.

Domain 4 digs into analysis and visualization

The analysis and visualization domain covers around 18% of exam content, focusing on tools that turn processed data into insights. Amazon QuickSight is AWS's primary BI tool, offering interactive dashboards, ML-powered insights, and the SPICE in-memory engine for fast query performance. QuickSight connects to Redshift, Athena, S3, RDS, and SaaS sources.

QuickSight ML Insights automatically detect anomalies, forecast trends, and surface narratives explaining data patterns without requiring data science expertise. You can embed QuickSight dashboards into applications using APIs.

Amazon Athena enables SQL analysis directly on S3 data without loading into a database, perfect for exploratory analysis and data validation. Athena integrates with QuickSight as a data source, so analysts query the lake and visualize results.

Amazon Redshift provides high-performance SQL analytics with complex joins, aggregations, and window functions across large datasets. Its columnar storage and MPP architecture can deliver interactive query times on multi-terabyte datasets when distribution keys, sort keys, and compression are tuned properly.

Amazon OpenSearch Service (formerly Amazon Elasticsearch Service) supports log analytics, full-text search, and real-time dashboards via OpenSearch Dashboards, the successor to Kibana. It's specialized for log and event data, offering visualizations like histograms, heatmaps, and geospatial plots.

Amazon SageMaker brings machine learning into the analytics picture, with capabilities for building, training, and deploying ML models. SageMaker Canvas provides no-code ML for business analysts, while SageMaker Studio offers full IDE capabilities for data scientists.

Understanding when to use which tool depends on user personas (executives need dashboards, analysts need SQL, data scientists need notebooks), data volumes, query complexity, and latency requirements. QuickSight for business users. Athena for analysts. Redshift for complex analytics. OpenSearch for logs. SageMaker for ML.

Domain 5 locks down security and governance

The security domain carries around 18% weight, covering encryption, access control, monitoring, and compliance across all analytics services. Encryption at rest and in transit? Table stakes. S3 supports SSE-S3, SSE-KMS, and SSE-C encryption. Redshift encrypts clusters with KMS keys. Kinesis encrypts streams with KMS.
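A common way to enforce encryption rules on a data lake bucket is a bucket policy with explicit denies. Here's a minimal sketch; the bucket name is hypothetical. Note that the first statement also rejects uploads that omit the encryption header and rely on default bucket encryption, which is why many teams set SSE-KMS as the bucket default instead of, or alongside, a policy like this.

```python
import json


def enforce_kms_and_tls_policy(bucket: str) -> str:
    """Bucket policy denying uploads without SSE-KMS and any request made without TLS."""
    arn = f"arn:aws:s3:::{bucket}"
    policy = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyNonKmsUploads",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:PutObject",
                "Resource": f"{arn}/*",
                # Missing or different encryption header -> the upload is denied.
                "Condition": {"StringNotEquals": {"s3:x-amz-server-side-encryption": "aws:kms"}},
            },
            {
                "Sid": "DenyInsecureTransport",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:*",
                "Resource": [arn, f"{arn}/*"],
                "Condition": {"Bool": {"aws:SecureTransport": "false"}},
            },
        ],
    }
    return json.dumps(policy, indent=2)


if __name__ == "__main__":
    print(enforce_kms_and_tls_policy("my-analytics-lake"))
```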

IAM policies and roles control who can access what, with service-specific policies for S3 buckets, Glue jobs, Athena workgroups, and Redshift clusters. Understanding least privilege and how to craft policies that grant necessary access without over-permissioning is critical. Similar concepts appear in AWS Certified Security - Specialty but DAS-C01 focuses on analytics-specific scenarios.

AWS Lake Formation provides centralized access control with table and column-level permissions that work across Athena, Redshift Spectrum, EMR, and Glue. It simplifies cross-account sharing and enforces consistent permissions regardless of which query engine users employ.
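Column-level grants are where Lake Formation differs from plain IAM. Here's a minimal sketch that builds the keyword arguments for the grant_permissions call on boto3's lakeformation client, giving a principal SELECT on selected columns only. The role ARN, database, table, and column names are hypothetical. For cross-account sharing, the principal identifier can be an external AWS account ID instead of a role ARN.

```python
from typing import Iterable


def column_select_grant(principal_arn: str, database: str, table: str, columns: Iterable[str]) -> dict:
    """kwargs for lakeformation.grant_permissions: SELECT on only the listed columns."""
    return {
        "Principal": {"DataLakePrincipalIdentifier": principal_arn},
        "Resource": {
            "TableWithColumns": {
                "DatabaseName": database,
                "Name": table,
                "ColumnNames": list(columns),  # columns not listed stay invisible to the principal
            }
        },
        "Permissions": ["SELECT"],
    }


if __name__ == "__main__":
    kwargs = column_select_grant(
        "arn:aws:iam::111122223333:role/analysts",  # hypothetical role
        "sales", "orders", ["order_id", "region", "order_total"],
    )
    print(kwargs)
    # With boto3 installed and credentials configured, the call would be:
    #   boto3.client("lakeformation").grant_permissions(**kwargs)
```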

VPC configurations, security groups, and network ACLs control network access to resources like Redshift clusters, EMR clusters, and RDS databases. Private subnets, VPC endpoints, and PrivateLink keep traffic off the public internet.

CloudTrail logs API calls across analytics services for audit trails and compliance. CloudWatch monitors metrics, sets alarms, and aggregates logs from Glue jobs, Lambda functions, and Kinesis streams.

Data governance includes data quality, lineage tracking, cataloging, and lifecycle management. Glue Data Quality validates datasets against rules, while the Glue Data Catalog and Lake Formation centralize metadata and permissions, so it's clear what data exists and who can reach it.

Understanding security patterns means knowing encryption options, when to use resource policies versus IAM policies, how cross-account access works with Lake Formation, and how compliance frameworks like HIPAA, PCI-DSS, and GDPR impact architecture decisions.

Security questions trip up candidates who focus only on the happy path of building pipelines without thinking through access controls, encryption requirements, or audit logging. Every scenario question potentially has security implications.

Conclusion

Wrapping up your DAS-C01 path

Okay, real talk here.

The AWS Certified Data Analytics - Specialty DAS-C01 isn't something you'll breeze through with a weekend cram session. I mean, honestly, it's one of the tougher specialty tracks AWS offers because you're not just memorizing a long list of services. You're expected to architect entire analytics pipelines from scratch, weigh trade-offs between Kinesis Data Streams versus Firehose in messy real-world scenarios, and know exactly when Lake Formation governance makes sense instead of rolling your own IAM policies (which can get complicated fast, by the way). The DAS-C01 exam cost of $300 makes it a real investment, so you'd want to pass on your first attempt if possible.

The passing score? 750 on a scaled range of 100 to 1000.

Sounds generous until you realize the scale adjusts for difficulty differences between exam forms, so there's no simple raw-score target to aim for. Some questions hit you with multi-service architecture scenarios where three answers look plausible. That's where hands-on experience with data ingestion and transformation on AWS separates people who pass from those who don't.

Study materials matter. A lot, actually.

AWS documentation for Glue, Athena, Redshift, and EMR should be your bible, not just third-party courses that skim the surface. Whitepapers on designing analytics pipelines and on data governance and security in AWS analytics cover the edge cases that trip people up on exam day. The difficulty comes from sheer breadth: data lake concepts, Kinesis for streaming, QuickSight for visualization, plus all the security layers stacked on top. I once spent three hours debugging a Glue job that failed because of a tiny partition naming issue, and weirdly enough, that exact scenario showed up in a practice question months later.

Practice tests? Can't skip them.

You need realistic scenarios that mirror how AWS actually phrases questions, not generic dumps that teach you to memorize answers without understanding what's happening underneath. Quality DAS-C01 practice tests force you to think through why wrong answers fail, which builds the mental models you'll need for a 180-minute exam with 65 scenario-heavy questions.

The AWS Data Analytics Specialty prerequisites technically don't exist on paper, but you'll struggle without 2-3 years working with analytics services in production environments. And remember AWS DAS-C01 renewal happens every three years, so you're committing to staying current with service updates that come faster than you'd expect.

If you're serious about passing, the DAS-C01 Practice Exam Questions Pack at /amazon-dumps/das-c01/ gives you exam-realistic scenarios that actually prepare you for the architecture-heavy questions AWS throws at you. Not gonna lie, practice questions that map directly to the five exam domains are what turned my second attempt into a pass after I failed my first try by 40 points. Had mixed feelings about that initial failure, but it taught me where my gaps were.

You've got this.

Just don't underestimate prep time.


