Microsoft DP-100 (Designing and Implementing a Data Science Solution on Azure)

What Is the Microsoft DP-100 Certification?

What the Microsoft DP-100 certification actually validates

The Microsoft DP-100 certification officially certifies you as a "Microsoft Certified: Azure Data Scientist Associate." Sounds formal, but really it just means you know how to build and deploy machine learning solutions on Microsoft's cloud platform. This is not theoretical stuff.

The exam tests whether you can actually handle the complete ML lifecycle using Azure Machine Learning services. Real-world application here, not just memorizing definitions.

You need to demonstrate practical skills in designing and implementing a data science solution on Azure. That covers everything from setting up compute resources to deploying models into production, monitoring their performance in real time, and iterating based on feedback from stakeholders who may or may not understand what you're actually doing. The certification validates that you understand Azure's ML workspace, can run experiments, track model performance, and implement MLOps practices. It focuses on real scenarios where you'd provision clusters, configure pipelines, and monitor deployed endpoints.

How DP-100 differs from other Azure certifications

Microsoft has several data and AI certifications. People get confused about which one to pursue. The DP-100 is specifically for data scientists working with machine learning.

The AI-102 targets AI engineers building cognitive services applications. Different audience entirely. The DP-203 is for data engineers focused on data pipelines and warehousing rather than ML modeling.

Entry-level? Different story.

If you're just exploring whether cloud data careers interest you, the DP-900 Azure Data Fundamentals is your starting point. DP-100 assumes you already understand data concepts and Python programming. It's intermediate level at minimum.

The exam has changed a lot from earlier versions. Microsoft has updated it in recent years to reflect Azure Machine Learning's newer features like automated ML, designer workflows, and responsible AI tools. The 2026 updates put even more emphasis on MLOps, model monitoring, and deployment strategies that enterprises actually use in production environments.

Who benefits from getting DP-100 certified

Data scientists transitioning to Azure obviously get the most value here. If you've been doing ML work locally or on other platforms, this credential proves you can translate those skills to Microsoft's ecosystem. Machine learning engineers who deploy and maintain models will find the Azure Machine Learning certification directly applicable to their daily responsibilities.

AI practitioners and analytics professionals expanding into predictive modeling benefit too. Won't sugarcoat it though: if you're a complete beginner to both data science and Azure, you'll struggle. The exam assumes foundational knowledge in both areas.

Specific roles that benefit most? Azure Data Scientists, ML Engineers, Data Science Consultants, and AI Solution Architects. These positions increasingly require cloud platform expertise alongside traditional data science skills. I've seen job postings that list Azure ML experience as "preferred" when they really mean required, unless you bring something exceptional to the table. Plenty of organizations hiring for these roles list it as a must-have outright, though some bend depending on your other qualifications.

Career impact and market demand

Getting DP-100 certified can legitimately boost your earning potential. Certified Azure Data Scientists typically command salaries ranging from $95,000 to $150,000+ depending on experience and location. The certification demonstrates you're not just theoretically capable but can actually implement solutions using enterprise-grade tools.

Market demand keeps growing. Companies migrating ML workloads to cloud platforms need people who understand both the data science and the infrastructure side. Having DP-100 on your resume signals you can handle both aspects.

The certification itself won't get you a job if you lack practical experience, but it does establish credibility with employers and clients who specifically use Azure. Particularly valuable if you're consulting or contracting where clients want proof of platform-specific expertise.

How DP-100 connects to Azure's broader ecosystem

This certification spans Microsoft's broader AI and machine learning ecosystem. You'll work with the Azure Machine Learning workspace, Azure Databricks, Azure Synapse Analytics, and various compute options. Understanding how these services interconnect matters for designing scalable solutions.

The exam validates your proficiency in end-to-end ML lifecycle management, from data preparation through model deployment and monitoring, including the edge cases that inevitably pop up when real users start interacting with your models in ways you never anticipated during development. You're expected to understand Azure ML pipelines and MLOps practices that enable continuous integration and deployment of ML models. This is where many data scientists struggle, because they're used to working in notebooks without thinking about productionization.

Responsible AI in Azure Machine Learning is increasingly emphasized too. Microsoft wants certified professionals to understand fairness, explainability, and governance considerations when deploying ML systems. This is not just checkbox compliance. It's about building systems that work reliably and ethically at scale.

Practical considerations before pursuing DP-100

The certification stays valid for one year, then you need to renew through Microsoft Learn assessments. The DP-100 renewal process is less intensive than retaking the full exam but still requires staying current with platform updates.

DP-100 prerequisites aren't officially mandated, but realistically you need Python programming skills, understanding of ML concepts, and basic Azure familiarity. Some candidates find value in completing AZ-900 Azure Fundamentals first to understand cloud basics.

Complementary certifications that pair well? DP-203 for data engineering skills or AI-102 if you're expanding into cognitive services. Building full Azure expertise often means stacking multiple credentials over time.

The DP-100 exam cost runs around $165 USD. The DP-100 passing score is set at 700 out of 1000 points. Plenty of candidates wonder about DP-100 difficulty. It's challenging if you lack hands-on Azure ML experience, manageable if you've actually built and deployed models on the platform.

DP-100 Exam Overview and Format

DP-100 is the exam code for Designing and Implementing a Data Science Solution on Azure, and passing it earns you the Microsoft DP-100 certification (Azure Data Scientist Associate) under the broader Azure Machine Learning certification umbrella. Honestly? It's one of the few Microsoft exams where you can't fake it by memorizing product pages. Hands-on matters here.

Look, Microsoft tweaks this one constantly because Azure Machine Learning changes constantly. For 2026, assume the "current version" is whatever's listed on the official Skills Measured PDF the week you actually book. The exam objectives and weighting get refreshed on a rolling basis when features shift: Designer vs SDK workflows, managed online endpoint behaviors, registry and model packaging updates, that kind of thing. The date under the most recent updates heading on the exam page is the one you care about. I tell people to screenshot it for their own sanity, because you don't want to study an older outline and then wonder why you're getting hammered with Azure ML pipelines, MLOps, and governance questions.

DP-100 exam overview and basic format

Total testing time? 180 minutes (3 hours).

That's not "time in the building"; that's the clock for the exam itself, and it can feel tight when you hit case studies plus any performance-based items that make you read carefully and click around, like working through a treasure map where one wrong turn costs you points and mental energy.

Question count is typically 40 to 60, and yes, it varies by exam form. Some versions feel like 42 chunky questions with heavy scenarios, while others feel like 58 quicker hits with a couple of nasty case studies thrown in. Either way? Plan for stamina.
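A quick pacing check helps you plan that stamina. The sketch below is plain arithmetic on the figures above (180 minutes, 40 to 60 questions); the 15-minute review buffer is an assumption you can change, and the function treats every question as equal, which case studies are not.

```python
def minutes_per_question(total_minutes: float, question_count: int,
                         review_buffer: float = 15.0) -> float:
    """Average minutes per question after setting aside a review buffer.

    The 15-minute default buffer is an assumption, not an exam rule, and
    every question is weighted equally, which case studies are not.
    """
    if question_count <= 0:
        raise ValueError("question_count must be positive")
    usable = total_minutes - review_buffer
    if usable <= 0:
        raise ValueError("review_buffer must be smaller than total_minutes")
    return usable / question_count


if __name__ == "__main__":
    # Bracket the 40-60 question range with the 180-minute clock.
    for count in (40, 60):
        pace = minutes_per_question(180, count)
        print(f"{count} questions: about {pace:.1f} minutes each")
```

At 60 questions that works out to 2.75 minutes each, so a case study that eats 20 minutes is borrowing time from everything after it.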

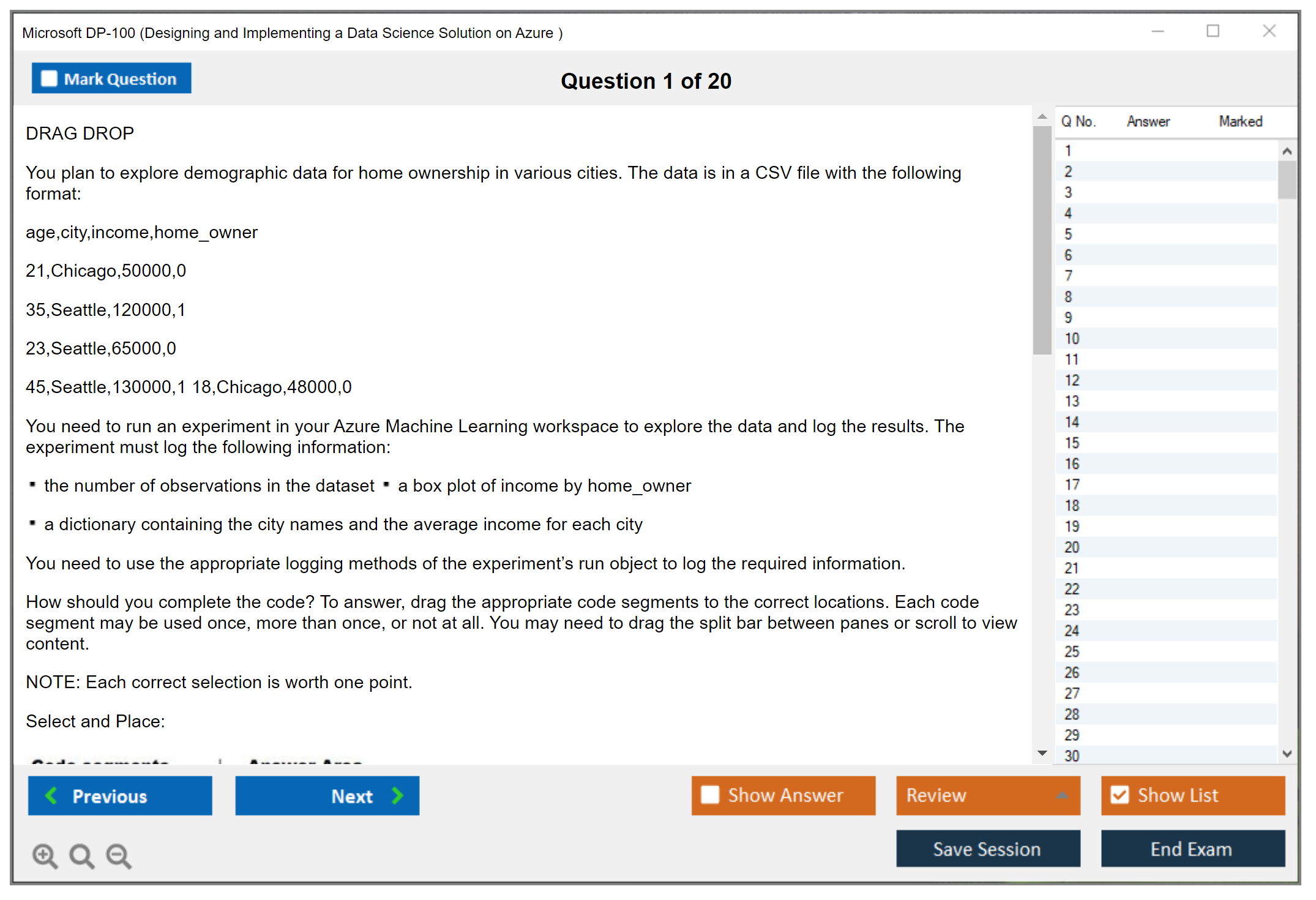

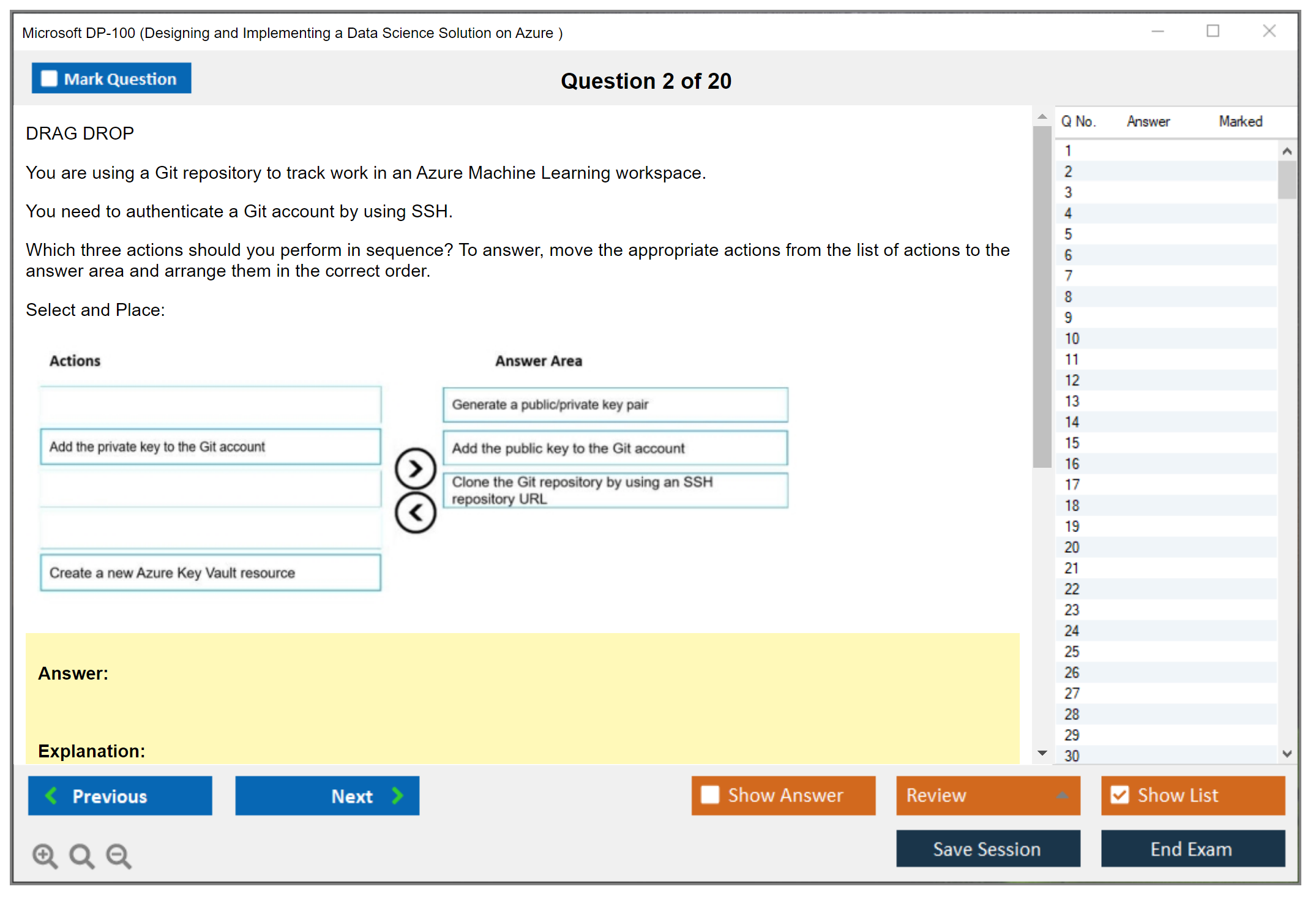

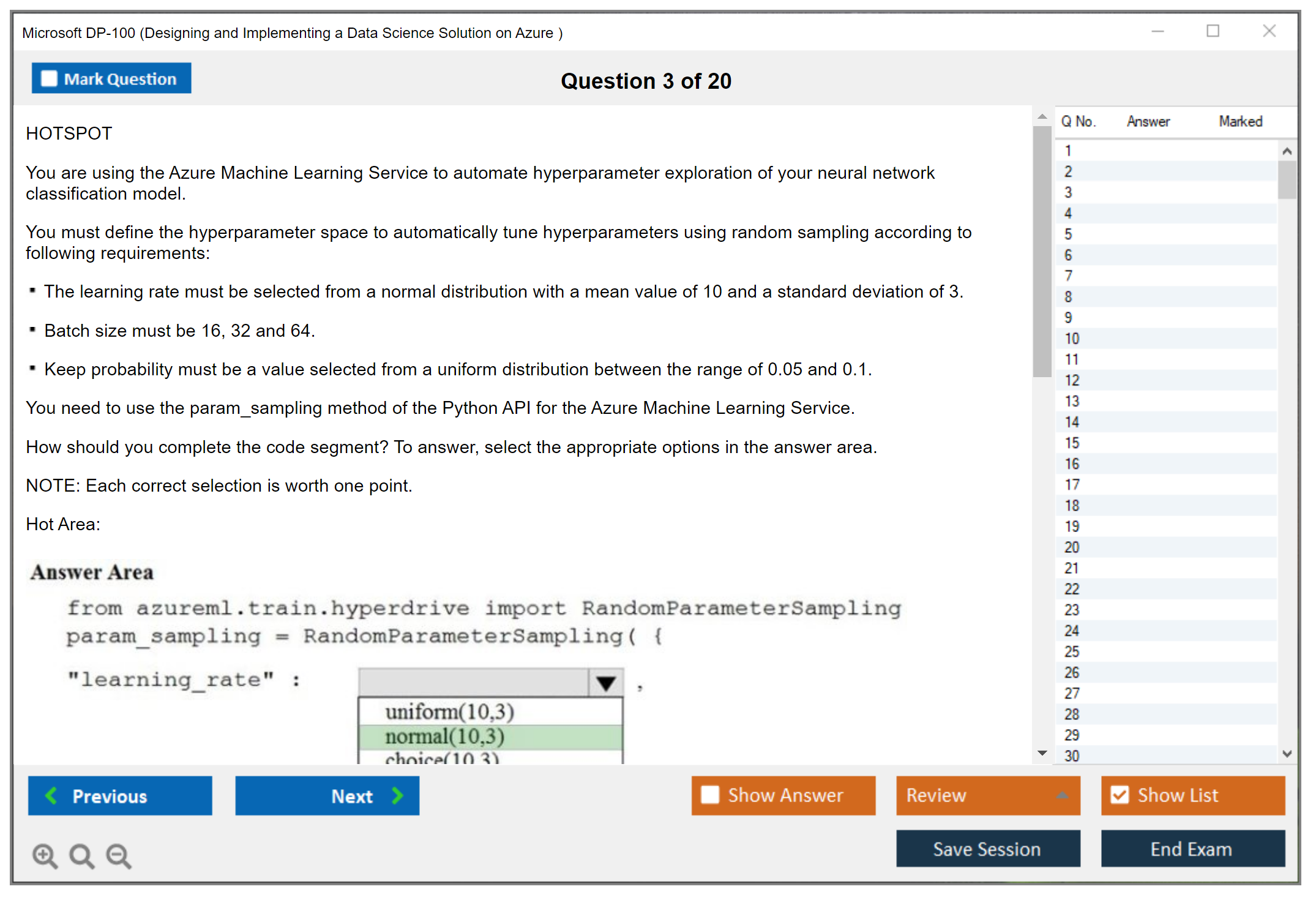

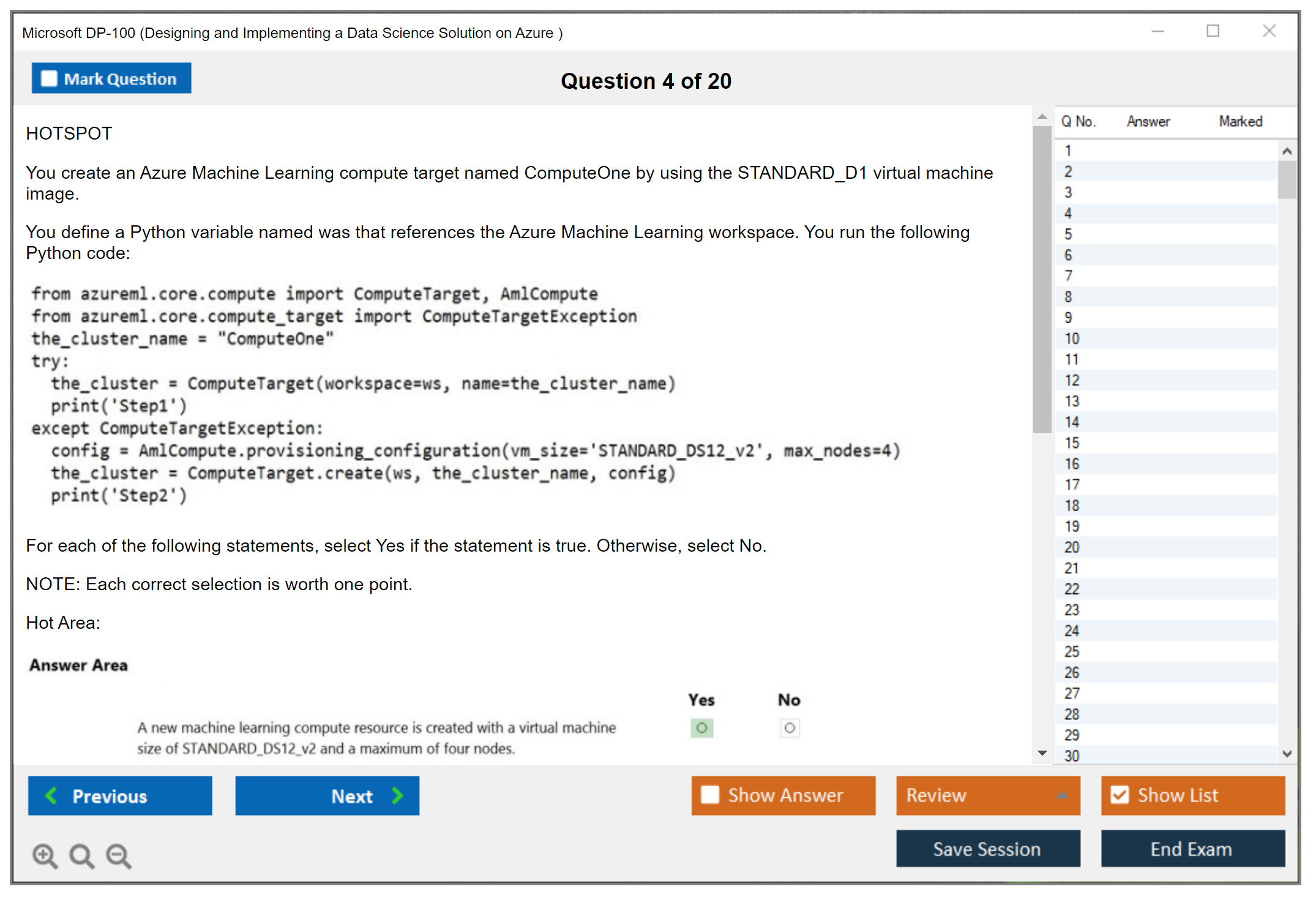

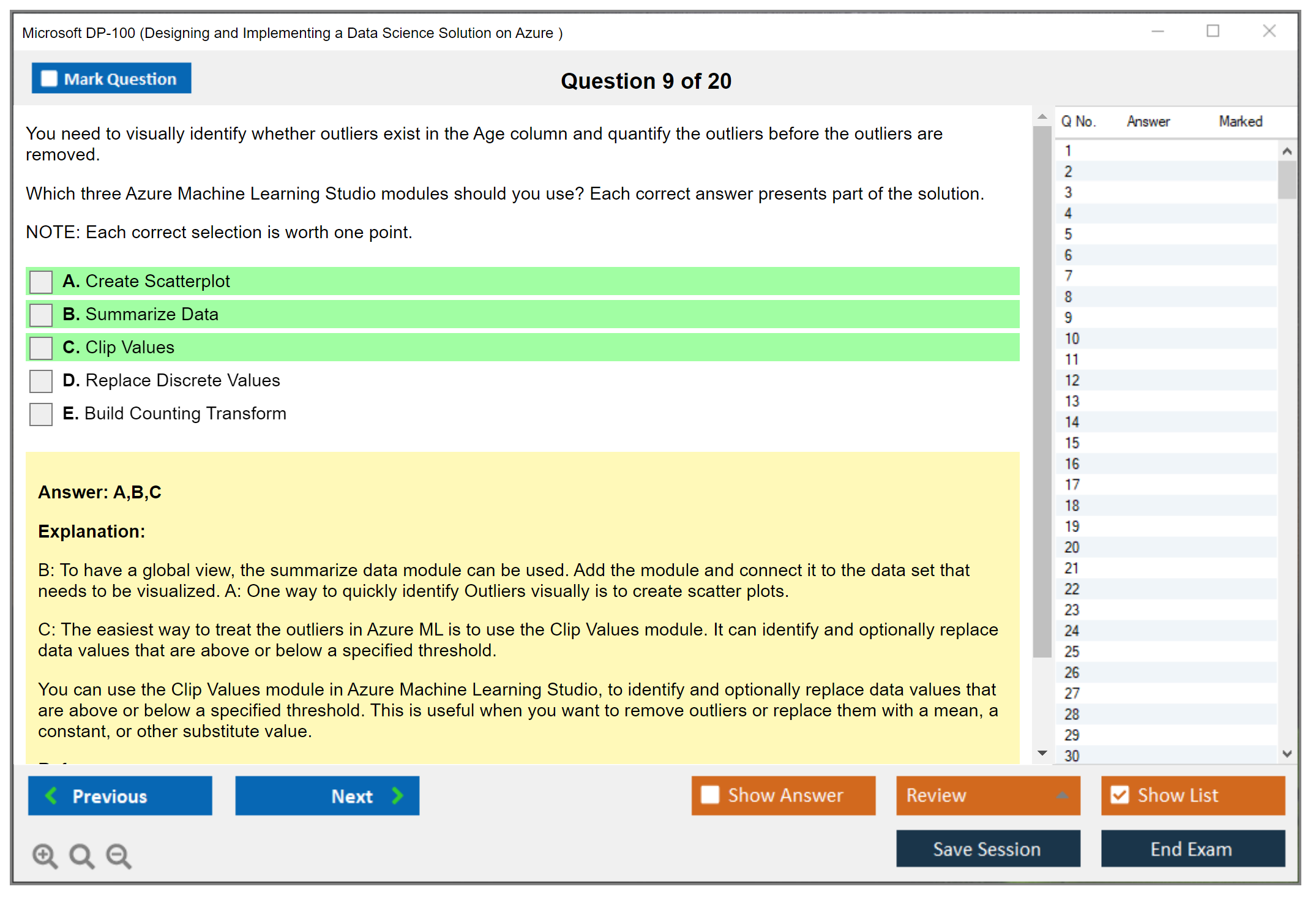

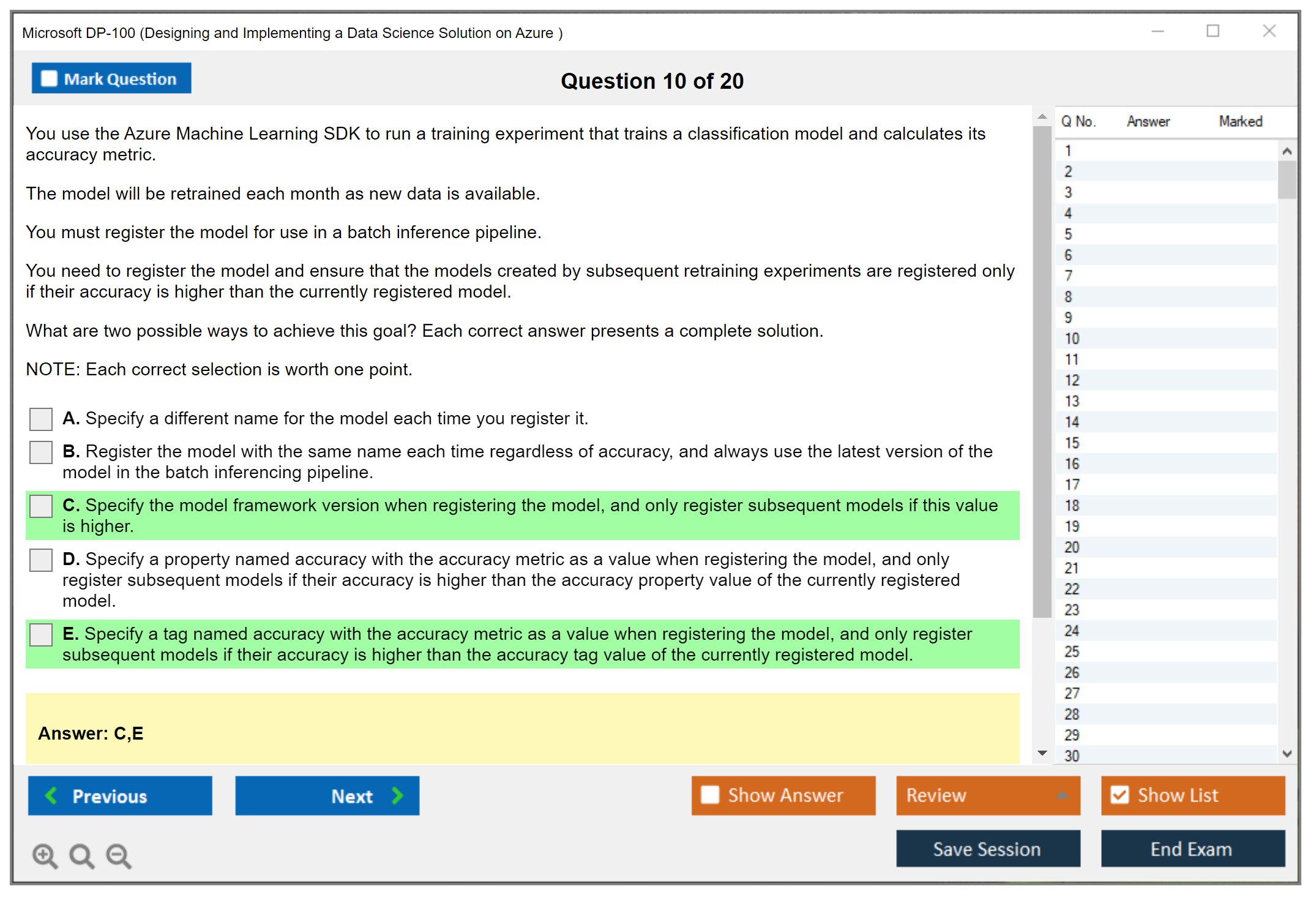

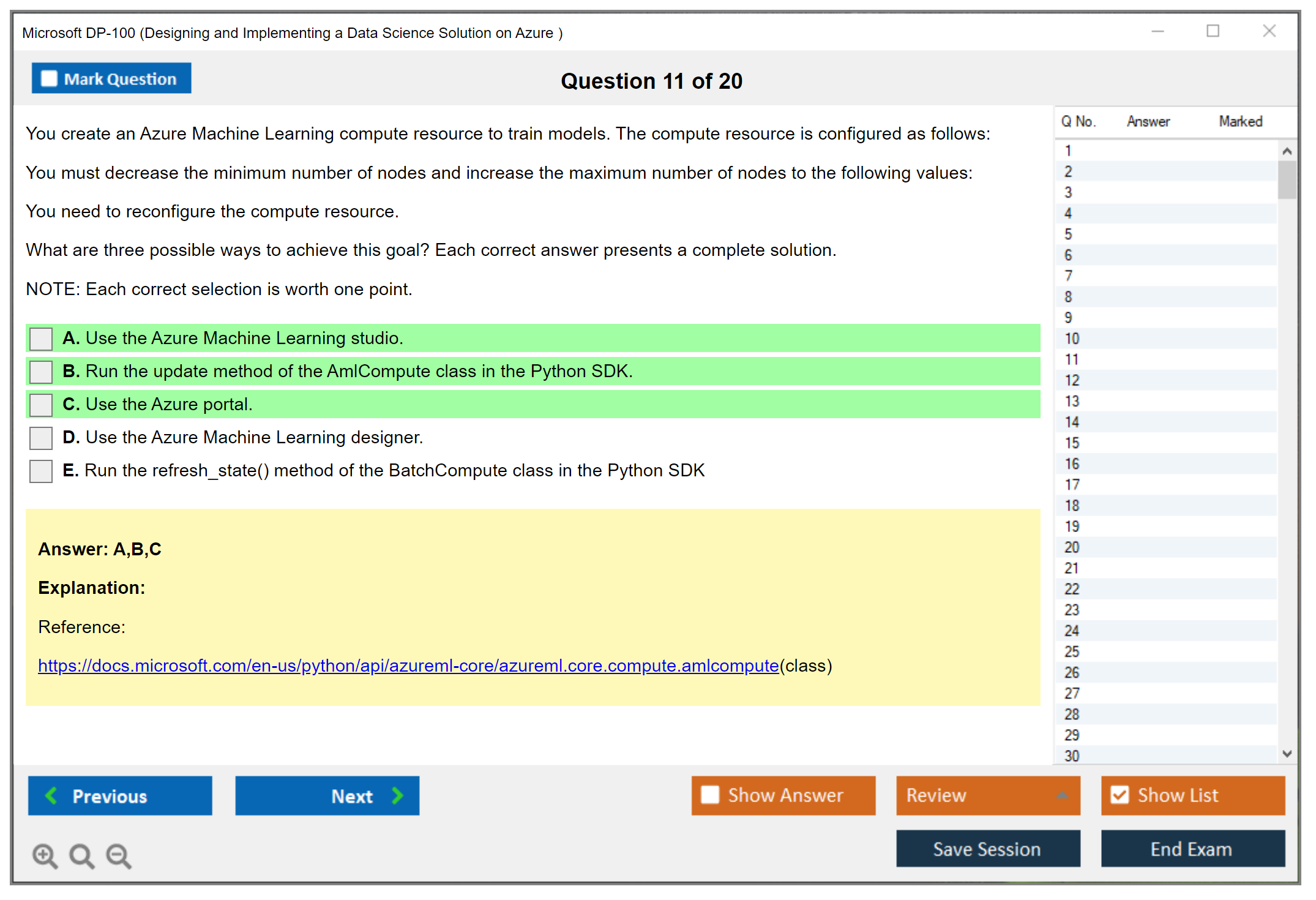

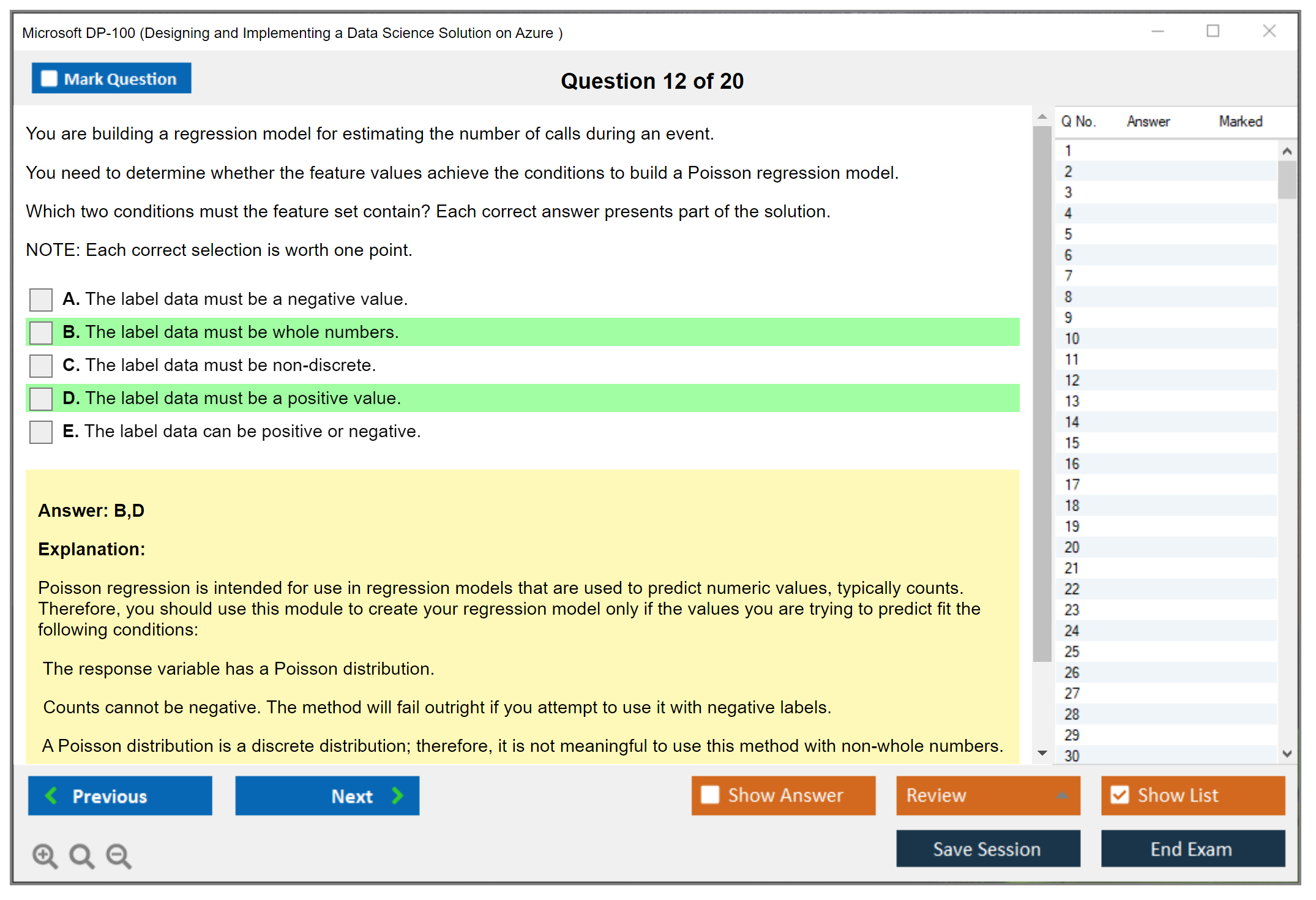

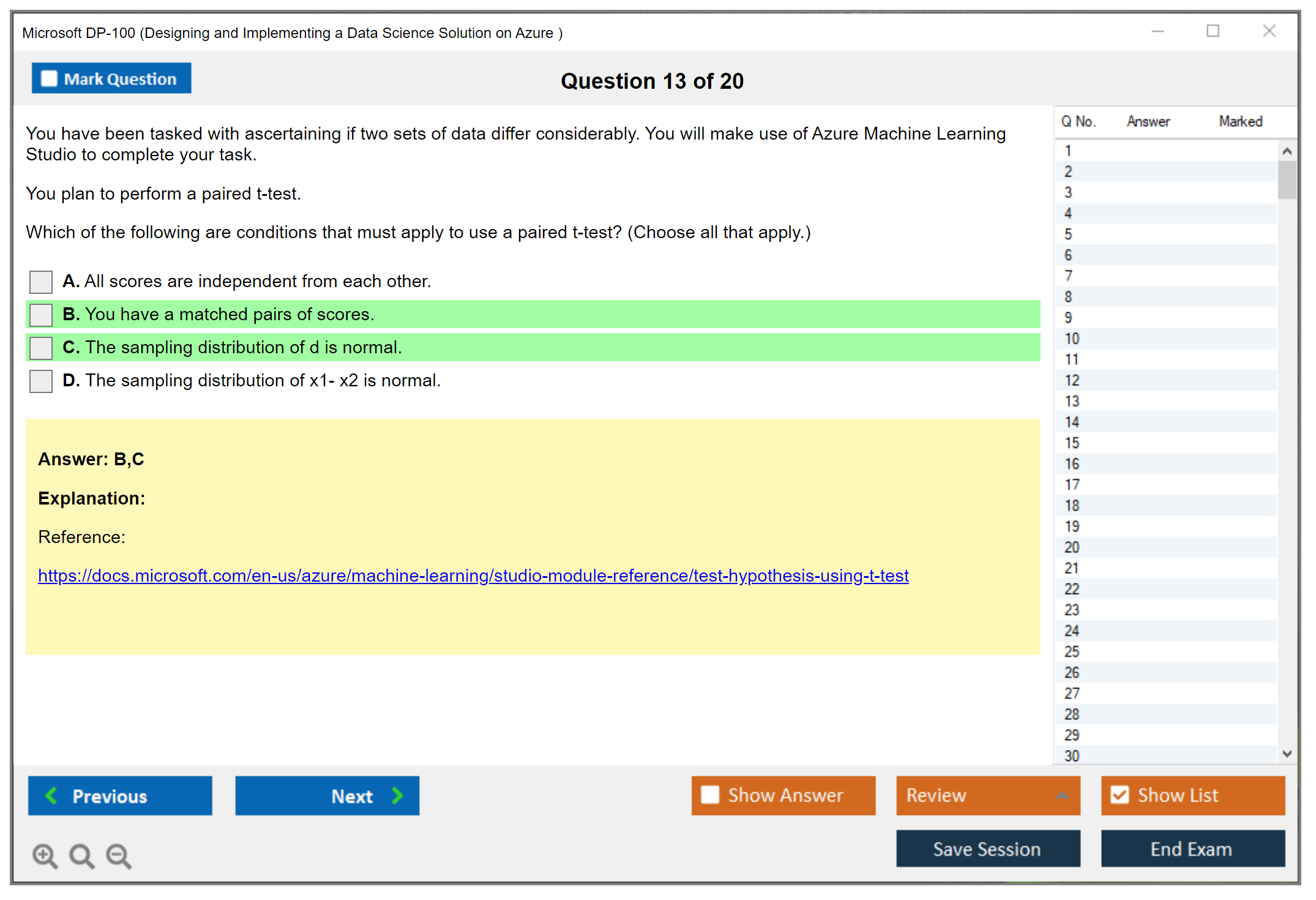

Question types you'll see are a mix:

Multiple choice. Straightforward sometimes, but DP-100 likes "best next step" choices with distractors that are technically possible but wrong for cost, security, or reproducibility.

Multiple response. This is where people bleed points, because they rush and miss the "choose two" detail.

Case studies. More on these below, because they're their own beast.

Drag-and-drop and hotspot. Usually UI-ish, like mapping steps in a pipeline or selecting where to configure identity, networking, datastore, or endpoint settings. Not always easy, trust me.

Scenario-based questions. Honestly, most of the exam feels scenario-based, even when it's multiple choice, because it's built around business requirements and technical constraints that mirror real projects.

Performance-based questions can show up too, meaning portal-simulation vibes or code interpretation where you read Python SDK snippets, YAML, or pipeline definitions and decide what they do, what's missing, or what breaks when you move from dev to prod. Sometimes it's "what will this run produce" and sometimes it's "which setting makes this deploy privately," and either way it rewards people who have actually done model training and deployment in Azure at least once.

lab-style tasks and case study flow

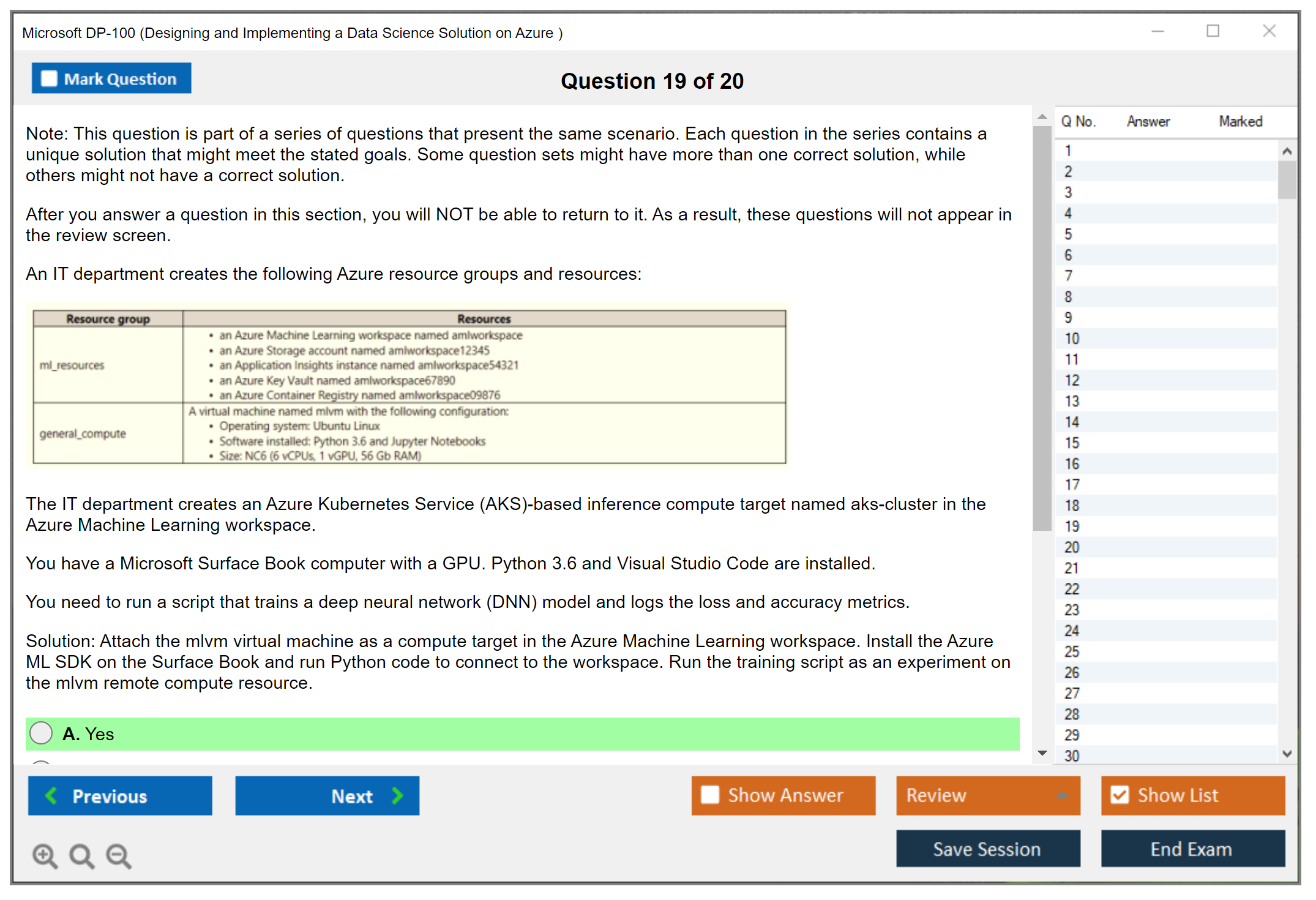

There can be lab-based scenarios where you basically demonstrate practical configuration choices for Azure ML. Compute cluster selection, environment setup, datastore and data asset handling, managed identity, endpoint deployment options, monitoring hooks, and pipeline orchestration. It's not a full-on free-for-all lab like older Microsoft exams used to do. But it can feel close.

When you're interpreting a workspace setup and deciding what to click or which setting is correct, you need to be comfortable with where things live in the Azure portal and inside Azure ML Studio. Not just in theory, but like you've actually been there, staring at those menus at 2 AM wondering why your pipeline won't trigger. I once spent forty minutes debugging a datastore connection that turned out to be a typo in the account key. Still mad about it.

Case studies are multi-question scenario sets. You get a business context, current architecture, requirements, and "technical specifications" sections, then a series of questions that all reference the same story. Not gonna lie: the trick isn't the ML math. The trick is reading like an engineer and spotting the constraint that actually matters, like "data can't leave region", "must be reproducible", "must support CI/CD", "must log lineage", or "must meet Responsible AI requirements", and then choosing the Azure ML feature or workflow that fits without overengineering it or ignoring governance.

Adaptive testing elements may appear. Microsoft doesn't always advertise the exact algorithm, but they do use psychometrics and can adjust question selection and difficulty based on how you're doing, plus they rotate forms aggressively. So if your first 15 questions feel brutal? Don't spiral. That can be normal.

languages and delivery options

Language availability includes English, Japanese, Chinese (Simplified), Korean, German, French, Spanish, Portuguese (Brazil), Russian, Arabic, and Indonesian. Read the fine print when scheduling, 'cause not every delivery method and region offers every language at every time slot.

Delivery's either Pearson VUE testing centers or online proctored exams. Testing center's boring but predictable. Online's convenient but way more fragile.

Online proctoring requirements are where people mess up. You need a supported OS and browser, stable internet, webcam and mic, and you must pass the system test ahead of time. Your workspace setup has to be clean. Clear desk, no second monitor, no phone, no notes, and the proctor can ask you to show the room like you're on some weird apartment tour. Identification verification's strict, and if your name doesn't match your profile, you're going to have a bad day.

Testing center experience? More old-school. You check in, show ID, lock your stuff up, and they give you rules about what's allowed. Break policies vary, but breaks can still cost you time if the clock keeps running, so don't assume you can wander off guilt-free.

Calculator and note-taking: generally, the exam interface can provide an on-screen calculator when needed, and you may get a digital whiteboard or, at a test center, a physical erasable sheet. Don't expect to bring your own. Also, DP-100's rarely calculator-heavy anyway.

NDA's real. You agree not to share exam content. No question dumps, no "here are the exact scenarios", none of that. Besides being unethical, it can get your cert revoked.

exam interface, results, and retakes

The interface lets you flag questions, review answers, and track time. Use those tools.

Some sections, especially case studies, may lock navigation so you can't go back once you exit them, so read the on-screen instructions every single time. Time management is a real skill here: you can burn 20 minutes arguing with yourself about deployment options and then panic later when you've got eight questions left and three minutes on the clock.

You usually get immediate preliminary results on completion at the test center or after the online session ends. The official score report typically lands within 24 to 48 hours in your Microsoft certification profile.

The score report includes pass/fail status and domain-level performance feedback, not a line-by-line breakdown. People always ask about the DP-100 passing score: Microsoft exams are scaled with 700 as the passing score, and DP-100 follows that pattern. Don't overthink the number, though. Focus on coverage.

Retake policies: if you fail, you can retake after a waiting period, and the waiting period increases after multiple failures. Fees apply each time unless you bought an offer that includes a retake. Check Microsoft's current rules before you plan a "two attempts no matter what" strategy.

what DP-100 actually tests (objectives and vibe)

The DP-100 exam objectives cover Azure Machine Learning service components end to end: workspace management, compute resources, data preparation, experiment tracking, training, deployment, and monitoring. You'll see version control and experiment tracking expectations, plus Azure ML pipelines and MLOps best practices sprinkled everywhere, not isolated in one corner like some afterthought.

Responsible AI shows up across domains. Expect questions about model interpretability, fairness, and governance: when to use explainers, what to track for audits, and how to think about bias and monitoring in production, in line with Responsible AI practices in Azure Machine Learning.

It's a balance of theory and doing, but DP-100 leans practical.

The focus is on real-world scenarios rather than memorizing service features. That's why your DP-100 study materials should include labs, and why DP-100 practice tests only help if you also build something, even if it's just a toy model deployed to a managed endpoint so you understand what breaks and why.

If you're coming from data engineering, pairing prep with something like DP-203 (Data Engineering on Microsoft Azure) helps. If you're heavier on admin and want more Azure grounding, DP-300 (Administering Relational Databases on Microsoft Azure) can round out platform thinking. And keep the official DP-100 (Designing and Implementing a Data Science Solution on Azure) exam page handy while you study.

People also ask about DP-100 exam cost, DP-100 difficulty, DP-100 prerequisites, and DP-100 renewal. Cost varies by region and promos. Difficulty? Medium-to-high if you've never shipped a model. Prereqs aren't formal, but Python, ML basics, and Azure basics are basically assumed. Renewal follows the usual Microsoft model: a free, open-book online assessment tied to your certification's expiration window.

DP-100 Exam Cost and Registration Details

How much does the DP-100 exam cost?

Alright, money talk.

The standard DP-100 exam cost sits at $165 USD, which honestly isn't cheap but also isn't outrageous when you stack it against other professional certifications out there in the tech world.

Here's the thing, though. That $165's just a baseline number. Your actual cost varies based on where you live, and I mean, regional pricing differences can seriously push that number higher in some countries because of local taxes, VAT, and administrative fees tacked on. If you're in Europe or parts of Asia, you might see the price bump up to $180 or even $200 after conversion and taxes get factored in, which is kinda frustrating.

To find the most current official pricing for your specific region, check the Microsoft Learn certification page or head directly to the Pearson VUE website. Microsoft updates pricing occasionally. The last thing you want is to budget for one amount and get smacked with a surprise at checkout.

Registration and scheduling

Registration happens through Microsoft's official certification portal, which connects to Pearson VUE for the actual scheduling.

First thing you need to do? Create a Microsoft certification profile if you don't already have one. It tracks all your Microsoft credentials and exam history and keeps everything organized.

Once your profile's set up, you'll schedule through Pearson VUE. You get two main options here: testing center or online proctored. Testing centers give you a controlled environment with someone physically watching over the room, which some people prefer. Online proctored lets you take it from home but requires a webcam, microphone, and a space that meets their requirements (no clutter, no other people around, that kind of thing).

Pretty flexible payment methods. Credit cards obviously work. PayPal's accepted. You can also use exam vouchers, which I'll get to in a second, or organizational training credits if your employer has a Microsoft training agreement set up.

Vouchers and discounts

Exam vouchers? That's where you can actually save some money, honestly.

Microsoft offers these through various channels, and they basically let you redeem the exam without paying the full retail price directly, which is clutch if you're on a budget.

Students get the best deals through Microsoft Imagine Academy and partnerships with academic institutions. Some universities bundle these certifications into their programs and provide vouchers at reduced rates or even free, which is a great deal. If you're currently enrolled somewhere, check with your IT or computer science department, because they might have vouchers available that you don't even know about.

Microsoft Virtual Training Days are another goldmine. These are free training events Microsoft runs regularly, and if you attend the full session for a specific certification track, they often give you a free exam voucher as a reward. The catch is that you need to actually show up for the whole thing, and these vouchers typically expire within a few months, so you can't just hoard them.

For organizations, corporate training agreements and volume licensing can reduce per-exam costs when buying in bulk. If your company's paying for your certification, they might already have credits available through their Microsoft partnership.

Retake policies and costs

Here's something important. Retakes cost the same as the original exam. No discount whatsoever.

If you fail, you're paying another $165 to try again, which stings. The waiting period before your first retake is typically 24 hours, which honestly feels generous compared to some other cert programs. But if you fail a second time, you wait 14 days before attempt number three. After five failures you're locked out for a year, which is pretty harsh but prevents people from just brute-forcing their way through.
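The retake rules above are easy to misremember, so here's a small sketch that returns the earliest date you could sit the exam again. It encodes the rules as described here (24 hours after the first failure, 14 days after later ones, a one-year lockout after five failures). Applying the 14-day wait to attempts four and five, and measuring the lockout year from your last attempt, are simplifying assumptions, so confirm against Microsoft's current retake policy before you book.

```python
from datetime import datetime, timedelta


def earliest_retake(last_attempt: datetime, failed_attempts: int) -> datetime:
    """Earliest next attempt under the retake rules described above.

    failed_attempts: how many times the exam has been failed so far.
    Assumptions: the 14-day wait also covers attempts four and five, and
    the one-year lockout is counted from the last attempt.
    """
    if failed_attempts < 1:
        raise ValueError("no retake wait applies before a first failure")
    if failed_attempts == 1:
        wait = timedelta(hours=24)
    elif failed_attempts < 5:
        wait = timedelta(days=14)
    else:
        # Five failures: the one-year lockout described above.
        wait = timedelta(days=365)
    return last_attempt + wait


if __name__ == "__main__":
    failed_on = datetime(2026, 3, 2, 9, 0)  # hypothetical exam date
    for fails in (1, 2, 5):
        print(fails, "failure(s): next attempt from", earliest_retake(failed_on, fails))
```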

Some people buy exam replay packages, which bundle the exam with a guaranteed retake option at a slightly reduced total cost. These can be worth it if you're not super confident, though I'd argue if you're that uncertain maybe you need more study time instead. I knew someone who bought three retakes upfront "just in case" and passed on the first try anyway, so that money just sat there wasted.

Rescheduling and cancellations

Actually pretty reasonable here.

If you cancel or reschedule at least 6 business days before your appointment, you get a full refund, no questions asked. Cancel with less notice and you'll pay a fee, and the exact amount depends on how close you are to the exam date.

Miss your appointment entirely (what they call a "no-show") and you forfeit the entire exam fee. No refund, no reschedule, nothing. This is why I always set multiple calendar reminders: one week out, one day out, and the morning of.

Special accommodations are available if you have disabilities that require testing adjustments. You submit a request through Pearson VUE with documentation, and they'll work with you on extended time, assistive technology, whatever you need to succeed. Just start this process early, because approval takes time.

Additional discount programs

Military and veteran discounts exist in some regions. Microsoft partners with organizations that support veteran employment and training, which is pretty cool. Nonprofit organization employees might also qualify for discounts through Microsoft's philanthropic programs, though these aren't as widely advertised or easy to find.

The real total cost

Beyond the exam fee itself, you need to think about the total investment required.

Study materials can run anywhere from free (Microsoft Learn paths) to several hundred dollars if you buy practice tests, instructor-led training, or lab subscriptions for hands-on practice. The DP-300 certification shares some Azure fundamentals that might help with foundational knowledge if you're coming from database administration, just saying.

Practice test bundles sometimes get offered at discounted rates when purchased alongside the exam, though honestly the quality varies wildly across vendors and you gotta be careful.

Tax and reimbursement considerations

Worth checking here.

Check whether exam fees count as tax-deductible professional development expenses in your jurisdiction. In many places they do, especially if the certification directly relates to your current job or clear career advancement trajectory.

Employer reimbursement is worth pursuing aggressively. Put together a brief case explaining how an Azure Machine Learning certification benefits your role and the company's cloud strategy. Many organizations with any kind of professional development budget will cover certification costs, especially for cloud technologies they're already using or planning to adopt.

ROI perspective

Is the DP-100 exam cost worth it?

Look, $165 plus study materials might total $300-500 depending on your approach and how much prep you need. Data scientists with Azure ML skills command higher salaries than those without cloud platform expertise. Even a modest salary bump of a few thousand dollars annually pays back the certification investment pretty quickly. The market for Azure data science skills is strong right now, and having that credential can open doors that would otherwise stay closed.
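The payback math is simple enough to sketch. The inputs below are hypothetical: a total spend at the top of the $300-500 range and a $3,000 annual raise, chosen only to show the calculation, not as a salary prediction.

```python
def payback_months(total_cost: float, annual_raise: float) -> float:
    """Months of a salary increase needed to cover certification spend.

    Ignores taxes and the hours you put into studying.
    """
    if annual_raise <= 0:
        raise ValueError("annual_raise must be positive")
    monthly_gain = annual_raise / 12
    return total_cost / monthly_gain


if __name__ == "__main__":
    # Hypothetical inputs: $500 total spend, $3,000/year raise.
    print(f"payback: {payback_months(500, 3000):.1f} months")
```

With those inputs the spend is recovered in two months; even halving the raise only stretches that to four.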

DP-100 Passing Score and Scoring Methodology

What is the Microsoft DP-100 certification?

The Microsoft DP-100 certification is the Azure Machine Learning credential most hiring managers actually recognize when they want proof you can do real work in Azure ML, not just talk about algorithms. You're being measured on designing and implementing a data science solution on Azure, which basically describes the day job for data scientists and ML engineers who build, train, and ship models.

This one's less about math tricks, honestly. More about platform choices.

It maps to roles like data scientist, applied ML engineer, and the person who gets paged when a deployment breaks.

DP-100 exam overview

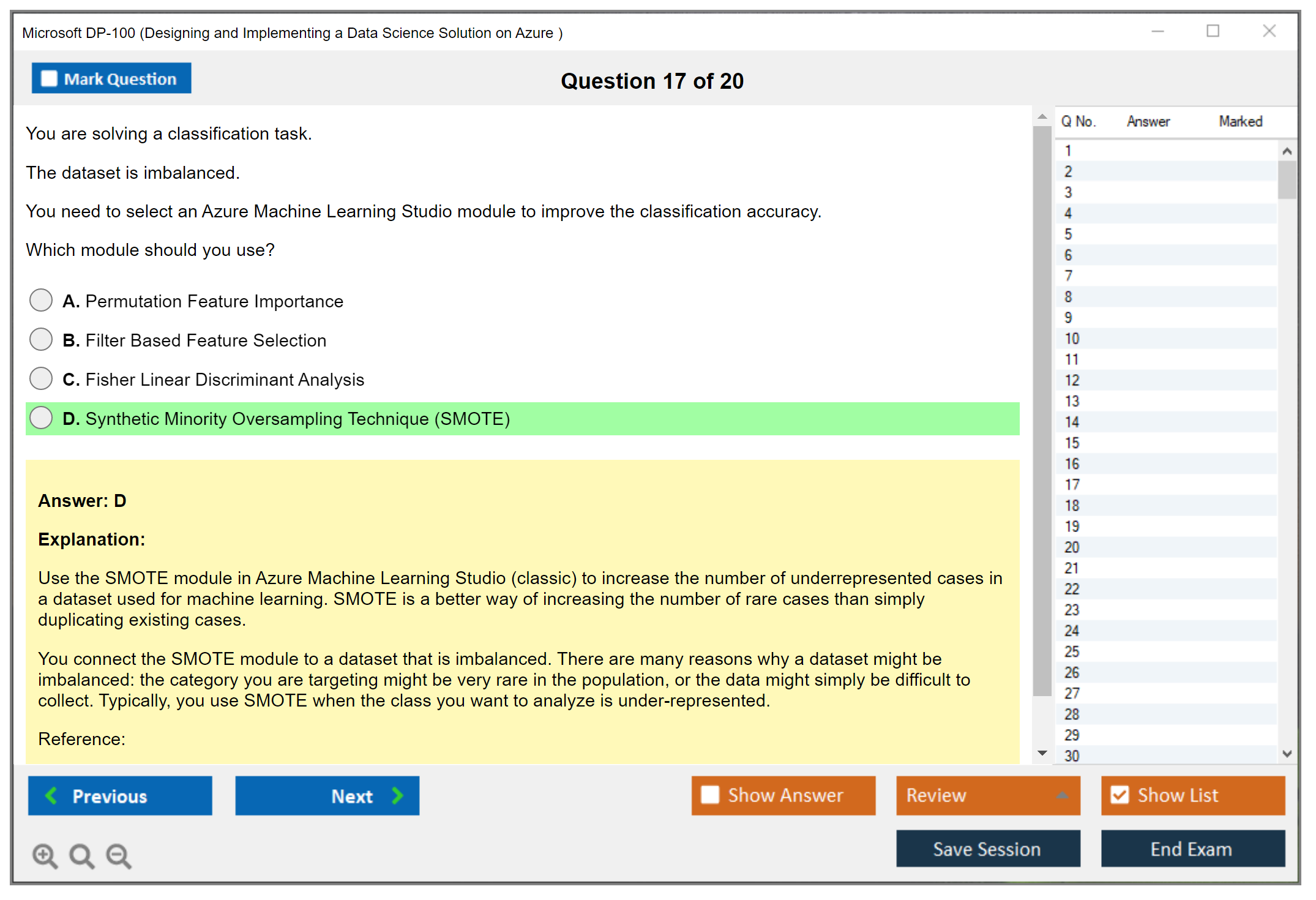

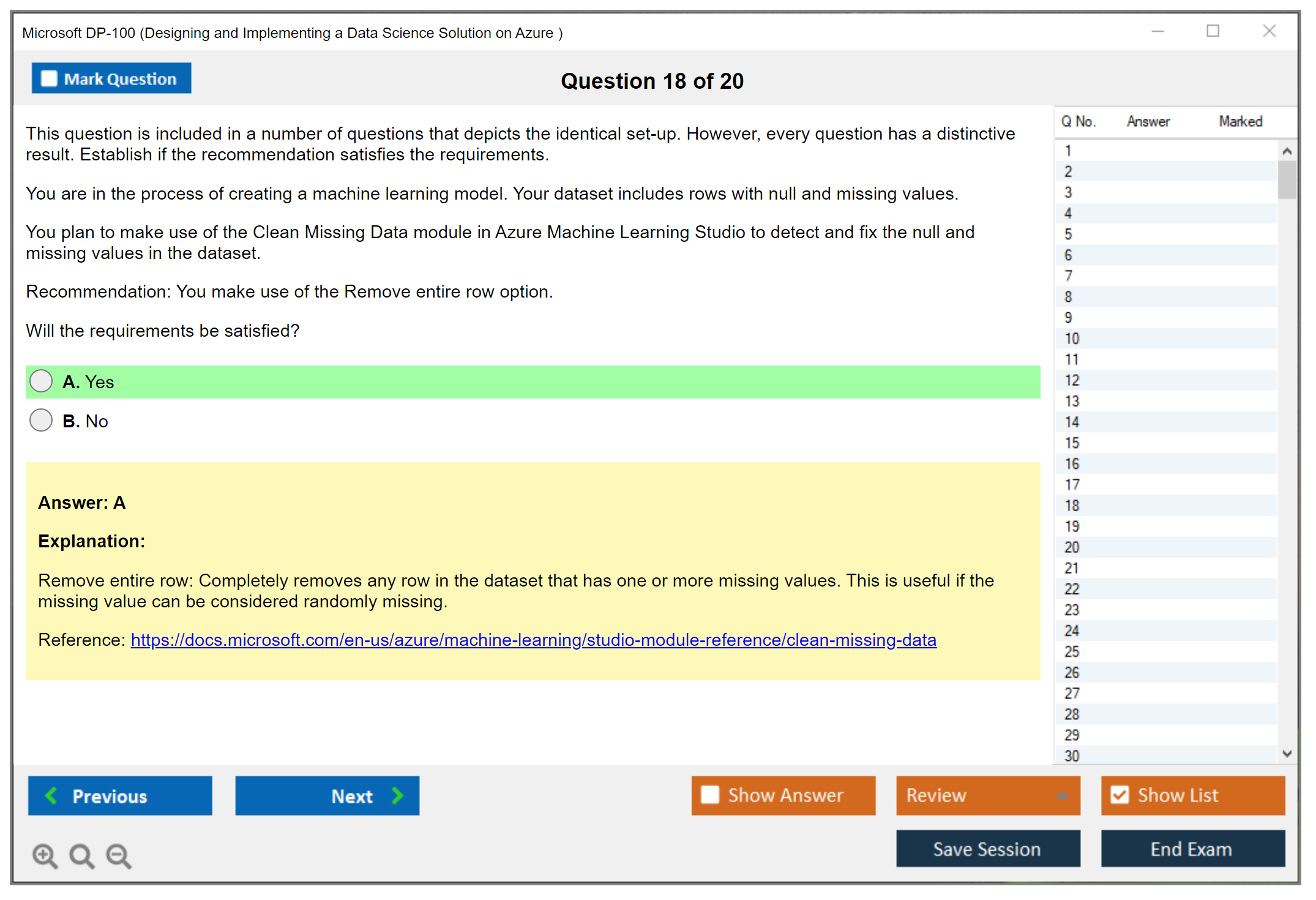

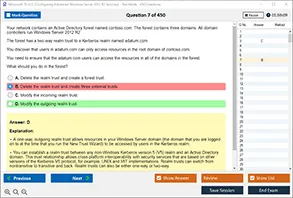

DP-100 is officially "Designing and Implementing a Data Science Solution on Azure." The format changes over time, but expect a mix of multiple choice, multi-select, drag-and-drop style items, and case study blocks where you get a scenario and a bunch of questions tied to it. The kind that tests whether you understand Azure ML pipelines and MLOps rather than whether you memorized one command.

The case studies feel real. The one-off questions? Warmup.

Time is time. No speed bonus. Use every minute.

Also, Microsoft can include unscored questions. Experimental items. They don't count toward your score, and there's no way to identify which ones they are while you're taking the exam, so you treat every question like it matters and keep moving.

DP-100 exam cost

People ask about the DP-100 exam cost a lot, and the annoying answer is "it depends." Pricing varies by region and currency, and Microsoft updates it, so check the official exam page at booking time for the current number. Some employers cover it, some training providers hand out vouchers, and sometimes you can get discounts through events or programs.

Retake policies exist. But they change, so don't rely on a blog post for the fine print. Check the policy during scheduling, because the rules around waiting periods and pricing can shift.

DP-100 passing score and scoring methodology

The DP-100 passing score requirement is 700 out of 1000 on Microsoft's scaled scoring system. That's the headline: the score range is 0 to 1000, and 700 is the minimum passing threshold.

Now the part that trips people up. Microsoft's scoring isn't a simple percentage-based calculation where 70% correct equals 700. Scaled scoring means your final number is a converted score, and the conversion from raw score to scaled score uses a proprietary algorithm that accounts for question difficulty, exam form differences, and how the exam is statistically calibrated. So a 700 really means "you showed enough competency," not "you got X questions right."
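To see why 70% correct isn't automatically 700, here's a purely hypothetical piecewise-linear mapping. It is not Microsoft's method, which is proprietary. It pins each form's cut score (the raw points judged to reflect minimum competency) to 700 and stretches the rest of the raw range around it. The cut values of 62 and 75 raw points are made up to stand in for a harder and an easier form.

```python
def scaled_score(raw: float, raw_max: float, raw_cut: float) -> int:
    """Map a raw score onto 0-1000 with the form's cut score pinned at 700.

    Hypothetical scheme for illustration only; Microsoft's real
    raw-to-scaled conversion is proprietary and not reproduced here.
    """
    if not 0 < raw_cut < raw_max:
        raise ValueError("raw_cut must lie strictly between 0 and raw_max")
    if not 0 <= raw <= raw_max:
        raise ValueError("raw score out of range")
    if raw < raw_cut:
        # Below the cut: stretch 0..raw_cut onto 0..700.
        return round(700 * raw / raw_cut)
    # At or above the cut: stretch raw_cut..raw_max onto 700..1000.
    return round(700 + 300 * (raw - raw_cut) / (raw_max - raw_cut))


if __name__ == "__main__":
    # Same 70 of 100 raw points on two hypothetical forms.
    print("harder form (cut 62):", scaled_score(70, 100, 62))
    print("easier form (cut 75):", scaled_score(70, 100, 75))
```

Under this toy scheme the same 70 raw points land at 763 on the harder form and 653 on the easier one. That's the fairness argument for scaling, not a prediction of your own score.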

Different questions carry different weight values, too. Performance-based scoring's real. Case study questions are typically worth more points than standalone multiple-choice items because they're testing a broader skill set across the DP-100 exam objectives like experiment tracking, model training and deployment in Azure, and Responsible AI in Azure Machine Learning. And yes, partial credit can happen: some multi-part questions may award points for partially correct answers, which is why being "half right" beats blank.

No penalty for wrong answers. Guessing beats blanks. Always answer.
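A toy raw-score tally makes the "always answer" advice concrete. The point values (2 for a case study item, 1 for a standalone item) and the proportional partial-credit rule are made-up assumptions, since Microsoft doesn't publish per-item weights. What the sketch does reflect from the text: a wrong or blank answer earns zero rather than a negative score, so a guess can only help.

```python
from dataclasses import dataclass


@dataclass
class Item:
    weight: float          # hypothetical point value for the item
    correct_parts: int     # parts answered correctly (0 for wrong or blank)
    total_parts: int = 1   # more than 1 for multi-part items


def raw_score(items: list[Item]) -> float:
    """Sum weighted credit with proportional partial credit.

    Wrong or blank answers contribute 0, never a negative amount.
    """
    total = 0.0
    for item in items:
        if not 0 <= item.correct_parts <= item.total_parts:
            raise ValueError("correct_parts must be between 0 and total_parts")
        total += item.weight * item.correct_parts / item.total_parts
    return total


if __name__ == "__main__":
    exam = [
        Item(weight=1, correct_parts=1),                 # standalone, right
        Item(weight=1, correct_parts=0),                 # standalone, wrong: 0, not -1
        Item(weight=2, correct_parts=2, total_parts=3),  # case study item, partly right
    ]
    print(f"raw score: {raw_score(exam):.2f} of {sum(i.weight for i in exam)}")
```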

Microsoft also doesn't provide exact raw scores. Not gonna lie, people hate that, but it's about exam integrity and question bank security. If everyone knew precisely how many points each item was worth and how the conversion worked, it'd get gamed fast, and you'd see memorization strategies get even worse than they already are.

Scaled scoring exists mainly for fairness. Exam forms vary, and the difficulty can vary across versions, so scaled scoring helps ensure consistency regardless of which questions you receive. Microsoft uses statistical validation to calibrate difficulty, and questions are pre-tested and validated before inclusion in scored exams, which is also why those unscored experimental questions show up at all.

Beta exams? Special case. Beta scoring and results take longer because Microsoft's still validating the exam, tuning question performance, and finalizing that scoring model based on real candidate data, so don't panic if you take a beta and you're waiting.

A 700, practically speaking, means you met the bar across the skills measured. It's not a trophy for perfection. And once you pass, certification status is binary: pass/fail is what matters, and your specific score above 700 doesn't appear on the certificate.

Score report breakdown and how to use it

Your score report gives performance feedback by objective domain. Instead of handing you the raw math, Microsoft gives domain-level categories like above target, near target, or below target for each skill area. That's what you use for retake prep.

If you fail, don't obsess over "I was 30 points away." Focus on the domains marked below target, then map them back to the DP-100 exam objectives and build a fix list. For example, if you're weak on operationalization, go do hands-on work with endpoints, monitoring, and rollback patterns. If you're weak on experimentation, spend time in Azure ML studio and SDK doing runs, metrics, and registries until it feels boring. I've seen people spend weeks on video courses and still bomb the deployment section because they never actually set up a real endpoint. Watching someone click through a demo isn't the same as troubleshooting why your inference config keeps rejecting your scoring script.

Retaking after failure? Clean slate. Your score doesn't carry over or influence future attempt scoring. Also, incomplete exams tend to score proportionally lower simply because you left points on the table, so time management matters even though there's no bonus for finishing early and no penalty for using the full time.

If you want targeted prep, practice packs are fine as long as you use them as diagnostics, not a crutch. The DP-100 Practice Exam Questions Pack is one option at $36.99, and it's useful when you review why each option is right or wrong, then reproduce the task in Azure ML for real. Don't just memorize.

DP-100 renewal and what happens later

DP-100 renewal is its own thing. Renewal assessments have different scoring models than the proctored exam, and Microsoft can change the renewal process over time, so you follow the instructions in your Microsoft Learn certification dashboard when your renewal window opens.

Pass once. Maintain it later. Different rules apply.

DP-100 FAQs

How much does the DP-100 exam cost?

It varies by region and currency, so check the exam registration page for the current price, and watch for vouchers or employer reimbursement.

What is the passing score for the DP-100 exam?

700 out of 1000 on a scaled scoring system, not a straight percentage.

Is DP-100 difficult for beginners?

If you're new to Azure, yes. DP-100's difficulty is mostly about platform depth: Azure ML pipelines and MLOps, data access, identity, deployment patterns, and Responsible AI expectations.

What are the best study materials and practice tests for DP-100?

Microsoft Learn plus hands-on labs is the base. Add DP-100 practice tests carefully, and use something like the DP-100 Practice Exam Questions Pack to find weak spots, then fix them with real Azure work.

How do I renew the Microsoft DP-100 certification?

Use the renewal assessment in your Microsoft certification dashboard during the renewal window, and don't assume the proctored scoring rules carry over.

DP-100 Difficulty Level and Study Timeline

Understanding where DP-100 sits in the Microsoft certification space

The DP-100 difficulty level falls squarely in that intermediate to advanced zone separating the "I just learned what Azure is" crowd from folks who actually build production ML systems. It's definitely harder than your foundational certs like AZ-900 or DP-900. Those are basically "I know what cloud computing means" tests. DP-100 demands you actually know how to design, build, and deploy machine learning solutions on Azure, not just identify what a virtual machine is.

I'd put it roughly on par with AI-102 in terms of overall difficulty, though they test different skill sets. Both assume you're past the basics and ready to implement real solutions. The exam expects you to make architectural decisions, optimize costs, troubleshoot production issues, and understand the trade-offs between different approaches. You can't just regurgitate definitions from some study guide.

Is DP-100 difficult for beginners

Not gonna lie. If you're brand new to both machine learning and Azure, DP-100's going to hurt. This isn't a certification you can pass by memorizing definitions or watching a few YouTube videos. You need solid hands-on experience with Azure Machine Learning workspaces. You also need to understand the underlying ML concepts well enough to make informed decisions about algorithm selection, feature engineering, and model evaluation.

Beginners often underestimate how much prerequisite knowledge DP-100 assumes. You should be comfortable with Python before you even think about scheduling this exam. Not just "I can write a for loop" comfortable, but "I regularly use pandas, scikit-learn, and matplotlib" comfortable. The Azure ML SDK becomes your daily driver during preparation. If you're still Googling basic Python syntax, you're not ready.

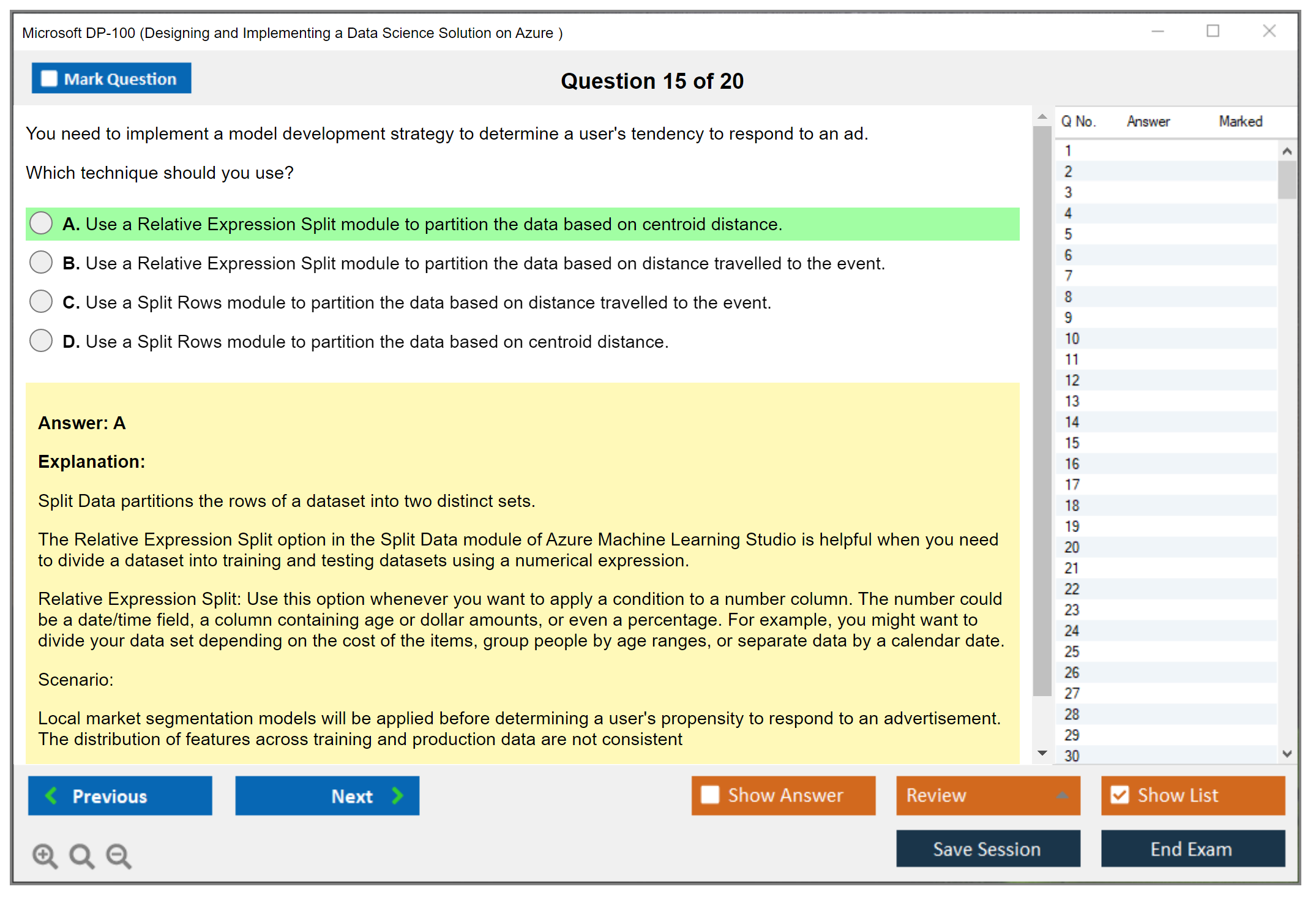

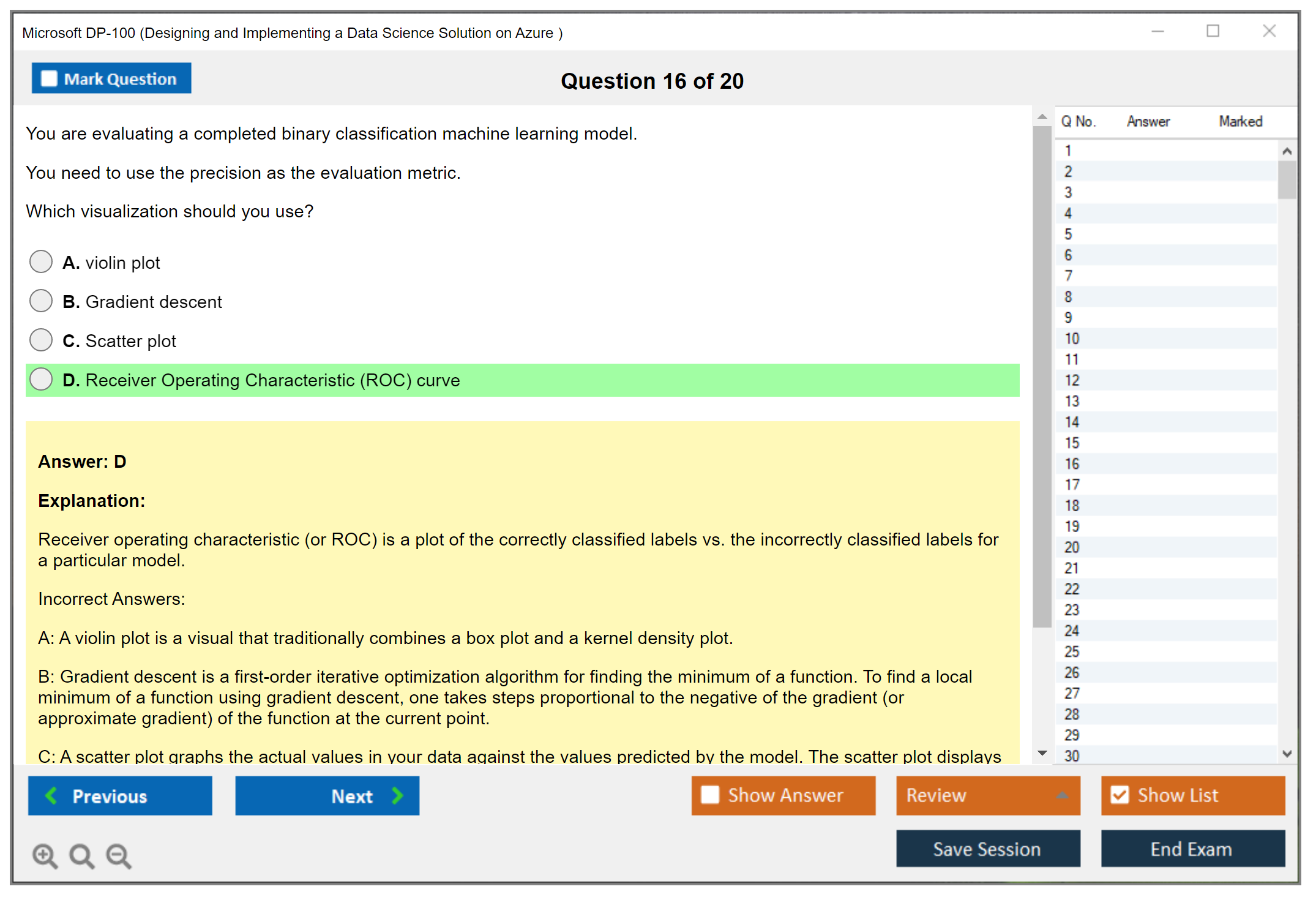

The conceptual complexity around ML algorithms and evaluation metrics trips up a lot of people. Understanding precision vs recall isn't enough. You need to know when each metric matters for a specific business scenario. Same with choosing between model types. The exam loves scenario-based questions that ask you to recommend the right approach for a given problem, considering constraints like data volume, training time, interpretability requirements, and deployment environments. This reminds me of when I was first learning recall and kept confusing it with sensitivity, until I finally realized they're the same thing. Felt dumb, but whatever.
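To make the precision/recall distinction concrete, here's a tiny stdlib sketch with made-up counts. It isn't exam material, just a reminder of which error each metric punishes. False positives drag precision down. False negatives drag recall (a.k.a. sensitivity) down.

```python
def precision_recall(tp: int, fp: int, fn: int) -> tuple[float, float]:
    """Return (precision, recall) from confusion-matrix counts.

    Recall is the same quantity as sensitivity (the true positive rate).
    Empty denominators return 0.0 instead of raising.
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Made-up fraud-detection counts: 80 frauds caught,
# 20 legitimate transactions flagged, 40 frauds missed.
p, r = precision_recall(tp=80, fp=20, fn=40)
print(f"precision={p:.2f} recall={r:.2f}")  # precision=0.80 recall=0.67
```

If missed fraud is the expensive mistake, you care most about recall. If every flag triggers a costly manual review, precision matters more. That's the kind of trade-off the scenario questions are really asking about.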

How different backgrounds affect your DP-100 difficulty timeline

If you're an experienced data scientist who's just new to Azure, you're looking at maybe 3-4 weeks of intensive study. You already get the ML fundamentals, so you're really just learning the Azure-specific implementations. Focus heavily on Azure ML pipelines and MLOps. Those are where your gaps will show up. The DP-100 Practice Exam Questions Pack at $36.99 can help identify which Azure services you need to prioritize.

Azure professionals who are new to data science face a steeper climb. You know your way around the portal and understand Azure architecture, but now you need to learn an entire discipline. Plan for 8-12 weeks and don't skip the ML fundamentals. You can't fake your way through understanding bias-variance tradeoff or feature scaling. The exam's scenario questions will expose that knowledge gap faster than you'd expect. Similar to how DP-300 requires deep database knowledge, DP-100 demands real ML understanding.

Complete beginners? This isn't where you should start. Get some foundational certs first. Build some ML projects. Learn Python properly. Then come back to DP-100 when you've got those prerequisites nailed down.

Professionals with both Azure and ML experience have it easiest. You might only need 2-3 weeks for review and to fill in specific gaps around newer Azure ML features. Your hands-on experience carries you through most of the exam content.

The specific challenges that make DP-100 tough

Azure ML pipelines and MLOps implementation details consistently rank as the hardest topics. Understanding pipeline components is one thing. Knowing how to optimize pipeline execution, handle failures gracefully, and implement proper versioning and monitoring? That's where people struggle. The exam digs into these operational details way more than you'd expect. It asks about parallel run steps, pipeline scheduling, parameter passing between components, and caching strategies that actually matter in production environments.
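On caching: Azure ML can reuse a pipeline step's earlier output instead of re-running it when nothing feeding that step has changed. The stdlib sketch below models that idea with a content hash over the step name, a code version, parameters, and inputs. It's a hypothetical illustration of the concept, not Azure ML's implementation. The `allow_reuse` name echoes an option on v1 SDK steps, but every function here is made up.

```python
import hashlib
import json
from typing import Any, Callable

# Hypothetical in-memory cache: step fingerprint -> previous output.
_step_cache: dict[str, Any] = {}


def fingerprint(step_name: str, code_version: str, params: dict, inputs: dict) -> str:
    """Hash everything that determines a step's output."""
    payload = json.dumps(
        {"step": step_name, "code": code_version, "params": params, "inputs": inputs},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def run_step(step_name: str, code_version: str, func: Callable[..., Any],
             params: dict, inputs: dict, allow_reuse: bool = True) -> tuple[Any, bool]:
    """Run func(**inputs, **params), or return a cached result.

    Returns (output, reused) so the caller can see whether the step actually ran.
    """
    key = fingerprint(step_name, code_version, params, inputs)
    if allow_reuse and key in _step_cache:
        return _step_cache[key], True
    output = func(**inputs, **params)
    _step_cache[key] = output
    return output, False


def scale(values: list, factor: float) -> list:
    """Toy step body: multiply every value by a pipeline parameter."""
    return [v * factor for v in values]


out1, reused1 = run_step("scale", "v1", scale, {"factor": 2.0}, {"values": [1, 2, 3]})
out2, reused2 = run_step("scale", "v1", scale, {"factor": 2.0}, {"values": [1, 2, 3]})
out3, reused3 = run_step("scale", "v1", scale, {"factor": 3.0}, {"values": [1, 2, 3]})
print(reused1, reused2, reused3)  # False True False
```

Changing a parameter changes the fingerprint, so the step reruns. That's also why non-deterministic steps (random sampling, "pull latest data") are risky to cache.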

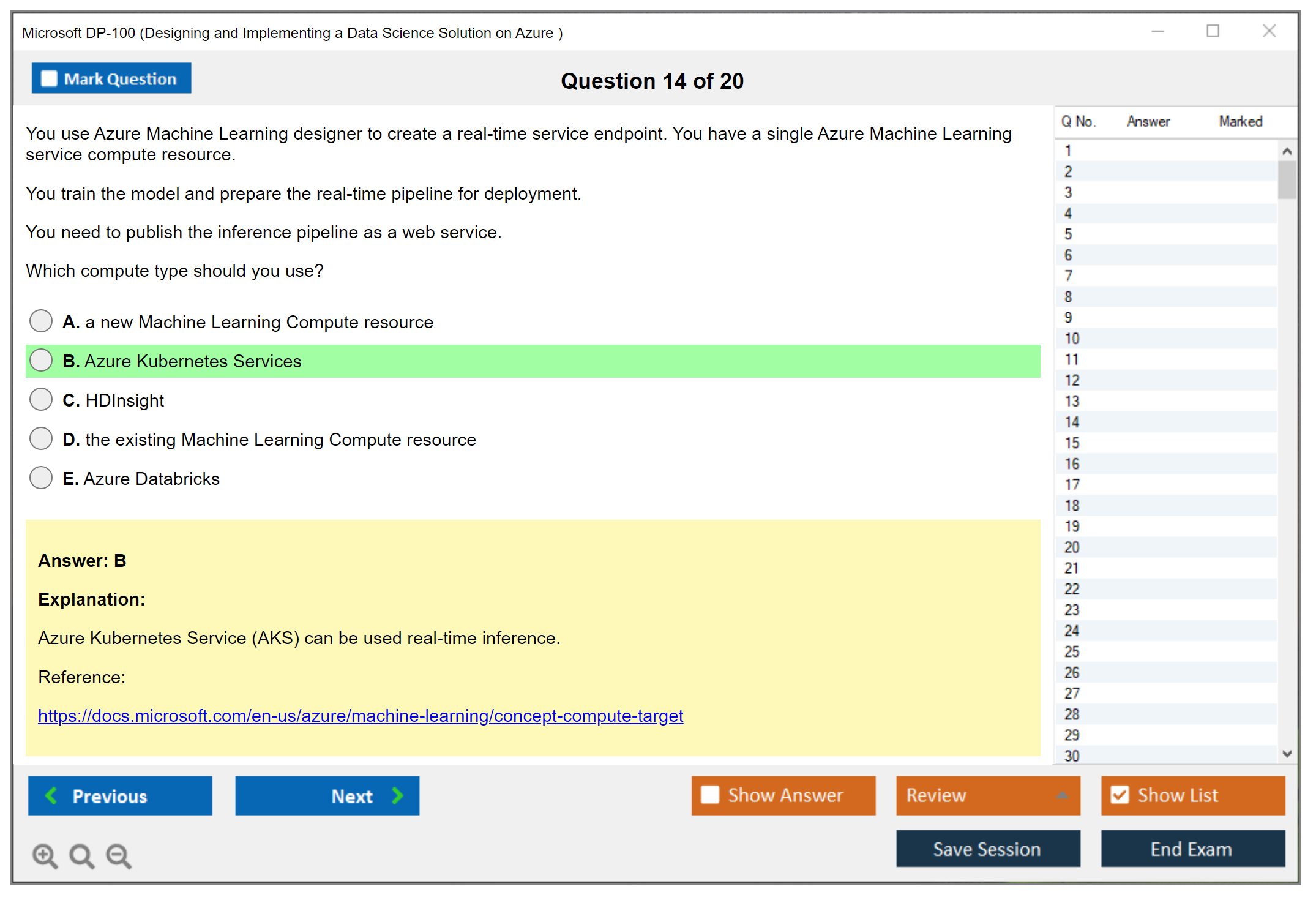

Compute resource selection trips people up constantly. When do you use compute instances vs compute clusters vs inference clusters? What about AKS vs ACI for deployment? The cost implications of each choice matter, and the exam tests whether you can match the right compute option to specific scenarios.

Model deployment gets tricky. Azure offers multiple paths: real-time endpoints, batch endpoints, edge deployment. Each has appropriate use cases, and you need to understand the trade-offs. Monitoring deployed models adds another layer, with questions about detecting data drift, model performance degradation, and setting up proper alerting.

Cost optimization questions are sneaky. The exam doesn't just ask "what's cheapest." It presents business requirements and asks you to balance cost against performance, scalability, and reliability. Security and access control configurations also show up more than expected, testing your knowledge of managed identities, service principals, and proper RBAC implementation.

Realistic study timelines based on your situation

The 2-3 week timeline (40-60 hours total) assumes you're already strong in both Azure and ML. Your study focuses on newer features, exam-specific scenarios, and filling small knowledge gaps. Heavy practice test usage helps identify weak spots quickly.

The 4-6 week range (80-120 hours total) works for people with strong foundations, such as data scientists learning the Azure specifics. Half your time goes to hands-on Azure ML labs. The other half covers Azure architecture and service integration patterns.

Azure professionals need that 6-8 week window (120-160 hours) because learning ML fundamentals takes time. You can't rush understanding how different algorithms work or when to apply various evaluation metrics. I tried once, bombed a practice test, learned my lesson. Budget time for both conceptual learning and practical implementation.

Career changers are looking at 10-12 weeks (200+ hours) because they're building foundations in multiple areas at once. This isn't just exam prep. It's skill development. You're learning Python, ML concepts, Azure fundamentals, and then finally Azure ML specifics. Even this timeline assumes a steady 17-20 hours every week, which sounds doable until life happens.
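Dividing each plan's hours by its weeks is a quick sanity check on the weekly commitment it really implies. This sketch pairs the low end of each hour range with the short end of its week range (my assumption about how the ranges line up) and treats "200+" as a 200-hour floor.

```python
def hours_per_week(total_hours: float, weeks: float) -> float:
    """Average weekly study load implied by a total-hours budget."""
    return total_hours / weeks


# (hours, weeks) endpoints of each plan; pairing is an assumption,
# and "200+" for career changers is treated as a 200-hour floor.
PLANS = {
    "Azure + ML experience": [(40, 2), (60, 3)],
    "Strong foundations": [(80, 4), (120, 6)],
    "Azure pros new to ML": [(120, 6), (160, 8)],
    "Career changers": [(200, 10), (200, 12)],
}

for label, endpoints in PLANS.items():
    loads = [round(hours_per_week(h, w), 1) for h, w in endpoints]
    print(f"{label}: {loads} hours/week")
```

Every plan lands at roughly 17-20 hours a week. If you can realistically give 10, stretch the calendar instead of pretending the hours will appear.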

Making your study time count

At least 50% of your preparation should involve hands-on work in Azure. Reading documentation matters, but you need to actually build pipelines, deploy models, and troubleshoot issues. Azure's free tier and trial options give you enough runway to practice without breaking the bank, though you might need to spend $20-50 on compute resources for more complex scenarios.

The DP-100 Practice Exam Questions Pack helps validate your readiness once you've done the hands-on work. I'd recommend using practice tests after you've covered the major topics, not before. Otherwise you're just memorizing answers without understanding context. Quality practice tests should feel harder than the actual exam. If you're consistently hitting 85%+ on realistic practice questions, you're probably ready.

Watch for warning signs. Struggling with hands-on labs? Unfamiliar with Azure ML terminology when reading documentation? Those indicate you're not ready yet. The exam expects confidence across all domains: compute management, model training, deployment, and monitoring. You can't have huge gaps and expect to pass.

Similar to other technical Microsoft exams like AI-102, DP-100 rewards depth over breadth. Better to truly understand core concepts than superficially cover everything.

DP-100 Exam Objectives and Skills Measured

The Microsoft DP-100 certification is basically Microsoft's way of verifying you can build, train, deploy, and keep an ML solution running inside Azure Machine Learning without clicking random buttons and hoping something works. It's not a "do you know sklearn" quiz. It's closer to "can you operationalize ML like actual production software."

The DP-100 exam objectives are organized into four primary functional groups, each carrying a weighted percentage. Those weights matter far more than most candidates want to admit, because many assume every domain counts equally when it absolutely doesn't. If one domain sits at 30 to 35% and another at only 10 to 15%, you don't study them identically unless you enjoy stress and paying for retakes, which I'm guessing you don't. Treat the weights as your time budget. They also signal what Microsoft believes a working data scientist on Azure actually spends time doing: a lot of operational work, a ton of repeatability engineering, and less "cool model architecture" energy than most people expect walking in.
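Turning weights into a time budget is simple proportional arithmetic. The sketch below splits a total study budget by the midpoint of each weight range. The domain labels and percentages are hypothetical placeholders, not the official DP-100 numbers. Swap in the ranges from the current skills outline.

```python
def allocate_hours(weights: dict[str, tuple[float, float]],
                   total_hours: float) -> dict[str, float]:
    """Split total_hours across domains in proportion to each weight range's midpoint.

    Midpoints are renormalized, since published ranges rarely sum to exactly 100%.
    """
    midpoints = {name: (lo + hi) / 2 for name, (lo, hi) in weights.items()}
    total_mid = sum(midpoints.values())
    return {name: total_hours * mid / total_mid for name, mid in midpoints.items()}


# Hypothetical weight ranges in percent; NOT the official DP-100 numbers.
example_weights = {
    "Domain A": (30, 35),
    "Domain B": (25, 30),
    "Domain C": (20, 25),
    "Domain D": (10, 15),
}

for name, hours in allocate_hours(example_weights, total_hours=100).items():
    print(f"{name}: {hours:.1f} h")
```

Using midpoints is a judgment call. If you're already weak in a heavy domain, skew toward the top of its range instead.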

Microsoft also updates DP-100 periodically, and they're not shy about reshuffling things as Azure ML changes. Check the official skills outline right before you launch your DP-100 study materials plan, not after burning two weeks on outdated notes from 2024. The 2026 version can shift items like managed online endpoints, v2 SDK patterns, or Responsible AI tooling expectations. Suddenly your DP-100 practice tests feel weirdly misaligned and you're panicking three days before the exam. Not great.

Manage Azure resources for machine learning (weighted domain)

This chunk is where you prove you can stand up an Azure Machine Learning workspace without creating a billing disaster or a security nightmare. Creating and configuring a workspace includes picking the region, tying it to the right subscription and resource group, and understanding the associated resources Azure spins up behind the scenes: a storage account, a key vault, a container registry, and Application Insights, depending on your choices. They all matter for day-two operations when things break.

Access control shows up fast. Managing workspace access using Azure RBAC isn't optional. You should know the difference between "can read experiments" versus "can create compute" and why giving Owner permissions to everyone is a terrible career choice that ends badly. The exam loves practical governance questions because real organizations love not getting breached or audited into oblivion.

Compute is a whole mini-universe here: compute instances for dev and testing, compute clusters for training at scale, inference clusters for deployment, plus attached compute like Azure Databricks, Azure Synapse, and HDInsight when you need distributed processing. Quick tangent: most people vastly overestimate their GPU needs at first and burn through credits in about three days running massive instances for models that would train fine on a modest CPU cluster with maybe twenty extra minutes of wall-clock time. The exam tends to probe the "which compute for which workload" decision: interactive notebook work versus distributed training versus low-latency serving. Then it piles on cost-control scenarios with start/stop schedules, idle-shutdown settings, and autoscale configurations that keep bills predictable. Don't hand-wave specs either. Choosing CPU versus GPU, memory, and node counts shows up constantly in scenario questions where you pick the least expensive option that still meets throughput. Those questions are sneakier than they look.
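The "least expensive option that still meets throughput" questions boil down to a small search: for each option, work out how many nodes you'd need, drop options that can't scale that far, then compare hourly cost. The option names, prices, and throughputs below are made-up placeholders, not real Azure VM sizes or pricing. The sketch also ignores startup latency, quotas, and idle time.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ComputeOption:
    name: str                      # hypothetical label, not a real Azure VM size
    cost_per_node_hour: float      # made-up price
    samples_per_node_hour: float   # made-up throughput per node
    max_nodes: int                 # cluster max-node setting


def cheapest_option(options: list[ComputeOption],
                    required_per_hour: float) -> Optional[tuple[ComputeOption, int, float]]:
    """Return (option, nodes, hourly_cost) for the cheapest option that meets
    the required throughput, or None if no option can scale far enough."""
    best: Optional[tuple[ComputeOption, int, float]] = None
    for opt in options:
        nodes = math.ceil(required_per_hour / opt.samples_per_node_hour)
        if nodes > opt.max_nodes:
            continue  # this option can't reach the throughput target
        hourly_cost = nodes * opt.cost_per_node_hour
        if best is None or hourly_cost < best[2]:
            best = (opt, nodes, hourly_cost)
    return best


options = [
    ComputeOption("small_cpu", 0.20, 1_000, 10),
    ComputeOption("large_cpu", 0.80, 5_000, 4),
    ComputeOption("single_gpu", 3.00, 40_000, 2),
]
choice = cheapest_option(options, required_per_hour=12_000)
if choice:
    opt, nodes, cost = choice
    print(f"{opt.name} x{nodes} at ${cost:.2f}/hour")  # large_cpu x3 at $2.40/hour
```

With these invented numbers the GPU is the fastest single node but not the cheapest way to hit the target. That's exactly the trap the scenario questions like to set.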

Data management is also crammed into this domain. You'll register datastores such as Azure Blob Storage, ADLS Gen2, and Azure SQL, then create and version datasets of both tabular and file types. You'll also set up data drift monitoring on registered datasets, a classic "production ML is tedious" topic that nobody talks about in Kaggle competitions. Data labeling projects matter for supervised learning pipelines, especially when the exam wants you to connect labeling workflows to dataset versioning and traceability for compliance. Keep receipts, always.

Environments and security round it out: creating and managing environment definitions for reproducible experiments, using Key Vault integration for secrets instead of hardcoding passwords like an intern, configuring private endpoints and network isolation for regulated industries, and turning on diagnostics and logging at every layer. That last bit is sneaky important because if you can't see logs when something fails, you can't fix failures. Failures are half the Azure ML experience on any given Tuesday, honestly.

Run experiments and train models (weighted domain)

This is the "can you actually train stuff in Azure ML without breaking everything" section. It mixes SDK and CLI mechanics with real modeling workflows that mirror production environments. Running experiments with the Azure Machine Learning SDK and CLI includes logging metrics, parameters, and artifacts properly, tagging runs so you can find them later, and organizing experiment history so you can compare runs three months from now. You don't want to lose the one good model you trained at 2 a.m. after six cups of coffee. It happens constantly.

The exam explicitly covers several training approaches: script-based training with custom Python for maximum control, AutoML for classification, regression, and forecasting when you need fast baselines, and Designer for drag-and-drop flows when stakeholders want visual pipelines they can follow without reading code. AutoML configuration is far more than clicking "run" and walking away. You need to set the primary metric and optimization goal correctly, choose validation strategies that won't leak data, use data guardrails to catch issues early, and turn on explainability features. That's where people who have only worked in Jupyter notebooks sometimes stumble hard on scenario questions. Estimators and script run configurations also show up frequently, especially around specifying the environment correctly, pointing to the entry script, passing arguments that don't break serialization, and targeting compute in a way that works reliably across runs.
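On "validation strategies that won't leak data": with time-ordered data, shuffled k-fold lets the model peek at the future. A common fix is a rolling-origin split, where every validation window sits strictly after its training window. AutoML manages validation for you, so treat this generic sketch (not AutoML's code) as a way to reason about why the setting matters. It assumes rows are already sorted by timestamp.

```python
def rolling_origin_splits(n_samples: int, n_folds: int,
                          horizon: int) -> list[tuple[range, range]]:
    """Return (train_idx, valid_idx) pairs where validation always follows training.

    Assumes rows are sorted by time. Each fold trains on every row before its
    validation window, so no future rows leak into training.
    """
    if n_folds * horizon >= n_samples:
        raise ValueError("not enough samples for the requested folds and horizon")
    splits = []
    for fold in range(n_folds):
        valid_end = n_samples - (n_folds - 1 - fold) * horizon
        valid_start = valid_end - horizon
        splits.append((range(0, valid_start), range(valid_start, valid_end)))
    return splits


for train, valid in rolling_origin_splits(n_samples=12, n_folds=3, horizon=2):
    print(f"train 0-{train.stop - 1}, validate {valid.start}-{valid.stop - 1}")
```

Each fold's training window grows while the validation window rolls forward, which mimics how the model will actually be used: trained on the past, scored on what comes next.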

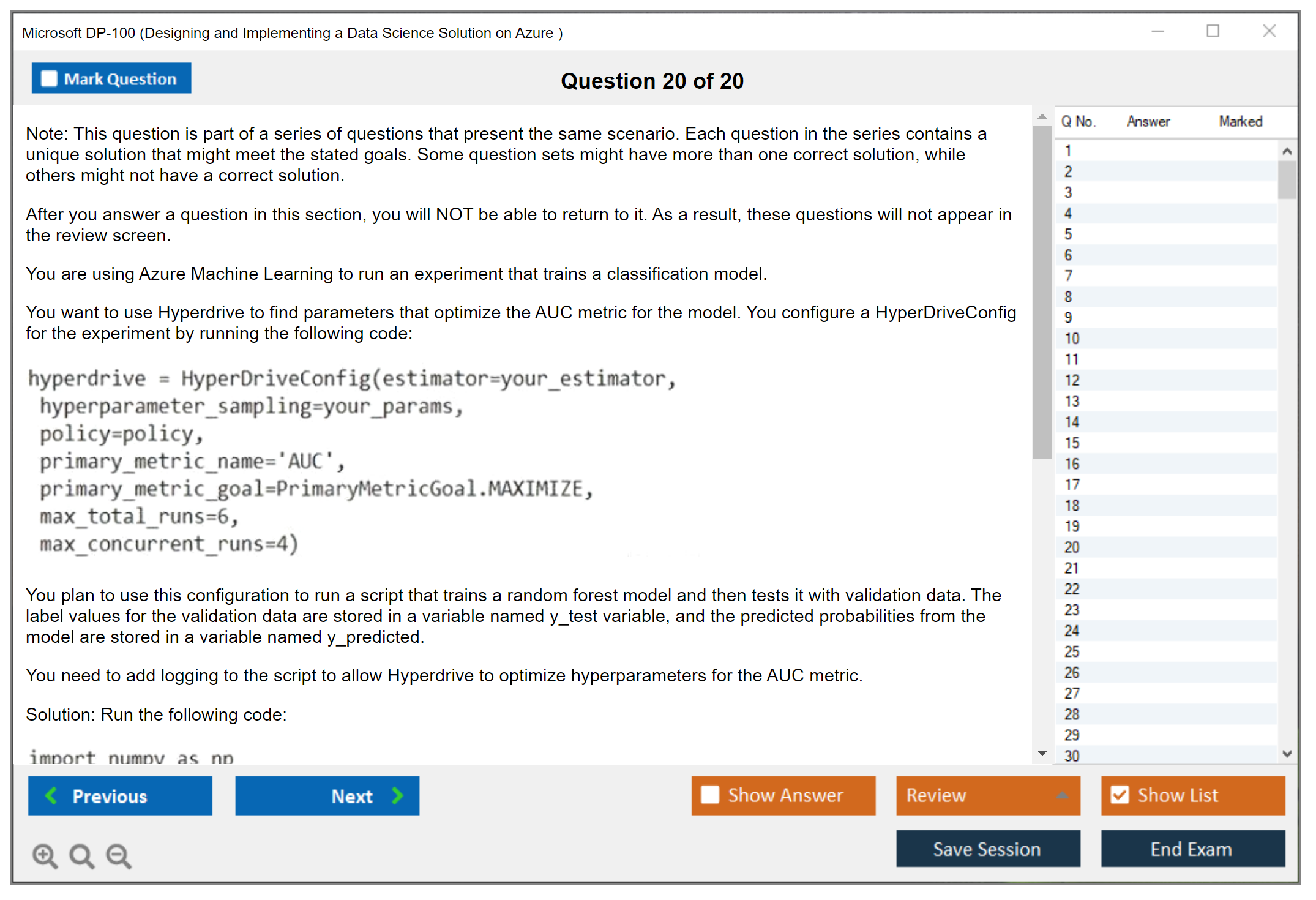

Distributed training is fair game for larger scenarios: data parallelism across nodes when your dataset is massive, model parallelism for very large models that won't fit in a single GPU's memory, Horovod integration, and the other distributed frameworks Azure ML supports natively. Hyperparameter tuning is another exam favorite: defining search spaces, choosing sampling methods (random, grid, and Bayesian), and applying early termination policies like Bandit and Median Stopping to save compute costs. You also need to track model lineage properly and compare runs systematically to pick the best candidate based on metrics that actually matter for your use case. Sometimes accuracy isn't even the right metric. Pipelines come in when questions ask how to run training at scale with reusable components, parameterized modules, and steps that run in sequence or in parallel. Then you troubleshoot failed runs using logs and metrics while keeping performance good and resource utilization reasonable without burning money. That's literally the job description.
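As I understand Azure ML's description of the Bandit policy, it compares each run against the best run at the same evaluation interval. When maximizing, any run below best / (1 + slack_factor) gets terminated. So with a best of 0.8 and slack_factor 0.2, the cutoff is about 0.67. Here's a stdlib sketch of that single check. The minimize branch is my assumed mirror of the rule, and the run names and metrics are made up. The real policy also takes scheduling parameters such as evaluation_interval and delay_evaluation, which this sketch leaves out.

```python
def bandit_should_stop(run_metric: float, best_metric: float,
                       slack_factor: float, maximize: bool = True) -> bool:
    """Bandit-style slack-factor check at a single evaluation interval.

    Maximizing: stop when run_metric < best_metric / (1 + slack_factor).
    Minimizing (assumed mirror of the rule): stop when
    run_metric > best_metric * (1 + slack_factor).
    """
    if maximize:
        return run_metric < best_metric / (1 + slack_factor)
    return run_metric > best_metric * (1 + slack_factor)


def runs_to_stop(metrics_at_interval: dict[str, float], slack_factor: float,
                 maximize: bool = True) -> list[str]:
    """Names of runs that fall outside the slack of the best run at this interval."""
    values = metrics_at_interval.values()
    best = max(values) if maximize else min(values)
    return sorted(
        run for run, metric in metrics_at_interval.items()
        if bandit_should_stop(metric, best, slack_factor, maximize)
    )


# Made-up accuracies reported at the same interval by three tuning runs.
interval_metrics = {"run_1": 0.80, "run_2": 0.70, "run_3": 0.62}
print(runs_to_stop(interval_metrics, slack_factor=0.2))  # ['run_3']
```

A smaller slack_factor kills runs more aggressively. That saves compute, but it also risks killing slow starters that would have caught up.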

Deploy and operationalize machine learning solutions (weighted domain)

This is the end-to-end "model training and deployment in Azure" workflow, where theory meets production reality. Register models in the registry, version them properly so you can roll back when v3 breaks everything, attach metadata for governance, then pick a deployment target that matches your latency and scale requirements. Registering trained models sounds basic, but versioning is where exam scenarios like "roll back to the previous version" and "promote the staging model to prod" actually live.
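To see why "roll back" is cheap when versioning is done right, here's a toy in-memory registry. It's a hypothetical illustration, not the Azure ML model registry API. Versions are immutable and never deleted. Promotion just moves a pointer, and rollback moves the pointer back to the previously promoted version.

```python
from dataclasses import dataclass, field


@dataclass
class ToyModelRegistry:
    """Hypothetical in-memory registry; not the Azure ML model registry API."""
    versions: dict[str, list[dict]] = field(default_factory=dict)
    promotions: dict[str, list[int]] = field(default_factory=dict)

    def register(self, name: str, metadata: dict) -> int:
        """Append an immutable version and return its 1-based number."""
        entries = self.versions.setdefault(name, [])
        entries.append(dict(metadata))
        return len(entries)

    def promote(self, name: str, version: int) -> None:
        """Make a registered version the production version."""
        if not 1 <= version <= len(self.versions.get(name, [])):
            raise ValueError(f"{name} has no version {version}")
        self.promotions.setdefault(name, []).append(version)

    def production_version(self, name: str) -> int:
        return self.promotions[name][-1]

    def rollback(self, name: str) -> int:
        """Re-point production at the previously promoted version.

        Nothing is deleted: the bad version stays registered for auditing.
        """
        history = self.promotions.get(name, [])
        if len(history) < 2:
            raise ValueError("no earlier promoted version to roll back to")
        history.pop()
        return history[-1]


registry = ToyModelRegistry()
# Suppose each new version is promoted as it lands, and v3 turns out to be bad.
for auc in (0.81, 0.84, 0.79):
    v = registry.register("churn-model", {"auc": auc})
    registry.promote("churn-model", v)
print(registry.production_version("churn-model"))  # 3
print(registry.rollback("churn-model"))            # 2
```

Keeping the bad version around, instead of deleting it, is what gives you the audit trail governance questions care about.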

Deployment targets include ACI for dev and testing when you just need something quick, AKS for production workloads that need serious scale and reliability, Azure Machine Learning endpoints in both real-time and batch flavors, and edge deployment via Azure IoT Edge when you're processing data on devices with limited or no cloud connectivity. You'll configure inference with entry scripts (scoring scripts that hold the actual prediction logic), dependency environments that match training exactly, and resource allocation and scaling settings that keep costs reasonable. Real-time endpoints are synchronous web services with authentication, using either key-based or token-based approaches. You should know how to test endpoints properly and handle requests and responses, including the error cases that will definitely happen. Batch inference is its own beast: batch pipeline components, scoring jobs scheduled to run overnight or weekly, and processing large datasets without pretending a real-time endpoint is a batch system, because that ends badly.
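Azure ML entry scripts follow an init()/run() shape. init() runs once when the scoring container starts, and run() handles each request. Here's a minimal stdlib sketch of that shape with basic error handling. The hard-coded linear "model," its weights, and the {"data": [...]} request format are hypothetical stand-ins. A real script would load a serialized model, typically from the directory in the AZUREML_MODEL_DIR environment variable.

```python
import json

model = None  # populated once by init()


def init() -> None:
    """Called once at container start.

    A real script would deserialize a model from AZUREML_MODEL_DIR; here a
    hard-coded linear function (hypothetical weights) stands in for it.
    """
    global model
    weights, bias = [0.5, -0.25], 1.0

    def predict(rows: list[list[float]]) -> list[float]:
        return [sum(w * x for w, x in zip(weights, row)) + bias for row in rows]

    model = predict


def run(raw_data: str) -> str:
    """Called per request with the raw JSON body; returns a JSON string."""
    try:
        rows = json.loads(raw_data)["data"]
        if not all(isinstance(r, list) and len(r) == 2 for r in rows):
            raise ValueError("each row must contain exactly 2 features")
        return json.dumps({"predictions": model(rows)})
    except (json.JSONDecodeError, KeyError, TypeError, ValueError) as exc:
        # Return a readable error instead of crashing the worker.
        return json.dumps({"error": str(exc)})


init()
print(run('{"data": [[2.0, 4.0], [0.0, 0.0]]}'))  # {"predictions": [1.0, 1.0]}
print(run('{"rows": []}'))                         # error payload: missing "data" key
```

Validating input shape inside run() is the boring part that saves you when a client sends the wrong payload at 3 a.m.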

MLOps shows up hard here. Azure ML pipelines and MLOps best practices include building reusable components that teams can share, publishing pipelines as REST endpoints for automation, versioning pipeline definitions so changes are tracked, and CI/CD automation through Azure DevOps or GitHub Actions with proper model testing and approval gates before production deployment. A/B testing and canary deployments get mentioned for gradual rollouts. Plus troubleshooting deployment failures with container logs, understanding containerization basics without being a Docker expert, and configuring traffic routing and scaling policies because production systems break in the most boring, predictable ways imaginable.
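Managed online endpoints let you split traffic across deployments by percentage, which is how a canary rollout works: most requests stay on the current model while a small slice hits the new one. The routing below is a hypothetical stand-in to show the idea. It hashes each request id into a 0-99 bucket and walks the cumulative percentages. Azure does the routing for you, and this isn't its internal algorithm. The blue/green names are just a common convention.

```python
import hashlib


def pick_deployment(request_id: str, traffic: dict[str, int]) -> str:
    """Route a request to a deployment according to percentage weights.

    Hashing the request id gives a stable bucket in [0, 100), so the same id
    always lands on the same deployment. Hypothetical scheme for illustration.
    """
    if sum(traffic.values()) != 100:
        raise ValueError("traffic percentages must sum to 100")
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    cumulative = 0
    for name, pct in traffic.items():
        cumulative += pct
        if bucket < cumulative:
            return name
    raise AssertionError("unreachable when percentages sum to 100")


traffic = {"blue": 90, "green": 10}  # current model vs. canary
counts = {"blue": 0, "green": 0}
for i in range(10_000):
    counts[pick_deployment(f"req-{i}", traffic)] += 1
print(counts)  # roughly 9000 blue / 1000 green
```

Widening the rollout just means shifting the percentages (90/10, then 50/50, then 0/100) once the canary's metrics hold up. Rolling back is the same move in reverse.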

Track, monitor, optimize, and responsible AI (weighted domain)

The 2026 skills version leans heavily into monitoring and Responsible AI in Azure Machine Learning, because regulators and customers don't care that your ROC curve is gorgeous if your model is biased or drifting. Interpretability covers Azure ML's built-in interpretability tools, global and local feature importance, and model-agnostic methods like LIME and SHAP that work across frameworks. Bias detection and mitigation is covered through fairness assessment across sensitive features like race or gender, Fairlearn metrics that quantify disparate impact, and fairness constraints during training that trade some accuracy for equity. Differential privacy techniques for protecting individual data points from reconstruction attacks show up too. Documentation matters more than most data scientists want to admit, because transparency requirements and audit trails are part of governance frameworks, not extra credit for overachievers.
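Fairlearn's demographic_parity_difference is defined as the gap between the highest and lowest selection rate (the share of positive predictions) across sensitive-feature groups. Here's a stdlib sketch of that definition with made-up predictions, just to show what the number means. In practice you'd call Fairlearn directly.

```python
from collections import defaultdict


def selection_rates(y_pred: list[int], groups: list[str]) -> dict[str, float]:
    """Fraction of positive predictions (1s) within each sensitive-feature group."""
    if len(y_pred) != len(groups):
        raise ValueError("y_pred and groups must be the same length")
    positives: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for pred, group in zip(y_pred, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(y_pred: list[int], groups: list[str]) -> float:
    """Largest minus smallest group selection rate (0.0 means parity)."""
    rates = selection_rates(y_pred, groups)
    return max(rates.values()) - min(rates.values())


# Made-up loan-approval predictions for two groups.
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
print(selection_rates(y_pred, groups))                # {'A': 0.75, 'B': 0.25}
print(demographic_parity_difference(y_pred, groups))  # 0.5
```

A value of 0.5 says group A is approved three times as often as group B. Whether that's acceptable depends on the context, which is exactly what the scenario questions probe.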

In production environments, monitoring means Application Insights integration for real-time telemetry, custom logging and telemetry that tracks business metrics not just technical ones, dashboards that stakeholders can actually read, and drift detection for both data drift and model drift with automated alerts when thresholds break. You'll need to know how to handle performance degradation without panicking, decide when retraining is necessary based on drift magnitude, set automated retraining triggers that don't retrain constantly for no reason, manage version updates across environments, and run approval workflows so you don't accidentally ship a broken model on a Friday afternoon and ruin everyone's weekend. That's happened more than you'd think, honestly.
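Drift alerts boil down to "compare production data to a baseline, score the difference, act on thresholds." Azure ML's drift monitoring computes its own metrics. The sketch below swaps in the Population Stability Index (PSI), a widely used drift measure, to show the shape of that logic. The 0.1 alert and 0.25 retrain thresholds are common industry rules of thumb, not Azure defaults, and the data is synthetic.

```python
import math


def psi(baseline: list[float], current: list[float], bins: int = 10) -> float:
    """Population Stability Index between a baseline sample and a current sample.

    Equal-width bins span the baseline's min..max; current values outside that
    range are clamped into the edge bins. A small epsilon avoids log(0).
    """
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        return [c / len(values) for c in counts]

    eps = 1e-6
    expected, actual = proportions(baseline), proportions(current)
    return sum((a - e) * math.log((a + eps) / (e + eps)) for e, a in zip(expected, actual))


def drift_action(score: float, warn_at: float = 0.1, retrain_at: float = 0.25) -> str:
    """Map a PSI score to an action using rule-of-thumb thresholds."""
    if score >= retrain_at:
        return "retrain"
    if score >= warn_at:
        return "alert"
    return "ok"


baseline = [i / 100 for i in range(100)]      # synthetic training-time feature values
current = [0.5 + i / 200 for i in range(100)]  # synthetic production values, shifted up
score = psi(baseline, current)
print(f"PSI={score:.2f} -> {drift_action(score)}")
```

Separating "alert" from "retrain" is what keeps automated retraining from firing on every small wobble in the data.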

Quick exam notes (people ask anyway)

How much does the DP-100 exam cost?

The price varies by region and currency, so check Microsoft's official exam page for your locale before budgeting.

What is the passing score for the DP-100 exam?

700 on Microsoft's scaled scoring model, which is a normalized scale rather than a percentage.

Is DP-100 difficult for beginners?

Medium-to-high if you lack hands-on Azure ML experience, and considerably easier if you've already built real deployments and pipelines before studying.

What are the best study materials and practice tests for DP-100?

Start with Microsoft Learn modules, then hands-on labs in your own subscription, then targeted DP-100 practice tests that match the current outline rather than outdated versions.

How do I renew the Microsoft DP-100 certification?

Through Microsoft's online renewal assessment on Microsoft Learn once you're eligible, usually starting six months before expiration. The process changes over time, so confirm the current rules before your expiration date sneaks up on you.

Conclusion

Wrapping up your DP-100 path

Real talk here.

Getting your Microsoft DP-100 certification? It's way beyond just passing some test. You're proving you can legitimately build and deploy machine learning solutions in Azure without torching budgets or breaking production environments. Spinning up a notebook is easy. Literally anyone does that. But designing and implementing an actual data science solution on Azure that handles real-world workloads with users breathing down your neck? Whole different beast.

The DP-100 exam costs around $165 in the US, with pricing varying by region. Not cheap, honestly, but that's pretty standard for Microsoft certs. You'll need a DP-100 passing score of 700 out of 1000, and yeah, that scaled scoring system can seriously mess with your head, though I'd say don't overthink it. What really matters is crushing the DP-100 exam objectives around Azure Machine Learning pipelines, model training and deployment in Azure, and the MLOps material that consistently trips candidates up.

Here's what I've witnessed firsthand: people treating this like some weekend sprint? They usually crash hard with the DP-100 difficulty. The exam throws scenario-based questions at you that flat-out assume you've configured compute clusters, optimized hyperparameters, and wrestled with model drift in real environments. Coming in without hands-on Azure ML experience means you're gonna struggle badly regardless of how many study guides you've consumed. The DP-100 prerequisites aren't technically strict or anything, but you really should know Python and basic ML concepts before starting.

I watched someone last month try speedrunning this thing with zero Azure experience, just straight memorization. Passed the practice tests, felt confident, then got absolutely wrecked by a question about pipeline parameter configurations. Sometimes there's no substitute for actually breaking things yourself.

Best DP-100 study materials? Microsoft Learn's free and thorough, which is great. Pair that with actual lab work in your own Azure subscription because reading about Azure ML pipelines and MLOps is completely different from debugging them at 2am when nothing works. DP-100 practice tests help too, especially for timing and question format, though don't just memorize answers like some robot.

Not gonna lie here, responsible AI in Azure Machine Learning is becoming a legitimately bigger deal on the exam. Microsoft's pushing governance and explainability super hard these days, so don't skip those sections thinking they're just fluff.

Quick thing.

Once you pass, remember the Azure Machine Learning certification requires DP-100 renewal every year through a free online assessment. Set a calendar reminder because letting it expire after investing all that work would be really painful.

If you're serious about prepping efficiently, the DP-100 Practice Exam Questions Pack gives you realistic scenarios that mirror the actual exam format. It's one of those resources where you immediately know if you're ready or need way more hands-on time.

Go build something. Deploy a model. Break it, fix it, then take the exam.