Databricks Certified Machine Learning Associate Exam Overview

What you're actually getting with this credential

Seriously good question.

The Databricks Certified Machine Learning Associate certification is an industry-recognized credential that validates you've got the chops to build end-to-end ML workflows on the Databricks platform. Honestly, it's designed for data scientists, ML engineers, and data engineers who need concrete proof they can handle the entire ML lifecycle, from messy data preparation (which, let's be real, is where everyone spends way too much time) all the way through model deployment and ongoing monitoring.

This isn't just Python knowledge. Or scikit-learn basics.

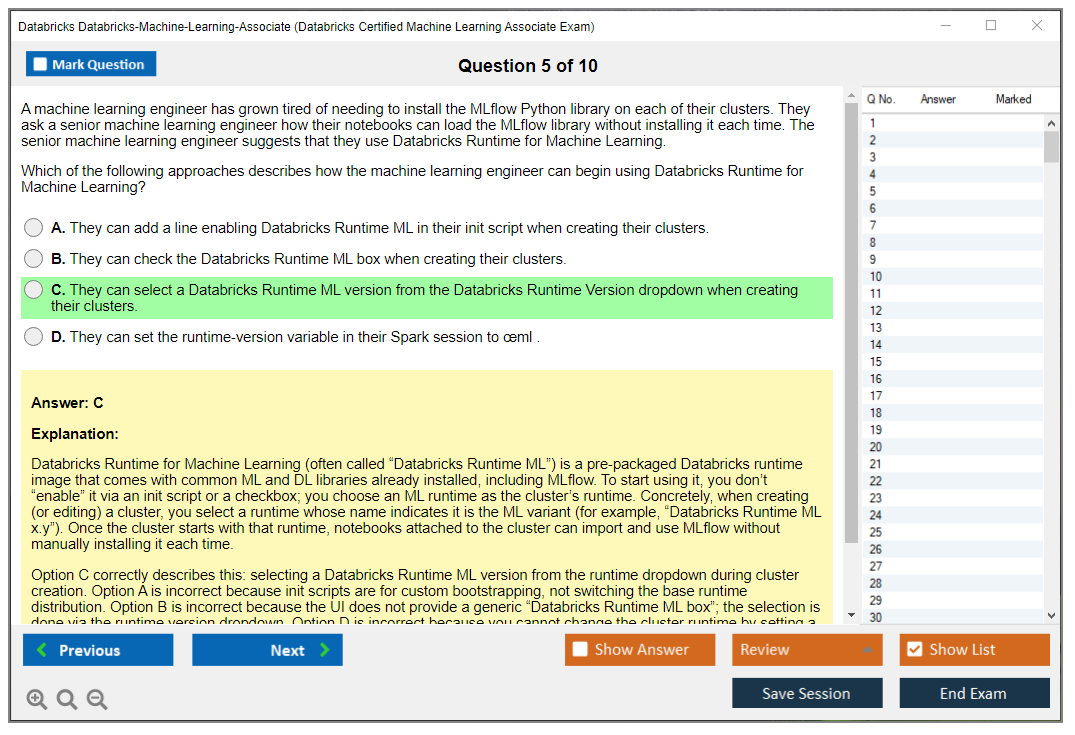

The exam focuses heavily on platform-specific tools like MLflow for experiment tracking, the Feature Store for managing engineered features, and Databricks ML Runtime for training at scale. You're demonstrating competency in notebooks, cluster configuration, and collaborative ML development in a unified workspace. It's the entry-level certification in Databricks' ML pathway. If you're serious about MLOps on this platform? You start here.

Who actually needs this thing

Junior to mid-level data scientists transitioning into the Databricks ecosystem make up a huge chunk of candidates. Got 6-12 months of hands-on Databricks platform experience and you're working with ML workflows regularly? You're probably ready. Data engineers expanding into machine learning workflows should absolutely consider this because it validates you understand both the data engineering foundations and the ML-specific tooling.

I've seen analytics professionals moving into predictive modeling roles use this to formalize their skills, which makes sense. Recent graduates with ML coursework but limited real-world experience? This gives you something concrete to show employers. Python developers specializing in ML operations and deployment can differentiate themselves too, especially if they're consulting or contracting, where credentials matter way more than they do in full-time roles.

One thing though. I had a colleague who thought she could skip straight to the professional level stuff because she'd been doing ML in other environments for years. Turns out the platform specifics matter more than you'd think. She ended up taking this one first anyway just to learn how Databricks does things differently from AWS SageMaker or Azure ML.

The skills this exam actually tests

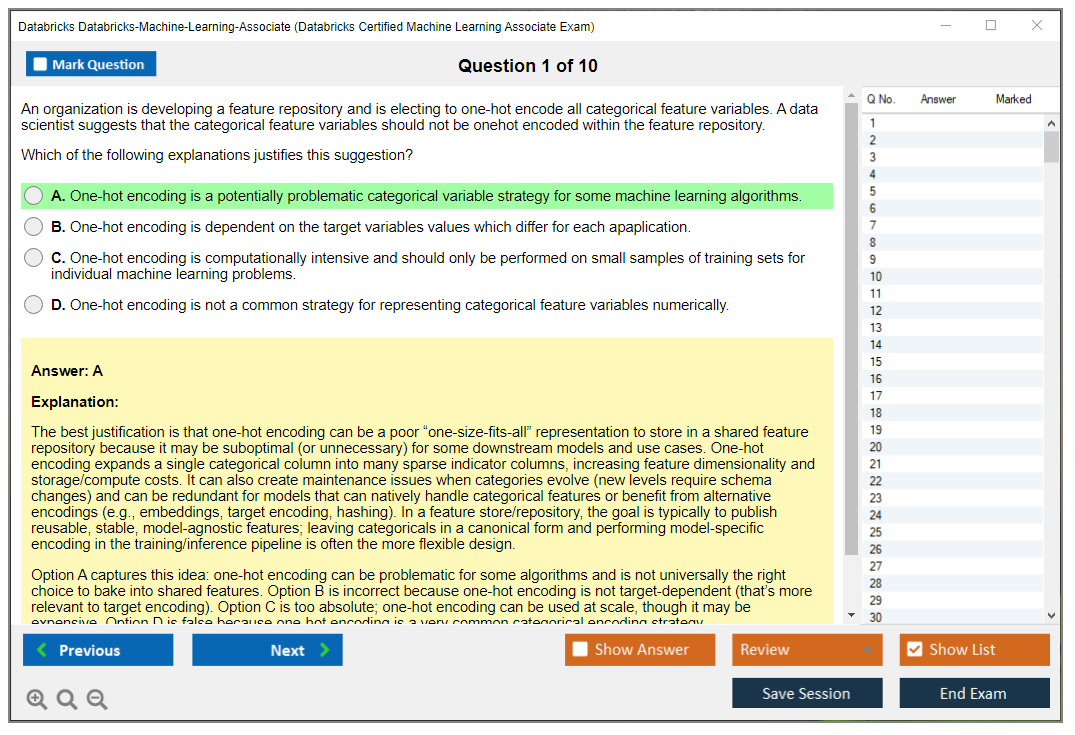

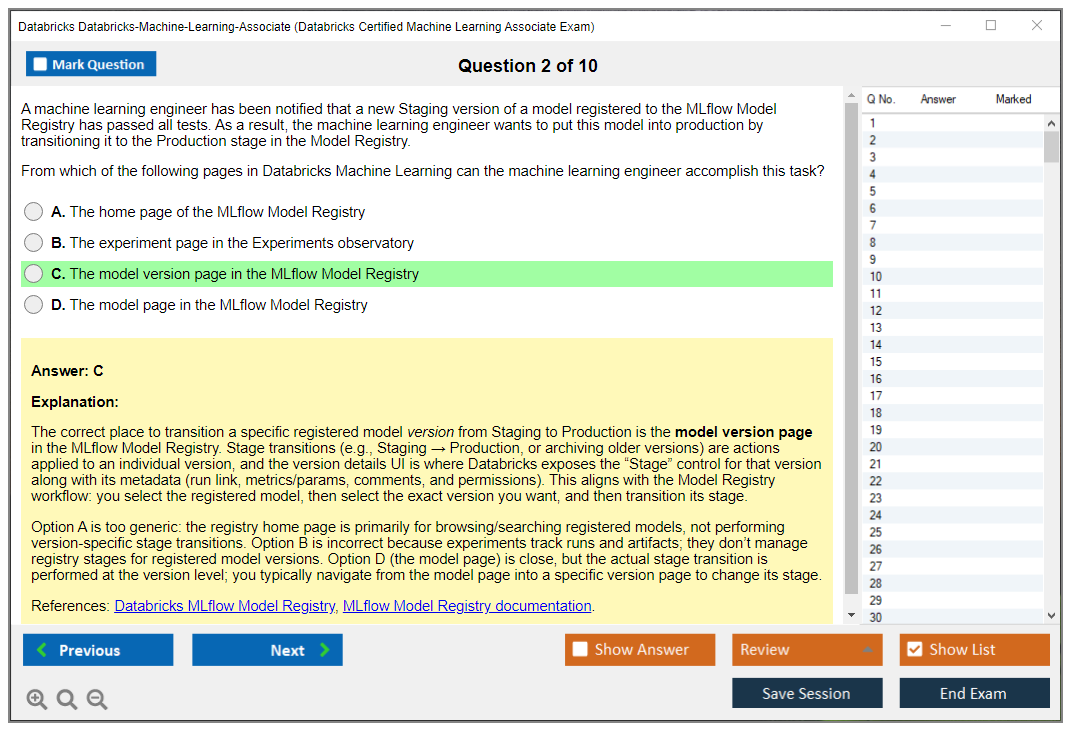

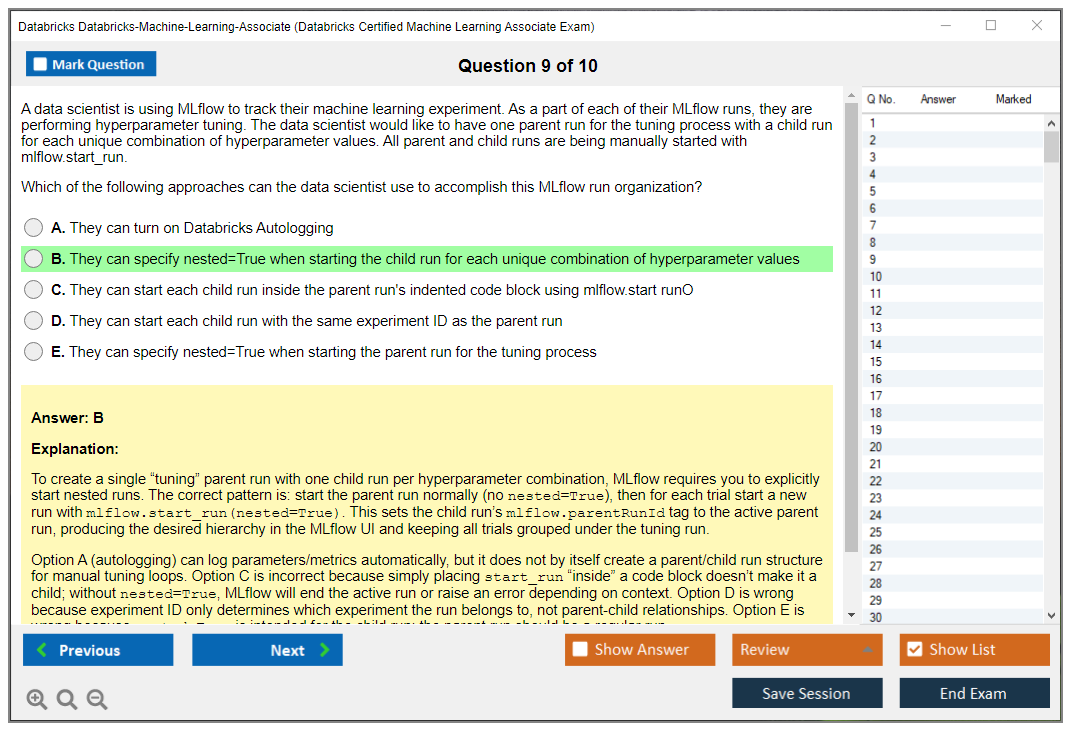

Building reproducible ML experiments using MLflow tracking is huge here. Not gonna lie, if you can't explain the difference between experiments, runs, and registered models, you'll struggle. Managing the model lifecycle from development to production deployment means understanding how models move through staging, production, and archival states, and when to use which deployment pattern, which is trickier than it sounds.
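To make the stage vocabulary concrete, here's a toy sketch in plain Python. It is not the MLflow API: `ToyRegistry` and its `promote` method are hypothetical names invented for illustration. The only things borrowed from the real registry are the stage labels (None, Staging, Production, Archived) and the common pattern of archiving whichever version previously held the target stage.

```python
from dataclasses import dataclass, field

STAGES = ("None", "Staging", "Production", "Archived")


@dataclass
class ToyRegistry:
    """Hypothetical in-memory stand-in for a model registry (not MLflow)."""
    name: str
    stages: dict = field(default_factory=dict)  # version number -> stage label

    def register(self, version: int) -> None:
        # New versions start with no stage assigned.
        self.stages[version] = "None"

    def promote(self, version: int, target: str, archive_existing: bool = True) -> None:
        if target not in STAGES:
            raise ValueError(f"unknown stage: {target}")
        if version not in self.stages:
            raise KeyError(f"version {version} is not registered")
        if archive_existing and target in ("Staging", "Production"):
            # Retire whichever other version currently holds the target stage.
            for v, stage in self.stages.items():
                if stage == target and v != version:
                    self.stages[v] = "Archived"
        self.stages[version] = target


registry = ToyRegistry("churn_model")
registry.register(1)
registry.register(2)
registry.promote(1, "Production")
registry.promote(2, "Staging")
registry.promote(2, "Production")  # version 1 is archived as a side effect
print(registry.stages)
```

The point of the toy is the side effect on the last call: promoting version 2 changes the state of version 1 as well, which is the kind of operational detail a scenario question can hinge on.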

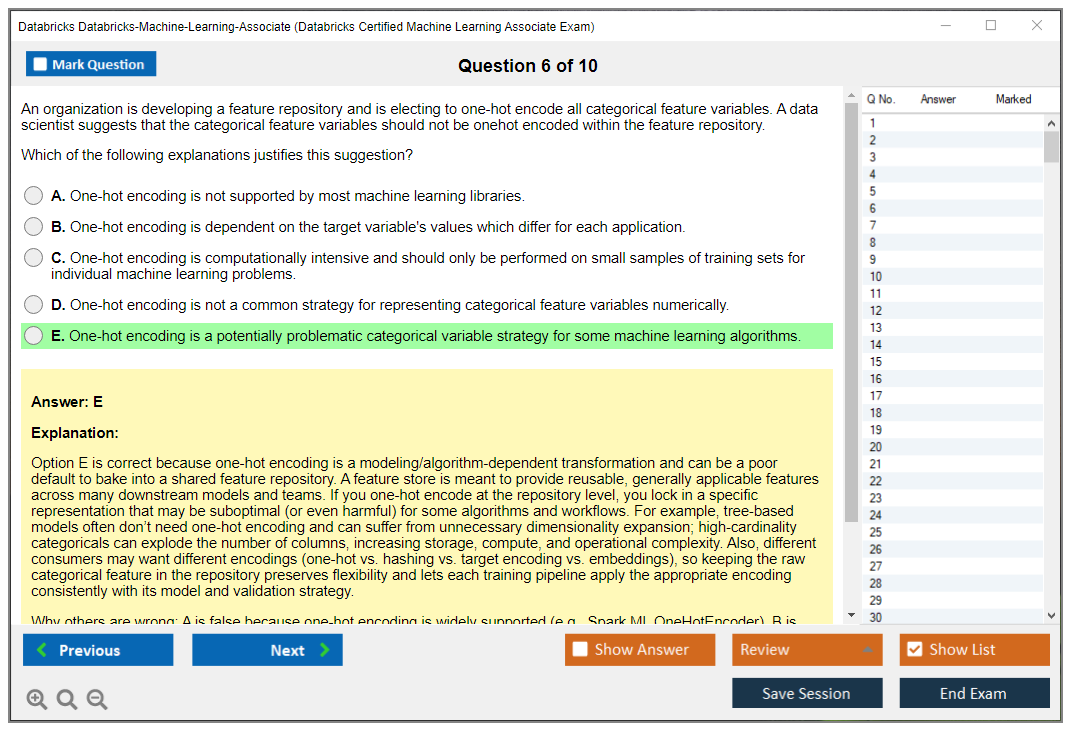

Implementing feature engineering pipelines with the Feature Store? The thing is, this trips up tons of people. You need to know when to use offline versus online feature lookup, how feature tables work, and why you'd bother with all this complexity instead of just engineering features in your training notebook (which, I mean, seems way simpler at first).

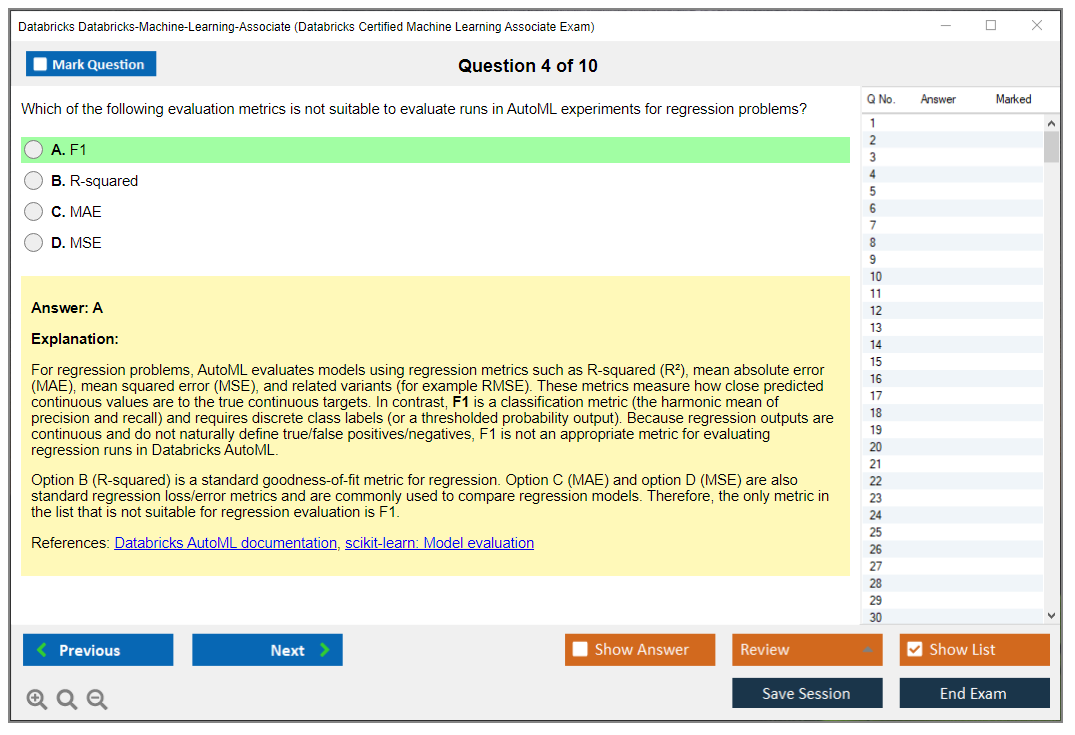

Training models using Databricks ML Runtime and AutoML covers both manual model development and automated approaches. Evaluating model performance requires you to pick appropriate metrics for classification versus regression tasks. You also need to understand cross-validation strategies and know when your validation approach is actually introducing data leakage, including the cases where you're accidentally peeking at test data. Deploying models for batch and real-time inference scenarios means understanding Model Serving endpoints, scheduled batch jobs, and the tradeoffs between them.
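One leakage pattern is easy to show in a few lines: computing preprocessing statistics on the full dataset before splitting. The sketch below uses only the standard library and made-up numbers; the `standardize` helper is a hypothetical name, and the same idea applies to any fitted transformer in a real pipeline.

```python
from statistics import mean, pstdev

# Illustrative values only; the last two rows play the role of held-out test data.
values = [10.0, 12.0, 11.0, 13.0, 50.0, 55.0]
train, test = values[:4], values[4:]


def standardize(rows, center, scale):
    """Shift and scale rows using the supplied statistics."""
    return [(x - center) / scale for x in rows]


# Leaky: statistics computed on train + test, so test rows shape the training features.
leaky_center, leaky_scale = mean(values), pstdev(values)

# Safe: statistics computed on the training split only, then reused on the test split.
safe_center, safe_scale = mean(train), pstdev(train)

train_scaled = standardize(train, safe_center, safe_scale)
test_scaled = standardize(test, safe_center, safe_scale)

print(f"leaky mean={leaky_center:.2f}, train-only mean={safe_center:.2f}")
```

The two means differ a lot here because the held-out rows look nothing like the training rows, which is exactly the situation where a leaky fit hides a problem you'd otherwise see at evaluation time.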

Best practices matter.

Applying ML best practices for governance and responsible AI shows up more than you'd expect. Model explainability, bias detection, and compliance tracking aren't just buzzwords anymore.

Why this certification actually matters for your career

It differentiates candidates in a competitive data science job market where everyone claims ML expertise. Which honestly gets exhausting to sift through. Platform-specific skills are what employers actively seek right now. Generic ML knowledge is table stakes, but showing you can implement MLOps on Databricks specifically? That gets interviews.

The earning potential increase for ML and data engineering roles with Databricks certifications is real. I mean, companies paying for Databricks licenses want people who can use the platform effectively from day one, not folks who'll spend months ramping up. This also opens doors to the Databricks Certified Machine Learning Professional certification, which is where compensation really jumps.

Consulting or implementing Databricks solutions? This provides instant credibility with clients who need reassurance you know the platform. The industry shift toward unified analytics and ML platforms means these skills stay relevant as more organizations consolidate their data stacks.

Where this fits in the bigger certification picture

This certification is the foundation for more advanced ML certifications in the Databricks ecosystem. It complements the Databricks Certified Data Engineer Associate and Databricks Certified Data Analyst Associate credentials. Many people hold multiple to show breadth across the platform, which employers actually appreciate.

You're building toward the Databricks Certified Data Engineer Professional or Professional ML track, basically. This certification bridges data engineering and ML engineering competencies, which is exactly where the market needs people right now. Most teams need folks who understand both data pipelines and model training, not specialists who only know one side.

It's recommended before pursuing solution architect certifications because you need hands-on ML implementation experience to design good architectures. Think of this as proof you've done the work, not just studied the theory.

Databricks Machine Learning Associate Certification Exam Cost and Format

What this cert is really about

The Databricks Certified Machine Learning Associate exam is your entry point for proving you can run an end-to-end ML workflow inside Databricks without getting lost in the product UI or tripping over the "where did my model go" parts. It maps pretty closely to day-to-day work: prep data, train, track runs, register models, and push something to inference. Not glamorous stuff, but it's what actually matters when you're trying to ship a model.

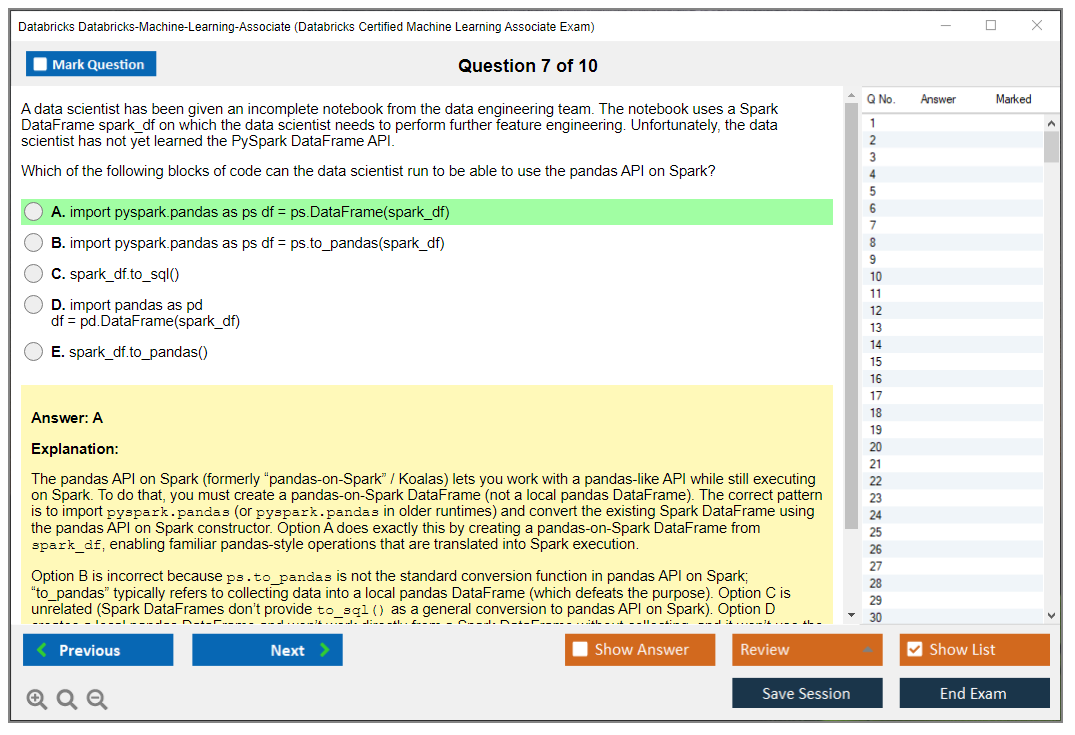

This isn't a pure theory test. You need to recognize what Databricks wants you to do with MLflow, how Feature Store fits, and when you should pick Spark ML vs Python ML libraries in Databricks. You'll see practical "what would you do next" prompts that feel like a senior engineer looking over your shoulder during a code review, which is annoying and useful at the same time. Once you get past the initial hump, though, it clicks. I had a colleague who kept mixing up the model registry stages and deployment endpoints until he actually broke something in staging. That's when it stuck. Sometimes the hard lessons are the ones that stay with you.

Who should sit for it

If you're aiming for the Databricks Machine Learning Associate certification because you touch notebooks, clusters, MLflow, or model deployment and inference on Databricks at work, you're the target. Data analysts trying to pivot. Junior ML engineers. Platform folks who keep inheriting ML pipelines and wondering how they got stuck with all this legacy code that nobody documented properly because, let's be real, documentation always gets skipped when deadlines hit.

Total beginners suffer. Some experience helps a lot.

Money stuff you should know

The Databricks-Machine-Learning-Associate exam cost is straightforward: the standard exam fee is $200 USD, and it can vary a bit by region and taxes depending on where you register. The nice part? There are no additional fees for first-time registration and scheduling, so you're not paying extra just to pick a time slot.

Retakes are where people get surprised. The retake policy is simple and kind of brutal: full exam fee per attempt, every time, and there are no bundled packages or discounts for multiple Databricks exams, so stacking certs doesn't unlock a cheaper cart checkout later.

A couple ways people pay less. Corporate training programs may include exam vouchers, and academic institutions occasionally offer subsidized pricing, so if you're at a university or a company with Databricks training credits, ask before you swipe your card. Payment is typically accepted via credit card or PayPal, and organizations can often use a purchase order.

What the exam looks like on test day

The format is consistent: 60 questions total, all multiple choice or multiple select, with a 90-minute time limit. No hands-on lab. No coding tasks. It's computer-based testing through Kryterion, and you're basically living in a proctored multiple-choice world for an hour and a half.

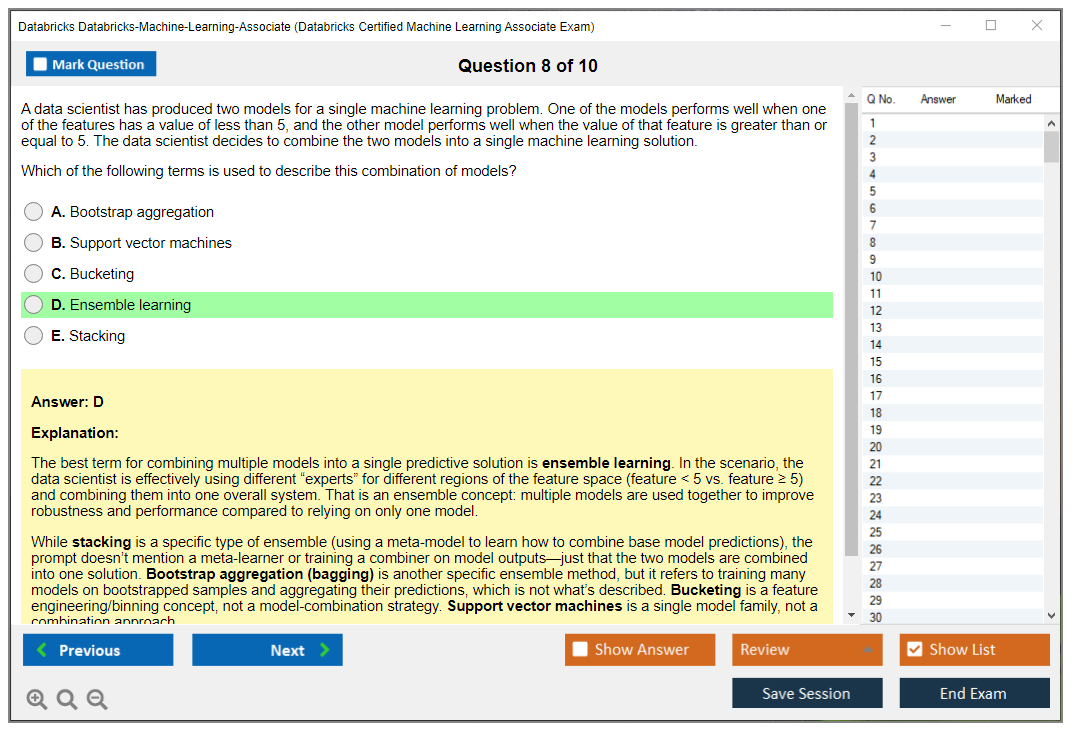

Questions are weighted equally and there's no penalty for wrong answers, so you should always guess rather than leave anything blank. Expect scenario-based questions that require practical knowledge application, including code snippets or architecture diagrams where you've gotta spot what's wrong in an MLflow model lifecycle on Databricks, or pick the cleanest approach for hyperparameter tuning in Databricks given some constraints.

Question types you'll actually see

Single-answer multiple choice is common. Pick one and move on.

Multiple-answer questions also show up a lot, and these are where people burn time because you second-guess yourself, then reread the scenario, then notice one word like "production" or "batch" that flips the answer. Annoying.

You'll also see troubleshooting questions identifying errors in ML workflows, plus best-practice comparisons between approaches. Concept checks appear too, especially around Databricks Feature Store concepts and MLflow components. No fill-in-the-blank. No essays.

Remote vs test center logistics

You can take it online proctored from home or office, or in person at a Kryterion test center, though locations can be limited depending on your area. For remote proctoring, you need a webcam and microphone, and you must install the secure browser before the exam starts. They'll record and monitor your screen through the whole session.

Quiet room required. No notes. No extra monitor. Photo ID verification happens before you begin, and if your setup is messy, you can lose time fast while the proctor fusses over your desk and lighting.

Registration and scheduling without drama

You create an account on Databricks Academy or Kryterion Webassessor, then purchase an exam voucher or register directly through the portal. Schedule at least 24 hours in advance, pick your date, time, and delivery method, and then watch for the confirmation email with instructions.

Do the system check about 15 minutes before start. Earlier is better. Rescheduling is usually allowed up to 24 hours before the appointment, so if life happens, move it before you get stuck paying again.

Retakes and waiting periods

There's no mandatory waiting period between the first and second attempt, which sounds nice until you realize it tempts people to rage retake without changing anything. After a second failed attempt, you hit a 14-day waiting period. You've also got a maximum of five attempts per 12-month period, and previous results don't carry over. No partial credit. No section exemptions. Every retake is the whole exam again, for the full fee.
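Here's a rough sketch of that retake policy as code, using only the numbers quoted in this article ($200 fee, 14-day wait, five attempts per 12 months). The function names are hypothetical, and one detail is an assumption: that the 14-day wait applies after the second failure and every later one. Check the current policy before relying on it.

```python
from datetime import date, timedelta
from typing import Optional

EXAM_FEE_USD = 200   # standard fee quoted above; regional pricing may differ
MAX_ATTEMPTS = 5     # per 12-month period
WAIT_DAYS = 14


def earliest_next_attempt(failed_attempts: int, last_attempt: date) -> Optional[date]:
    """Earliest date for the next attempt, or None if the yearly cap is reached."""
    if failed_attempts >= MAX_ATTEMPTS:
        return None
    # Assumption: the wait applies after the second failure and every later one.
    wait = WAIT_DAYS if failed_attempts >= 2 else 0
    return last_attempt + timedelta(days=wait)


def total_cost(attempts: int) -> int:
    """Every attempt is billed at the full fee."""
    return attempts * EXAM_FEE_USD


print(earliest_next_attempt(1, date(2025, 3, 1)))  # no wait after the first failure
print(earliest_next_attempt(2, date(2025, 3, 1)))  # 14 days after the second
print(total_cost(3))
```

Three attempts at the standard fee comes to $600, which is usually a better argument for one more week of prep than any pep talk.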

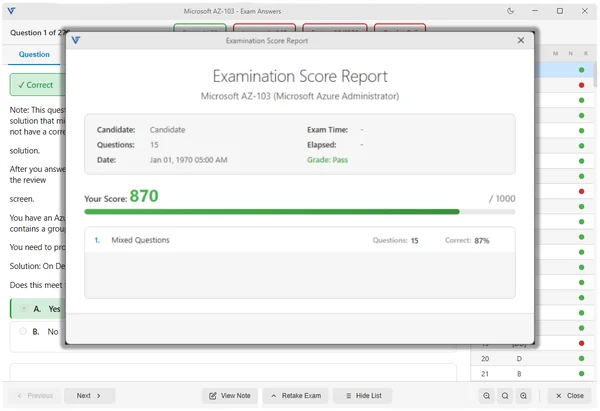

Scoring and the passing score question

People always ask about the Databricks ML Associate passing score. Databricks doesn't always make the exact number feel front-and-center in a way that helps your prep, so treat it like this: aim to be consistently correct across objectives, not "I'll ace MLflow and bomb deployment." Your score report typically tells you how you did by topic, and if you fail, that breakdown is what you should use to build a tighter Databricks ML Associate study guide plan.

What makes it harder than it looks

The difficulty is beginner to intermediate, but it's sneaky. The exam rewards familiarity with the platform: where settings live, what components are called, what the recommended workflow is, and how Spark ML vs Python ML libraries in Databricks changes scaling and production choices.

Common pain points: MLflow tracking vs registry, Feature Store basics, model deployment and inference on Databricks, evaluation choices and leakage gotchas. The Databricks ML Associate exam objectives expect you to think in workflows, not isolated commands, so a "what should you do next" question can punish anyone who only memorized terms.

Prep materials, practice tests, and renewal

For study materials, prioritize Databricks Academy content and the official docs around MLflow, Feature Store, model registry, and serving. Then build something small: a notebook that trains, tracks runs, registers a model, and simulates promotion across stages. That one project clears up a ton.

For a Databricks ML Associate practice test, look for questions that include scenarios and why answers are wrong, not just a dump of letter choices. My take? Do a diagnostic, review weak objectives, then do timed mocks to fix pacing.

As for the Databricks ML Associate renewal policy, check Databricks' current certification validity rules when you register since policies can change, but plan for recertification eventually and keep your skills current by staying active in the product.

Quick answers people google

How much does it cost? Usually $200 USD, plus regional variation. Passing score? Not always emphasized publicly, so prep to be solid across topics. How hard is it? Intermediate if you've got hands-on time. Best prep? Official training, docs, and a scenario-heavy practice test. Does it expire? Possibly, verify current validity and renewal requirements when you book.

Databricks ML Associate Passing Score and Scoring System

What you need to know about the 70% threshold

So here's the deal.

The Databricks ML Associate exam needs a 70% to pass, which breaks down to 42 correct answers out of 60 total questions. That's pretty standard for associate-level certs, but it still trips people up because they don't realize how weirdly specific some questions get about MLflow tracking URIs or Feature Store APIs. We're talking really granular implementation details here.
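The arithmetic behind that threshold, as a quick sketch. It uses the figures stated in this article (60 questions, 70%); `min_correct` is a hypothetical helper, and integer math is used so there's no floating-point rounding to worry about.

```python
def min_correct(total_questions: int, pass_percent: int) -> int:
    """Smallest number of correct answers that reaches pass_percent (integer ceiling)."""
    return -(-total_questions * pass_percent // 100)


needed = min_correct(60, 70)
print(needed, "correct needed;", 60 - needed, "misses allowed")
```

Framing it as 18 allowed misses is often more useful for pacing than the 70% figure, since it tells you how many questions you can afford to guess on.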

The scoring system doesn't just tally up your raw correct answers and send you on your way, though. They've got what's called a scaled scoring approach that converts your raw performance to a standardized scale ranging from 200 to 800, and you need a passing scaled score of 500 or higher to actually clear it. This whole conversion process accounts for minor difficulty variations between different exam versions, so someone taking the test in March isn't disadvantaged compared to someone taking it in September just because they happened to get a slightly harder question pool.

That cut score (the 70% threshold) comes from psychometric analysis combined with job task analysis where Databricks worked with actual ML engineers to determine what represents minimum competence. It's not arbitrary. The passing standard stays consistent across all exam versions and delivery methods, whether you're testing at a Kryterion test center or taking it online from your apartment. No curve exists based on how other candidates perform that day or month.

How they actually calculate your score

Raw score calculation? Straightforward. Count correct answers. Done.

But then the scaled scoring algorithm kicks in to normalize everything. All 60 questions carry equal weight regardless of difficulty level, which surprised me when I first learned that because you'd think the gnarly multi-step deployment scenario questions would count for more than a basic "what does mlflow.log_metric() do" question, right?

No penalty for guessing whatsoever. This matters when you're running out of time: click something on every remaining question, because an unanswered question gets marked incorrect anyway and a wrong guess costs you nothing extra. The scoring algorithm adjusts for exam form difficulty variations using item response theory and other statistical methods that, frankly, go over my head, but the point is they're trying to make sure a 500 scaled score means the same thing across all test versions.

Most people obsess over whether partial credit exists for multiple-select questions. It doesn't. You're either right or wrong. The exam doesn't reveal which questions you missed or what the correct answers were, which is frustrating but standard practice for professional certifications.

One time I watched a guy in the testing center spend like three full minutes on a single question about Delta Lake time travel integration with MLflow, and I kept thinking he was wasting time that could've been used to at least guess on other questions. Clock management beats perfection when you're dealing with equal-weight scoring.
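To put a number on clock management, here's a small pacing sketch based on the format described in this article (90 minutes, 60 questions). The `seconds_per_question` helper and the idea of reserving review time are illustrative assumptions, not anything the exam prescribes.

```python
def seconds_per_question(minutes: int, questions: int, review_minutes: int = 0) -> float:
    """Average seconds available per question after reserving review time."""
    return (minutes - review_minutes) * 60 / questions


print(seconds_per_question(90, 60))      # no review buffer
print(seconds_per_question(90, 60, 10))  # 10 minutes held back for flagged questions
```

With no buffer you get 90 seconds per question, so a three-minute stall on one item spends the budget of two.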

What shows up in your score report

You get your pass/fail status immediately when you finish the exam. Like, the second you click that final submit button, the screen changes and tells you whether you passed or not. If you passed, you'll see your scaled score (something between 500 and 800), and I've never met anyone who cared whether they got a 520 or a 780 because pass is pass.

Now, the really useful part of the score report? The domain-level performance breakdown.

They show you percentage correct across the major exam objective areas: experiment tracking with MLflow, Feature Store operations, model training and evaluation, deployment and inference, and data preparation workflows. This breakdown doesn't tell you exactly which questions you missed, but it clearly identifies whether you bombed the hyperparameter tuning section or crushed the Feature Store stuff.

Performance indicators typically show as "Below Target," "Near Target," or "Above Target" for each domain. When you pass, you get a digital badge issued to your Credly account, typically within 24 to 48 hours and often faster in my experience. The official certificate PDF becomes available in your Databricks Academy profile for download, and you can share that with employers or post it on LinkedIn or whatever.

When things don't go as planned

If you fail, you get immediate notification and a diagnostic report showing performance by domain. This is actually more detailed than what passing candidates get because Databricks wants to help you figure out where to focus your retake prep. The report identifies weak areas and sometimes includes generic recommendations like "review MLflow experiment tracking concepts" or "practice Feature Store table creation and retrieval."

You need to pay the full exam fee again to schedule your next attempt. Not gonna lie, that stings a bit, but that's how most certification programs work. The good news? A failed attempt doesn't lock you out: as long as you stay within the retake policy (the waiting period and the cap on attempts per 12 months), you can keep trying, and you keep access to the same study resources and practice materials you used before.

I always tell people to really study that domain breakdown if they fail. Don't just read everything again. Target your weak spots. If you scored 85% on data prep but only 45% on model deployment, then spend most of your retake prep time on deployment scenarios and inference patterns.
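One way to turn a domain breakdown into a plan is to split your study hours in proportion to how far each domain sits below a target. This is a sketch of that idea only: the 80% target, the proportional rule, the `allocate_hours` name, and the experiment tracking score are all assumptions for illustration (the 85% and 45% figures come from the example above).

```python
def allocate_hours(domain_scores: dict, total_hours: float, target: float = 80.0) -> dict:
    """Split total_hours across domains in proportion to each domain's gap below target."""
    gaps = {d: max(0.0, target - s) for d, s in domain_scores.items()}
    total_gap = sum(gaps.values())
    if total_gap == 0:
        # Nothing below target: spread time evenly as a light review.
        return {d: total_hours / len(gaps) for d in gaps}
    return {d: total_hours * g / total_gap for d, g in gaps.items()}


scores = {"data prep": 85, "experiment tracking": 70, "deployment": 45}
plan = allocate_hours(scores, total_hours=30)
for domain, hours in plan.items():
    print(f"{domain}: {hours:.1f} h")
```

With these inputs, data prep gets no dedicated time and deployment gets the bulk of the 30 hours, which matches the "target your weak spots" advice rather than rereading everything.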

Making sense of the performance metrics

The domain breakdown typically covers five or six major areas aligned with the exam objectives. You might see categories like "ML Workflows and Data Preparation," "Experiment Tracking," "Feature Engineering," "Model Training and Tuning," and "Model Management and Deployment." Each category shows percentage correct, which lets you compare relative strengths across different stages of the ML workflow.

This diagnostic information becomes valuable for building a targeted study plan, especially if you're comparing your performance against the Databricks Certified Machine Learning Associate Exam objectives. Some people also find it helpful to contrast their ML Associate performance with how they did on related credentials like the Databricks Certified Data Engineer Associate Exam to understand their overall Databricks platform knowledge.

Timeline for getting your credential

Passing scores are valid immediately.

Your credential becomes official the moment that pass notification appears. The digital badge usually hits your Credly account within 24 to 48 hours, though I've seen it arrive in as little as 4 hours. The certificate PDF shows up in your Databricks Academy profile typically within the same timeframe.

Employers can verify your certification status through the Databricks certification registry using your email address or credential ID, and this verification remains available throughout your certification validity period. For the ML Associate that's typically two years before you need to think about renewal. The credential verification system's actually pretty solid compared to some other vendors where you have to manually send PDF certificates around.

Databricks ML Associate Exam Difficulty and Preparation Timeline

What this certification actually proves

The Databricks Certified Machine Learning Associate exam is basically your ticket to proving you won't panic when shipping ML on their platform. It's platform-first, not theory-first. Honestly, nobody cares if you memorized backpropagation formulas. You've gotta be comfortable writing code in notebooks, spinning up the right cluster, tracking experiments, and moving models through MLflow Model Registry without accidentally promoting the wrong version to production like some kinda amateur.

Look, the Databricks Machine Learning Associate certification validates you can run an end-to-end ML workflow on the Lakehouse using tools Databricks actually expects you to know: MLflow, Feature Store concepts, and some decision-making about when to use Spark ML versus Python ML libraries. The thing is, it's not testing whether you can derive XGBoost from scratch or explain gradient descent to your grandma. It's testing if you can train it, track it, register it, and deploy it the Databricks way, while also not completely faceplanting on metrics, validation, or basic governance stuff that matters in real production environments.

Who should take it (and who will hate it)

Data scientists with some cloud ML experience usually find it moderately challenging. Nothing too wild. Traditional ML engineers tend to underestimate how platform-specific this gets, then they'll spend a week wrestling with MLflow model lifecycle on Databricks details they really thought they "already knew" from other tools. Data engineers transitioning to ML can pass (honestly, I've seen it plenty) but they often need to slow down and shore up evaluation metrics and overfitting basics, because the exam assumes you can pick the right metric for classification versus regression and interpret what it's actually telling you, not just build pipelines that move data around.

Complete beginners? Oof. Possible, sure. Also painful.

You know what's weird? I've noticed people who spend months obsessing over Kaggle competitions sometimes struggle more than folks who just built one boring production system. Competition ML and platform ML are like cousins who don't talk at family reunions.

Cost, format, and scheduling stuff people forget

The Databricks-Machine-Learning-Associate exam cost varies by region and local taxes, and Databricks occasionally tweaks pricing without making a big announcement, so definitely check the certification page before you commit your credit card. Retakes also matter more than people think, because if you're borderline-ready and you fail, you just bought yourself a second invoice, a bruised ego, and, if it's your second failure, a two-week wait before you can try again.

Question format's typically multiple choice and multiple select, timed, delivered via online proctoring or a testing provider depending on whatever Databricks' current setup happens to be. Registration's straightforward enough, but here's a scheduling tip nobody mentions: book a time when your brain actually works, not just when your calendar's empty. Do a quick system check the day before so you don't lose 20 precious minutes to webcam drama or audio issues while the proctor watches you sweat.

Passing score and scoring reality

People ask about the Databricks ML Associate passing score constantly. Databricks doesn't always publish a simple fixed number in a way that stays consistent across exam versions, so treat any exact score you see online as "maybe, at that specific time." What you should know? Scoring's usually not a casual vibe check where you can bomb MLflow questions and somehow "make it up" with generic ML trivia about decision trees.

If you fail, you typically get a score report with domain-level feedback. Not super detailed, I mean, it's not gonna tell you which exact questions you missed. Still useful though. You'll know if you got wrecked by Feature Store API syntax, model deployment and inference on Databricks patterns, or evaluation metrics interpretation.

How hard is it, really

How hard is the Databricks Machine Learning Associate certification? Moderate difficulty if you've had actual hands-on Databricks time, not just watched tutorial videos on double speed. The exam likes practical platform knowledge beyond theoretical ML understanding. That's where people seriously misjudge it, because they walk in with Coursera confidence and walk out realizing they never once registered a model, promoted stages, or debugged a distributed training job that failed because of memory issues or bad cluster configuration.

It's harder than vendor-neutral ML certifications because it's so Databricks-specific. You can't "general ML" your way through MLflow details, Feature Store concepts, or cluster/runtime choices that matter for performance. It's easier than the advanced ML Professional certification though, since the Associate exam doesn't expect you to be designing enterprise-grade systems from scratch or explaining architectural decisions to skeptical stakeholders.

Pass rates are rumored around 60 to 70% for adequately prepared candidates, which honestly tracks with what I see. Difficulty feels comparable to AWS's Machine Learning Specialty or Google Cloud's Professional Machine Learning Engineer, mostly because those also blend ML basics with "do you know our platform's weird little rules and when to use which service."

The pain points that actually burn time

MLflow experiment tracking gets people every single time. The mechanics seem easy at first glance, but the detail is what shows up on exam day. What gets logged where, how artifacts differ from metrics, how runs relate to experiments. And what "reproducibility" actually means in practice when your code's split across notebooks and scheduled jobs. Wait, did I log the environment or just the parameters?

Feature Store trips up a completely different crowd, usually engineers who think "it's just a database." Syntax, yes, but also workflow: creating features, writing them, serving them online versus offline. Understanding how training sets get built without leaking data across time or environments. Then you stack on the confusion around model registry stages and deployment options and suddenly you're second-guessing basic questions because "Staging versus Production" sounds simple until you're asked what changes operationally when you transition between them.
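The "without leaking data across time" part is easier to see in code. Below is a plain-Python sketch of a point-in-time lookup: for each label, use only the latest feature value recorded on or before the label's timestamp. This is the idea, not the Feature Store API; the data and the `feature_as_of` helper are hypothetical.

```python
from bisect import bisect_right

# Hypothetical feature history for one customer: (day, 30-day purchase count), sorted by day.
feature_history = [(1, 0), (10, 2), (20, 5), (30, 9)]
days = [day for day, _ in feature_history]


def feature_as_of(label_day: int):
    """Return the latest feature value recorded on or before label_day, else None."""
    idx = bisect_right(days, label_day)
    if idx == 0:
        return None  # no feature existed yet
    return feature_history[idx - 1][1]


# Label events observed on different days; each must only see features from its past.
label_days = [5, 20, 25]
training_rows = [(d, feature_as_of(d)) for d in label_days]
print(training_rows)
```

A naive join that always grabs the most recent value would hand the day-5 label a feature computed on day 30, which is future information the model won't have at inference time.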

Other common headaches, mentioned quickly:

- AutoML capabilities and limitations on Databricks

- Batch inference patterns, real-time serving patterns

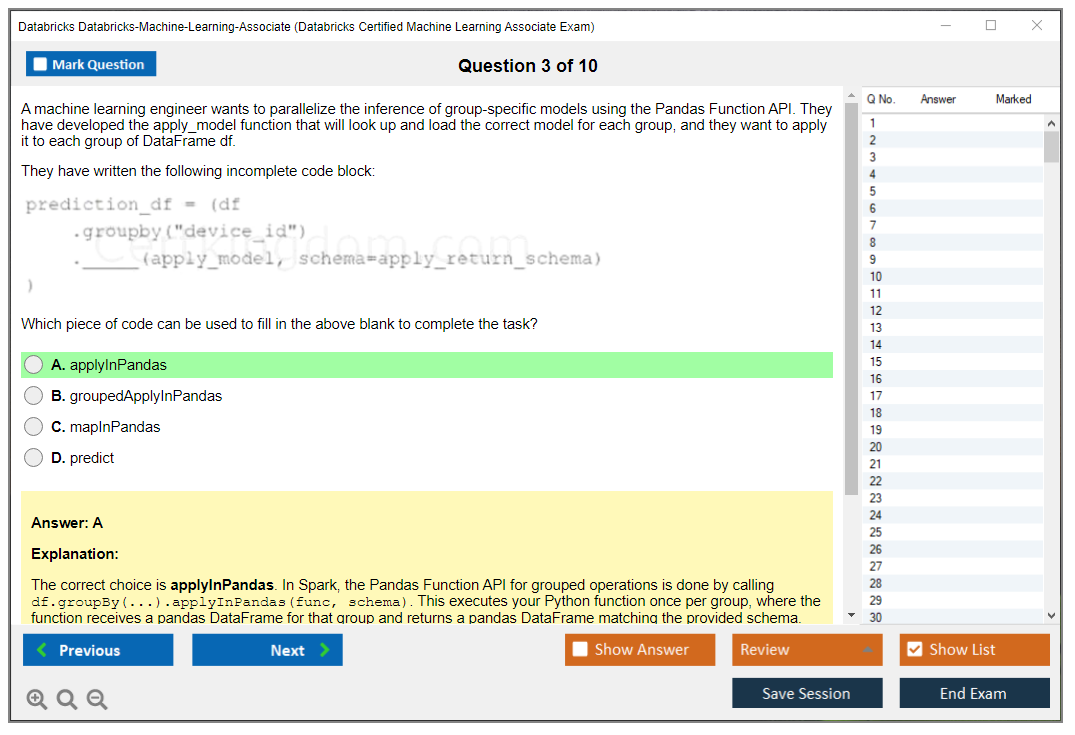

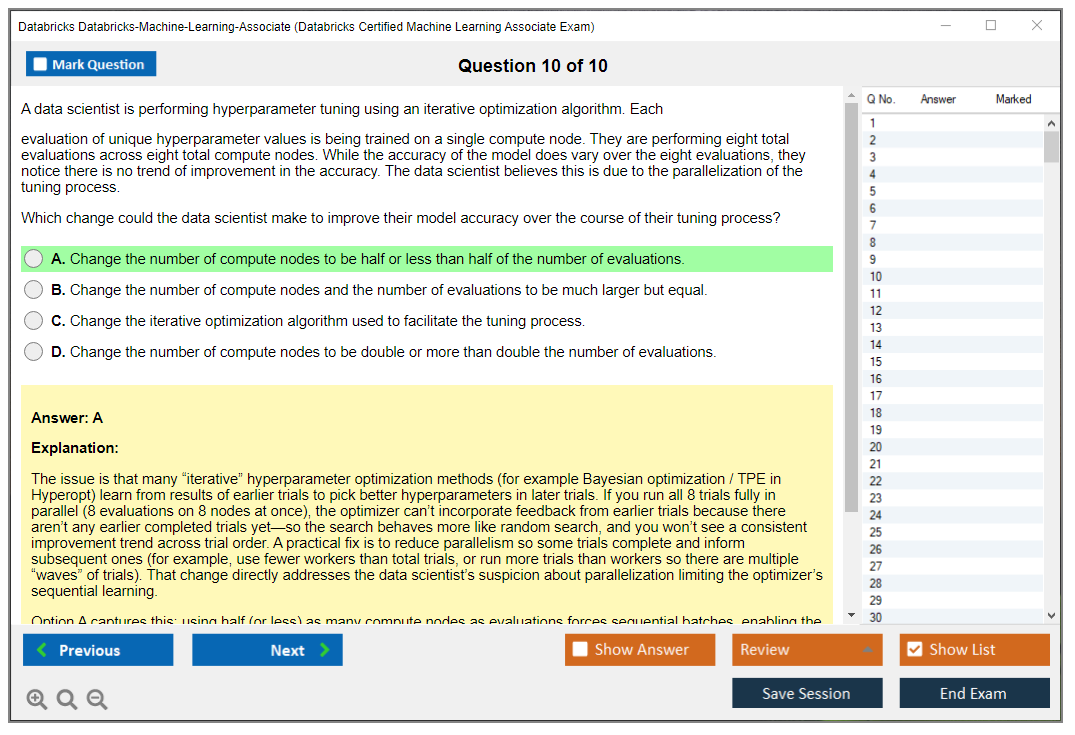

- Hyperparameter tuning in Databricks with Hyperopt inside Spark ML pipelines

- Troubleshooting distributed training errors that don't give helpful stack traces

- Managing model versions across lifecycle stages without losing track

- Knowing when to use Spark ML versus single-node Python libraries based on data size and compute requirements

Skills you need to be able to do, not just describe

You should be able to create and manage notebooks, configure clusters with ML Runtime, and implement MLflow tracking in Python code without desperately copy-pasting a mystery snippet from Stack Overflow. Logging parameters, metrics, and artifacts should feel normal. Boring even. Registering models to MLflow Model Registry should be muscle memory.

Feature work matters too, more than certification guides admit. Creating and accessing features in the Databricks Feature Store, then using them consistently for training and inference without accidentally introducing skew, is the kind of practical knowledge the exam rewards. Training with scikit-learn and XGBoost is common. Spark ML shows up too, and you need to know why you'd pick one over the other based on data volume. Evaluation's not optional: classification and regression metrics, interpreting confusion matrices and ROC curves, reading performance visualizations without guessing.
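For the evaluation piece, it helps to be able to compute the standard classification metrics by hand from a confusion matrix. The sketch below uses made-up counts for an imbalanced binary problem; `classification_metrics` is a hypothetical helper, though the formulas themselves are the standard definitions.

```python
def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Accuracy, precision, recall, and F1 from binary confusion-matrix counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Made-up counts for an imbalanced problem: 1,000 rows, only 50 actual positives.
m = classification_metrics(tp=20, fp=10, fn=30, tn=940)
print({k: round(v, 3) for k, v in m.items()})
```

Accuracy comes out at 96% while recall is only 40%, which is the classic reason accuracy is the wrong headline metric when the positive class is rare.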

Deployment shows up way more than people expect. Batch scoring versus REST API serving. What changes, what breaks, what you monitor.

A realistic prep timeline (by background)

If you already live in Databricks daily (6+ months of real use), budget 40 to 60 hours over 4 to 6 weeks. ML practitioners new to Databricks usually need 80 to 100 hours over 8 to 10 weeks because they're constantly pausing to learn the workspace, jobs UI, clusters, and the Databricks way of doing things instead of whatever they're used to. Data engineers transitioning to ML should expect 100 to 120 hours over 10 to 12 weeks, because the ML foundations and metric selection really slow them down in ways they don't anticipate.

Python devs with ML theory but zero platform time land around 60 to 80 hours over 6 to 8 weeks, assuming they don't waste time fighting the interface. Complete beginners? 150+ hours over 3 to 4 months. Honestly you should build at least one small project end-to-end instead of just reading a Databricks ML Associate study guide and hoping muscle memory magically appears.

Part-time? 10 to 15 hours per week for 8 to 10 weeks is a sane plan. Intensive's 20 to 25 hours per week for 4 to 5 weeks, but that only works if you can do actual labs, not just binge videos passively.
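If you want to sanity-check a schedule against those ranges, the arithmetic is just total hours divided by weekly pace, rounded up. A tiny sketch, with `weeks_needed` as a hypothetical helper and the hour ranges taken from the estimates above:

```python
import math


def weeks_needed(total_hours: int, hours_per_week: int) -> int:
    """Whole weeks required to cover total_hours at a steady weekly pace."""
    return math.ceil(total_hours / hours_per_week)


# Range quoted above for an ML practitioner new to Databricks: 80 to 100 hours.
for hours in (80, 100):
    for pace in (10, 15):
        print(f"{hours} h at {pace} h/week -> {weeks_needed(hours, pace)} weeks")
```

At a part-time pace of 10 hours a week, 80 to 100 hours lands at 8 to 10 weeks, which lines up with the part-time plan above.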

Study materials, practice tests, and the strategy that works

For Databricks ML Associate exam objectives, start with official docs and training around MLflow, Model Registry, Feature Store, and deployment options. Don't skip the boring documentation pages. Then build something small: a notebook that trains a simple model, logs to MLflow, registers it, moves stages, and runs batch inference. Add Hyperopt tuning if you want extra pain and really wanna understand distributed hyperparameter search.

Practice tests matter, but only if they explain why you missed things, not just mark you wrong. If you want a focused bank for repetition and timing practice, Databricks-Machine-Learning-Associate Practice Exam Questions Pack is $36.99 and fits well after you've done hands-on work. I mean, don't make it your only resource. That's a recipe for surface-level memorization. Use it as pressure testing, then revisit weak domains, then do timed mocks again, including during the final week for speed and stamina building.

Final-week checklist. Short stuff. Redo MLflow logging flows. Redo Model Registry transitions. Redo Feature Store basics. Then one last timed run using the Databricks-Machine-Learning-Associate Practice Exam Questions Pack.

Renewal and staying current

People also ask about the Databricks ML Associate renewal policy, which is fair. Databricks certifications typically have a validity period and may require recertification when the exam version changes or major platform updates ship, so check your certification portal for the current rules because stuff shifts constantly. Databricks ships features fast. If you don't touch MLflow and deployment workflows for a year, you will absolutely forget the fiddly bits that matter.

Quick FAQ style answers

How much does the Databricks Certified Machine Learning Associate exam cost? Depends on your region and local taxes, so check the current listing for the Databricks-Machine-Learning-Associate exam cost and retake terms before budgeting. What's the passing score? Databricks may not publish a stable fixed number publicly, so focus on mastering the objectives instead of gaming a number. How hard is it? Moderate with hands-on Databricks experience. Challenging if you're new to MLflow and Feature Store concepts, and still easier than the Professional tier certification.

Databricks ML Associate Exam Objectives and Skills Measured

What's actually measured on this certification

The Databricks Certified Machine Learning Associate exam isn't some checkbox exercise. It tests whether you can build, track, and deploy ML models on Databricks without constantly Googling basic MLflow commands or freaking out when someone asks about Feature Store. I've seen people with solid Python ML skills absolutely bomb this thing because, honestly, they underestimated all the platform-specific stuff that comes up.

The exam breaks down into six domains. They're balanced pretty well. You're not spending 50% of your time on one obscure topic, which is nice. Data preparation and workflows grab about 15-20%, which makes sense since that's where most ML projects actually start. Not with fancy neural nets like everyone imagines. Then MLflow experiment tracking takes up one of the biggest chunks at 20-25% because Databricks really, really wants you to know their ecosystem inside and out. I mean that's their whole business model so it tracks. Treat the percentages in this section as rough ranges and check the official exam guide for the current weights.

The data prep foundation everyone underestimates

Domain 1 covers ML workflows and data preparation. Not gonna lie, this trips up people who think "I already know pandas, I'm good." Yeah, but do you know when to use Spark DataFrames versus pandas on Databricks? That decision matters when you're dealing with datasets that don't fit in memory on a single node. And the thing is, that's most production scenarios.

You need to handle missing values intelligently. Outliers too. Data quality issues that crop up in real datasets, not the clean Kaggle stuff we all wish we worked with. The exam tests whether you understand data formats like Delta, Parquet, and CSV, and when each makes sense for ML workflows, because spoiler alert: they're not interchangeable. Delta's transactional guarantees matter when you're iterating on features and don't want to corrupt your training data mid-experiment.
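
To make "handle missing values and outliers" concrete, here's a minimal sketch using only the Python standard library. The readings are made up, and median imputation plus IQR clipping is just one reasonable choice, not the approach the exam prescribes. On Databricks you'd normally do the same thing with pandas or Spark DataFrames.

```python
import statistics

def impute_median(values):
    """Replace None entries with the median of the observed values."""
    observed = [v for v in values if v is not None]
    median = statistics.median(observed)
    return [median if v is None else v for v in values]

def clip_iqr(values, k=1.5):
    """Clip each value into [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [min(max(v, low), high) for v in values]

# Made-up sensor readings: two gaps and one obvious outlier (400.0).
raw = [12.0, None, 15.0, 14.0, 13.0, 400.0, None, 16.0]
filled = impute_median(raw)   # gaps become the median of the observed values
cleaned = clip_iqr(filled)    # the outlier is pulled back to the upper bound
print(cleaned)
```

One design note: in a real workflow the median and the clipping bounds should be computed on the training split only and then reused on validation and test data, otherwise the cleaning step itself leaks information.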

Creating proper train-validation-test splits sounds basic. Until you realize temporal data needs different handling or you'll leak future information. I once saw a team spend three weeks debugging why their time-series model performed beautifully in backtesting but crashed in production, only to discover they'd randomly shuffled their temporal splits like it was image classification data. Brutal lesson. The Databricks Certified Data Engineer Associate exam covers some overlapping data engineering concepts, but this one's laser-focused on the ML context specifically.
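
The leakage problem is easier to see in code. This is a rough sketch with hypothetical daily records and a hypothetical `temporal_split` helper: sort by time first, then cut, so every validation and test row comes strictly after the training rows.

```python
import random
from datetime import date, timedelta

def temporal_split(rows, time_key, train_frac=0.7, val_frac=0.15):
    """Sort by time, then cut into train / validation / test without shuffling."""
    ordered = sorted(rows, key=lambda r: r[time_key])
    n = len(ordered)
    train_end = round(n * train_frac)
    val_end = train_end + round(n * val_frac)
    return ordered[:train_end], ordered[train_end:val_end], ordered[val_end:]

# Hypothetical daily records, deliberately shuffled to mimic unordered storage.
start = date(2024, 1, 1)
rows = [{"day": start + timedelta(days=i), "y": i % 7} for i in range(100)]
random.Random(0).shuffle(rows)

train, val, test = temporal_split(rows, "day")
print(len(train), len(val), len(test))                      # 70 15 15
print(train[-1]["day"] < val[0]["day"] < test[0]["day"])    # True
```

A random shuffle followed by a split would scatter late dates into the training set, which is exactly the mistake in the story above.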

MLflow tracking is huge and unavoidable

Domain 2 on experiment tracking with MLflow is where many candidates spend most of their prep time. For good reason. This is 20-25% of your score, so it's substantial. You need to understand all four MLflow components: Tracking, Projects, Models, Registry. But Tracking gets the most attention in real work and definitely on the exam.

Logging parameters and metrics? Table stakes. But organizing experiments with nested runs, understanding parent-child relationships when you're doing hyperparameter sweeps with dozens of runs? That's where it gets interesting. Where people actually struggle. I mean, when you've got 50 experimental runs cluttering your workspace, you better know how to structure that metadata or you'll drown.

Autologging is convenient for scikit-learn and XGBoost. But you need to know its limitations. Sometimes you're logging custom metrics that autologging doesn't capture. Or sometimes it logs too much and you need to disable certain aspects. The exam will test whether you can retrieve logged information programmatically, not just stare at the UI hoping for insights. And honestly, managing experiment metadata and using search functionality effectively separates people who just "use" MLflow from those who actually master it in production environments.

Feature Store isn't optional anymore

Domain 3 focuses on Feature Store. 15-20% of the exam. This is newer territory for lots of ML folks who've been working in traditional environments where feature management is just chaos. The architecture and benefits aren't immediately obvious until you've dealt with feature reuse hell across multiple projects. Multiple teams stepping on each other's work.

Creating feature tables with proper primary keys and timestamps matters more than you'd think. Point-in-time lookups for temporal features prevent data leakage, which is absolutely critical for financial or time-series models where one mistake tanks your model's real-world performance. You need to understand both offline feature serving for batch training and online serving for real-time inference. They're different beasts. Totally different performance characteristics. Different infrastructure requirements.
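
If "point-in-time lookup" sounds abstract, this plain-Python sketch shows the idea. It is not the Feature Store API, just an illustration of the semantics with a hypothetical feature and integer day numbers as timestamps: for each training event you take the latest feature value known at or before the event time, never one computed later.

```python
from bisect import bisect_right

def point_in_time_lookup(feature_history, event_ts):
    """Return the latest feature value with timestamp <= event_ts, else None.

    feature_history must be a list of (timestamp, value) sorted by timestamp.
    """
    timestamps = [ts for ts, _ in feature_history]
    idx = bisect_right(timestamps, event_ts)
    return feature_history[idx - 1][1] if idx > 0 else None

# Hypothetical feature: a customer's 30-day spend, recomputed on days 1, 10 and 20.
spend_30d = [(1, 100.0), (10, 250.0), (20, 90.0)]

print(point_in_time_lookup(spend_30d, 15))  # 250.0 (the day-20 value is not visible yet)
print(point_in_time_lookup(spend_30d, 20))  # 90.0
print(point_in_time_lookup(spend_30d, 0))   # None (no feature value existed yet)
```

Joining on the key alone would hand the day-15 event the day-20 value, which is the leakage that timestamp keys are there to prevent.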

The Databricks Machine Learning Associate practice test at $36.99 covers Feature Store scenarios pretty thoroughly. That helped me internalize the workflow before sitting for the real exam. Feature lineage and governance sound boring but they're what makes Feature Store valuable in production environments where multiple teams share features and you need to track who's using what.

Model training hits all the major libraries

Domain 4 is the chunkiest. 25-30% of your exam. It covers model training, tuning, and evaluation across multiple libraries. You're expected to work with scikit-learn, XGBoost, LightGBM, and TensorFlow. Not expert-level in all of them, but competent enough to train models and understand their quirks on Databricks infrastructure.

Hyperparameter tuning with Hyperopt is big here. Configuring parallel search with SparkTrials so trials are distributed across your cluster is a Databricks-specific optimization you won't learn from generic ML courses or YouTube tutorials. Databricks AutoML is also tested, which honestly feels like a gimme section if you've played with it even once. But you need to interpret the generated notebooks. Understand what it's doing under the hood, not just click buttons.

Evaluation metrics span classification and regression. Precision, recall, F1, ROC-AUC for classification problems. RMSE, MAE, R² for regression. The exam wants you picking the right metric for the business problem, not just defaulting to accuracy because it sounds good. Cross-validation strategies, learning curves, detecting overfitting. These are fundamentals but they come up in Databricks-specific contexts with distributed data that behaves differently than your laptop experiments.
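
To see why "just use accuracy" gets people in trouble, here's a small standard-library sketch of the metrics named above. The labels and predictions are made up, and returning 0.0 when precision or recall is undefined is a convention chosen for this example, not a universal rule.

```python
import math

def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall and F1 for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    accuracy = sum(1 for t, p in pairs if t == p) / len(pairs)
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

def regression_metrics(y_true, y_pred):
    """RMSE, MAE and R^2 for numeric targets."""
    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    ss_res = sum(r * r for r in residuals)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "rmse": math.sqrt(ss_res / n),
        "mae": sum(abs(r) for r in residuals) / n,
        "r2": 1 - ss_res / ss_tot,
    }

# Made-up imbalanced labels: 5 positives in 100, and a model that always predicts 0.
y_true = [1] * 5 + [0] * 95
clf = classification_metrics(y_true, [0] * 100)
print(clf)  # accuracy is 0.95 while recall is 0.0

reg = regression_metrics([3.0, 5.0, 7.0, 9.0], [2.0, 5.0, 8.0, 9.0])
print(reg)
```

A model that never finds a single positive still scores 95% accuracy here, which is the whole argument for picking the metric from the business problem.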

Model management ties everything together

Domain 5 on Model Registry takes 15-20%. Domain 6 on deployment grabs another 15-20%. This is where the rubber meets the road. You're registering models, transitioning them through lifecycle stages: None, Staging, Production, Archived. Understanding model versioning and how to retrieve specific versions for inference becomes key when you've got multiple model iterations in production simultaneously.

Deployment options vary wildly. Batch inference on Spark DataFrames for scoring millions of records overnight. Real-time serving with REST APIs for low-latency predictions when users are waiting. The Databricks-Machine-Learning-Associate practice materials cover deployment patterns that show up repeatedly on the exam, like using pyfunc for custom model logic that doesn't fit standard frameworks.

Model monitoring in production isn't deeply covered. But you need awareness. A/B testing and canary deployments are mentioned enough that you should understand the concepts at minimum. If you're coming from a data engineering background and already passed the Databricks Certified Data Analyst Associate, you'll recognize some of the data quality monitoring overlap, which gives you a leg up.

How the domains connect in practice

What makes this exam tricky? Domains don't exist in isolation. A question about Feature Store might also involve MLflow tracking. A deployment scenario requires understanding model registry stages. The exam reflects actual workflows where you're loading data, engineering features, tracking experiments, registering the best model, and deploying it. All in one continuous pipeline that mirrors real-world projects.

The Databricks platform unifies these steps in ways that traditional ML stacks don't. That's why platform-specific knowledge matters so much here. You can't just wing it with generic ML knowledge and hope for the best.

Databricks ML Associate Prerequisites and Recommended Experience

The Databricks Certified Machine Learning Associate exam is basically Databricks asking, "Can you build and ship ML on our platform without getting lost?" Not theory-heavy. Not research-y. Practical stuff. Platform-first approach that doesn't mess around with academic rabbit holes you'll never use in production environments where people actually care about uptime and deployment velocity.

This is the Databricks Machine Learning Associate certification sweet spot. Early ML engineers. Data scientists who suddenly got told to "put it in production." Analytics folks moving into applied ML. Also engineers who can code but haven't lived inside Databricks yet. Some experience helps. A lot, honestly.

Who this is for (and who it isn't)

Look, if you've never trained a model, this will feel rough. If you've trained models locally but never touched clusters, MLflow, or a shared notebook workflow, it'll also feel rough, just in a different way, y'know?

You should take it if you're around the associate level and can already do basic ML tasks end to end, and you want a credential that maps to real job expectations on Databricks, not just "I read a scikit-learn tutorial once." Not gonna lie, people underestimate the platform bits. That's where a bunch of candidates slip and wonder what happened.

Cost and logistics you should know

People always ask about the Databricks-Machine-Learning-Associate exam cost. Real talk. Databricks sets pricing in their certification portal and it can vary by region and taxes, so check your local total at checkout before you commit. Retakes matter too. Not just emotionally, though that's valid. Financially.

Delivery is online proctored in most cases, and you schedule through the testing provider Databricks uses. Don't schedule at 10pm after a full workday, because honestly, that's how you miss easy questions you'd nail at 9am with coffee.

Scoring and passing expectations

The Databricks ML Associate passing score isn't something you should treat like a target to game. Short version: prepare well. Databricks may publish it, or they may keep it semi-opaque depending on the version of the exam and the provider, which is annoying but whatever. Either way, plan to be comfortably above the line instead of scraping by.

Also, exams like this often use scaled scoring, which means two different forms of the test can feel different but still be "fair." If you fail, you typically get a score report with domain feedback, which is annoying but useful for round two. Review it carefully.

Why the exam feels harder than people expect

Difficulty wise, I'd call it beginner-to-intermediate, but with a big asterisk attached. The hard part? It's switching contexts fast: Spark ML vs Python ML libraries in Databricks, MLflow tracking details, where a model artifact lives, how you register it, what "deployment" even means in Databricks terms, and what's happening behind the notebook magic when you run on a cluster instead of your laptop where everything just worked fine yesterday.

Common pain points show up around the MLflow model lifecycle, Feature Store concepts, hyperparameter tuning, and model deployment and inference on Databricks. You can "know ML" and still miss questions because you don't know the Databricks way, which is frustrating but real.

What the exam measures (high level)

The Databricks ML Associate exam objectives usually orbit around: prepping data in a Databricks workflow, tracking experiments, basic feature engineering, training and evaluating models, and then managing the model and doing inference. Pretty standard. Responsible ML and governance basics can appear too, especially around permissions, access, and not doing wild stuff with sensitive data that'll get your company sued.

You don't need to be a statistician with three PhDs. You do need to be competent and know where stuff lives.

Official prerequisites vs what you actually need

Here's the official side: there are no formal Databricks ML Associate prerequisites required to register. No gatekeeping nonsense. No "must take X first." No prior Databricks certification required. Academic credentials or degrees aren't mandatory either, which is refreshing in a world where everyone wants proof you attended the right school before you can click a button.

Now the recommended side is where reality kicks in hard. Databricks recommends 6 to 12 months of hands-on platform experience, and honestly that tracks, because you need enough time to bump into the everyday stuff like cluster errors, library installs, and workspace permissions, and then fix them without panicking or Slacking your lead engineer every five minutes. Familiarity with the Python programming language is necessary, not optional. A basic understanding of machine learning concepts is expected, like knowing what overfitting means. Completion of Databricks Academy courses is strongly recommended, and if you're trying to do this fast, those courses are probably the most direct path because they teach the platform patterns the exam likes instead of making you reverse-engineer everything from Stack Overflow threads.

I've seen people skip the Academy courses thinking they can wing it with general ML knowledge. Maybe one in ten pulls it off. The rest come back humbled and out an exam fee.

Platform familiarity you should have before test day

You should be comfortable working through the Databricks workspace and user interface. Sounds basic, right? It's not. People waste time because they don't know where things live and they're clicking around like it's a scavenger hunt.

Notebooks matter a lot here. Creating and managing notebooks for analysis and ML, attaching to clusters, switching runtimes, understanding what state lives where instead of assuming everything's saved automatically. Then there's cluster configuration and ML Runtime selection, which is one of those topics candidates skim, then get absolutely wrecked by a question about dependencies or performance or why something works on one cluster and fails on another for reasons that seem arbitrary but aren't.

DBFS comes up too. Using Databricks File System for storage, moving files around, knowing what paths mean when you're not on your local machine anymore. Delta Lake basics show up in real work and on the exam: working with Delta Lake tables and data versioning, having a clue why that helps when you're iterating on training datasets and don't want to retrain from scratch every time. You should also know collaboration basics like notebook sharing, comments, version control integration, plus workspace permissions and access management so you don't confuse "I can see it" with "I can run it" because those are very different things.

A few more you'll want at least casual familiarity with: dbutils for common tasks, configuring libraries and dependencies on clusters without breaking everything, job scheduling and workflow orchestration, Databricks SQL for quick exploration when you need answers fast.

Python skills you can't fake

Python is non-negotiable here. Proficiency in Python 3.x syntax and core libraries, plus experience with NumPy for numerical computing, will keep you from tripping on questions that are supposed to be easy layups. Also, you should be comfortable reading code quickly without needing to trace every line like you're debugging a legacy codebase from 2003.

Basic ML foundations matter too, obviously. Overfitting. Validation splits. Metrics like precision/recall or RMSE depending on the problem type and what you're actually trying to optimize. Pipelines as a concept, not just a buzzword. If you can't explain why you'd tune hyperparameters or how you'd evaluate a model honestly without just saying "accuracy go up," you'll struggle with the scenario-based questions.

Study materials and practice strategy that work

If you want a Databricks ML Associate study guide approach that doesn't waste your time, start with Databricks Academy and the official docs on MLflow, Feature Store, and model registry. Those are gospel for this exam. Then build one small project end to end: ingest data, create a Delta table, train a model, track runs in MLflow, register the model, run batch inference. One project with real clicks, not just reading about it.

For a Databricks ML Associate practice test, pick one that matches the published objectives and explains answers thoroughly. Explanations are the whole point, not just "correct answer is B" with no context. Do a diagnostic first, review weak areas without skipping the boring parts, then do timed mocks under exam conditions. In the last week, focus on speed, on reading carefully without overthinking, and on not second-guessing basic platform behavior you already know.

Renewal and validity notes

The Databricks ML Associate renewal policy depends on Databricks' current certification validity rules, which can change without much warning, so check your certification dashboard for the exact expiration and recertification options available to you. Don't assume it's lifetime. It usually isn't in this industry.

FAQ quick answers

Cost: check the portal for the current Databricks-Machine-Learning-Associate exam cost with your taxes included. Passing score: the Databricks ML Associate passing score may be published or presented as scaled results depending on exam form and provider policies. Difficulty: doable for associate level, but hands-on Databricks experience matters more than people think when they're planning study time.

Best prep? Databricks Academy plus docs plus one real project plus a good practice test with explanations. Prereqs: no formal prerequisites to register, but you want 6 to 12 months on the platform, solid Python skills, basic ML concepts before you sit down and pay money for this thing.

Conclusion

Wrapping up your prep

Okay, real talk. The Databricks Certified Machine Learning Associate exam? You can't wing it. It demands actual platform skills. Not just theory you crammed the night before from some dusty textbook nobody really reads anyway, but genuine hands-on experience with MLflow model lifecycle on Databricks, understanding when you'd grab the Feature Store versus building your own features from scratch, and deploying models that don't immediately crumble when production traffic hits them.

The Databricks-Machine-Learning-Associate exam cost hovers around $200. That's not exactly pocket change for an associate-level cert. But if you're serious about ML engineering on Databricks, this certification really opens doors. Hiring managers actually look for it now. Just remember the Databricks ML Associate passing score sits at 70%, and you've got 90 minutes to prove your knowledge across 45 questions.

Study timelines vary wildly. Some folks breeze through in six weeks of focused study. Others need three solid months. It really depends on whether you're already writing Spark ML pipelines daily or coming from pure Python ML libraries with little Databricks exposure beyond the basics.

The exam objectives hit everything. Hyperparameter tuning in Databricks? Check. Model deployment and inference on Databricks? Absolutely. Plus all that MLflow experiment tracking that consistently trips people up. You can't just read about it and expect things to click. You need to spin up notebooks, break things intentionally, fix them, maybe break them again. That's how concepts actually stick in your brain instead of evaporating by exam day.

For renewal? The Databricks Machine Learning Associate certification lasts two years, then you'll need to recert. Worth planning for now so you're not scrambling later when the deadline sneaks up on you. I once let a different cert expire and had to retake the whole thing from scratch, which was about as fun as it sounds.

Test yourself first. When you're ready to validate your knowledge before dropping that exam fee, a solid Databricks ML Associate practice test makes all the difference between walking in confident versus sweating every single question. The Databricks-Machine-Learning-Associate Practice Exam Questions Pack gives you realistic scenarios mirroring what you'll actually face. Not generic ML trivia that sounds impressive but means nothing. Databricks-specific workflows, gotchas with Feature Store concepts, and deployment patterns showing up constantly on the real exam.

Don't wait until you've paid for the exam to discover you're shaky on model evaluation metrics or confused about when to use Spark ML vs Python ML libraries in Databricks.

Test yourself early. Find the gaps. Fix them methodically.

Then book your exam slot.

You've got this.