Cloudera Certification Exams Overview

What these certifications actually validate

Cloudera certification exams have changed a lot since the early Hadoop days. We've moved from that old CDH (Cloudera's Distribution including Apache Hadoop) world into the modern CDP (Cloudera Data Platform) ecosystem, and the certs mirror that shift. What gets validated now is completely different from what existed even five years ago, when everyone was still figuring out whether Hadoop would even survive.

These exams validate real skills, not theoretical nonsense.

For administrators, you're proving you can manage, configure, and troubleshoot both traditional Hadoop clusters and the newer CDP environments. The CCA-500 and similar admin exams test whether you can actually keep clusters running, handle resource allocation, manage security policies, and diagnose issues when things go sideways at 2 AM.

Developer exams like CCA175 focus on building actual data processing applications with Spark and Hadoop. You're not answering multiple choice questions about what Spark does. You're writing code that transforms datasets, optimizes queries, and implements ETL workflows in live environments. Hands-on or nothing.

Data science fundamentals get checked through exams like DS-200, which covers analytics work and handling large-scale datasets. Then you've got specialist tracks for components like HBase (CCB-400) that dig deep into specific technologies. Really deep, like you're expected to know the internal architecture and optimization strategies that most engineers never touch. The CDP-0011 exam represents where Cloudera's headed now with modern data platform architecture spanning on-prem, hybrid, and multi-cloud deployments.

Who should actually care about these exams

System administrators managing big data infrastructure are obvious candidates.

Data engineers building ETL pipelines need these certs to prove they can actually implement the workflows they design. Anyone can draw a data flow diagram. Building one that processes terabytes efficiently is completely different. Spark developers creating distributed computing applications benefit from the CCA175 specifically since it tests real Spark coding skills under time constraints.

Data scientists working with large-scale datasets find value here too, though many data scientists I've worked with focus more on model building than infrastructure, which makes sense given their role but creates gaps. IT professionals transitioning to big data roles use these as career pivots. The certifications provide structured learning paths when you're coming from traditional IT backgrounds where distributed systems weren't really part of the conversation. Cloud architects implementing CDP solutions need to understand the platform deeply, which is where the newer CDP-focused credentials come in.

Here's something weird I've noticed. A lot of people chase these certs right after finishing bootcamps, thinking the credential alone will open doors. It doesn't work that way. Recruiters can tell pretty quickly if you've just crammed dumps versus actually worked with the tech.

What you need before starting

Entry-level certifications require basic Linux knowledge and some programming fundamentals. You can't walk in cold.

At minimum, you should be comfortable with command-line operations, understand basic scripting, and have conceptual knowledge of distributed systems. Intermediate certifications like CCD-410 expect hands-on cluster experience. Not just reading documentation, but actual time working with the technologies. I'm talking months, not weeks. Advanced certifications demand production environment expertise because the scenarios they throw at you reflect real-world complexity with all its messy edge cases, performance constraints, and those bizarre bugs that only surface when you've got three different services competing for the same resources at scale.

Specialist certifications require component-specific deep knowledge. You can't just skim the HBase documentation the night before and expect to pass CCB-400. These exams assume you've spent serious time with the specific technology.

How the exams actually work

Performance-based exams require actual task completion in live environments. This is what sets Cloudera apart from many vendor certs. You get access to a real cluster environment and have to complete specific tasks within the time limit. Configure this service. Write this Spark job. Optimize this query. Your work gets evaluated against specific criteria, not just whether you selected option B.

Multiple-choice knowledge assessments exist for some foundational certifications, but even these aren't trivial memorization exercises. Remote proctored exam options have become standard, which makes scheduling way easier than driving to testing centers. Though you need decent internet and a quiet space where you won't get interrupted.

Time limits vary by exam type. The performance-based developer exams typically give you two hours to complete a set of tasks. Sounds generous until you're actually in there troubleshooting why your Spark job isn't producing the expected output. Suddenly two hours feels like twenty minutes when you're stuck debugging a serialization issue. Scoring approaches differ too. Some exams score based on task completion percentage, others have minimum passing thresholds for different domains.

Why these certs still matter in 2026

Industry recognition is solid because Cloudera managed to position these as vendor-neutral yet vendor-backed credentials. Yeah, it's a Cloudera platform, but the skills translate across the big data ecosystem pretty smoothly when you're working with open-source components that show up everywhere. Proof of practical skills employers actually seek is the real value. Hiring managers know that someone who passed CCA175 can write functional Spark code under pressure.

Standing out in competitive job markets matters more now than ever. When you're competing against dozens of candidates with similar backgrounds, having performance-based certifications shows capability beyond resume claims. Professional development paths from beginner to expert levels give you clear progression. Start with fundamentals, move to specialized roles, advance to architect-level expertise.

Connection with modern cloud-native data platform trends? Critical.

The shift to CDP-0011 and similar credentials reflects where the industry's actually going: hybrid cloud, containerized workloads, data lakehouses that blend warehouse and lake architectures.

How they stack up against other credentials

Versus AWS, Azure, and Google Cloud data certifications, Cloudera certs offer different value. The cloud provider certs work great for platform-specific knowledge, but Cloudera focuses on portable skills that work across environments. Not gonna lie, having both types is ideal. Cloud provider cert for platform specifics, Cloudera for the underlying data processing technologies.

Pairing with Databricks and Snowflake certifications makes sense too. These aren't competing credentials so much as covering different layers of the stack. Databricks certs prove you know their specific platform implementation. Cloudera validates the foundational technologies. Unique focus on hybrid and multi-cloud data platform management is where Cloudera really stands apart. Most organizations aren't pure cloud, despite what the marketing slides say, and CDP addresses that reality.

Where things stand now

The transition from legacy CDH certifications to modern CDP-focused credentials is mostly complete. The older CCA-332 and upgrade exams like CCA-505 served their purpose but represent outdated technology stacks. Some older certifications remain useful if you're maintaining legacy environments. Plenty of companies still run CDH4 or CDH5 clusters that need administration, which creates this weird niche where those old certs actually have more practical value than you'd expect given how outdated the technology seems.

New certification tracks coming up for AI/ML and data lakehouse architectures show where Cloudera sees the market heading. The certification program's adapting to modern requirements rather than clinging to pure Hadoop heritage. That's actually smart, because the pure Hadoop model has been changing for years now toward more flexible, cloud-native approaches that barely resemble what we were doing in 2014.

Cloudera Certification Paths and Role-Based Roadmap

What these certifications actually prove

Cloudera certification exams are still one of the clearer "show me you can do the work" signals in big data. Not perfect, though. Still useful.

They validate different muscles, honestly. Admin track proves you can keep clusters alive at 2 a.m. Developer track proves you can ship Spark jobs that won't melt the YARN queue. CDP proves you understand where Cloudera's heading now (cloud and hybrid, whether your current employer likes that idea or not).

Look, if you're choosing between paths, start by asking what people actually pay you for: keeping platforms stable, writing pipelines, or designing where the whole thing runs.

Who should take them (and what you should already know)

If you've got Linux scars, you're ahead. Never tailed logs? You'll learn fast.

For admin exams, you want comfort with users, permissions, services, config files, and basic networking. Developer exams? You need fluency in at least one of Python or Scala, plus SQL that goes beyond SELECT star. For CDP, you want cloud concepts like IAM, networking, storage, and how managed services fundamentally change operational work.
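To make "SQL that goes beyond SELECT star" a little more concrete, here's a minimal sketch using Python's built-in `sqlite3` module. The `customers` and `orders` tables and their rows are made up for illustration, and SQLite is only a convenient stand-in here; the exams themselves run against Spark SQL or Hive, not SQLite.

```python
import sqlite3

# Hypothetical tables and rows, invented purely for illustration.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (id INTEGER PRIMARY KEY, region TEXT);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL);
    INSERT INTO customers VALUES (1, 'east'), (2, 'west'), (3, 'east');
    INSERT INTO orders VALUES (1, 1, 120.0), (2, 1, 80.0), (3, 2, 40.0), (4, 3, 300.0);
""")

# Join, aggregate, filter on the aggregate, and sort: the query shape
# that shows up constantly in pipeline work.
QUERY = """
    SELECT c.region, COUNT(*) AS order_count, SUM(o.amount) AS total
    FROM orders AS o
    JOIN customers AS c ON c.id = o.customer_id
    GROUP BY c.region
    HAVING SUM(o.amount) > 100
    ORDER BY total DESC
"""
rows = conn.execute(QUERY).fetchall()
for region, order_count, total in rows:
    print(region, order_count, total)
```

If you can read that query and predict which regions survive the `HAVING` clause without running it, you're roughly at the level this paragraph is describing.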

One sentence reality check: these aren't trivia tests.

Role-based roadmap that actually makes sense

People ask me "Which Cloudera certification should I start with?" and I mean, the honest answer is, start where your day job's closest. The fastest way to pass is practicing on problems you already touch. The fastest way to get a raise is mapping the cert to tickets your team avoids.

Below is the role-based breakdown, with common sequences and upgrade options for folks sitting on older CDH experience.

Administrator path (CCAH track)

This is the Hadoop administrator certification lane. It's for people who like reliability, repeatability, and knowing exactly which service is lying when metrics look fine but users are screaming.

Your core options here:

- CCA-500: Cloudera Certified Administrator for Apache Hadoop (CCAH) as the foundational admin certification

- CCA-410: Cloudera Certified Administrator for Apache Hadoop (CCAH) for CDH administration competency

- CCA-332: Cloudera Certified Administrator for Apache Hadoop (CCAH) covering cluster management essentials

Not gonna lie, the naming across generations can be confusing. But the intent's consistent: prove you can install, configure, monitor, and fix a Hadoop cluster under pressure, plus implement security without breaking every downstream job.

Skills validated typically land in five buckets. Cluster installation and configuration's the obvious one, but monitoring's where candidates get surprised because you need to correlate symptoms across services, not just restart daemons and hope. Troubleshooting's the real exam inside the exam, honestly. Security implementation matters too: Kerberos basics, authn vs authz thinking, and not leaving wide-open ports because "it's internal."

Upgrade paths exist if you already have older CDH background and want a clean "current enough" stamp:

- CCA-505: Cloudera Certified Administrator for Apache Hadoop (CCAH) CDH5 Upgrade

- Legacy option: CCA-470: Cloudera Certified Administrator for Apache Hadoop CDH4 Upgrade Exam

Career progression's pretty linear here. You start as Junior Hadoop Admin, then Senior, eventually Big Data Infrastructure Architect. The pay bump (aka Cloudera certification salary impact) usually shows up when you become the person who can design HA, capacity plans, and security models. Not when you merely pass the exam.

Typical prep timeline's 3 to 6 months depending on how much Linux and systems work you already do. If you're already on-call for a data platform, you can compress it because you're living the content daily. I knew a guy who prepped in six weeks but he was basically debugging HDFS permissions problems as his day job, so the exam felt like documentation review.

Developer path (CCDH track)

This is the Spark developer certification lane. It's for people who want to build data products and pipelines, not babysit services.

The flagship is CCA175: CCA Spark and Hadoop Developer Exam. It's the one hiring managers recognize most. I keep seeing it on job posts for data engineer roles even when the stack is "Spark on something else" because the skills translate.

Other developer exams in the CCDH family include:

- CCD-410: Cloudera Certified Developer for Apache Hadoop (CCDH) focused on MapReduce and Hadoop development

- CCD-333: Cloudera Certified Developer for Apache Hadoop for core development patterns

- Upgrade: CCD-470: Cloudera Certified Developer for Apache Hadoop CDH4 Upgrade Exam

What gets validated here's less about memorizing APIs and more about building correct transformations quickly. Spark application development, data transformation patterns, and working comfortably with RDD vs DataFrame vs Dataset tradeoffs are table stakes. SQL optimization sneaks in because even "Spark devs" spend a huge chunk of time making queries not run like absolute trash.

Prep timeline's usually 2 to 4 months for developers with Python or Scala background. If you already write Spark, you're mostly tightening up weak spots. Joins, partitioning behavior, shuffle costs, and reading explain plans without blinking. That's why this one ranks high when people ask about Cloudera exam difficulty ranking.
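To make "partitioning behavior" and "shuffle costs" a bit more concrete, here's a minimal pure-Python sketch of hash partitioning with one skewed key. It isn't Spark code: CRC32 is just a stand-in hash picked because it's deterministic, and the key names, record counts, and the 8-partition setting are all assumptions for illustration.

```python
import zlib
from collections import Counter

def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition with a stable hash (CRC32 is a stand-in)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions

def partition_sizes(keys, num_partitions):
    """Count how many records land in each partition after a shuffle by key."""
    counts = Counter(partition_for(k, num_partitions) for k in keys)
    return [counts.get(p, 0) for p in range(num_partitions)]

# Hypothetical data: one "hot" key dominates, the rest appear once each.
keys = ["customer_0"] * 9000 + [f"customer_{i}" for i in range(1, 1001)]
sizes = partition_sizes(keys, num_partitions=8)

print(sizes)
print("largest partition holds", max(sizes), "of", len(keys), "records")
```

Every record with the same key lands in the same partition, so the hot key drags one partition far above the others no matter how many partitions you add. That's the pattern behind a join or aggregation where one task runs long after the rest have finished.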

Career progression tends to go: Data Engineer, then Senior Spark Developer, then Big Data Solutions Architect. That last step's where you stop being judged on "can you code it" and start being judged on "did you design it so it survives scale, weird data, and changing requirements."

CDP path (modern Cloudera platform)

If you only take one thing from this post, take this: Cloudera's pushing CDP hard, and the CDP certs are the closest thing to future-proofing inside the Cloudera ecosystem.

The entry point is CDP-0011: CDP Generalist Exam. This is the Cloudera CDP certification roadmap starter because it tests platform understanding across cloud-native data management, hybrid deployment models, and the whole "unified data fabric" idea. Meaning you can run workloads across environments while keeping governance and security consistent, which is what most enterprises actually need rather than what they say they need in roadmap decks.

Skills validated here are more architectural than keyboard-mashing. You're looking at CDP architecture understanding, workload deployment concepts, security models, and data lifecycle management. Also, integration expectations matter: AWS, Azure, GCP, plus private cloud infrastructure, because a lot of companies are stuck in hybrid for years even if leadership says "cloud-first" in every meeting.

Timeline's shorter. 4 to 8 weeks is common if you already speak cloud, especially IAM and networking, because you're mapping known concepts to Cloudera's implementation rather than learning everything from zero.

Career positioning's clean: Cloud Data Engineer, CDP Administrator, Multi-Cloud Data Architect. And yes, this is where Cloudera certification career impact can be strongest right now because it fits with budget direction.

Specialist and data science paths (HBase, fundamentals)

These are for niche power moves. Not everyone needs them. Some teams absolutely do.

- CCB-400: Cloudera Certified Specialist in Apache HBase is for people who end up owning wide-column workloads. Schema design, performance tuning, and data modeling for HBase all matter here. The big mental shift is accepting that "tables" don't behave like your relational instincts want them to.

- DS-200: Data Science Essentials is more foundational: statistical analysis basics, machine learning fundamentals, and data exploration techniques. Great for career changers or analysts who keep getting pulled into "can you just build a model" requests.

Career niches here are real. NoSQL Database Administrator. HBase Performance Engineer. Big Data Analyst. If your org has HBase pain, the HBase specialist certification CCB-400 can make you the most important person in the room very quickly.

Recommended sequences by career goal

Infrastructure-focused professionals usually do CCA-332 or CCA-410 first, then add CDP-0011 once they want to talk hybrid and cloud with confidence.

Development-focused professionals should begin with CCA175, then layer on whichever component cert matches what they maintain. Maybe HBase, maybe more platform knowledge, depends on the job.

Career changers? Start lighter. DS-200 or CDP-0011 builds vocabulary and mental models before you commit to the heavier hands-on tracks.

Existing Hadoop pros should take upgrade exams like CCA-505 or CCD-470 if their resume screams "CDH4/CDH5 era" and they need a modern-ish validation without redoing everything.

Certification maintenance and recertification stuff

Validity periods vary by certification and generation, so always check the current policy for your specific exam family. Cloudera's changed how long credentials stay active across product shifts. Continuing education expectations are mostly practical: keep working with the platform, keep up with feature changes, and be ready to migrate when certifications get deprecated.

Migration paths matter. If you hold older CDH-based certs, the upgrade exams exist for a reason. Employers do understand that legacy Hadoop experience is still valuable, but they want proof you can operate in today's CDP-heavy world too.

What to expect on difficulty and prep

Difficulty's usually driven by three things: hands-on tasks, time pressure, and topic breadth. Admin exams punish "I know the theory" people. Developer exams punish "I can code, but I never optimize" people. CDP exams punish "I only know one environment" people.

Best Cloudera exam study resources are boring but effective: map objectives to official docs, build labs, break things on purpose, and take practice questions only after you can explain why the right answer's right. Practice tests help with pacing. Labs help you pass.

And the last FAQ I always get: CCAH vs CCDH differences? CCAH is about uptime and governance. CCDH is about data movement and compute logic. CDP-0011 is about platform direction and deployment thinking. Pick the one that matches the work you want more of.

Complete Cloudera Exam Catalog with Detailed Breakdowns

Developer certifications that actually matter

Look, if you're getting into big data development, the CCA175 Spark and Hadoop Developer is where most people start these days. And honestly? It's the one that'll actually help your career right now. This thing is performance-based, meaning you're not clicking through multiple choice questions like some boring HR compliance training. You get 8-12 actual problems to solve in a live cluster environment with a 120-minute timer ticking down.

Real Spark code. That's what you're writing. PySpark or Scala, your choice. The exam throws scenarios at you covering Spark SQL, DataFrames, RDD transformations, data ingestion from various sources, and working with file formats like Parquet, Avro, and JSON. You need about 70% of those scenarios completed correctly to pass. Sounds generous until you're 90 minutes in and debugging why your join isn't working the way you expected.
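Here's a quick back-of-the-envelope sketch of what "about 70%" means across the 8-12 problem range. It assumes every problem carries equal weight and that the cut-off is exactly 70%, neither of which this post confirms, so treat the numbers as a planning aid rather than an official scoring rule.

```python
def tasks_needed(total_tasks: int, pass_percent: int = 70) -> int:
    """Smallest number of correct tasks that reaches pass_percent.

    Uses integer ceiling division to avoid floating-point rounding surprises.
    Assumes equal weighting per task, which is an assumption, not a stated rule.
    """
    return (pass_percent * total_tasks + 99) // 100

for total in (8, 10, 12):
    need = tasks_needed(total)
    print(f"{total} tasks: need {need}, can miss {total - need}")
```

The practical takeaway is that the margin is thin: on an 8-problem exam you can drop two problems, and on a 12-problem exam only three.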

The CCD-410 developer cert focuses on MapReduce development and the traditional Hadoop ecosystem. Java-based MapReduce programming. HDFS operations. Hive queries, Pig scripts, and Sqoop data transfer. Not gonna lie, this one's losing relevance as everyone shifts to Spark, but if you're maintaining legacy systems or working somewhere that hasn't modernized their stack yet, it validates skills that are still paying bills. Same performance-based format with 8-10 scenarios over 120 minutes.

Then there's CCD-333, which is an earlier MapReduce-centric version covering CDH3/CDH4 platforms. Limited current relevance but recognized for legacy Hadoop expertise. Custom InputFormat/OutputFormat development, combiner functions, distributed cache usage. All that classic Hadoop stuff your senior engineers remember fondly while complaining about how complicated everything's gotten. The thing is, some companies haven't updated their infrastructure in years. I once worked at a place still running CDH3 in production because "the migration budget never got approved." Six years they said that. Anyway, this cert still shows up on job descriptions occasionally, especially at large enterprises with technical debt.

The CCD-470 upgrade exam was designed for professionals moving from CDH3 to CDH4 knowledge, focusing on API changes and migration considerations in a shorter 90-minute format. Historical value mostly.

Administrator track for infrastructure folks

The CCA-500 administrator certification is the foundational admin cert for CDH5. Different animal entirely. You get 60 questions over 120 minutes, all performance-based cluster management tasks. HDFS administration. YARN configuration. Service management, security implementation with Kerberos and Sentry. Cluster monitoring, troubleshooting when things break at 2am, backup and recovery procedures that you hope you never need but definitely will.

Resource management and capacity planning are huge parts of this. System administrators, DevOps engineers, infrastructure specialists: this is your exam. I've seen people with this cert command serious respect because cluster administration is one of those things that looks easy until you're the one responsible for keeping everything running. I mean, anybody can launch services, but understanding why a node's dropping out of the cluster at random intervals? That's different.

The CCA-410 version is the earlier admin cert for CDH4, with a similar format but platform-specific variations. High availability configurations, federation, advanced HDFS features that make your clusters actually production-ready. Commissioning and decommissioning procedures. Performance tuning. All that operational stuff.

CCA-332 covered fundamental cluster administration across earlier CDH versions. Installation, configuration, monitoring, maintenance workflows. Still recognized in some circles but superseded by newer versions. Mixed feelings here.

For upgrade paths, there's CCA-505 for admins moving from CDH4 to CDH5 knowledge. Shorter 90-minute format concentrating on delta knowledge. YARN improvements, HDFS enhancements, new service components. And CCA-470 was the CDH3 to CDH4 upgrade path, covering that MRv1 to YARN transition that was a big deal back then.

Modern platform and cloud-focused exams

Here's where things get interesting. The CDP-0011 Generalist Exam reflects Cloudera's cloud-first strategy and honestly, this is probably where you should be looking if you're starting fresh in 2025. Multiple-choice format with 60 questions in 90 minutes covering CDP architecture, public cloud deployments on AWS and Azure, private cloud options. Pretty thorough stuff.

Data catalog functionality. Security and governance. Workload management across different use cases. Data engineering, data warehousing, machine learning workload types all running on the same platform. The Shared Data Experience (SDX) concepts are central to how CDP works, and understanding that architecture matters more than memorizing specific commands. Like, way more.

Cloud architects, CDP administrators, modern data platform engineers: this exam validates that you understand where Cloudera's actually heading as a company. Basic cloud platform knowledge and data management concepts are prerequisites, but you don't need years of Hadoop experience to approach this one. That's refreshing.

Specialist certifications for niche expertise

The CCB-400 HBase specialist cert is for advanced practitioners who need deep HBase expertise. Performance-based with hands-on tasks covering HBase architecture. Schema design. Row key strategies that make or break your performance, column family optimization. All key decisions that'll haunt you later if you mess them up. Read/write performance tuning, compaction management, region splitting. All the operational aspects that separate someone who's used HBase from someone who actually knows HBase.
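One row key strategy worth having in your head is salting: prefixing a naturally sequential key with a stable bucket id so writes spread across regions instead of hammering one. Below is a minimal sketch in plain Python, not HBase client code. The bucket count, the `|` separator, the CRC32 hash, and the sample sensor keys are all assumptions picked for illustration.

```python
import zlib

NUM_BUCKETS = 16  # assumed bucket count; in practice tied to the region layout

def salted_row_key(natural_key: str, num_buckets: int = NUM_BUCKETS) -> str:
    """Prefix the key with a stable bucket id so sequential keys spread out."""
    bucket = zlib.crc32(natural_key.encode("utf-8")) % num_buckets
    return f"{bucket:02d}|{natural_key}"

# Hypothetical time-ordered keys that would otherwise sort next to each other.
natural_keys = [f"2024-01-01T00:00:{s:02d}|sensor-7" for s in range(60)]
row_keys = [salted_row_key(k) for k in natural_keys]
buckets_used = {rk.split("|", 1)[0] for rk in row_keys}

print(row_keys[:3])
print(len(buckets_used), "buckets used")
```

The tradeoff is on the read side: a range scan over the natural key now has to fan out across every bucket prefix, which is exactly the kind of design decision that haunts you later if you pick it without thinking about access patterns.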

Integration with MapReduce, Spark, and Phoenix for SQL access. Bulk loading techniques and data migration strategies. You get 8-10 practical scenarios in 120 minutes. NoSQL database administrators, HBase developers, performance engineers: this is specialized knowledge that commands premium rates. We're talking real money.

Data science fundamentals

DS-200 Data Science Essentials is entry-level, multiple-choice format focusing on foundational concepts. Statistical analysis basics. Data exploration techniques, visualization principles. Nothing too scary yet. Machine learning algorithm categories and when to use what. Data preparation and feature engineering fundamentals, model evaluation and validation approaches that'll make sense once you've struggled through a few projects.

Fifty questions. Ninety minutes. Integration with the Hadoop ecosystem for large-scale analytics is covered, but this is really about core data science concepts. Aspiring data scientists, analysts transitioning to big data, business intelligence professionals looking to expand their skillset: it's a good starting point before diving into more specialized machine learning or advanced analytics work.

Which path makes sense for you

Developer path? Start with CCA175 unless you're stuck maintaining MapReduce code, then CCD-410 might be necessary. Administrator path? CCA-500 is the foundation, but honestly, look hard at CDP-0011 if you're working with modern deployments. The industry moved to cloud and hybrid architectures, and the old on-premise-only knowledge matters less every year. That's just reality.

Specialist certifications like CCB-400 make sense when you're already working with those technologies and need validation, not as entry points. DS-200 works as a foundation if you're pivoting into data science but don't have the background yet.

Cloudera Exam Difficulty Ranking and What to Expect

Why Cloudera certs still matter

Look, Cloudera certification exams are this strange mashup of old-school Hadoop grit and modern CDP platform stuff. Some are straight knowledge checks. Others? They're the kind that make you sweat because you get dropped into a live environment and told to make things work. Fast.

If you're chasing job credibility, these exams can actually help. Hiring managers don't always know every vendor cert, but they absolutely understand "this person can administer Hadoop" or "this person can ship Spark code under pressure" when it's backed by a proctored exam. I've seen people land roles purely because they had the cert and could talk through what broke during their practice runs.

What these exams actually validate

Cloudera's historically been pretty strong at role validation. Their admin exams map to real operations work like services, configs, permissions, and triage. Developer exams map to building and debugging data pipelines, usually with Spark, sometimes with the older MapReduce-focused stuff.

Data science and CDP generalist exams land more on concepts, architecture, and platform literacy. Less terminal time. More "do you know what goes where" across the ecosystem, which is a different kind of hard because it rewards breadth and memory instead of muscle memory.

Who should take them (and who should not)

If you're already working as a Hadoop admin, the Hadoop administrator certification track is basically you formalizing what you do every week. If you're a data engineer doing Spark ETL, the Spark developer certification angle makes sense, especially with CCA175 (CCA Spark and Hadoop Developer Exam).

Newer folks can start with knowledge-based exams first. Not because they're "easy", but because you're not fighting the exam environment at the same time you're learning what YARN even is.

Never touched Linux? Pause. Get that sorted first.

Role-based roadmap that doesn't waste your time

Administrator path is the cleanest. You learn the platform, you learn how it breaks, you learn how to fix it. That points you at CCA-500 (Cloudera Certified Administrator for Apache Hadoop (CCAH)) or the similar CCA-410 depending on what your employer recognizes.

Developer path is more "build and ship". Spark, HDFS IO patterns, performance gotchas, and reading logs when your job fails for a dumb reason at 2 a.m. That's why CCA175 is the common pick.

CDP path? That's the modern platform view. The CDP Generalist Exam CDP-0011 isn't hands-on, but it hits architecture and services hard, and it fits teams migrating from on-prem CDH to CDP.

Specialist and data science path is for people who already live in a component. HBase folks know who they are. Same with legacy MapReduce devs.

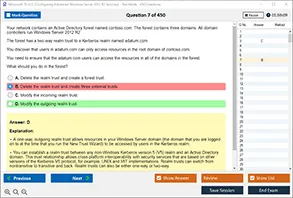

Formats: performance-based vs knowledge-based

Performance-based exams are the ones people complain about on forums, for good reason. You're given tasks, a clock, and a cluster. You either complete the objective or you don't. No partial credit vibes. It's closer to real work than most certs, but it also means you can lose time to stuff that's not your fault, like a slow service restart.

Knowledge-based exams are multiple-choice or scenario questions. They feel calmer. Still tricky though. You can get wrecked by two answers that both sound right unless you remember the exact behavior of a service, a parameter name, or the "Cloudera-approved" way of describing an architecture.

Factors that determine Cloudera exam difficulty

Time pressure is the big one. In performance exams, you're not solving one problem. You're solving a pile of them. The real skill is finishing enough tasks inside the window while double-checking you didn't break something else, which sounds obvious until you're 90 minutes in and your brain starts skipping steps.
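A rough pacing sketch helps here. It uses the 120-minute, 8-to-12-task format quoted for CCA175 elsewhere in this post; the 15-minute reserve for re-checking your work is my own assumption, not an exam rule, so adjust it to taste.

```python
def per_task_budget(total_minutes: int, tasks: int, reserve_minutes: int = 15) -> float:
    """Minutes available per task after holding back a review reserve."""
    if tasks <= 0 or reserve_minutes >= total_minutes:
        raise ValueError("need at least one task and a reserve smaller than the exam")
    return (total_minutes - reserve_minutes) / tasks

for tasks in (8, 10, 12):
    print(f"{tasks} tasks: {per_task_budget(120, tasks):.1f} min each")
```

Once you have a per-task number, the useful habit is treating it as a hard stop: if a task blows through its budget, move on and come back with whatever the reserve leaves you.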

Breadth vs depth matters too. Some exams go wide across the Hadoop/Spark ecosystem, which punishes shallow study plans because you can't hide from weak areas. Others go deep into one component, which punishes people who only did generic "Hadoop overview" learning and never got hands-on with the sharp edges.

Troubleshooting complexity is another divider. A task that says "configure X" is one thing. A task that says "fix why this job fails" is totally different, because now logs, configs, permissions, and service dependencies all collide. You need a repeatable method while the timer keeps running.

Memorization versus practical application is also real. Some exams want syntax, flags, and exact config keys in your head. Performance exams mostly want you to know how to find the right place to change something and validate it worked, but you still need mental reference for the high-frequency commands because searching around wastes time.

Documentation availability is huge. Some environments let you access certain docs, some don't. Either way you can't rely on Stack Overflow or random blog posts, so you need to practice with official docs and your own notes, not the internet safety net.

Environmental factors are the silent killer. Cluster responsiveness, weird tool versions, a UI you're not used to. Even keyboard layout stuff. Familiarity with Hue, Cloudera Manager, terminal tooling, and basic Linux flow can be the difference between passing and timing out. I once burned fifteen minutes because I didn't realize the test environment had a non-standard shell prompt that threw off my tab completion habits.

Difficulty ranking (beginner to advanced)

Here's my opinionated Cloudera exam difficulty ranking. It's not about ego. It's about how many ways the exam can surprise you.

Beginner: DS-200, CDP-0011

Intermediate: CCA175, CCA-500, CCA-410

Advanced: CCB-400, CCD-410

Legacy exams like CCA-505, CCA-470, CCD-333, and CCD-470 can be "advanced" in practice just because the material is older, less aligned with what people do daily now, and your normal modern study resources might not map perfectly.

Beginner level starting points

DS-200 is foundational and multiple-choice, so you're mostly being tested on concepts and basic applied knowledge, not your ability to wrestle with a live cluster. Prep time is usually 2 to 4 weeks if you do a structured plan and actually take notes instead of watching videos at 1.5x speed and calling it learning. Pass rates tend to sit around 60 to 70% for people who prepare like adults.

CDP-0011 is similar in that it's architectural knowledge without hands-on requirements, so it's a great "first Cloudera cert" if you're in a pre-sales, analyst, junior engineer, or platform role and you need to speak CDP fluently without pretending you're an operator.

Intermediate level core certs

CCA175 is performance-based and practical, but it's focused. You're mostly living in Spark land, writing code, reading outputs, and fixing what breaks. The hard part is speed plus correctness, because you can absolutely solve tasks slowly and still fail the exam. Feels brutal the first time you see it.

CCA-500 and CCA-410 are full admin exams. They cover documented topics, sure, but the scope is wide. The exam wants you to be comfortable with the platform, not just familiar. Expect 6 to 12 weeks of prep with hands-on practice if you're not already doing admin work daily. Pass rates often land around 50 to 60% because performance-based testing exposes gaps fast.

Advanced level specialist and legacy

CCB-400 is deep HBase. Not "I read about column families once." Real component expertise. You need to understand data modeling tradeoffs, region behavior, operational tasks, and diagnosing real issues. If you're not already an HBase person, 12 to 16 weeks is normal prep time. The pass rates people report, usually 40 to 50%, match the reality that specialists do better than generalists here.

CCD-410 is rougher than people expect because the MapReduce programming scenarios can get complex. Modern engineers are often more fluent in Spark than classic MapReduce patterns. That gap shows up fast under exam conditions.

What makes performance-based exams hard

You're working inside a clock. Multiple complex scenarios. Different environment than your laptop. No external resources or forums when something weird happens. You need to keep moving, keep validating, and make peace with skipping a task if it's turning into a time sink.

Speed matters. Accuracy matters. Stress management matters too, not gonna lie. A four-hour hands-on exam can turn your brain into mashed potatoes if you don't practice endurance and a checklist style of working.

What makes knowledge-based exams hard

Breadth. Lots of components. Similar-sounding answers. Questions that want the "best" answer, not a technically possible answer. Plus the classic trap of remembering syntax, parameters, and config options that you rarely type from memory at work because you normally check your runbooks.

Time is still a thing. You might have 50 to 60 questions. If you camp on the first ten trying to be perfect, you'll rush the last fifteen and miss easy points.
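The pacing math is worth doing before exam day. Here's a minimal sketch, assuming a 90-minute window and a 10-minute review reserve. Both figures are hypothetical, so plug in your exam's actual limit.

```python
def per_question_budget(total_minutes: float, questions: int,
                        review_minutes: float = 10.0) -> float:
    """Return the seconds available per question after holding back review time."""
    if questions <= 0:
        raise ValueError("questions must be positive")
    usable = total_minutes - review_minutes
    if usable <= 0:
        raise ValueError("review reserve leaves no time for questions")
    return usable * 60 / questions


# Assumed 90-minute exam; 50 and 60 questions are the range mentioned above.
for n in (50, 60):
    print(n, "questions:", round(per_question_budget(90, n)), "seconds each")
```

Under those assumptions you get roughly a minute and a half per question, so camping for five minutes on a single early question eats the budget of three others.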

Common pain points people report

Time management is the top complaint across performance-based exams, and I agree with it. People also get tripped up by unfamiliar tools and interfaces, like not knowing where a setting lives in a UI, or wasting time because they're not comfortable in the terminal.

Hands-on gaps hurt. So does insufficient practice with realistic scenarios. Another nasty one is troubleshooting under pressure without docs, especially when a fix requires a memorized parameter or a specific config key and you can't afford to guess wrong and create a new problem.

Strategies that actually help

Hands-on practice in something that mirrors the exam setup is the best fix, even if it's a small lab cluster and not a perfect clone. Time-box your practice sessions. Force yourself to complete tasks fast, then review what slowed you down, because that's what the exam punishes.
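One way to make the "review what slowed you down" step concrete is to log a time box and the actual time for each practice task, then sort by overrun. This is only a sketch. The `TaskResult` class, the task names, and the timings are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    name: str
    budget_min: float   # time box you set before starting
    actual_min: float   # time you actually spent


def overruns(results):
    """Return tasks that blew their time box, worst overrun first."""
    late = [r for r in results if r.actual_min > r.budget_min]
    return sorted(late, key=lambda r: r.actual_min - r.budget_min, reverse=True)


# Hypothetical practice session; task names and timings are invented.
session = [
    TaskResult("load CSV into Hive table", 10, 8),
    TaskResult("fix failing Spark job", 15, 27),
    TaskResult("change HDFS replication", 5, 9),
]
for r in overruns(session):
    print(f"{r.name}: {r.actual_min - r.budget_min:.0f} min over")
```

The task at the top of that list is the one to drill next.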

Focus on the common scenarios first, then fill gaps. Build a systematic troubleshooting flow you can repeat when stressed. Check logs, confirm configs, validate permissions, verify service health, rerun. Only then start changing "random stuff".
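That flow works best as a fixed, ordered checklist that stops at the first thing that looks wrong. Here's a rough sketch of the idea. The probes are placeholder lambdas, not real cluster checks. In a lab, each one would be whatever you actually do to inspect logs, configs, permissions, or service health.

```python
from typing import Callable, List, Optional, Tuple

Check = Tuple[str, Callable[[], bool]]


def first_failing_check(checks: List[Check]) -> Optional[str]:
    """Run checks in order and return the name of the first that fails, or None."""
    for name, probe in checks:
        if not probe():
            return name
    return None


# Placeholder probes: each lambda stands in for a manual or scripted inspection.
checks = [
    ("logs", lambda: True),
    ("configs", lambda: True),
    ("permissions", lambda: False),   # pretend a permission problem exists
    ("service health", lambda: True),
]
print(first_failing_check(checks))
```

The point of the fixed order is that you never touch a config before you've read the logs, even when you're stressed.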

Build a mental reference for critical commands and configs. Not everything. Just the high-frequency ones. Practice exams help for knowledge-based tests, and they also help you find weak areas early, which is the whole point of a study plan.

Quick answers people ask me

Which Cloudera certification should I start with? If you want low friction, start with DS-200 or the CDP-0011, then move into CCA175 or CCAH depending on your job.

What's the difficulty level of Cloudera certification exams? Beginner exams are manageable with 2 to 4 weeks. Performance-based exams get hard fast because execution speed and troubleshooting are baked into the scoring.

How long does it take to prepare for CCA175 or CCAH? Most people need 6 to 12 weeks if they're doing real labs, not just reading.

Do Cloudera certifications help with salary increases and promotions? They can, especially when they match your role and you can talk through what you learned in an interview. Not magic though.

What are the best Cloudera exam study resources? Official objectives, hands-on labs, and realistic mock tasks. Courses help, but only if you touch the keyboard.

Study Resources and Preparation Strategies for Cloudera Exams

Where to actually start with study materials

Look, the official Cloudera documentation is your baseline. Everything else? Supplementary at best. Their exam blueprints break down exactly what topics show up on tests like the CCA175 or CCA-500, and honestly not enough people read these carefully before they start cramming random tutorials that may or may not even be relevant.

The exam objectives document for each certification maps out specific skills you've gotta demonstrate. For the CDP-0011 exam, you'll see they want you understanding data lifecycle management and security governance, while something like CCD-410 focuses heavily on MapReduce patterns and Spark transformations. Reading the blueprint first saves you from studying things that won't even appear on your exam. I mean, why waste hours on topics they don't test?

Cloudera University offers instructor-led training. Pretty expensive, honestly. But thorough, I'll give them that. Their courses align directly with certification paths, which matters more than people realize. The on-demand video courses are more budget-friendly and you can pause when concepts don't click immediately, which happens a lot with distributed computing topics. I've seen people blow through video courses in a weekend and then wonder why they fail exams because they didn't actually absorb the material. Just watched it play while checking their phones.

Practice questions that actually matter

Official practice exams from Cloudera give you the closest approximation to real test conditions. Not gonna lie, third-party practice tests vary wildly in quality and sometimes test knowledge that's completely irrelevant to what Cloudera actually cares about, like obscure configuration parameters nobody uses in production environments anymore.

Community forums? Tons of discussions about specific exam scenarios. The Cloudera Community has threads where people share what surprised them during exams, what topics showed up more than expected, and what they wish they'd studied harder. Knowledge base articles often explain edge cases and troubleshooting scenarios that appear in performance-based exam sections. The thing is, these real-world problems mirror what you'll face on test day.

Cloudera's blog regularly publishes posts about best practices for cluster administration, Spark optimization techniques, and CDP migration patterns. These aren't explicitly labeled as "exam prep" but they reflect the current thinking that shows up in exam questions. When they publish something about YARN resource management tuning, you can bet that topic appears on administrator certifications. Actually, I remember spending an entire weekend once just reading through their blog archives, which sounds boring but I stumbled across a post about memory tuning that explained executor allocation better than any official course I'd taken.

Getting your hands dirty with real environments

You need actual cluster practice. Period. Reading about HDFS replication factors is completely different from configuring them, watching them fail, and troubleshooting why your data blocks aren't distributing correctly across nodes. Theory only gets you so far.
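As a starting point for that kind of practice, here's a small sketch that builds the two commands you'd typically reach for: `hdfs dfs -setrep` to change a replication factor and `hdfs fsck` to see where the blocks actually landed. The lab path is hypothetical, and the script only executes the commands when an `hdfs` client is on your PATH. Note that the optional `-w` flag waits for replication to finish, which can hang on a single-node cluster if you ask for more replicas than you have DataNodes.

```python
import shutil
import subprocess


def setrep_cmd(path: str, replicas: int, wait: bool = False) -> list:
    """Build the argv for changing a path's replication factor."""
    if replicas < 1:
        raise ValueError("replication factor must be at least 1")
    cmd = ["hdfs", "dfs", "-setrep"]
    if wait:
        cmd.append("-w")  # block until the target replication is reached
    return cmd + [str(replicas), path]


def fsck_cmd(path: str) -> list:
    """Build the argv for reporting files, blocks, and block locations."""
    return ["hdfs", "fsck", path, "-files", "-blocks", "-locations"]


if __name__ == "__main__":
    target = "/user/practice/data"  # hypothetical lab path
    for cmd in (setrep_cmd(target, 2), fsck_cmd(target)):
        print(" ".join(cmd))
        if shutil.which("hdfs"):    # only execute when an HDFS client is installed
            subprocess.run(cmd, check=False)
```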

Setting up a personal Hadoop cluster used to be a nightmare of dependency conflicts and configuration files. These days it's more manageable but still requires some patience and, honestly, a willingness to break things repeatedly. You can run a pseudo-distributed cluster on a decent laptop for basic practice with CCA-332 administrator tasks. For something like the CCA175 developer exam though, you'll want multiple nodes to really understand how Spark jobs distribute across executors.

Docker containers? A big deal. Cloudera has official Docker images that let you spin up CDH or CDP clusters in minutes instead of hours. I've rebuilt my practice environment three times in one day when I was testing different HBase configurations for the CCB-400 specialist exam, and Docker made that actually feasible instead of making me want to throw my laptop out the window.

Cloud platforms for realistic scale testing

AWS offers EC2 instances where you can deploy multi-node clusters without buying physical hardware. The costs add up if you leave instances running all day, learned that the hard way, but for targeted practice sessions before your exam it's reasonable. You can snapshot your configurations and restore them later, which is perfect for practicing disaster recovery scenarios that appear on CCAH exams.

Google Cloud and Azure have similar capabilities. Google's Dataproc is actually pretty slick for Spark development practice since it handles a lot of cluster management automatically, though that means you miss some of the hands-on admin experience needed for tests like CCA-410. Mixed feelings on that tradeoff.

The key thing with cloud labs is you want to practice the actual exam tasks, not just follow tutorials. If the exam blueprint says you need to optimize a slow-running Spark job, you should intentionally write terrible Spark code and then fix it. Create scenarios where YARN containers fail and you have to investigate logs. Break your NameNode configuration and restore it from backups. Sounds masochistic but that's how you learn.

Building a study schedule that actually works

Most people underestimate prep time. The CCA-500 certification isn't something you cram for over a weekend unless you're already running Hadoop clusters professionally, and even then it's risky. A realistic timeline for someone with basic distributed systems knowledge is six to twelve weeks of consistent study, maybe ten to fifteen hours per week, though I know people who needed longer depending on their background.

Start with the exam blueprint and divide topics into chunks. Week one might focus on HDFS architecture and command-line operations. Week two on YARN and resource management. Week three on Spark fundamentals. Don't try to learn everything at once because concepts build on each other and you'll just confuse yourself. I tried that approach once and retained basically nothing.
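If it helps to see the plan written down, here's a tiny sketch that turns a topic list into a week-by-week schedule. The three topics come from the example above. The 12 hours per week is just an assumed figure, and `build_schedule` is a made-up helper, not part of any tool.

```python
def build_schedule(topics, hours_per_week):
    """Pair each topic with a 1-based week number and the hours budgeted for it."""
    return [(week, topic, hours_per_week)
            for week, topic in enumerate(topics, start=1)]


topics = [
    "HDFS architecture and CLI",
    "YARN and resource management",
    "Spark fundamentals",
]
plan = build_schedule(topics, hours_per_week=12)  # 12 h/week is an assumption
for week, topic, hours in plan:
    print(f"Week {week}: {topic} ({hours} h)")
print("Total hours:", sum(h for _, _, h in plan))
```

Extend the topic list straight from your exam blueprint, one chunk per week, and the total tells you whether your timeline is realistic.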

Schedule hands-on practice sessions at least twice a week. You need muscle memory for common commands and configuration patterns, the kind of automatic knowledge that kicks in under exam pressure. During my prep for the developer track, I probably ran Spark jobs on sample datasets fifty times until the syntax and optimization approaches became automatic.

Study groups and accountability systems

Some people swear by study groups. Honestly they work great if everyone's actually committed and at similar knowledge levels, but that's a big if. I've been in groups where one person dominates discussions and others just ride along, which helps nobody. But a good study group lets you explain concepts to each other, and teaching something is the fastest way to identify gaps in your own understanding. Suddenly you realize you can't actually articulate why YARN allocates containers the way it does.

Online communities like Reddit's big data subreddits or specific Cloudera certification Discord servers connect you with people preparing for the same exams. You can share practice questions, compare lab results, and get different perspectives on tricky topics like Hive query optimization or HDFS federation.

When mock exams reveal what you don't know

Take your first practice exam early in your prep cycle, not right before the real thing. You want to fail practice tests because that shows you what needs work. Counterintuitive but true. I scored maybe 60% on my first CCD-410 practice exam and it was actually helpful because it highlighted that my MapReduce knowledge was theoretical but I couldn't write actual working code under time pressure.

Time yourself during practice sessions. Exam time limits create pressure that changes how you think and work. What seems easy when you have unlimited time becomes challenging when you're watching a countdown timer and still have three tasks incomplete.

Review wrong answers thoroughly instead of just checking your score and moving on. Understand why the correct answer is right and why your approach was wrong. Sometimes you'll discover you had a basic misunderstanding about how a component works, which affects multiple exam topics and explains why you kept getting similar questions wrong.
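A quick way to spot that kind of pattern is to tag every wrong answer with a topic and count them. Here's a minimal sketch. The review log is invented, and `weak_topics` is a hypothetical helper name.

```python
from collections import Counter


def weak_topics(missed, top_n=3):
    """Count missed questions per topic and return the most-missed topics first."""
    return Counter(topic for topic, _question in missed).most_common(top_n)


# Hypothetical review log: (topic, question id) for each wrong answer.
missed = [
    ("YARN", "q4"), ("HDFS", "q9"), ("YARN", "q12"),
    ("Hive", "q20"), ("YARN", "q31"), ("HDFS", "q33"),
]
print(weak_topics(missed, top_n=2))
```

If one topic dominates the list, that's probably the basic misunderstanding to fix before you take another mock exam.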

Books and third-party courses as supplements

O'Reilly has several Hadoop and Spark books that provide deeper technical background than video courses typically cover. "Hadoop: The Definitive Guide" is dense but thorough for administrator paths. "Learning Spark" walks through practical examples that mirror developer exam scenarios, though I'll admit some sections can be dry.

Third-party course platforms like Udemy or Pluralsight offer Cloudera-focused content at lower prices than official training. Quality varies a lot though, so check recent reviews and verify the course content matches current exam blueprints, since Cloudera updates their certifications and some courses lag behind by a year or more.

The real preparation happens when you combine multiple resource types instead of relying on just one. Use official docs as your reference, video courses for concept introduction, hands-on labs for practical skills, and practice exams for assessment. That's how you build the well-rounded knowledge that Cloudera certification exams actually test. Not through any single magic resource.

Conclusion

Getting your prep dialed in

Look, I'm not gonna sugarcoat it. These Cloudera exams are brutal. The CCA175 Spark and Hadoop Developer exam especially will push you way harder than most vendor certs because you're actually writing code and manipulating data in a live environment, not just clicking through multiple-choice questions while half-watching Netflix. Can't just memorize some flashcards and call it a day.

Here's the thing, though.

Passing one of these? It really does separate you from the crowd. I mean, when you've got that CCA-500 or CCA-410 administrator cert, hiring managers know you can actually configure and maintain a production Hadoop cluster. Not just talk about it in interviews. The CDP-0011 Generalist exam is newer and honestly reflects where the industry's headed with Cloudera's current platform direction, so if you're choosing between older certs and that one, think about what fits with your career goals right now. Like, actually right now, not some vague five-year plan you scribbled on a napkin during lunch.

The developer track (CCD-410, CCD-333, and those upgrade exams like CCD-470) proves you can build actual data pipelines and applications. That's valuable. The specialist certs like CCB-400 for HBase show deep expertise in specific components, which can be your edge if you're gunning for senior roles or niche positions. And DS-200 bridges into the data science world if that's where you're heading. I had a buddy who went that route after years of pure engineering work and said it felt like learning to ride a bike again, but backwards.

Honestly the biggest mistake I see? People underestimating the hands-on portion. You need to practice in environments that mirror the actual exam conditions, not just read documentation and watch videos while pretending you're absorbing it.

That's where solid practice resources become necessary. The practice exams over at /vendor/cloudera/ cover all these certifications with realistic scenarios, from the CCA175 at /cloudera-dumps/cca175/ to the CCA-505 upgrade path at /cloudera-dumps/cca-505/ and everything in between. They're built to match the actual exam format and difficulty, which matters way more than generic study guides.

Don't rush it. Give yourself time to actually absorb the material and get comfortable in the terminal. But also don't overthink it to the point of paralysis. You'll never feel 100% ready, and that's normal. Book your exam, put in focused prep time, and trust that you've built the skills.

You've got this.